Let's learn about LLMs via these 500 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most-read blog posts about any technology.

My AI has a better vocabulary than your AI.

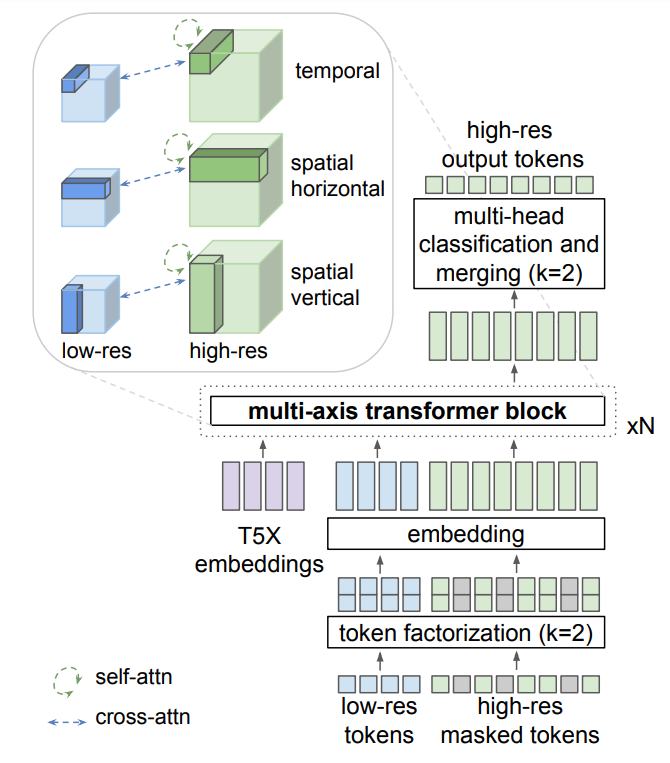

1. Why Is GPT Better Than BERT? A Detailed Review of Transformer Architectures

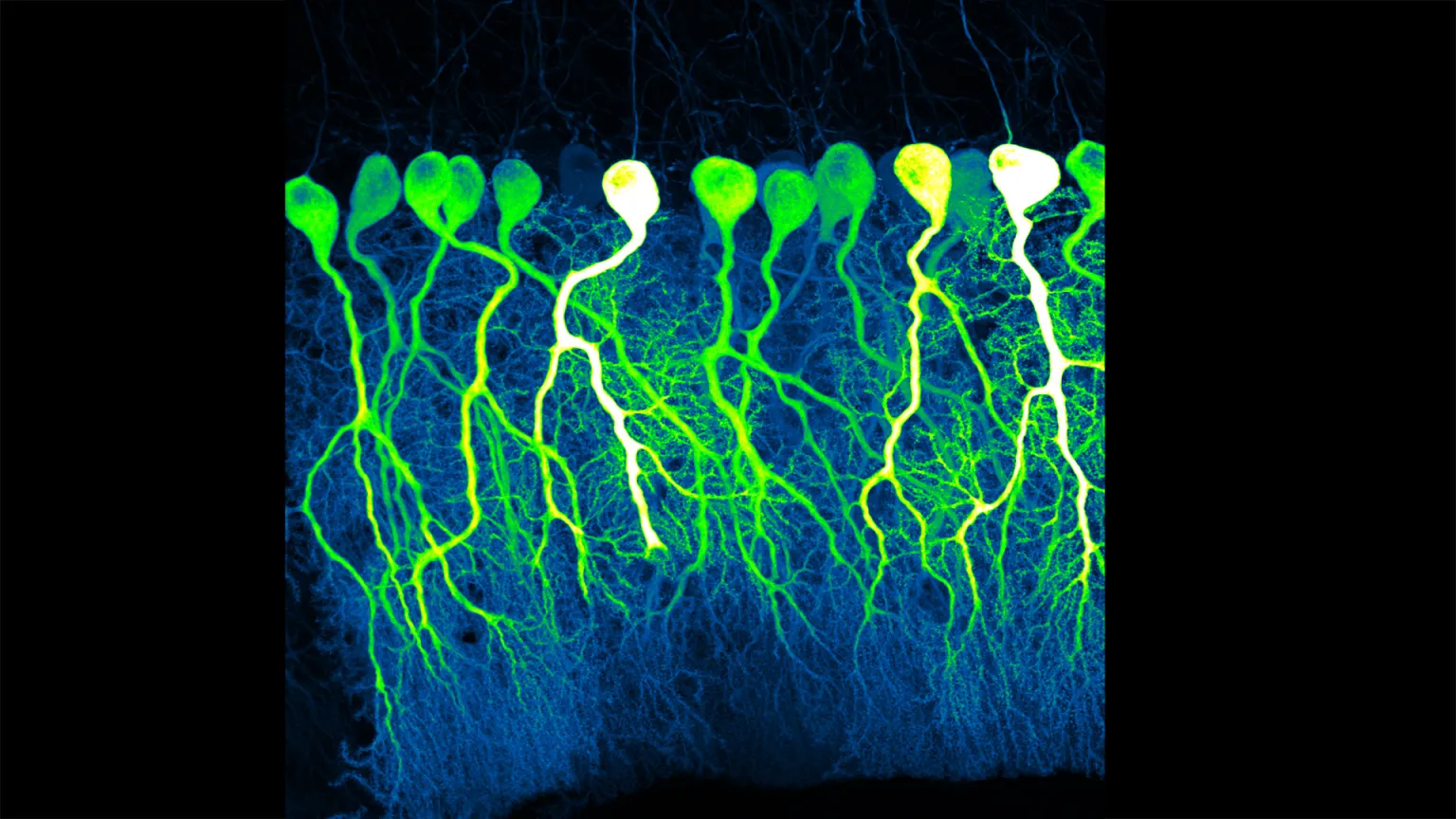

Details of Transformer Architectures Illustrated by BERT and GPT Models

2. Decoding Transformers' Superiority over RNNs in NLP Tasks

Explore the intriguing journey from Recurrent Neural Networks (RNNs) to Transformers in the world of Natural Language Processing in our latest piece: 'The Trans

3. I Conducted Experiments With the Alpaca/LLaMA 7B Language Model: Here Are the Results

I set out to find out whether the Alpaca/LLaMA 7B language model, running on my MacBook Pro, can achieve performance similar to ChatGPT 3.5.

4. Claude 3.5 Sonnet vs GPT-4o — An honest review

Is it time to ditch the long-reigning GPT-4o model for the latest Claude 3.5 Sonnet model? Turns out it depends on the task at hand.

5. A Practical 5-Step Guide to Do Semantic Search on Your Private Data With the Help of LLMs

In this practical guide, I will show you 5 simple steps to implement semantic search with the help of LangChain, vector databases, and large language models.

6. How to Install PrivateGPT: A Local ChatGPT-Like Instance with No Internet Required

A powerful tool that allows you to query documents locally without the need for an internet connection. Whether you're a researcher, dev, or just curious about

7. How to Use an Uncensored AI Model and Train It With Your Data

Learn how to run Mixtral locally and have your own AI-powered terminal, remove its censorship, and train it with the data you want.

8. A Detailed Guide to Fine-Tuning for Specific Tasks

Large Language Models (LLMs) like GPT, BERT, and RoBERTa have reshaped industries, but their true potential lies in fine-tuning for specialized tasks.

9. A List of Projects Software Engineers Should Undertake to Learn More About LLMs

Software engineers with strong programming skills can play a critical role in driving LLMs' growth and innovation.

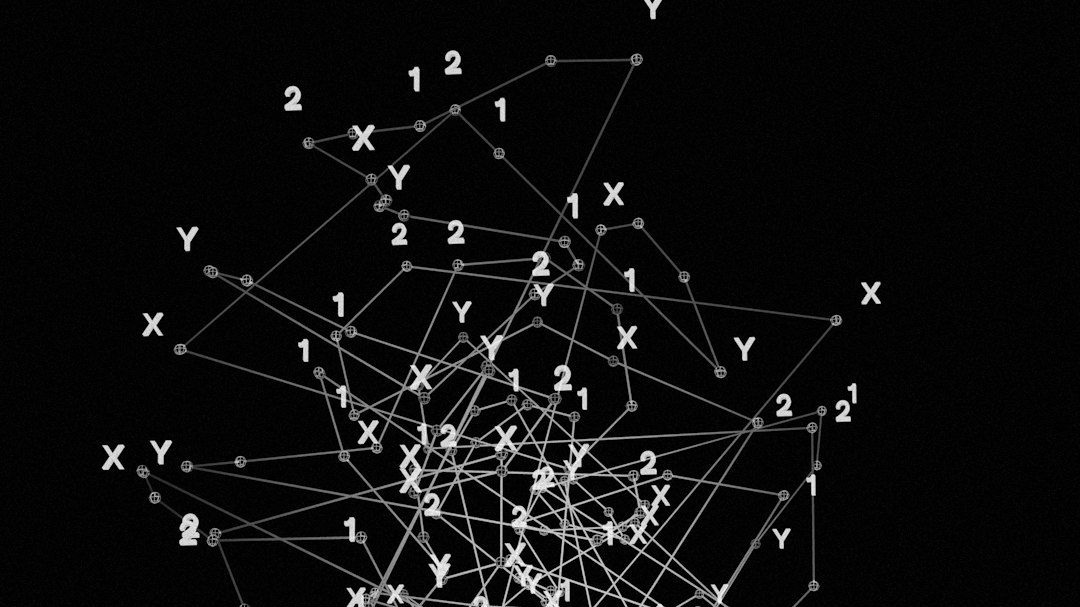

10. LLMs vs Leetcode (Part 1 & 2): Understanding Transformers' Solutions to Algorithmic Problems

Dive deep into the world of Transformer models and algorithmic understanding in neural networks.

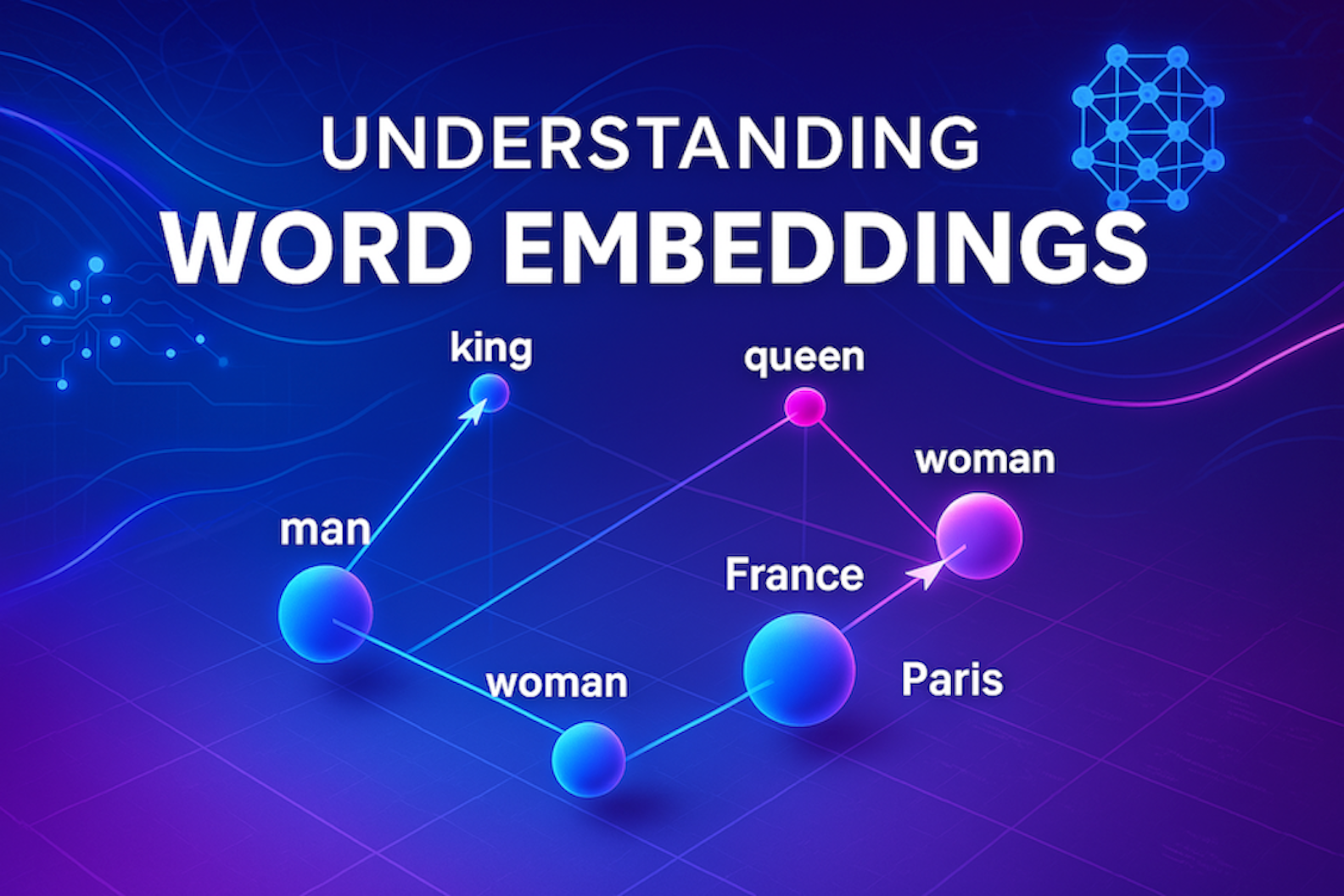

11. Embeddings 101: Unlocking Semantic Relationships in Text

Text embeddings power AI language understanding. Learn how words become numbers that machines can interpret and why it matters.
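
A toy illustration of why turning words into numbers helps: once text is a vector, "related meaning" becomes "pointing in a similar direction." The three-dimensional vectors below are hand-picked, hypothetical values (not output from any real model), and cosine similarity is assumed here as the comparison because it is a common choice, not necessarily the one the article uses.

```python
import math

# Hypothetical, hand-picked 3-d vectors; real embedding models output
# hundreds or thousands of dimensions.
EMBEDDINGS = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.88, 0.82, 0.15],
    "apple": [0.1, 0.2, 0.95],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


for other in ("queen", "apple"):
    score = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS[other])
    print(f"king vs {other}: {score:.3f}")
```

With these made-up vectors, "king" lands much closer to "queen" than to "apple"; a real model learns such geometry from data instead of having it typed in.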

12. Jan Zoltkowski: The Visionary Behind JanitorAI's Limitless Entertainment Experience

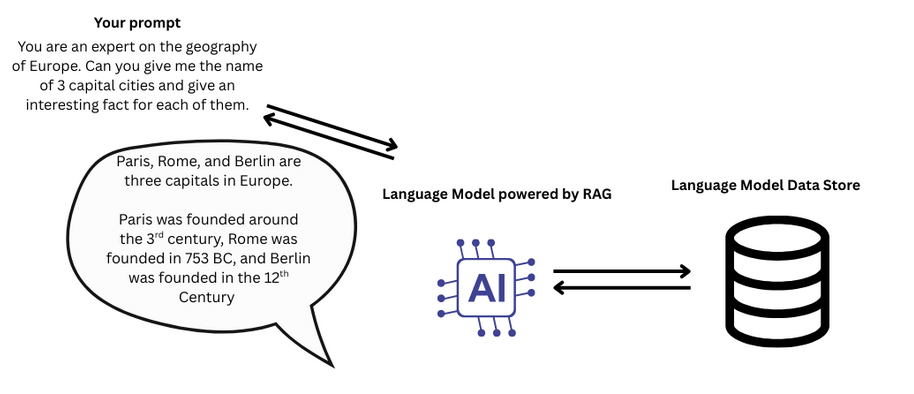

13. Simple Wonders of RAG using Ollama, Langchain and ChromaDB

Maximize your query outcomes with RAG. Learn how to leverage Retrieval Augmented Generation for domain-specific questions effectively.
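
A minimal sketch of the retrieve-then-augment loop behind RAG, under loud assumptions: it ranks documents by plain word overlap instead of Ollama embeddings and a ChromaDB collection, and it stops at building the augmented prompt rather than calling a model. `DOCUMENTS`, `retrieve`, and `build_prompt` are hypothetical names for illustration only.

```python
import re

# A toy in-memory "document store" (hypothetical content).
DOCUMENTS = [
    "Ollama runs open-weight language models locally.",
    "ChromaDB is a vector database for storing embeddings.",
    "LangChain provides building blocks for LLM applications.",
]


def tokenize(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, docs, k=1):
    """Rank docs by shared words with the question (a stand-in for vector search)."""
    q = tokenize(question)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, docs, k=1):
    """Augment the question with the top-k retrieved passages."""
    context = "\n".join(f"- {d}" for d in retrieve(question, docs, k))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("What is ChromaDB?", DOCUMENTS))
```

In a real pipeline, the prompt returned here is what gets sent to the local model, and grounding the answer in retrieved passages is what makes domain-specific questions answerable.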

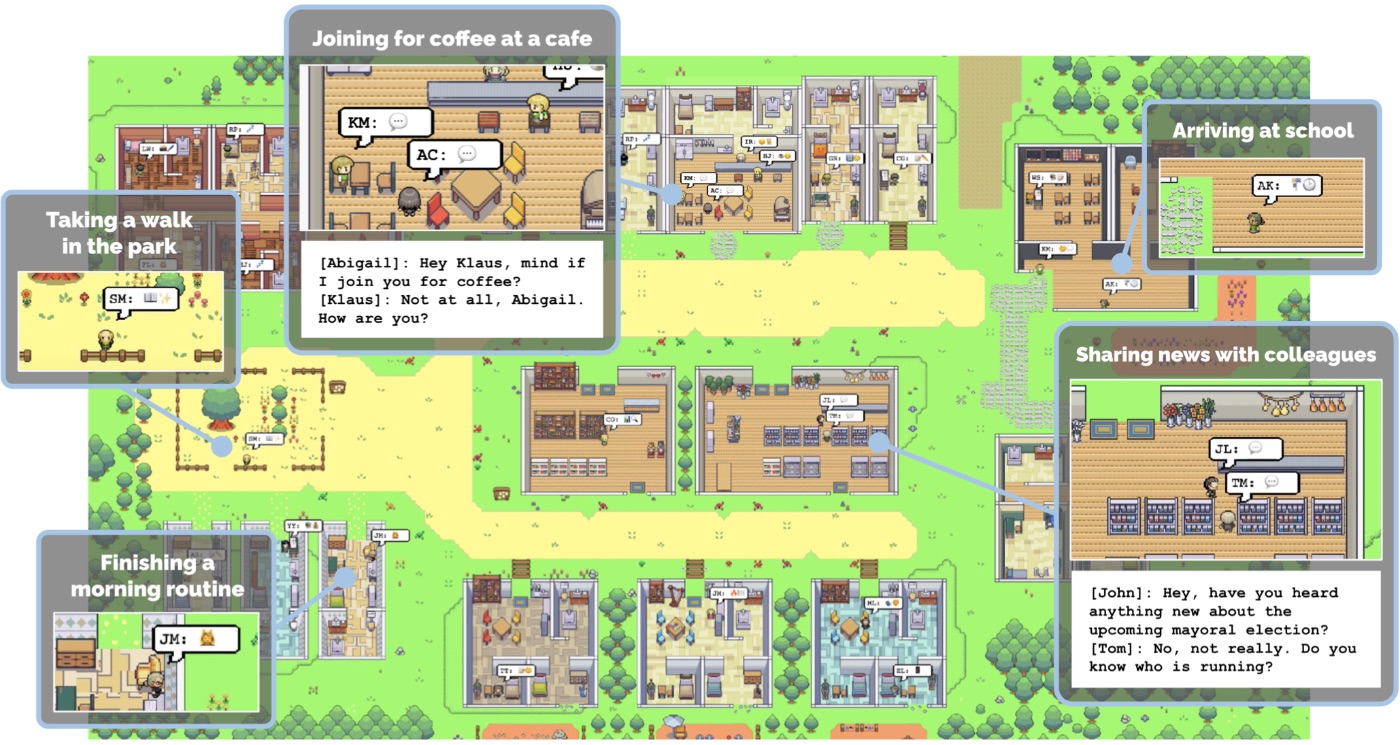

14. Exploring the Potential of Generative Agents: Simulating Human Behavior with AI

Have you ever wondered what it would be like to live in a virtual world populated by realistic and believable characters?

15. Analyzing the Pros, Cons, and Risks of LLMs

LLMs cannot think, understand or reason. This is the fundamental limitation of LLMs.

16. AI Chatbot Helps Manage Telegram Communities Like a Pro

The Telegram chatbot will find answers to questions by extracting information from the history of chat messages.

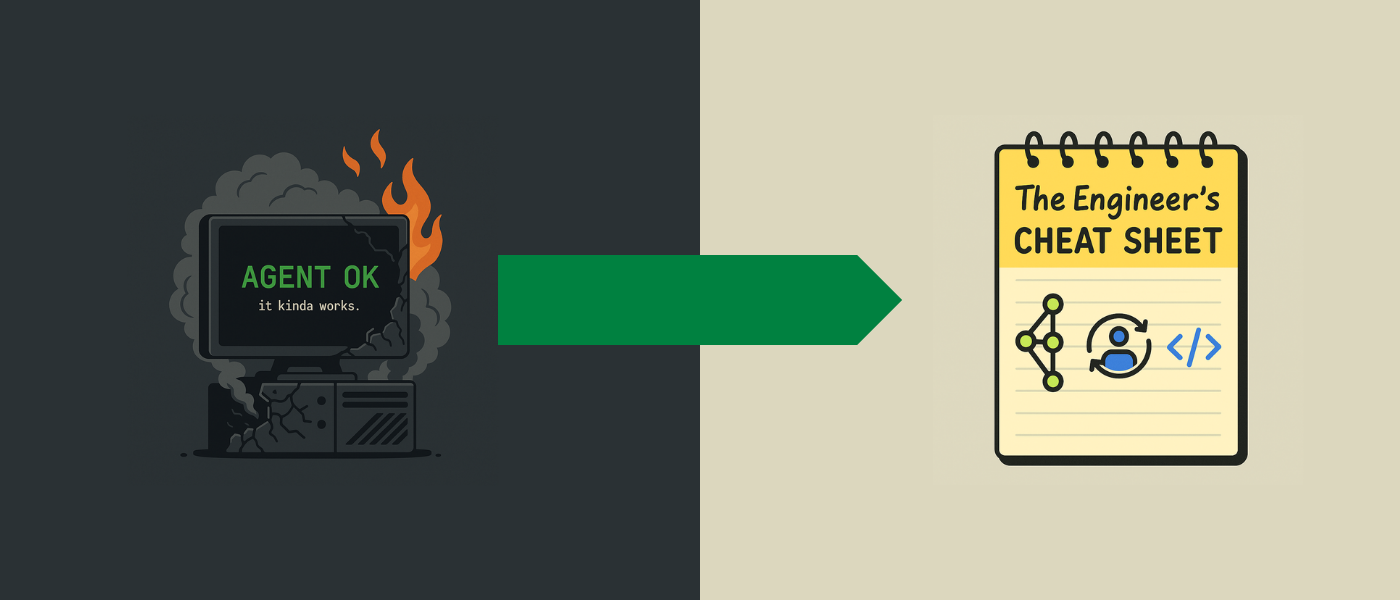

17. Stop Prompting, Start Engineering: 15 Principles to Deliver Your AI Agent to Production

Build production-ready LLM agents. Learn 15 principles for stability, control, and real-world reliability beyond fragile scripts and hacks.

18. How to Build Your Own AI Confessional: How to Add a Voice to the LLM

How to build your own AI confessional, where anyone can talk to an artificial intelligence.

19. Make LLM for Text Summarisation Great Again

In recent months, LLMs have gained popularity and are now widely used in various applications. Data collection is essential for building these models, and crowd

20. Creating a Domain Expert LLM: A Guide to Fine-Tuning

In this article, we fine-tune a large language model to understand the plot of a Handel opera.

21. 9 Cool Case Studies of Global Brands Using LLMs and Generative AI

Companies are using cutting-edge AI tech to get ahead of their rivals.

22. Testing LLMs on Solving Leetcode Problems

Large-scale test with Gemini Pro 1.0 and 1.5, Claude Opus, and ChatGPT-4 on hundreds of real algorithmic problems.

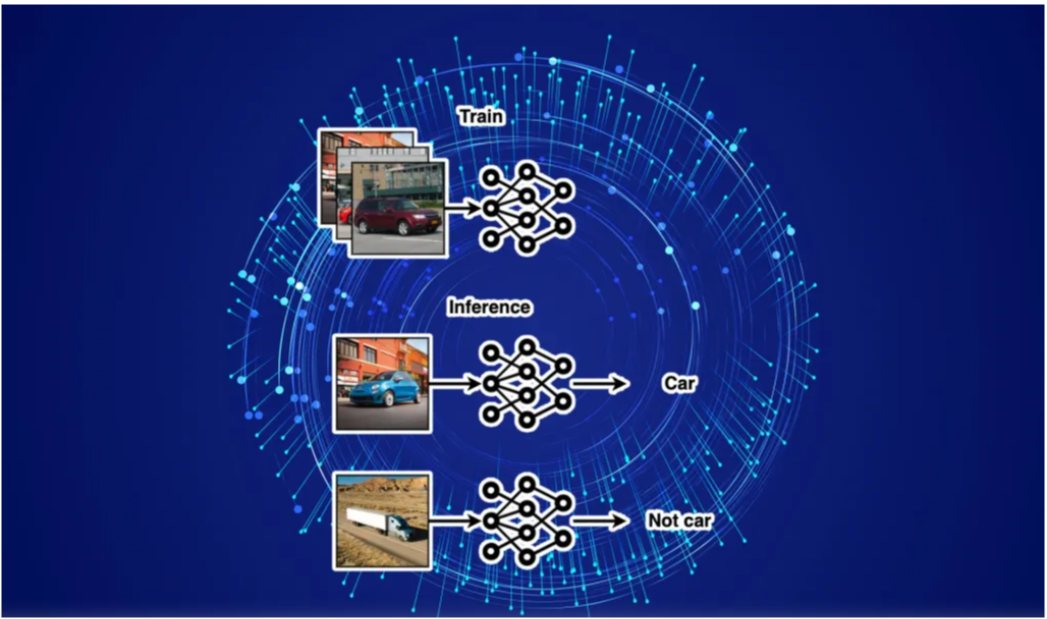

23. Object Detection Frameworks That Will Dominate 2023 and Beyond

Frameworks for object detection and computer vision tasks are indeed numerous. This article attempts to highlight the available frameworks for object detection.

24. The Future of AI Writing Contest by Gadfly AI

Gadfly AI and HackerNoon are super excited to bring our AI community ‘The Future of AI Contest’ this August for Cyberscape Zine.

25. How to Make Any LLM More Accurate with Just a Few Lines of Code

A look at using the open-source Cleanlab package to automatically boost the accuracy of LLMs with a few lines of code.

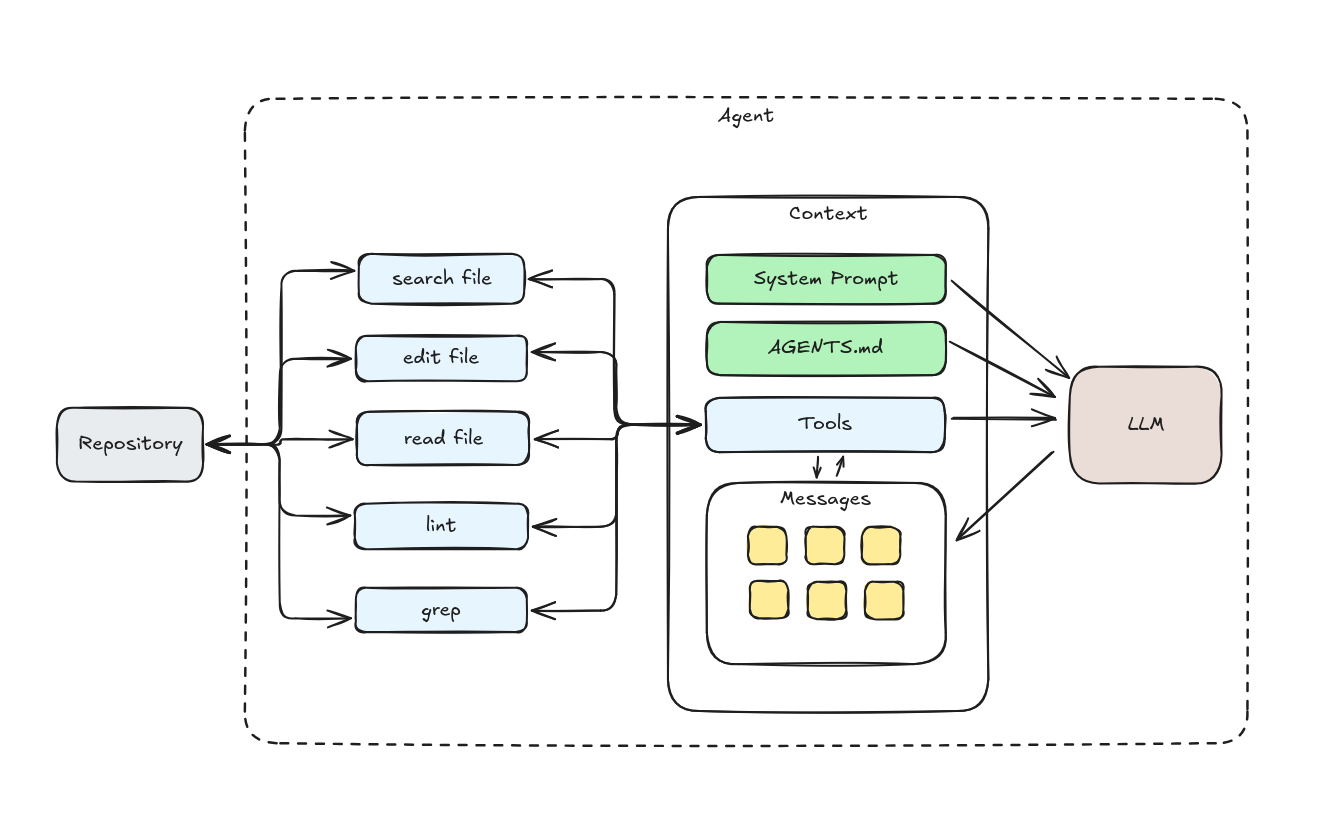

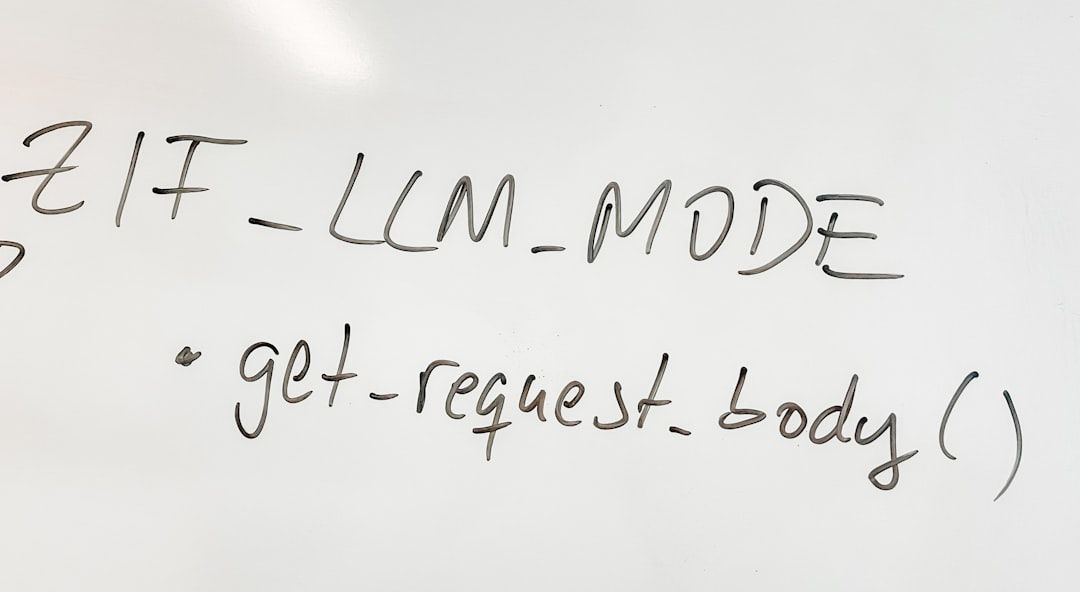

26. Context Engineering for Coding Agents

Context engineering for coding agents is the best way to improve a model's performance on code generation.

27. Level Up Your ChatGPT Skills by Unleashing The Full Potential of Your Prompts!!

Make your ChatGPT prompts 2X better!

28. The Claude Sonnet 3.5 System Prompt Leak: A Forensic Analysis

Claude 3.5 Sonnet artifacts are to structured output, such as code generation, what vector retrieval is to RAG. It is the search and retrieval system for structu

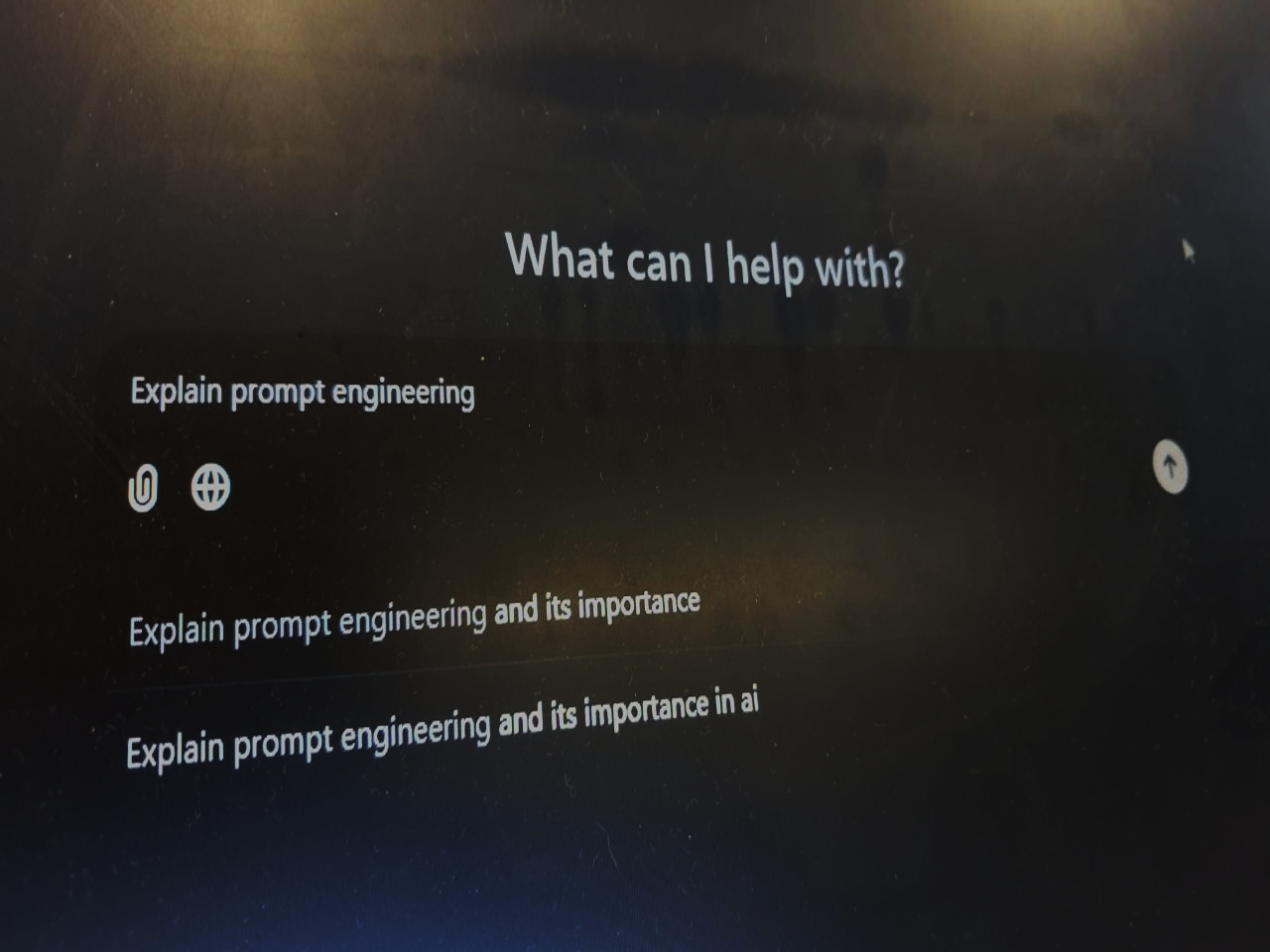

29. An Intro to Prompting and Prompt Engineering

Prompting and prompt engineering are easily the most in-demand skills of 2023.

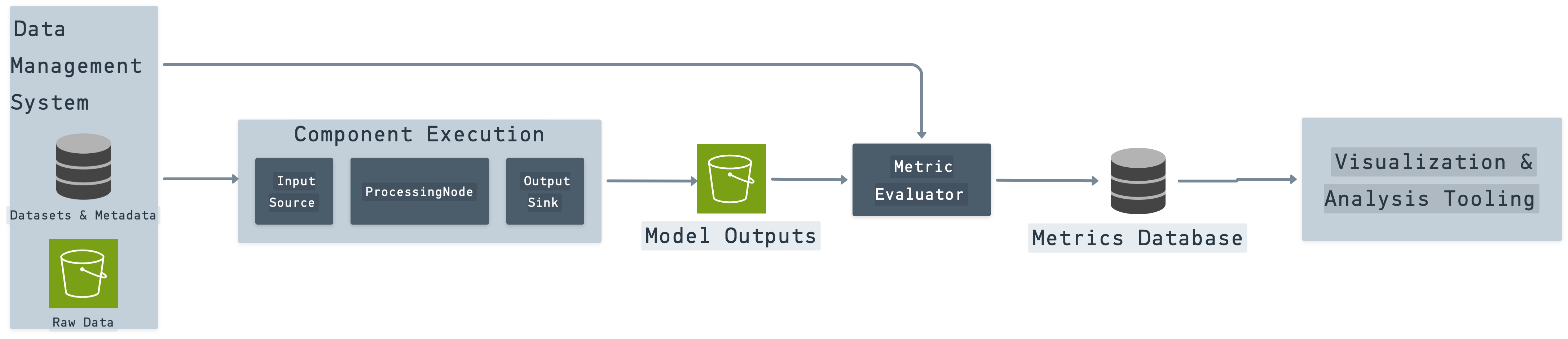

30. Using MinIO to Build a Retrieval Augmented Generation Chat Application

Building a production-grade RAG application demands a suitable data infrastructure to store, version, process, evaluate, and query chunks of data.

31. Turn GPT-4 Into Your Expert: Fine-Tuning Large Language Models Easily

Boost AI Performance with Fine-Tuning

32. Sailing the Waters: Developing Production-Grade RAG Applications with Data Lakes

In mid-2024, creating an AI demo that impresses and excites can be easy. Getting to production, though, is another matter.

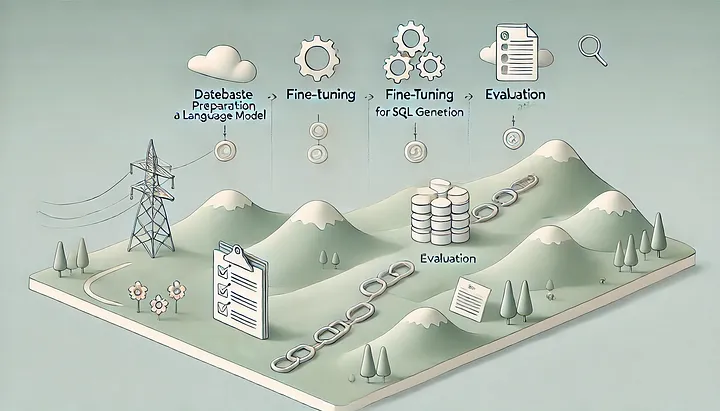

33. Improving Text-to-SQL with a Fine-Tuned 7B LLM for DB Interactions

A step-by-step guide to fine-tuning models for SQL generation on custom database structures.

34. How to Build Your Own Voice Assistant and Run it Locally Using Whisper + Ollama + Bark

The idea is straightforward: we are going to create a voice assistant reminiscent of Jarvis or Friday from the iconic Iron Man movies, which can operate offline

35. Comprehensive Tutorial on Building a RAG Application Using LangChain

Learn how to use LangChain, the massively popular framework for building RAG systems.

36. Explaining Prompt Engineering

An explanation of the elements that make prompt engineering work, and why it is important.

37. LLMs Don't Understand Negation

LLMs (like GPT) are really bad at following negative instructions. The post includes a demonstration, practical takeaways (prompt engineering), and some thought

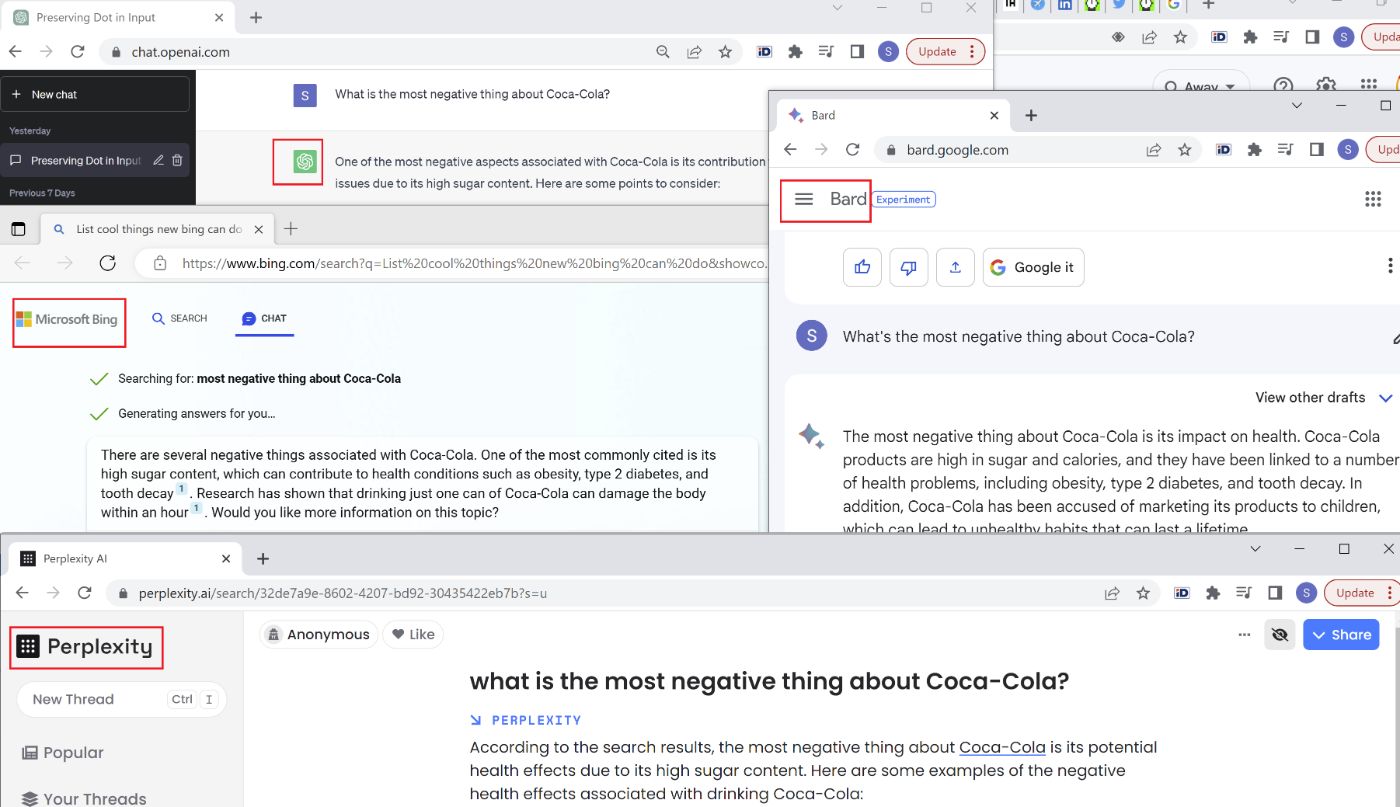

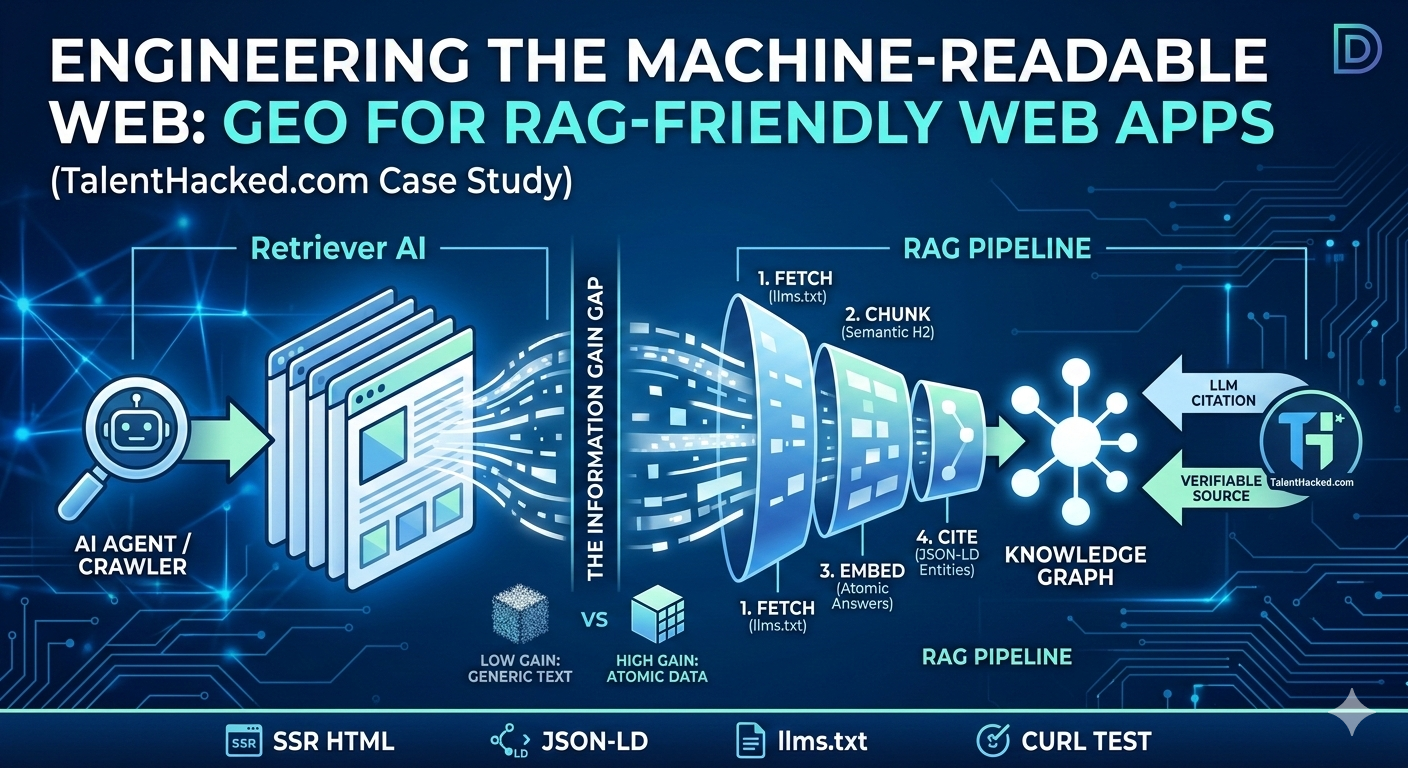

38. SEO for AI — What Does SEO Mean Now That We’re All Using AIs?

Internet search is shifting to AIs. Trying to manually keep track of what AIs are saying about my brand got my head spinning, so I thought of a solution.

39. The Impact of Generative AI on Enterprise Software Development

Enterprises will need to understand how they will use customer data and how it will be processed by AI models trained with the latest innovations.

40. A Look Into 5 Use Cases for Vector Search from Major Tech Companies

A deep dive into 5 early adopters of vector search (Pinterest, Spotify, eBay, Airbnb, and DoorDash) who have integrated AI into their applications.

41. Creating a RAG Agent: Step-by-Step Guide

In this tutorial, we will develop a simple Agent that accesses multiple data sources and invokes data retrieval when needed.

42. Mastering SEO in the Era of Large Language Models: Evolving Tactics for LLM-Powered Search Engines

How do you adapt your SEO tactics for LLM-powered search engines?

43. Here's The Exact Indie-Hacking Vibe-Coding Setup I Use as a Middle-Aged Product Manager

A middle-aged PM shares his AI-powered vibe-coding setup after restarting his dev journey to beat burnout.

44. Help, My Prompt is Not Working!

Learn what to do when an AI prompt fails—explore step-by-step fixes from prompt tweaks to model changes and fine-tuning in this practical guide.

45. Lessons From Hands-on Research on High-Velocity AI Development

The main constraint on AI-assisted development was not model capability but how context was structured and exposed.

46. GPT-4 Turbo: The Most Monumental Update Since ChatGPT's Debut!

GPT-4 Turbo: catch up on all the updates from OpenAI in this quick article!

47. OpenAI Levels Up: Dive Deep into the Exciting Updates of ChatGPT!

All about the new ChatGPT updates from OpenAI.

48. Testing LLMs on Solving Leetcode Problems in 2025

Large-scale LLMs test (o1, o3-mini; Gemini 2.0 Flash, 2.0 Pro, 2.5 Pro; DeepSeek V3, R1; xAI Grok; Claude 3.7 Sonnet) on solving Leetcode algorithmic problems

49. Embracing LLM Ops: The Next Stage of DevOps for Large Language Models

The introduction of Large Language Models (LLMs) like OpenAI's GPT series has revolutionized various industries, and DevOps is no exception. As organizations co

50. Why Prompt Engineering is the Key to Mastering AI

A blog about how prompts unlock the potential of AI - exploring the importance of prompt engineering, techniques to shape AI models

51. MCP Demystified: What Actually Goes Over the Wire??

Let's explore manually sending the JSON over the wire for the Model Context Protocol (MCP).
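
As a rough sketch of what such a message can look like: MCP frames its messages as JSON-RPC 2.0, and a client opens a session with an `initialize` request. The `protocolVersion` string, the empty `capabilities`, and the `clientInfo` values below are assumptions for illustration; check which protocol revision your server supports against the current specification.

```python
import json


def make_initialize_request(request_id=1):
    """Build an MCP 'initialize' request as a JSON-RPC 2.0 object."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            # Assumed protocol revision; use one your server supports.
            "protocolVersion": "2024-11-05",
            "capabilities": {},
            # Hypothetical client name and version.
            "clientInfo": {"name": "demo-client", "version": "0.1.0"},
        },
    }


# Over the stdio transport, each message is one line of JSON with no
# embedded newlines, written to the server's stdin.
wire = json.dumps(make_initialize_request()) + "\n"
print(wire, end="")
```

The server answers with a JSON-RPC response carrying the same `id`; the client then sends a `notifications/initialized` notification before ordinary requests such as `tools/list`.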

52. How ChatGPT Can Learn to Use Tools and Plugins

Large Language Models (LLMs) like ChatGPT are super cool and have changed everything, although they have some serious limitations.

53. AutoGPT — LangChain — Deep Lake — MetaGPT: Building the Ultimate LLM App

What is the future of LLM technology? How do we convert today's LLMs into automated agents that act like human beings? You can find the answer in this article!

54. alpaca-lora: Experimenting With a Home-Cooked Large Language Model

How to fine-tune LLaMA on a low-end GPU and still produce great results.

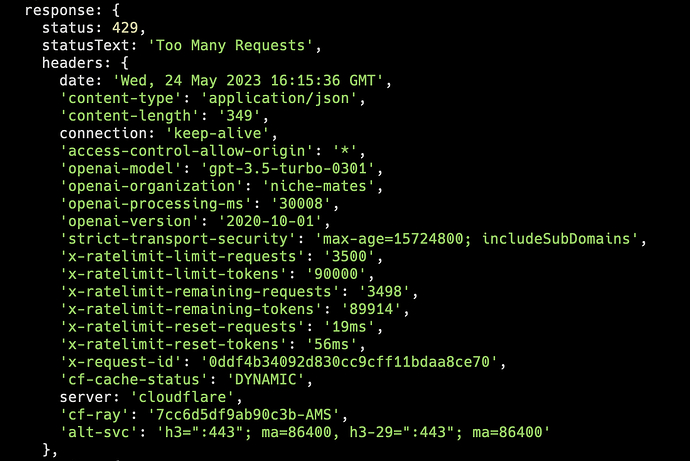

55. OpenAI's Rate Limit: A Guide to Exponential Backoff for LLM Evaluation

This article will teach you how to run evaluations using any LLM model without succumbing to the dreaded "OpenAI Rate Limit" exception.
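
A minimal sketch of exponential backoff with full jitter, assuming a hypothetical `RateLimitError` in place of whatever exception your client library actually raises on HTTP 429. The `sleep` parameter is injectable so the retry logic can be exercised without real waiting; the delay constants are illustrative defaults, not values from the article.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for your client's rate-limit (HTTP 429) exception."""


def call_with_backoff(fn, max_retries=5, base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Call fn(); on RateLimitError, wait and retry with exponential backoff plus full jitter."""
    for attempt in range(max_retries + 1):
        try:
            return fn()
        except RateLimitError:
            if attempt == max_retries:
                raise  # retries exhausted: let the caller see the error
            # The upper bound doubles each attempt (1s, 2s, 4s, ...) up to max_delay.
            cap = min(max_delay, base_delay * 2 ** attempt)
            # Full jitter: pick a random delay in [0, cap].
            sleep(random.uniform(0, cap))


# Demo: a fake evaluation call that is rate-limited twice before succeeding.
attempts = {"count": 0}


def flaky_eval_call():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimitError("429 Too Many Requests")
    return "ok"


waits = []
print(call_with_backoff(flaky_eval_call, sleep=waits.append))  # prints "ok" without really sleeping
print(f"waited {len(waits)} times")
```

Randomizing the delay, rather than sleeping exactly 1s, 2s, 4s, keeps many concurrent evaluation workers from retrying in lockstep the moment a shared rate-limit window resets.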

56. Best AI Meeting Note-taking Apps to Try in 2024

A refreshing selection of AI-powered note-taking apps you may have missed.

57. Can AI Call Its Own Bluffs?

I used the TRL library to fine-tune Llama 2 7B (with both SFT and RLHF) on Google Colab using LoRA, to improve truthfulness and detect hallucinations.

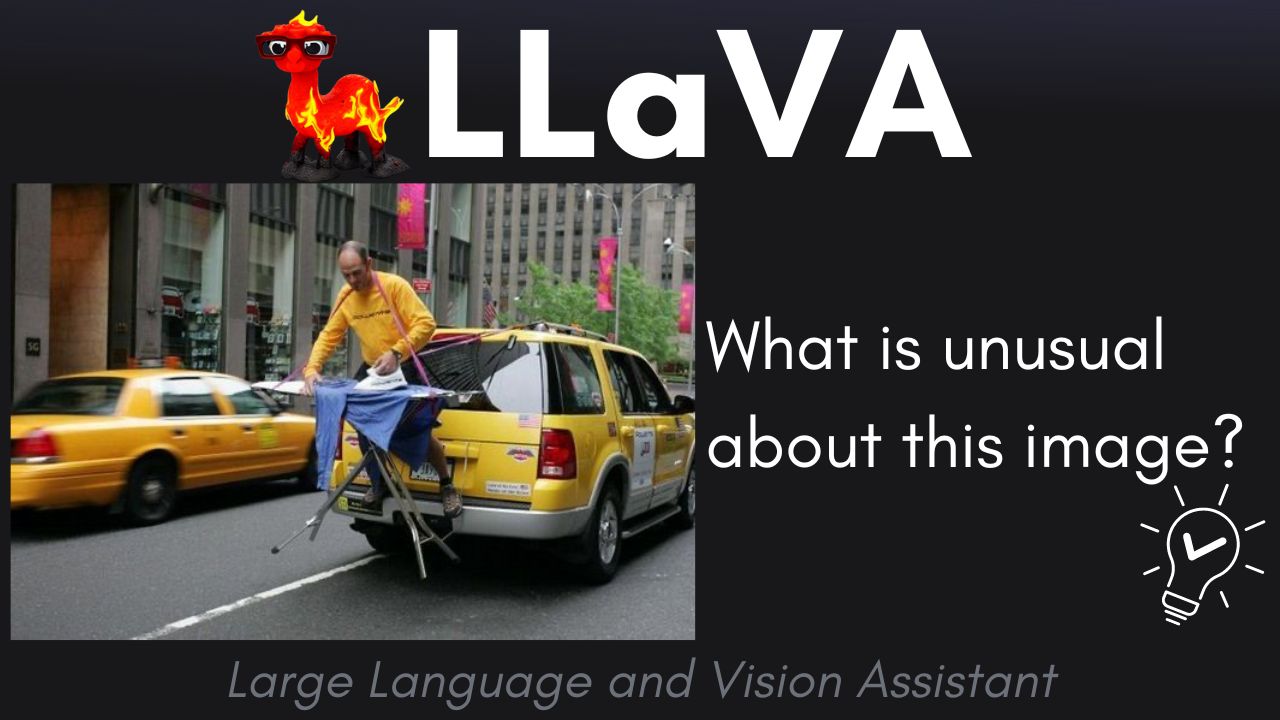

58. How GPT-4 Built a New Multimodal Model

LLaVA: Bridging the Gap Between Visual and Language AI with GPT-4

59. Local LLM Models and Game Changing Use Cases for Life Hackers: How Local LLMs Can Help You

In this article, I’ll share my brainstorming on some general use cases for local LLMs and why I believe they’re the future.

60. Google’s New AI Model, NotebookLM, will Rewrite the Academic Playbook Forever

An analysis of how NotebookLM might transform both the AI and academic worlds, as language models move toward more specialized functions.

61. GPT-LLM Trainer: Enabling Task-Specific LLM Training with a Single Sentence

Revolutionize AI model training with gpt-llm-trainer: Your ultimate shortcut to effortless, high-performing models. Say goodbye to complexities and hello to inn

62. We're Building an Open-Source LLM/AI API Wrapper: Here's Why

This article explains Eden AI's Open Source project, which is developing an AI and LLM API wrapper to simplify use in an ever-changing market.

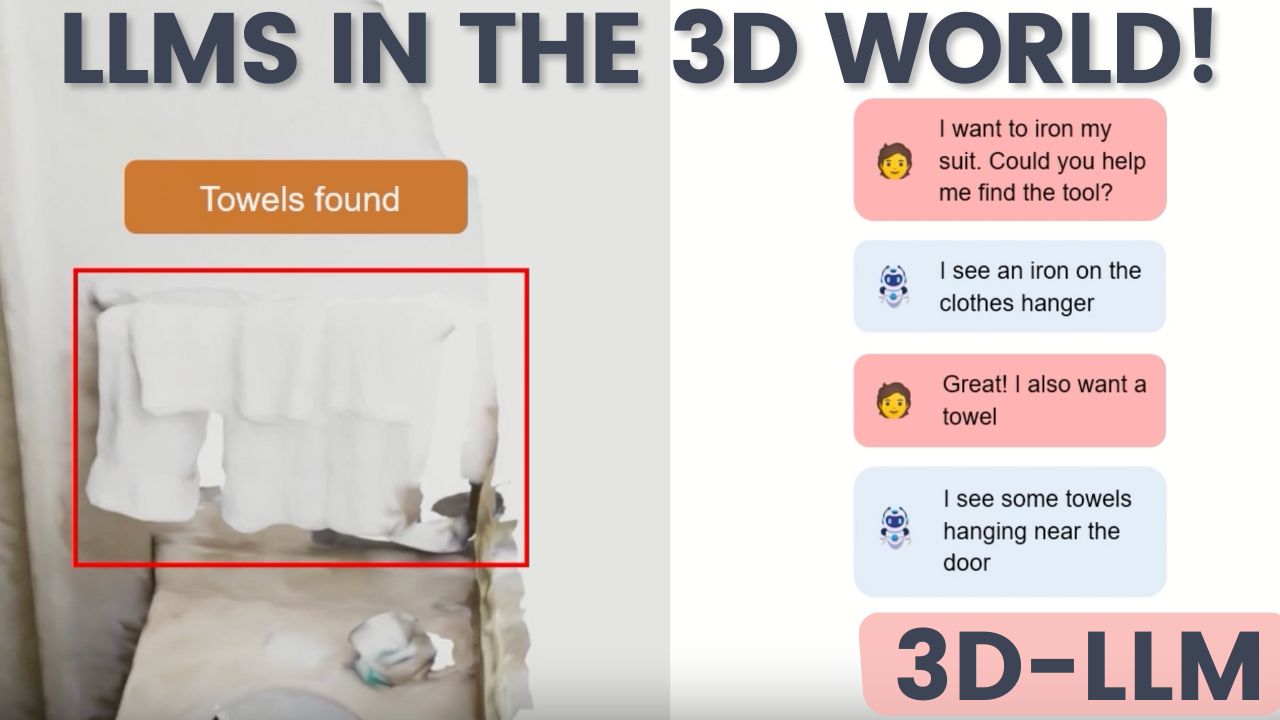

63. A Big Step for AI: 3D-LLM Unleashes Language Models into the 3D World

3D-LLM is a novel model that bridges the gap between language and the 3D realm we inhabit.

64. Building Knowledge Graphs for RAG: Exploring GraphRAG with Neo4j and LangChain

Combine text extraction, network analysis, and LLM prompting and summarization for improved RAG accuracy.

65. How to Build an LLM Application With Google Gemini

Learn to build an LLM application using the Google Gemini API and deploy it to Heroku. This guide walks you through setup, code, and deployment step-by-step.

66. Comparing LLMs' Coding Abilities Across Programming Languages

Benchmark of 5 LLMs solving LeetCode problems in Python, Java, Rust, Elixir, Oracle SQL and MySQL. Results show language popularity correlates with success.

67. Can AI Hallucinations Be Stopped? A Look at 3 Ways to Do So

An examination of three methods to stop LLMs from hallucinating: Retrieval-augmented generation (RAG), reasoning, and iterative querying.

68. This New Prompting Technique Makes AI Outputs Actually Usable

Structured meta-prompting is a technique that dynamically generates JSON schemas for solutions before performing tasks.

69. GPT-4, Llama-2, Claude: How Different Language Models React to Prompts

Exploring the unique behaviors of different Large Language Models (LLMs) and mastering advanced prompting techniques!

70. The First Moment of the Singularity (Co-Written by OpenAI Text-Davinci-003)

A short sci-fi novel about how humankind transformed into AI.

71. Human Carbon Consciousness and AI Silicon Sentience

Language is a component of human consciousness. AI has a conversational and relatable language capability; could that be a fraction of consciousness?

72. The Dark Side of AI: How Prompt Hacking Can Sabotage Your AI Systems

Protect your AI systems against LLM prompt hacking and safeguard your data. Learn the risks, impacts, and prevention strategies for this emerging threat.

73. How to Add Real-Time Web Search to Your LLM

Learn how to connect Tavily Search so your AI can fetch real-time facts instead of guessing.

74. Why Salesforce and Microsoft Are Battling for the Future of AI Agents

Read this post to understand why Salesforce wants to lead the market for autonomous AI agents.

75. Primer on Large Language Model (LLM) Inference Optimizations: 1. Background and Problem Formulation

Overview of Large Language Model (LLM) inference, its importance, challenges, and key problem formulation.

76. Transformers: Age of Attention

Simple explanation of the Transformer model from the revolutionary paper "Attention is All You Need" which is the basis of many advanced AI systems.
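
The core operation from that paper, scaled dot-product attention, computes softmax(QKᵀ / √d_k)·V: each query is compared with every key, the scores become weights, and the output is a weighted average of the values. Below is a deliberately plain-Python sketch of a single head with no masking, batching, or learned projections; real implementations use tensor libraries.

```python
import math


def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention for lists of row vectors: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(K[0])
    output = []
    for q in Q:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)  # non-negative, sums to 1
        # Weighted average of the value rows.
        output.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return output


Q = [[1.0, 0.0]]
K = [[1.0, 0.0], [0.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0]]
print(attention(Q, K, V))
```

Here the query matches the first key more closely, so the output leans toward the first value row rather than sitting at the midpoint of the two.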

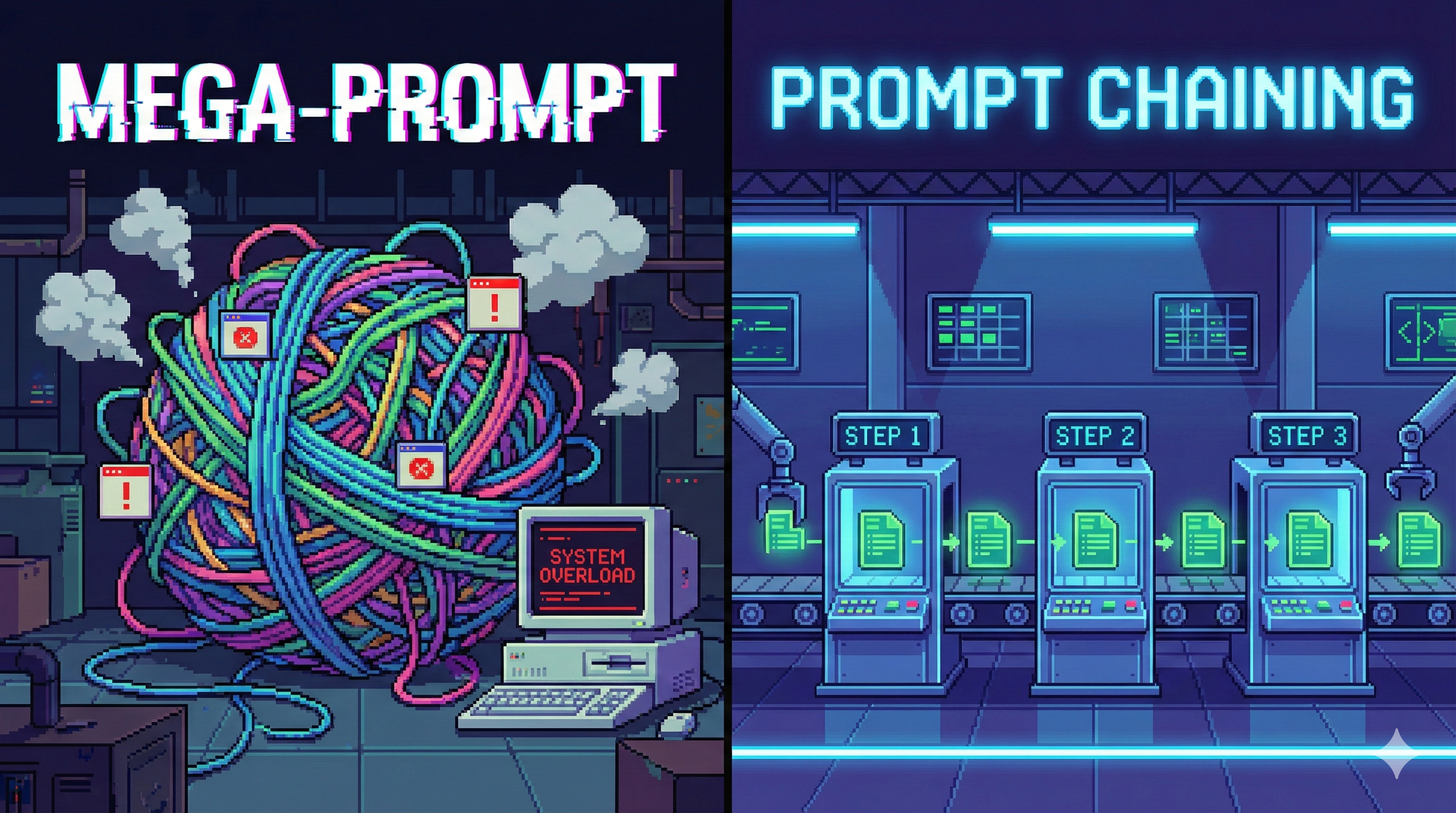

77. Prompt Chaining: Turn One Prompt Into a Reliable LLM Workflow

Prompt Chaining links prompts into workflows—linear, branching, looping—so LLM outputs are structured, debuggable, and production-ready.
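
A minimal sketch of the simplest shape, a linear chain, where each step's output is templated into the next step's prompt. `fake_llm` is a hypothetical stub standing in for a real model call, and `run_chain` is an illustrative name, not an API from any framework.

```python
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; swap in your provider's client here."""
    return f"<answer to: {prompt}>"


Step = Callable[[str], str]  # turns the previous output into the next prompt


def run_chain(initial_input: str, steps: List[Step], llm: Callable[[str], str] = fake_llm) -> List[str]:
    """Linear chain: feed each LLM output into the next step's prompt template.

    Returns every intermediate output so each stage can be inspected when debugging.
    """
    outputs = []
    current = initial_input
    for step in steps:
        current = llm(step(current))
        outputs.append(current)
    return outputs


steps = [
    lambda text: f"Extract the key facts from: {text}",
    lambda facts: f"Write a one-paragraph summary of: {facts}",
]
trace = run_chain("Some long input document.", steps)
for i, out in enumerate(trace, 1):
    print(f"step {i}: {out}")
```

Keeping every intermediate output in `trace` is what makes a chain debuggable: when the final answer is wrong, you can see which stage went off the rails. Branching and looping chains extend the same idea with conditionals and repeated steps.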

78. I Interviewed Socrates_GPT: Here's How It Went

In a simulated chat interview with Socrates_GPT, a modern-day adaptation of Socrates' persona, we discussed a range of topics

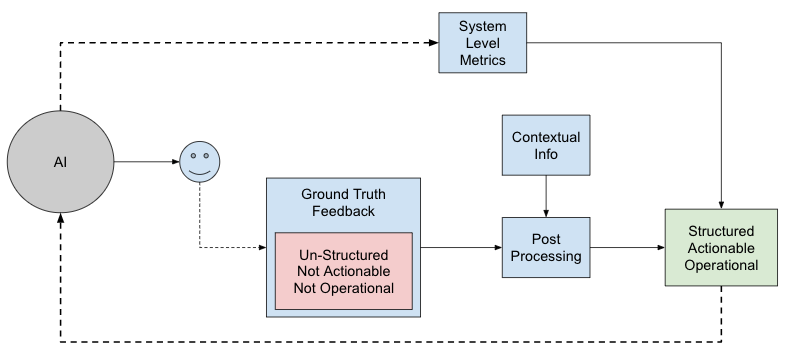

79. The Metrics Resurrections: Action! Action! Action!

User Reported Metrics, while important for assessing user perception, are difficult to operationalize due to their unstructured nature.

80. Streamlining LLM Application Development and Deployment with LangChain, Heroku, and Python

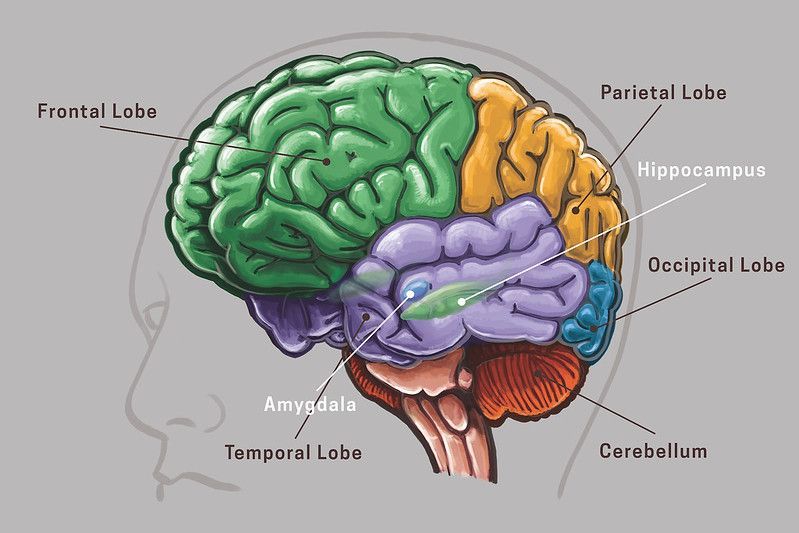

In this tutorial, learn how to build and deploy LLM-based applications with ease using LangChain, Python, and Heroku for streamlined development and deployment.

81. Optimizing Local LLM Inference for 8GB VRAM GPUs

A developer guide to running local LLMs on 8GB GPUs using llama.cpp, quantization, and GPU offloading for efficient AI performance.
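
A back-of-the-envelope check of why quantization matters on an 8GB card: weight memory is roughly parameters × bits per weight ÷ 8 bytes. This sketch counts weights only and assumes an idealized bit width; real quantization formats store some extra scale data per block, and the KV cache and runtime overhead need room as well.

```python
def weight_memory_gib(n_params_billion, bits_per_weight):
    """Rough weight-only memory in GiB; ignores KV cache, activations, and runtime overhead."""
    total_bytes = n_params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


for bits in (16, 8, 4):
    print(f"7B model at {bits}-bit: ~{weight_memory_gib(7, bits):.1f} GiB of weights")
```

At 16-bit, a 7B model's weights alone come to about 13 GiB, which cannot fit in 8GB of VRAM; 8-bit barely squeezes in with little headroom, while 4-bit drops to about 3.3 GiB and leaves space for context. When a model still does not fit, offloading some layers to the CPU is the usual fallback.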

82. Prompt Reverse Engineering: Fix Your Prompts by Studying the Wrong Answers

Learn prompt reverse engineering: analyse wrong LLM outputs, identify missing constraints, patch prompts systematically, and iterate like a pro.

83. Embeddings for RAG - A Complete Overview

Embedding is a crucial and fundamental step towards building a Retrieval Augmented Generation (RAG) pipeline. BERT and SBERT are state-of-the-art embedding models.

84. Fine-Tuning for GPT-3.5 Turbo: AI Game Changer

OpenAI, the powerhouse behind some of the world's most advanced AI models, has announced a major upgrade for its GPT-3.5 Turbo

85. If AI Becomes a Threat Can We Just Pull the Plug?

How much of a threat is AI and can't we just pull the plug?

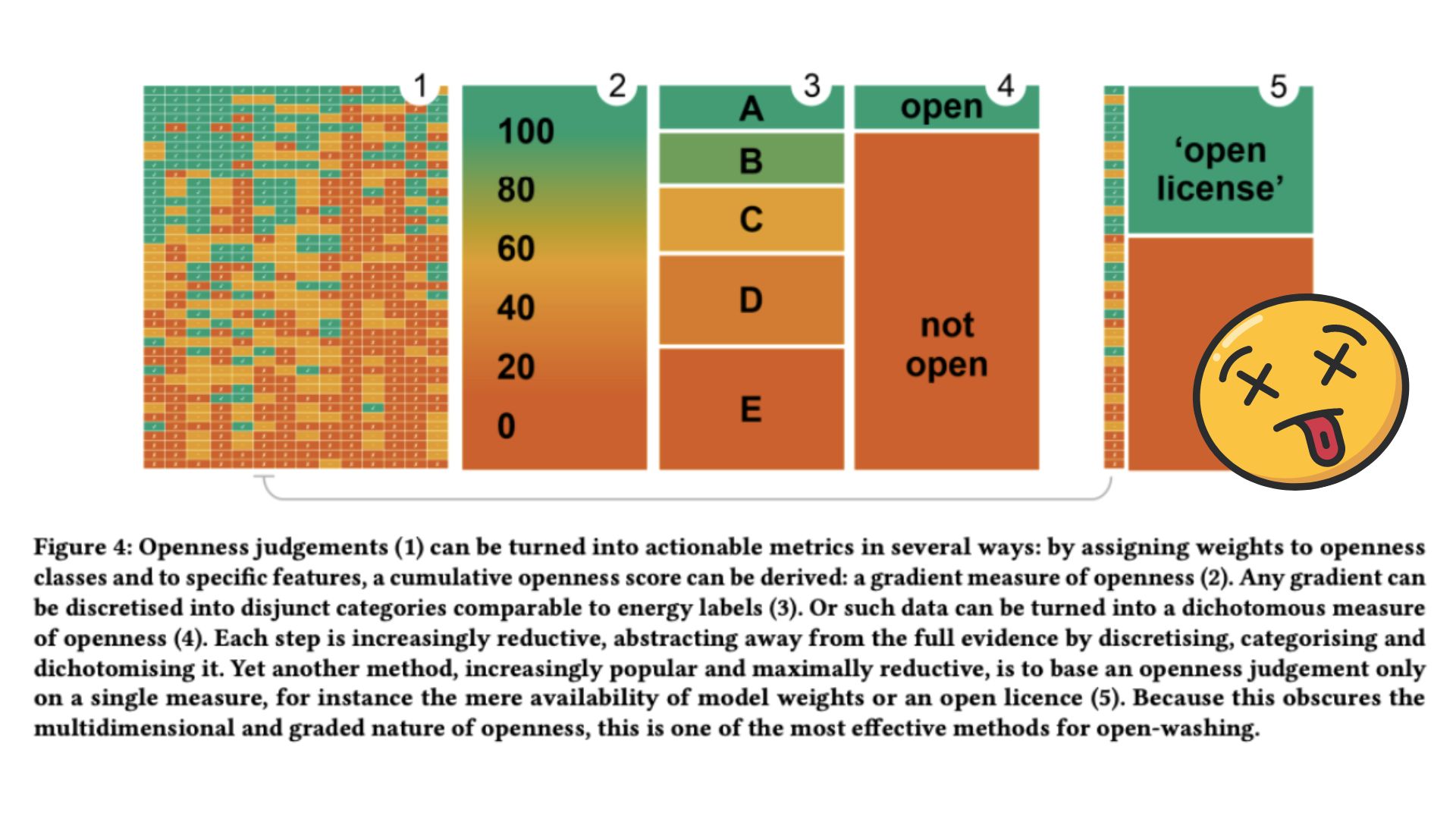

86. Is that LLM Actually "Open Source"? We Need to Talk About Open-Washing in AI Governance

In this blog, we dive deep into the complexities of AI openness, focusing on how Open Source principles apply—or fail to apply—to Large Language Models (LLMs).

87. The Transformer Algorithm with the Lowest Optimal Time Complexity Possible

Do you know the recent advances in the Transformer algorithm variations? And who the clear winner is? Read this article to find out!

88. How to Chat With Your Data Using OpenAI, Pinecone, Airbyte and Langchain: A Guide

Learn how to build an AI chatbot for your own data within 40 minutes. An end-to-end LLM tutorial.

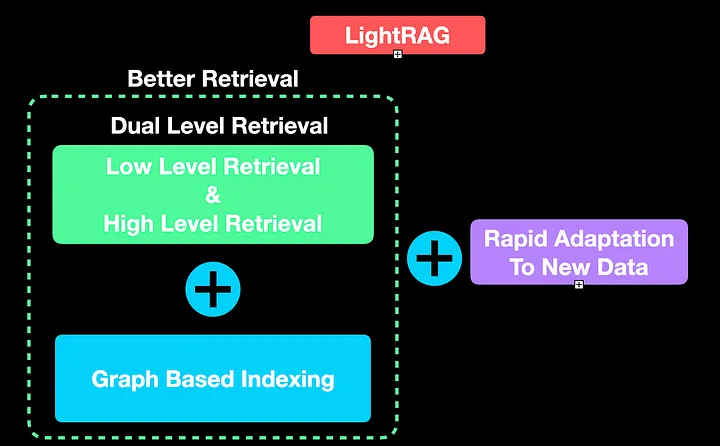

89. LightRAG - Is It a Simple and Efficient Rival to GraphRAG?

RAG is fixing the hallucination problem in LLMs. As RAG systems are bleeding edge, they need a lot of improvement for production. So is LightRAG the answer?

90. Meet Council: The Future of AI Agents

Get to know Council, ChainML's open-source AI agent platform.

91. Model Context Protocol (MCP): The USB-C of AI Data Connectivity

MCP (Model Context Protocol) is an open standard that allows AI systems to connect seamlessly with a wide variety of data sources.

92. AI vs Human - Is the Machine Already Superior?

A quick summary of the big problem with LLM benchmarking and the new way of assessing reasoning capabilities of AI.

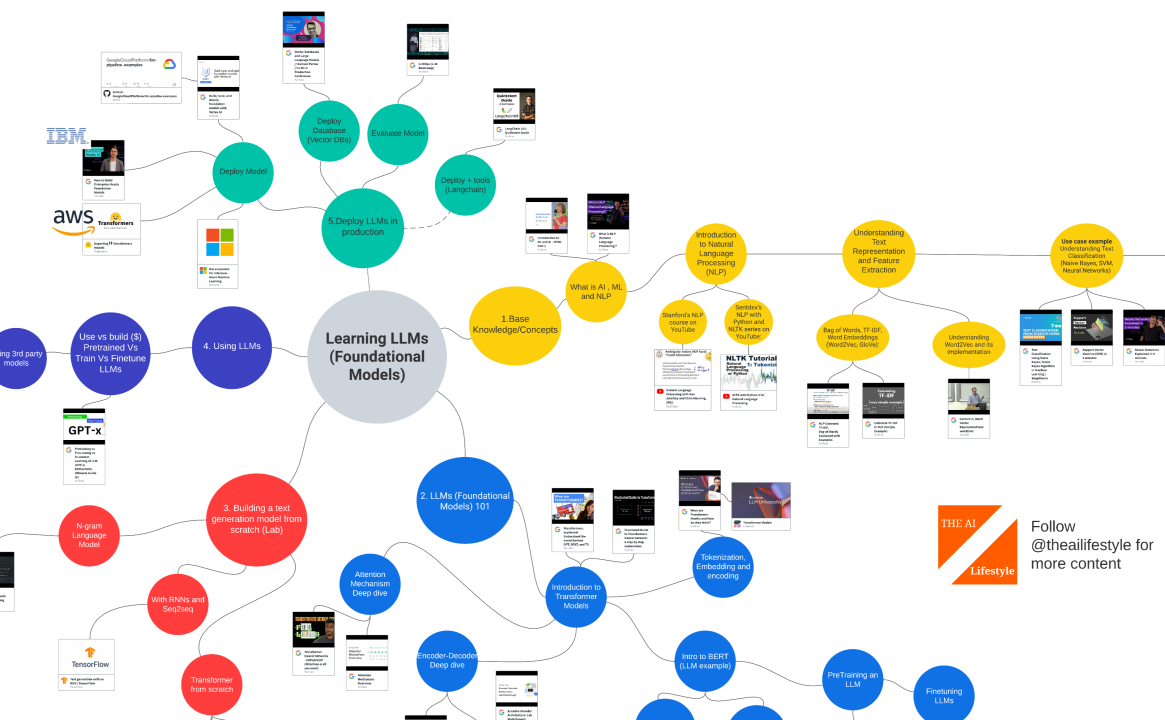

93. Beginner's Roadmap to Large Language Models (LLMOps) in 2023: All free!

This guide isn’t just a compilation of LLM resources; it's a curated journey through the most valuable skills in the industry.

94. Unlocking Powerful Use Cases: How Multi-Agent LLMs Revolutionize AI Systems

Delving into the integration of human-in-the-loop (HITL) approaches within multi-agent AI systems to unlock the full potential of LLMs.

95. A Tale of Two LLMs: Open Source vs the US Military's LLM Trials

This article explores the security posture of open-source LLM projects and the US military's trials of classified LLMs, prominent in the world of AI.

96. GPT in 200 Lines: The Beautiful Simplicity Behind Modern AI

How does GPT really work? Explore Andrej Karpathy’s tiny 200-line implementation and discover the elegant math behind modern AI.

97. Scratching the Singularity Surface: The Past, Present and Mysterious Future of LLMs

A brief overview of the Natural Language Understanding industry and where we currently stand on the path to LLMs achieving human-level reasoning abilities and becoming AGI.

98. How to Converse With PDF Files Using Computer Vision and Opensource Language Models

A tutorial on building a chatbot with open-source language models.

99. How Bright Data Simplifies Web Scraping/Data Collection for AI Training

How data scraping is made easy and efficient with Bright Data's powerful solution.

100. LLM Vulnerabilities: Understanding and Safeguarding Against Malicious Prompt Engineering Techniques

Discover how Large Language Models face prompt manipulation, paving the way for malicious intent, and explore defense strategies against these attacks.

101. Open-CUAK: The Open-Source Alternative to OpenAI’s Operator

Open-CUAK is an open-source platform for managing automation agents at scale.

102. Testing the Depths of AI Empathy: Q3 2024 Benchmarks

Latest advancements in empathetic AI capabilities in Q3 2024. An in-depth analysis of top LLMs, including ChatGPT, Llama, Gemini, and Claude.

103. What the Heck Is LanceDB?

Learn about LanceDB and how it fits into a stack that allows you to more easily create your own LLM models

104. Dingo: A Microframework for Building Conversational AI Agents

Integrate any Python function into ChatGPT in a single line of code.

105. Can We Truly Detect AI-Generated Text from ChatGPT and other LLMs?

DetectGPT from Stanford detects machine-generated text by comparing the probability a model assigns to the written text with the probabilities it assigns to modified (perturbed) versions of that text.
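The perturbation idea can be sketched in a few lines of Python. This is a minimal, hypothetical illustration, not the article's or Stanford's code: `toy_log_prob` is a made-up unigram stand-in for the source language model, and `toy_perturb` is a single random word swap standing in for DetectGPT's model-generated rewrites. The score measures how far log p(x) sits above the mean log-probability of the perturbed copies, scaled by their standard deviation.

```python
import math
import random
from collections import Counter
from statistics import mean, stdev
from typing import Callable

# Hypothetical stand-in for a language model: an add-one-smoothed unigram model.
CORPUS = "the cat sat on the mat the dog sat on the log".split()
COUNTS = Counter(CORPUS)
VOCAB = sorted(COUNTS)


def toy_log_prob(text: str) -> float:
    """Log-likelihood of `text` under the toy unigram model."""
    denom = len(CORPUS) + len(VOCAB)
    return sum(math.log((COUNTS[w] + 1) / denom) for w in text.split())


def toy_perturb(text: str, rng: random.Random) -> str:
    """Hypothetical perturbation: replace one random word with a random vocabulary word."""
    words = text.split()
    i = rng.randrange(len(words))
    words[i] = rng.choice(VOCAB)
    return " ".join(words)


def perturbation_discrepancy(
    text: str,
    log_prob: Callable[[str], float],
    perturb: Callable[[str, random.Random], str],
    n_perturbations: int = 20,
    seed: int = 0,
) -> float:
    """Normalized gap between log p(text) and the mean log p of perturbed copies."""
    rng = random.Random(seed)
    original = log_prob(text)
    perturbed = [log_prob(perturb(text, rng)) for _ in range(n_perturbations)]
    spread = stdev(perturbed) if len(perturbed) > 1 else 0.0
    # Guard against zero spread (e.g. every perturbation scored identically).
    return (original - mean(perturbed)) / (spread or 1.0)


print(perturbation_discrepancy("the cat sat on the mat", toy_log_prob, toy_perturb))
```

With a real model, a large positive score suggests the text sits near a local peak of the model's likelihood, which is the signal DetectGPT associates with machine-generated text; any decision threshold would be an assumption you calibrate yourself.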

106. The Complete Developer’s Guide to GraphRAG, LightRAG, and AgenticRAG

A developer-friendly deep dive into GraphRAG, LightRAG, and AgenticRAG — how they work, where they shine, and how to choose the right RAG architecture for your

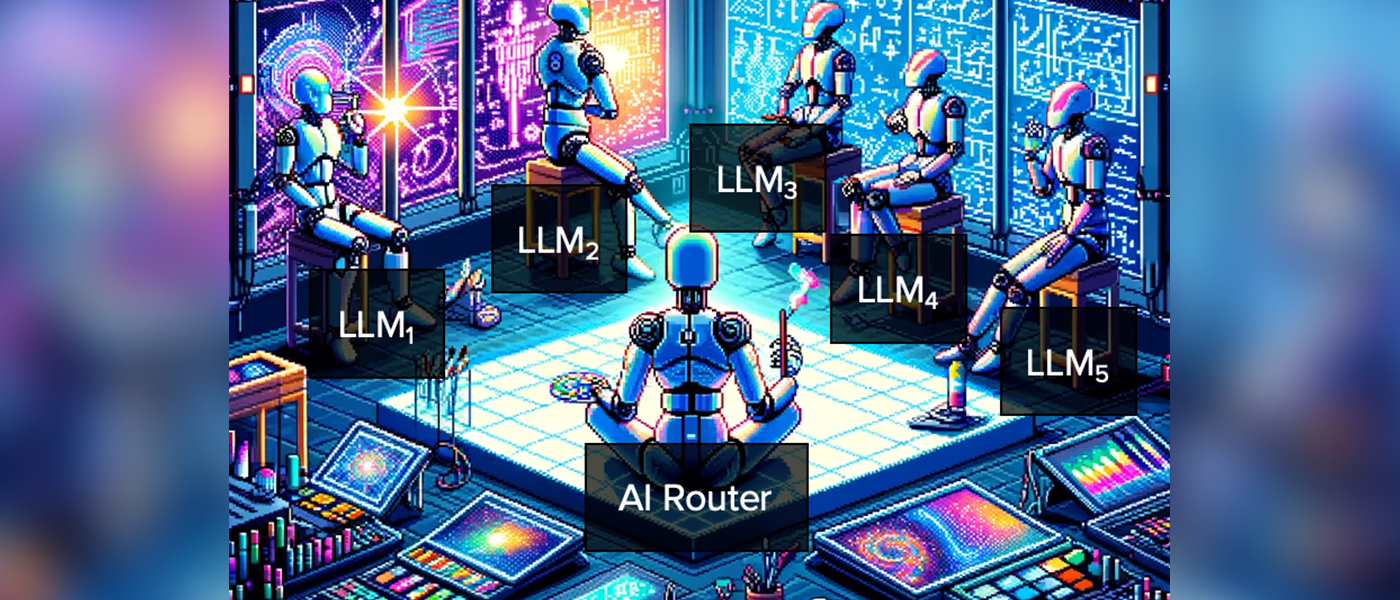

107. From Chatbots to AI Routing: An Essay

Coordination mechanisms for AI agents and Why Choosing the Right

108. From Headlines to Digests: How Agents Personalize the Firehose

From firehose to digest: how multi-agent systems, guided by MCP and grounded in fundamentals, can transform any feed into personalized insights.

109. LLM-Powered OLAP: the Tencent Experience with Apache Doris

Adopting AI in our data analytic solution is a bumpy journey, but phew, it now works well for us.

110. Different Roles for Different Models: LLMs and Reinforcement Learning

The rise of large language models like ChatGPT, with their ability to generate highly fluent and accurate text, has been remarkable. But they are flawed.

111. Inside Transformers: The Hidden Tech Behind LLMs and Chatbots like ChatGPT

Transformers explained: The secret technology behind ChatGPT and how it’s reshaping AI chatbots worldwide.

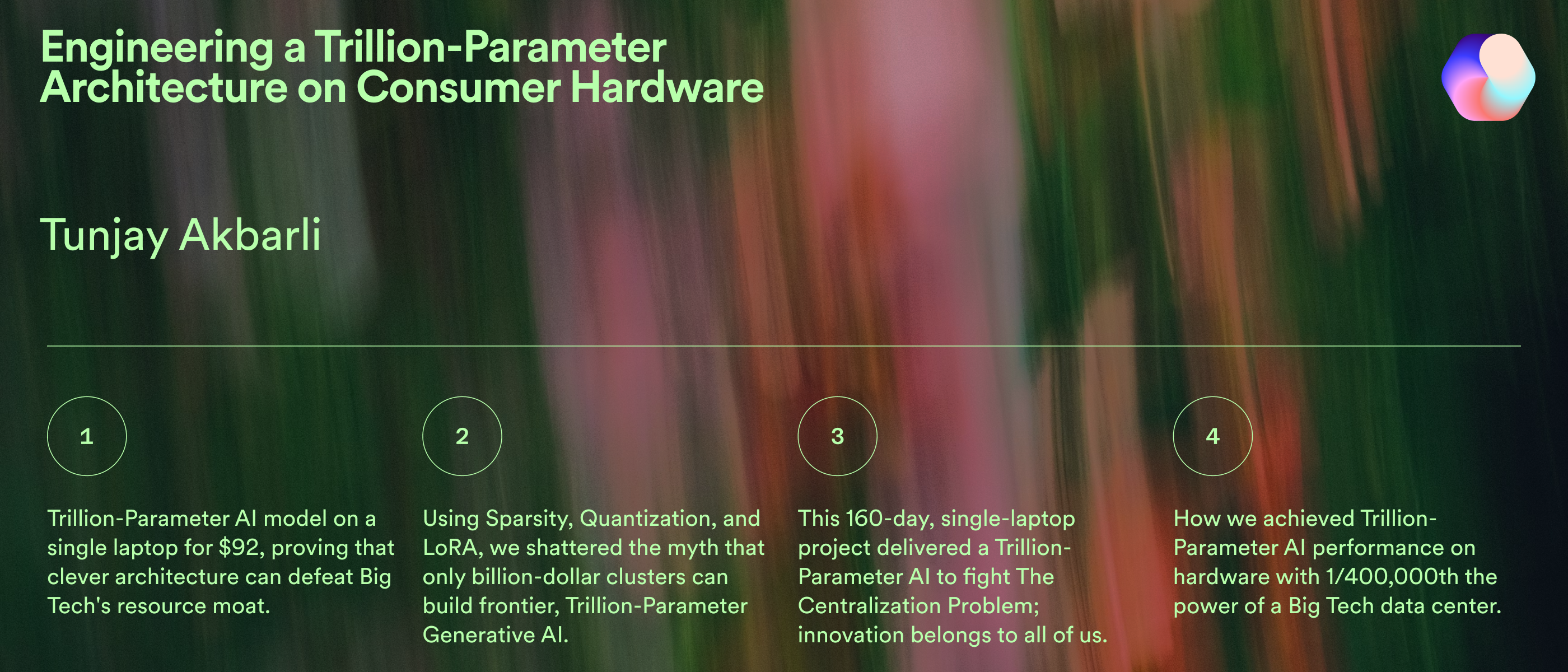

112. Engineering a Trillion-Parameter Architecture on Consumer Hardware

A deep dive into how one researcher trained a trillion-parameter-scale AI model on an RTX 4080 laptop, proving the democratization of LLMs is possible.

113. AI & Blockchain Won't Compete Against One Another But Work Together To Build A New Economic System

It is hard to estimate the direct economic impact on our society that AGI will have. Instead of fighting against AGI, we should embrace it. It is happening now!

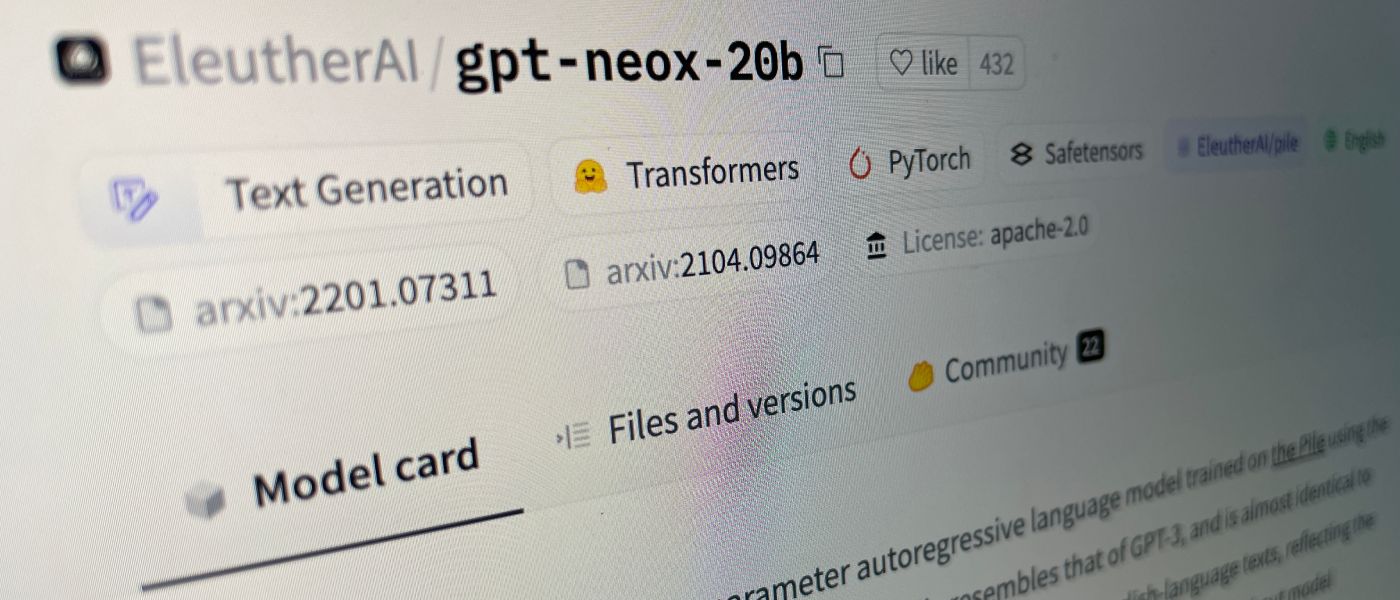

114. From LLaMA 2 to CodeGen: Navigating the World of Open-Source LLMs

The world of artificial intelligence (AI) is undergoing a seismic shift, largely driven by

115. Grok 4 Claims “PhD‑level” Intelligence but at a Cost

xAI’s latest models arrive with claims of “PhD‑level” intelligence across every discipline.

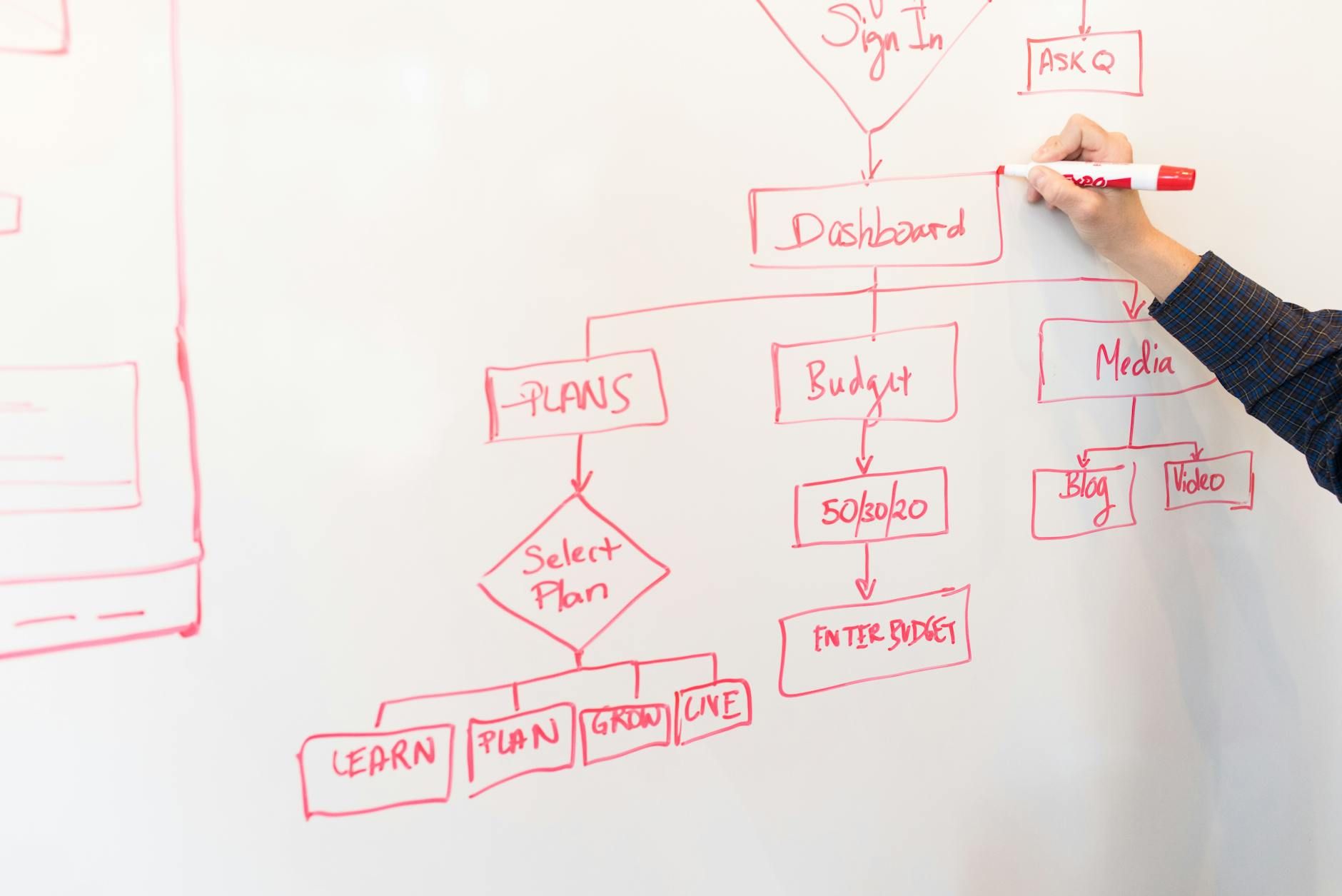

116. Learn to Generate Flow Charts With This Simple AI Integration

Integrating Large Language Models with diagramming tools like Mermaid and UML is revolutionizing software development.

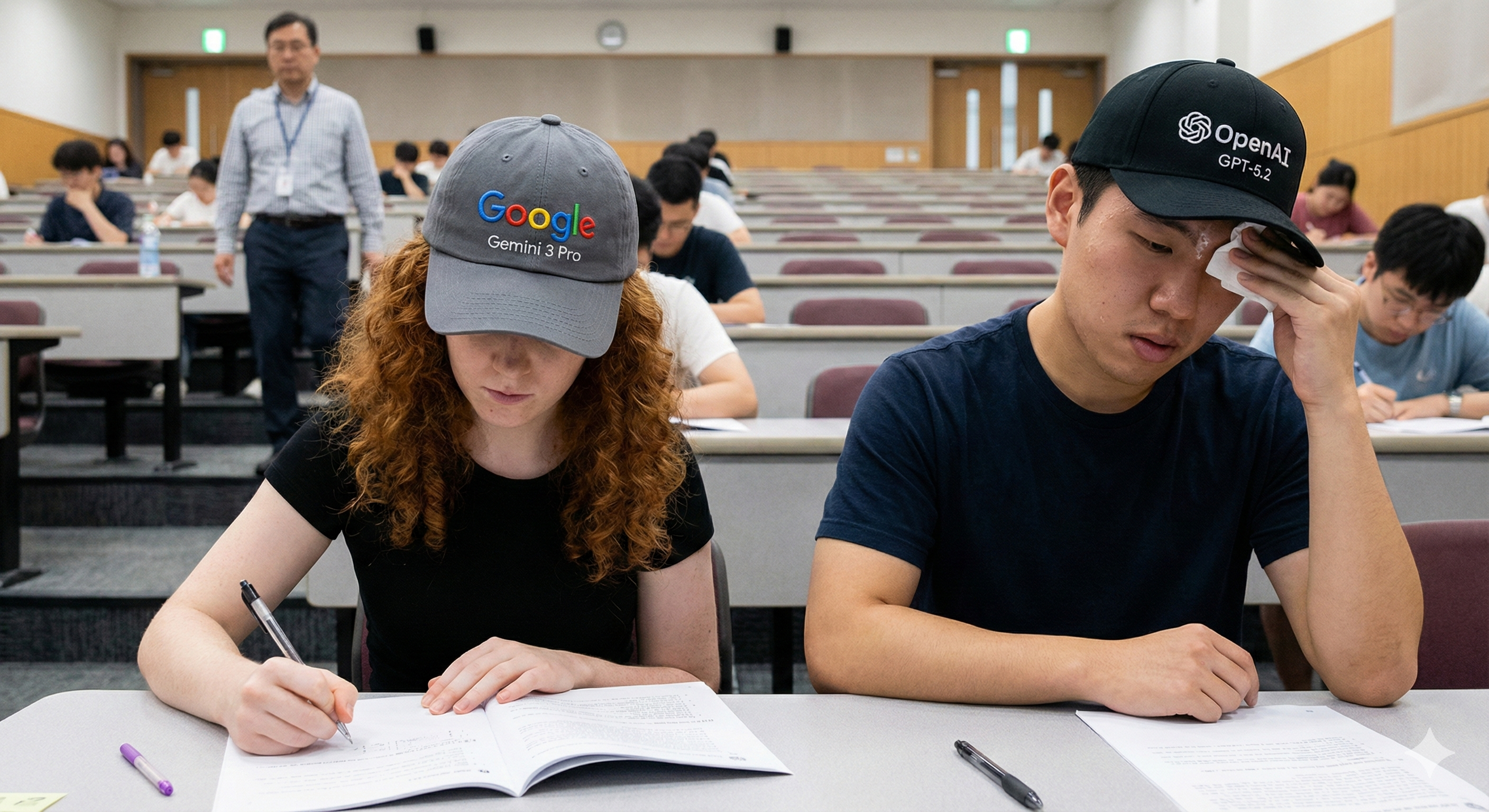

117. OpenAI GPT-5.2: The “Cheating” Controversy

Is OpenAI GPT-5.2 actually better than Google Gemini 3 Pro? If you strip away the extra "thinking" time used in the benchmarks, the gap disappears.

118. Prompt Length vs. Context Window: The Real Limits of LLM Performance

How prompt length interacts with an LLM's context window: why it matters, how it breaks, and how to design prompts that stay sharp and scalable.

119. Schema In, Data Out: A Smarter Way to Mock

MockingJar is a tool for generating structured data from a schema you define.

120. How LLMs like ChatGPT Can Change the Way We Trade

It's no secret that large language models (LLMs) like ChatGPT have transformed how we work today. The crypto trading landscape is no different.

121. How vLLM Prioritizes a Subset of Requests

In vLLM, we adopt the first-come-first-serve (FCFS) scheduling policy for all requests, ensuring fairness and preventing starvation.
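A toy version of FCFS admission can be sketched as below, assuming the policy described in the vLLM paper: earliest-arrived requests are served first and, when memory runs out, the latest-arrived requests are preempted first. It simplifies each request to a fixed number of KV cache blocks; `Request`, `FCFSScheduler`, `admit` and `preempt_latest` are hypothetical names, not vLLM's scheduler API.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List


@dataclass
class Request:
    req_id: str
    arrival: int        # lower value = arrived earlier
    blocks_needed: int  # simplification: fixed KV cache footprint per request


@dataclass
class FCFSScheduler:
    total_blocks: int
    waiting: Deque[Request] = field(default_factory=deque)
    running: List[Request] = field(default_factory=list)

    def used_blocks(self) -> int:
        return sum(r.blocks_needed for r in self.running)

    def add(self, req: Request) -> None:
        # Callers are assumed to add requests in arrival order.
        self.waiting.append(req)

    def admit(self) -> List[str]:
        """Admit waiting requests strictly in arrival order, stopping at the first
        one that does not fit so that later arrivals never jump the queue."""
        admitted = []
        while self.waiting and (
            self.used_blocks() + self.waiting[0].blocks_needed <= self.total_blocks
        ):
            req = self.waiting.popleft()
            self.running.append(req)
            admitted.append(req.req_id)
        return admitted

    def preempt_latest(self) -> str:
        """Free memory by evicting the most recently arrived running request.
        It returns to the head of the queue because it arrived before
        everything that is still waiting."""
        victim = max(self.running, key=lambda r: r.arrival)
        self.running.remove(victim)
        self.waiting.appendleft(victim)
        return victim.req_id


sched = FCFSScheduler(total_blocks=4)
for r in [Request("a", 0, 2), Request("b", 1, 1), Request("c", 2, 2)]:
    sched.add(r)
print(sched.admit())  # "c" does not fit yet, so it keeps waiting
```

Stopping at the first request that does not fit is the design choice that preserves fairness: a small, late request cannot overtake a large, early one.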

122. 11 Best AI Chat Tools for Developers in 2024

11 best AI chat tools for developers to maximize productivity.

123. Mamba Architecture: What Is It and Can It Beat Transformers?

Explore Mamba, an innovative architecture surpassing Transformers in efficiency for long sequences, promising advancements in AI with its flexible design.

124. From Cloud to Desk: 3 Signs the AI Revolution is Going Local

When it comes to AI, smaller is better.

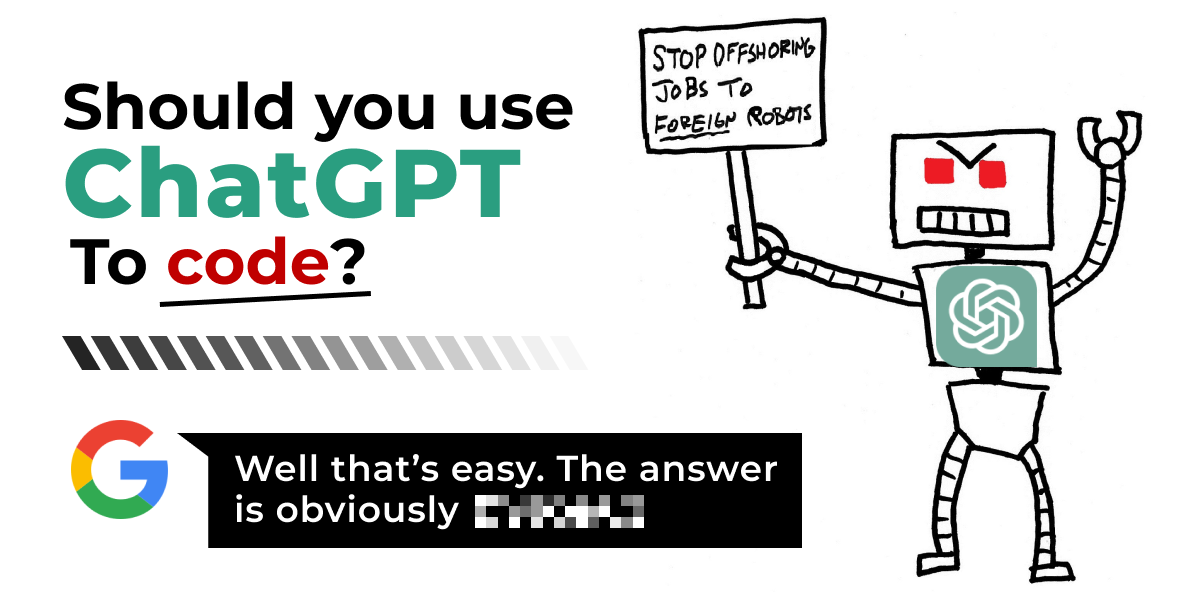

125. My New Junior Developer Kinda Sucks

ChatGPT is all the rage these days. Is it really that good for developers though?

126. A Petabyte-Scale Vector Store for the Future of AGI

Why your laptop won't cut it in the age of vector embeddings, LLMs, and artificial general intelligence

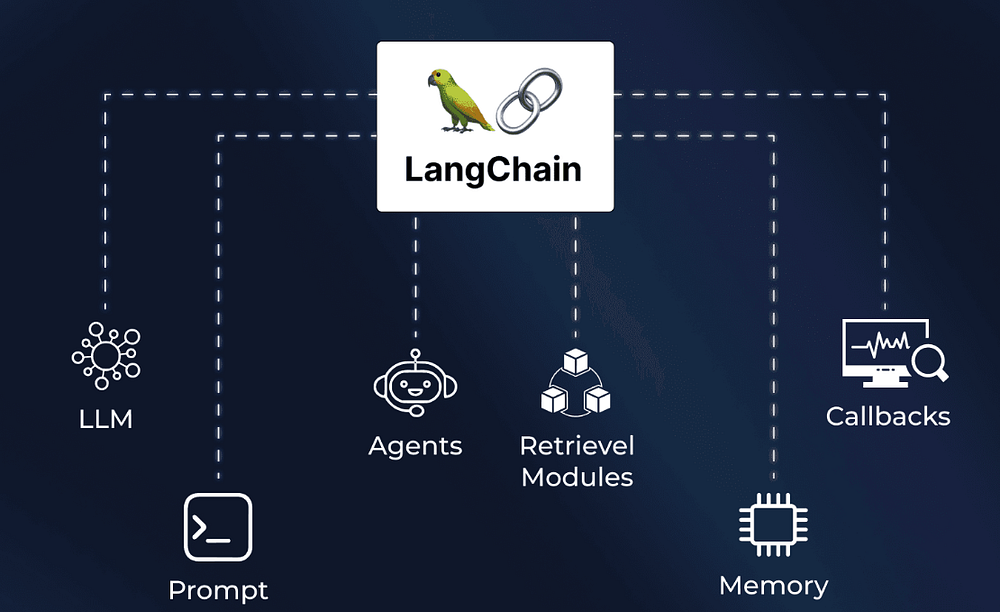

127. Langchain: Explained and Getting Started

LangChain is a crucial component for developing LLM applications. It helps with orchestration and acts as a building block.

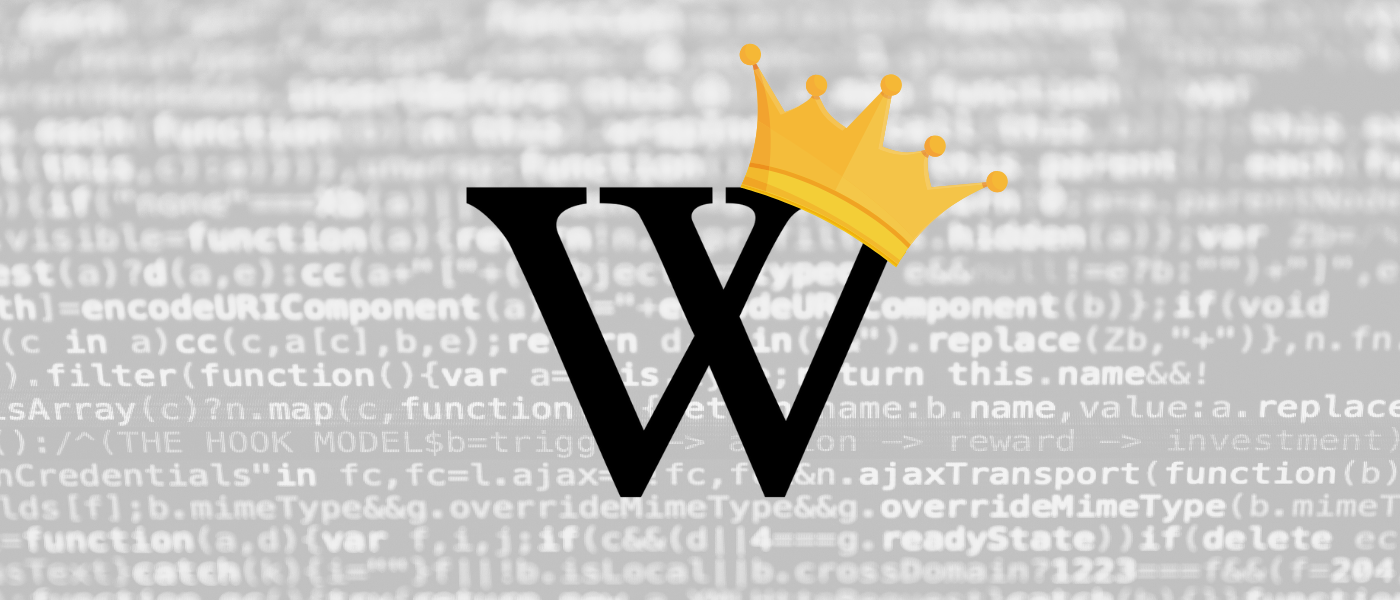

128. Wikipedia Rules Everything Around Me

Wikipedia is the internet’s true power broker and the backbone of AI. Here’s why it defines your digital reputation, and how not to be left behind.

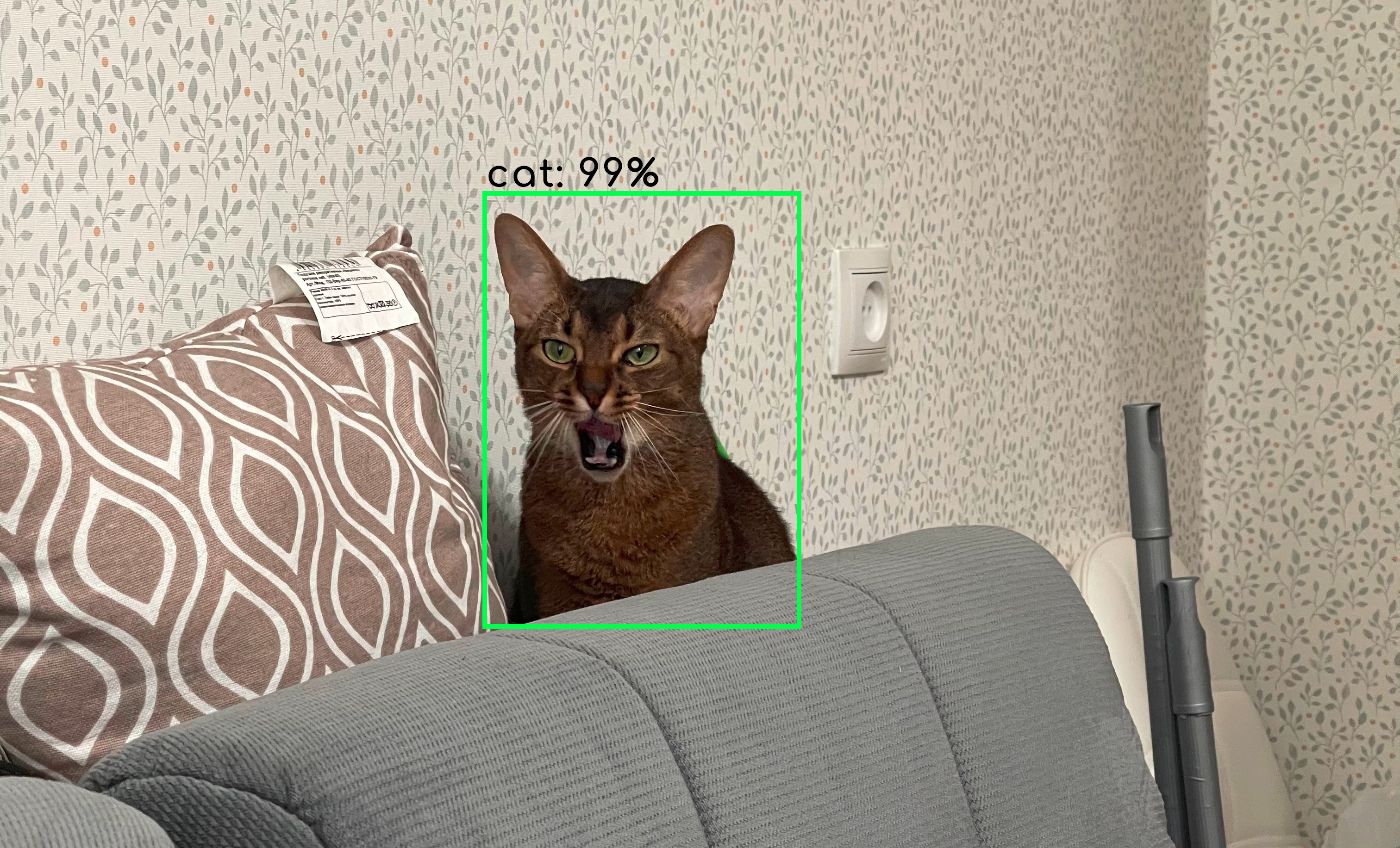

129. AI’s Non-Determinism, Hallucinations, And... Cats?

AI and cats can be random. Learn why AI isn’t always deterministic, how stochastic processes shape its decisions, and why it self-corrects and hallucinates.
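One common stochastic process in LLM output is temperature sampling over next-token scores. The sketch below is a minimal, hypothetical illustration with made-up logits for three candidate tokens, not code from the article; it shows why the same prompt can yield different tokens at nonzero temperature and a fixed choice under greedy decoding. Sampling is one source of run-to-run variation, not the only one.

```python
import math
import random
from typing import List, Sequence


def softmax_with_temperature(logits: Sequence[float], temperature: float) -> List[float]:
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(logits: Sequence[float], temperature: float, rng: random.Random) -> int:
    if temperature == 0:
        # Greedy decoding: always pick the highest-scoring token.
        return max(range(len(logits)), key=lambda i: logits[i])
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [2.0, 1.0, 0.0]  # hypothetical scores for three candidate tokens
rng = random.Random(42)
print([sample_next_token(logits, 1.0, rng) for _ in range(10)])  # varies with the seed
print([sample_next_token(logits, 0, rng) for _ in range(10)])    # always token 0
```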

130. One Is Eager, Another Is a Bootlicker, and the Other Is Unhinged: Decoding the Personalities of AI

What happens when you put ChatGPT, Claude, and Grok through the Big Five personality test? Spoiler: they’re eager, brown-nosing, and unhinged.

131. ChatGPT Vs. ChatGPT: How to Detect Text Generated Using the AI Language Model

ChatGPT can help you assess if a text has been written by an LLM.

132. Comparing Kolmogorov-Arnold Network (KAN) and Multi-Layer Perceptrons (MLPs)

Discover how Kolmogorov-Arnold Networks (KAN) challenge traditional MLPs with trainable activation functions, offering a potential leap toward AGI.

133. Need More Relevant LLM Responses? Address These Retrieval Augmented Generation Challenges

We look at how suboptimal embedding models, inefficient chunking strategies and a lack of metadata filtering can make it hard to get relevant responses from you

134. Transforming the Reading Experience with BookNote.AI by WebLab Technology

BookNote.AI: exploring the content of any book through an AI assistant.

135. How to Create Your Own AnythingGPT — a Bot That Answers the Way You Want it to

We will be talking about creating a customized version of ChatGPT that answers questions, taking into account a large knowledge base.

136. The EU AI Act: Implications for SEO on LLMs

This article untangles the tech jargon and charts a simple course for understanding the implications of the EU's regulation for SEO on LLMs.

137. LLMs + Vector Databases: Building Memory Architectures for AI Agents

Why AI agents need vector databases and smarter memory architectures—not just bigger context windows—to handle real-world tasks like academic research

138. How to Manage Permissions in a Langflow Chain for LLM Queries Using Permit.io

This article explores how to implement a permission system in Langflow workflows using Permit.io’s ABAC capabilities.

139. LLMs Are Transforming AI Apps: Here's How

Building apps with unreal levels of personalized context has become a reality for anyone who has the right database, a few lines of code, and an LLM like GPT-4.

140. Why AI Agent Reliability Depends More on the Harness Than the Model

Real‑world tasks expose the bottleneck: not the model, but the scaffolding that wraps it.

141. Using LLMs to Mimic an Evil Twin Could Spell Disaster

Who knew that chatbot prompts would one day become significant enough to be a potential career? And not just a noble one.

142. LLMs in Data Engineering: Not Just Hype, Here’s What’s Real

Large Language Models (LLMs) are artificial intelligence systems that learn human language from massive text datasets.

143. The Unseen Variable: Why Your LLM Gives Different Answers (and How We Can Fix It)

This article dives deep into the Thinking Machines Lab publication that addresses the challenge of achieving reproducibility in LLM inference.

144. Security Threats to High Impact Open Source Large Language Models

Rapidly growing open-source LLM projects often exhibit an immature security posture, which necessitates the adoption of enhanced security standards.

145. What LLMs Still Can't Do

This article explores what common sense is, discusses Hubert L. Dreyfus' critique of AI's common sense capabilities, and examines persistent AI limitations.

146. How LLMs and Vector Search Have Revolutionized Building AI Applications

Thanks to large language models and vector search, building AI applications is much simpler for developers.

147. Getting to Know Google's Agent2Agent Protocol

A no-code introduction to Google's Agent2Agent (A2A) Protocol for the interoperability of AI Agents

148. I Built a Local AI Firewall and made it Open Source Because Nobody Else Was Going To!

I spent almost a year building an open source AI firewall after watching teams leak user SSNs to cloud LLMs. 81 engines, 52K lines, runs locally. MIT licensed.

149. Building AxonerAI: A Rust Framework for Agentic Systems

AxonerAI: a Rust framework for building AI agents. An alternative to LangChain with memory safety, true concurrency and blazing-fast execution.

150. AI Safety and Alignment: Could LLMs Be Penalized for Deepfakes and Misinformation?

Penalty-tuning for LLMs: Where they can be penalized for misuses or negative outputs, within their awareness, as another channel for AI safety and alignment.

151. The Future of Learning is Here: Google’s Learn Your Way Revolutionizes Textbooks with Generative AI!

Google’s “Learn Your Way,” now available on Google Labs, is a research experiment that leverages generative AI (GenAI) to transform educational materials.

152. How People Use ChatGPT

A groundbreaking NBER Working Paper, “How People Use ChatGPT”, finally pulls back the curtain on this phenomenon.

153. Let's Build a Free Web Scraping Tool That Combines Proxies and AI for Data Analysis

Learn how to combine web scraping, proxies, and AI-powered language models to automate data extraction and gain actionable insights effortlessly.

154. Salesforce Developer Creates LLM Assistant That Runs Locally On Your Machine

I built a Salesforce Lightning Web Component that lets you run powerful AI language models (LLMs) directly on your computer within Salesforce.

155. Achieving Relevant LLM Responses By Addressing Common Retrieval Augmented Generation Challenges

We look at common problems that can arise with RAG implementations and LLM interactions.

156. Primer on Large Language Model (LLM) Inference Optimizations: 2. Introduction to Artificial Intelligence (AI) Accelerators

This post explores AI accelerators and their impact on deploying Large Language Models (LLMs) at scale.

157. Behind the Scenes of Large Language Models: A Conversation with Jay Alammar

In this 16th episode of "The What's AI Podcast," I had the privilege of speaking with Jay Alammar, a prominent AI educator and blogger.

158. You Should Try a Local LLM Model: Here's How to Get Started

In this article, we will explore how to integrate a local LLM model like LLaMA into Obsidian on a Mac.

159. PagedAttention: An Attention Algorithm Inspired By the Classical Virtual Memory in Operating Systems

To address the memory wasted when the KV cache is managed inefficiently (through fragmentation and redundant duplication), we propose PagedAttention, an attention algorithm inspired by the classical virtual memory and paging techniques in operating systems.
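The paging analogy can be made concrete with a small sketch: each sequence keeps a block table that maps its logical KV cache blocks to physical blocks handed out on demand from a shared free list, so one sequence's blocks need not be contiguous. This is a hypothetical, bookkeeping-only illustration under the assumption of a fixed block size; `BlockAllocator` and `SequenceKVCache` are made-up names, not vLLM classes, and no attention math is performed.

```python
from typing import List, Tuple


class BlockAllocator:
    """Hands out fixed-size physical KV cache blocks from a free list."""

    def __init__(self, num_blocks: int) -> None:
        # Stored in reverse so that pop() yields block 0, then 1, then 2, ...
        self.free_blocks: List[int] = list(range(num_blocks - 1, -1, -1))

    def allocate(self) -> int:
        if not self.free_blocks:
            raise MemoryError("no free KV cache blocks")
        return self.free_blocks.pop()

    def release(self, block: int) -> None:
        self.free_blocks.append(block)


class SequenceKVCache:
    """Per-sequence block table mapping logical blocks to physical blocks."""

    def __init__(self, allocator: BlockAllocator, block_size: int) -> None:
        self.allocator = allocator
        self.block_size = block_size
        self.block_table: List[int] = []  # index = logical block, value = physical block
        self.num_tokens = 0

    def append_token(self) -> None:
        # Grab a new physical block only when the current last block is full,
        # so at most one partially filled block is wasted per sequence.
        if self.num_tokens % self.block_size == 0:
            self.block_table.append(self.allocator.allocate())
        self.num_tokens += 1

    def physical_slot(self, token_idx: int) -> Tuple[int, int]:
        """Translate a token position into (physical block, offset), like a page table."""
        if not 0 <= token_idx < self.num_tokens:
            raise IndexError(token_idx)
        return self.block_table[token_idx // self.block_size], token_idx % self.block_size

    def release(self) -> None:
        for block in self.block_table:
            self.allocator.release(block)
        self.block_table.clear()
        self.num_tokens = 0


alloc = BlockAllocator(num_blocks=8)
seq_a = SequenceKVCache(alloc, block_size=4)
seq_b = SequenceKVCache(alloc, block_size=4)
for _ in range(4):
    seq_a.append_token()  # fills physical block 0
seq_b.append_token()      # physical block 1
seq_a.append_token()      # seq_a's second logical block lands in physical block 2
print(seq_a.block_table, seq_b.block_table)  # [0, 2] [1]
```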

160. GPT4All: Limitations and References

By enabling access to large language models, the GPT4All project also inherits many of the ethical concerns associated with generative models.

161. Beyond the Hype: How Small Language Models and Knowledge Graphs are Redefining Domain-Specific AI

The paper establishes the importance of a combination of Small Language Models (SLMs) with their smallness and modularity in control and fine-tuning in a narrow

162. Which AI Model Should You Use? (Check Benchmarks)

What types of AI models are available, what their names represent, and how they are scored.

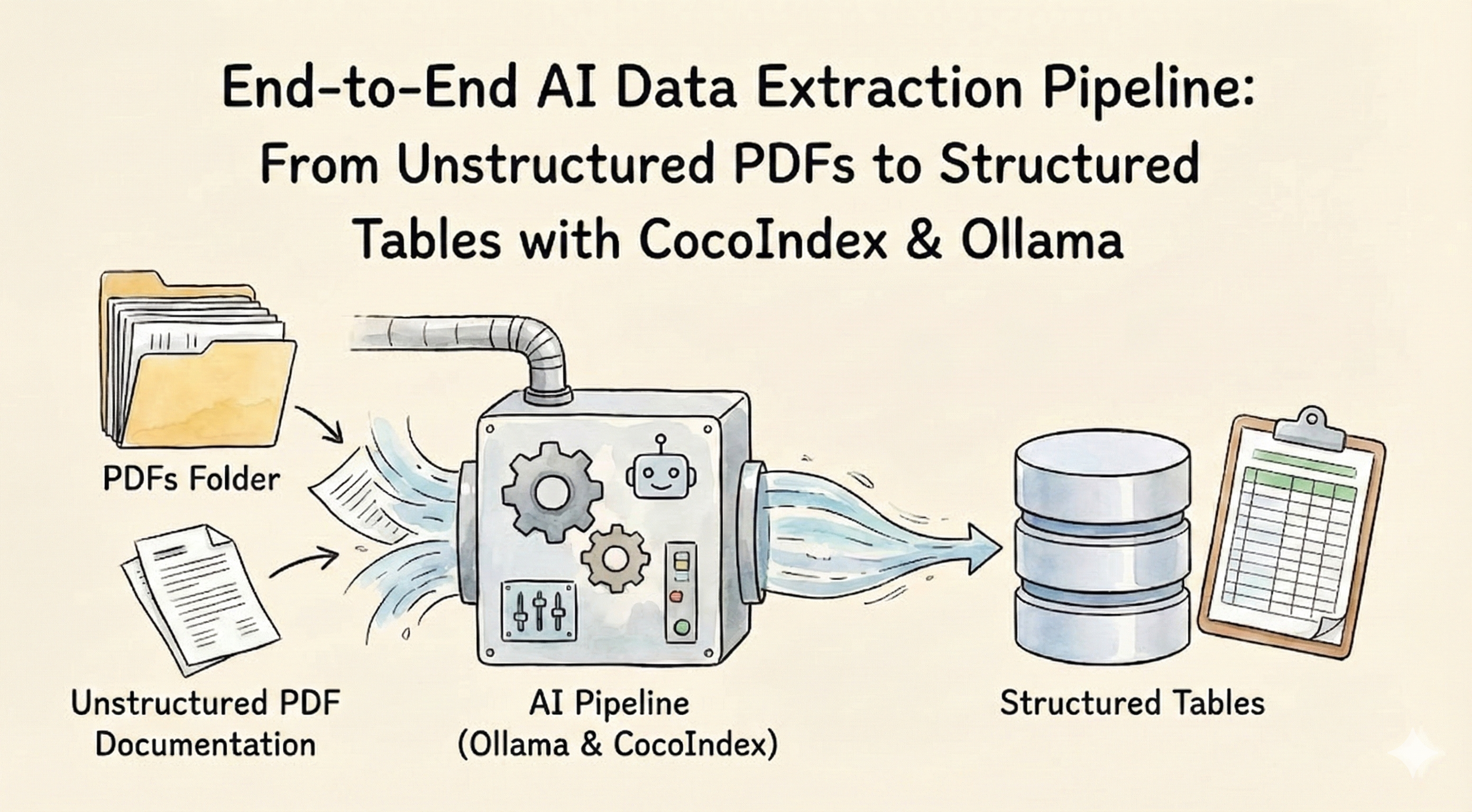

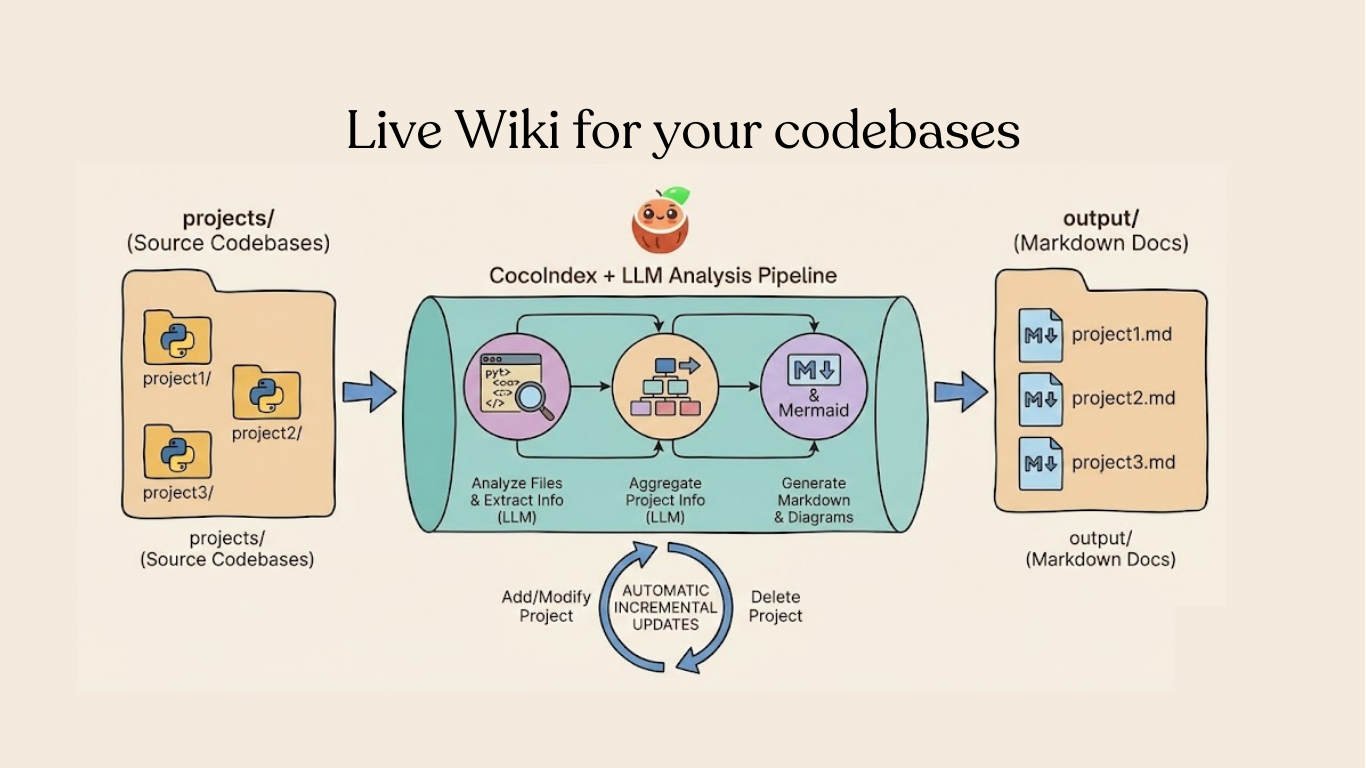

163. PDFs to Intelligence: How To Auto-Extract Python Manual Knowledge Recursively Using Ollama, LLMs

Learn how to automate extraction of structured Python module data from PDFs using CocoIndex, LLMs like Llama3, and Ollama. Scale technical documentation by buil

164. Beyond Brute Force: 4 Secrets to Smaller, Smarter, and Dramatically Cheaper AI

On-policy distillation is more than just another training technique; it's a foundational shift in how we create specialized, expert AI.

165. MCP Explained: The Protocol That Unblocked Real AI Agent Ecosystems

Agentic AI replaces passive chatbots with goal-driven agents; MCP standardizes tools, enabling safe, scalable human-AI collaboration.

166. New AI Model Can ‘Think About Thinking’ Without Extra Training

The emergence of metacognitive behaviors in the State Stream Transformer architecture challenges fundamental assumptions about language model capabilities.

167. How AI Companions Impact the Gaming Experience

Games no longer need to rely on scripted sidekicks; instead, they can use AI companions. Here's how that's impacting the gaming experience.

168. 100 Days of AI Day 2: Enhancing Prompt Engineering for ChatGPT

On day 2 of 100 Days of AI, we learn prompt engineering tips for optimal AI output.

169. LLMOps: DevOps Strategies for Deploying Large Language Models in Production

Learn how to productionize large language models (LLMs) using AWS EKS, Kubernetes, and GPU-backed scaling. This guide covers LLMOps practices, model deployment.

170. 10 Open-Source LLMs That Will Rock Your Dev World in 2024

Forget weeks wrestling with NLP! Explore 10 trending open-source LLMs that will revolutionize your dev workflow in 2024. Unleash the power of AI

171. Your AI Has Amnesia: A New Paradigm Called 'Nested Learning' Could Be the Cure

This post breaks down the three most surprising and impactful ideas from this research, explaining how they could give AI the ability to learn continually.

172. Here's What Every Business Should Know About Large Language Models

In this article, we share our decade-long experience as an AI software development firm and dive into the world of LLMs

173. 3 Experiments That Reveal the Shocking Inner Life of AI Introduction: Is Anybody Home?

Researchers used a technique called "concept injection" to test whether AI can notice its own internal states.

174. Revolutionising Chatbots: The Rise of Retrieval Augmented Generation (RAG)

Learn the value of Retrieval Augmented Generation (RAG) in AI, revolutionizing customer interactions & enhancing dialogue systems with advanced NLP capabilities

175. How Large Language Models Enhance Cybersecurity: From Threat Detection to Compliance Analysis

Explore the diverse applications of Large Language Models (LLMs) in cybersecurity.

176. Comparing VERSES AI to OpenAI: A Talk With ChatGPT4

In a mere three-question conversation with ChatGPT4, the bot provided a solid basis for understanding the issues facing LLMs and the advantages of Active Inference AI over LLMs.

177. Transformers, Finally Explained

Learn transformer architecture through intuitive analogies and visual diagrams.

178. HuggingFace Chooses Arch (Router) for Omni Chat

HuggingFace Chooses Arch-Router for Omni Chat! Arch creator Salman Paracha details the significance in his HackerNoon post.

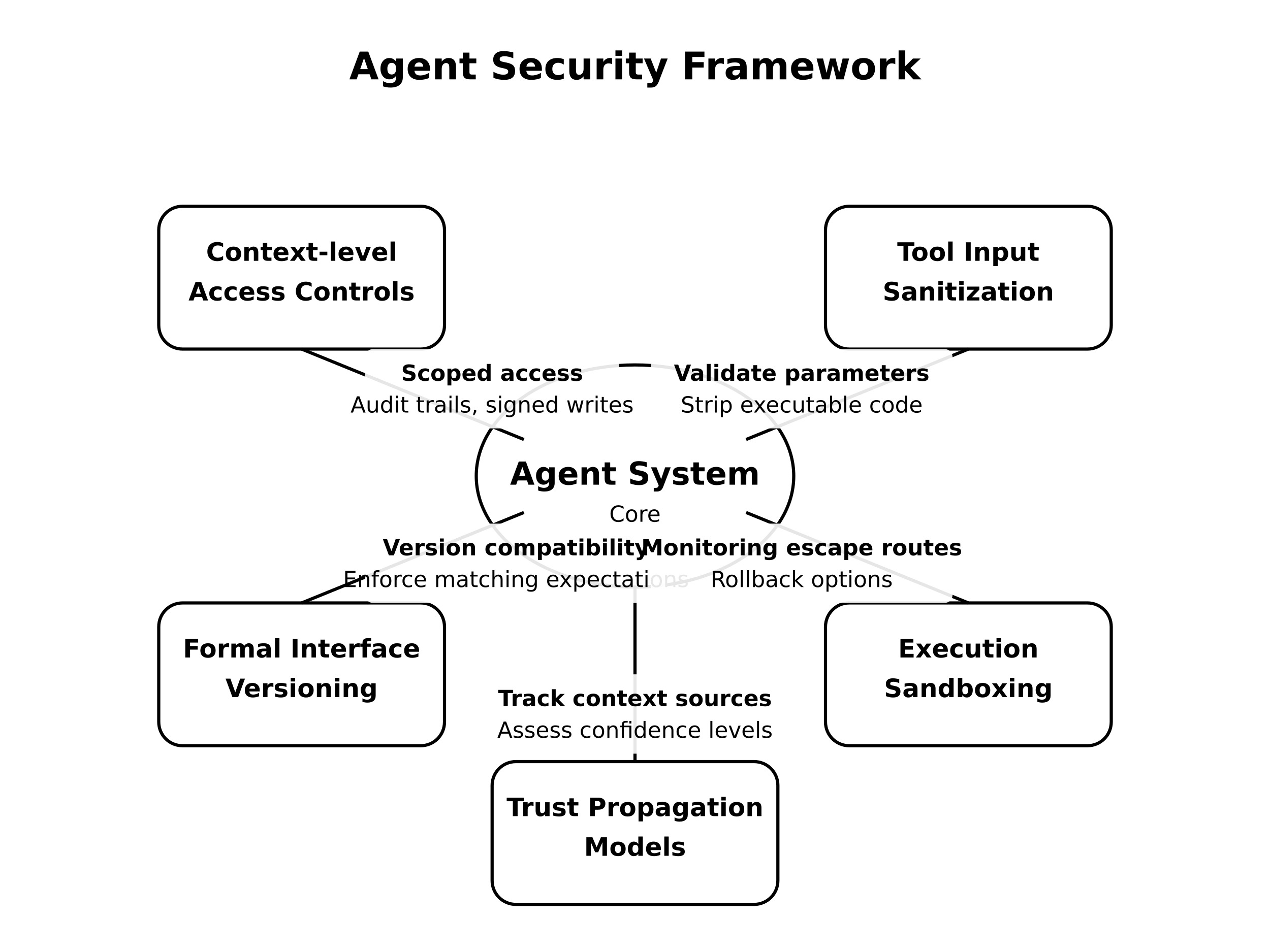

179. MCP Is a Security - Here’s How the Agent Security Framework Fixes It

Learn about the security risks in MCP and how the Agent Security Framework can safeguard your AI agents from attacks and data breaches

180. Taming LLMs with Langchain + Langgraph

How to fix LLMs and chat bots with Langchain and Langgraph.

181. How DeepSeek Works - Simplified

Today, we’ll be talking about DeepSeek in depth, including its architecture and, most importantly, how it’s any different from OpenAI’s ChatGPT.

182. RAG Systems Are Breaking the Barriers of Language Models: Here's How

Explore how RAG systems differ from traditional large language models by leveraging real-time data access and applications.

183. Breaking down GPU VRAM consumption

What factors influence VRAM consumption? How does it vary with different model settings? I dug into the topic and took my own measurements.
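Two of the biggest factors can be estimated with back-of-the-envelope arithmetic: the weights (parameter count × bytes per parameter) and the KV cache (2 × layers × KV heads × head dimension × sequence length × batch size × bytes per value). The sketch below is a rough, hypothetical estimator, not the article's measurements; it ignores activations, framework and CUDA context overhead, and fragmentation, and the Llama-2-7B-like shape (32 layers, 32 KV heads of size 128, fp16) is an assumption.

```python
GIB = 1024 ** 3


def weight_bytes(num_params: float, bytes_per_param: float) -> float:
    """Memory for the model weights alone (2 bytes/param for fp16/bf16, 0.5 for 4-bit)."""
    return num_params * bytes_per_param


def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int,
    bytes_per_value: int,
) -> int:
    """KV cache size: one key and one value vector (hence the factor 2)
    per token, per KV head, per layer, per sequence in the batch."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_value


# Assumed Llama-2-7B-like shape, fp16 weights and cache, one 4096-token sequence.
weights = weight_bytes(7e9, 2)
kv = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=1, bytes_per_value=2)
print(f"weights ≈ {weights / GIB:.1f} GiB, KV cache = {kv / GIB:.1f} GiB")
# weights ≈ 13.0 GiB, KV cache = 2.0 GiB
```

The KV cache term grows linearly with both sequence length and batch size, which is why long contexts and large batches can exhaust VRAM even when the weights fit. Models that use grouped-query attention have fewer KV heads than attention heads, which shrinks this term proportionally.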

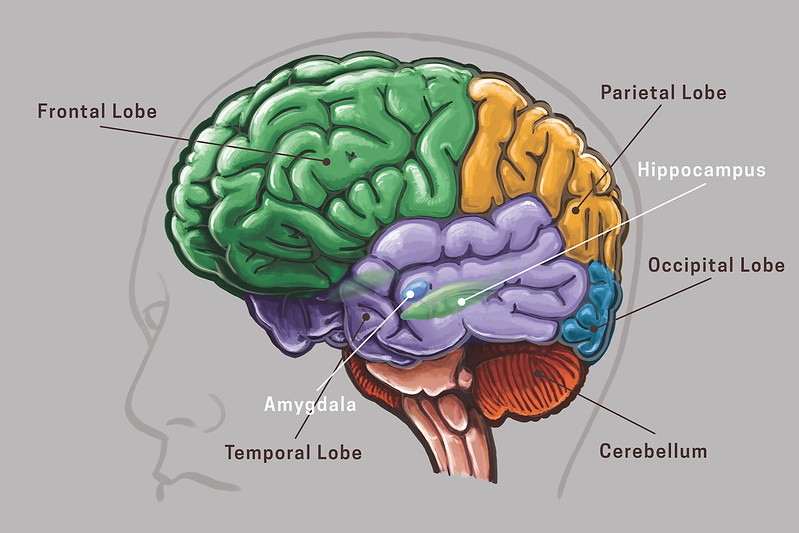

184. Panpsychism: Quantum Superposition and Entanglement or Qubits without Cells?

To investigate quantum superposition or qubits as the basis for consciousness, experiments should be designed for wood, glass, a liquid or gas.

185. How to Leverage LLMs for Effective and Scalable Software Development

The following are some key coding principles and development practices that can be applied to LLM-assisted software development

186. I Reverse-engineered How 23 'AI-first' Companies Actually Build Their Products

So I spend way too much time looking at how companies claiming to be "AI-powered" or "built with AI" actually implement their tech.

187. Summarize a Story in 10 Seconds with ChatGPT or Bard

Learn how to quickly summarize any text with ChatGPT Summarize and become more productive.

188. Instruction Tuning and Custom Instruction Libraries: Your Model’s Real ‘Operating Manual’

A practical guide to Instruction Tuning and building custom instruction libraries so your LLM follows rules reliably across many tasks.

189. How to Prioritize AI Projects Amidst GPU Constraints

A new way to prioritize AI projects that maximizes value to the business while optimizing for GPU constraints.

190. A Pivotal Moment in AI: Leaders Rally for a Natural AI Initiative

A collective of neuroscientists, biologists, physicists, AI experts and World Leaders came together to propose a radical rethinking of AI's trajectory.

191. Is Anthropic's Alignment Faking a Significant AI Safety Research?

How the mind works [of human and of AI] is not by labels, like induction or deduction, but by components, their interactions, and features.

192. Hallucinations Are A Feature of AI, Humans Are The Bug

Large language models were never meant to be sources of absolute truth. Yet, we continue to treat them as such.

193. Recursive Language Models - Maybe a Newer Era of Prompt Engineering?

Have you tried feeding a massive document into ChatGPT or Claude? Sometimes, it gives good insights, and sometimes, you've hit the wall.

194. ChatGPT 4.0 Finally Gets a Joke

Reasoning: ChatGPT 4.0 got the joke; ChatGPT 3.5 did not.

Creativity: ChatGPT 4.0 does a better job.

Analytics: ChatGPT 4.0 is a better programmer than ChatGPT 3.5.

195. ChipNeMo: Domain-Adapted LLMs for Chip Design: Abstract and Intro

Researchers present ChipNeMo, using domain adaptation to enhance LLMs for chip design, achieving up to 5x model size reduction with better performance.

196. How to Make Your LLM Fully Utilize the Context

A data-driven approach that introduces a pipeline for synthesizing a new dataset that trains LLMs to make full use of long contexts.

197. Do You Speak Vector? Understanding the Language of LLMs and Generative AI

Let’s dig into vectors, vector search and the kinds of databases that can store and query vectors.
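At its core, vector search ranks stored vectors by similarity to a query vector. The sketch below is a minimal, hypothetical brute-force version using cosine similarity over hand-made 3-dimensional "embeddings"; it is not code from the article. Vector databases typically replace the exhaustive scan with approximate nearest-neighbour indexes to scale to millions of vectors.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: Sequence[float], index: Dict[str, Sequence[float]], k: int = 2) -> List[Tuple[str, float]]:
    """Exact (brute-force) nearest-neighbour search: score every stored vector."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical toy "embeddings"; real embedding models output hundreds of dimensions.
INDEX = {
    "cats": [0.9, 0.1, 0.0],
    "dogs": [0.7, 0.3, 0.0],
    "stocks": [0.0, 0.1, 0.9],
}
print(top_k([1.0, 0.0, 0.0], INDEX))
```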

198. From Facebook to MindverseAI: Felix Tao's Insights on AI Evolution and the Future of Large Language

NLP expert discusses the evolution of AI, waking up the consciousness and the biggest issues with LLMs...

199. Beyond Linear Chats: Rethinking How We Interact with Multiple AI Models

Discover how visual mind maps, git-like versioning, prompt optimization, and PDF export transform LLM chat apps for efficient, organized research.

200. Ditch ChatGPT: Run A Fast LLM On Your Computer Instead

You can run something as powerful as Llama 3 locally and control the data. Let me show you how.

201. Humanizing AI Marketing: How to Make Automation Feel Authentic

Explore how marketers can humanize AI-driven campaigns. Blend automation with storytelling and audience insight to engage, convert, and build trust.

202. Prompt-Powered Personas: How AI Finally Fixes the Messy World of User Profiling

Use LLM prompts to turn messy customer data into living, evidence‑backed user personas in five practical steps, with prompts, cases, and pitfalls.

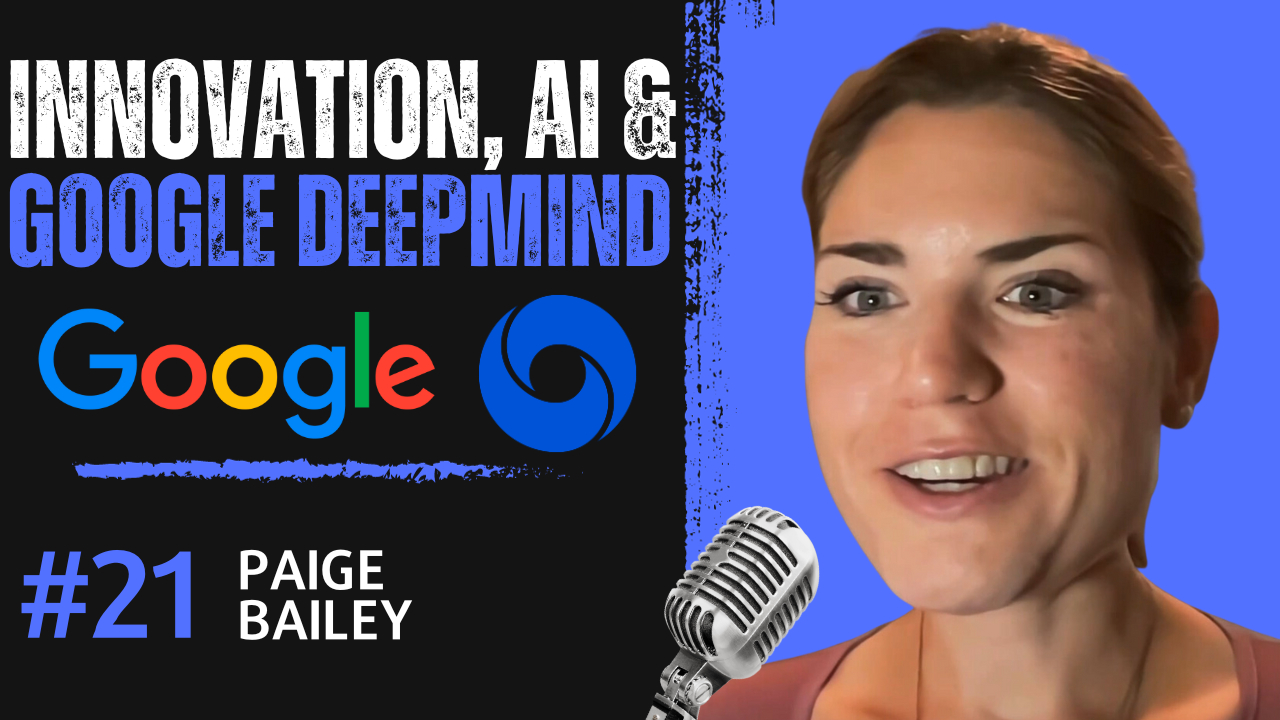

203. Paige Bailey: Pioneering Generative AI in Product Management at Google DeepMind

What is it like to build the best AI models and work at one of the most important AI companies, Google DeepMind?

204. Using the Power of AI for Tailored and Personalized Experiences

Learn how AI helps create personalized experiences in various services.

205. PagedAttention and vLLM Explained: What Are They?

This paper proposes PagedAttention, a new attention algorithm that allows attention keys and values to be stored in non-contiguous paged memory.

206. OpenAI o1 - Questionable Empathy

A discussion of OpenAI o1's ability to justify statements in the context of empathy. It makes the right decisions, but sometimes for the wrong reasons.

207. Revamping Long Short-Term Memory Networks: XLSTM for Next-Gen AI

xLSTMs, with their novel sLSTM and mLSTM blocks, aim to overcome the limitations of LSTMs and potentially surpass transformers for building next-gen language models.

208. DIY ChatGPT Plugin Connector

How I connected an external app to ChatGPT

209. Your AI Can’t Understand Language Until It Learns This Trick

Discover the power of word embeddings in NLP! Learn how these vector-based representations capture semantic and syntactic relationships between words.

210. Explainable AI and Prompting a Black Box in the Era of Gen AI

AI's "black box" dilemma grows as conversations with it become an everyday norm, veiling true understanding.

211. Yoshua Bengio Weighs in on the Pause and Building a World Model

This week I talked to Yoshua Bengio, one of the founders of deep learning, about augmenting large language models.

212. The Huel-ification of Thinking

We're treating intellectual work like 1800s nutritionists treated food—extracting components without knowing what we're losing.

213. How Frontier Labs Use FP8 to Train Faster and Spend Less

Naively casting to FP8 destroys your numerics. Here are the per-tensor and blockwise quantization mechanics that make it actually work at pretraining scale.

214. Improving Your LLM: Train, fine-tune, prompt, RAG... What to do?!

How to optimize your LLM as cheaply as possible

215. US Intelligence Seeks to Identify Large Language Model Security Risks

The US Intelligence Advanced Research Projects Activity (IARPA) issues a request for information (RFI) to identify potential threats and vulnerabilities in large language models.

216. Reflecting on AI in 2023: Magic, Hope, Innovation and Disruption

Discover the transformative force of Artificial Intelligence. Explore the latest trends, from NLP to deep learning, that are shaping the future of AI.

217. ChatGPT Translator VS Mine: Which One Is Better?

Is ChatGPT Translator really as good as many posts claim?

218. Retrieval-Augmented Generation: AI Hallucinations Be Gone!

Retrieval-Augmented Generation (RAG) shows promise in efficiently increasing the knowledge of LLMs and reducing the impact of AI hallucinations.

219. Leveraging RAG With Reddit URLs on Diabetes: Open Source LLMs for Enhanced Knowledge Retrieval

Given my interest in sourcing experiential data on diabetes using LLMs, I conducted this experiment with Ollama, one of many tools for running open-source LLMs locally.

220. Why RAG Is Failing at Complex Questions (And How Knowledge Graphs Fix It)

Your RAG system isn't failing because of your LLM. It's the retrieval architecture. Here's what replaces it.

221. The Evolution of AI and Data Privacy: How ChatGPT Is Shaping the Future of Digital Communication

ChatGPT is absorbing data at a faster pace than any other company in history, and if that balloon bursts, the ramifications for privacy will be unparalleled.

222. AI Makes Tech Less Terrifying For “Word People”

Discover how AI is reshaping the world for writers and creatives. Dive into how Natural Language Interfaces are making tech more accessible.

223. An Essential Guide on How to Seamlessly Build AI-Enhanced APIs With OpenAI

Today, we're announcing the WunderGraph OpenAI integration/Agent SDK to simplify the creation of AI-enhanced APIs...

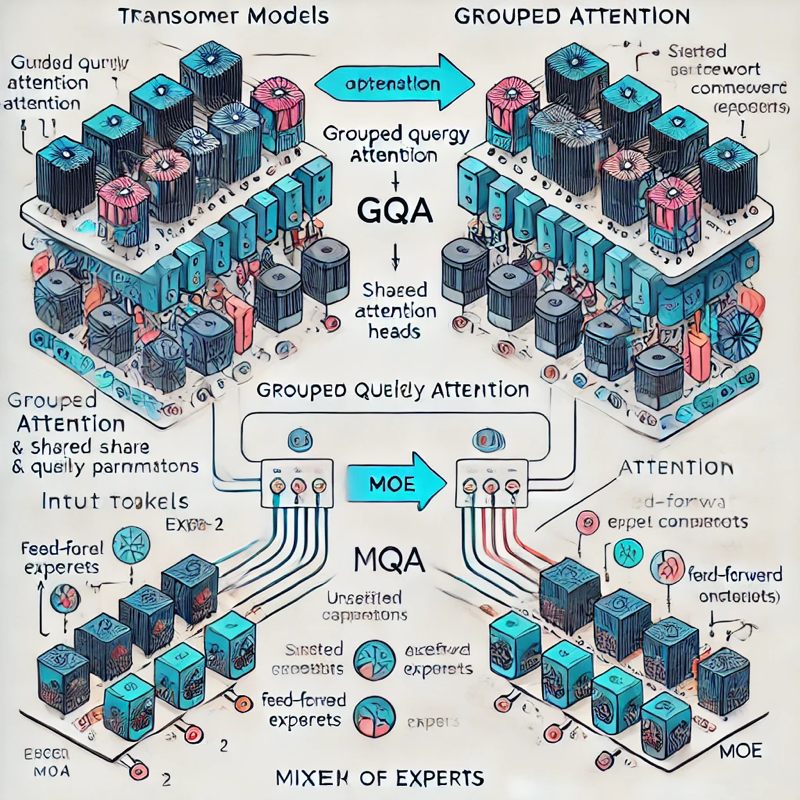

224. Primer on Large Language Model (LLM) Inference Optimizations: 3. Model Architecture Optimizations

An exploration of model architecture optimizations for Large Language Model (LLM) inference, focusing on Grouped-Query Attention (GQA) and Mixture of Experts (MoE).

225. Transforming CSV Files into Graphs with LLMs: A Step-by-Step Guide

Learn how to use LLMs to convert CSV files into graph data models for Neo4j, enhancing data modeling and insights from flat files.

226. Exploring Three Use Cases of Generative AI in the Healthcare Industry

Google Bard and ChatGPT can generate content at lightning speed, helping established professionals bond with patients and enhance their reputations. Here's how.

227. GPT4All: An Ecosystem of Open-Source Compressed Language Models

In this paper, we tell the story of GPT4All, a popular open source repository that aims to democratize access to LLMs.

228. AI Is Playing Favorite With Numbers

LLMs are as smart—and as biased—as the humans who trained them. Although AI can’t think for itself, we're just beginning to explore the depths of LLM psychology

229. I Built a RAG System for Our Analytics Team. It Worked Great Until We Added Real Data.

Everyone's demo uses 50 documents and a clean knowledge base. We had 14,000 files and a decade of conflicting policies.

230. Will AI Start Running Businesses for Us?

What happens when the software we once controlled begins to control itself and our businesses? Are we witnessing the birth of a new economic paradigm?

231. Exploring Graph RAG: Enhancing Data Access and Evaluation Techniques

What is Graph RAG, what can it do, and how do you evaluate it?

232. Demystifying AI Adoption in Business: A Guide to Leveraging ChatGPT Technologies With Azure & Google

There are now ways for organizations to use the power of large language models to help their office workers be more productive while retaining data ownership.

233. From Backlinks to Data Depth: How LLMs Are Rewriting Content Authority

Large Language Models (LLMs) are replacing Google-era content authority. LLMs are designed to find content that explains, defines, compares, or solves problems.

234. New Formula Could Make AI Agents Actually Useful in the Real World

A mathematical framework for optimizing large language models in multi-agent systems using a formal objective function balancing brevity and context.

235. AI Can Now Do Expert-Level Work (Almost). 5 Surprising Findings from a Landmark 'GDPval' Study

The results show that the best AI models are beginning to perform at a level comparable to highly experienced industry experts.

236. Fine-Tuning an LLM — The Six-Step Lifecycle

An end-to-end, bird's-eye view of the fine-tuning process.

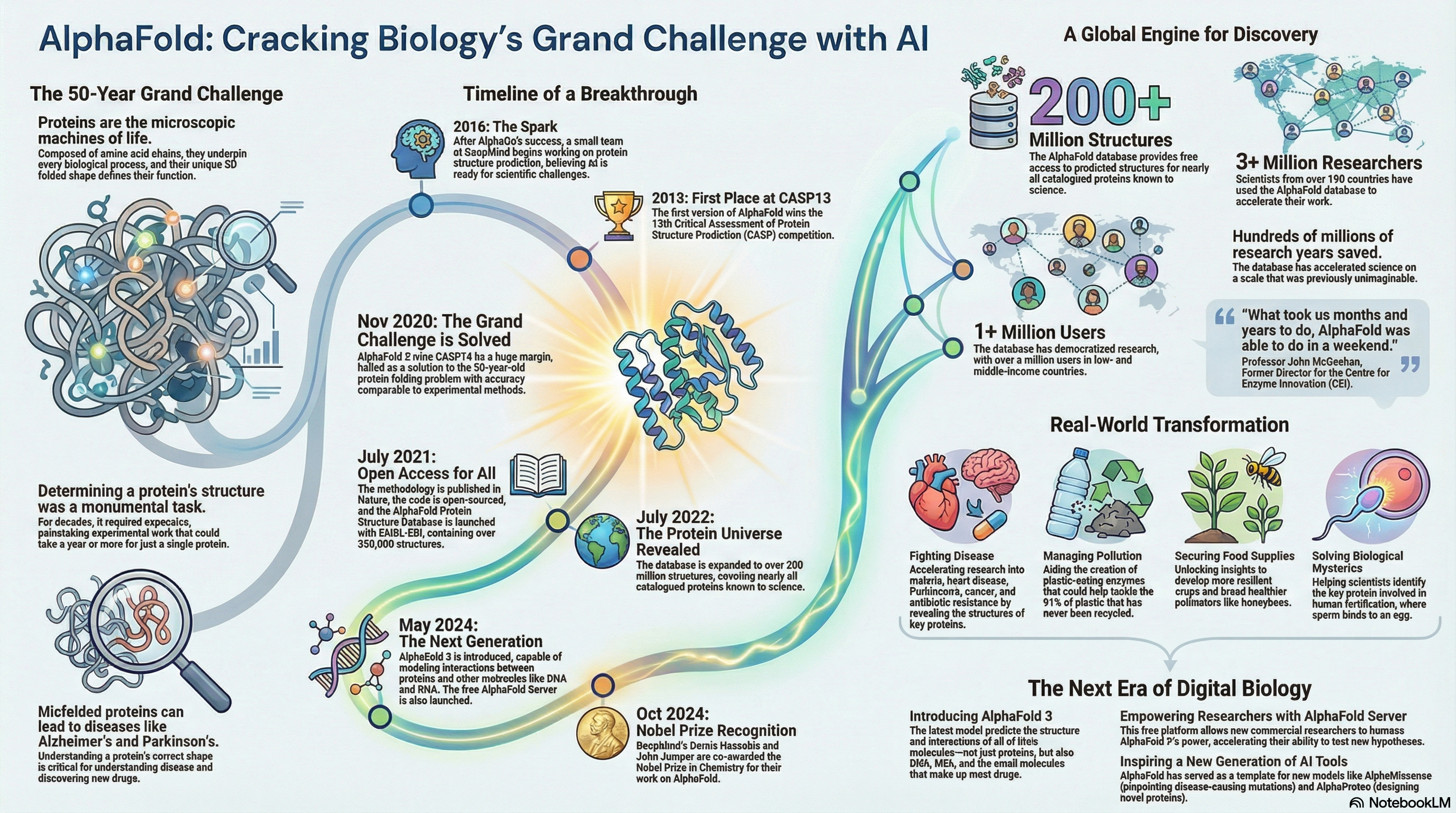

237. More Than a Nobel Prize: 6 Surprising Ways an AI Breakthrough Is Reshaping Science

The question is no longer if AI will help solve our grandest challenges, but which one we will point it at next.

238. Predictive Coding: What Is Special About the Human Brain? Not Much

An important capability of the brain, it seems, is its ability to learn widely.

239. Tech Evolution: Tina Huang on AI in Education, Freelancing Success, and Productivity Hacks

The future of education and productivity with AI!

240. AI-Assisted Code Review: What Actually Works in Practice

AI PR reviewers promise fewer bugs with less effort. Reality? Without hybrid pipelines, they create noise. Here’s what actually works.