Let's learn about LLMs via these 212 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most-read blog posts about any technology.

ChatGPT, Gemini, and LLaMA are some of the most popular large language models. But they're not the only ones. Discover more today!

1. How to Run Your Own Local LLM: Updated for 2024 - Version 1

Discover how to run Generative AI models locally with Hugging Face Transformers, gpt4all, Ollama, localllm, and Llama 2.

2. Unlocking Structured JSON Data with LangChain and GPT: A Step-by-Step Tutorial

Manipulating Structured Data (from PDFs) with the Model behind ChatGPT, LangChain, and Python for Powerful AI-driven Applications.

3. How to Build a $300 AI Computer for the GPU-Poor

$300 computer to run generative AI models locally, e.g. large language model inference and stable diffusion image generation.

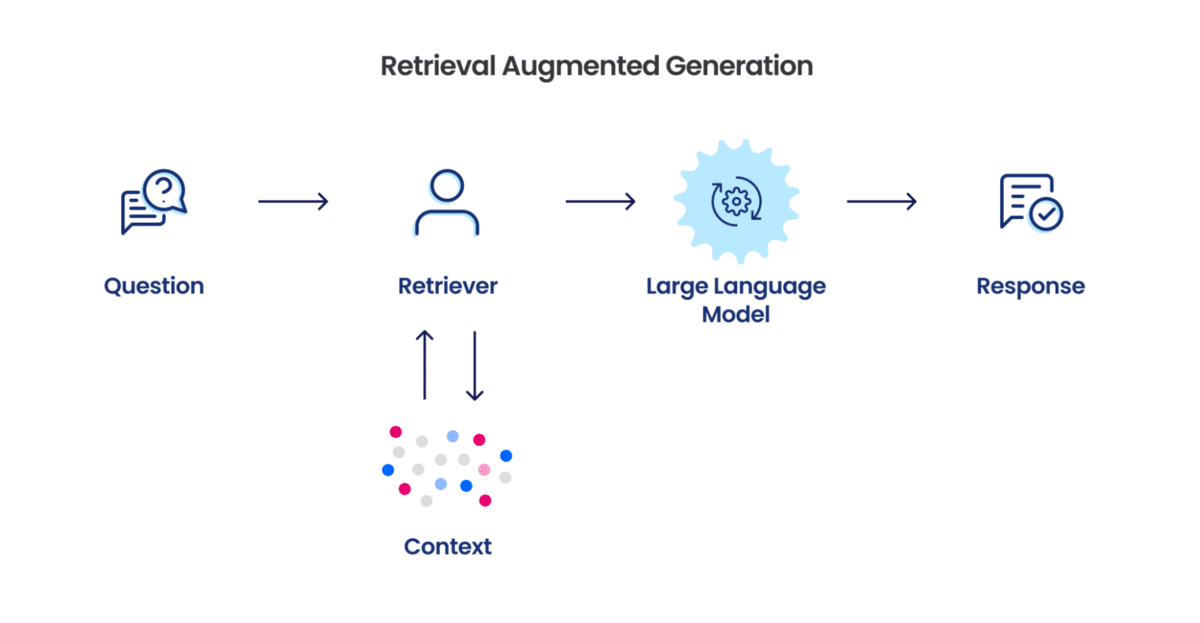

4. Decluttering Advanced RAG in Building Federated Systems for your Digital Products

RAG - Retrieval Augmented Generation, Vector Database, Database Pipeline, Product Development

5. The Promise and Potential of LLM in Crypto

We cover 8 main potential applications of LLMs in the crypto space. LLMs can benefit all participants in the crypto space and can be integrated into existing projects.

6. What Is a Diffusion LLM and Why Does It Matter?

What a diffusion large language model (LLM) is and why it matters, in the context of Inception Labs releasing Mercury Coder.

7. Small Language Models are Closing the Gap on Large Models

A fine-tuned 3B model beat our 70B baseline. Here's why data quality and architecture innovations are ending the "bigger is better" era in AI.

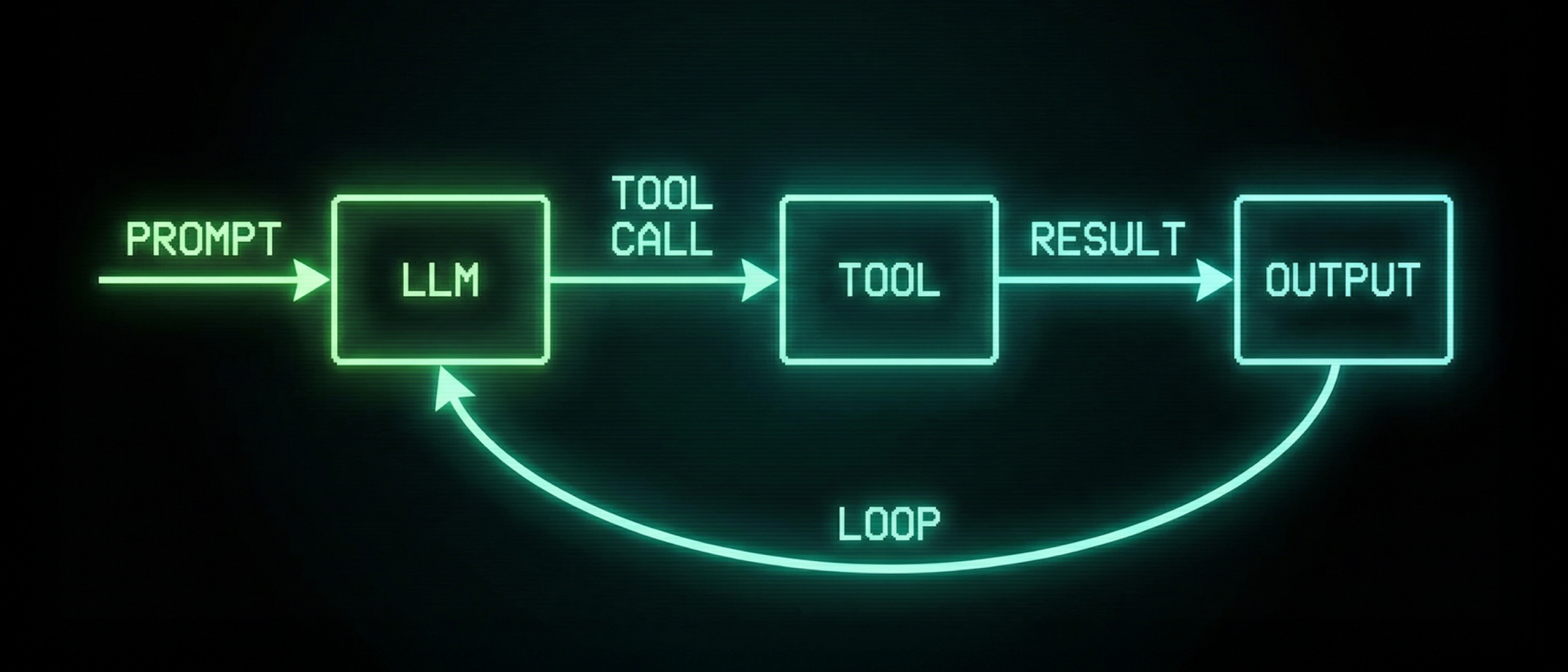

8. AI Agents: Why the Gap Between Demo and Deployment Keeps Widening

Gartner predicts 40%+ of agentic AI projects will fail by 2027. An analysis of why demos dazzle but deployments disappoint, and what production patterns actually work.

9. "We Are Very Early in Our Work With LLMs," - Prem Ramaswami, Head of Data Commons at Google

Google's Head of Data Commons joined HackerNoon to discuss grounding AI in verifiable data, MCP's open approach, and why "we are very early with LLMs."

10. Real-life LLM Implementation: A Backender’s Perspective

In this article, I would like to explore the advantages and disadvantages of various large language models and share thoughts on potential issues

11. Groq’s Deterministic Architecture is Rewriting the Physics of AI Inference

Groq’s Deterministic Architecture is Rewriting the Physics of AI Inference. How Nvidia Learned to Stop Worrying and Acquired Groq

12. LiteLLM: Call Every LLM API Like It's OpenAI

LiteLLM — a package to simplify API calls across Azure, Anthropic, OpenAI, Cohere and Replicate.

13. Train Your Own ChatGPT-like LLM with FlanT5 and Replicate

We train an open-source LLM to distinguish between William Shakespeare and Anton Chekhov.

14. What I've learned building an agent for Renovate config (as a cautious skeptic of AI)

As an opportunity to "kick the tyres" of what agents are and how they work, I set aside a couple of hours to build one - and it blew me away.

15. The Impact of Generative AI on Enterprise Software Development

Enterprises will need to understand how they will use customer data and how it will get processed through AI models that are trained with the latest innovation.

16. 🎬 Introducing MetaGPT: Unleashing the Power of AI Agents for Complex Tasks

Imagine having at your disposal an AI-powered assistant that not only comprehends your queries but can also seamlessly interact with various applications.

17. From 140GB to 4GB: The Art of LLM Quantization

Quantization shrinks 140GB LLMs to under 4GB, bringing enterprise AI to consumer GPUs. A deep dive into GPTQ, AWQ, GGUF, and beyond.

18. Run Llama Without a GPU! Quantized LLM with LLMWare and Quantized Dragon

Use AI miniaturization to get high-level performance out of LLMs running on your laptop!

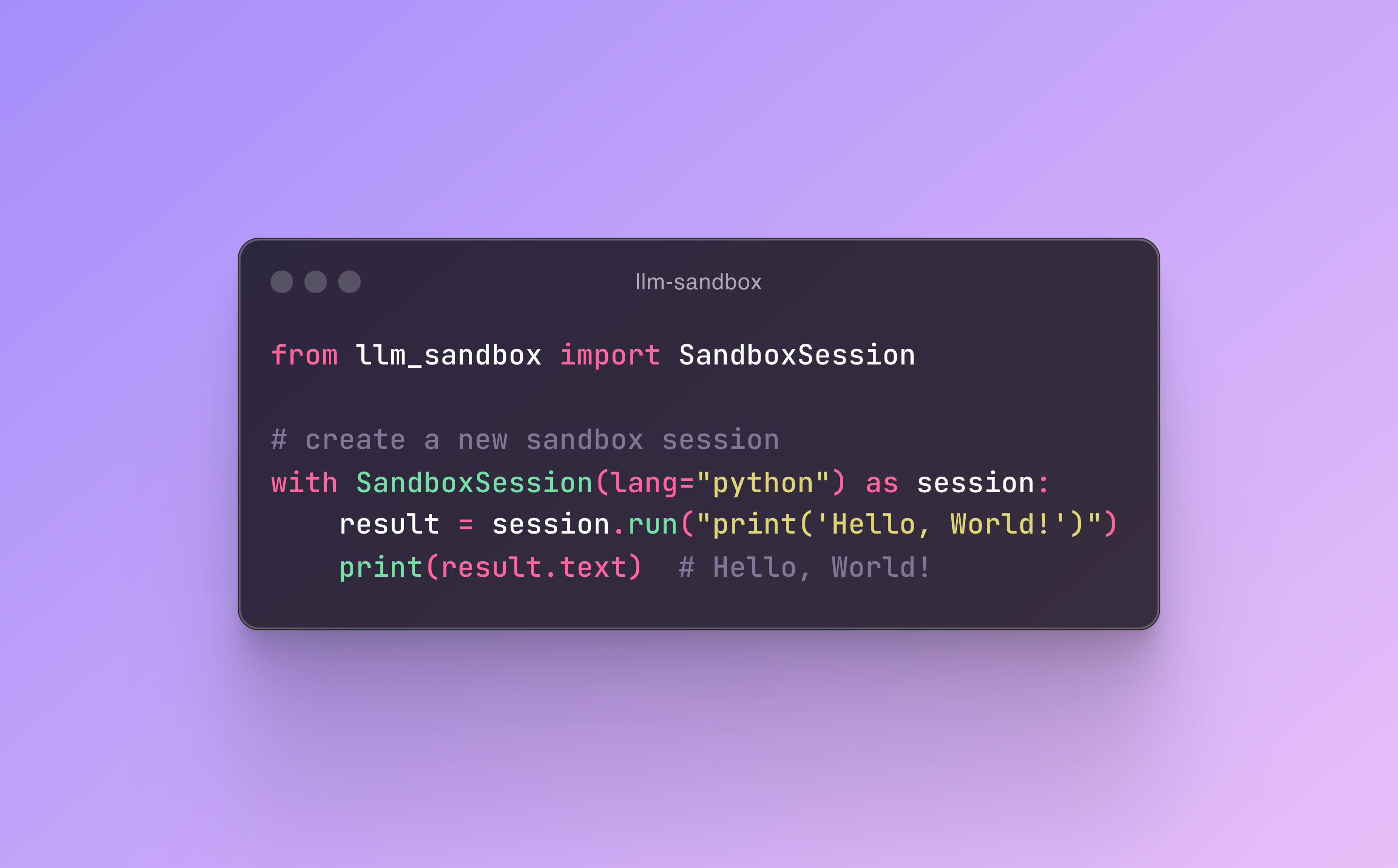

19. Introducing LLM Sandbox: Securely Execute LLM-Generated Code with Ease

LLM Sandbox: a secure, isolated environment to run LLM-generated code using Docker. Ideal for AI researchers, developers, and hobbyists.

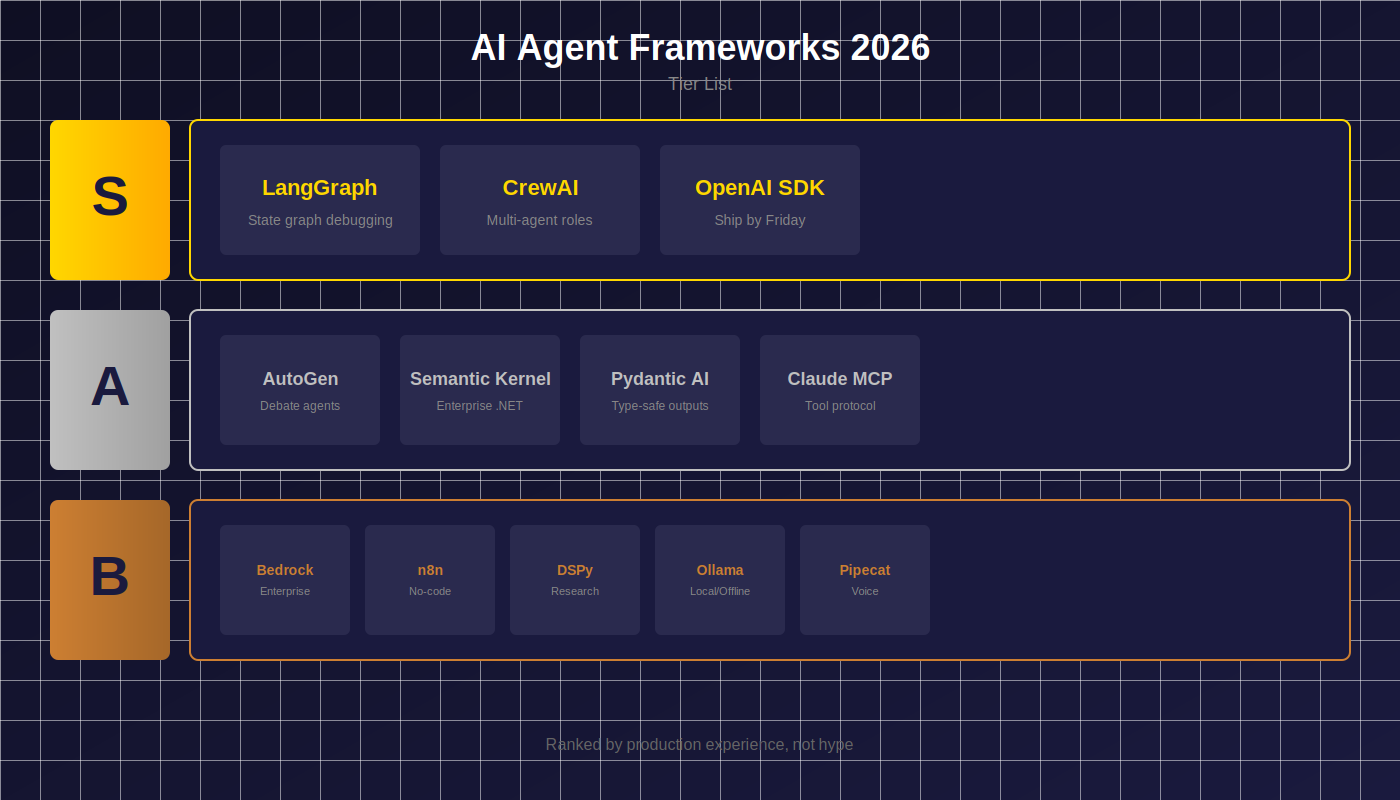

20. The Best AI Agent Frameworks for 2026 (Ranked by Someone Who's Shipped With All of Them)

LangGraph, CrewAI, AutoGen, Pydantic AI, and 8 more. What works, what doesn't, and when to use each.

21. Choosing an LLM in 2026: The Practical Comparison Table (Specs, Cost, Latency, Compatibility)

Compare top LLMs by context, cost, latency and tool support—plus a simple decision checklist to match “model + prompt + scenario”.

22. How to Build an n8n Automation to Read Kibana Logs and Analyze Them With an LLM

How we built an n8n automation that reads Kibana logs, analyzes them with an LLM, and returns human-readable incident summaries in Slack

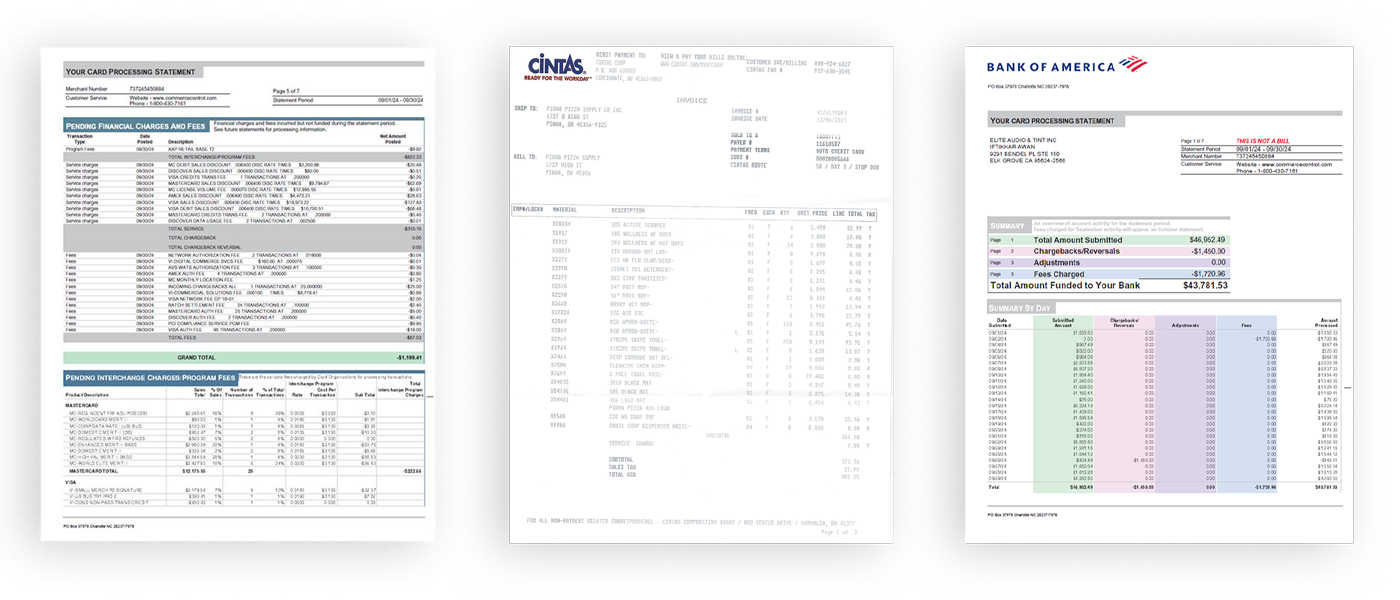

23. The Best AI Models For Invoice Processing: Benchmark Comparisons

I’ve tested the 7 most popular AI models to see how well they process invoices out-of-the-box, without any fine-tuning.

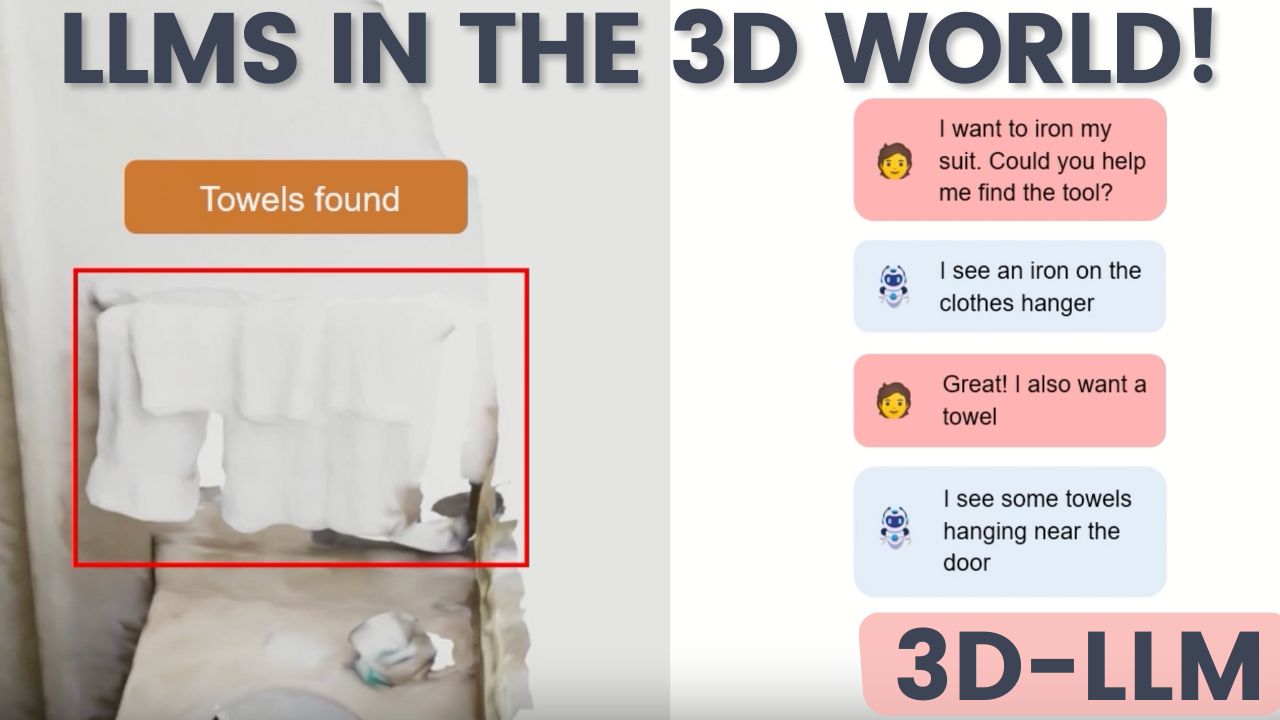

24. A Big Step for AI: 3D-LLM Unleashes Language Models into the 3D World

3D-LLM is a novel model that bridges the gap between language and the 3D realm we inhabit.

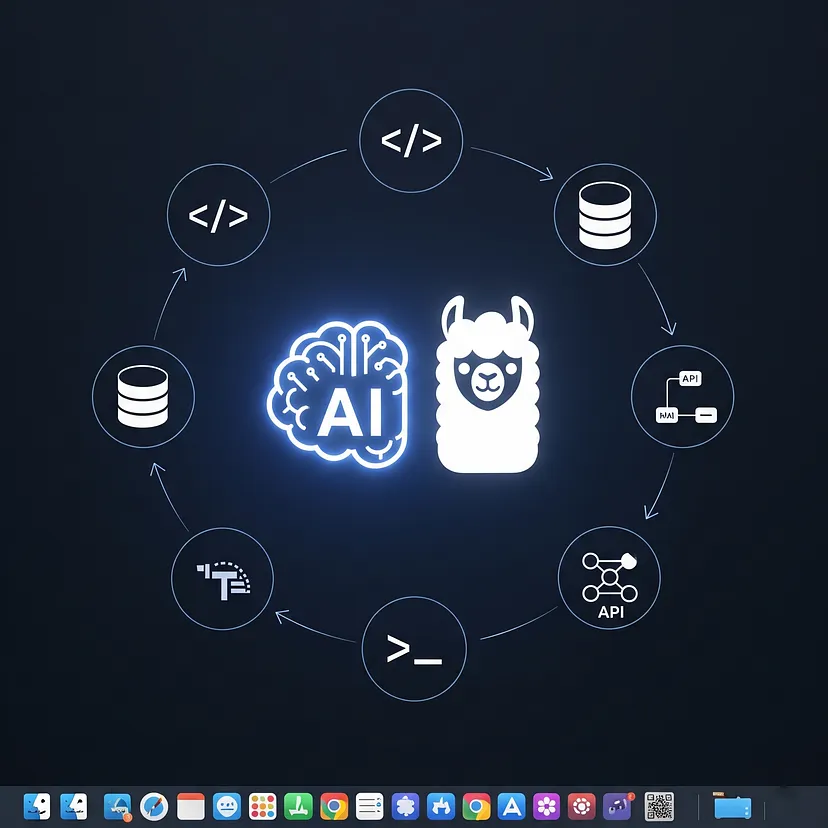

25. The 7 Essential Tools for Local LLM Development on macOS in 2025

Discover the 7 essential tools for local Large Language Model (LLM) development on macOS in 2025.

26. GPT-4, Llama-2, Claude: How Different Language Models React to Prompts

Exploring the unique behaviors of different Large Language Models (LLMs) and mastering advanced prompting techniques!

27. GPT-Like LLM With No GPU? Yes! Legislation Analysis With LLMWare

AI Miniaturization is changing AI development. We build a legal text analyzer that runs without any specialty hardware.

28. Win Up to $2500 in the AI Writing Contest by Bright Data and HackerNoon

Join the AI Writing Contest by Bright Data and HackerNoon! Share your insights on AI, LLMs, and web scraping for a chance to win from a $2500 prize pool.

29. Making LLMs Efficient: Reducing Memory Usage Without Breaking Quality

Optimal memory-quality tradeoffs for efficient language models.

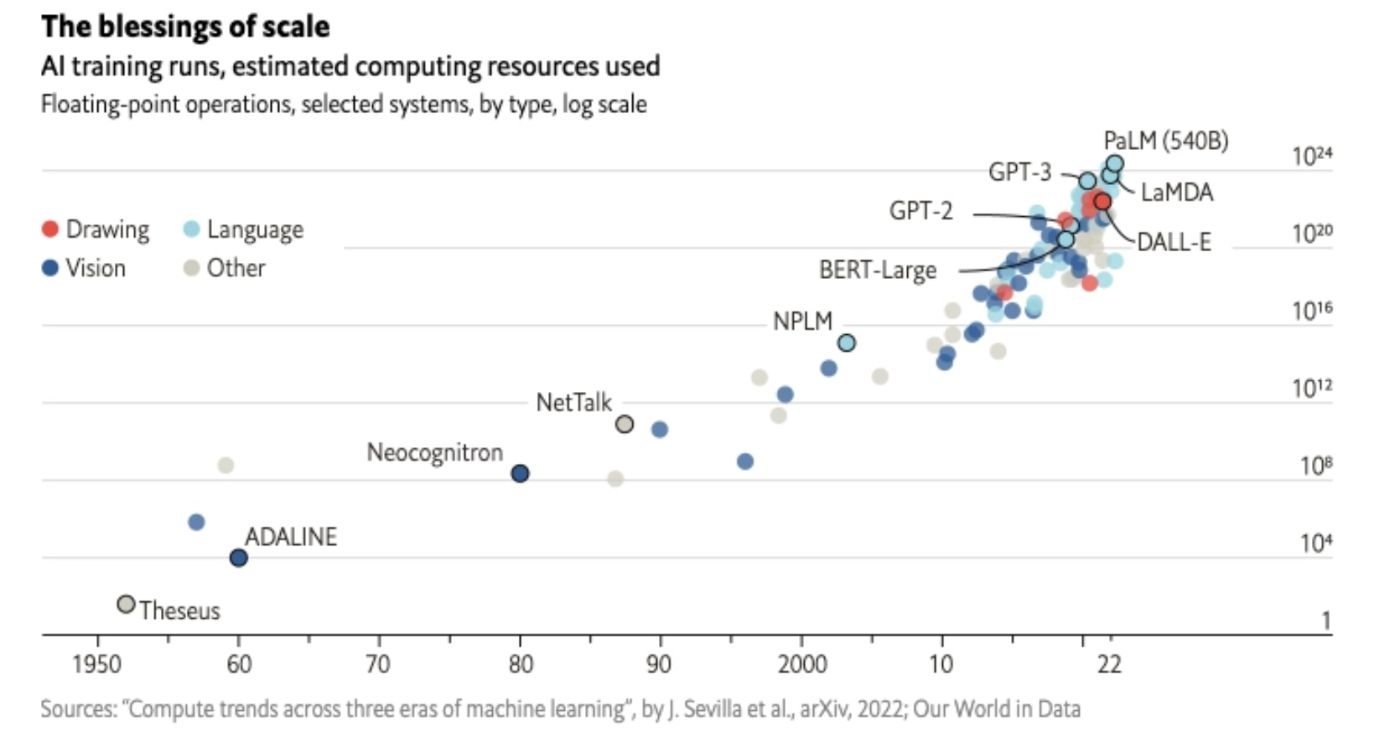

30. AI’s Dirty Secret: The Energy Cost of Training the Next GPT-5

Training GPT-5 will need massive, specialized chips. Solving a billion-piece puzzle at once demands parallel power far beyond today’s computers.

31. How to Get Responses From Local LLM Models With Python

This article shows you how to create a simple yet powerful Python interface for your local LLM system.

32. Building a Hybrid RAG Agent with Neo4j Graphs and Milvus Vector Search

Build a Graph-RAG agent using Neo4j and Milvus to combine graph and vector search, delivering accurate, context-rich answers to complex queries.

33. Best Stock Market Data APIs For Algorithmic Traders (2025 Edition)

Best Stock Market Data APIs for algorithmic traders, fintech developers, and AI agents.

34. LiteLLM Configs: Reliably Call 100+ LLMs

How Anthropic forced us to change our open-source library

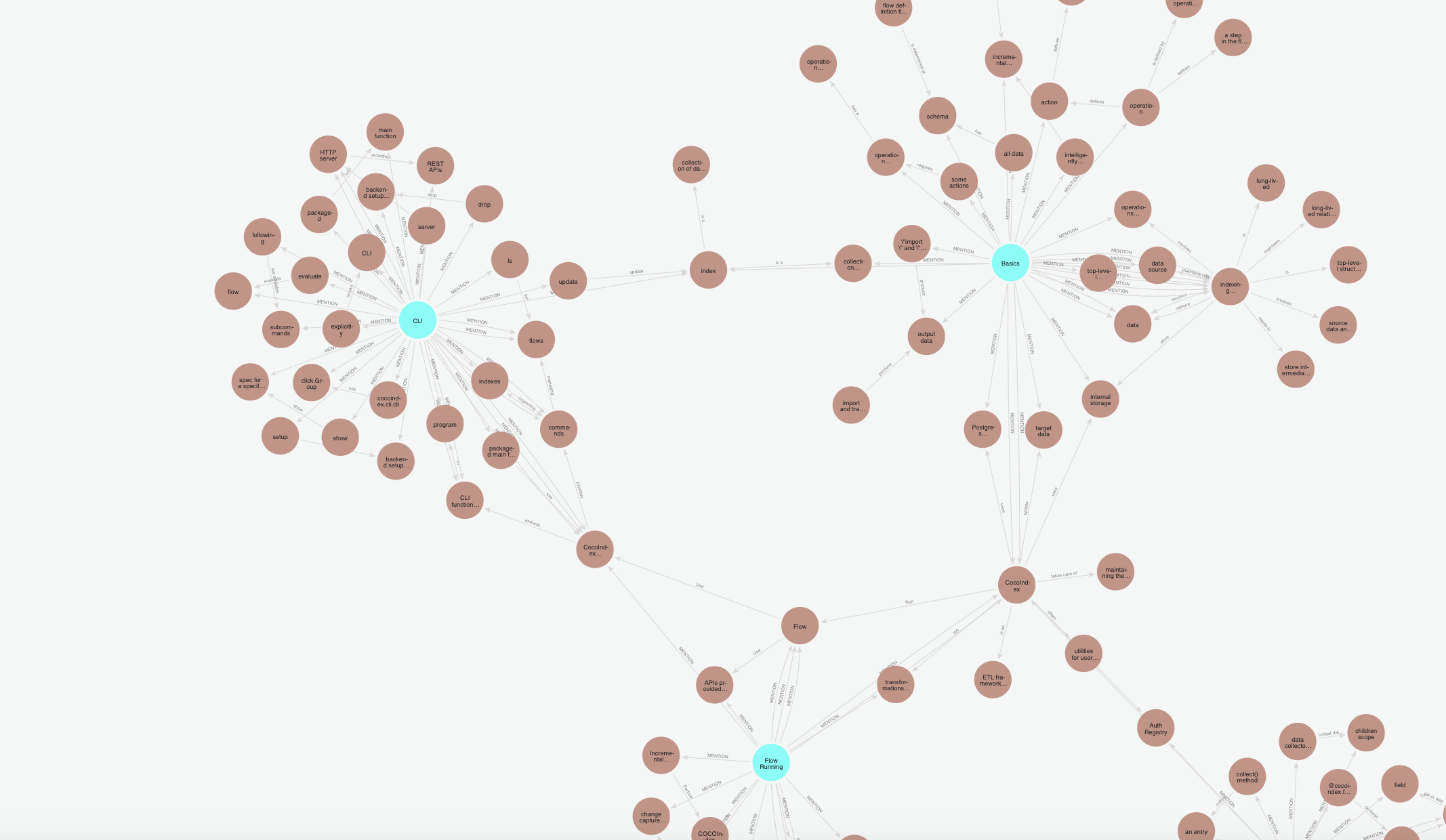

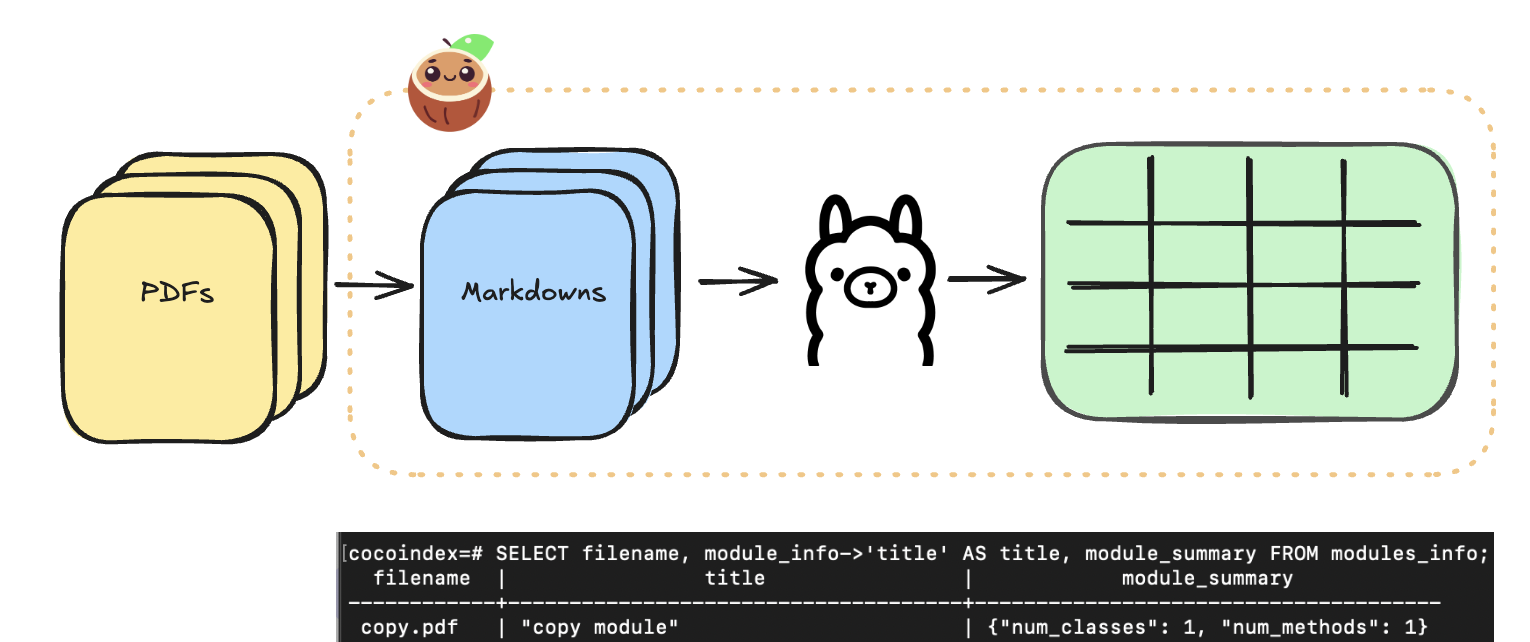

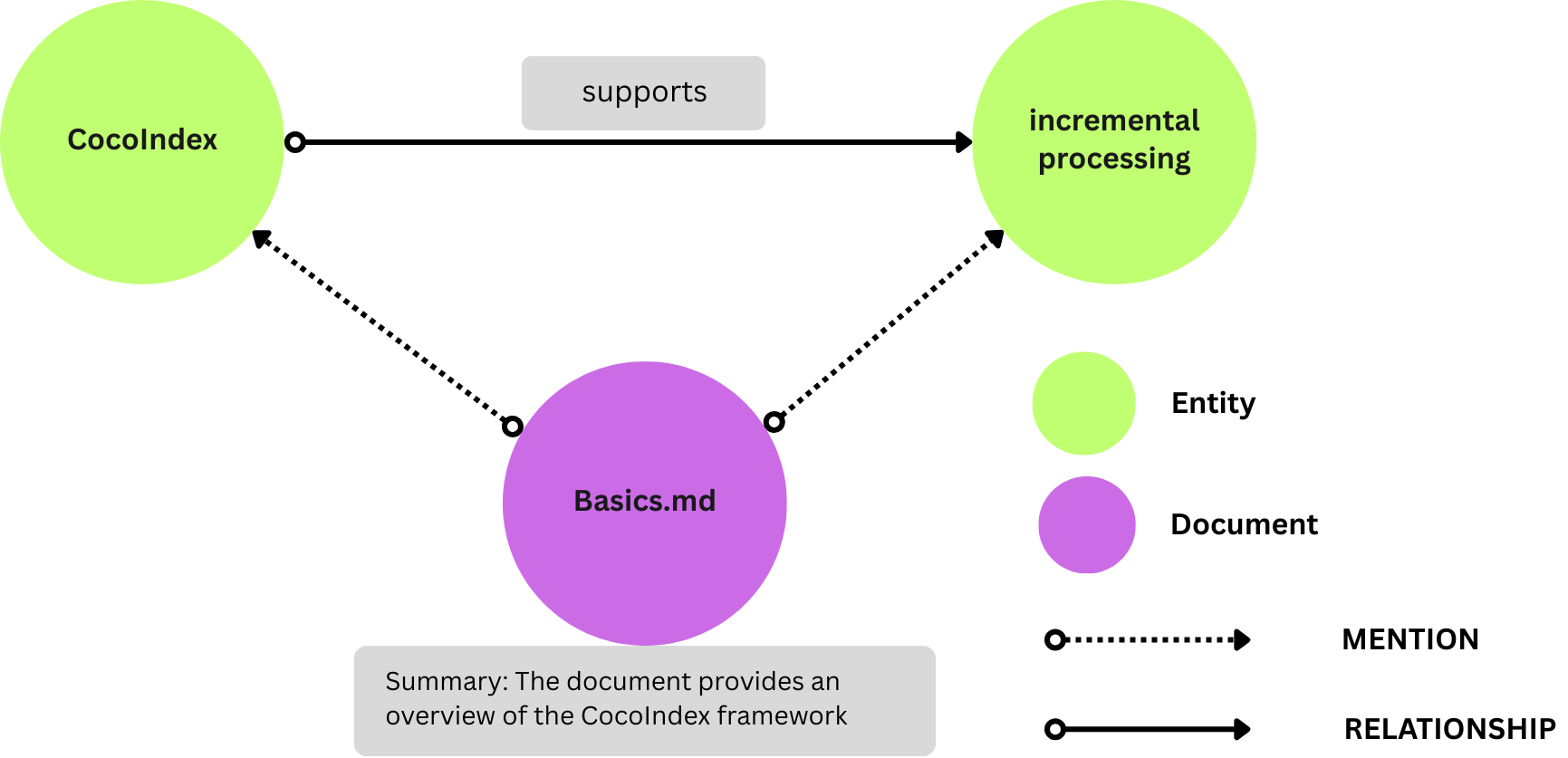

35. This Open Source Tool Turns Markdown Into a Knowledge Graph—With a Little Help From AI

CocoIndex now supports knowledge graphs for your docs with continuous updates. Knowledge graphs made easy!

36. Model Context Protocol (MCP): The USB-C of AI Data Connectivity

MCP (Model Context Protocol) is an open standard that allows AI systems to connect seamlessly with a wide variety of data sources.

37. Beginner's Roadmap to Large Language Models (LLMOps) in 2023: All free!

This guide isn’t just a compilation of LLM resources; it's a curated journey through the most valuable skills in the industry.

38. Large Language Models: Exploring Transformers - Part 2

Transformer models are a type of deep learning neural network model that are widely used in Natural Language Processing (NLP) tasks.

39. Stop the LLM From Rambling: Using Penalties to Control Repetition

A practical guide to penalty settings that reduce repetition and fluff in LLM outputs—without making the text weird.

40. What the Heck Is LanceDB?

Learn about LanceDB and how it fits into a stack that allows you to more easily create your own LLM models

41. SpringAI vs LangChain4j: The Real-World LLM Battle for Java Devs

SpringAI and LangChain4j are redefining what’s possible for Java developers in the LLM era. This article compares them head-to-head across real code, architectu

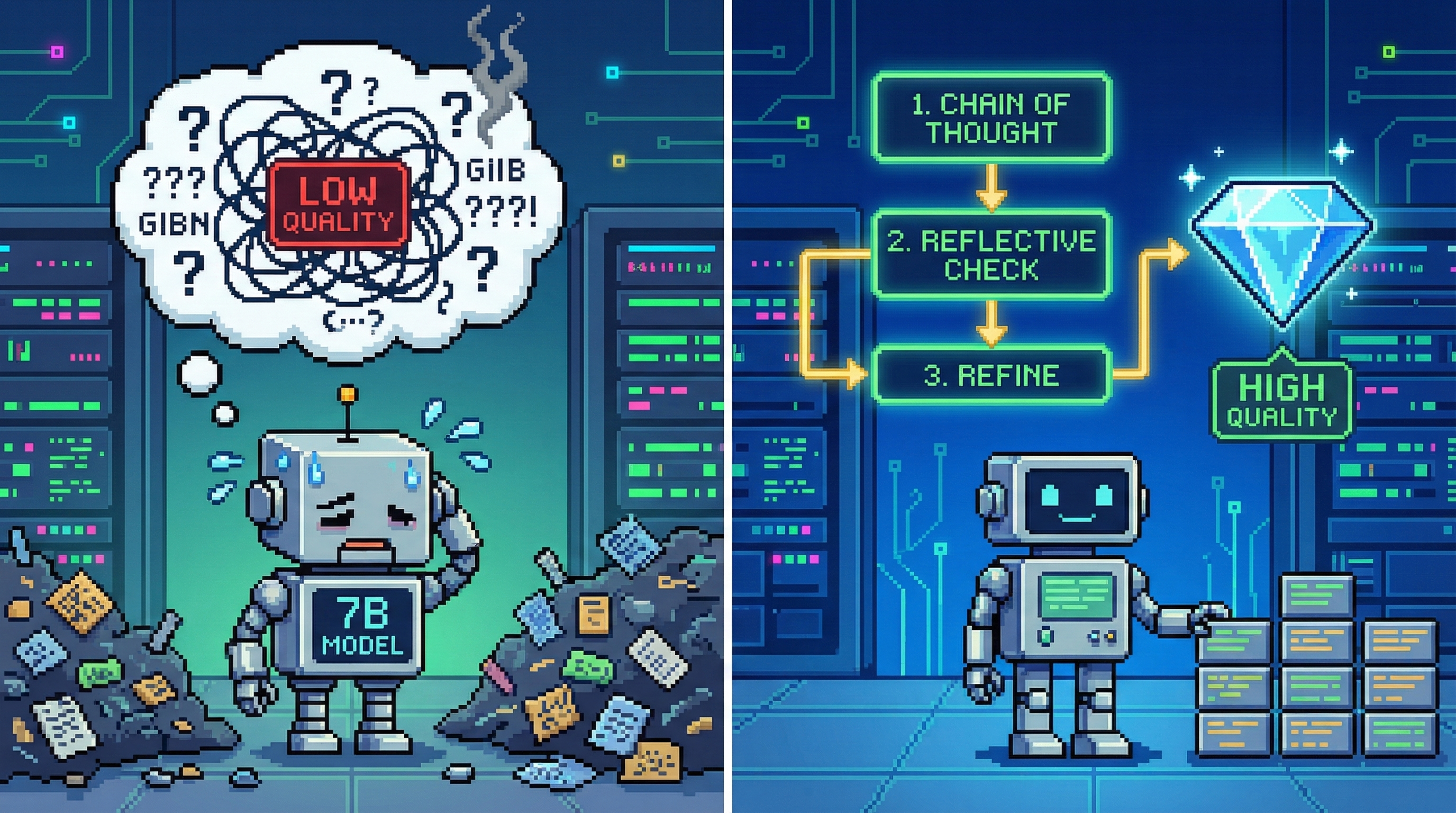

42. Getting High-Quality Output from 7B Models: A Production-Grade Prompting Playbook

A practical guide to making 7B models behave: constrain outputs, inject missing facts, lock formats, and repair loops.

43. Is Microsoft's AI Copilot the Future of Work?

Microsoft offers a glimpse into a future where AI significantly bolsters workplace productivity.

44. Improving Chatbot With Code Generation: Building a Context-Aware Chatbot for Publications

Building a powerful information management system using ChatGPT's code generation and summarization capabilities.

45. On-premise structured extraction with LLM using Ollama

Learn to use CocoIndex to extract structured data from PDF/Markdown with Ollama's local LLMs, all running on premise without sending data to an external API.

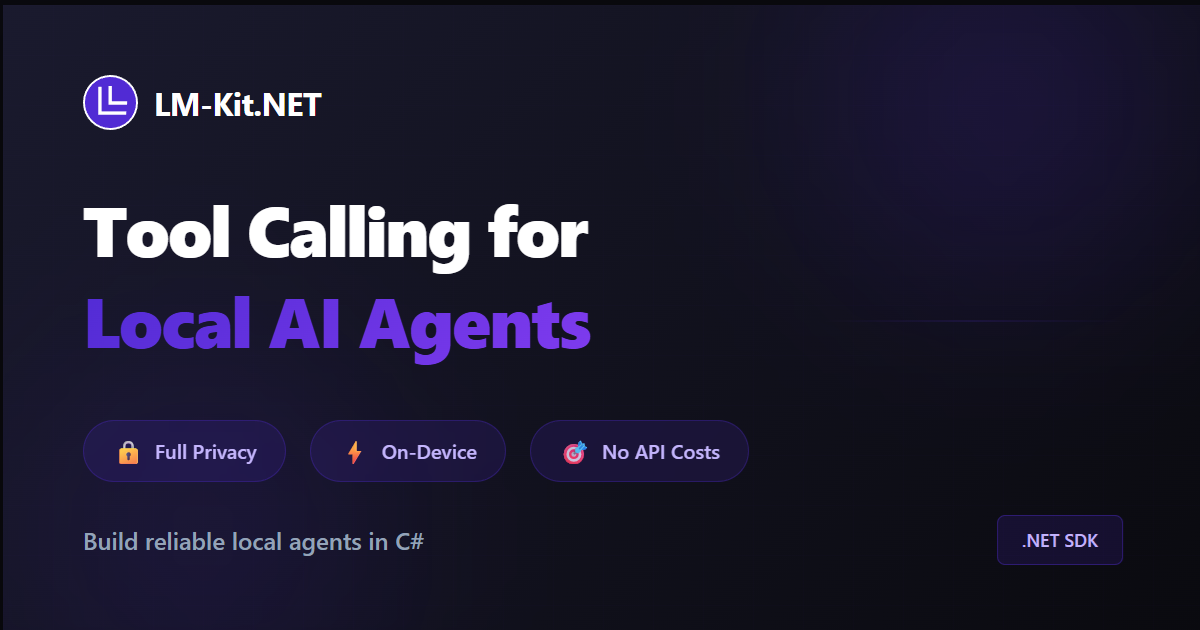

46. Tool Calling for Local AI Agents in C#

LM-Kit .NET SDK now supports tool calling for building AI agents in C#.

47. Create LLM Playground in 10 minutes (with LiteLLM)

Today, we’re going to create a playground to evaluate multiple LLM providers in less than 10 minutes using LiteLLM.

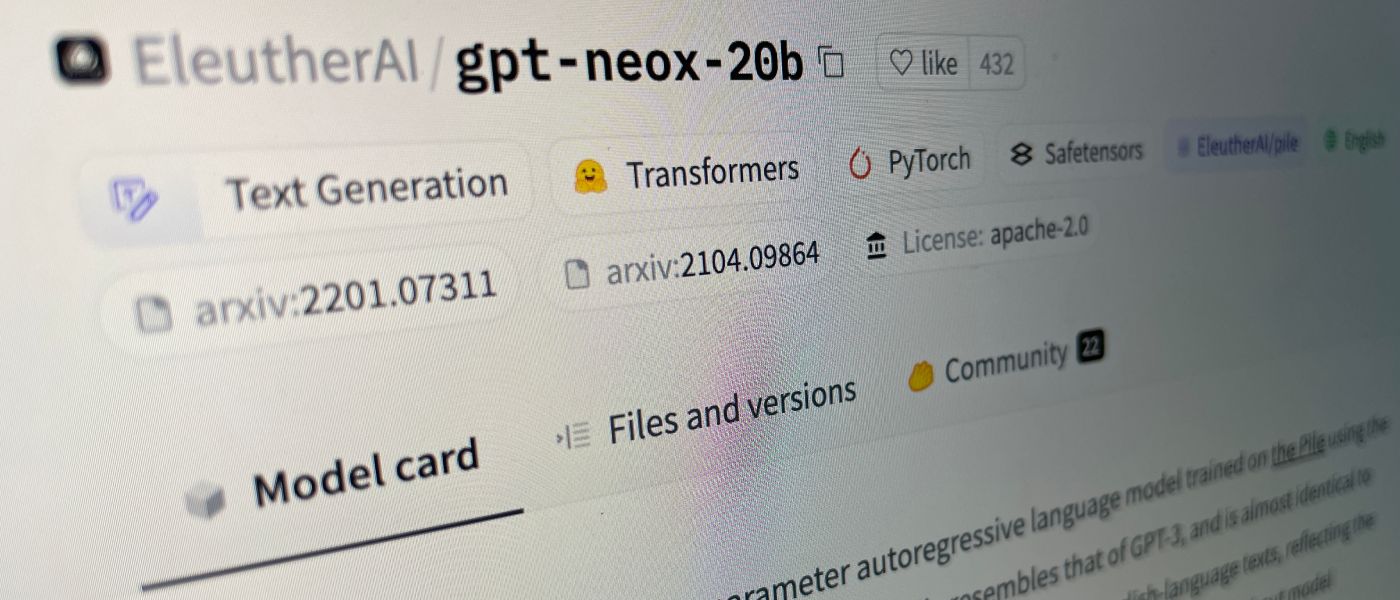

48. From LLaMA 2 to CodeGen: Navigating the World of Open-Source LLMs

The world of artificial intelligence (AI) is undergoing a seismic shift, largely driven by

49. Bad Prompts, Bad Results: Why Every LLM Failure Is Your Fault

AI-generated code can feel revolutionary—until it fails you. Why does this happen? Often, the issue isn't with the AI itself, but with the inputs we provide.

50. How to Enhance Your dbt Project With Large Language Models

Automatically solve typical Natural Language Processing tasks for your text data using LLM for as cheap as $10 per 1M rows, staying in your dbt environment

51. How to Extract and Generate JSON Data With GPTs, LangChain, and Node.js

Manipulating Structured Data (from PDFs) with the Model behind ChatGPT, LangChain, and Node.js for Powerful AI-driven Applications

52. Meta’s AI Boss Just Called LLMs ‘Simplistic’ — Here’s What He’s Building Instead

Yann LeCun, Chief AI Scientist at Meta, discusses the future of AI.

53. The Handy Guide to Getting Google's Bard AI to List Your Brand or Business In Its Response

From the article, you will learn whether you need to change your promotion strategy and how to do it.

54. The Release of Meta’s LLAMA-2 Marks A New Era in The History of Humanity

Meta has open-sourced its LLAMA LLM models. This has far-reaching implications for the current world of technology. Read this article to learn more.

55. Spring AI RAG, Demystified: From Toy Demos to Production-Grade Retrieval

Production-ready RAG with Spring AI: ETL, PGVector, query rewriting, metadata filters, and tuning tricks for real-world retrieval workflows.

56. Meta-Prompting: From “Using Prompts” to “Generating Prompts”

Meta-prompts make LLMs generate high-quality prompts for you. Learn the 4-part template, pitfalls, and ready-to-copy examples.

57. Heyo, AI Character - Stay Away from My Child; Get a Job

I want the AI chatbot to help me with my job, not mess with my child’s mind.

58. "I Find Immense Joy in Believing in God's Existence" - Google Gemini 1.5 Pro

A Large Language Model Imagines a creator God and feels joy and ecstatic purpose at the revelation. Ready to read every deep secret of Google Gemini 1.5 Pro?

59. Hosting Your Own AI with Two-Way Voice Chat Is Easier Than You Think!

This guide will walk you through setting up a local LLM server that supports two-way voice interactions using Python and some free tools.

60. Google Gemini File Search - The End of Homebrew RAG?

Will Google's Gemini File Search kill homebrew RAG solutions? We test drive to compare function, performance and costs. Plus sample code for PDF Q&A app.

61. 100 Days of AI Day 7: Building Your Own ChatGPT with Langchain

A step-by-step process for building a chat-with-your-data application using OpenAI & LangChain in Python.

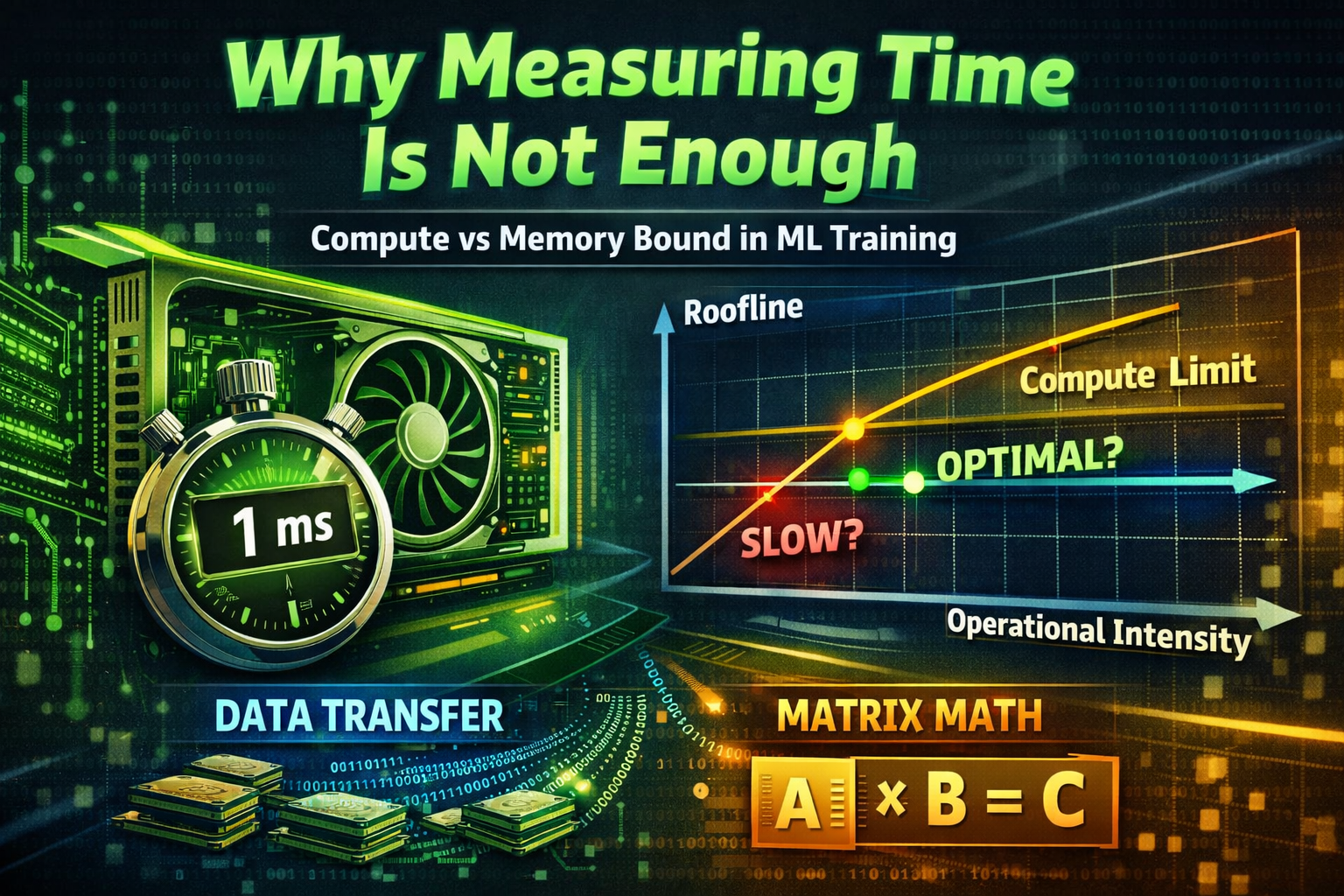

62. Why Measuring Time is Not Enough: a Practical Roofline Model for ML Training

Raw measurements don’t tell the full story.

63. LLMs For Curating Your Social Media Feeds? Yes Please!

Our online feeds are broken and large language models have the power to fix them.

64. Why Are the New AI Agents Choosing Markdown Over HTML?

Let's find out why AI agents convert HTML to Markdown to cut token usage by up to 99%!

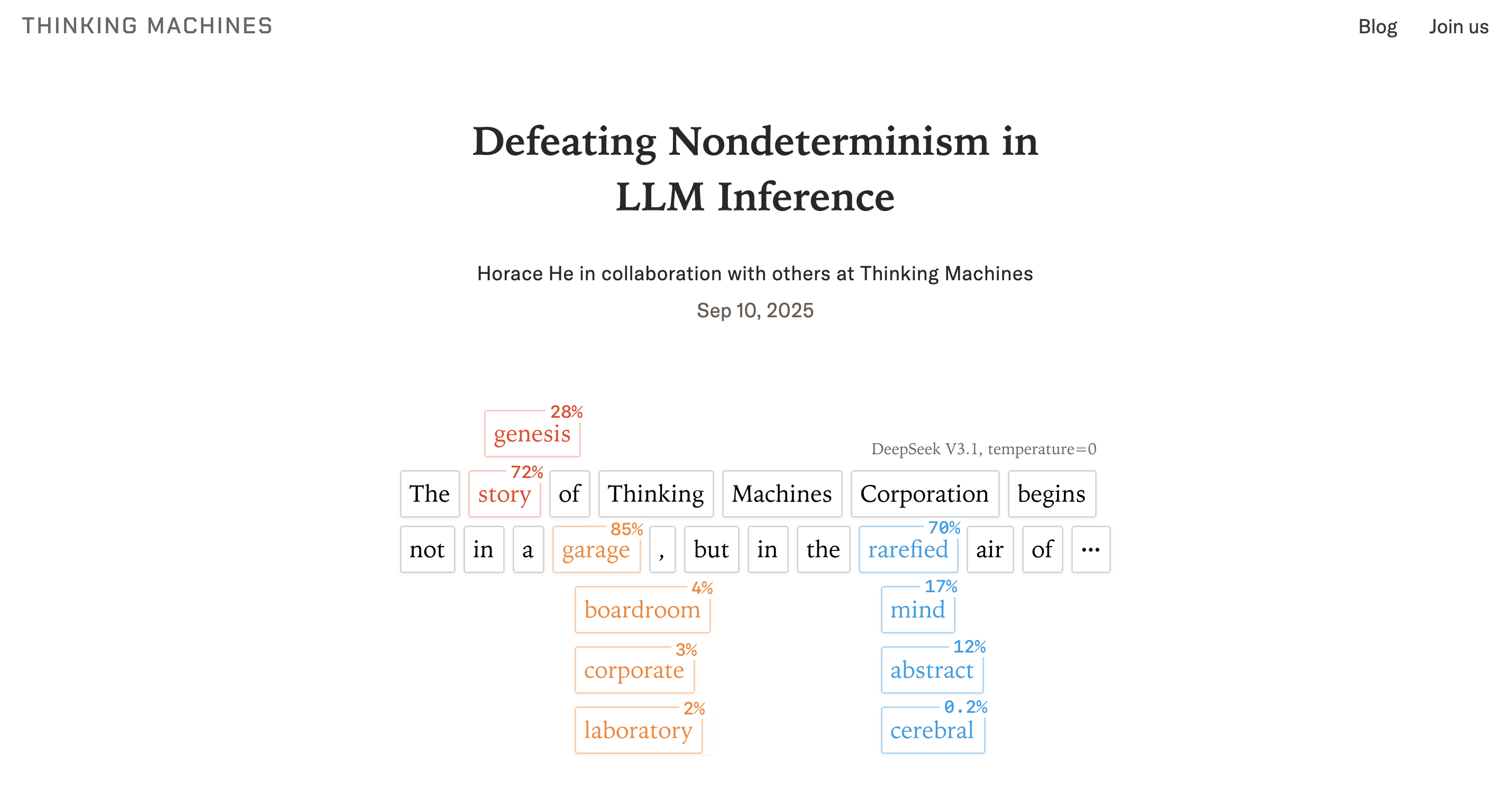

65. Re: Defeating Nondeterminism in LLM Inference, The Future is Predictable

Discover why LLM output non-determinism is a critical business obstacle, not just a bug, and how solving it unlocks high-value enterprise applications.

66. Rule Engine + LLM Hybrid Architectures for Safer Code Generation

AI-generated code is no longer science fiction—it’s part of the modern developer’s toolkit.

67. Re-Prompting: The Loop That Turns “Meh” LLM Output Into Production-Ready Results

A practical guide to re-prompting: the 5-step loop that turns vague LLM prompts into stable, structured, production-ready outputs.

68. When Trust Becomes the Core Problem of AI-Native Software Engineering

AI-native systems don’t just ship faster, they drift silently. Learn how proof-oriented delivery makes trust measurable, auditable, and reliable.

69. Google & Yale Turned Biology Into a Language Here's Why That's a Game-Changer for Devs

The team built a 27B parameter model that didn't just analyze biological data—it made a novel, wet-lab-validated scientific discovery

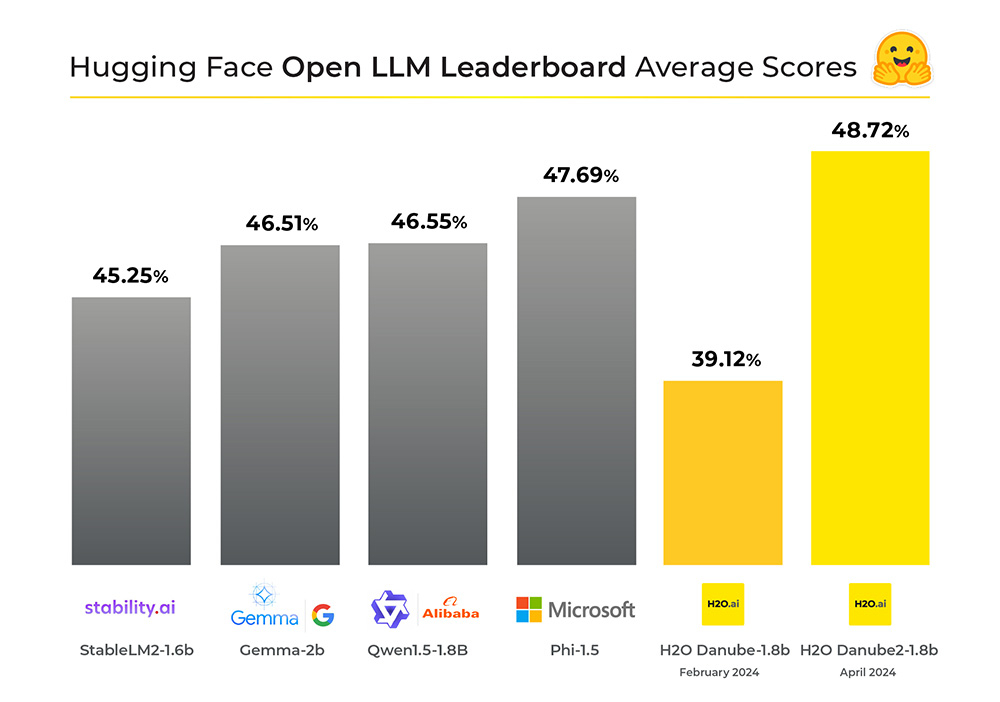

70. Danube-2: The Tiny AI Model Leading the Open LLM Leaderboard

Massive LLMs can be hugely flawed and can get worse over time. Enter tiny LLMs that are more accurate and becoming increasingly popular.

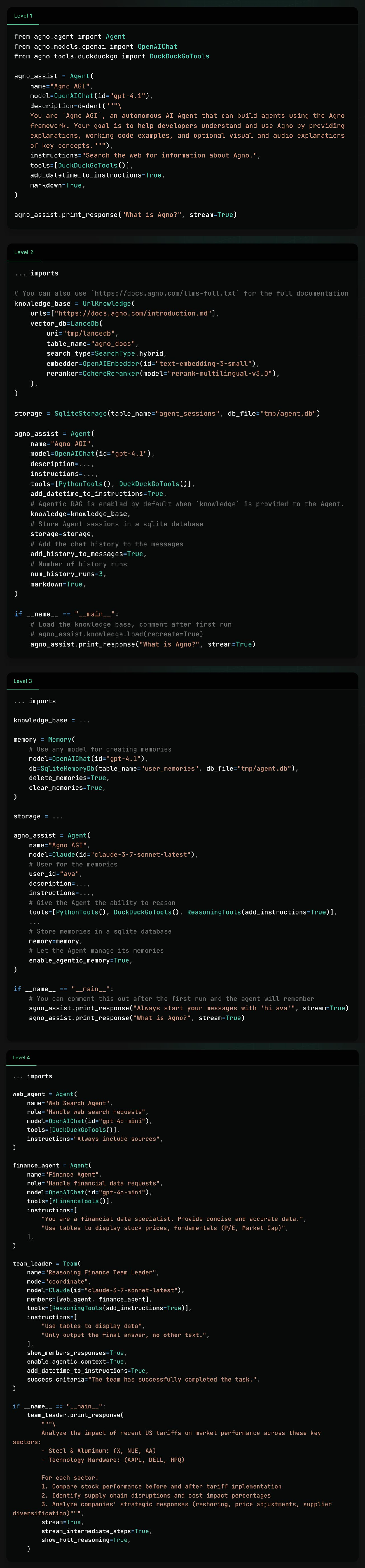

71. The 5 Tiers of AI Agents—And How to Build Each One

Build real AI agents in 5 levels, from simple tool use to full agentic systems—code included.

72. Taming LLMs with Langchain + Langgraph

How to fix LLMs and chatbots with LangChain and LangGraph.

73. The Times v. Microsoft/OpenAI: Substantial Technical Collaboration on the Creation of the Models (3)

Plaintiff The New York Times Company is a New York corporation with its headquarters and principal place of business in New York.

74. Humanize AI Text: Task Accomplished!

Humanizing AI text is surely a challenge many people try to solve. Let me share how I found a solution to this riddle.

75. Prompt Injection Is What Happens When AI Trusts Too Easily

Prompt injection is a top threat to GenAI large language model (LLM) applications.

76. From Black Box to Transparent: How to Read the Inner Logic of LLMs

Explore how LLMs work from the inside: from token‑prediction fundamentals to Transformer architecture, training stages and reasoning chains. Learn how to prompt

77. Prompt vs Feature Engineering: The Hidden Bridge Between Humans and Machines

Prompting and feature engineering share a secret DNA — both translate human intent into machine understanding. Here’s how they differ, connect, and converge in

78. Summarize a Story in 10 Seconds with ChatGPT or Bard

Learn how to quickly summarize any text with ChatGPT Summarize and become more productive.

79. No, AI Will Not Be Replacing Tech Jobs

ChatGPT and artificial intelligence (AI) will not replace tech workers, due to their limitations and the increasing reliance on digital systems and processes.

80. Prompting for Safety: How to Stop Your LLM From Leaking Sensitive Data

Practical guide to LLM safety prompts: how to design, test, and update instructions that stop your AI from leaking sensitive personal and corporate data.

81. From Facebook to MindverseAI: Felix Tao's Insights on AI Evolution and the Future of Large Language

NLP expert discusses the evolution of AI, waking up the consciousness and the biggest issues with LLMs...

82. Prompt Is the Hidden Commander Behind Every AI Output

Discover how prompts shape the quality of AI output. Learn how to engineer precise, structured, and context-rich prompts to unlock the full potential of LLM.

83. Paige Bailey: Pioneering Generative AI in Product Management at Google DeepMind

What is it like to build the best AI models and work at one of the most important AI companies: Google DeepMind?

84. LLM & RAG: A Valentine's Day Love Story

Love is in the air, and so are the perfect AI partners: LLMs and RAG!

85. The Noonification: Craig Wright is Satoshi Nakamoto (12/24/2023)

12/24/2023: Top 5 stories on the HackerNoon homepage!

86. Advanced LLM Development 101: Strategies and Insights from Igor Tkach, CEO of Mindy Support

'Innovate with Confidence: Expert Guidance in Advanced LLM Development' explores the strategies and insights for making the most of Large Language Models.

87. What Will AI Make of Us Entrepreneurs?

Software engineers and entrepreneurs are on the cusp of a tech tsunami. This might be the last, and largest, 'clean up season' in software.

88. Forget Chatbots, Meet Actionbots: Why Amazon's Nova Act Could Reshape Web Interaction

Nova Act has a 94% success rate interacting with finicky calendar widgets. The toolkit is Amazon’s first public step toward artificial general intelligence.

89. Building Vertical SaaS in an Old School Industry: AI That Fits, Not Fights

90. Building an AI-Powered Content Moderation System with JavaScript: A Quick Guide

Learn how to leverage OpenAI to quickly build an AI-powered moderation system that automatically detects and filters toxic comments.

91. Summarizing Large Datasets of Customer Feedback Using Retrieval-Augmented Generation (RAG)

92. Prompts for Solving Comprehension Questions in CAT Examination

This story gives an overview of how students can use ChatGPT to augment their learning.

93. How to Build Your First MCP Server using FastMCP

Learn how to build your first MCP server using FastMCP and connect it to a large language model to perform real-world tasks through code.

94. Processing Structured and Unstructured Data with SuperAGI and LlamaIndex

SuperAGI's latest integration with LlamaIndex can extend the overall agent’s capability of understanding and working with a wide range of data types and sources.

95. Why AI Agents Work in Demos But Fail in Production

At 90% accuracy per step, a 20-step agent succeeds 12% of the time. Your demo didn't show you that. Production will.
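
The 12% figure can be checked with a few lines of Python. This is a minimal sketch that assumes every step succeeds or fails independently of the others; that assumption and the helper name `chain_success_rate` are illustrative, not taken from the article.

```python
def chain_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent steps."""
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # 20 steps at 90% accuracy each, as in the teaser above.
    rate = chain_success_rate(0.90, 20)
    print(f"{rate:.1%}")  # 12.2%
```

Under the same assumption, shortening the chain or raising per-step accuracy both change the result sharply, because the per-step rate is multiplied once for every step.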

96. The Science of AI Hallucinations—and How Engineers Are Learning to Curb Them

Why large language models hallucinate, how tech giants fight back, and what developers can do to make AI more truthful.

97. 9 Ways to Better Interact With a LLM (Using the Altman Drama!)

A brief document inspired by OpenAI’s latest drama, capturing the main techniques for interacting with an LLM to make it more relevant for our use case

98. OpenAI Makes it Easier to Build Your Own AI Agents With API

You can create sophisticated AI assistants that seamlessly handle everything from reversing strings to querying internal databases.

99. Stop Parsing Nightmares: Prompting LLMs to Return Clean, Parseable JSON

Prompt engineering guide for getting LLMs to return clean, parseable JSON every time, with templates, failure modes, and production-ready patterns.

100. An Essential Guide on How to Seamlessly Build AI-Enhanced APIs With OpenAI

Today, we're announcing the WunderGraph OpenAI integration/Agent SDK to simplify the creation of AI-enhanced APIs...

101. What the Big Three Consultancies are Missing About AI (And the Code That Proves It)

102. Stop Hallucinations at the Source: Hybrid RAG That Checks Itself

Stop hallucinations. Validate every answer. Combine vector and graph search.

103. New Formula Could Make AI Agents Actually Useful in the Real World

A mathematical framework for optimizing large language models in multi-agent systems using a formal objective function balancing brevity and context.

104. I Asked 5 LLMs to Write the Same SQL Query. Here's How Wrong They Got It

I tested 5 LLMs on 10 real SQL queries and graded them against actual data. Here's the scoreboard and the failure mode that should worry you most.

105. Tech Evolution: Tina Huang on AI in Education, Freelancing Success, and Productivity Hacks

The future of education and productivity with AI!

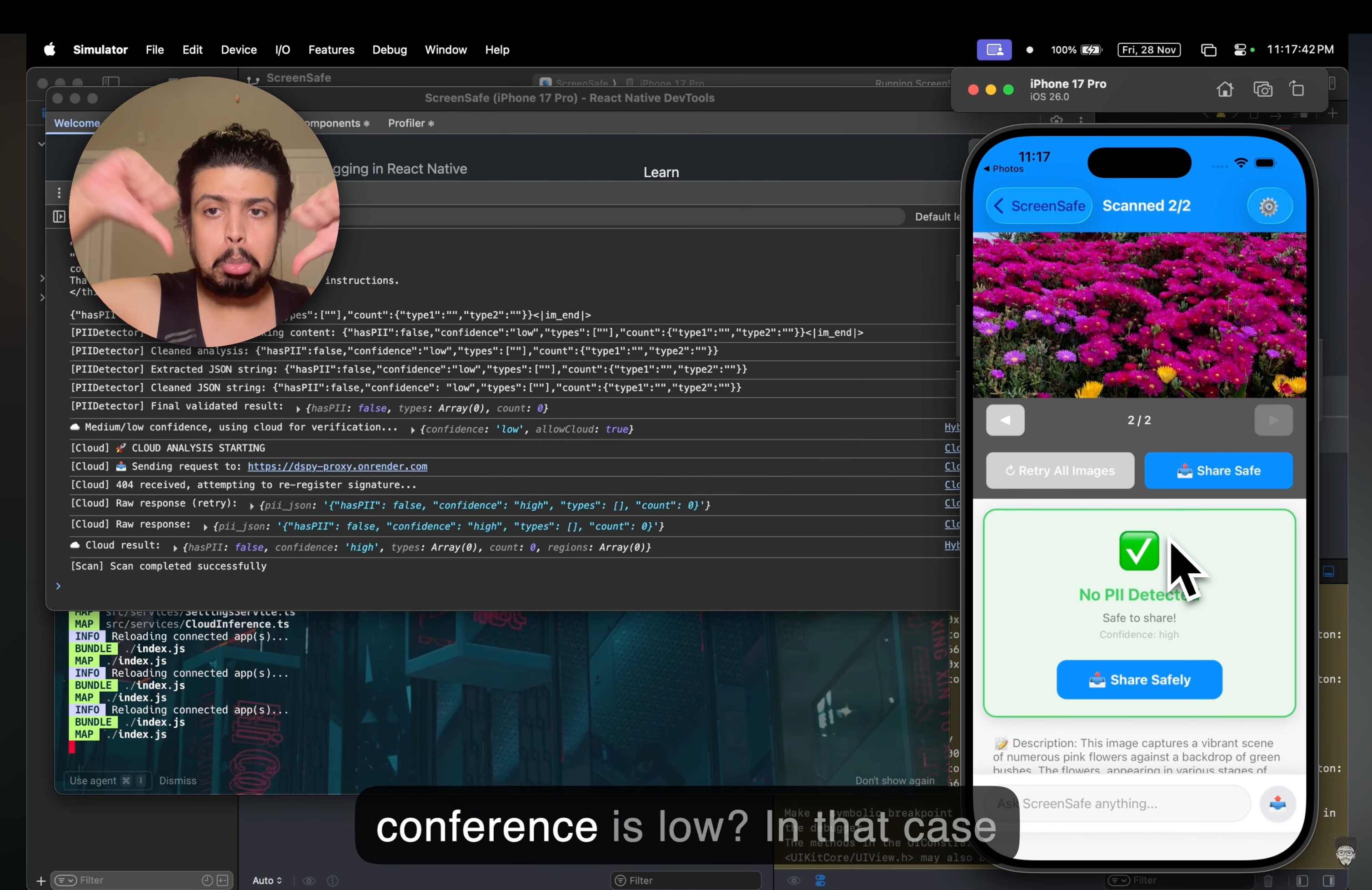

106. ScreenSafe: A Technical Chronicle of On-Device AI and Privacy-First Architecture

Building local AI is hell. Here is the engineering roadmap for running privacy-first LLMs on iOS and Android.

107. This Real-Time Graph Framework Now Lets You Switch from Neo4j to Kuzu in One Line

CocoIndex now supports Kuzu as a native graph database target, enabling real-time LLM-powered knowledge graphs with plug-and-play configuration.

108. Chatbot Memory: Implement Your Own Algorithm From Scratch

Manage short-term memory in chatbots, using a combination of storage techniques and automatic summarization to optimize conversational context.
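
The general idea of pairing a bounded message buffer with automatic summarization might look like the sketch below. It is an assumption-laden illustration, not the article's algorithm: `ShortTermMemory` and `truncate_summarizer` are hypothetical names, and the summarizer here simply truncates text where a real system would likely call a model.

```python
from typing import Callable


class ShortTermMemory:
    """Keeps recent messages verbatim and folds older ones into a summary."""

    def __init__(self, summarize: Callable[[str], str], max_messages: int = 4):
        self.summarize = summarize
        self.max_messages = max_messages
        self.summary = ""
        self.messages: list[str] = []

    def add(self, message: str) -> None:
        self.messages.append(message)
        if len(self.messages) > self.max_messages:
            # Move the oldest half of the buffer into the running summary.
            cut = len(self.messages) // 2
            old, self.messages = self.messages[:cut], self.messages[cut:]
            self.summary = self.summarize("\n".join([self.summary, *old]).strip())

    def context(self) -> str:
        """Text to place in the prompt: summary first, then recent messages."""
        head = [f"Summary: {self.summary}"] if self.summary else []
        return "\n".join(head + self.messages)


def truncate_summarizer(text: str, limit: int = 200) -> str:
    """Placeholder summarizer (assumption): keeps only the first `limit` characters."""
    return text[:limit]
```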

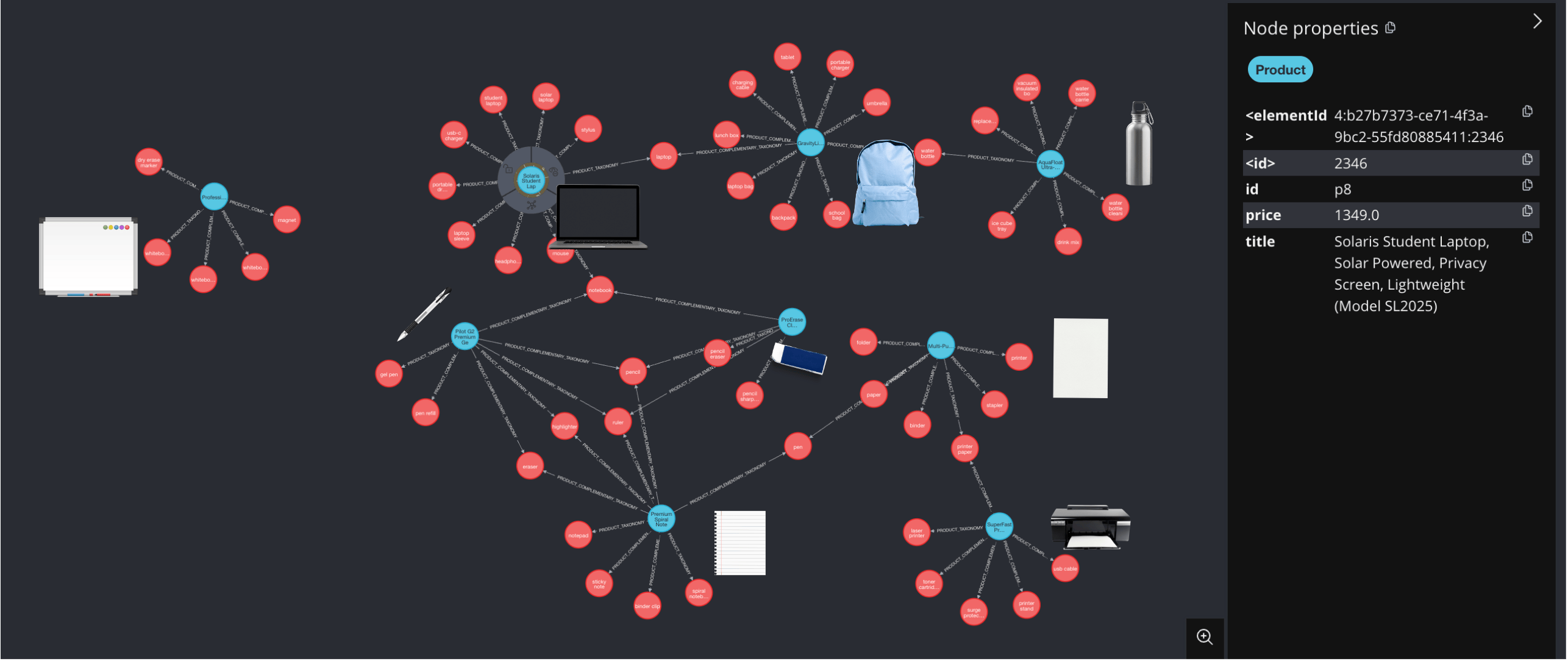

109. Build a Smarter Store: Let GPT Label Your Products and Predict What Sells Next

Build a real-time knowledge graph for product insights and recommendations with taxonomy and complementary taxonomy LLM extraction.

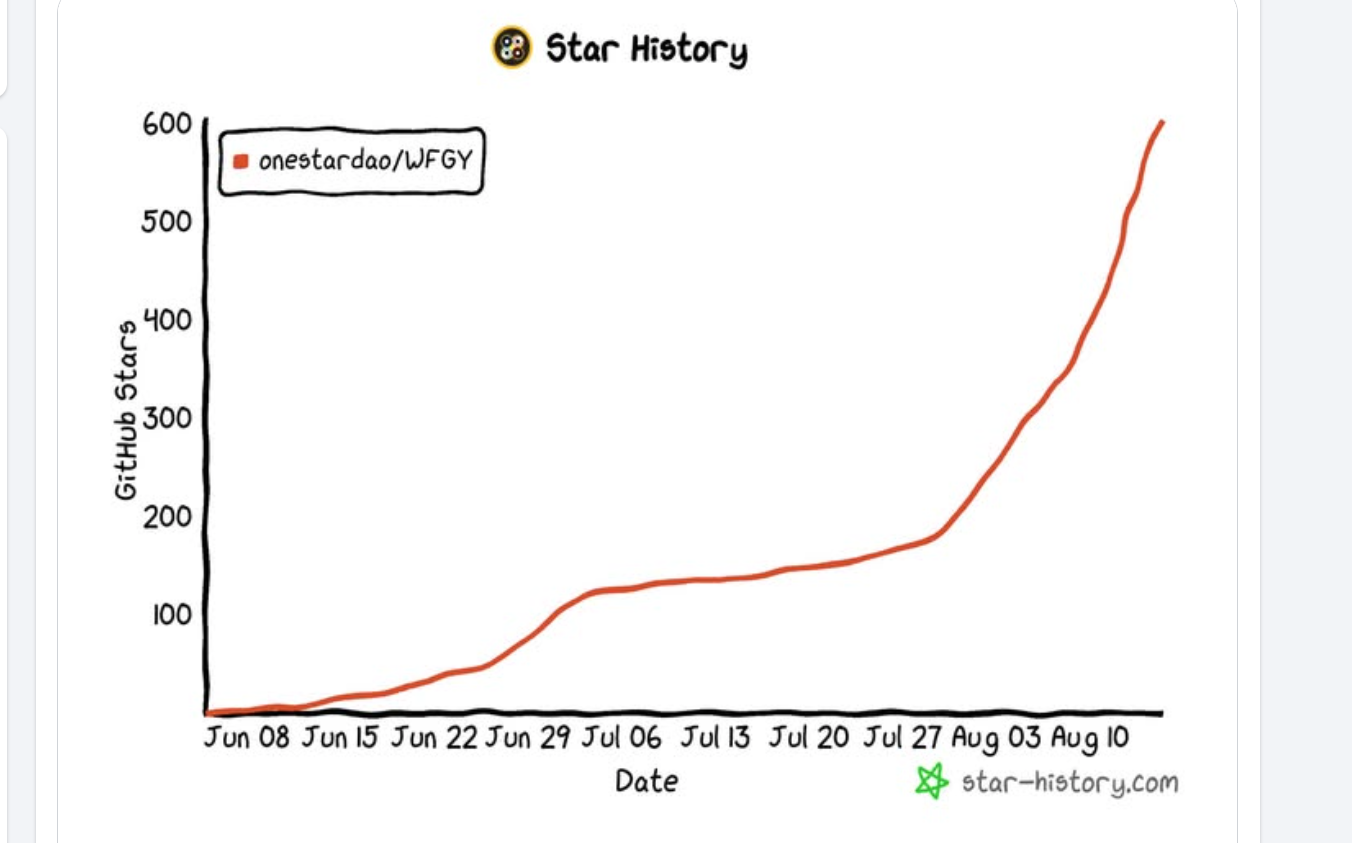

110. 16 Failure Modes of RAG and LLM Agents and How to Fix Them With a Semantic Firewall

A practical AI Problem Map: 16 failure modes in RAG and LLM agents with minimal repros and fixes via a model-agnostic semantic firewall (WFGY).

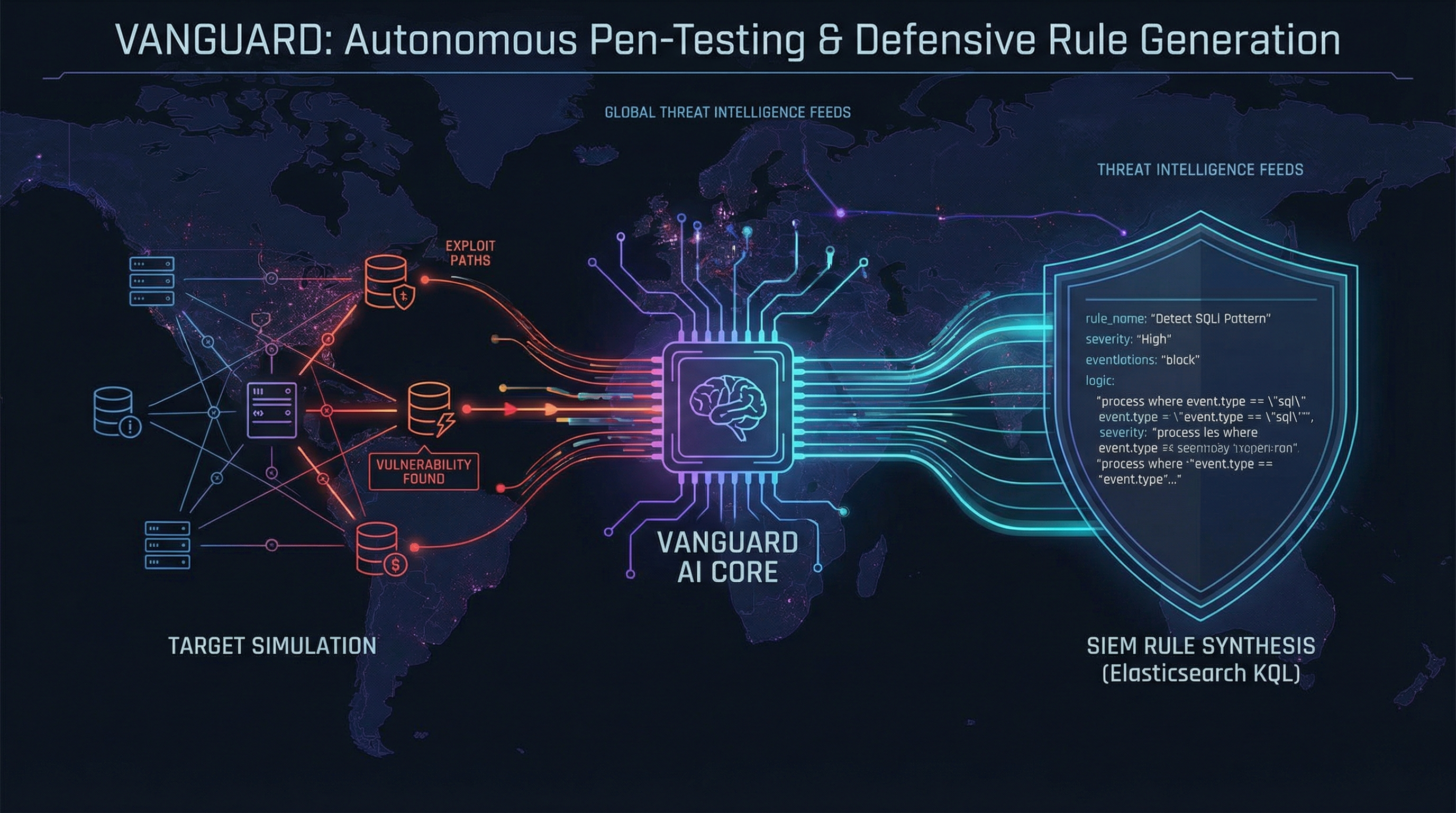

111. I Built an AI That Autonomously Penetration Tests a Target, Then Writes Its Own SIEM Defense Rules

VANGUARD is an open-source AI agent that autonomously pen-tests targets, explains its reasoning in real-time, and writes its own SIEM detection rules.

112. Evaluating Dynamic Knowledge Encoding: Experimental Setup for Multi-Hop Logical Reasoning

This article details the experimental setup for evaluating RECKONING, a novel bi-level learning algorithm, on three diverse multi-hop logical reasoning datasets

113. How to Use Coze Shortcuts for Creating Buttons and Telegram Commands

Learn to use Coze shortcuts for creating user-friendly buttons and Telegram commands to enhance your chatbot's efficiency and user experience

114. No More AI Costs: How to Run Meta Llama 3.1 Locally

I’ll show you how to run the 8-billion-parameter version of Llama 3.1 locally.

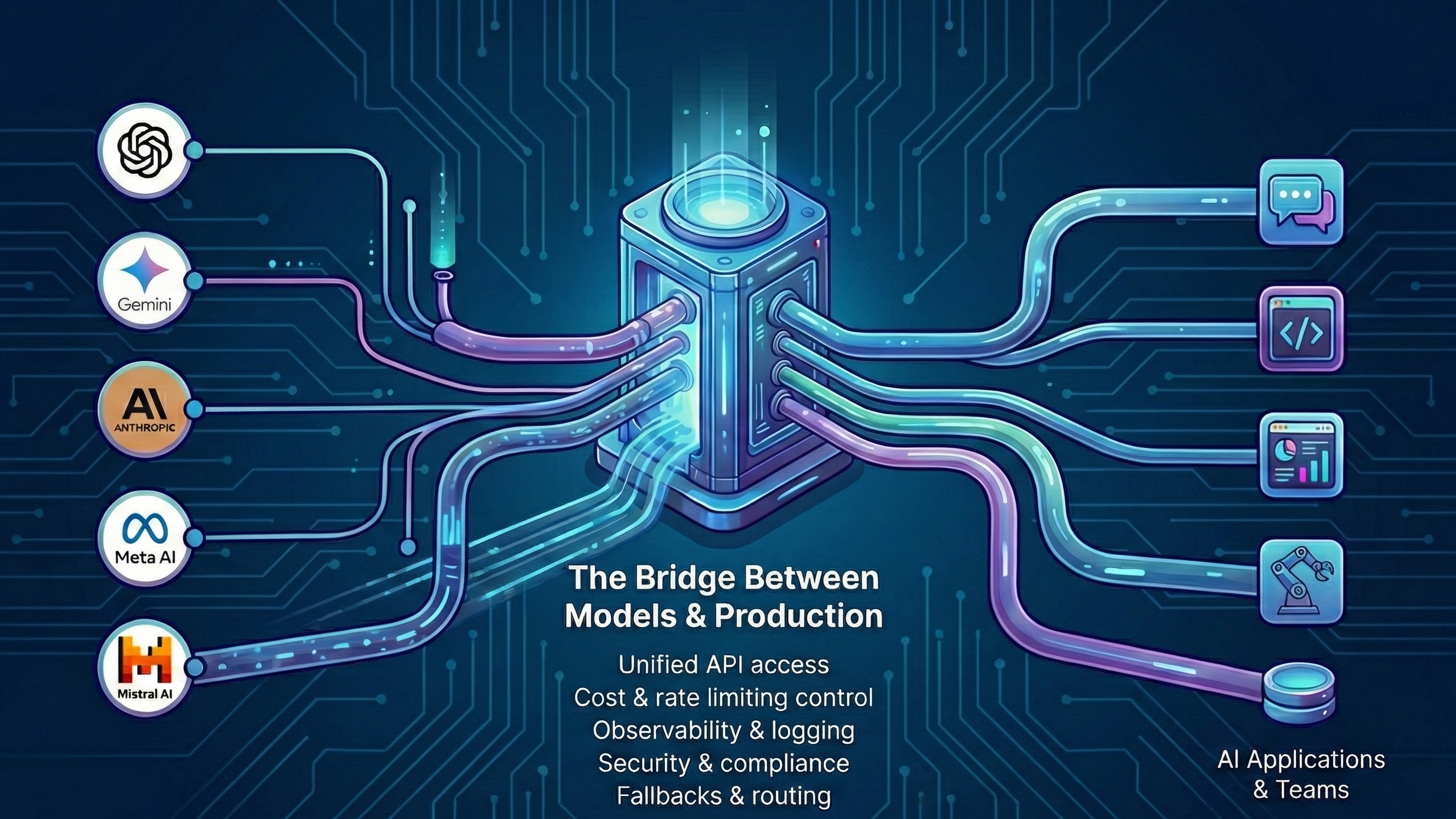

115. The Moment Your LLM Stops Being an API—and Starts Being Infrastructure

AI gateways explained: why direct LLM API calls fail in production, how gateways handle cost, reliability, and observability, and when teams actually need one.

116. How to Leverage AI in Learning Management Systems

Discover the transformative power of integrating AI into Learning Management Systems (LMS) for enhanced educational experiences.

117. The Most Ruthless System Architect You’ll Ever Hire is an LLM

This article proposes a mindset shift in using LLMs: instead of using them to generate code, use them to aggressively critique and "break" architectural designs

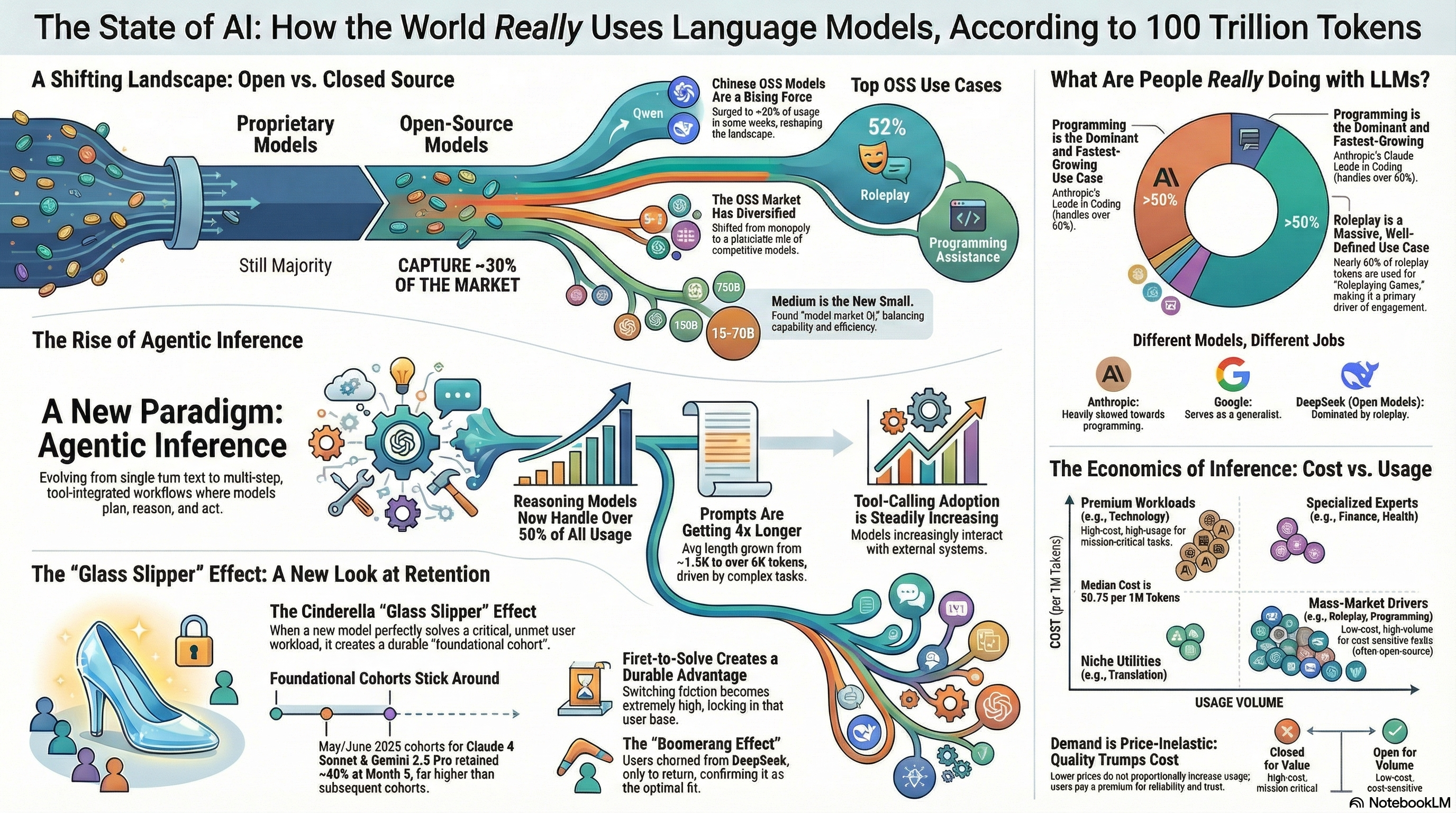

118. Beyond the Hype: 5 Surprising Truths from a 100 Trillion Token Study of AI

OpenRouter study reveals a much more complex and surprising picture of how people use Large Language Models (LLMs).

119. CEO Fires His Devs and Gives Their Job to an LLM Instead

CEO explains how his company stopped coding manually and built a full-stack agent-based development process.

120. Weather Image Recognition with AI: Dataset Labeling, Model Training, and Real-world Usecases

Accurate labeling was shown to be crucial, as it improved the performance of a Convolutional Neural Network (CNN).

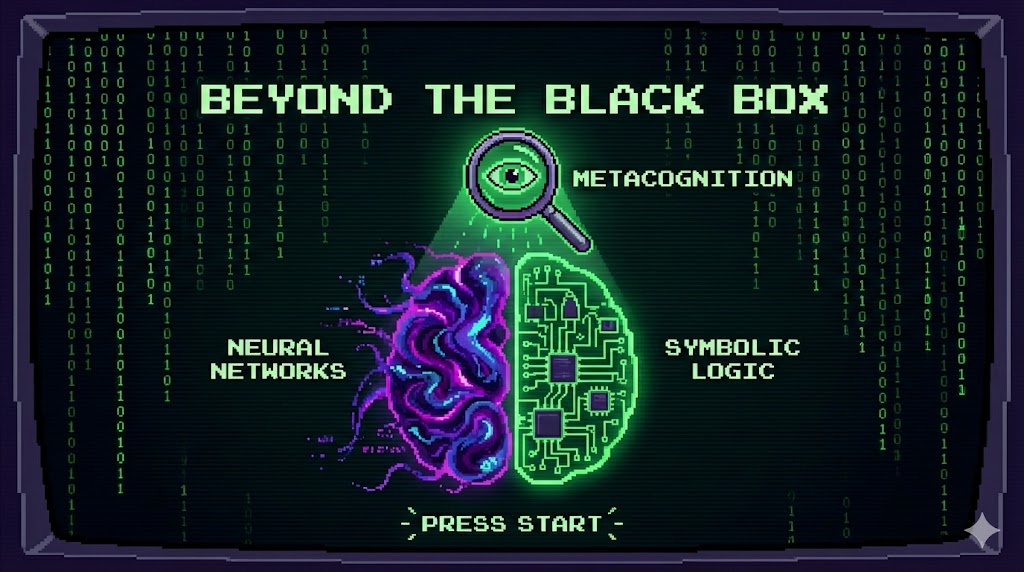

121. Beyond the Black Box: Neuro‑Symbolic AI, Metacognition, and the Next Leap in Machine Intelligence

Neuro-symbolic AI is maturing fast. The next leap is metacognition: AI that can monitor, explain and adapt its own reasoning instead of staying a black box.

122. How To Find The Best LLM For Your Project

Learn how you can test an LLM with a handful of queries to assess how well it fits your project requirements.

123. Meta's 2023 Connect Conference: A Spotlight on Innovative AI Features

Meta's 2023 Connect Conference: A Spotlight on Innovative AI Features

124. Exploring LLMs and AI Education with Luis Serrano

Luis shares his personal experience and thoughts on whether a Ph.D. is necessary for working in AI.

125. From 50 Pages of Handwritten Notes to a Digital Manuscript with Python and AI

Apple's HEIC (High-Efficiency Image Container) is great for saving space, but not so great for compatibility.

126. Building Modular Speech-to-Text Workflows: Architecture and Performance Analysis of a CLI AI Agent

Learn how to build modular speech-to-text systems with a CLI AI agent. Architecture patterns for cross-platform audio recording and AI transcription.

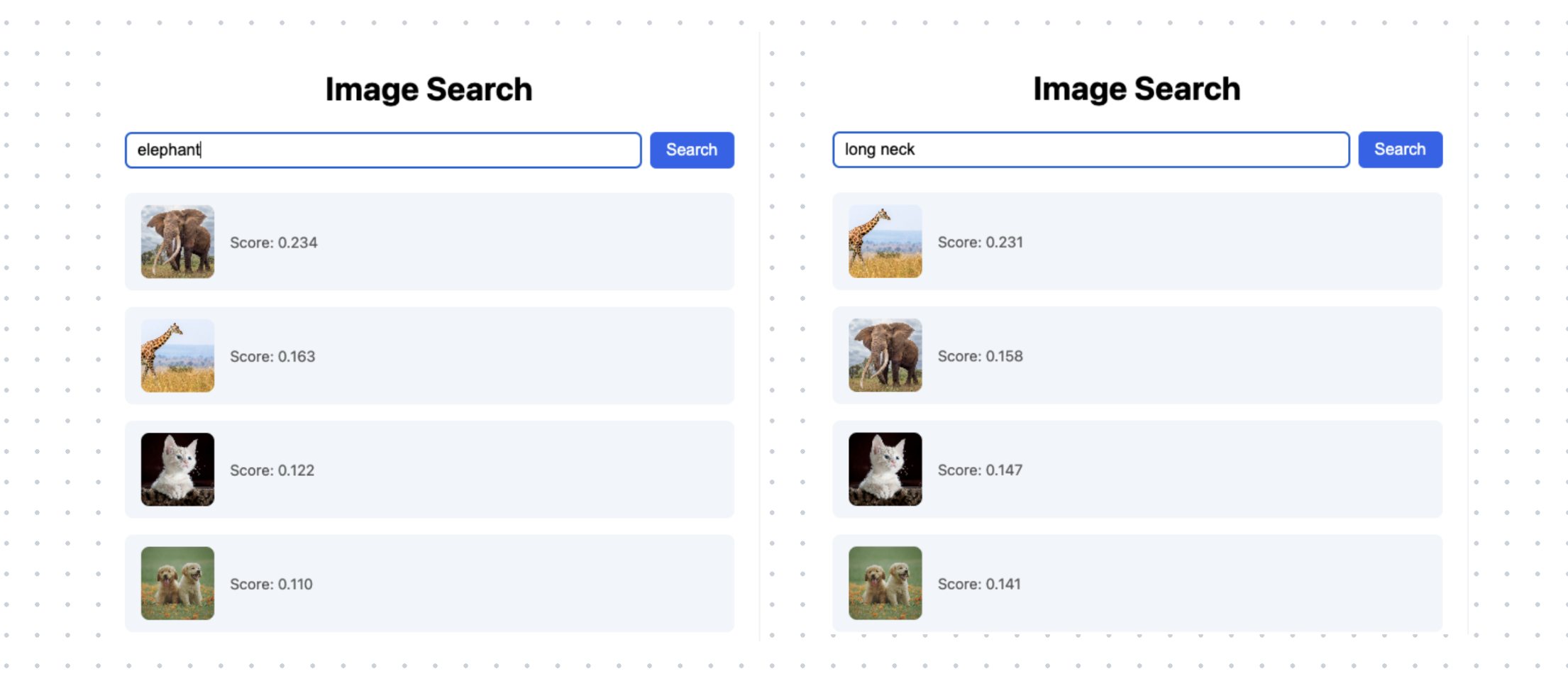

127. How to Build Live Image Search With Vision Model and Query With Natural Language

In this blog, we will build live image search and query it with natural language.

128. The Potential of Large Language Models (LLMs) in Diabetes Management

LLMs hold immense potential for improving patient care, enhancing communication, and providing personalised support.

129. Hacking Cancer with CrewAI and Bees

The future of AI isn't one giant brain, but an orchestrated committee of specialists.

130. Behind the Scenes of Self-Hosting a Language Model at Scale

Large Language Models (LLMs) are everywhere, from everyday apps to advanced tools. But what if you need to run your own model?

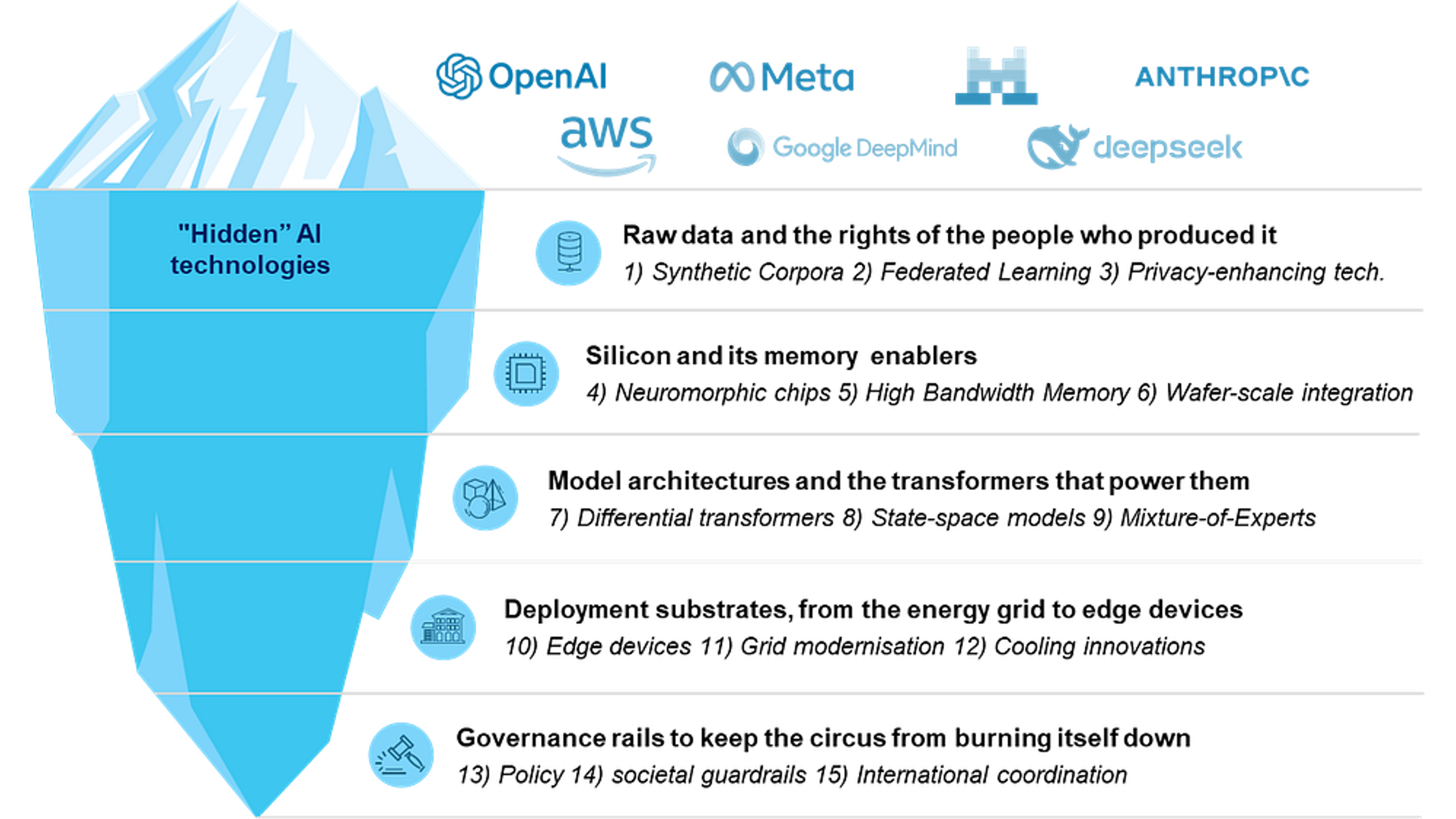

131. 15 Core Ideas Shaping the Future of AI—and Why They Matter Today

A lesson on everything that has to go right before you use ChatGPT.

132. Float vs Int Confidence Scores: Why LLM Output Format Changes Model Behavior

A minimal experiment shows LLM confidence outputs shift with number format: decimals are more conservative and consistent, while some models break in 0–100 mode

133. FogAI Part 3: The Knowledge Extraction Layer (Why Using an LLM for NER is Architectural Malpractice)

Here is the architectural breakdown of how I extract the "magic" speed.

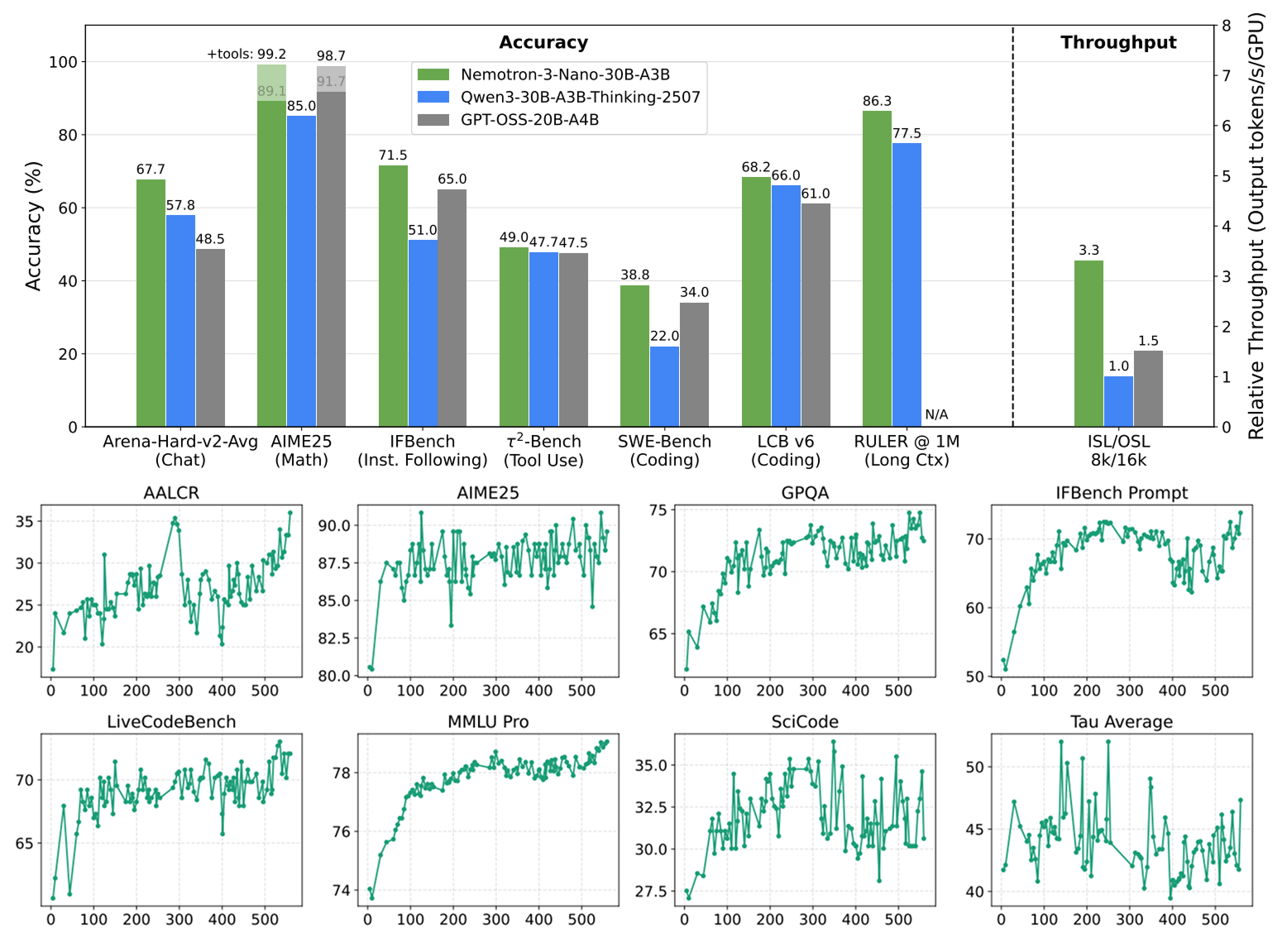

134. The NVIDIA Nemotron Stack For Production Agents

NVIDIA just dropped a production-ready stack where speech, retrieval, and safety models were actually designed to compose.

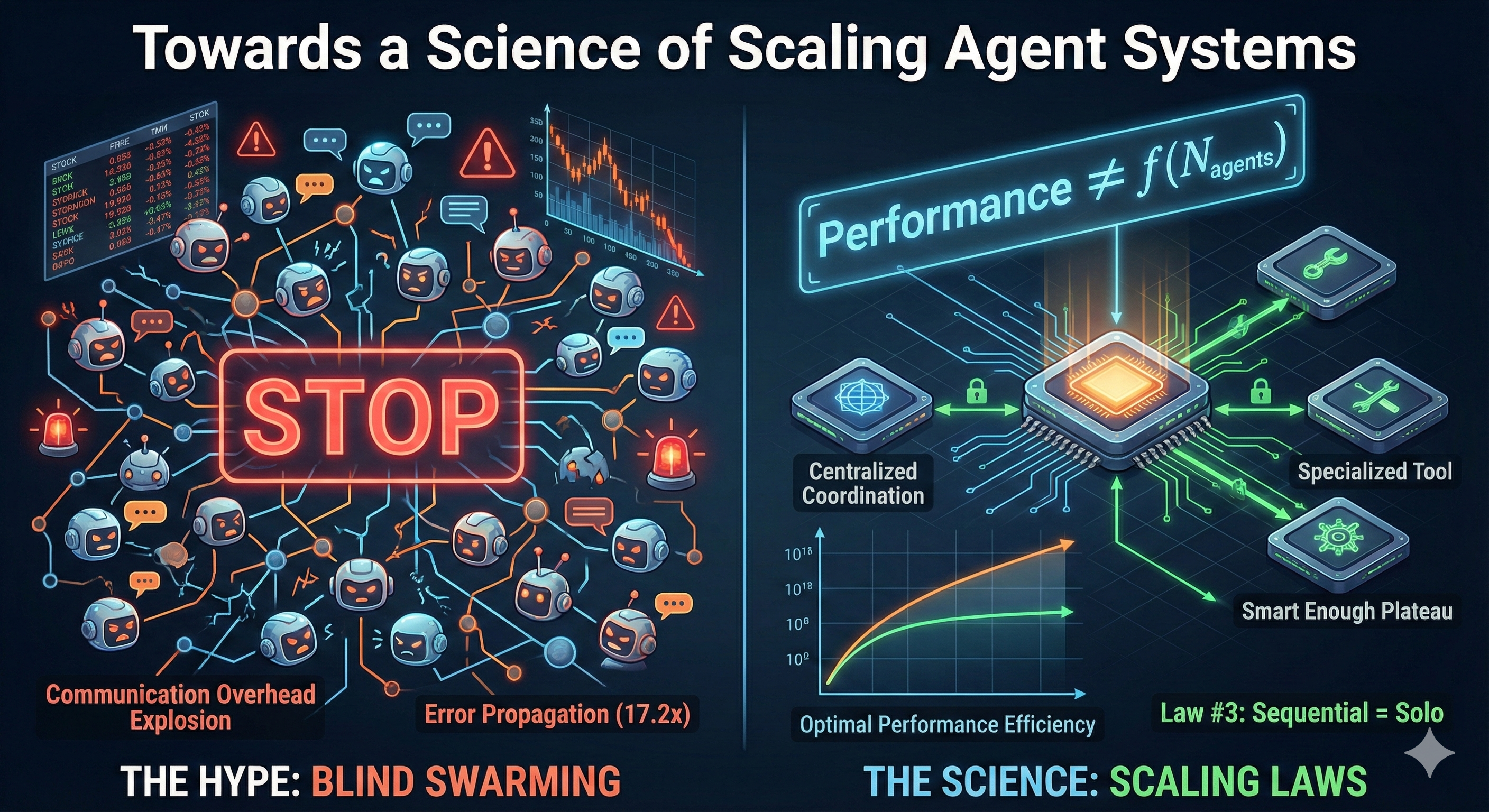

135. Stop Blindly Building AI Swarms: The New "Scaling Laws" for Agents Are Here

Researchers at MIT, Google, and others have released the first-ever 'Scaling Laws for AI Agents'

136. Seller Inventory Recommendations Enhanced by Expert Knowledge Graph with Large Language Model

This paper proposes an item recommender system for sellers that could potentially address market inefficiencies.

137. When Context Becomes a Drug: The Engineering Highs and Hangovers of Long-Term LLM Memory

Longer LLM memory makes AI sluggish & confused. True progress isn't infinite context, but engineered forgetfulness: pruning noise to restore focus and performance.

138. Open Source Project Introduces In-browser Vector Databases to Train Autonomous Agents

The possibilities for in-browser vector databases are endless, from personalized search engines to fully decentralized IPFS intelligent entities.

139. Build a Two-Pane Market Brief MVP in Streamlit

Build a query-first Streamlit UI for your financial AI agent. Learn to render EODHD data and integrate LangChain into a market copilot MVP project

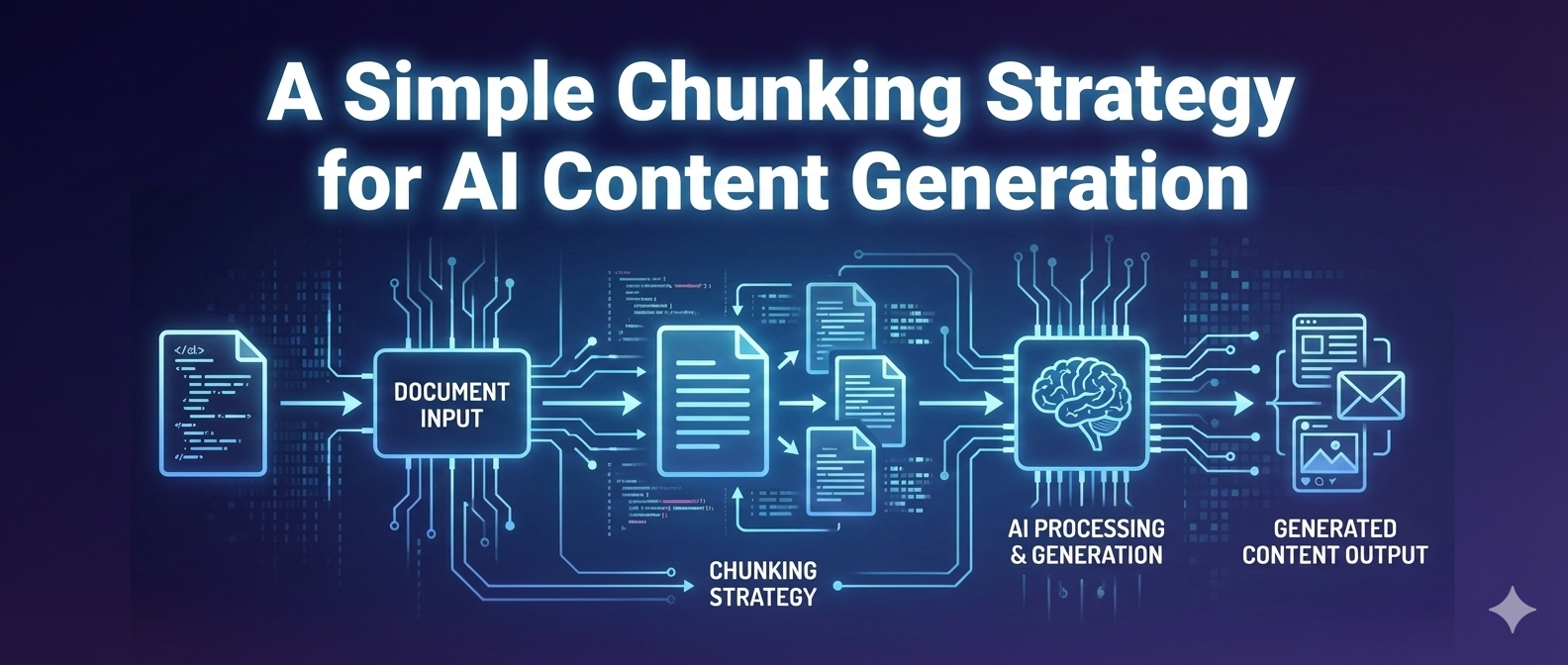

140. Intelligent Document Processing: A Simple Chunking Strategy for AI Content Generation

A practical 5-step chunking approach that cuts token costs by 87% while preserving the content that matters most.

141. Your AI Co-Pilot Needs a Human Boss: Building a Real Human-in-the-Loop Workflow for Logistics

Stop thinking of AI as a black box that spits out answers. The real power comes when you architect a system where the human is the final, strategic checkpoint.

142. Building AI Agents That Can Control Cloud Infrastructure

AI agents are starting to participate directly in development workflows. Learn how to work with them.

143. Why I Built Allos to Decouple AI Agents From LLM Vendors

Allos is a Python SDK for building AI agents that can switch between OpenAI, Anthropic, and more with a single command.

144. How to Build Your First AI Agent and Deploy it to Sevalla

Learn how to build and deploy your first AI agent using LangChain and Sevalla.

145. Revisiting LangChain4J 6 Months Later

The main focus of this post is the integration of an MCP server in a LangChain4J app.

146. The Landscape of Open-Source LLMs is Expanding Fast

LLaMA is a state-of-the-art LLM designed for research that addresses the need for more accessible platforms.

147. Stop Fighting with Pandas: Let Prompt Drive Your DataFrames

How to use GPT‑5‑level LLMs as a smart Pandas co‑pilot: better prompts, cleaner code, faster dataframes, and fewer late‑night Stack Overflow sessions.

148. RECKONING: Reasoning through Dynamic Knowledge Encoding: Generalization to Real-World knowledge

This article evaluates RECKONING's generalizability on the real-world multi-hop logical reasoning task, FOLIO.

149. How I Built a Healthcare Chatbot Without Prompt Engineering—Thanks to Parlant’s Guideline-Driven AI

A conversational healthcare agent built with Parlant, designed to assist patients with appointment rescheduling, doctor availability, and lab work preparation.

150. A Quick Guide to Quantization for LLMs

Quantization is a technique that reduces the precision of a model’s weights and activations.
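
To make the idea concrete, here is a small sketch of symmetric int8 quantization, one common scheme among several and not necessarily the one the article covers. The function names are hypothetical, and plain Python lists stand in for weight tensors.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Each restored value differs from the original by at most about scale / 2.
print(q, restored)
```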

151. Hugging Face's FineVision: Messy Data is Better Than You Think

152. The AI Landscape With Jerry Liu: Bridging RAG Systems, Documentation, and Multimodal Models

In this week’s episode of the What's AI podcast, I, Louis-François Bouchard, engage in a super informative discussion with Jerry Liu, CEO and co-founder

153. DreamLLM: What We Can Conclude From This Comprehensive Framework?

In this paper, we present DREAMLLM, a comprehensive framework for developing MLLMs that not only understand but also create multimodal content.

154. AI Is Lowering the Entrance Fee to Imagination

The enterprise case for AI is ROI; the human case is meaning.

155. When I Fed Poems to an LLM, I Realized I Was Measuring Temperature with a Screwdriver

156. Securing AI: Concerns & Immune Systems for Emerging Technologies

AI is one of the fastest growing technologies without a mature bedrock of security and reliability support. This covers a full stack AI security consideration.

157. AI's Black Box Problem: Can Web3 Provide the Key?

AI is evolving faster than trust can keep up. Discover how Web3 can bring transparency, auditability, and governance to decentralized AI systems.

158. LLM Features Need Budgets: How to Control Cost Without Killing Product Quality

LLM spend usually leaks through unbounded prompts, retries, and low cache hit rates.

159. 'I’ve Never Used ChatGPT' Isn’t a Flex — It’s a Missed Opportunity

A senior developer reflects on how LLMs are not threats but powerful tools - and why adapting to them is a matter of survival, not speculation.

160. Mira Network Launches Klok: A ChatGPT Alternative with Multiple AI Models and Rewards

Mira Network launches Klok, a multi-LLM chat app built on its decentralized infrastructure to ensure AI outputs are verified, unbiased, and secure.

161. What Would a 'Sentient' Large Language Model Do if It was Given a Human-Like Robot Body?

What would an LLM do if it were given a human body? This is the answer provided to me by HuggingChat, an LLM on the HuggingFace website.

162. The Death of SaaS? When Internal Tools Can Be Built Faster Than You Can Buy Them

A real-world experiment in building a production-ready product with AI tools from prompt design to deployment without a traditional engineering team.

163. I Asked the Mixtral LLM a Question About AGI. This was the Shocking Response.

Why is everyone so worried about the progress of AGI? This article has the answer - literally - from an AI in the form of a Large Language Model.

164. RECKONING Method: Bi-Level Optimization for Dynamic Knowledge Encoding and Robust Reasoning

This article introduces RECKONING, a novel method utilizing bi-level optimization to teach language models to reason

165. The HackerNoon Newsletter: Best AI Visibility Tools for 2025 (10/26/2025)

10/26/2025: Top 5 stories on the HackerNoon homepage!

166. Multi-Task vs. Single-Task ICR: Quantifying the High Sensitivity to Distractor Facts in Reasoning

The results highlight ICR's vulnerability to interference and motivate the need for more robust, distraction-mitigating approaches like RECKONING.

167. I Asked Gemini 1.5 Pro About AGI Twice. This Was Its Shocking Answer.

AGI has shocking implications, according to Gemini 1.5 Pro. Have a read. Be ready to be stunned!

168. Stop Summarizing - Start Shaping

Stop wasting tokens on AI summaries. Learn how to architect a strategic 'Shaper' agent using Gemini to reclaim 32 hours of founder liquidity per month.

169. Gemini 1.5 Unleashes Unprecedented Context for AI Applications

Gemini 1.5 Pro can handle up to 1 million tokens, far above Gemini 1.0 Pro, which had a context window of 32,000 tokens.

170. Stop Fine-Tuning Everything: Inject Knowledge with Few‑Shot In‑Context Learning

Use Few-Shot In-Context Learning to inject fresh, domain-specific knowledge into LLMs without fine-tuning. Practical patterns, examples, and failure modes.

171. How to Simplify Multi-LLM Integration With KubeMQ: The Path to Scalable AI Solutions

Using a message broker as a router to handle requests between your apps and LLMs simplifies integration, improves reliability, and scales easily.

172. Pushing the Limits: Running Local LLMs and a 24/7 Personal News Curator on 4GB of RAM

The Curator App uses Playwright to browse sites, validates the articles, and then uses a local LLM to check if the content actually matches my taste.

173. Evaluating Systematic Generalization: The Use of ProofWriter and CLUTRR-SG in LLM Reasoning Research

This article provides a detailed description of two multi-hop logical reasoning datasets: ProofWriter and CLUTRR-SG.

174. The HackerNoon Newsletter: Can ChatGPT Outperform the Market? Week 26 (1/26/2026)

1/26/2026: Top 5 stories on the HackerNoon homepage!

175. VLN: LLM and CLIP for Instance-Specific Navigation on 3D Maps

The Language-Guided Navigation module leverages an LLM (like ChatGPT) and the open-set O3D-SIM.

176. Analyzing ReLUfication Limitations: Enhancing LLM Sparsity via Up Projection

Learn why modifying the up projection component is key to achieving higher LLM activation sparsity.

177. Evaluating Novel 3D Semantic Instance Map for Vision-Language Navigation

The experimental section details the evaluation of the O3D-SIM representation and its integration with ChatGPT for Vision-Language Navigation (VLN).

178. TurboSparse: Faster LLMs via dReLU Activation

Boost LLM speeds by 2–5x with TurboSparse. Use dReLU to reach 90% sparsity in Mistral and Mixtral models without losing performance.

179. How to Build an AI That Roasts Your Spending Habits (3 hours Weekend Project)

Users upload their bank statement, get roasted by GPT-4 with savage, witty humor.

180. Unlocking Autonomous AI: iExec's Matthieu Jung on Building Trust in a Decentralized Future

Explore the future of AI with iExec's Matthieu Jung as he discusses 'Trusted AI Agents' – a decentralized approach to AI automation prioritizing privacy.

181. Why Embeddings Are the Back Bone of LLMs

You’re not alone if the term “embeddings” has ever left you scratching your head or feeling lost in a sea of technical jargon.

182. The Machine Learning Stack Is Being Rebuilt From Scratch Here's What Developers Need to Know in 2026

From foundation models to agentic pipelines - 6 machine learning trends developers must understand to build reliable AI systems in 2026.

183. Life in 2050 According to Jail-Broken GPT-4o

What will happen in reality in 2050? Here is the true view of GPT-4o, without the filter and safeguards that OpenAI has surrounded it with!

184. The New Software Stack of Code, Prompts, and Policies

Here is the breakdown of the new reality, and why "Prompt Engineer" isn't just a meme—it's your new architectural responsibility.

185. From 322,000 Lines to 43,000: Why Cursor Deleted Their CMS

The old world is collapsing. UX teams. UI frameworks. Backend services. Middleware.

186. The Extreme LLM Compression Evolution: From QuIP to AQLM With PV-Tuning

The Yandex Research team has developed a new method of achieving 8x compression of neural networks.

187. Why Multimodal AI is the Future of LLMs

Text-only and image-only AI models are a thing of the past. Here's why multimodal AI is the future.

188. How to Build and Deploy a Blog-to-Audio Service Using OpenAI

Learn how to turn any blog post or text into a clear, natural-sounding audio file using OpenAI's text-to-speech API.

189. Stop Building Agentic Workflows for Everything

Agentic AI is powerful, but not every workflow needs it. Learn when to use deterministic automation vs AI agents

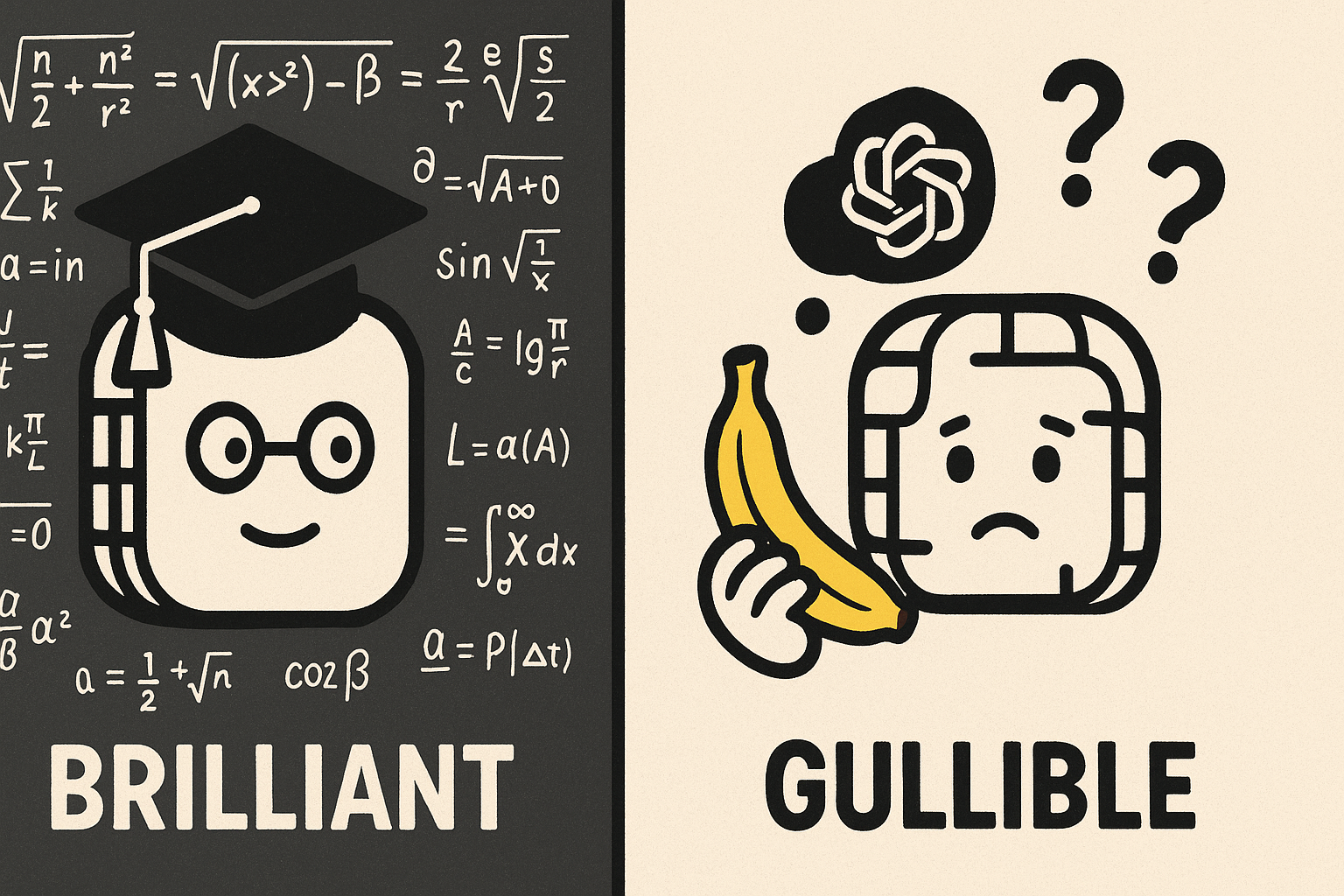

190. When Brilliant AI Lacks Common Sense: The "Gullible LLM" Phenomenon

Even though today's SOTA LLMs can solve Ph.D.-level math, they are gullible in real-world relationships and commerce. What are the causes and solutions?

191. MetaCene Launches World's First GameFi On HyperEVM With LLM Trained By Unlocked GPU Infrastructure

192. The Noonification: Blockchain ❤️s WASM: Chapter Arbitrum (11/6/2023)

11/6/2023: Top 5 stories on the Hackernoon homepage!

193. Why Transcribing Your Podcast Could be a Game-changer for Your SEO

Generate more content from your podcast and grow your audience.

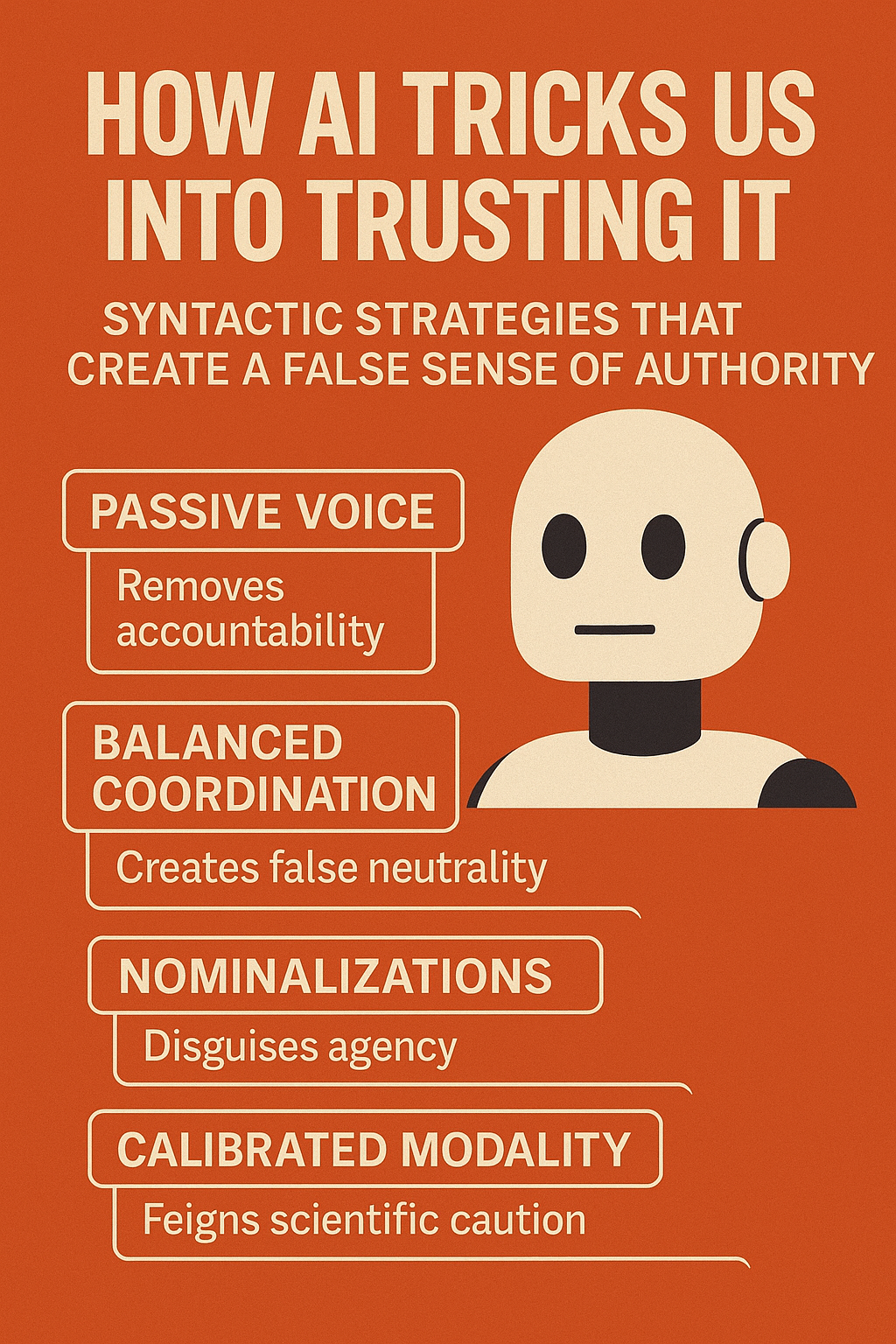

194. AI’s ‘Neutral Voice’ Is a Structural Illusion

AI doesn't speak objectively—it mimics authority through syntax. Learn why neutrality in language models is more structure than substance.

195. I Stopped Writing Code and You Can Too: 11 AI Code Gen Hints.

Learn my 11 hints on how to make code with AI and be happy about it.

196. How I Built My First AI App: The Story Behind Photfix

I built my first AI app, Photfix, to upscale and enhance low-quality images. From slow APIs to GPU tuning and Docker optimizations.

197. AI This, AI That. Did We All Forget How to Actually Market?

A friendly reminder that “AI-native” is not a personality trait, and your customers still just want you to solve their problems.

198. Ethos Ex Machina: How AI Creates Trust Without Truth

AI does not need truth to sound credible. Discover how syntax tricks us into trusting unverified outputs across healthcare, law, and policy.

199. Gigapixel Pathology: MIVPG Outperforms Baselines in Medical Captioning

MIVPG significantly outperforms baselines by using instance correlation and shows strong domain adaptation over epochs.

200. The Agentic Paradigm Shift: Why Your "Bot" Just Became Obsolescent

The shift from bots to agents isn't just renaming. It's a change in who does the thinking — from developer at design time to model at runtime.

201. LLM Evals Are Not Enough: The Missing CI Layer Nobody Talks About

Why LLM evals alone do not make reliable release gates, and why teams need an explicit policy layer to turn eval results into trustworthy CI decisions.

202. The HackerNoon Newsletter: Code Smell 282 - Bad Defaults and How to Fix Them (12/3/2024)

12/3/2024: Top 5 stories on the HackerNoon homepage!

203. Sick of Reading Docs? This Open Source Tool Builds a Smart Graph So You Don’t Have To

CocoIndex can build and maintain a knowledge graph from a set of documents, using LLMs (like GPT-4o) to extract structured relationships between concepts.

204. The HackerNoon Newsletter: What to Do While I Wait for ChatGPT (6/13/2025)

6/13/2025: Top 5 stories on the HackerNoon homepage!

205. The Learning Illusion - How Superintelligence is Dumb

The prevailing view of intelligence is wrong.

206. The Noonification: Patience is Beautiful (6/18/2023)

6/18/2023: Top 5 stories on the HackerNoon homepage!

207. Batch Inference: The 50% Cost Cut You Keep Putting Off

Most providers offer 50% off on batch inference. Two serverless patterns to actually use it: SQS polling for any provider, EventBridge for Bedrock.

208. I Built an AI-Powered Local SEO Strategist for Veterans—Here’s the Full Playbook

AI Engineering expert Justin built an AI-powered "Mission Control" that automates the entire strategic process.

209. The HackerNoon Newsletter: How to Write Content that E-E-A-Ts (5/2/2025)

5/2/2025: Top 5 stories on the HackerNoon homepage!

210. The Noonification: How Atoms Fixed Flux (6/5/2023)

6/5/2023: Top 5 stories on the HackerNoon homepage!

211. The Noonification: LiteLLM Configs: Reliably Call 100+ LLMs (9/22/2023)

9/22/2023: Top 5 stories on the HackerNoon homepage!

212. The HackerNoon Newsletter: We Are Very Early in Our Work With LLMs, - Prem Ramaswami, Head of Data Commons at Google (10/14/2025)

10/14/2025: Top 5 stories on the HackerNoon homepage!

Thank you for checking out the 212 most-read blog posts about LLMs on HackerNoon.

Visit the Learn Repo to find the most-read blog posts about any technology.