Let's learn about Deep Learning via these 500 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most read blog posts about any technology.

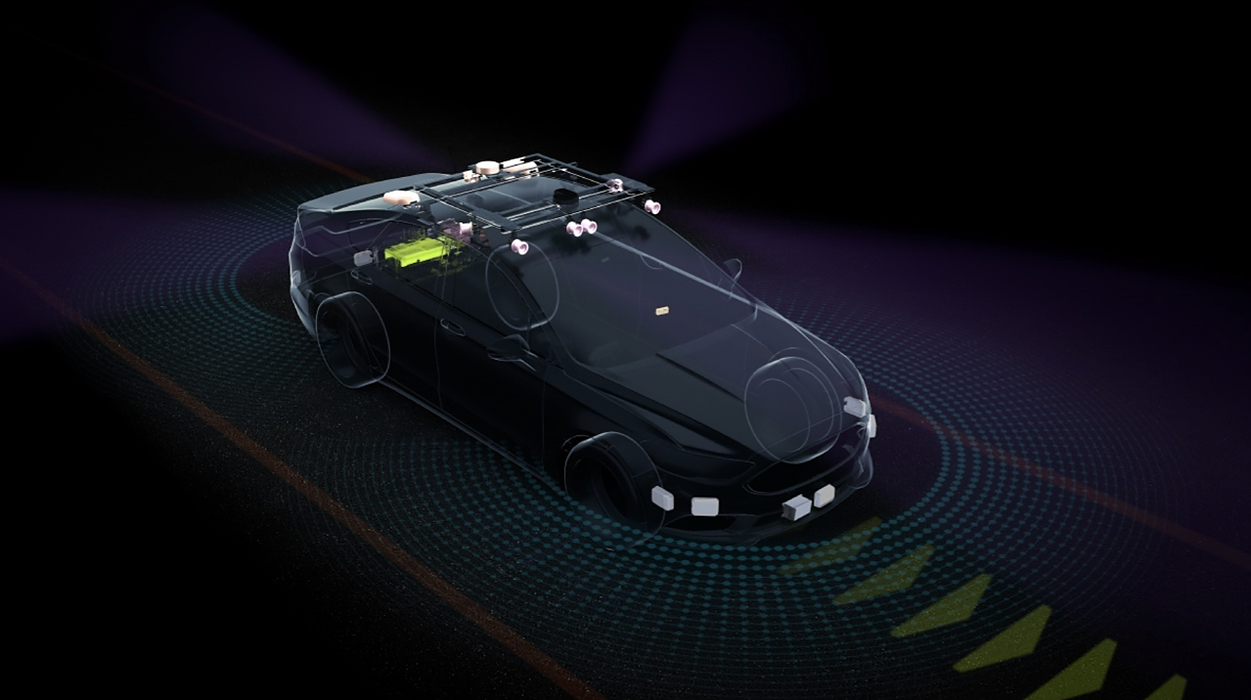

Curious about deepfakes and self-driving cars? Everyone should have an opinion on the algorithms that mimic the human brain.

1. Deep Learning vs. Machine Learning: A Simple Explanation

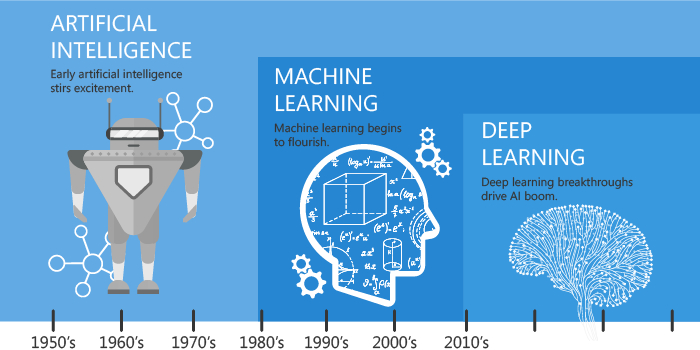

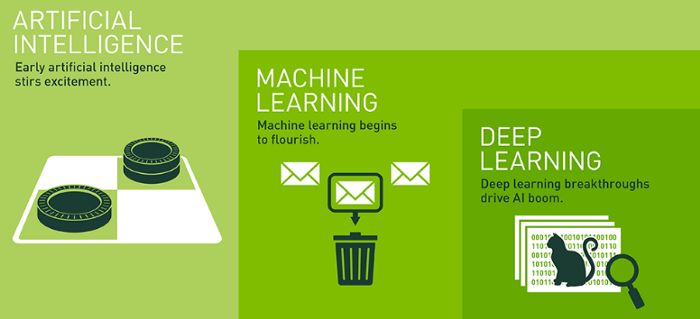

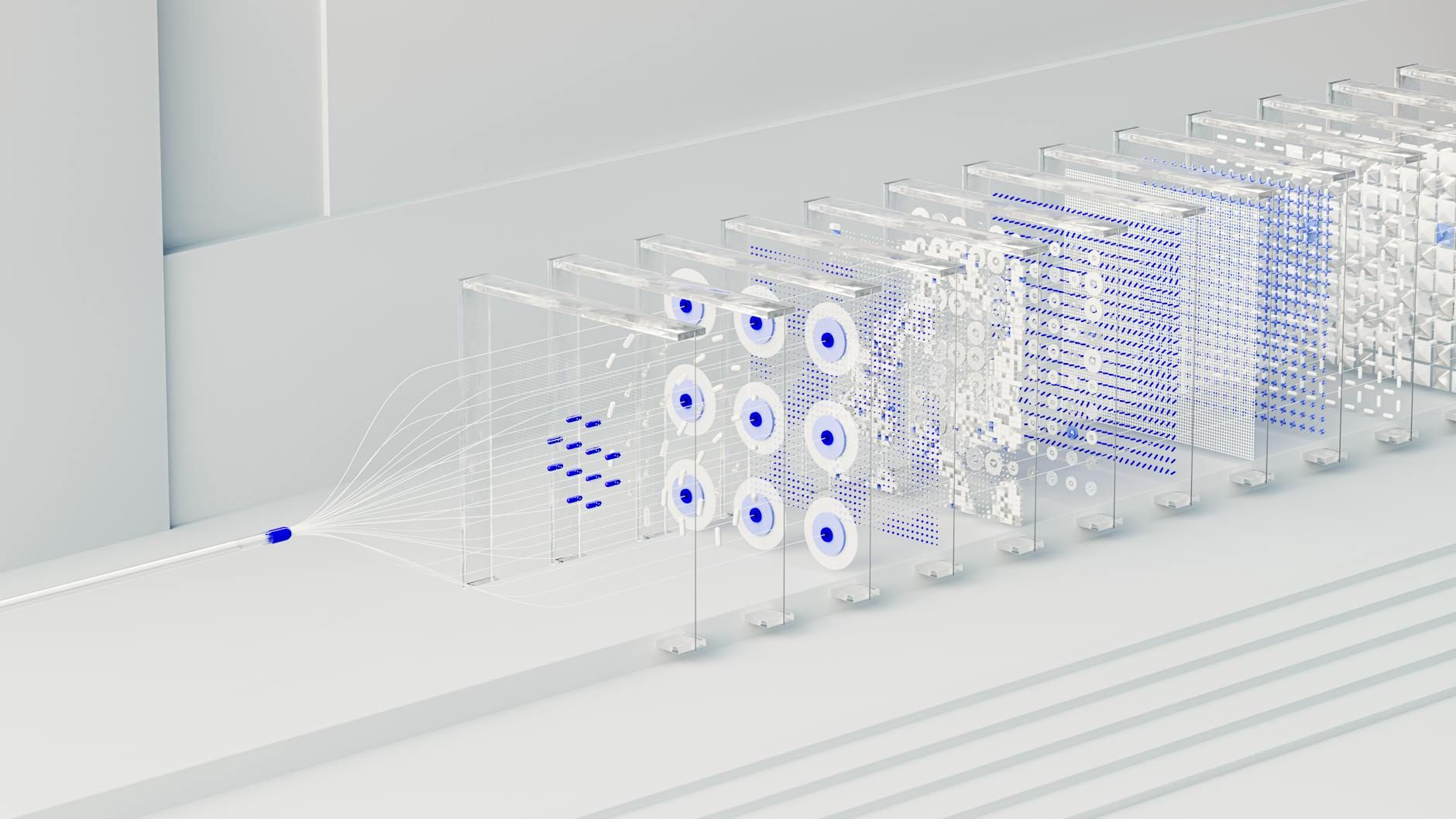

Machine learning and deep learning are two subsets of artificial intelligence that have garnered a lot of attention over the past two years. If you’re here to understand both terms in the simplest way possible, there’s no better place to be.

2. Gradient Descent: All You Need to Know

What’s the one algorithm that’s used in almost every Machine Learning model? It’s <strong>Gradient Descent</strong>. There are a few variations of the algorithm but this, essentially, is how any ML model learns. Without this, ML wouldn’t be where it is right now.
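
To make the idea concrete, here is a minimal sketch of plain gradient descent on a one-dimensional toy loss. The loss function, learning rate, and step count are assumptions chosen for illustration and are not taken from the linked post.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = x0
    for _ in range(steps):
        x = x - learning_rate * grad(x)  # move downhill by a small step
    return x


# Toy loss (an assumption for illustration): f(x) = (x - 3)^2, so f'(x) = 2 * (x - 3).
def toy_gradient(x):
    return 2.0 * (x - 3.0)


x_min = gradient_descent(toy_gradient, x0=0.0)
print(x_min)  # close to 3.0, the minimizer of the toy loss
```

Real models apply the same update to millions of parameters at once, usually on mini-batches of data, but the core loop has this shape.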

3. A list of artificial intelligence tools you can use today — for personal use (1/3)

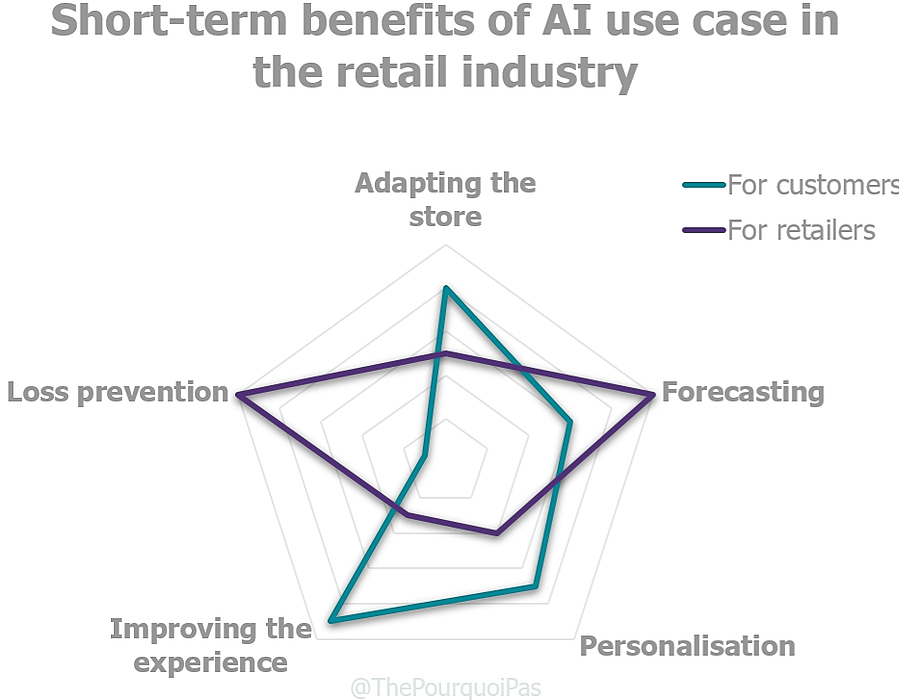

Artificial intelligence and the fourth industrial revolution have made considerable progress over the last couple of years. Most of the usable progress so far has been developed for industry and business purposes, as you’ll see in coming posts. Research institutes and dedicated, specialised companies are working toward the ultimate goal of AI (cracking artificial general intelligence), developing open platforms, and looking into the ethics that follow. There are also a good handful of companies working on AI products for consumers, which is what we’ll be kicking this series of posts off with.

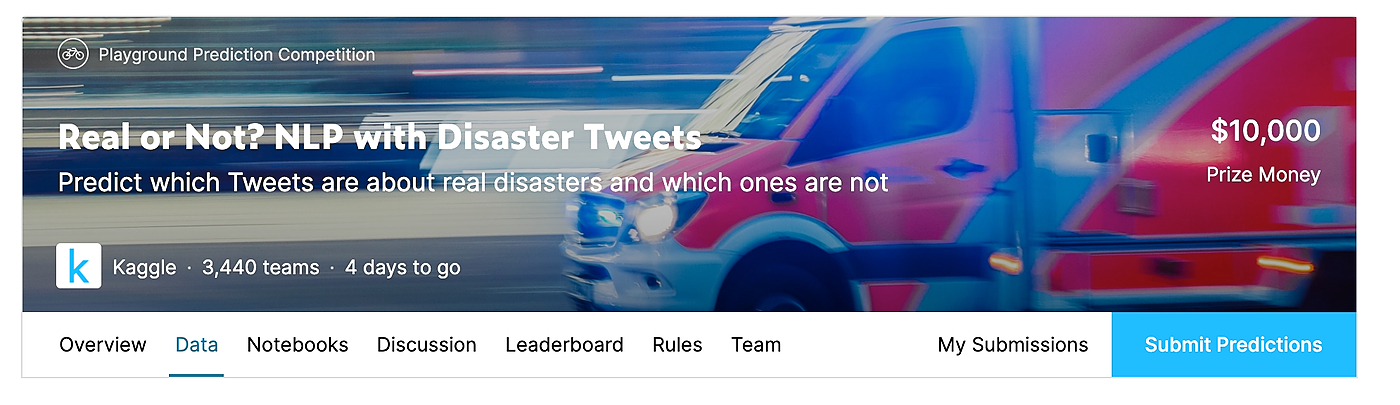

4. Top 5 Machine Learning Projects for Beginners

As a beginner, jumping into a new machine learning project can be overwhelming. The process starts with picking a data set and then studying it to find out which class or type of machine learning algorithm fits the data best.

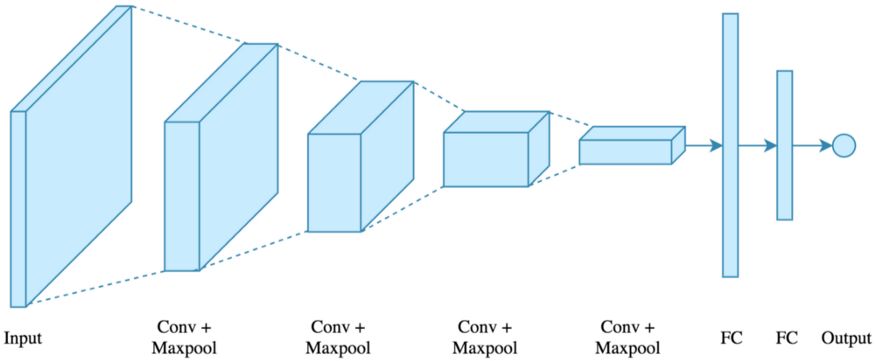

5. Deep Learning CNN’s in Tensorflow with GPUs

6. Search Algorithms in Artificial Intelligence

There can be one or many solutions to a given problem, depending on the scenario, just as there can be many ways to solve it. <strong><em>Think about how you approach a problem.</em></strong> Let’s say you need to do something straightforward, like multiplication. Clearly there is one correct solution, but there are many algorithms for multiplying, depending on the size of the input. Now take a more complicated problem, like playing a game (imagine your favorite game: chess, poker, Call of Duty, DOTA, anything). In most of these games, at a given point in time, you have multiple moves that you can make, and you choose the one that gives you the best possible outcome. In this scenario, there is no one correct solution, but there is a best possible solution, depending on what you want to achieve. There are also multiple ways to approach the problem, based on the strategy you choose for your gameplay.

7. Rational Agents for Artificial Intelligence

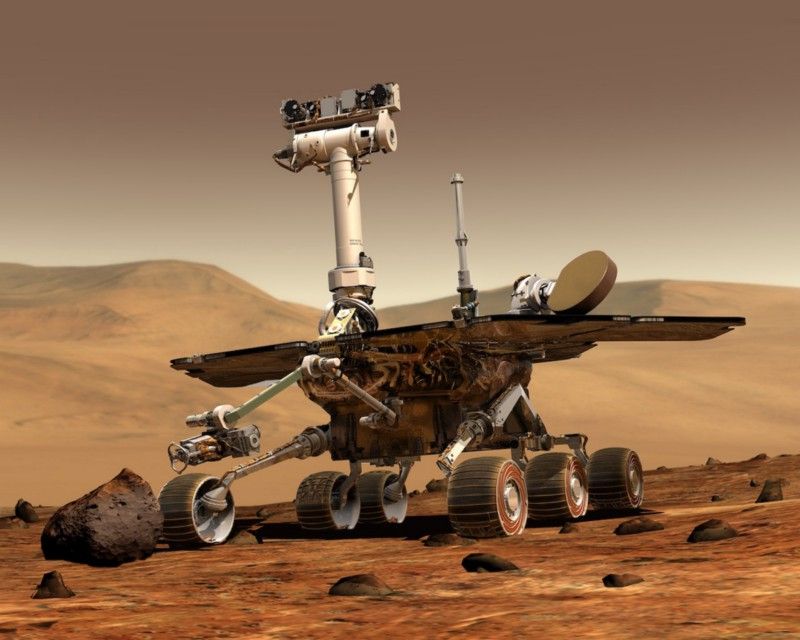

There are multiple approaches you might take to create artificial intelligence, based on what you hope to achieve with it and how you will measure its success. It ranges from extremely rare and complex systems, like self-driving cars and robotics, to things that are part of our daily lives, like face recognition, machine translation, and email classification.

8. Begin your Deep Learning project for free (free GPU processing , free storage , free easy upload…

In this story, I will go through how to begin working on deep learning without needing a powerful computer with the best GPU and without having to rent a virtual machine. I will cover how to get free processing on a GPU and connect it to free storage, how to add files directly to your online storage without having to download and then re-upload them, and how to unzip files online for free.

9. 🎁 Releasing “Supervisely Person” dataset for teaching machines to segment humans

Hello, Machine Learning community!

10. Deep Learning Chatbots: Everything You Need to Know

When you’re creating a chatbot, your goal should be to make one that requires minimal or no human interference. This can be achieved by two methods.

11. How to Initialize weights in a neural net so it performs well?

In a neural network, weights are usually initialized randomly, and this kind of initialization can take a significant number of iterations to converge to the lowest loss and reach the ideal weight matrix. The problem is that this kind of initialization is prone to vanishing or exploding gradients.
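
The effect of the weight scale can be seen in a small experiment. The sketch below pushes a random vector through a stack of ReLU layers and compares a naive standard deviation of 1.0 with He initialization (standard deviation `sqrt(2 / fan_in)`), a common remedy that may or may not be the one the linked post describes. The layer width, depth, and seed are assumptions for illustration, and forward activation size is used here as a proxy for what happens to gradients.

```python
import math
import random


def forward_scale(width, depth, weight_std, seed=0):
    """Return the RMS activation after `depth` ReLU layers of size `width`."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(width)]
    for _ in range(depth):
        # Fresh random weight matrix for each layer.
        w = [[rng.gauss(0.0, weight_std) for _ in range(width)] for _ in range(width)]
        # Linear layer followed by ReLU.
        x = [max(0.0, sum(wij * xj for wij, xj in zip(row, x))) for row in w]
    return math.sqrt(sum(v * v for v in x) / width)


width, depth = 64, 5
naive = forward_scale(width, depth, weight_std=1.0)
he = forward_scale(width, depth, weight_std=math.sqrt(2.0 / width))
print(naive, he)  # the naive scale blows up; the He scale stays far smaller
```

With the naive scale, activations grow by a large factor at every layer, which is the forward-pass counterpart of exploding gradients. Scaling the weights by the layer's fan-in keeps the signal in a usable range.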

12. 10 Machine Learning, Data Science, and Deep Learning Courses for Programmers in 2020

A curated list of courses to learn data science, machine learning, and deep learning fundamentals.

13. 6 Biggest Limitations of Artificial Intelligence Technology

While the release of GPT-3 marks a significant milestone in the development of AI, the path forward is still obscure. There are still certain limitations to the technology today. Here are six of the major limitations facing data scientists today.

14. How to Interpret A Contour Plot

Contour Plot

15. 5 Cool Python Project Ideas For Inspiration

In the past few years, Python has become the most famous programming language across the globe. Its stardom in the IT industry today is sky-high. And why not? Python has everything that makes it a deserving candidate for the tag of “Most Demanded Programming Language on the Planet.” So now it’s your time to do something innovative.

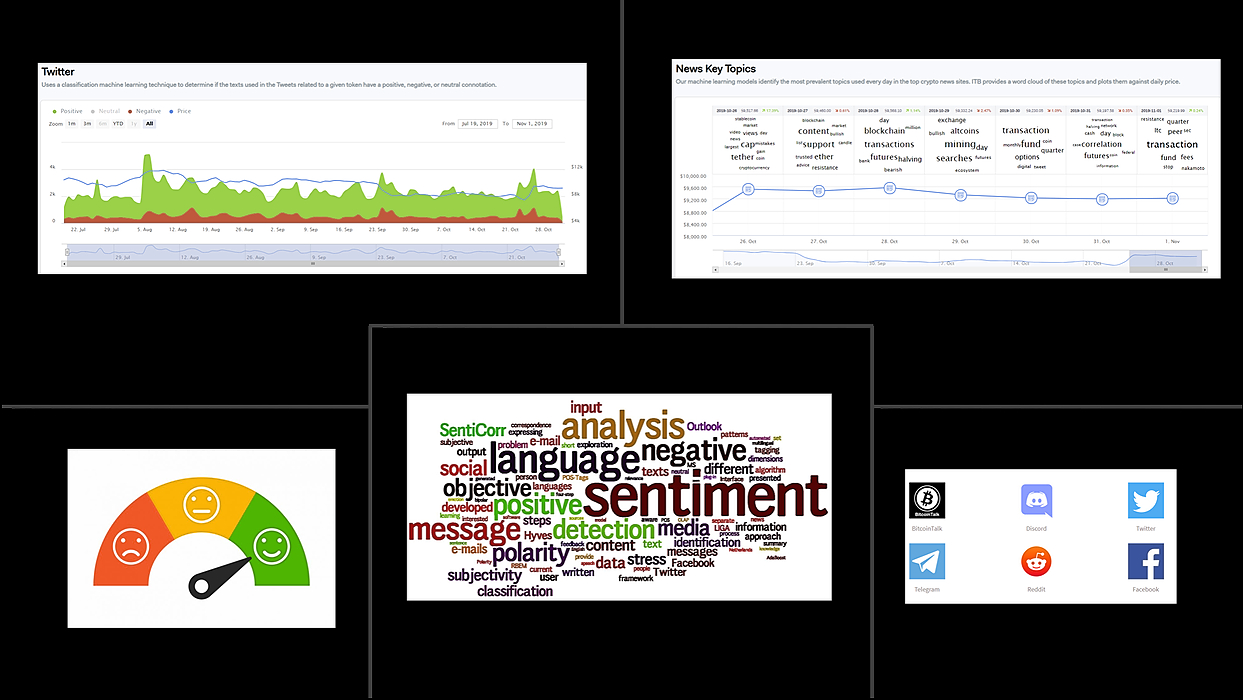

16. What I Learned Trying to Predict the Price of Cryptocurrencies

A few days ago, I presented a webinar about price predictions for cryptocurrencies. The webinar summarized some of the lessons we have learned building prediction models for crypto-assets in the IntoTheBlock platform. We have a lot of interesting IP and research coming out in this area, but I wanted to summarize some key ideas that can prove helpful if you are intrigued by the idea of predicting the price of crypto-assets.

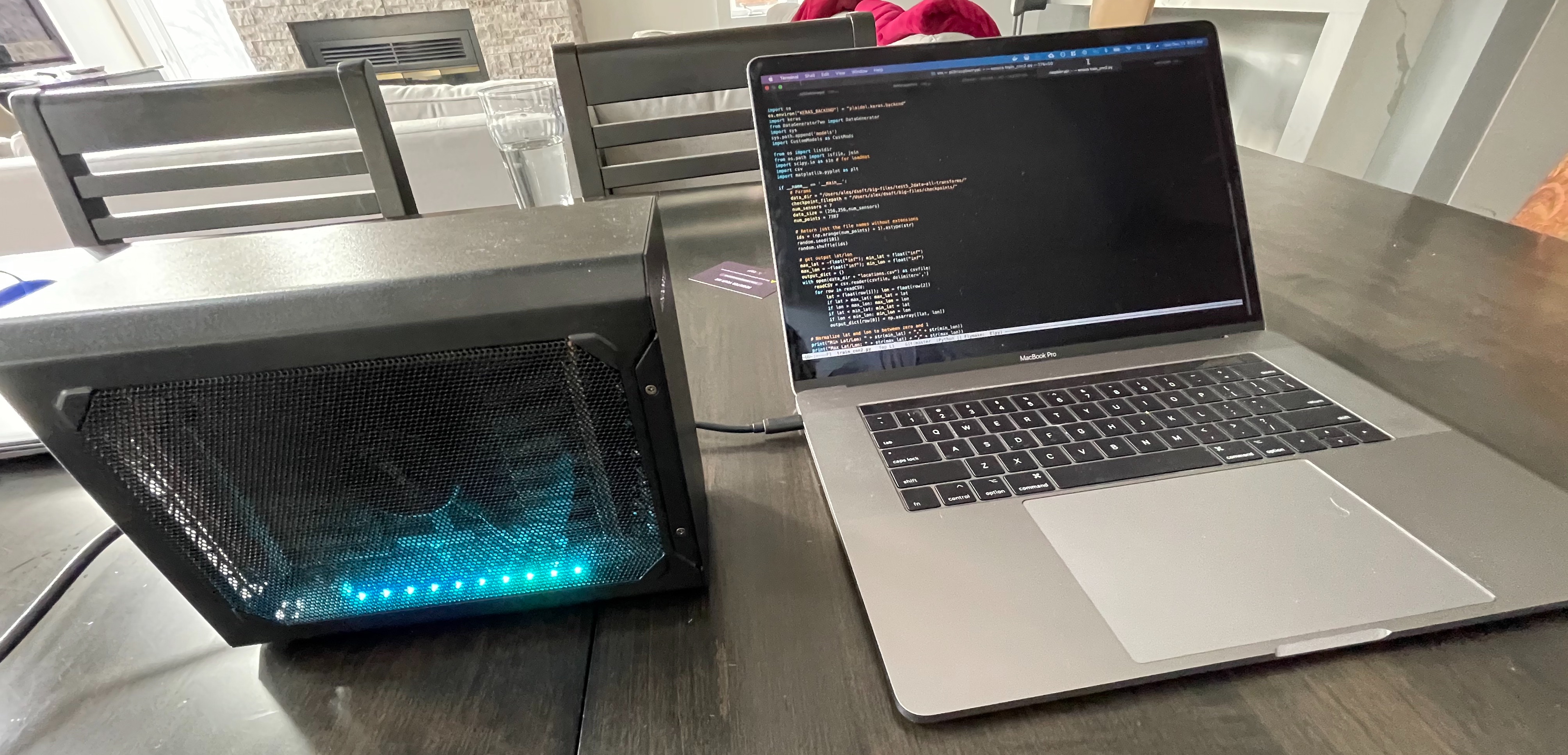

17. The Cheapskate’s Guide to Fine-Tuning LLaMA-2 and Running It on Your Laptop

Everyone is GPU-poor these days, so my mission is to fine-tune a LLaMA-2 model with only one GPU and run it on my laptop.

18. A list of artificial intelligence tools you can use today — for businesses (2/3)

19. Contextual Multi-Armed Bandit Problems in Reinforcement Learning

Explore context-based multi-armed bandit problems in RL. Learn to implement LinUCB, Decision Trees, and Neural Networks to solve them.

20. Difference between Artificial Intelligence, Machine learning, and deep learning

Technology has advanced over the years. Along the way, we have picked up terms like artificial intelligence, machine learning, and deep learning. We often confuse these terms and define them the same way, but that is imprecise, because they differ from one another. If you do not want to make this mistake again, read this article. Here we discuss the differences between these three terms: AI, ML, and deep learning.

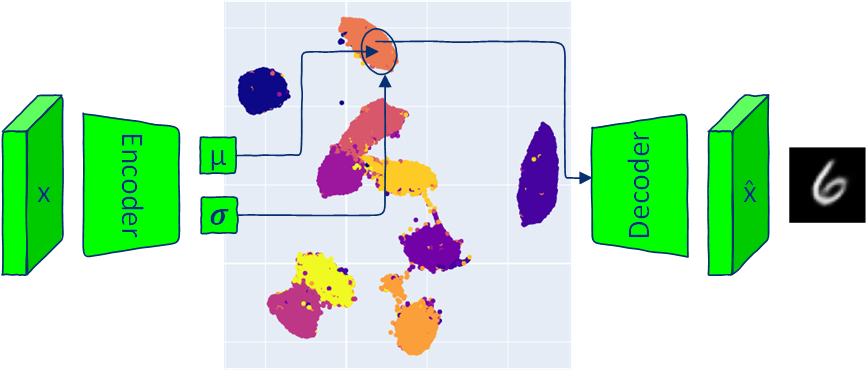

21. How to Sample From Latent Space With Variational Autoencoder

Explore the unique aspects of VAEs, which separate them from traditional autoencoders by enabling data generation through sampling from a distribution
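
As a rough illustration of sampling from a distribution in latent space, the sketch below uses the reparameterization trick, the standard way a VAE draws a latent vector from the mean and log-variance produced by its encoder. The encoder outputs shown are hypothetical values, and the linked post may structure its code differently.

```python
import math
import random


def sample_latent(mu, log_var, rng):
    """Draw z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


rng = random.Random(0)

# Hypothetical encoder outputs for one input, with a 3-dimensional latent space.
mu = [0.5, -1.0, 2.0]
log_var = [-2.0, -2.0, -2.0]
z = sample_latent(mu, log_var, rng)

# To generate new data, z is drawn from the prior N(0, I) instead.
z_prior = [rng.gauss(0.0, 1.0) for _ in mu]
print(z, z_prior)
```

In a full model, either vector would then be passed to the decoder to produce an output. A traditional autoencoder has no such sampling step, which is why it cannot generate new data in the same way.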

22. Top Resources for Learning About AI in Finance

Curated list of top resources to learn about AI in finance.

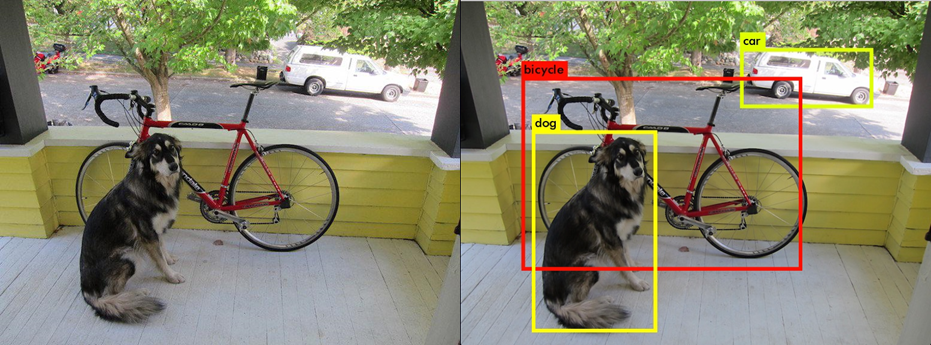

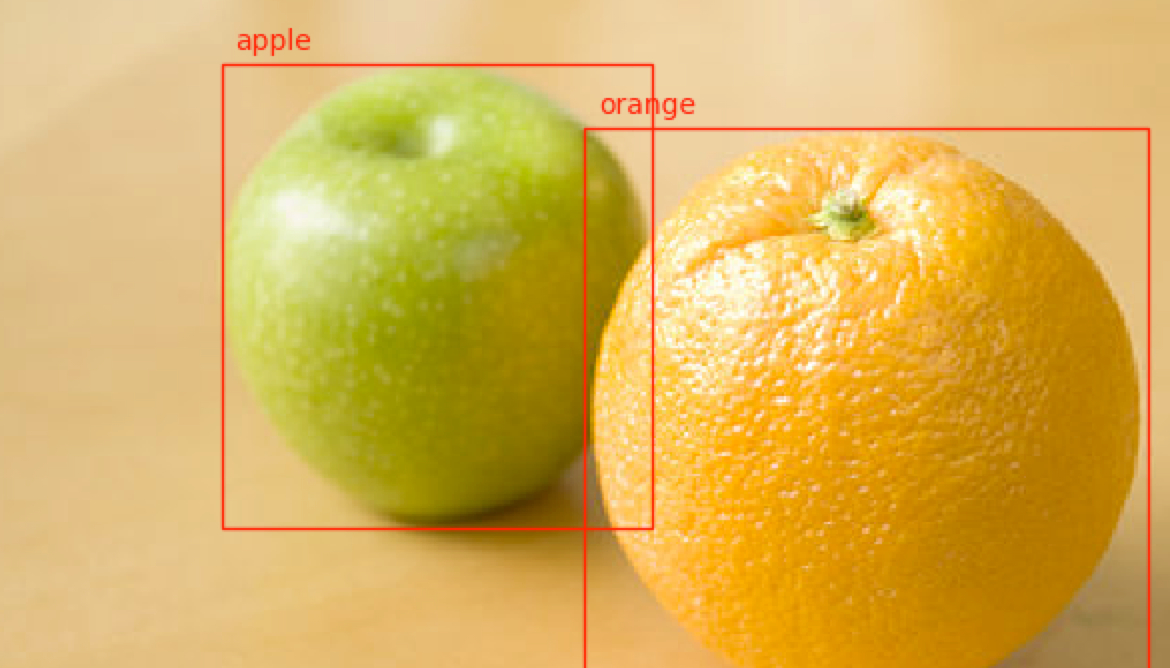

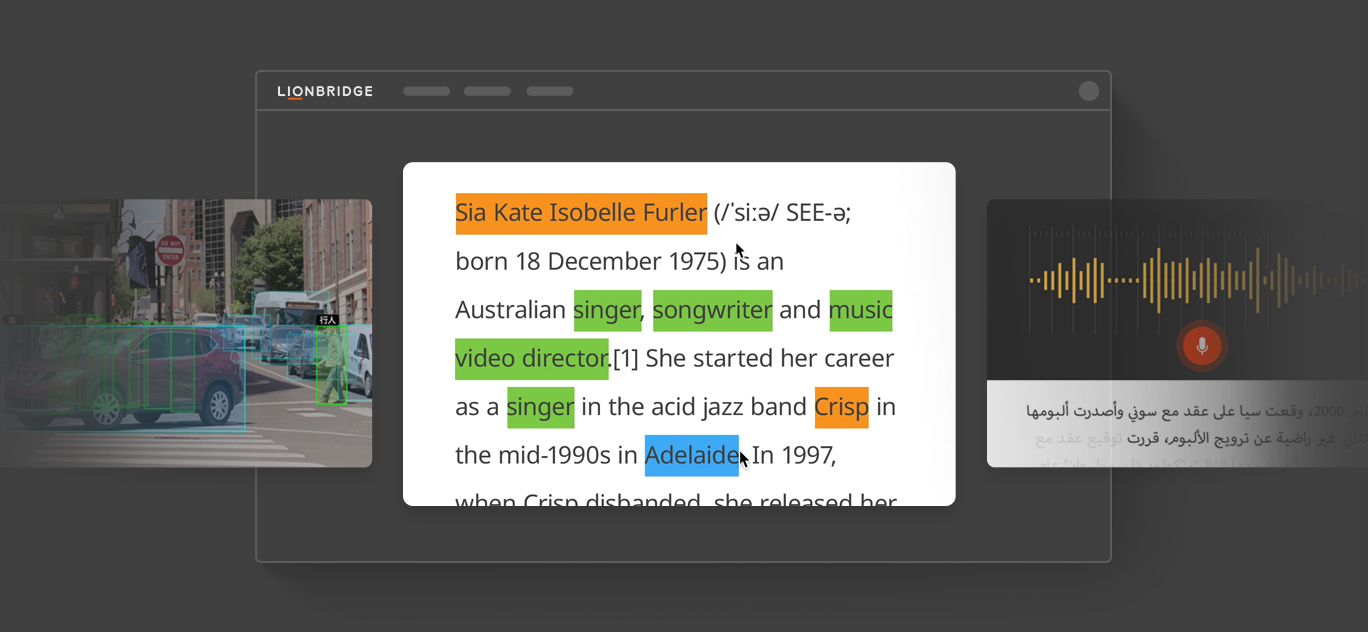

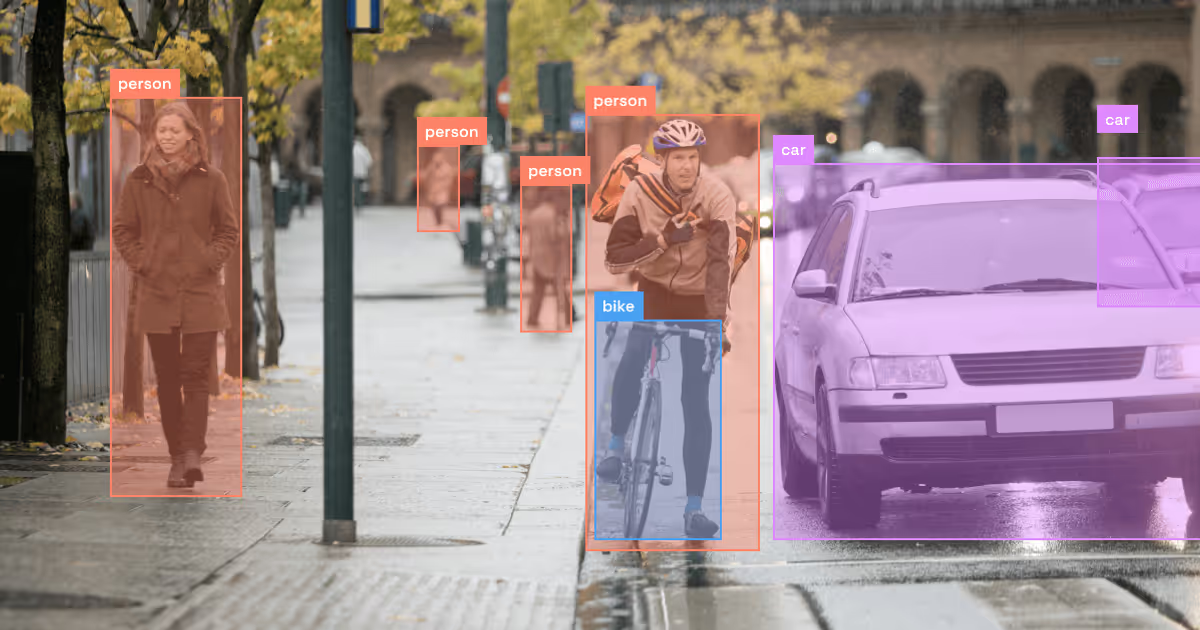

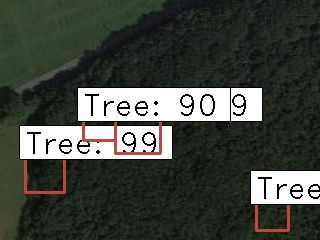

23. What's The Best Image Labeling Tool for Object Detection?

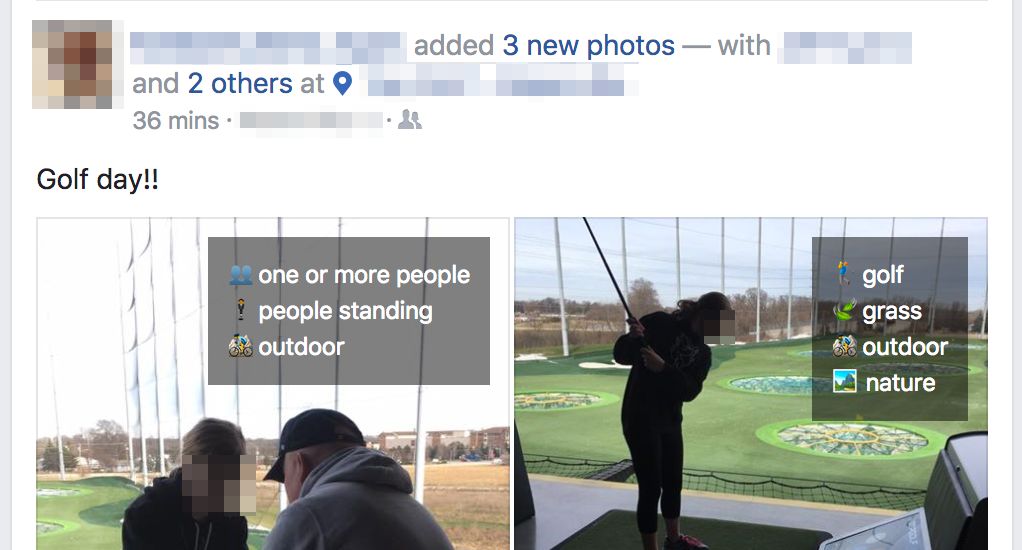

An image labeling or annotation tool is used to label images for bounding-box object detection and segmentation. Labeling is the process in which humans highlight parts of an image so that it becomes readable for machines. With the help of image labeling tools, the objects in an image can be labeled for a specific purpose. The process of object labeling makes it easy for people to understand what is in the image. The labeling tool helps people mark the items in an image. There are several image labeling tools for object detection, and some of them use varied annotation techniques, such as semantic, bounding box, key-point, cuboid, and many more. In this article, we will talk about image labeling and the best image labeling tools.

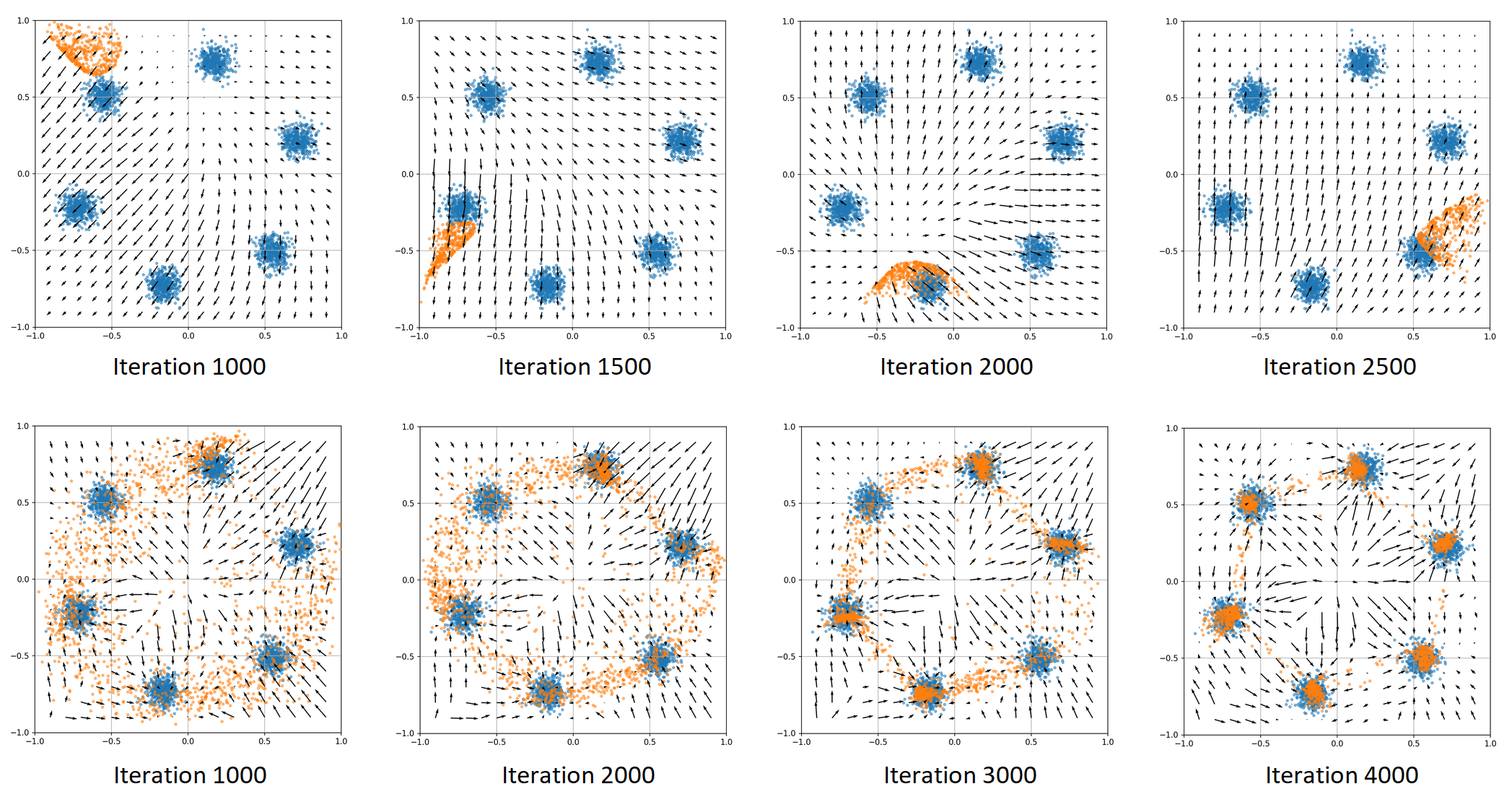

24. Implementing different variants of Gradient Descent Optimization Algorithm in Python using Numpy

Learn how TensorFlow or PyTorch implement optimization algorithms by using NumPy, and create beautiful animations using Matplotlib.

25. Deep Learning & Artificial Neural Networks: Solving The Black Box Mystery

I often hear people describe neural networks as a black box whose workings and meaning you can’t understand. Actually, many people can’t understand what they mean by that. If you understand how back-propagation works, then how is it a black box?

26. Why I Dropped Out of College in 2020 to Design My Own ML and AI Degree

Most people would think I was crazy for starting 2020 as a college dropout (sorry mom!), but I wish I had made this decision sooner.

27. Driver Drowsiness Detection System: A Python Project with Source Code

Drowsiness detection is a safety technology that can prevent accidents caused by drivers who fall asleep while driving.

28. Revolutionizing Image Analysis: YOLOv3 and PolyRNN++ Integration for Image Annotation

29. Karate Club a Python library for graph representation learning

Karate Club is an unsupervised machine learning extension library for the NetworkX Python package. See the documentation here.

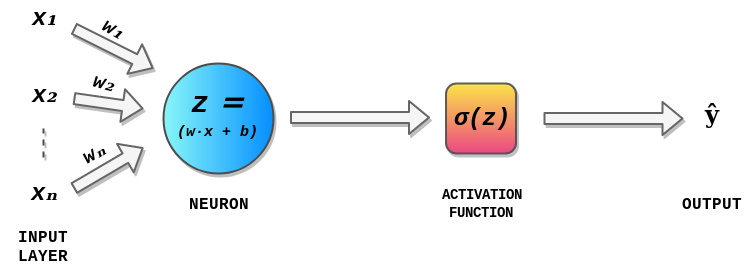

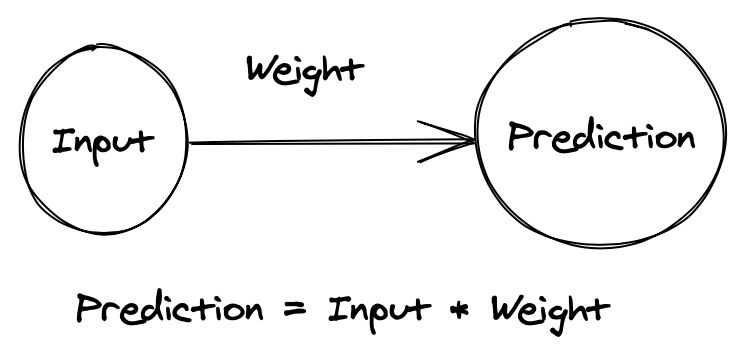

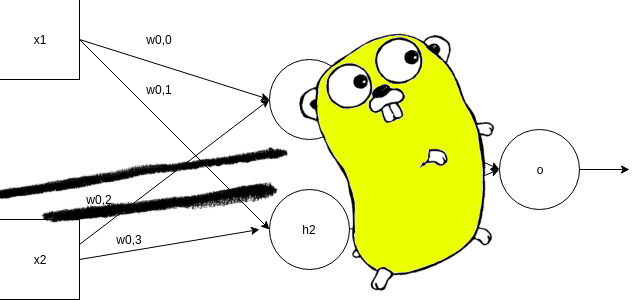

30. Introduction To Maths Behind Neural Networks

Today, with open-source machine learning libraries such as TensorFlow, Keras, or PyTorch, we can create a neural network, even one with high structural complexity, with just a few lines of code. That said, the math behind neural networks is still a mystery to some of us, and knowing it can help us understand what’s happening inside a neural network. It is also helpful for architecture selection, fine-tuning of deep learning models, and hyperparameter tuning and optimization.

31. Is GPU Really Necessary for Data Science Work?

A big question for developers of machine learning and deep learning apps is whether or not to use a computer with a GPU. After all, GPUs are still very expensive. To give you an idea, a typical GPU for AI processing in Brazil costs between US$1,000.00 and US$7,000.00 (or more).

32. 20 Best Machine Learning Resources for Data Scientists

Whether you’re a beginner looking for introductory articles or an intermediate looking for datasets or papers about new AI models, this list of machine learning resources has something for everyone interested in or working in data science. In this article, we will introduce guides, papers, tools and datasets for both computer vision and natural language processing.

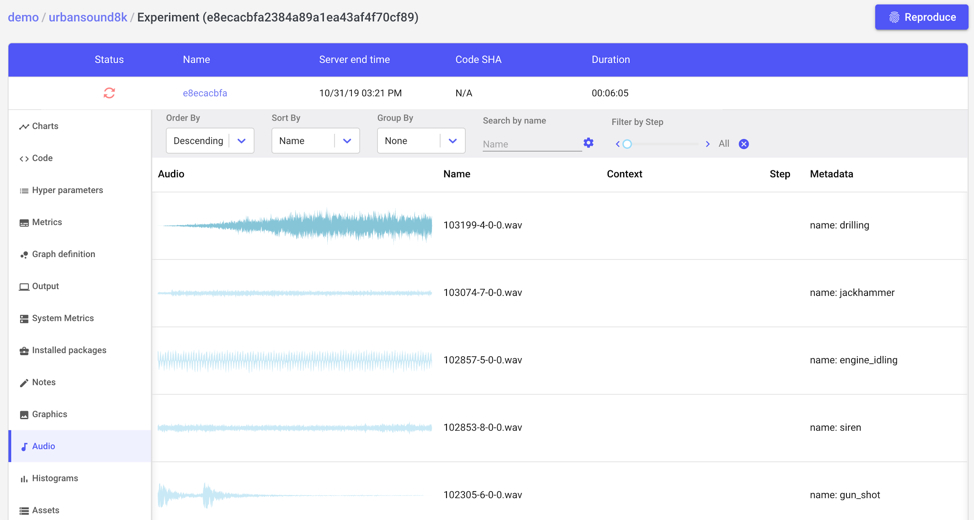

33. How To Apply Machine Learning And Deep Learning Methods to Audio Analysis

To view the code, training visualizations, and more information about the Python example at the end of this post, visit the Comet project page.

34. Creative AI ‘Shakes’ the Core of Humanity and Requires a Broader Discussion About Ethics

The boundary between machines and humans used to be clear. But now the machine has become creative! Can self-expression still be at the core of our humanity?

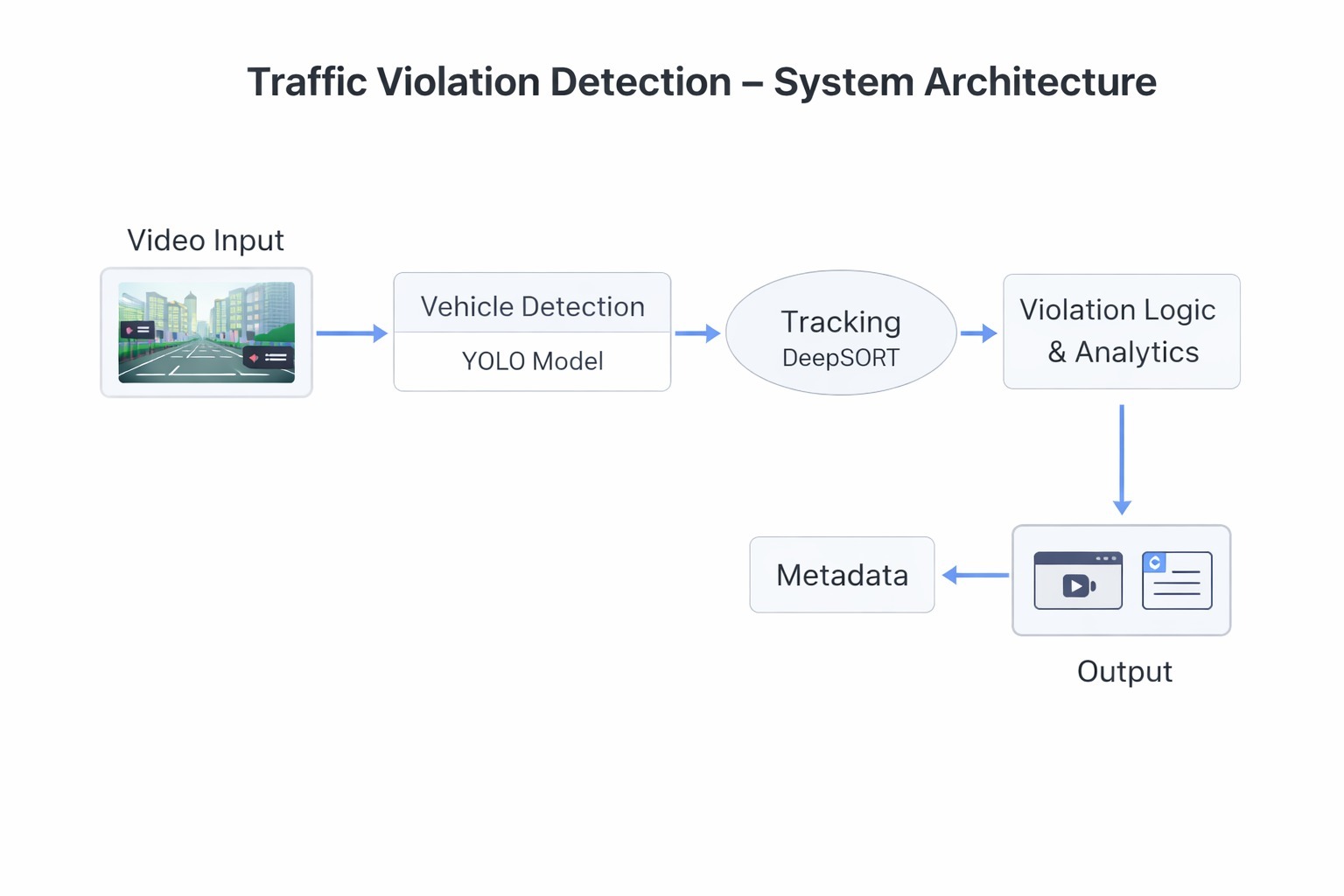

35. How I Built an AI to Detect License Plate Number Registration (ANPR)

This Car Mod Is A Privacy Nightmare! (AI Number Plate Reader with Python, Tensorflow, OpenCV, OpenALPR)

36. Build an Abstractive Text Summarizer in 94 Lines of Tensorflow !! (Tutorial 6)

This tutorial is the sixth in a series that helps you build an abstractive text summarizer using TensorFlow. Today we build an abstractive text summarizer in TensorFlow in an optimized way.

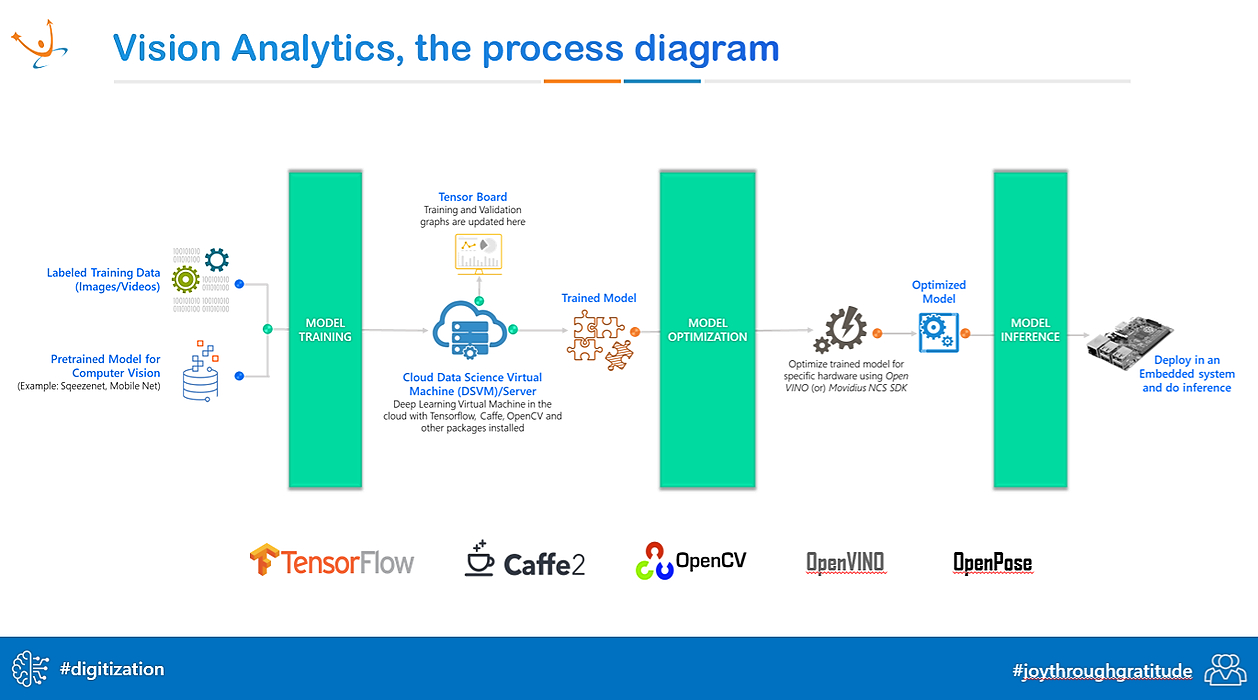

37. Deploy Computer Vision Models with Triton Inference Server

There are a lot of Machine Learning courses, and we are pretty good at modeling and improving our accuracy or other metrics.

38. Deploying Deep Learning Models with Model Server

Learn how to deploy deep learning models with Model Server.

39. Build a Custom-Trained Object Detection Model With 5 Lines of Code

These days, machine learning and computer vision are all the rage. We’ve all seen the news about self-driving cars and facial recognition and probably imagined how cool it’d be to build our own computer vision models. However, it’s not always easy to break into the field, especially without a strong math background. Libraries like PyTorch and TensorFlow can be tedious to learn if all you want to do is experiment with something small.

40. This AI Creates Videos From a Couple of Images

Researchers took a simple collection of photos and transformed it into a 3-dimensional model.

41. How Search Engines Actually Answer Your Questions

Modern search Q&A explained: how knowledge graphs, DeepQA, and MRC turn messy web pages into direct, trustworthy answers.

42. The Hidden Problem With Group Rewards in Multi-Agent AI

Group rewards are breaking your multi-agent RL training. Decoupled normalization keeps coordination intact while stopping gradient collapse.

43. The Revolutionary Potential of 1-Bit Language Models (LLMs)

The Revolutionary Potential of 1-Bit Language Models

44. How Does "Hey Siri!" Work Without Your iPhone Listening To You At All Times?

If our phones can detect and interpret the “Hey Siri!” command at any time, are they recording our daily conversations too?

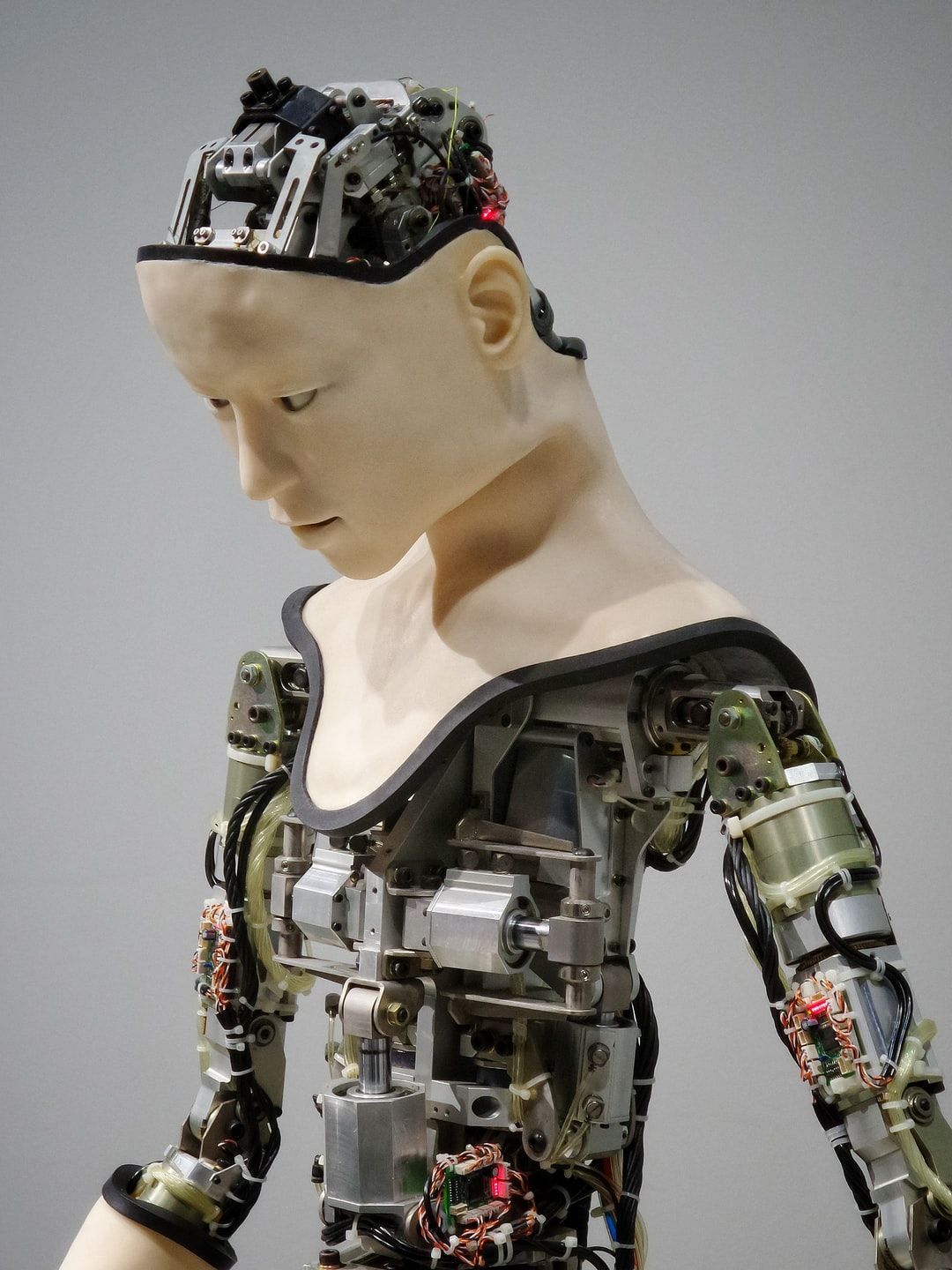

45. So you think you know what is Artificial Intelligence?

When you think of Artificial Intelligence, the first thing that comes to mind is either Robots or Machines with Brains or Matrix or Terminator or Ex Machina or any of the other amazing concepts having machines that can think. This is an appropriate but vague understanding of Artificial Intelligence. In this article we’ll see what A.I. really is and how the definition has changed in the past.

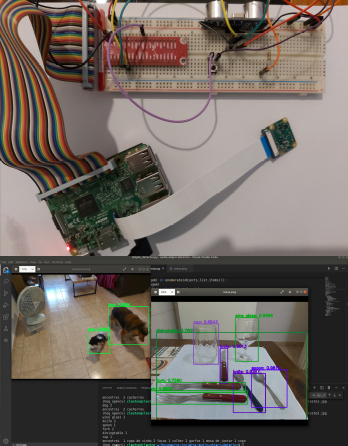

46. Introductory Guide To Real-time Object Detection with Python

Researchers have been studying the possibilities of giving machines the ability to distinguish and identify objects through vision for years now. This particular domain, called Computer Vision or CV, has a wide range of modern-day applications.

47. An Introduction to “Liquid” Neural Networks

Liquid neural networks are capable of adapting their underlying behavior during the training phase.

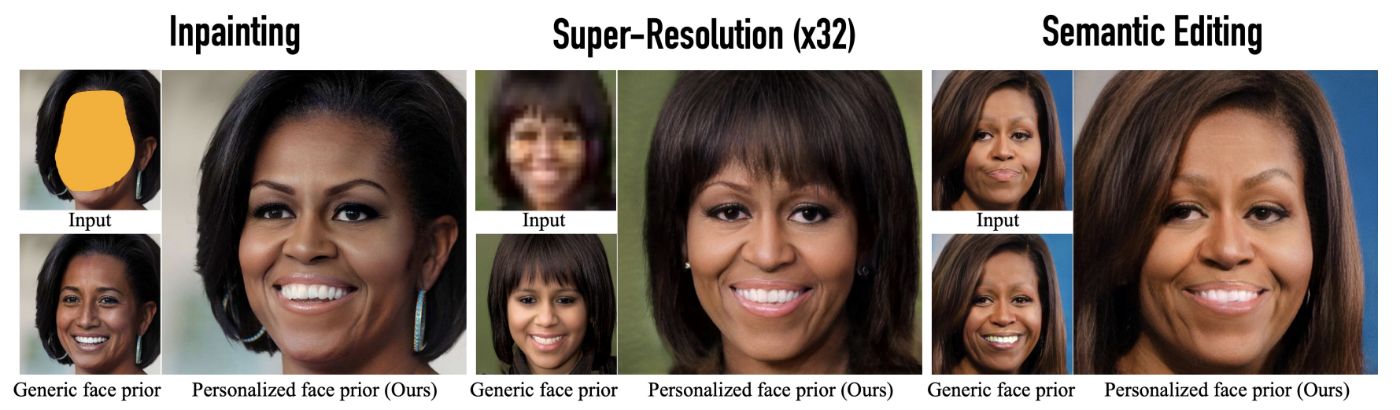

48. The World's Most Powerful Deepfake Model was Just Released by Google

This AI can reconstruct, enhance and edit your images!

49. Top 10 Data Science Project Ideas for 2020

As an aspiring data scientist, the best way to increase your skill level is by practicing. And what better way is there to practice your technical skills than building projects?

50. Going From Not Being Able To Code To Deep Learning Hero

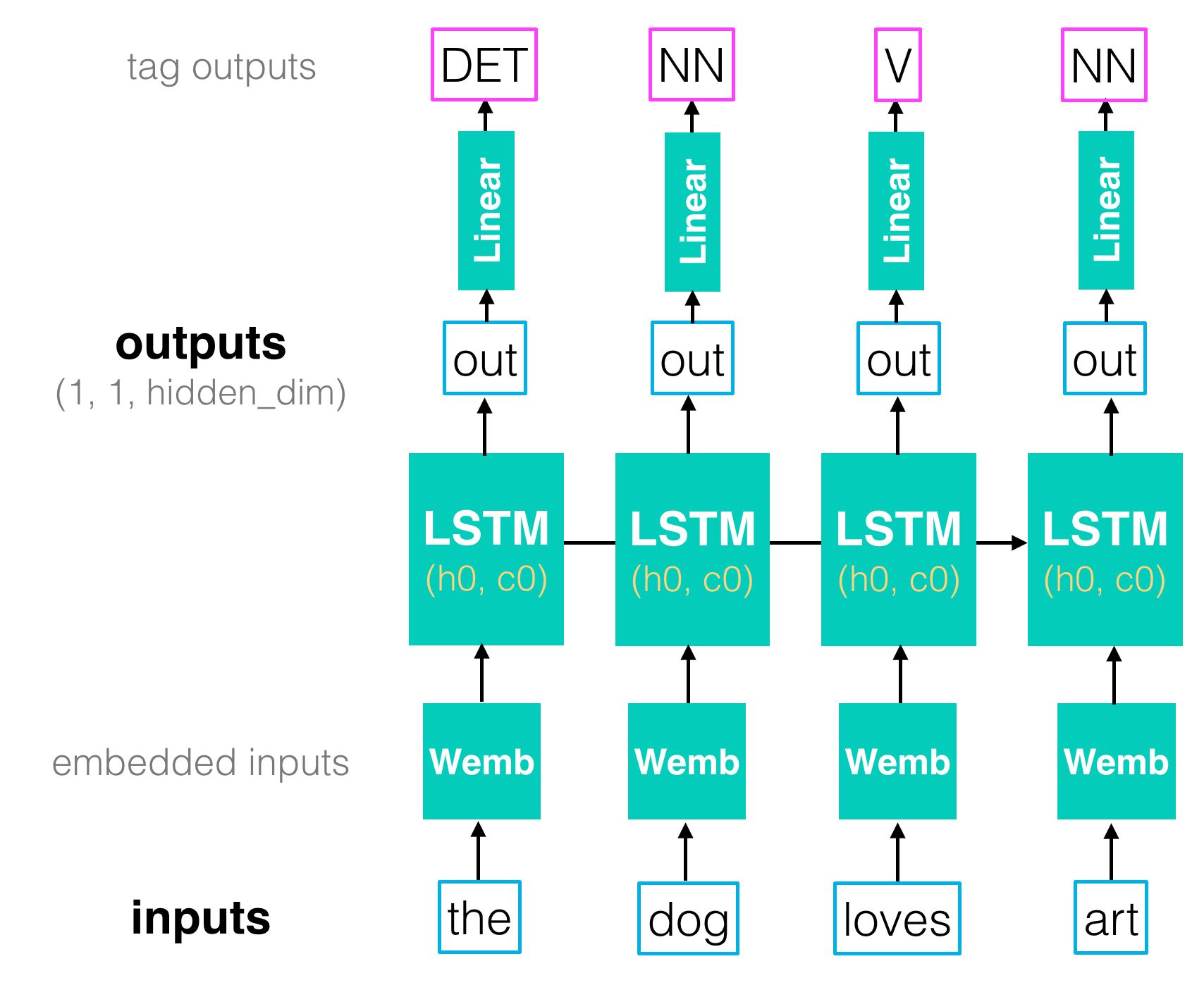

51. What is an RNN (Recurrent Neural Network) in Deep Learning?

RNN is one of the popular neural networks that is commonly used to solve natural language processing tasks.

52. Accelerating Neural Networks: The Power of Quantization

A hands-on guide to neural network quantization: theory, PyTorch implementation, and practical tips for optimizing models for edge devices
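
To show the basic idea behind quantization, here is a minimal sketch of symmetric int8 quantization of a list of weights in plain Python. The scheme, the function names, and the sample weights are assumptions for illustration. The linked guide works in PyTorch and may use a different method.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


# Hypothetical weights for illustration.
weights = [0.12, -0.5, 0.33, 1.27, -1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, scale, restored)
```

Each weight is stored as one small integer instead of a 32-bit float, at the cost of a rounding error of at most half the scale per value. That size-for-precision trade is what makes quantized models practical on edge devices.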

53. Demystifying Different Variants of Gradient Descent Optimization Algorithm

54. An Intro to Prompting and Prompt Engineering

Prompting and prompt engineering are easily the most in-demand skills of 2023.

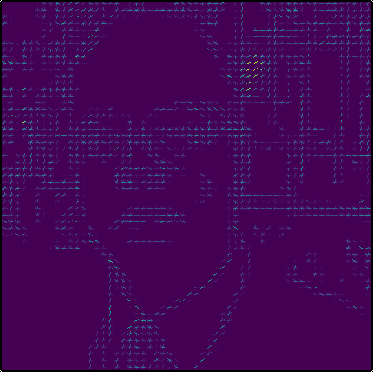

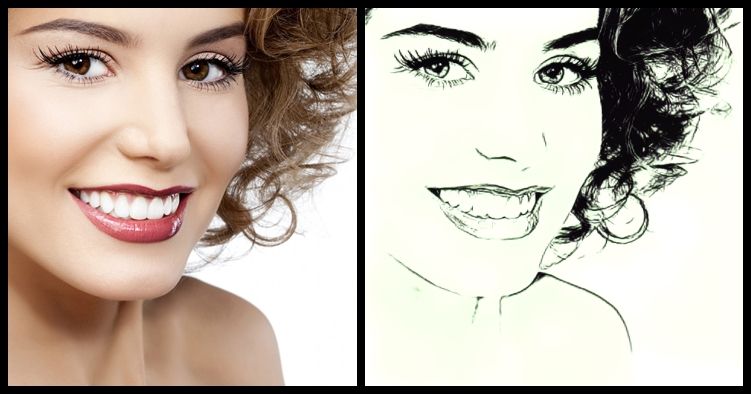

55. Facial Recognition Comparison with Java and C ++ using HOG

HOG (Histogram of Oriented Gradients) is an image descriptor capable of summarizing the main characteristics of an image, such as a face, allowing comparison with similar images.

56. Entendiendo PyTorch: las bases de las bases para hacer inteligencia artificial (Understanding PyTorch: the basics of the basics for doing artificial intelligence)

57. Neural Network Layers: All You Need Is Inside Comprehensive Overview

Explore an in-depth overview of various neural network layers, their history, mathematical formulations, and code implementations. The publication covers common

58. Image Annotation Types For Computer Vision And Its Use Cases

There are many types of image annotations for computer vision out there, and each one of these annotation techniques has different applications.

59. Manipulación de tensores en PyTorch. ¡El primer paso para el deep learning! (Tensor manipulation in PyTorch: the first step toward deep learning!)

*Note: Contact Omar Espejel (omar@tsc.ai) with any comments. Any errors are the author's responsibility.

60. Data Testing for Machine Learning Pipelines Using Deepchecks, DagsHub, and GitHub Actions

A complete setup of an ML project using version control (including DVC for data), experiment tracking, data checks with Deepchecks, and GitHub Actions.

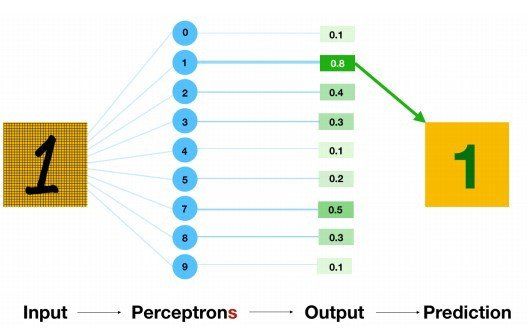

61. How to Perform MNIST Digit Recognition with a Multi-layer Neural Network

The human visual system is a marvel. People can readily recognise digits, but the task is not as simple as it looks. The human brain has billions of neurons and trillions of connections between them, which makes this exceptionally complex task of image processing feel effortless.

62. ChatSQL: Enabling ChatGPT to Generate SQL Queries from Plain Text

ChatGPT, developed by OpenAI, was released in November 2022. It has led to revolutionary developments in many areas. One of these areas is the creation of

63. Increase The Size of Your Datasets Through Data Augmentation

Access to training data is one of the largest blockers for many machine learning projects. Luckily, for many projects, we can use data augmentation to increase the size of our training data many times over.
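
As a small illustration, the sketch below augments a tiny image, stored as a nested list of pixel values, by flipping it. The helper names and the choice of flips are assumptions for illustration. Real pipelines usually rely on a library and a wider set of transforms.

```python
def horizontal_flip(image):
    """Mirror each row left to right."""
    return [row[::-1] for row in image]


def vertical_flip(image):
    """Reverse the order of the rows, copying each row."""
    return [row[:] for row in image[::-1]]


def augment(image):
    """Return the original image plus three flipped variants."""
    return [
        image,
        horizontal_flip(image),
        vertical_flip(image),
        horizontal_flip(vertical_flip(image)),
    ]


# A hypothetical 2x2 grayscale image.
image = [[1, 2], [3, 4]]
variants = augment(image)
print(len(variants), variants)
```

One labelled image becomes four training examples here. Flips only help when they preserve the label: a mirrored cat is still a cat, but a mirrored digit may no longer be a valid digit.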

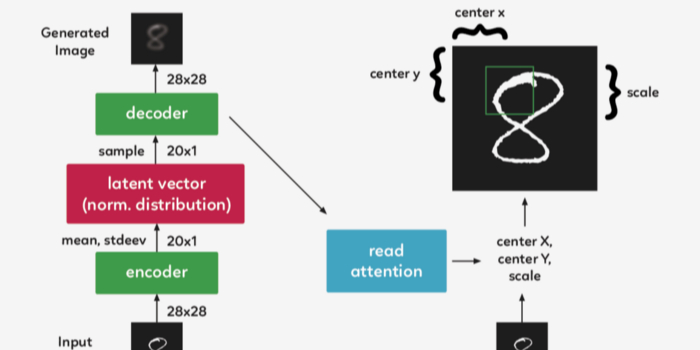

64. Understanding A Recurrent Neural Network For Image Generation

The purpose of this post is to implement and understand Google Deepmind’s paper DRAW: A Recurrent Neural Network For Image Generation. The code is based on the work of Eric Jang, who in his original code was able to achieve the implementation in only 158 lines of Python code.

65. From 140GB to 4GB: The Art of LLM Quantization

Quantization shrinks 140GB LLMs to under 4GB, bringing enterprise AI to consumer GPUs. A deep dive into GPTQ, AWQ, GGUF, and beyond.

66. Model Quantization in Deep Neural Networks

To get your AI models to work on laptops, mobiles and tiny devices, quantization is essential.
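To make the idea concrete, here is a minimal sketch of affine 8-bit quantization, assuming a single scale and zero point for the whole list of weights. The function names are hypothetical and the scheme is a generic textbook one, not necessarily the method the linked article describes.

```python
def quantize_int8(values):
    """Map floats to int8 codes using one scale and one zero point."""
    lo, hi = min(values), max(values)
    lo, hi = min(lo, 0.0), max(hi, 0.0)  # keep 0.0 exactly representable
    scale = (hi - lo) / 255 or 1.0       # fall back to 1.0 for all-zero input
    zero_point = round(-128 - lo / scale)
    codes = [
        max(-128, min(127, round(v / scale) + zero_point))  # clamp to int8 range
        for v in values
    ]
    return codes, scale, zero_point


def dequantize(codes, scale, zero_point):
    """Recover approximate floats from int8 codes."""
    return [(c - zero_point) * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [-1.2, -0.3, 0.0, 0.45, 2.1]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize(codes, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now takes one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most about half the scale per value.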

67. Gain State-Of-The-Art Results on Tabular Data with Deep Learning & Embedding Layers [A How To Guide]

Tree-based models like Random Forest and XGBoost have become very popular for solving tabular (structured) data problems and have gained a lot of traction in Kaggle competitions lately, for good reasons. However, in this article, I want to introduce a different approach from fast.ai’s Tabular module leveraging.

68. Understanding The Importance Of Data For Machine Learning

Data is the most important and must-have food for machine learning. It can be any fact, text, symbols, images, videos, etc., but in unprocessed form. Let us see

69. Character AI in 2025: A Practical Guide and Comparison With ChatGPT, Gemini, & More

Character AI lets you build and chat with AI personas—but how useful is it really? This guide covers its features, flaws, and how it stacks up against tools.

70. Text Classification With Zero Shot Learning

Zero-shot text classification using transformers and TARSClassifier.

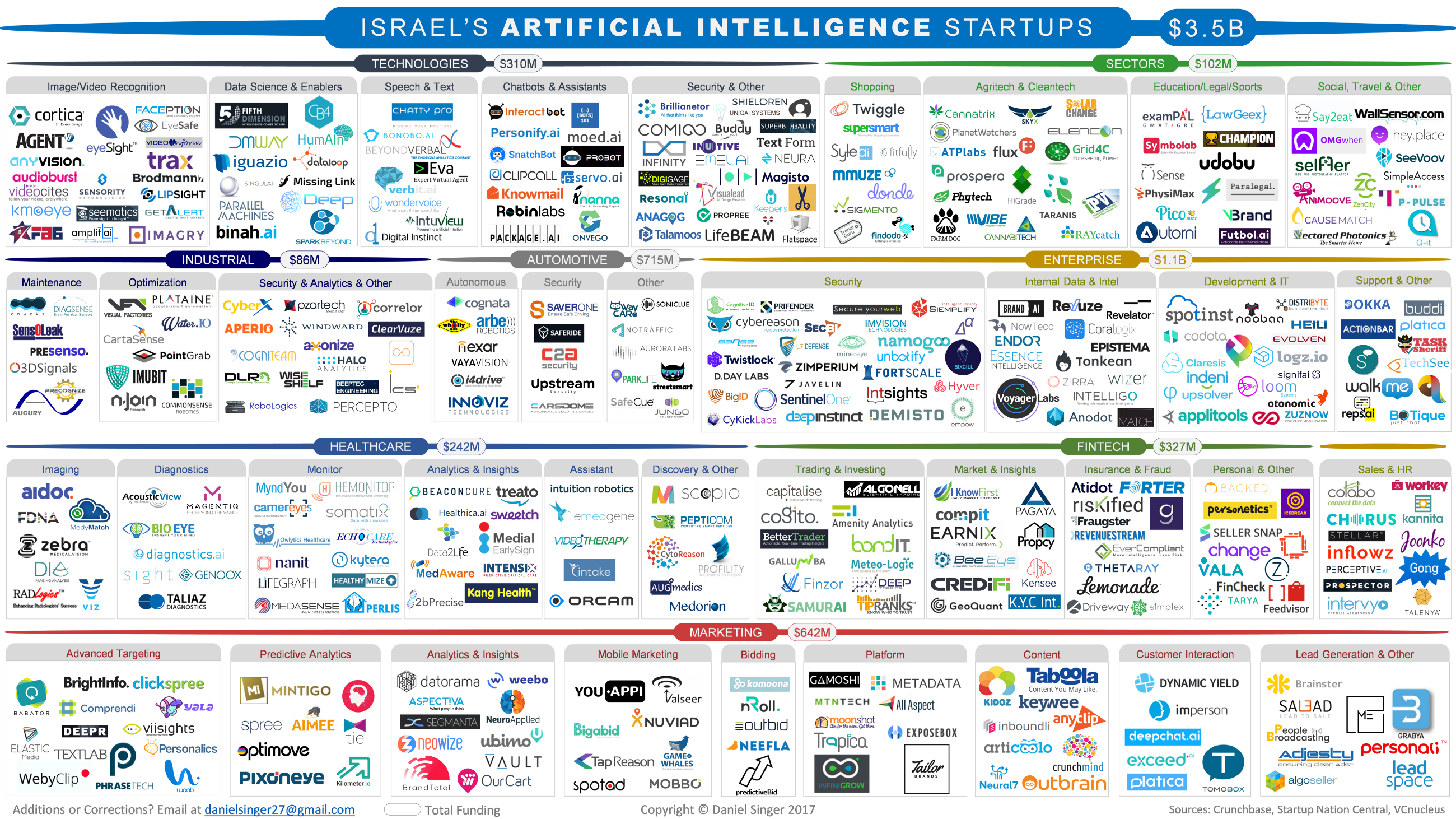

71. Israel’s Artificial Intelligence Startups

The artificial intelligence industry is expected to be worth <a href="https://www.tractica.com/newsroom/press-releases/artificial-intelligence-software-revenue-to-reach-59-8-billion-worldwide-by-2025/" target="_blank">$59.8 billion by 2025</a>, and the term AI has become ubiquitous worldwide; the frenzy of many tech enthusiasts, or the topic of discussion at a dinner table. But the hype actually lives up to its name. AI startups are flush with VC cash and even key corporate leaders are actively utilizing the technology to add value and gain a competitive edge.

72. How to Structure a PyTorch ML Project With Google Colab and TensorBoard

Let’s build a Fashion-MNIST CNN, PyTorch style. This is a line-by-line guide on how to structure a PyTorch ML project from scratch using Google Colab and TensorBoard.

73. The Best Slack Groups for Data Scientists to Join

The online data science community is supportive and collaborative. One of the ways you can join the community is to find machine learning and AI Slack groups.

74. No-Code Machine Learning inside Google Sheets

75. Data Set and Data Augmentation for Face Detection and Recognition

When it comes to building an Artificially Intelligent (AI) application, your approach must be data first, not application first.

76. Flax: Google’s Open Source Approach To Flexibility In Machine Learning

When thinking of machine learning, the first frameworks that come to mind are TensorFlow and PyTorch, which are currently the state-of-the-art frameworks if you want to work with deep neural networks. Technology is changing rapidly and more flexibility is needed, so Google researchers are developing a new high-performance framework for the open source community: Flax.

77. Can Graph Neural Networks Solve Real-World Problems?

In this article, we will learn about GNNs, their structure, and their applications.

78. Artificial Intelligence, Machine Learning, and Human Beings

In a conversation with HackerNoon CEO, David Smooke, he identified artificial intelligence as an area of technology in which he anticipates vast growth. He pointed out, somewhat cheekily, that it seems like AI could be further along in figuring out how to alleviate some of our most basic electronic tasks—coordinating and scheduling meetings, for instance. This got me reflecting on the state of artificial intelligence. And mostly why my targeted ads suck so much...

79. How I Designed My Own Machine Learning and Artificial Intelligence Degree

After noticing my programming courses in college were outdated, I began this year by dropping out of college to teach myself machine learning and artificial intelligence using online resources. With no experience in tech, no previous degrees, here is the degree I designed in Machine Learning and Artificial Intelligence from beginning to end to get me to my goal — to become a well-rounded machine learning and AI engineer.

80. Why Quadratic Cost Functions Are Ineffective in Neural Network Training

Explore why quadratic cost functions hinder neural network training and how cross-entropy improves learning efficiency in deep learning models.
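The effect can be seen on a single sigmoid neuron. The sketch below compares the weight gradient under a quadratic cost with the gradient under cross-entropy when the neuron is saturated and badly wrong. The setup (one input, one weight, the particular starting values) is an assumption chosen for illustration, not taken from the linked article.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def grad_quadratic(w, b, x, y):
    """dC/dw for C = (a - y)^2 / 2, where a = sigmoid(w * x + b)."""
    a = sigmoid(w * x + b)
    return (a - y) * a * (1.0 - a) * x  # includes the sigmoid derivative a * (1 - a)


def grad_cross_entropy(w, b, x, y):
    """dC/dw for C = -[y ln a + (1 - y) ln(1 - a)]."""
    a = sigmoid(w * x + b)
    return (a - y) * x  # the sigmoid derivative cancels out


# A saturated neuron: the target is 0 but the output is very close to 1.
w, b, x, y = 5.0, 5.0, 1.0, 0.0
gq = grad_quadratic(w, b, x, y)
gc = grad_cross_entropy(w, b, x, y)
```

With the quadratic cost, the gradient carries the factor a(1 - a), which is nearly zero when the output saturates, so learning stalls exactly when the error is largest. With cross-entropy that factor cancels and the gradient stays proportional to the error.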

81. How AI Is Transforming Your Smartphone

The tech industry and the world are relying on artificial intelligence to solve big problems such as cybersecurity, healthcare and sustainability.

82. Why It’s Very Difficult to Create AI-Based Slow Motion

Over the last few years a number of open source machine learning projects have emerged that are capable of raising the frame rate of source video to 60 frames per second and beyond, producing a smoothed, 'hyper-real' look.

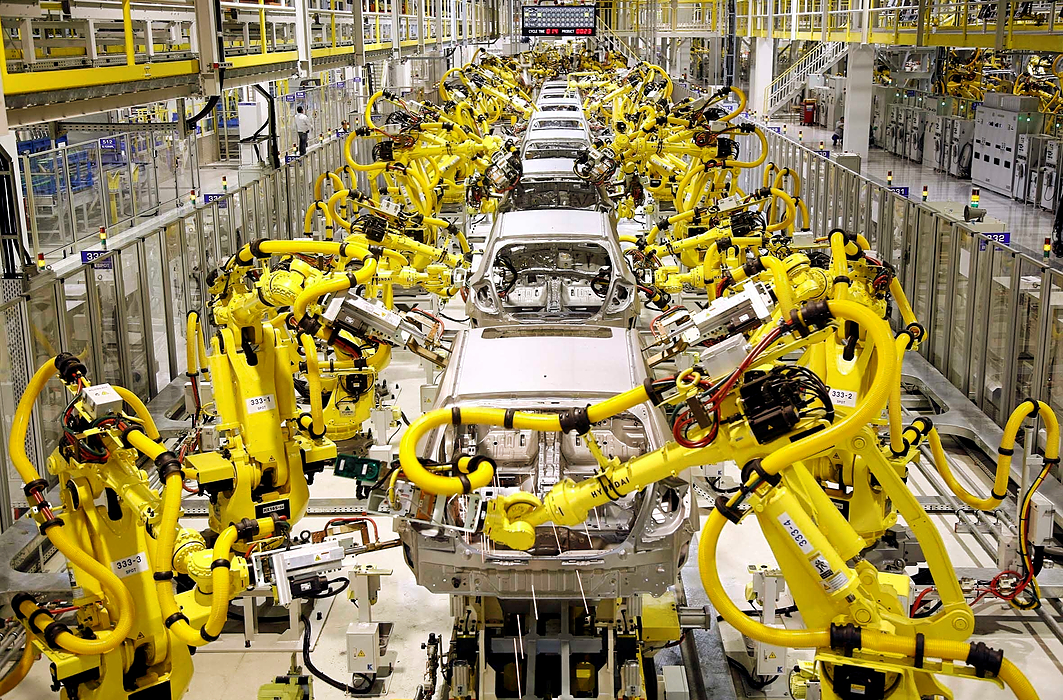

83. Five Successful AI and ML Use Cases In Manufacturing

How can manufacturers put artificial intelligence to work in the industry? In this article, you will find five possible applications of machine learning and deep learning to industrial process optimization.

84. My Time at NUS, Singapore

Singapore is home to some of the best schools in the field of Computer Science, specifically Artificial Intelligence. The cutting edge research going on there is unparalleled. Colleges like Nanyang Technological University (NTU) and National University of Singapore (NUS) have a great reputation all over the world for their CS programs.

85. Summarizing Most Popular Text-to-Image Synthesis Methods With Python

Comparative Study of Different Adversarial Text to Image Methods

86. Where to Learn Machine and Deep Learning for Free

87. Image to Image Translation and Segmentation Tutorial

In this article and the following, we will take a close look at two computer vision subfields: Image Segmentation and Image Super-Resolution. Two very fascinating fields.

88. PixelLib: Image and Video Segmentation [Maybe just a Quick One]

PixelLib: Image and video segmentation with just a few lines of code.

89. How To Create A Simple Neural Network Using Python

I built a simple Neural Network using Python that outputs a target number given a specific input number.

90. The Implications of Open-Source AI: Should You Release Your AI Source Code Publicly?

In this article, I will share my thoughts on why it's better and safer to bring the new AI tech into the hands of business rather than release it into the wild.

91. Retraining Machine Learning Model Approaches

Retraining Machine Learning Model, Model Drift, Different ways to identify model drift, Performance Degradation

92. PyTorch vs TensorFlow: Who has More Pre-trained Deep Learning Models?

Given the importance of pre-trained Deep Learning models, which Deep Learning framework - PyTorch or TensorFlow - has more of these models available to users is

93. AI Facts Every Dev Should Know: Artificial intelligence is older than you, probably

The hype around AI is growing rapidly, as most research companies predict AI will take on an increasingly important role in the future.

94. How to Turn Your Business into a Cognitive Enterprise with AI Technologies?

95. 11 Awesome (and Worrisome) Applications of AI

For years AI was touted to be the next big technology. Expected to revolutionize the job industry and effectively kill millions of human jobs, it became the poster child for job cuts. Despite this, its adoption has been increasingly well-received. To the tech experts, this wasn’t really surprising given its vast range of use cases.

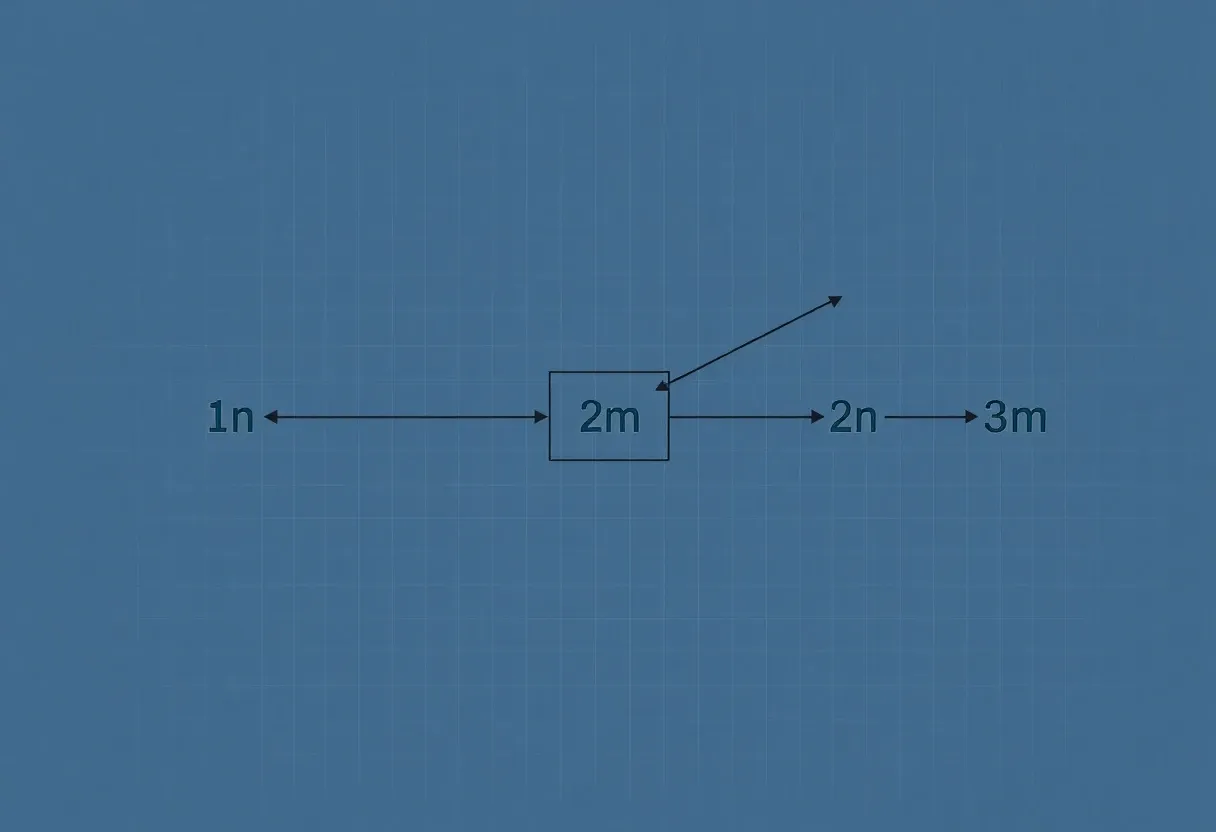

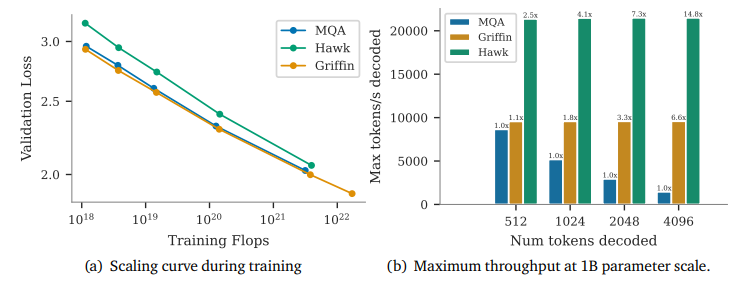

96. Primer on Large Language Model (LLM) Inference Optimizations: 1. Background and Problem Formulation

Overview of Large Language Model (LLM) inference, its importance, challenges, and key problem formulation.

97. DJL: Deep Java Library and How To Get Started

Want to get your hands dirty with Machine Learning / Deep Learning, but have a Java background and are not sure where to start? Then read on! This article is about using an existing Java skillset to ramp up your journey toward building deep learning models.

98. Living in the world of AI - The Human Transformation

Today, if you stop and ask anyone working in a technology company, “What is the one thing that would help them change the world or make them grow faster than anyone else in their field?” The answer would be Data. Yes, data is everything. Because data can essentially change, cure, fix, and support just about any problem. Data is the truth behind everything from finding a cure for cancer to studying the shifting weather patterns.

99. Why You Should Use Deep Learning - A Thread

Rich Harang explains why you should use deep learning.

100. 'El transformador ilustrado' una traducción al español

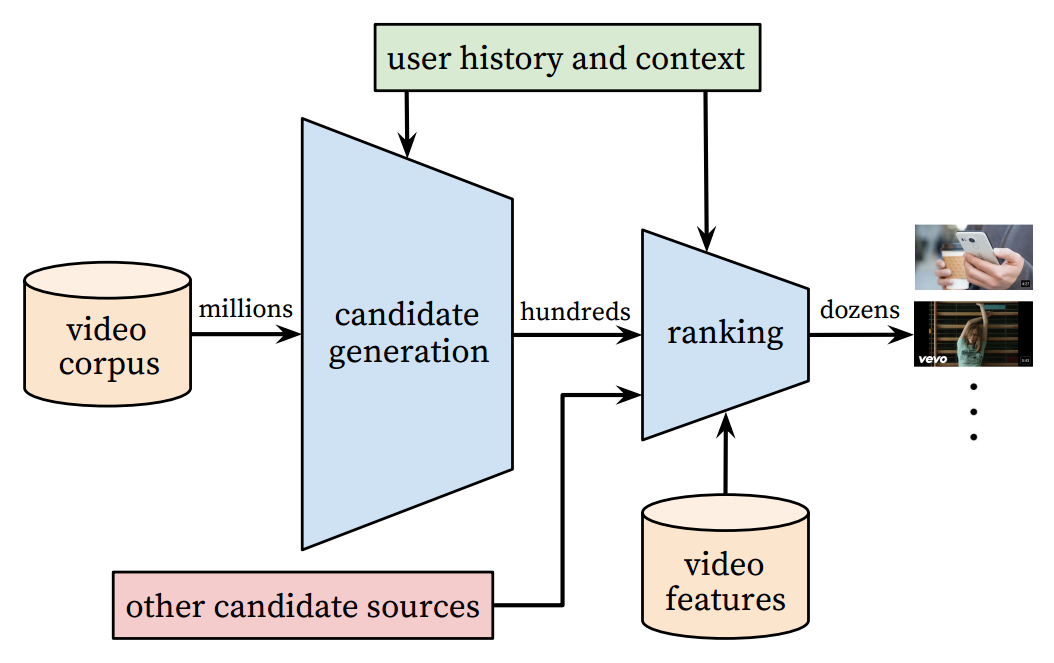

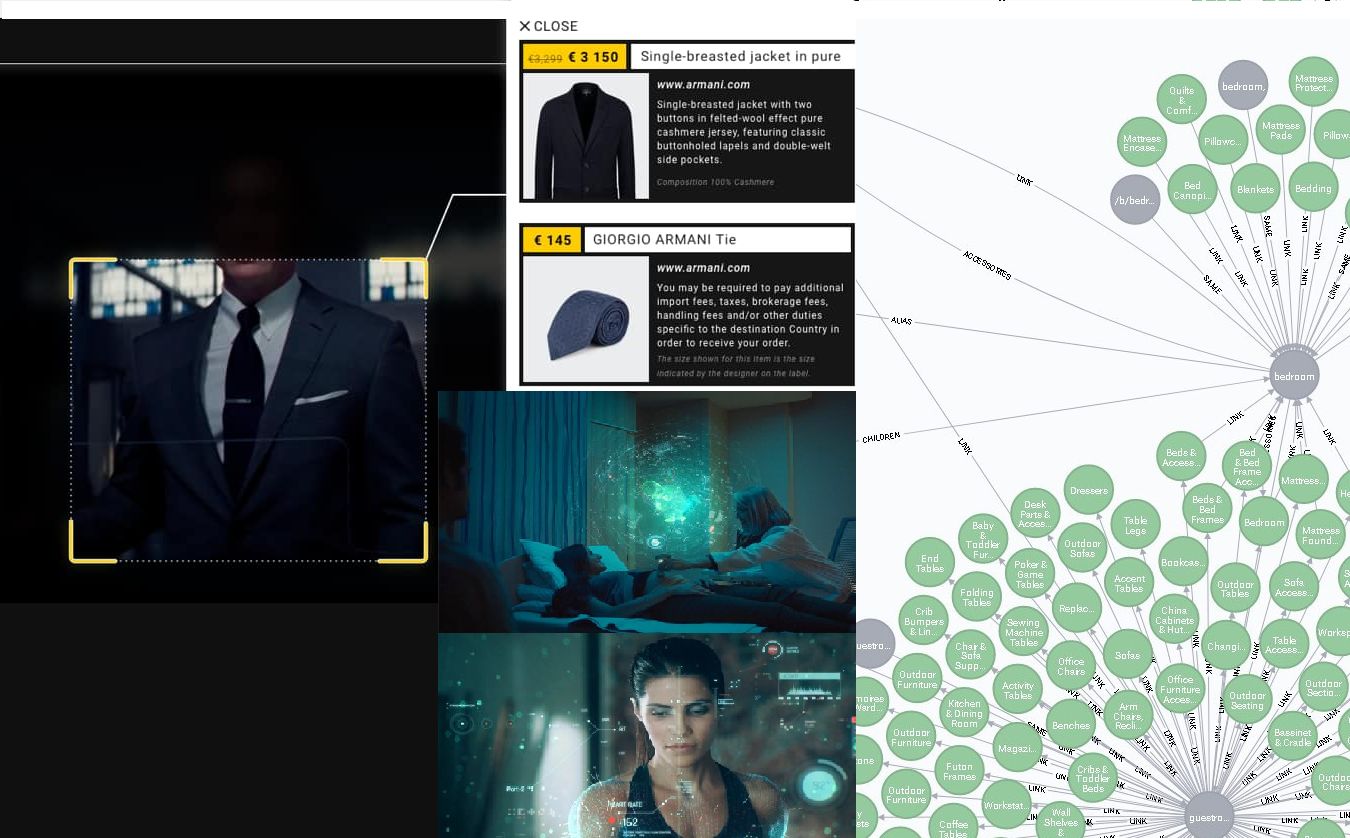

101. How Amazon Uses Deep Learning to Improve Buying Experience

Up to 80 percent of customer interactions are managed by AI today.

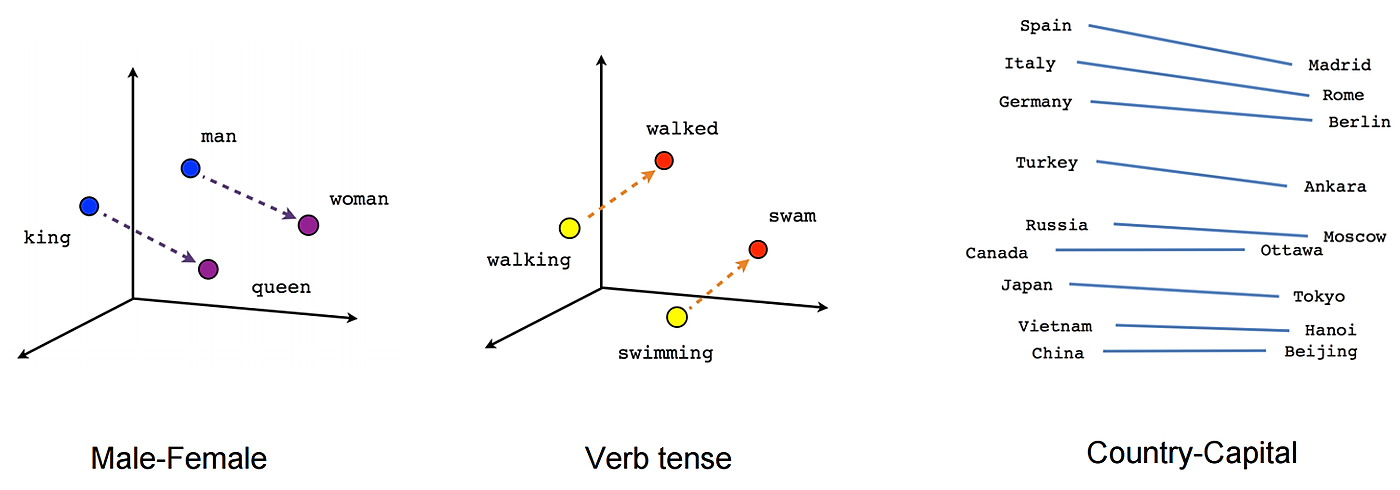

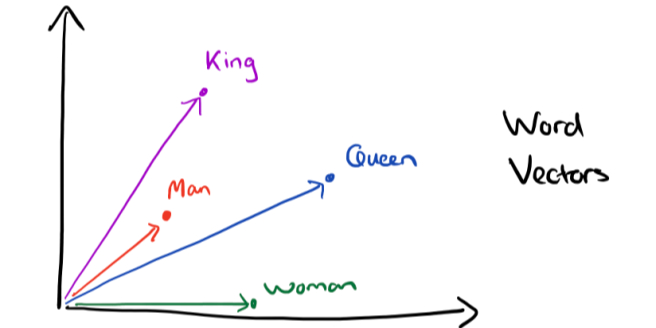

102. How to Remove Gender Bias in Machine Learning Models: NLP and Word Embeddings

Most word embeddings in use are glaringly sexist; let us look at some ways to de-bias such embeddings.

103. Use plaidML to do Machine Learning on macOS with an AMD GPU

Want to train machine learning models on your Mac’s integrated AMD GPU or an external graphics card? Look no further than PlaidML.

104. Beat The Heat with Machine Learning Cheat Sheet

If you are a beginner who has just started machine learning, or even an intermediate-level programmer, you might have been stuck on how to solve a given problem. Where do you start? And where do you go from here?

105. Small Object Detection in Computer Vision: The Patch-Based Approach

How to carry out small object detection with Computer Vision - An example of finding lost people in a forest.
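A patch-based approach typically starts by cutting a large image into overlapping tiles, so that small objects occupy a larger share of each detector input. The sketch below computes only the tile coordinates. The patch size, overlap, and function names are assumptions for illustration and are not taken from the linked article.

```python
def patch_origins(length, patch, stride):
    """Start offsets along one axis, with a final patch flush to the edge."""
    if length <= patch:
        return [0]
    starts = list(range(0, length - patch + 1, stride))
    if starts[-1] != length - patch:
        starts.append(length - patch)  # make sure the far edge is covered
    return starts


def tile_image(width, height, patch=512, overlap=64):
    """Return (left, top, right, bottom) boxes that cover the whole image."""
    if not 0 <= overlap < patch:
        raise ValueError("overlap must be non-negative and smaller than patch")
    stride = patch - overlap
    return [
        (x, y, min(x + patch, width), min(y + patch, height))
        for y in patch_origins(height, patch, stride)
        for x in patch_origins(width, patch, stride)
    ]


# Hypothetical full-HD frame, for example from a drone camera.
boxes = tile_image(1920, 1080)
```

Each box can then be cropped and passed to a detector, with the box offset added back to any detections to recover coordinates in the full image. The overlap reduces the chance that an object is split across two tiles.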

106. How to Get Started With Embeddings

Getting started with embeddings using open-source tools.

107. How to Build a Training Pipeline on Multiple GPUs

In the current big data regime, it is hard to fit all the data into a single CPU.

108. Black Mirror's 'Be Right Back' in Real Life: Clone Yourself as a Chatbot

Replika AI has created a platform where anyone, including people with zero knowledge of machine learning, can create and train a chatbot of their own.

109. Machine Learning 101: How And Where To Start For Absolute Beginners

This post covers all you will need for your journey as a beginner. All the resources are provided with links. You just need time and dedication.

110. Embeddings for RAG - A Complete Overview

Embedding is a crucial and fundamental step in building a Retrieval Augmented Generation (RAG) pipeline. BERT & SBERT are state-of-the-art embedding models.
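The retrieval half of such a pipeline boils down to comparing a query vector against stored document vectors. The sketch below ranks documents by cosine similarity. The three-dimensional vectors and document ids are made up for illustration; a real embedding model such as SBERT would produce vectors with hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_vec, index, k=2):
    """Return the k document ids whose vectors are most similar to the query."""
    ranked = sorted(
        index,
        key=lambda doc_id: cosine_similarity(query_vec, index[doc_id]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical embeddings, hand-written for this example.
index = {
    "doc_cats": [0.9, 0.1, 0.0],
    "doc_dogs": [0.8, 0.3, 0.1],
    "doc_tax": [0.0, 0.2, 0.9],
}
query = [1.0, 0.2, 0.0]
top = retrieve(query, index)
```

In a full RAG pipeline, the text of the top-ranked documents would then be placed in the prompt given to the language model.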

111. Building an End-to-End Speech Recognition Model in PyTorch with AssemblyAI

This post was written by Michael Nguyen, Machine Learning Research Engineer at AssemblyAI. AssemblyAI uses Comet to log, visualize, and understand their model development pipeline.

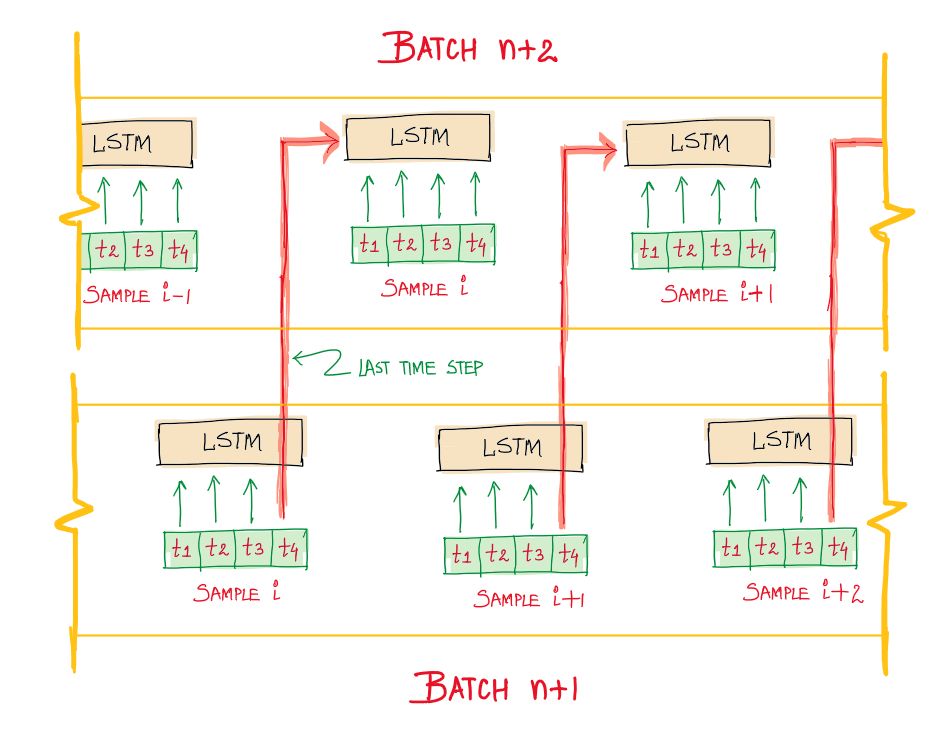

112. Stateless vs Stateful LSTMs in Machine Learning

A brief description of Stateful and Stateless LSTM (one of the sequence modeling algorithms)

113. Basic Use Cases of AI, ML, Deep Learning and Internet of Things

114. Procesando Datos Para Deep Learning: Datasets, visualizaciones y DataLoaders en PyTorch

115. Spoken Language Understanding (SLU) vs. Natural Language Understanding (NLU)

Differences between SLU (Spoken Language Understanding) and NLU (Natural Language Understanding). Top FOSS and paid engines and their approach to SLU.

116. P-HAR: Pornographic Human Action Recognition

We utilize advanced human action recognition models to accurately identify individuals performing various actions within pornographic videos.

117. Approach Pre-Trained Deep Learning Models With Caution

Pre-trained models are easy to use, but are you glossing over details that could impact your model performance?

118. Fraud Detection Using Artificial Intelligence and Machine Learning

Explore how AI and ML techniques are revolutionizing fraud detection across industries, improving accuracy, adaptability, and real-time threat response.

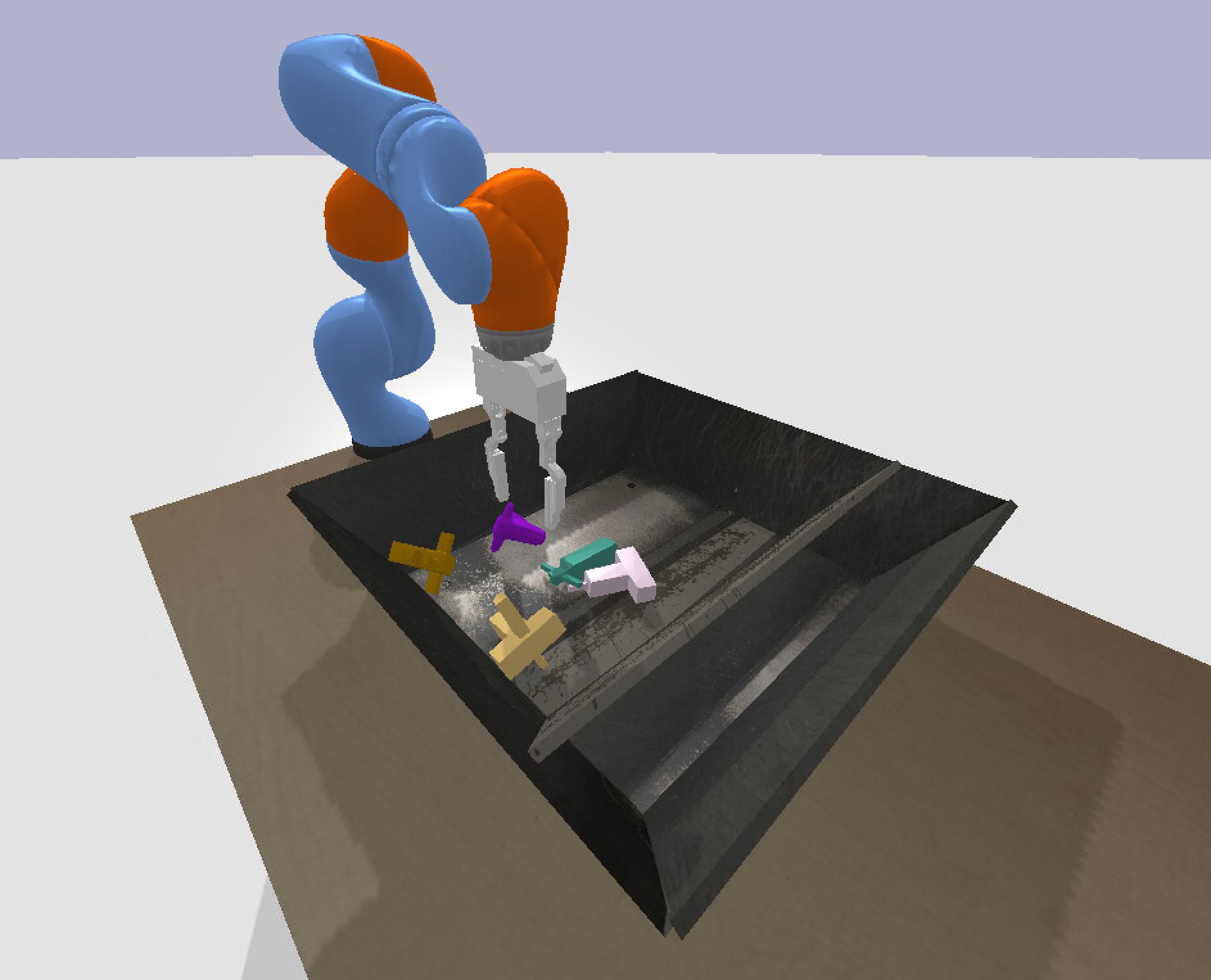

119. Using Reinforcement Learning to Build a Self-Learning Grasping Robot

Tips and tricks to build an autonomous grasping Kuka robot

120. Gone Are Those Days of AI

AI has truly evolved over the past decade - from a baby to a beast. Here I quickly summarise what has changed

121. Facebook's Deepfake Challenge That Will defeat Deepfakes. Hopefully.

Nowadays, we are seeing a new wave of great advancements in different technologies. Things like Deep Learning, Computer Vision, and Artificial Intelligence are improving every single day. Researchers and scientists are finding amazing use cases for these technologies, which can change the direction of our world.

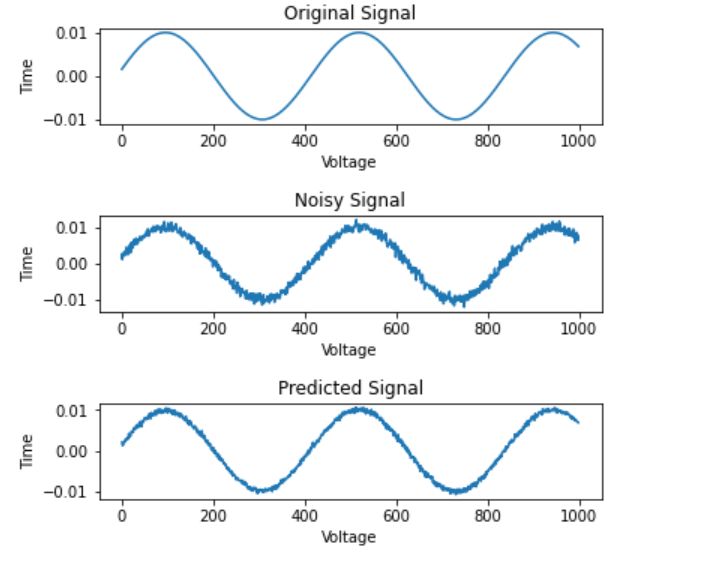

122. Signal DeNoising using Auto Encoders

A project that added Additive White Gaussian Noise to a sinusoidal signal before training machine learning networks to denoise it effectively as a challenge.
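The data-preparation step can be sketched as follows: generate a clean sinusoid, then add zero-mean Gaussian noise scaled to a chosen signal-to-noise ratio. The frequency, sample rate, SNR, and function names here are assumptions for illustration; the linked project's actual parameters are not given in this summary.

```python
import math
import random


def sine_wave(n_samples, freq_hz, sample_rate_hz):
    """Sample a unit-amplitude sinusoid."""
    return [
        math.sin(2 * math.pi * freq_hz * t / sample_rate_hz)
        for t in range(n_samples)
    ]


def add_awgn(signal, snr_db, rng):
    """Add white Gaussian noise so that the result has roughly the target SNR."""
    signal_power = sum(s * s for s in signal) / len(signal)
    noise_power = signal_power / (10 ** (snr_db / 10))
    sigma = math.sqrt(noise_power)
    return [s + rng.gauss(0.0, sigma) for s in signal]


def measured_snr_db(clean, noisy):
    """Empirical SNR in decibels between a clean signal and its noisy version."""
    signal_power = sum(c * c for c in clean)
    noise_power = sum((n - c) ** 2 for c, n in zip(clean, noisy))
    return 10 * math.log10(signal_power / noise_power)


rng = random.Random(0)  # fixed seed so the example is repeatable
clean = sine_wave(n_samples=10_000, freq_hz=5, sample_rate_hz=1_000)
noisy = add_awgn(clean, snr_db=10, rng=rng)
```

A denoising autoencoder would then be trained with `noisy` windows as input and the matching `clean` windows as the target.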

123. Tired of Slow Python ML Pipelines? Try Purem

Purem brings native-speed execution to Python ML workloads – no boilerplate, no wrappers. Just pip install purem and run code up to 500x faster.

124. Embeddings at E-commerce

125. AI as the "Bad Student" in Class

Join an ongoing quest to uncover the true nature of AI's "intelligence".

126. GPT in 200 Lines: The Beautiful Simplicity Behind Modern AI

How does GPT really work? Explore Andrej Karpathy’s tiny 200-line implementation and discover the elegant math behind modern AI.

127. ML for Diabetes from Bangladesh

128. Creating neural networks without human intervention

…And where is the blockchain in it?

129. Unpredictability of Artificial Intelligence

The young field of AI Safety is still in the process of identifying its challenges and limitations. In this paper, we formally describe one such impossibility result, namely Unpredictability of AI. We prove that it is impossible to precisely and consistently predict what specific actions a smarter-than-human intelligent system will take to achieve its objectives, even if we know terminal goals of the system. In conclusion, impact of Unpredictability on AI Safety is discussed.

130. Will We See AI Like Jarvis and Samantha in Our Lifetime?

While these AI systems have been glamorized and personified in Hollywood, it begs the question: are we on the cusp of seeing such advanced AI in our lifetime?

131. How Machine Generated Virtual Assistants can 10x Your Productivity in 2022

AI assistant technology is in many ways similar to a traditional chatbot but integrates next-generation machine learning, AR/VR and data science.

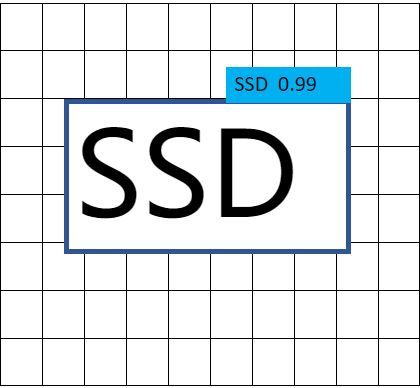

132. Object Detection Using Single Shot MultiBox Detector (A Case Study Approach)

This blog post delivers the fundamental principles behind object detection and its algorithms with rigorous intuition.

133. Top AI and ML YouTube Channels for Data Scientists to Subscribe to

Subscribe to these Machine Learning YouTube channels today for AI, ML, and computer science tutorial videos.

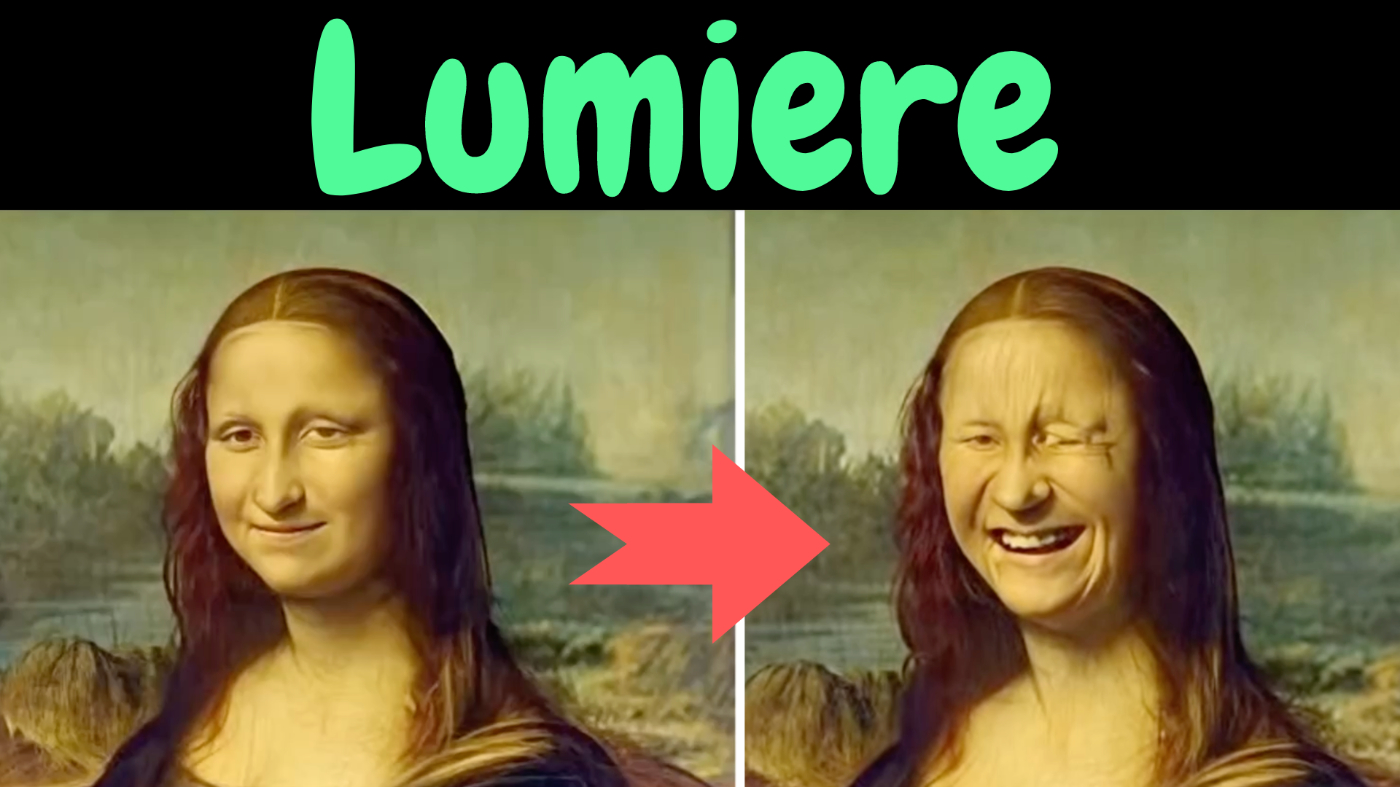

134. Google Unveils Its Most Promising Text-to-Video Model Yet: Lumiere

Sometimes simplicity is key to getting the best results. And that's what Lumiere by Google offers.

135. How to Configure Experiments With Hydra - From an ML Enginner Perspective

Hydra offers a solution to these challenges. Below, you will find a basic guide on how to use it.

136. 👨🔬️ Top 10 Data Scientist Skills to Develop to Get Yourself Hired

A list of the top 10 data scientist skills that guarantee employment, as well as a selection of helpful resources to master these skills.

137. 10 Best + Free Machine Learning Courses Collection

Here's a compilation of some of the best + free machine learning courses available online.

138. 70-Page Report on the COCO Dataset and Object Detection [Part 2]

This blog is part 1 of (and contains a link to) a 70+ page report was created to quickly find data resources and/or assets for a given dataset and a specific ta

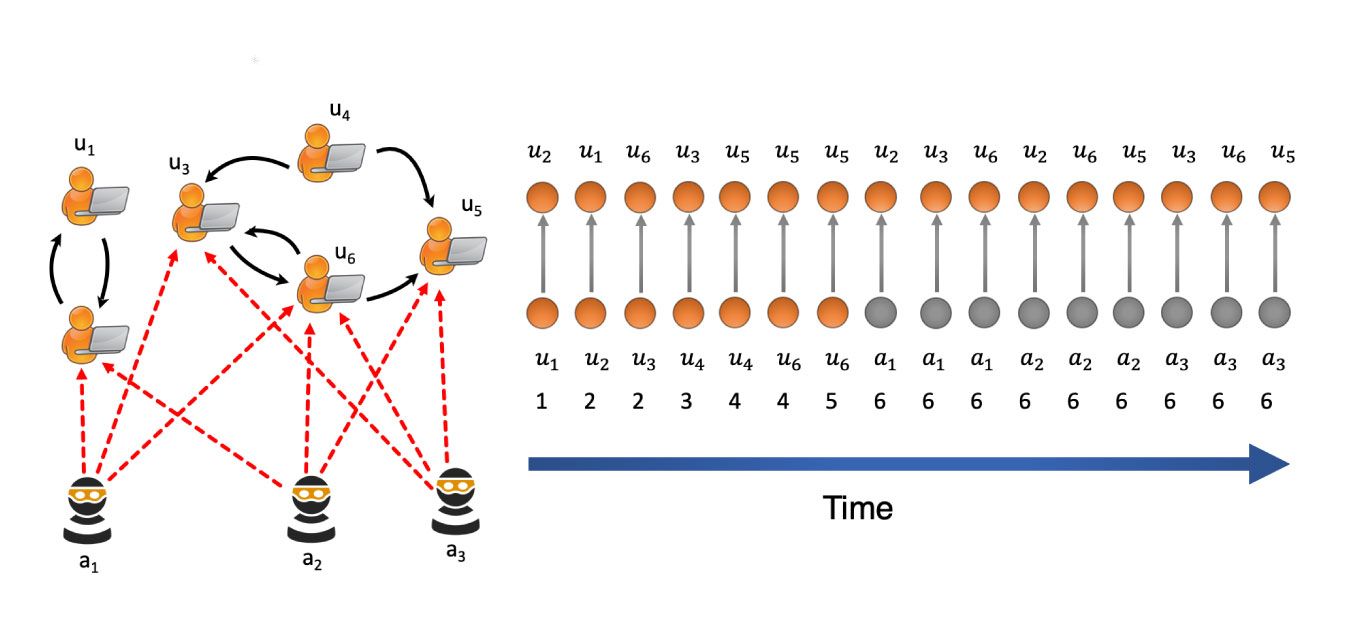

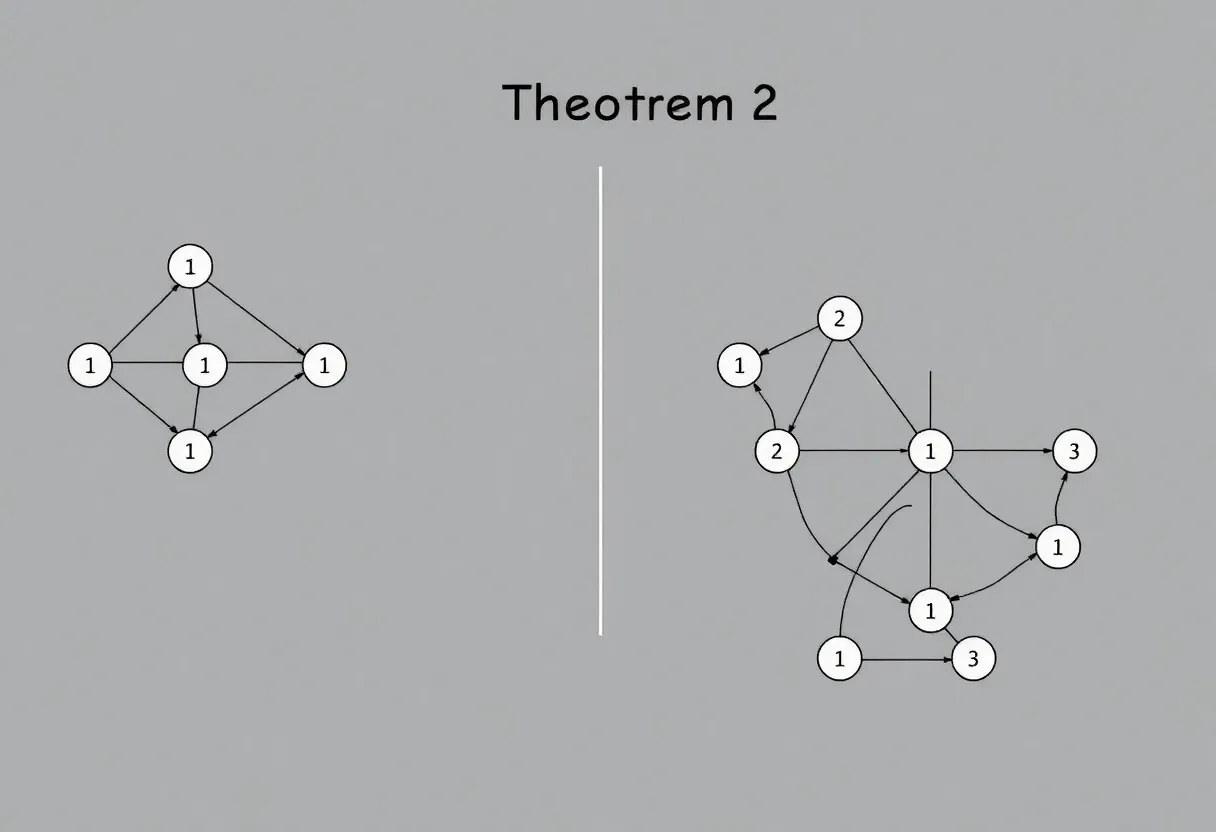

139. MIDAS: A State-of-the-Art Model for Anomaly Detection in Graphs

In machine learning, hot topics such as autonomous vehicles, GANs, and face recognition often take up most of the media spotlight. However, another equally important issue that data scientists are working to solve is anomaly detection. From network security to financial fraud, anomaly detection helps protect businesses, individuals, and online communities. To help improve anomaly detection, researchers have developed a new approach called MIDAS.

140. Interviews with My Machine Learning Heroes

Meta Article with links to all the interviews with my Machine Learning Heroes: Practitioners, Researchers and Kagglers.

141. Deep Learning in an Hour, Day, Season, or Decade

The definitive list of resources for learning how deep learning works on any time scale

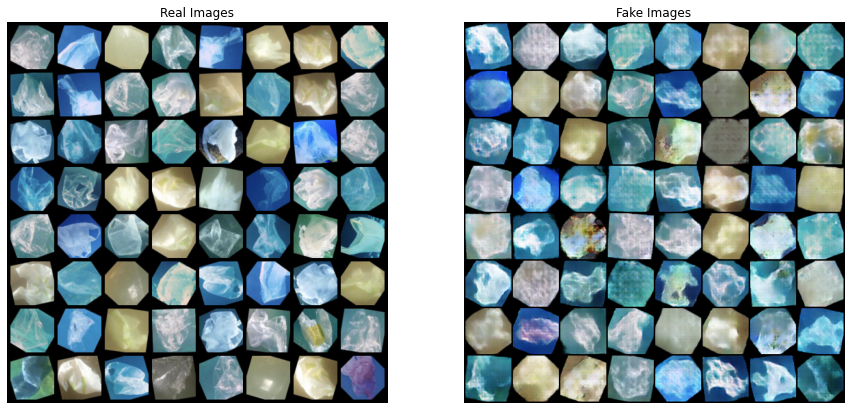

142. Understanding GAN Mode Collapse: Causes and Solutions

Explore the causes of GAN mode collapse, including catastrophic forgetting and discriminator overfitting, to enhance the diversity of AI-generated outputs.

143. Human Intelligence or Artificial Intelligence? We Need Both.

Artificial intelligence (AI) has reached a tipping point, leveraging the massive pools of data gathered by every app, website, and device in our lives to make increasingly sophisticated decisions on our behalf. AI is at work in our inboxes sorting and blocking emails. It takes and processes our increasingly complex requests through voice assistants. It supplements customer support through chatbots, and heavily automates complex processes to reduce the workload for knowledge workers. Evidently, devices can adapt on the fly to human behavior.

144. The Best AI Articles of October 2022

Support vector machines, decision trees, and AI-generated content are some of the topics in the best AI articles of October.

145. 6 important Python Libraries for Machine Learning and Data Science

In this guide, we’ll show the must-know Python libraries for machine learning and data science.

146. Debunking 4 Common Myths About Machine Learning

Machine learning is a subset of artificial intelligence that involves the use of algorithms and statistical models to enable computers to improve them.

147. Takeaways And Quotes From The World’s Largest Kaggle GrandMaster Panel

148. MusicGen from Meta AI — Understanding Model Architecture, Vector Quantization and Model Conditioning

Want to generate high-quality, realistic, controllable music using AI? Meta's new MusicGen is the answer.

149. Detecting Humans in Smart Homes with Computer Vision

Learn more about OpenCV, how you can use it to identify and track people in real-time, and what challenges you can meet.

150. 15 Essential Python Libraries for Data Science and Machine Learning

Discover 15 essential Python libraries for data science & machine learning, covering data mining, visualization & processing.

151. A Complete Guide To The Machine Learning Tools On AWS

In this article, we will take a look at each one of the machine learning tools offered by AWS and understand the type of problems they try to solve for their customers.

152. NEON.LIFE: Meet Your Digital Avatar from Samsung

Anyone who has watched Blade Runner 2049 must remember ‘Joi’, the pretty and sophisticated holographic projection of an artificial human. She speaks to you, helps you with household affairs, tells you jokes, keeps you company, and more… just like a real human. She even has her own memories with you and develops character over time. Except, ‘she’ is not human. She is just a super complicated ‘model’ of a real human that can speak, act and react like one. Still, quite a few people secretly wish that they could have their own ‘Joi’. Well, she might not be as far away as you think. Enter NEON, Samsung’s new artificial human.

153. Why Learning PyTorch Can Make you a Better Engineer

PyTorch is a powerful open-source deep-learning framework that is quickly gaining popularity among researchers and developers.

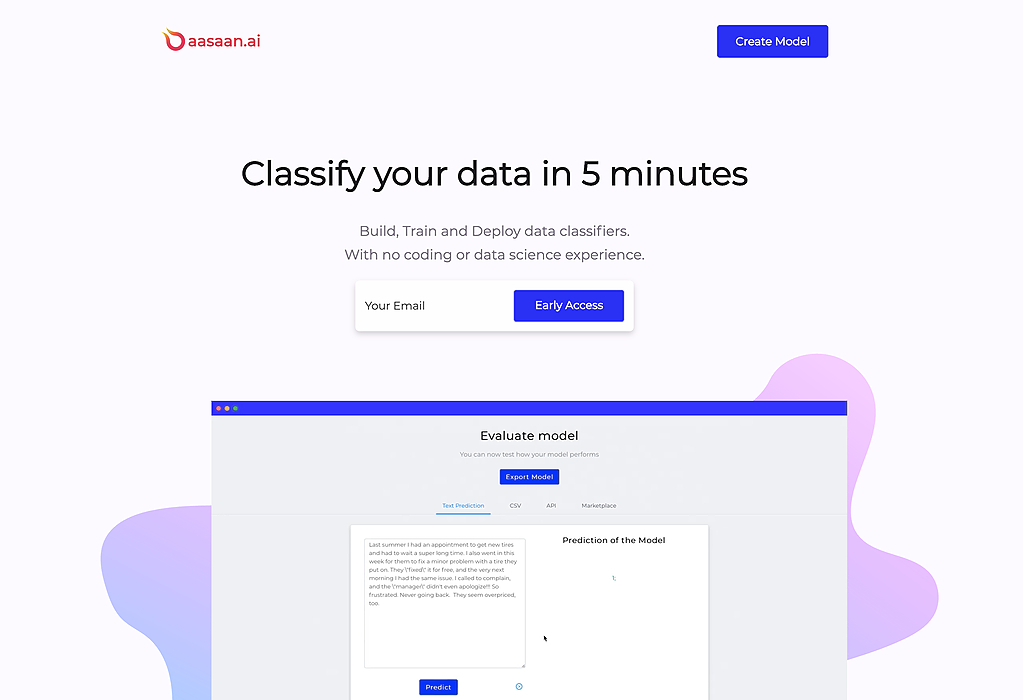

154. How to Use Model Playground for No-Code Model Building

We're launching Model Playground, a model-building product where you can train AI models without writing any code yourself while staying in complete control.

155. Will Generative Models Be The Next Machine Learning Boom?

Machine learning is a rapidly growing and very complex field of study. Generative models might prove to be the breakthrough that sparks its next boom.

156. Comparing Kolmogorov-Arnold Network (KAN) and Multi-Layer Perceptrons (MLPs)

Discover how Kolmogorov-Arnold Networks (KAN) challenge traditional MLPs with trainable activation functions, offering a potential leap toward AGI.

157. Best Machine Learning Books You Should Read: 2020 Edition

These books cover knowledge and concepts in ML from the introductory level to the expert level. They address some core aspects of ML. Give them a try. Let's start.

158. Top 25 Quotes from ML Heroes Interviews (+ an exciting announcement!)

A reboot of “Interview with Machine Learning Heroes” and a collection of the best pieces of advice.

159. An Old Statistical Trick Might Help Better Explain the Apparent Correlation Between Bitcoin and Gold

The relationship between Bitcoin and Gold is one of the dynamics that seems to constantly capture the minds of financial analysts. Recently, there have been a series of new articles claiming an increasing “correlation” between Bitcoin and Gold and the phenomenon seems to be constantly debated in financial media outlets like CNBC or Bloomberg.

160. Eight Awesome AI Youtube Videos Under 10 Minutes

Machine learning educational content is often in the form of academic papers or blog articles. These resources are incredibly valuable. However, they can sometimes be lengthy and time-consuming. If you just want to learn basic concepts and don’t require all the math and theory behind them, concise machine learning videos may be a better option.

161. Why Use Pandas? An Introductory Guide for Beginners

Pandas is a powerful and popular library for working with data in Python. It provides tools for handling and manipulating large and complex datasets.

162. Optical Character Recognition Technology for Business Owners

How to use machine learning, deep learning and computer vision to build an Optical Character Recognition (OCR) solution for text recognition.

163. Best Resources To Learn Machine Learning And AI

“Anybody can code.” I know this sentence sounds cliché, so let me give you another one: “Anybody can learn AI.” Well, now it sounds overwhelming, unless you are a PhD or a mad scientist.

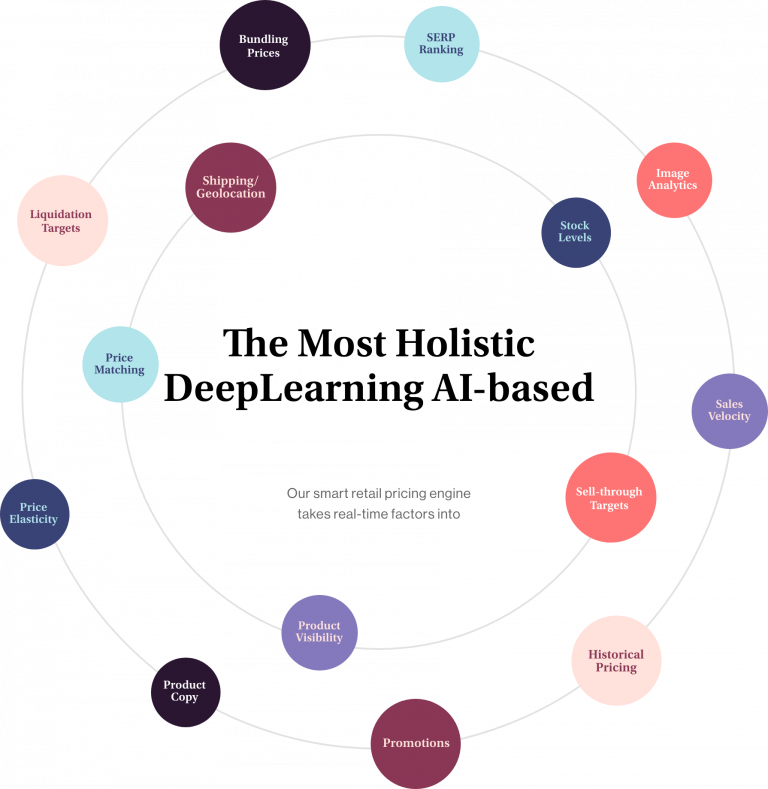

164. 5 Steps To Build Your Dynamic Pricing Engine

With the emergence of online platforms, B2B businesses have had to reconsider their pricing strategies. But these same technologies help organizations create dynamic B2B pricing models that bring substantial profits if implemented correctly. For example, integrated sales and B2B pricing software can help sales reps negotiate with customers and reduce the processing period.

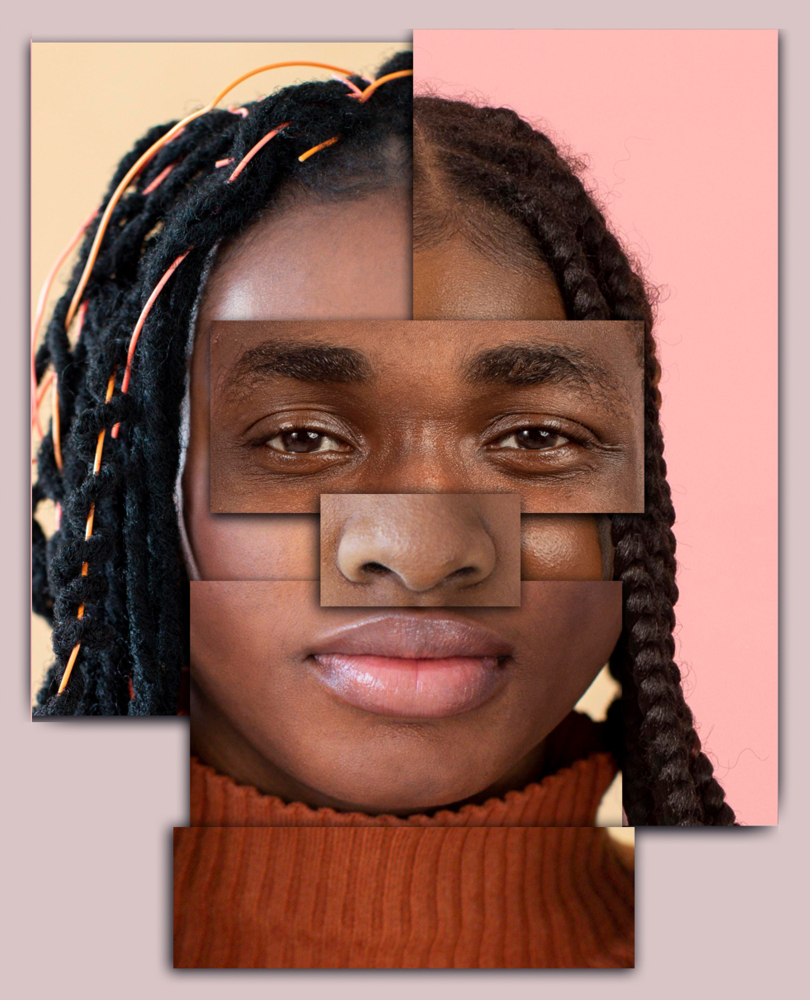

165. 5 Papers on Face Recognition Every Data Scientist Should Read

Facial recognition is one of the largest areas of research within computer vision. This article will introduce 5 face recognition papers for data scientists.

166. The Main Patterns in Generative AI Lifecycles

Discover the evolution and integration of generative AI in enterprise environments: from rule-based systems to large-scale real-time content generation.

167. Federated Learning: A Decentralized Form of Machine Learning

Major companies using AI and machine learning now use federated learning – a form of machine learning that trains algorithms on a distributed set of devices.
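
To make "trains algorithms on a distributed set of devices" concrete, here is a minimal sketch of one common scheme, federated averaging. It is an illustration, not the linked article's implementation: the toy linear model and the names `local_update`, `federated_average` and `run_round` are assumptions. Each device trains on its own data, and only the resulting weights are sent back and averaged.

```python
from typing import List, Sequence, Tuple

Weights = List[float]          # [w, b] for the toy model y = w * x + b (assumed for illustration)
Sample = Tuple[float, float]   # (x, y)


def local_update(weights: Weights, data: Sequence[Sample], lr: float = 0.1) -> Weights:
    """Run one pass of per-sample gradient descent on a single device's private data."""
    w, b = weights
    for x, y in data:
        err = (w * x + b) - y   # prediction error on this sample
        w -= lr * err * x       # gradient step for the slope
        b -= lr * err           # gradient step for the intercept
    return [w, b]


def federated_average(client_weights: Sequence[Weights], client_sizes: Sequence[int]) -> Weights:
    """Average client weights, weighting each client by its number of samples."""
    total = sum(client_sizes)
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(len(client_weights[0]))
    ]


def run_round(global_weights: Weights, client_datasets: Sequence[Sequence[Sample]]) -> Weights:
    """One communication round: raw data stays on each client, only weights are shared."""
    updates = [local_update(global_weights, data) for data in client_datasets]
    sizes = [len(data) for data in client_datasets]
    return federated_average(updates, sizes)


if __name__ == "__main__":
    # Two hypothetical devices, each holding private samples of y = 2x.
    clients = [[(1.0, 2.0), (2.0, 4.0)], [(0.5, 1.0), (1.5, 3.0)]]
    weights = [0.0, 0.0]
    for _ in range(50):
        weights = run_round(weights, clients)
    print(weights)
```

The server in this sketch never sees a device's samples, only the locally updated weights, which is the property that makes the approach decentralized.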

168. How Predictive RBPs with DL can help get a vaccine for Corona faster?

The entire world is engulfed in the coronavirus pandemic. At present, there are 191,127 positive cases of novel COVID-19 infection all over the world, with total fatalities of 7,807, according to a report by the World Health Organization (WHO).

169. Merging Datasets from Different Timescales

One of the trickiest situations in machine learning is when you have to deal with datasets coming from different time scales.
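
This summary does not show the article's own method, so the following is only a sketch of one common approach: an "as-of" join that attaches the most recent slow-moving observation to each fast-moving record. The function name `asof_join` and the sample data are hypothetical.

```python
from bisect import bisect_right
from typing import List, Optional, Sequence, Tuple


def asof_join(
    fast: Sequence[Tuple[int, str]],
    slow: Sequence[Tuple[int, float]],
) -> List[Tuple[int, str, Optional[float]]]:
    """Attach to each fast record the latest slow value observed at or before it.

    Both inputs are (timestamp, value) pairs sorted by timestamp.
    Records that precede every slow observation get None.
    """
    slow_times = [t for t, _ in slow]
    joined = []
    for t, value in fast:
        i = bisect_right(slow_times, t) - 1   # index of the last slow timestamp <= t
        joined.append((t, value, slow[i][1] if i >= 0 else None))
    return joined


if __name__ == "__main__":
    # Hypothetical data: events every few seconds, a sensor reading every 5 seconds.
    events = [(0, "a"), (5, "b"), (10, "c"), (12, "d")]
    readings = [(5, 1.0), (10, 2.0)]
    for row in asof_join(events, readings):
        print(row)
```

Looking only backward in time keeps future information out of each training row. In pandas, `merge_asof` performs the same kind of join on sorted data frames.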

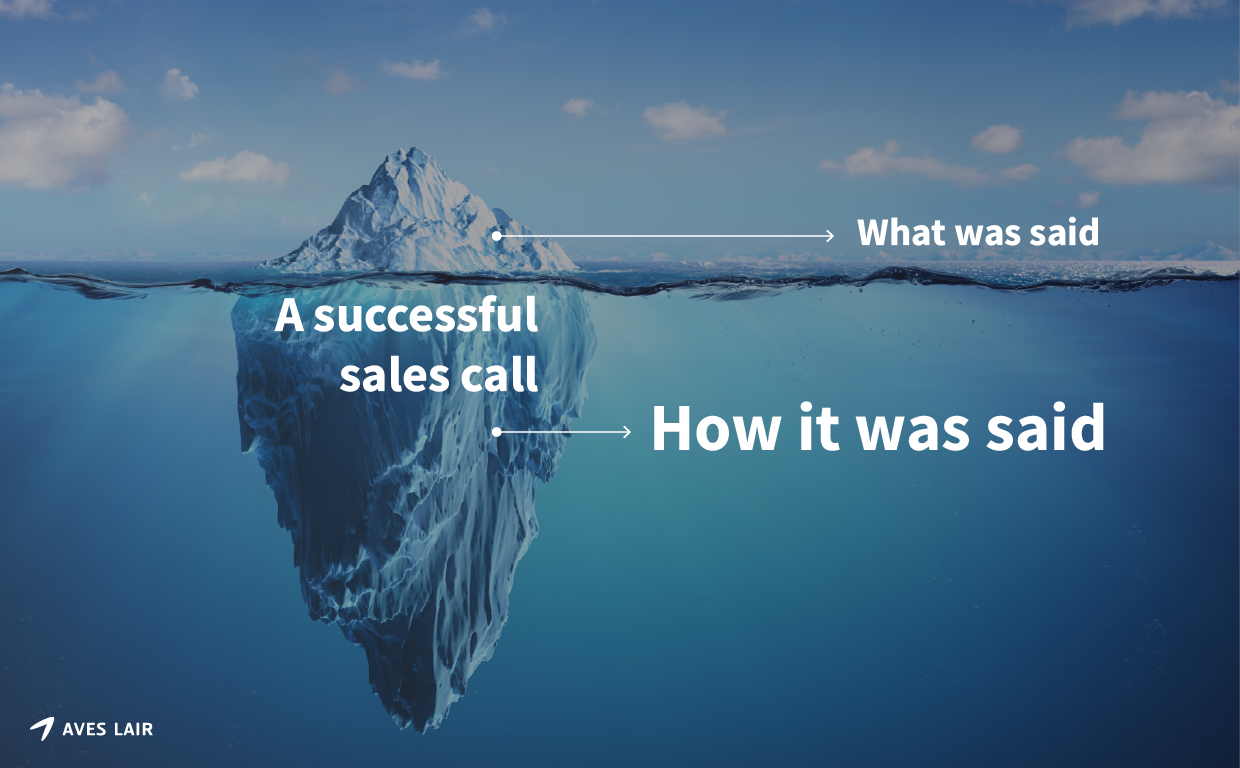

170. B2B Sales Is Broken. New Tech Can Help

Closing B2B deals is difficult. People are not buying aggressive selling techniques. Existing sales software isn't helping. New tech can help.

171. Automated Essay Scoring Using Large Language Models

Explore innovations in Automated Essay Scoring (AES), using models like Longformer and multi-task learning to address challenges in cohesion, grammar, and more.

172. The Dark Matter of AI: Common Sense Is Not So Common

There are still areas where AI falls short and causes problems and frustration for end users, and these areas pose a great challenge for researchers right now.

173. Large Language Models: A Beginner's Journey—Part 1

Explore the world of Large Language Models (LLMs) in our comprehensive guide. From understanding their capabilities to overcoming limitations, discover how LLMs

174. My Experiments And How To Start with Machine Learning

[https://hackernoon.com/images/dJ7MzRYbq8et9JjFyKAEWhkCfPO2-2023yst.jpg]

First of all, let me be clear, what this blog-post is and what it isn’t. This

175. College Admissions: How AI Can Help Fight Biases

This article is co-authored by Alex Stern & Eugene Sidorin.

176. Answering the 12 Most Common Questions About Python

Python is an open-source, high-level programming language that is easy to learn and user-friendly. It is one of the first choices of many programmers, whether beginner or experienced. So, today we have prepared a list of the most-asked questions about the Python programming language.

177. The Issue Of Machine Ethics and Robot Rights

Machine ethics and robot rights are quickly becoming hot topics in artificial intelligence/robotics communities. We will argue that the attempts to allow machines to make ethical decisions or to have rights are misguided. Instead we propose a new science of safety engineering for intelligent artificial agents. In particular we issue a challenge to the scientific community to develop intelligent systems capable of proving that they are in fact safe even under recursive self-improvement.

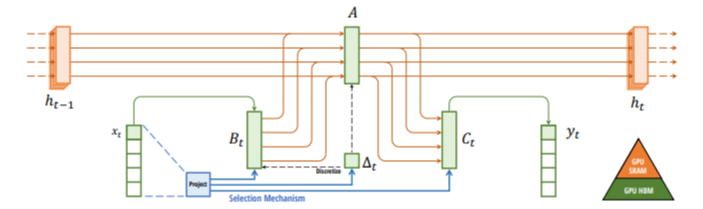

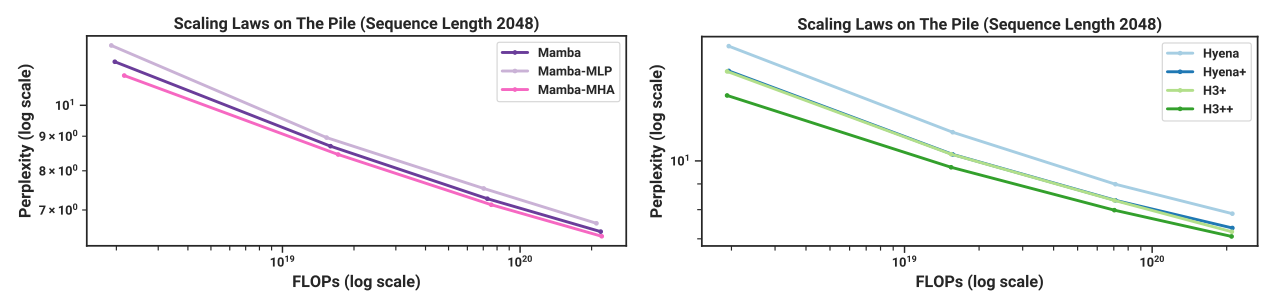

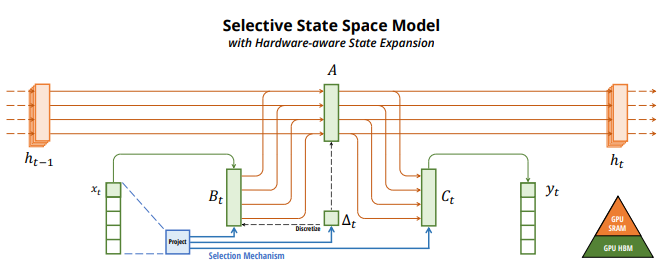

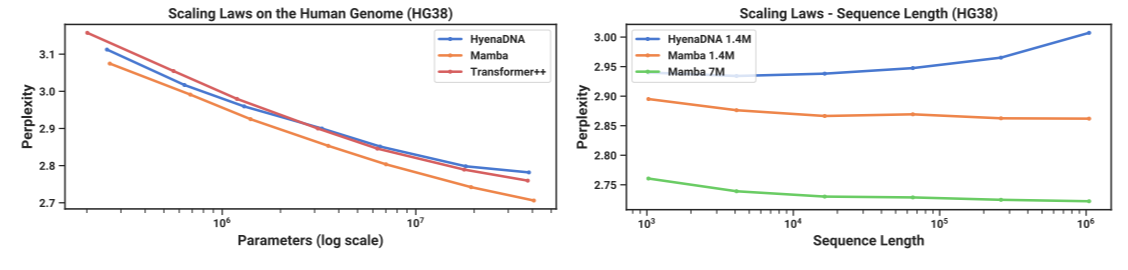

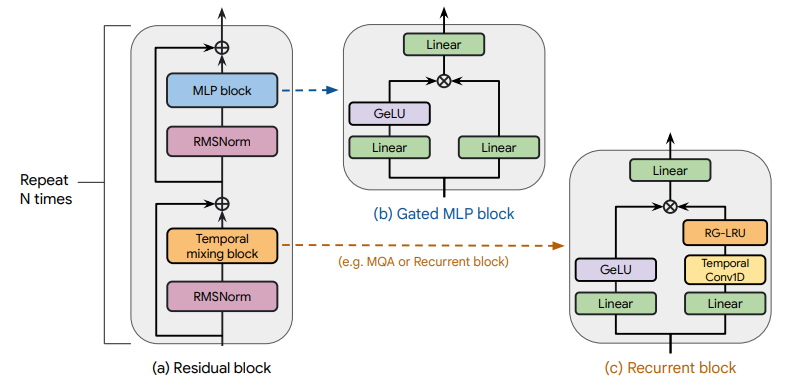

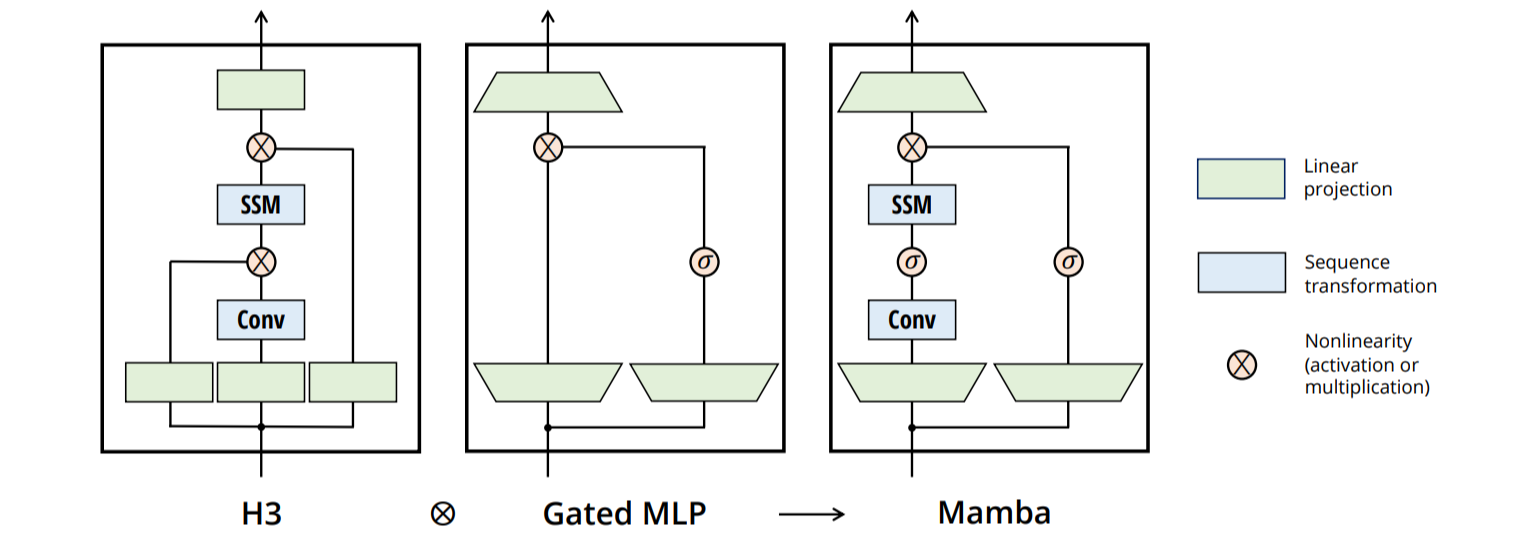

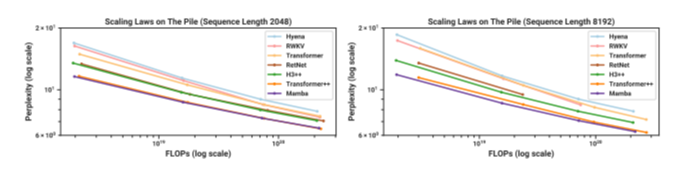

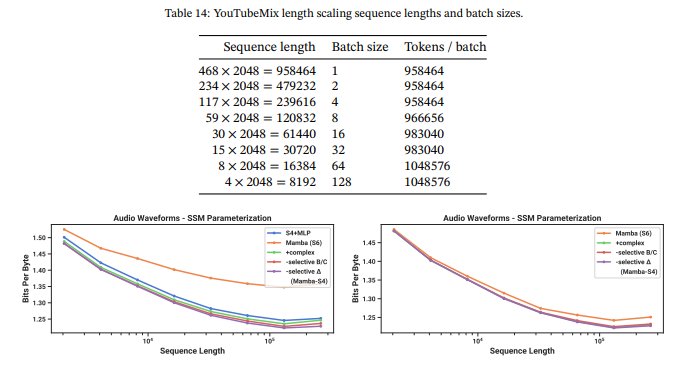

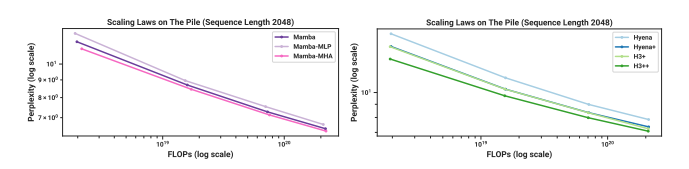

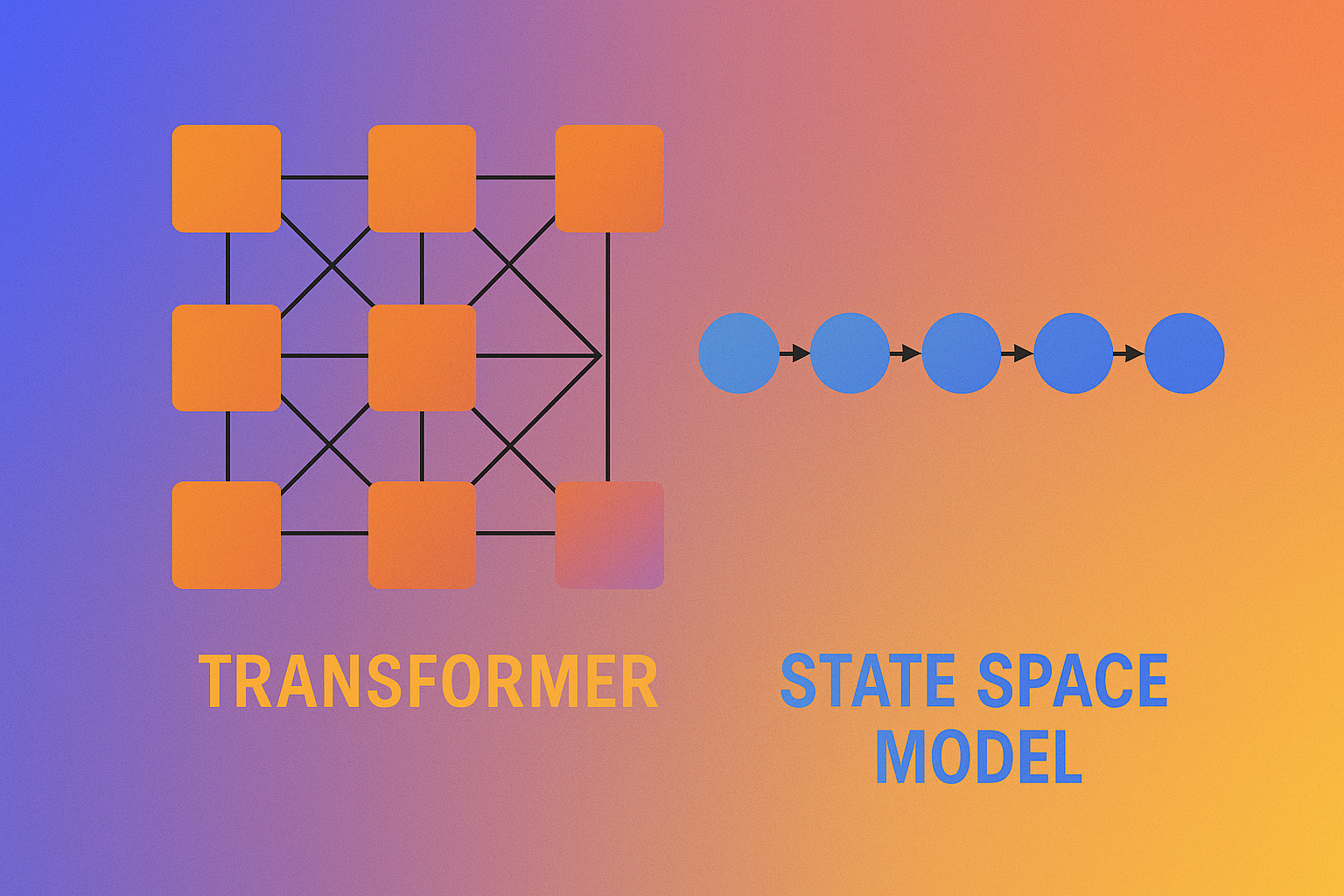

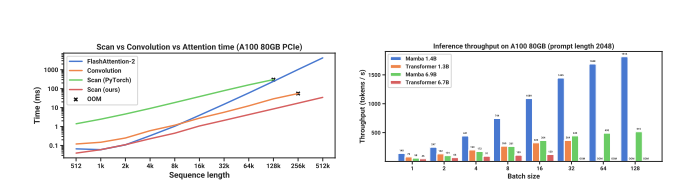

178. The AI World Has a New Darling—And It’s Not a Transformer

Discover Mamba, a novel selective state space model (SSM) that outperforms Transformers in speed and scalability.