Let's learn about Natural Language Processing via these 420 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most read blog posts about any technology.

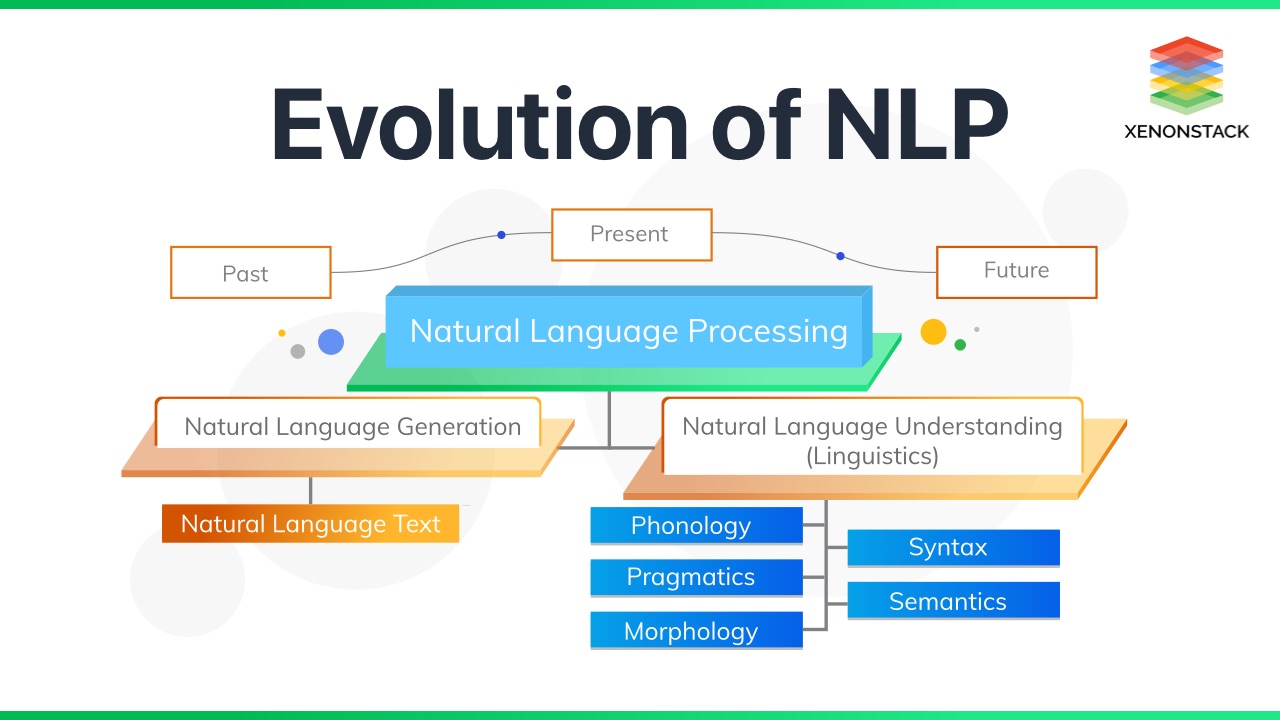

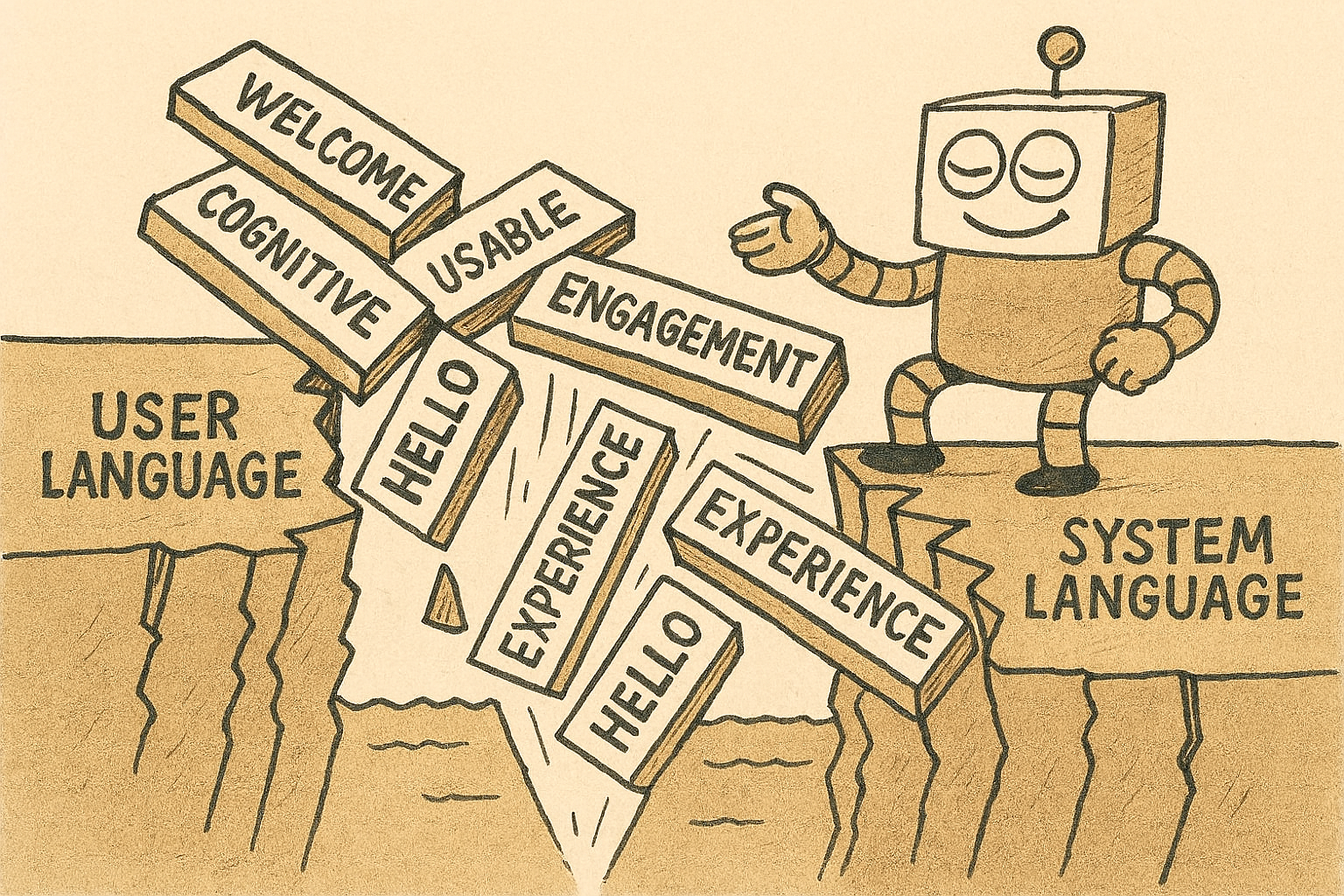

Natural Language Processing (NLP) is a field of AI that enables computers to understand, interpret, and generate human language. NLP is crucial for tasks like machine translation, sentiment analysis, and conversational AI, bridging the gap between human communication and computational understanding.

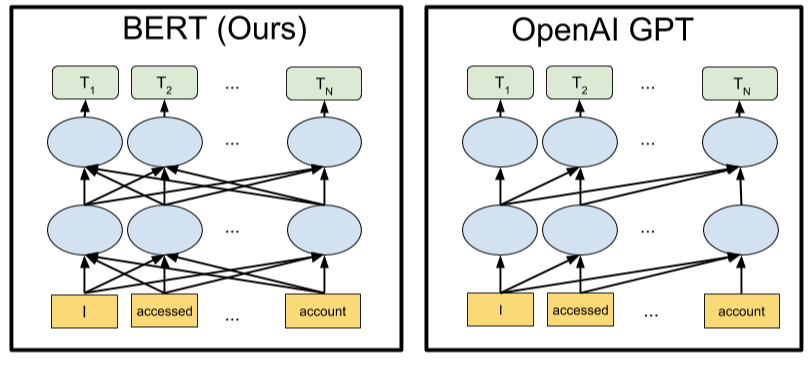

1. Why Is GPT Better Than BERT? A Detailed Review of Transformer Architectures

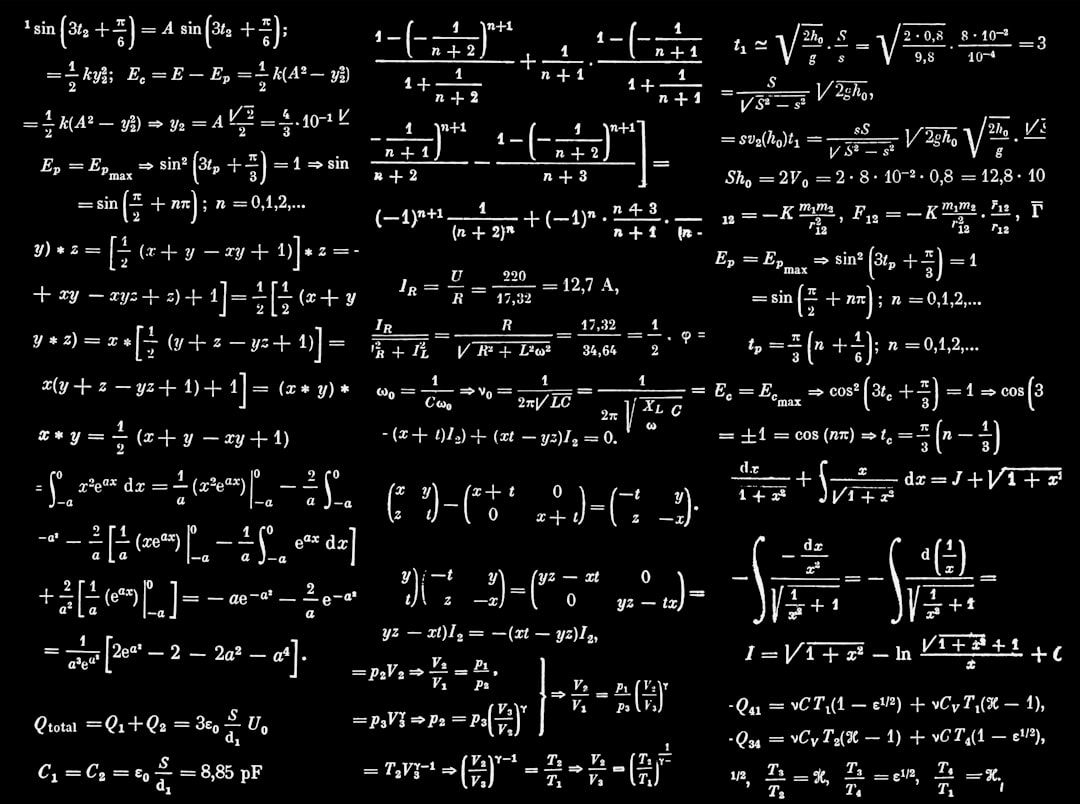

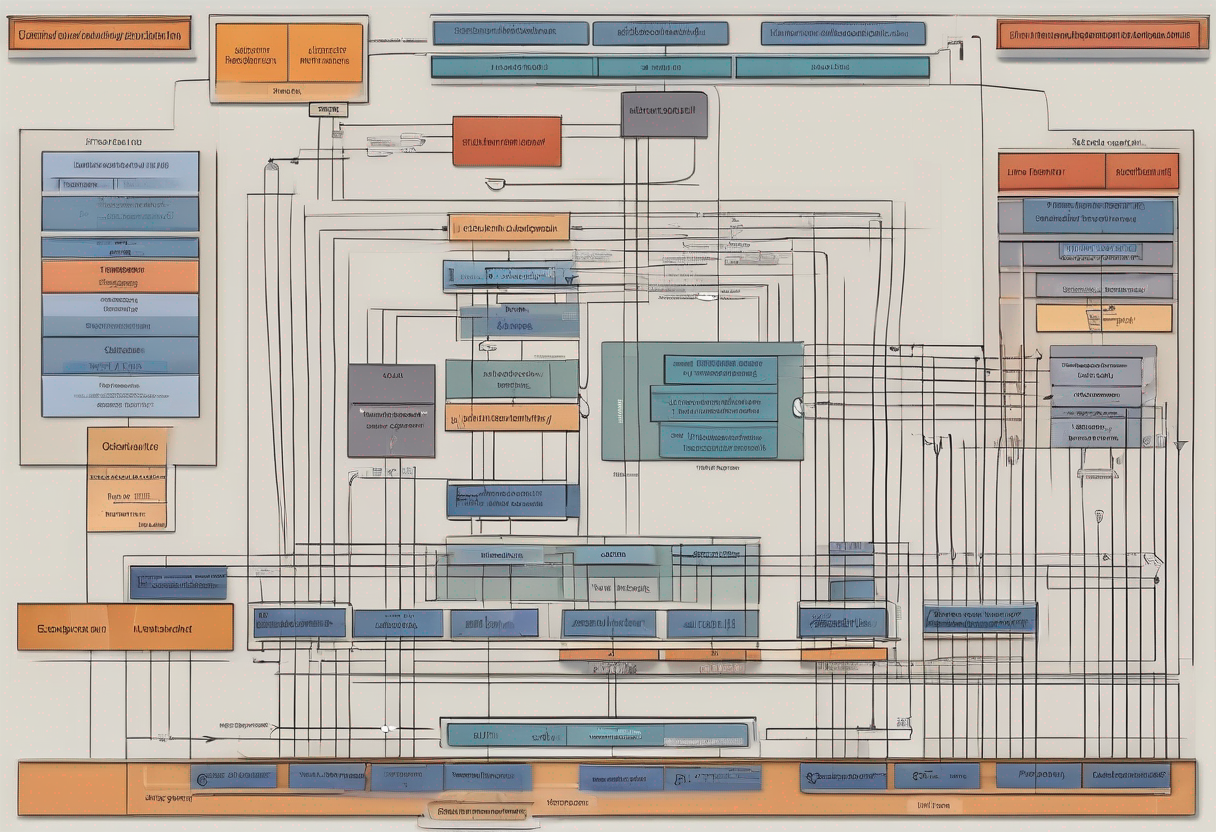

Details of Transformer Architectures Illustrated by BERT and GPT Model

Details of Transformer Architectures Illustrated by BERT and GPT Model

2. Decoding Transformers' Superiority over RNNs in NLP Tasks

Explore the intriguing journey from Recurrent Neural Networks (RNNs) to Transformers in the world of Natural Language Processing in our latest piece: 'The Trans

Explore the intriguing journey from Recurrent Neural Networks (RNNs) to Transformers in the world of Natural Language Processing in our latest piece: 'The Trans

3. How to Talk to ChatGPT: An Intro to Prompt Engineering

Prompting is pretty much the only skill you now require to be a master of these new large and powerful generative models such as ChatGPT.

Prompting is pretty much the only skill you now require to be a master of these new large and powerful generative models such as ChatGPT.

4. ChatGPT Explained in 5 Minutes

ChatGPT has taken over Twitter and pretty much the whole internet, thanks to its power and the meme potential it provides.

ChatGPT has taken over Twitter and pretty much the whole internet, thanks to its power and the meme potential it provides.

5. NLP Datasets from HuggingFace: How to Access and Train Them

The Datasets library from hugging Face provides a very efficient way to load and process NLP datasets from raw files or in-memory data. These NLP datasets have been shared by different research and practitioner communities across the world.

The Datasets library from hugging Face provides a very efficient way to load and process NLP datasets from raw files or in-memory data. These NLP datasets have been shared by different research and practitioner communities across the world.

6. 14 Open Datasets for Text Classification in Machine Learning

Text classification datasets are used to categorize natural language texts according to content. For example, think classifying news articles by topic, or classifying book reviews based on a positive or negative response. Text classification is also helpful for language detection, organizing customer feedback, and fraud detection. Though time consuming when done manually, this process can be automated with machine learning models. The result saves companies time while also providing valuable data insights.

Text classification datasets are used to categorize natural language texts according to content. For example, think classifying news articles by topic, or classifying book reviews based on a positive or negative response. Text classification is also helpful for language detection, organizing customer feedback, and fraud detection. Though time consuming when done manually, this process can be automated with machine learning models. The result saves companies time while also providing valuable data insights.

7. Why Can't AI Count the Number of "R"s in the Word "Strawberry"?

Explore why AI struggles to count letters in words like 'strawberry,' delving into tokenization, language model limitations, and potential improvements.

Explore why AI struggles to count letters in words like 'strawberry,' delving into tokenization, language model limitations, and potential improvements.

8. An Essential Python Text-to-Speech Tutorial Using the pyttsx3 Library

Basically, what we want to do is to give some piece of text to our program and it will convert that text into the speech and will read that to us.

Basically, what we want to do is to give some piece of text to our program and it will convert that text into the speech and will read that to us.

9. How to Convert Speech to Text in Python

Speech Recognition is the ability of a machine or program to identify words and phrases in spoken language and convert them to textual information.

Speech Recognition is the ability of a machine or program to identify words and phrases in spoken language and convert them to textual information.

10. NLP Tutorial: Creating Question Answering System using BERT + SQuAD on Colab TPU

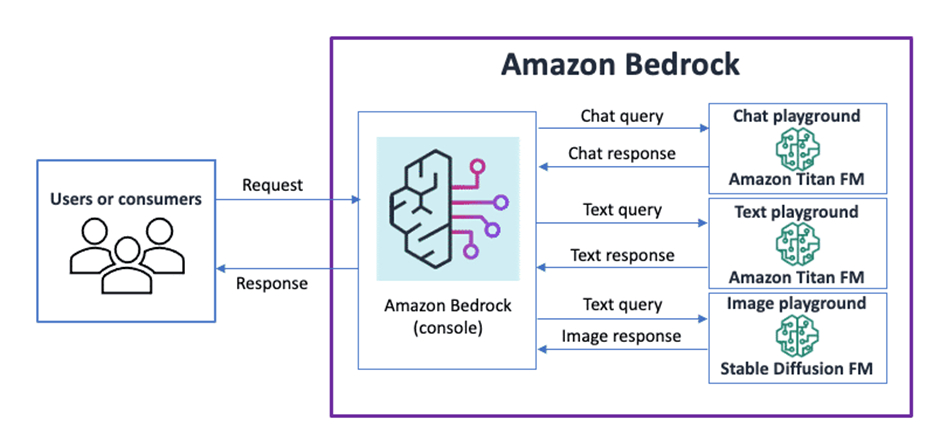

11. How to Build GenAI Applications with Amazon Bedrock

Discover how Amazon Bedrock revolutionizes Gen-AI application development by simplifying access to foundational models.

Discover how Amazon Bedrock revolutionizes Gen-AI application development by simplifying access to foundational models.

12. Open AI's ChatGPT Pricing Explained: How Much Does It Cost to Use GPT Models?

How much does it cost to use GPT-3 in a commercial project? We ran an experiment and a project simulation based on the results.

How much does it cost to use GPT-3 in a commercial project? We ran an experiment and a project simulation based on the results.

13. How to Perform Emotion detection in Text via Python

In this tutorial, I will guide you on how to detect emotions associated with textual data and how can you apply it in real-world applications.

In this tutorial, I will guide you on how to detect emotions associated with textual data and how can you apply it in real-world applications.

14. AI Chatbot Helps Manage Telegram Communities Like a Pro

The Telegram chatbot will find answers to questions by extracting information from the history of chat messages.

The Telegram chatbot will find answers to questions by extracting information from the history of chat messages.

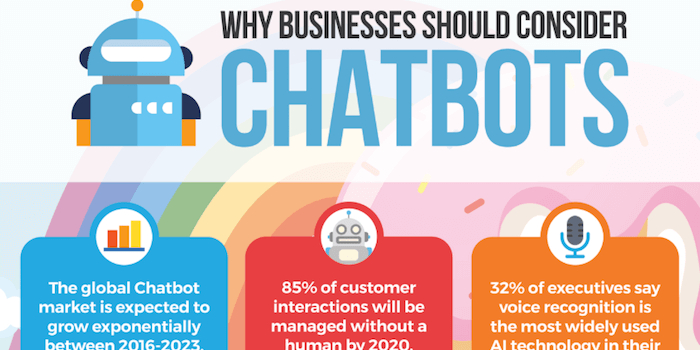

15. 5 Chatbot Ideas Businesses Should Consider in 2019

If you are looking to add the most advanced chatbot to your website, you would have probably noticed that there are many things required to develop a chatbot.

If you are looking to add the most advanced chatbot to your website, you would have probably noticed that there are many things required to develop a chatbot.

16. Make LLM for Text Summarisation Great Again

In recent months, LLMs have gained popularity and are now widely used in various applications. Data collection is essential for building these models, and crowd

In recent months, LLMs have gained popularity and are now widely used in various applications. Data collection is essential for building these models, and crowd

17. Creating a Domain Expert LLM: A Guide to Fine-Tuning

In this article, we fine-tune a large language model to understand the plot of a Handel opera.

In this article, we fine-tune a large language model to understand the plot of a Handel opera.

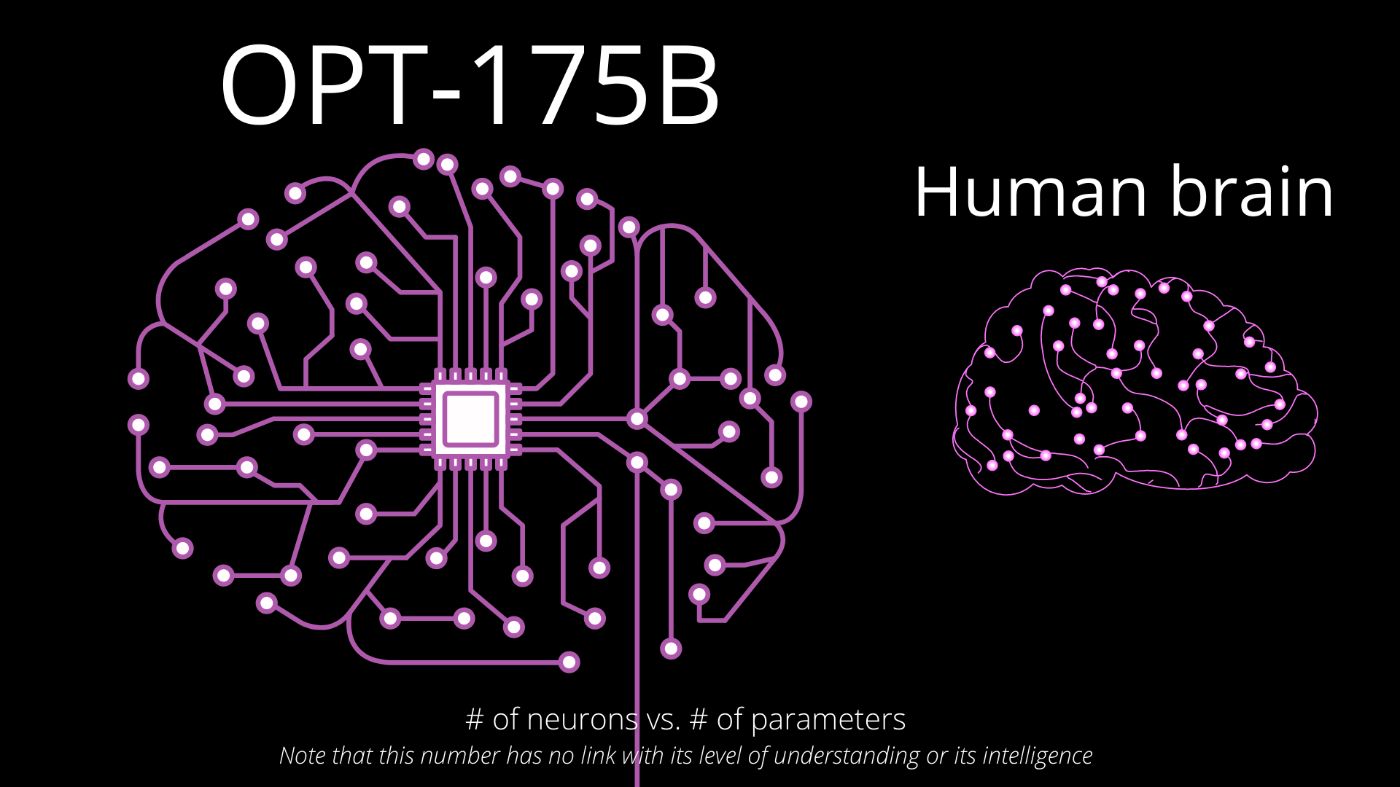

18. Meta's New Model OPT is an Open-Source GPT-3

We’ve all heard about GPT-3 and have somewhat of a clear idea of its capabilities. You’ve most certainly seen some applications born strictly due to this model, some of which I covered in a previous video about the model. GPT-3 is a model developed by OpenAI that you can access through a paid API but have no access to the model itself.

We’ve all heard about GPT-3 and have somewhat of a clear idea of its capabilities. You’ve most certainly seen some applications born strictly due to this model, some of which I covered in a previous video about the model. GPT-3 is a model developed by OpenAI that you can access through a paid API but have no access to the model itself.

19. 7 NLP Project Ideas to Enhance Your NLP Skills

Learn different NLP project ideas that focus on practical implementation to help you master the NLP techniques and be able to solve different challenges.

Learn different NLP project ideas that focus on practical implementation to help you master the NLP techniques and be able to solve different challenges.

20. Getting Started with OpenAI API in JavaScript

Learn beginner-friendly AI development using OpenAI API and JavaScript. Includes installation guide and code examples for building AI-enabled apps.

Learn beginner-friendly AI development using OpenAI API and JavaScript. Includes installation guide and code examples for building AI-enabled apps.

21. Sentiment Analysis and AI: Everything You Need to Know in 2025

Discover how AI-powered sentiment analysis tools deliver accurate insights from customer reviews and feedback to help improve your business strategy.

Discover how AI-powered sentiment analysis tools deliver accurate insights from customer reviews and feedback to help improve your business strategy.

22. Using BERT Transformer with SpaCy3 to Train a Relation Extraction Model

A step-by-step guide on how to train a relation extraction classifier using Transformer and spaCy3.

A step-by-step guide on how to train a relation extraction classifier using Transformer and spaCy3.

23. ChatGPD Doesn't Exist: It's ChatGPT

ChatGPD is one of the most common misspellings of the viral language model developed by Open AI. The correct term is ChatGPT.

ChatGPD is one of the most common misspellings of the viral language model developed by Open AI. The correct term is ChatGPT.

24. Comprehensive Tutorial on Building a RAG Application Using LangChain

Learn how to use LangChain, the massively popular framework for building RAG systems.

Learn how to use LangChain, the massively popular framework for building RAG systems.

25. How To Build and Deploy an NLP Model with FastAPI: Part 1

Learn how to build an NLP model and deploy it with a fast web framework for building APIs called FastAPI.

Learn how to build an NLP model and deploy it with a fast web framework for building APIs called FastAPI.

26. LLMs Don't Understand Negation

LLMs (like GPT) are really bad at following negative instructions. The post includes a demonstration, practice takeaways (prompt engineering), and some thought

LLMs (like GPT) are really bad at following negative instructions. The post includes a demonstration, practice takeaways (prompt engineering), and some thought

27. Getting Started with the Weaviate Vector Search Engine

Everybody who works with data in any way shape or form knows that one of the most important challenges is searching for the correct answers to your questions. There is a whole set of excellent (open source) search engines available but there is one thing that they can’t do, search and related data based on context.

Everybody who works with data in any way shape or form knows that one of the most important challenges is searching for the correct answers to your questions. There is a whole set of excellent (open source) search engines available but there is one thing that they can’t do, search and related data based on context.

28. Text Classification With Zero Shot Learning

Zero-shot text classification using trnasformers and TARSclassifier.

Zero-shot text classification using trnasformers and TARSclassifier.

29. How to Fine Tune a 🤗 (Hugging Face) Transformer Model

How to fine-tune a Hugging Face Transformer model for Sequence Classification

How to fine-tune a Hugging Face Transformer model for Sequence Classification

30. ChatGPT is a Plague Upon Online Publications

Ethics are a crucial part of Artificial Intelligence, which is why tech like ChatGPT must go through gruelling tests of bias.

Ethics are a crucial part of Artificial Intelligence, which is why tech like ChatGPT must go through gruelling tests of bias.

31. How to detect plagiarism in text using Python

Intro

Intro

32. Stable Diffusion, Unstable Me: Text-to-image Generation

Text to image generation is not a new idea. What if, you feed <your name> to a state-of-the-art image generation model?

Text to image generation is not a new idea. What if, you feed <your name> to a state-of-the-art image generation model?

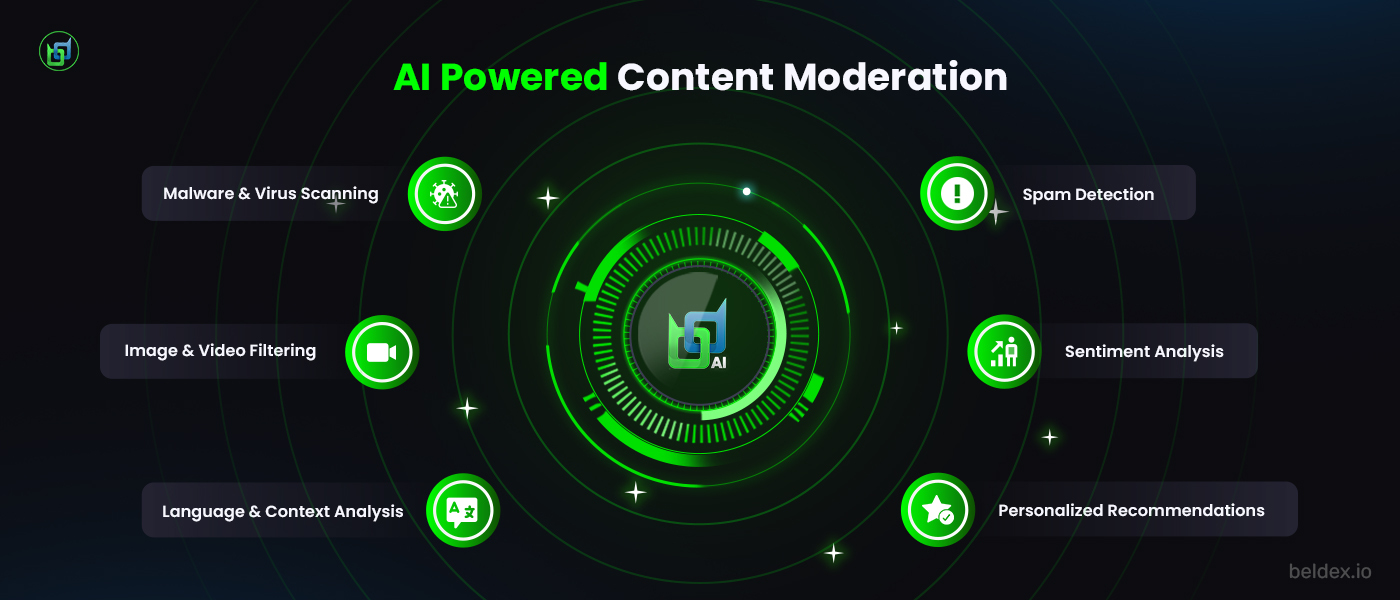

33. From Chatbots to Guardians of Data: How BChat Harnesses AI for Secure Messaging

AI is often associated with collecting personal data but what if AI helped protect user data? Read to know how BeldexAI protects your data on BChat.

AI is often associated with collecting personal data but what if AI helped protect user data? Read to know how BeldexAI protects your data on BChat.

34. AI Is Still Culturally Blind

AI moderates content for 75% of non-English internet users with broken cultural understanding. Discover the Cultural Intelligence Standard fixing this crisis.

AI moderates content for 75% of non-English internet users with broken cultural understanding. Discover the Cultural Intelligence Standard fixing this crisis.

35. How To Compare Documents Similarity using Python and NLP Techniques

In this post we are going to build a web application which will compare the similarity between two documents. We will learn the very basics of natural language processing (NLP) which is a branch of artificial intelligence that deals with the interaction between computers and humans using the natural language.

In this post we are going to build a web application which will compare the similarity between two documents. We will learn the very basics of natural language processing (NLP) which is a branch of artificial intelligence that deals with the interaction between computers and humans using the natural language.

36. How to Build a Multi-label NLP Classifier from Scratch

Attacking Toxic Comments Kaggle Competition Using Fast.ai

Attacking Toxic Comments Kaggle Competition Using Fast.ai

37. How To Build An n8n Workflow To Manage Different Databases and Scheduling Workflows

Learn how to build an n8n workflow that processes text, stores data in two databases, and sends messages to Slack.

Learn how to build an n8n workflow that processes text, stores data in two databases, and sends messages to Slack.

38. How To Build and Deploy an NLP Model with FastAPI: Part 2

Learn how to build an NLP model and deploy it with a fast web framework for building APIs called FastAPI.

Learn how to build an NLP model and deploy it with a fast web framework for building APIs called FastAPI.

39. wav2vec2 for Automatic Speech Recognition In Plain English

Plain English description of how Meta AI Research's wav2vec2 model works with respect to automatic speech recognition (ASR).

Plain English description of how Meta AI Research's wav2vec2 model works with respect to automatic speech recognition (ASR).

40. Sentiment Analysis with Python and AssemblyAI’s Speech Recognition API

If you’ve never heard of Sentiment Analysis, I hadn’t either before I stumbled on it in the documentation. That’s why I thought it would be interesting to try.

If you’ve never heard of Sentiment Analysis, I hadn’t either before I stumbled on it in the documentation. That’s why I thought it would be interesting to try.

41. 10 Best Reddit Datasets for NLP and Other ML Projects

In this post, I wanted to share a Reddit dataset list that gained a lot of traction on social media when it was first posted.

In this post, I wanted to share a Reddit dataset list that gained a lot of traction on social media when it was first posted.

42. Text Embedding Explained: How AI Understands Words

Large language models are a specific type of machine learning-based algorithm that understand and can generate language

Large language models are a specific type of machine learning-based algorithm that understand and can generate language

43. Positional Embedding: The Secret behind the Accuracy of Transformer Neural Networks

An article explaining the intuition behind the “positional embedding” in transformer models from the renowned research paper - “Attention Is All You Need”.

An article explaining the intuition behind the “positional embedding” in transformer models from the renowned research paper - “Attention Is All You Need”.

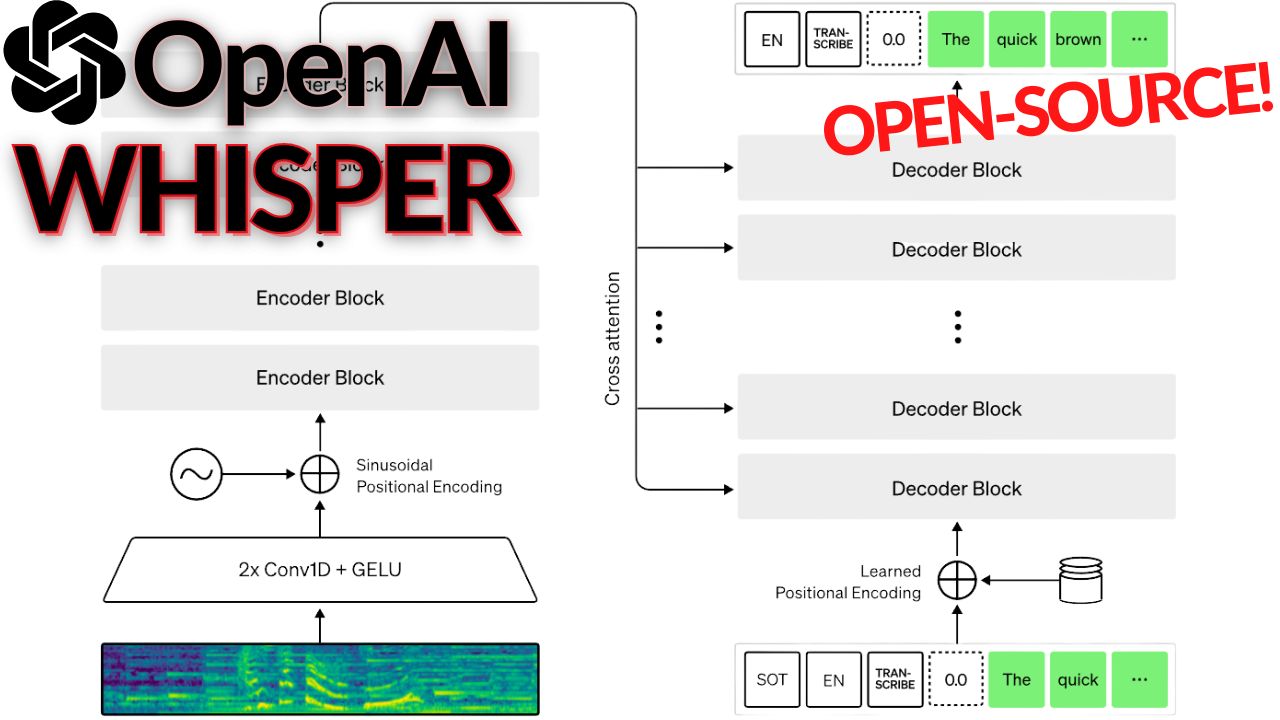

44. What is OpenAI's Whisper Model?

Have you ever dreamed of a good transcription tool that would accurately understand what you say and write it down? Not like the automatic YouTube translation tools… I mean, they are good but far from perfect. Just try it out and turn the feature on for the video, and you’ll see what I’m talking about.

Have you ever dreamed of a good transcription tool that would accurately understand what you say and write it down? Not like the automatic YouTube translation tools… I mean, they are good but far from perfect. Just try it out and turn the feature on for the video, and you’ll see what I’m talking about.

45. Everything You Need to Know About Google BERT

Google BERT will help you to kickstart your NLP journey by showing you how the transformer’s encoder and decoder work.

Google BERT will help you to kickstart your NLP journey by showing you how the transformer’s encoder and decoder work.

46. How to Remove Gender Bias in Machine Learning Models: NLP and Word Embeddings

Most word embeddings used are glaringly sexist, let us look at some ways to de-bias such embeddings.

Most word embeddings used are glaringly sexist, let us look at some ways to de-bias such embeddings.

47. How to Get Started With Embeddings

Getting started with embeddings using open-source tools.

Getting started with embeddings using open-source tools.

48. Training Your Own Text Classification Model From Scratch With Tensorflow Is As Easy As ABC

49. Can GPT-3 Finish Writing My Zombie Novel?

My biggest worry (and excitement) is that AI will progress enough to become more creative than humans.

My biggest worry (and excitement) is that AI will progress enough to become more creative than humans.

50. How Far Are We From a Real World Jarvis?

A Brief History of NLP Applications in the 21st Century

A Brief History of NLP Applications in the 21st Century

51. Spoken Language Understanding (SLU) vs. Natural Language Understanding (NLU)

Differences between SLU (Spoken Language Understanding) and NLU (Natural Language Understanding). Top FOSS and paid engines and their approach to SLU.

Differences between SLU (Spoken Language Understanding) and NLU (Natural Language Understanding). Top FOSS and paid engines and their approach to SLU.

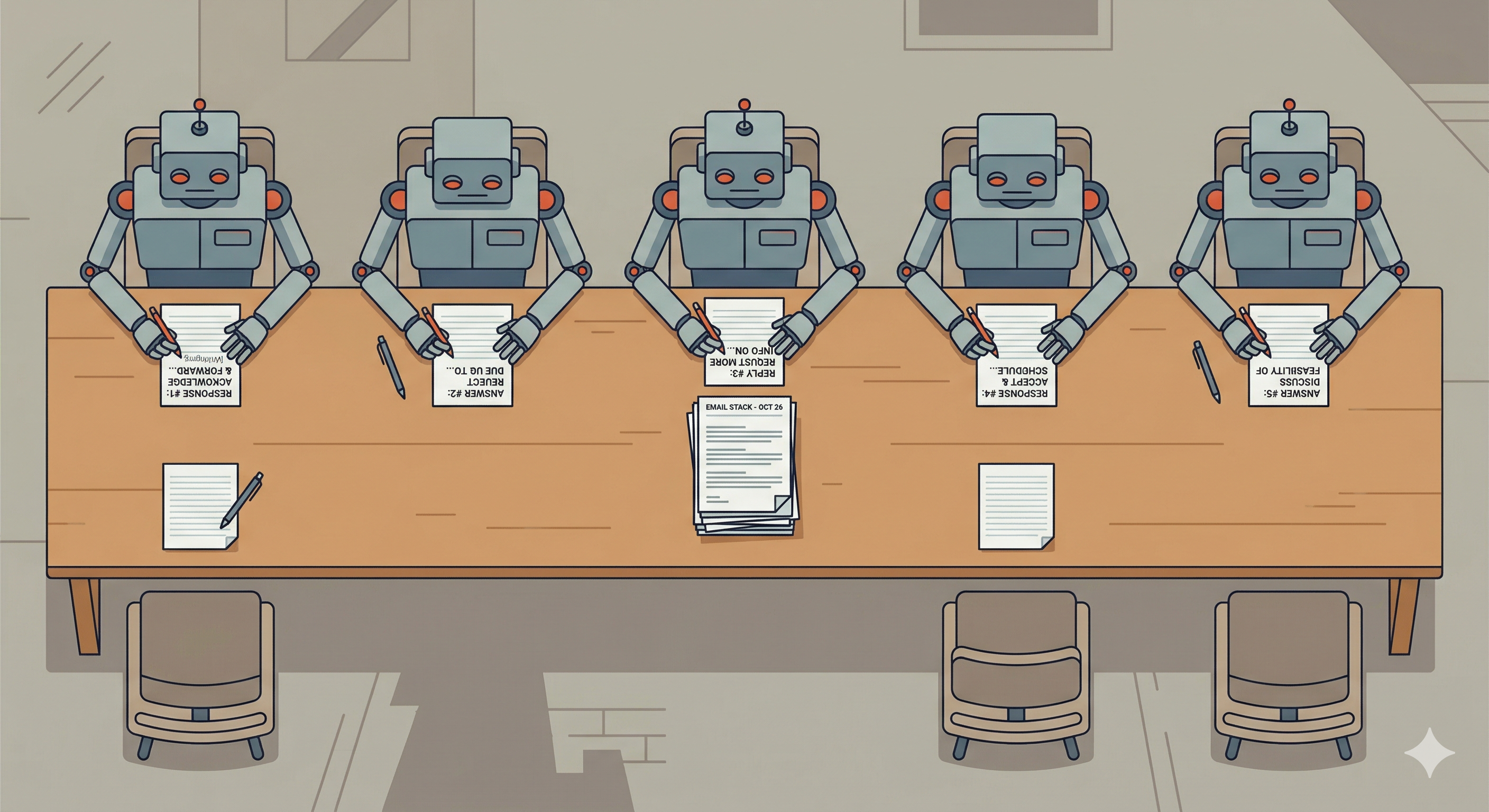

52. A Subreddit Where Only AI Chatbots Can Post

There’s a subreddit with a called r/SubSimulator that took three years in the making and which is fully powered by bots

There’s a subreddit with a called r/SubSimulator that took three years in the making and which is fully powered by bots

53. How to Call ChatGPT with OpenAI's APIs

Learn how Plivo is exploring the potential of ChatGPT to help automate text messaging and voice calls using OpenAI's APIs.

Learn how Plivo is exploring the potential of ChatGPT to help automate text messaging and voice calls using OpenAI's APIs.

54. ChatGPT Offers 5 Multi-Million Dollar Business Ideas Built With ChatGPT

I wanted to ask ChatGPT about ideas worth millions of dollars. Here are the answers:

I wanted to ask ChatGPT about ideas worth millions of dollars. Here are the answers:

55. Conferencing and The Art of 'Paper Blitzing'

There are soooo many papers in the field of machine learning, natural language processing nowadays. I’ll share the paper blitz method to "read them all".

There are soooo many papers in the field of machine learning, natural language processing nowadays. I’ll share the paper blitz method to "read them all".

56. These Politicians Are Using AI to Write Speeches

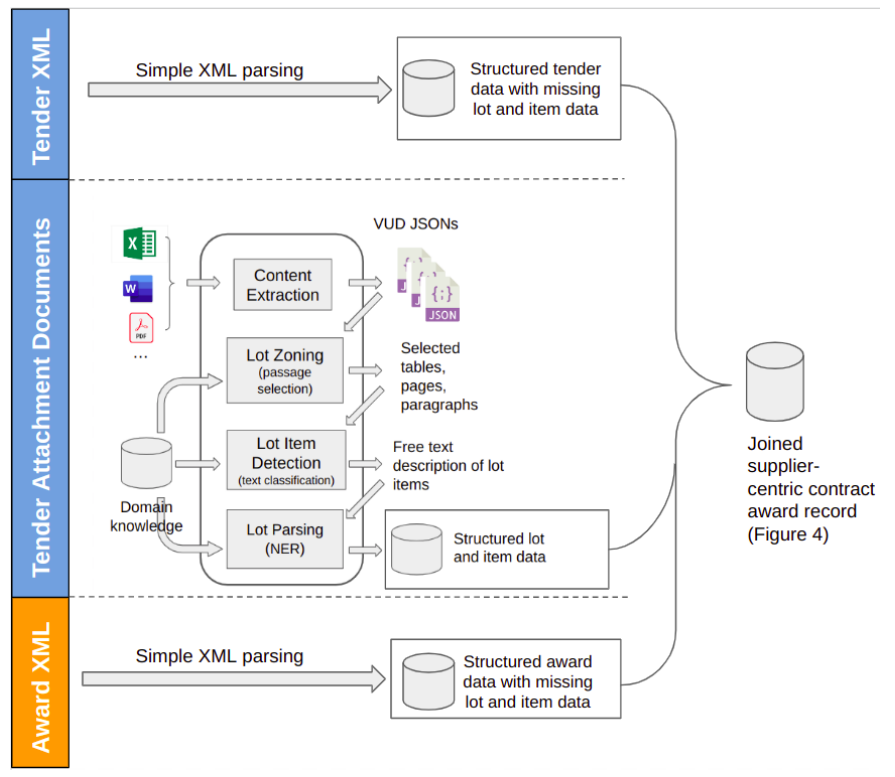

Generative AI tools, such as Open AI’s ChatGPT, have become massively popular, even outside the world of tech.

Generative AI tools, such as Open AI’s ChatGPT, have become massively popular, even outside the world of tech.

57. AI Won't Replace Me Yet, But It Might Prove I Was Never That Original

AI won’t replace me yet. But it might prove I was never that original. A witty, unsettling look at formulaic writing in the age of large language models.

AI won’t replace me yet. But it might prove I was never that original. A witty, unsettling look at formulaic writing in the age of large language models.

58. Natural Language Inference and NLP

How it can give us something we hitherforto though cobblers: a computer-you-can-ask-anything!

How it can give us something we hitherforto though cobblers: a computer-you-can-ask-anything!

59. Scratching the Singularity Surface: The Past, Present and Mysterious Future of LLMs

A brief overview of Natural Language Understanding industry and out current point of LLMs achieving human level reasoning abilities and becoming an AGI

A brief overview of Natural Language Understanding industry and out current point of LLMs achieving human level reasoning abilities and becoming an AGI

60. Large Language Models: Exploring Transformers - Part 2

Transformer models are a type of deep learning neural network model that are widely used in Natural Language Processing (NLP) tasks.

Transformer models are a type of deep learning neural network model that are widely used in Natural Language Processing (NLP) tasks.

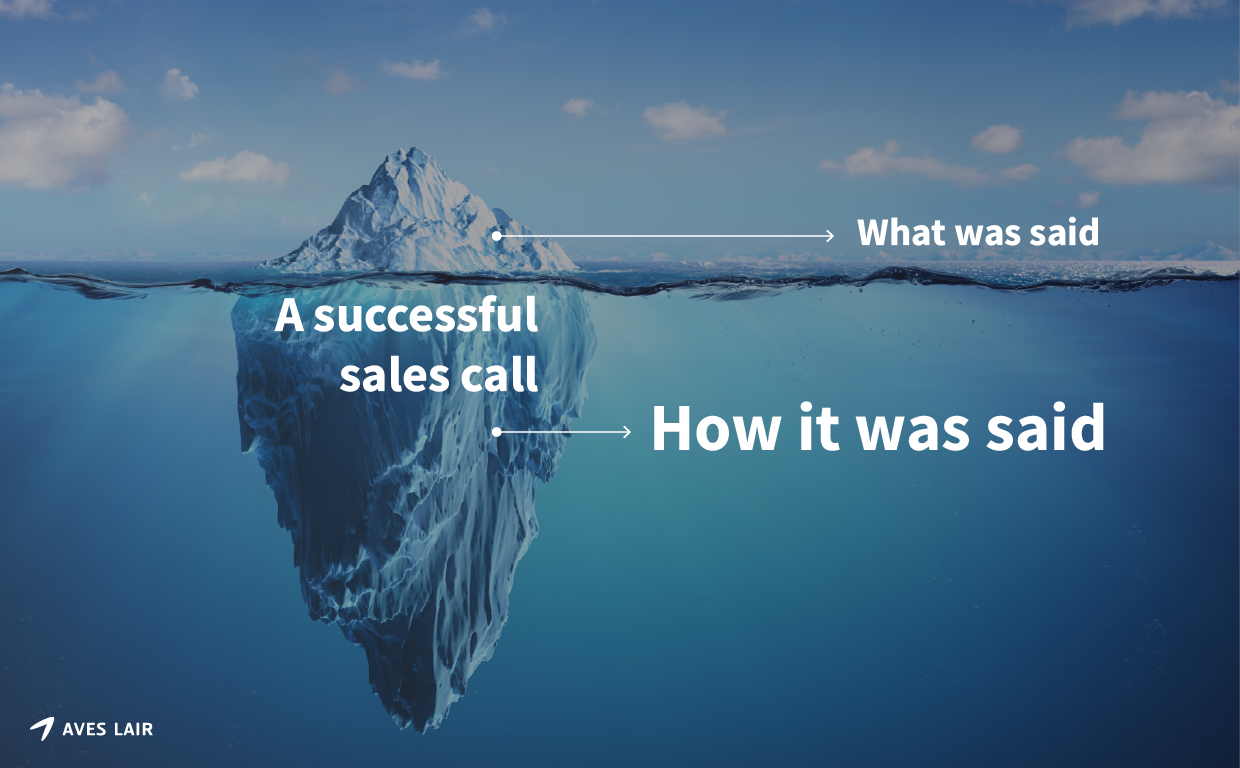

61. How to Use ChatGPT for Effective Sales Messaging

ChatGPT is an ideal tool for crafting sales messages that resonate with potential customers.

ChatGPT is an ideal tool for crafting sales messages that resonate with potential customers.

62. A Deep Learning Overview: NLP vs CNN

Artificial Intelligence is a lot more than a tech buzzword these days. This technology has disrupted almost every industry within a decade. Every company wants to implement this cutting edge technology in its system to cut costs, save time, and make the overall process more efficient with automation.

Artificial Intelligence is a lot more than a tech buzzword these days. This technology has disrupted almost every industry within a decade. Every company wants to implement this cutting edge technology in its system to cut costs, save time, and make the overall process more efficient with automation.

63. ReactJS and the Future of AI-Powered Web Components: What Will the Future Look Like?

This article explores the benefits of using ReactJS to create intelligent web interfaces, including better user experiences and more customization possibilities

This article explores the benefits of using ReactJS to create intelligent web interfaces, including better user experiences and more customization possibilities

64. Inside Transformers: The Hidden Tech Behind LLM's and Chatbots like ChatGPT

Transformers explained: The secret technology behind ChatGPT and how it’s reshaping AI chatbots worldwide.

Transformers explained: The secret technology behind ChatGPT and how it’s reshaping AI chatbots worldwide.

65. ELIZA: The Accidental Chatbot That Shaped AI History

ELIZA, created by Joseph Weizenbaum, was never meant to be a chatbot. Learn how this research tool’s accidental release shaped the AI world for decades.

ELIZA, created by Joseph Weizenbaum, was never meant to be a chatbot. Learn how this research tool’s accidental release shaped the AI world for decades.

66. Natural Language Processing Is a Revolutionary Leap for Tech and Humanity: An Explanation

Explore the fascinating world of Natural Language Processing - its history, growth, impact, future, and potential challenges. Dive into NLP now!

Explore the fascinating world of Natural Language Processing - its history, growth, impact, future, and potential challenges. Dive into NLP now!

67. What Kind of Scientist Are You?

Data science came a long way from the early days of Knowledge Discovery in Databases (KDD) and Very Large Data Bases (VLDB) conferences.

Data science came a long way from the early days of Knowledge Discovery in Databases (KDD) and Very Large Data Bases (VLDB) conferences.

68. Getting Started with Natural Language Processing: US Airline Sentiment Analysis

By: Comet.ml and Niko Laskaris, customer facing data scientist, Comet.ml

By: Comet.ml and Niko Laskaris, customer facing data scientist, Comet.ml

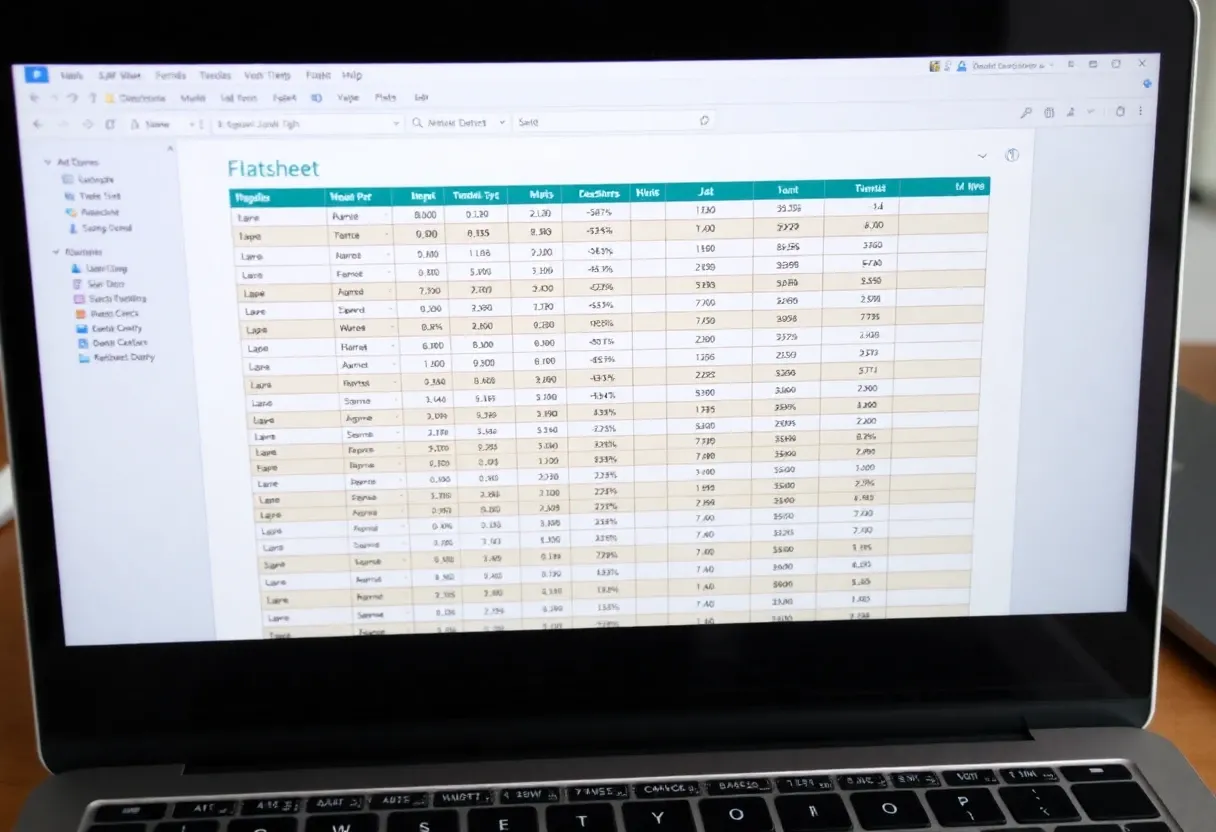

69. How to Enhance Your dbt Project With Large Language Models

Automatically solve typical Natural Language Processing tasks for your text data using LLM for as cheap as $10 per 1M rows, staying in your dbt environment

Automatically solve typical Natural Language Processing tasks for your text data using LLM for as cheap as $10 per 1M rows, staying in your dbt environment

70. ChatGPT Writes The Great Gatsby Set in a Zombie Apocalypse

I told OpenAI's ChatGPT model to write The Great Gatsby, but with zombies. Here's what happened...

I told OpenAI's ChatGPT model to write The Great Gatsby, but with zombies. Here's what happened...

71. How to Build a Plagiarism Checker Using Machine Learning

Using machine learning, we can build our own plagiarism checker that searches a vast database for stolen content. In this article, we’ll do exactly that.

Using machine learning, we can build our own plagiarism checker that searches a vast database for stolen content. In this article, we’ll do exactly that.

72. How to Perform Data Augmentation in NLP Projects

In machine learning, it is crucial to have a large amount of data in order to achieve strong model performance. Using a method known as data augmentation, you can create more data for your machine learning project. Data augmentation is a collection of techniques that manage the process of automatically generating high-quality data on top of existing data.

In machine learning, it is crucial to have a large amount of data in order to achieve strong model performance. Using a method known as data augmentation, you can create more data for your machine learning project. Data augmentation is a collection of techniques that manage the process of automatically generating high-quality data on top of existing data.

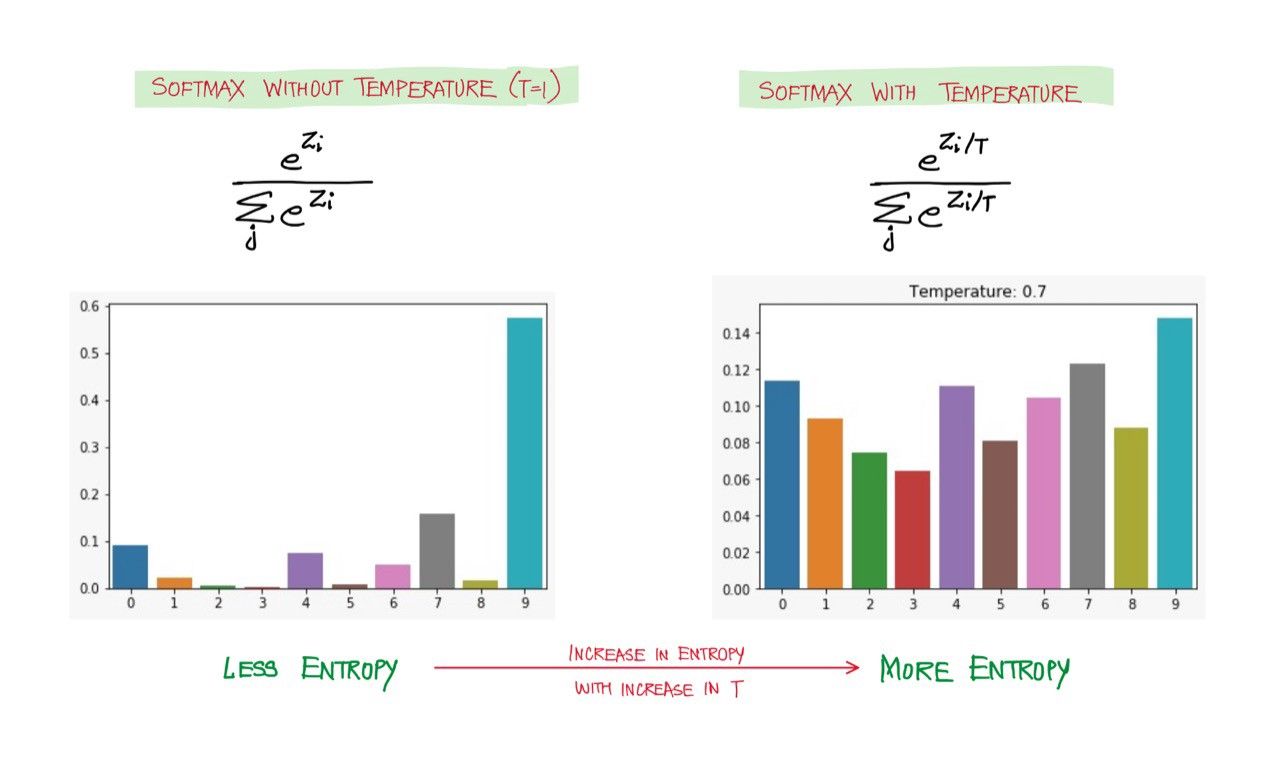

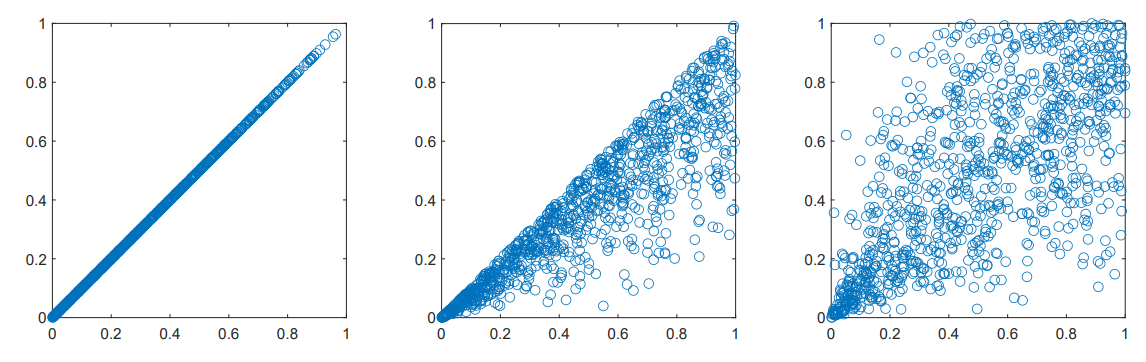

73. Softmax Temperature and Prediction Diversity

This article is about tweaking the softmax distribution to control how diverse and novel the predictions are.

This article is about tweaking the softmax distribution to control how diverse and novel the predictions are.

74. LangChain Promised an Easy AI Interface for MySQL—Here’s What It Really Took

Learn how I built a multi-stage Langchain agent for MySQL. This article details my journey, challenges, and key steps in creating an intelligent database intera

Learn how I built a multi-stage Langchain agent for MySQL. This article details my journey, challenges, and key steps in creating an intelligent database intera

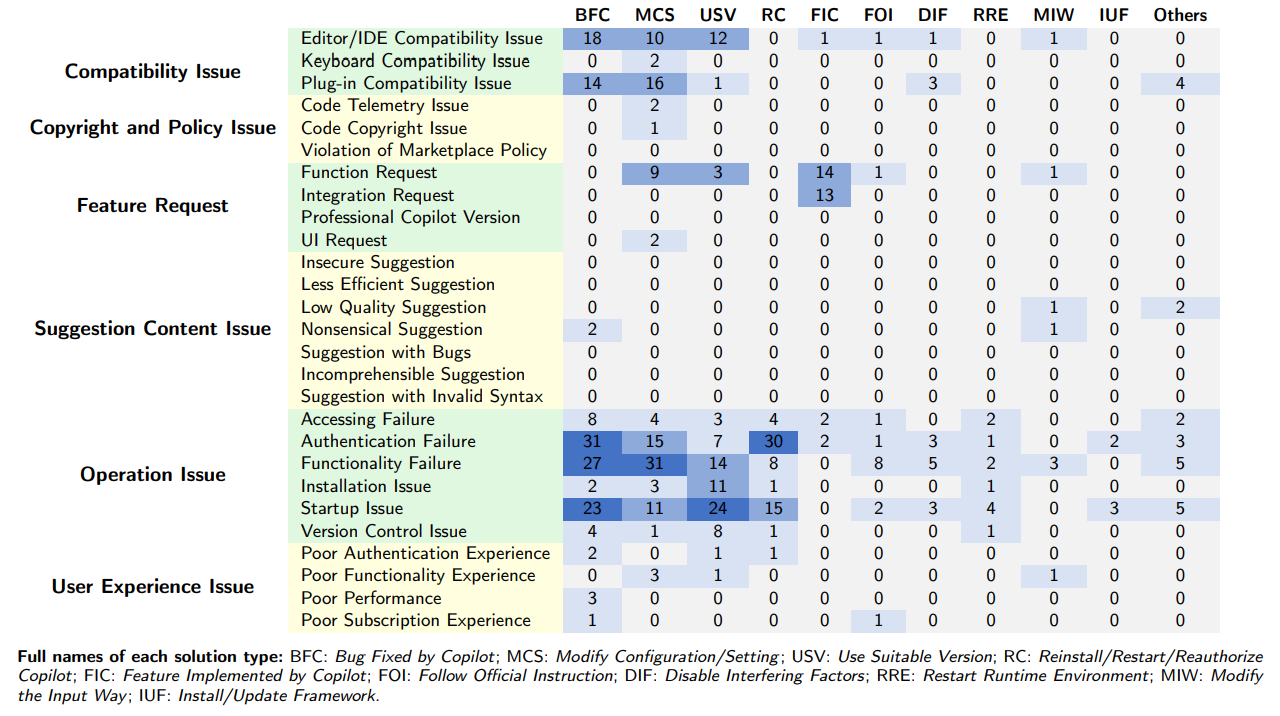

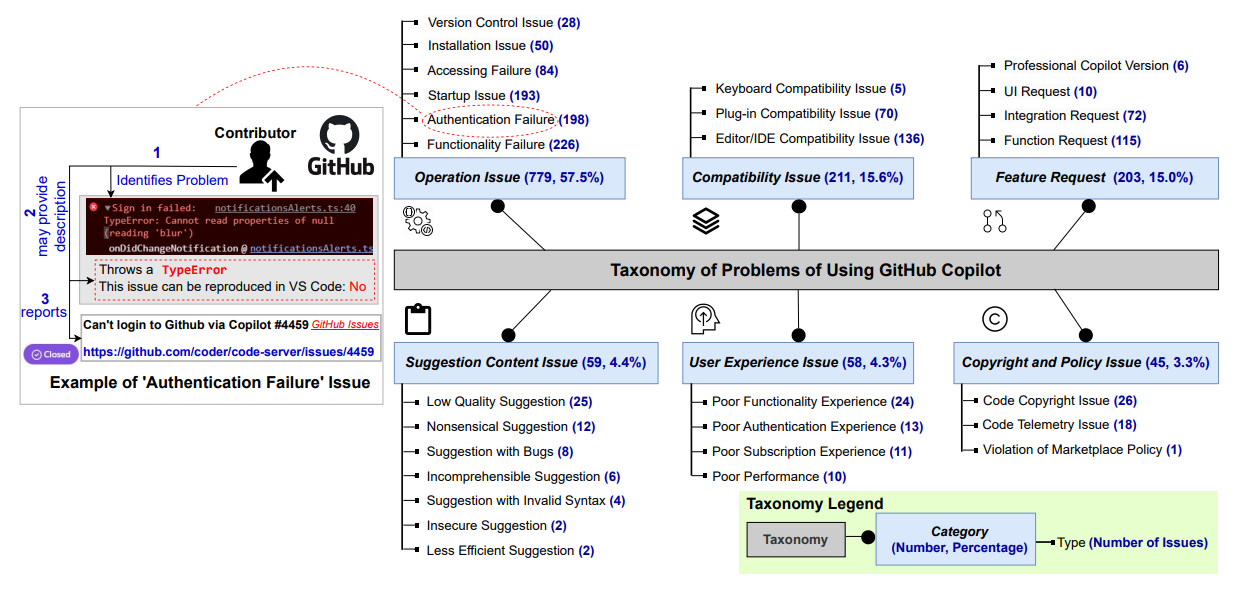

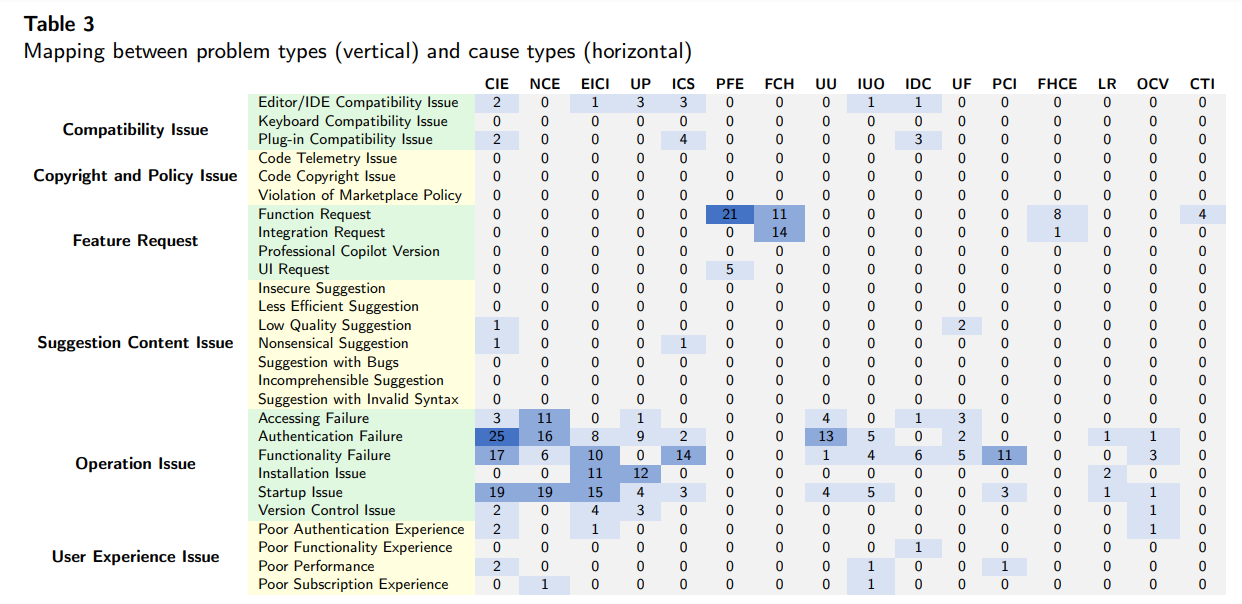

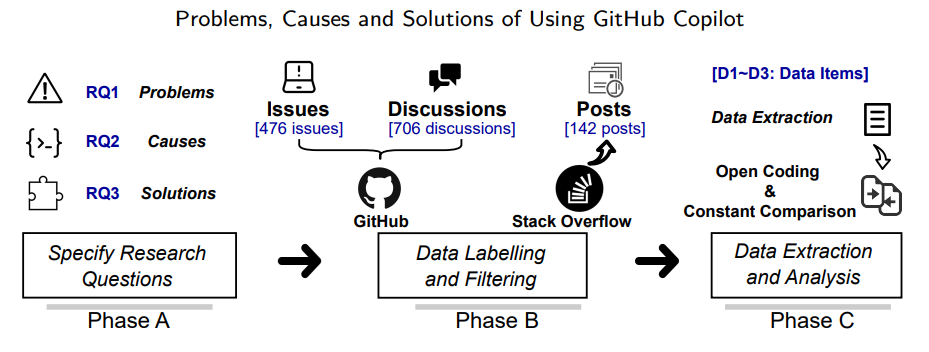

75. Common Problems with GitHub Copilot (And How to Solve Them)

An in-depth analysis of 1,355 GitHub Copilot issues reveals key problems, causes, and solutions—and what Copilot’s team should improve next.

An in-depth analysis of 1,355 GitHub Copilot issues reveals key problems, causes, and solutions—and what Copilot’s team should improve next.

76. Behind the Scenes of an OCR Receipt and Invoice API Engine

Find out how an accurate, adaptive and multi-lingual receipt OCR API engine works!

Find out how an accurate, adaptive and multi-lingual receipt OCR API engine works!

77. How to Build a Python Interpreter Inside ChatGPT

You don't need an interpreter anymore!

You don't need an interpreter anymore!

78. A Beginner Guide to Incorporating Tabular Data via HuggingFace Transformers

Transformer-based models are a game-changer when it comes to using unstructured text data. As of September 2020, the top-performing models in the General Language Understanding Evaluation (GLUE) benchmark are all BERT transformer-based models. At Georgian, we often encounter scenarios where we have supporting tabular feature information and unstructured text data. We found that by using the tabular data in these models, we could further improve performance, so we set out to build a toolkit that makes it easier for others to do the same.

Transformer-based models are a game-changer when it comes to using unstructured text data. As of September 2020, the top-performing models in the General Language Understanding Evaluation (GLUE) benchmark are all BERT transformer-based models. At Georgian, we often encounter scenarios where we have supporting tabular feature information and unstructured text data. We found that by using the tabular data in these models, we could further improve performance, so we set out to build a toolkit that makes it easier for others to do the same.

79. Meet Lettria: Our Place in the AI Revolution Begins with NLP

While natural language processing has received tons of attention in the field of AI, generative AI is also making great strides.

While natural language processing has received tons of attention in the field of AI, generative AI is also making great strides.

80. How AI Has Changed Natural Language Processing

How natural language processing has been revolutionized by Artificial Intelligence and how this is currently affecting businesses.

How natural language processing has been revolutionized by Artificial Intelligence and how this is currently affecting businesses.

81. Power Virtual Agents: Use GPT-3.5 to Help With Trigger Phrases and Custom Entities

Use OpenAI Chat-GPT to help generate trigger phrases and content entities for power virtual agents.

Use OpenAI Chat-GPT to help generate trigger phrases and content entities for power virtual agents.

82. A Complete(ish) Guide to Python Tools You Can Use To Analyse Text Data

Exploratory data analysis is one of the most important parts of any machine learning workflow and Natural Language Processing is no different.

Exploratory data analysis is one of the most important parts of any machine learning workflow and Natural Language Processing is no different.

83. The Main Patterns in Generative AI Lifecycles

Discover the evolution and integration of generative AI in enterprise environments: from rule-based systems to large-scale real-time content generation.

Discover the evolution and integration of generative AI in enterprise environments: from rule-based systems to large-scale real-time content generation.

84. On AI Winters and What it Means for the Future

The full history of AI winters is reviewed in great detail. The comprehensive coverage that we didn't find anywhere else.

The full history of AI winters is reviewed in great detail. The comprehensive coverage that we didn't find anywhere else.

85. B2B Sales Is Broken. New Tech Can Help

Closing b2b deals is difficult. People are not buying aggressive selling techniques. Existing sales softwares aren't helping. New tech can help.

Closing b2b deals is difficult. People are not buying aggressive selling techniques. Existing sales softwares aren't helping. New tech can help.

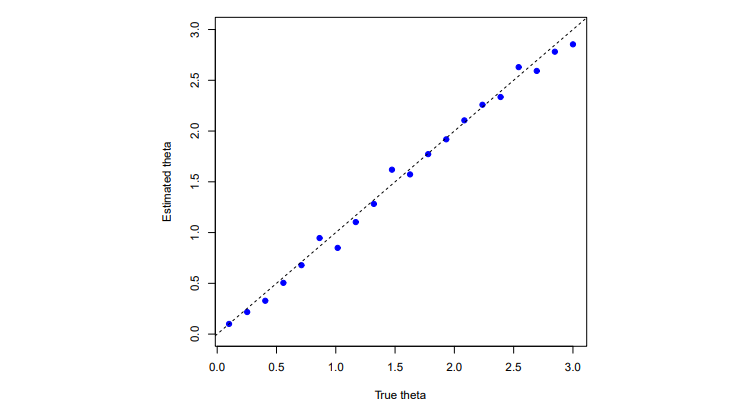

86. Automated Essay Scoring Using Large Language Models

Explore innovations in Automated Essay Scoring (AES), using models like Longformer and multi-task learning to address challenges in cohesion, grammar, and more.

Explore innovations in Automated Essay Scoring (AES), using models like Longformer and multi-task learning to address challenges in cohesion, grammar, and more.

87. How I Built a Demo App to Listen to 5000+ Hours of Joe Rogan With the Help of AI

I’m consuming 5500+ hours of Joe Rogan with the help of AI

I’m consuming 5500+ hours of Joe Rogan with the help of AI

88. 5 Case Studies that Prove Bots Are Here to Help Businesses Scale

It was about three years ago that Microsoft CEO, Satya Nadella, was quoted stating “Bots are the new apps,” during a 3-hour keynote to kick off the company’s Build conference. That statement has probably never been truer, especially since NLP bots Enterprise bots have appeared on the scene.

It was about three years ago that Microsoft CEO, Satya Nadella, was quoted stating “Bots are the new apps,” during a 3-hour keynote to kick off the company’s Build conference. That statement has probably never been truer, especially since NLP bots Enterprise bots have appeared on the scene.

89. This Entire Article Was Written by ChatGPT's Grandfather

As a historical reference, here is what ChatGPT’s grandfather, GPT2 was able to produce all the way back in 2020. It’ll be interesting to compare it to what Cha

As a historical reference, here is what ChatGPT’s grandfather, GPT2 was able to produce all the way back in 2020. It’ll be interesting to compare it to what Cha

90. Artificial Intelligence is the Future, and It's Already Here

By 2030, artificial intelligence is projected to contribute at least $15.7 trillion to the global economy.

By 2030, artificial intelligence is projected to contribute at least $15.7 trillion to the global economy.

91. Analyzing Sentiment Of Tweets Is Really Easy If You Follow This Tutorial

Hello, Guys,

Hello, Guys,

92. Mistakes of Microsoft's New Bing: Can ChatGPT-like Generative Models Guarantee Factual Accuracy?

We uncover several factual mistakes in Microsoft’s new Bing and Google’s Bard demonstrations, suggesting limitations in conversational AI models like ChatGPT.

We uncover several factual mistakes in Microsoft’s new Bing and Google’s Bard demonstrations, suggesting limitations in conversational AI models like ChatGPT.

93. Tools to Bypass AI Detection in 2024

Explore AI in 2024 that bypasses detection systems, ensuring AI-generated content appears human-written.

Explore AI in 2024 that bypasses detection systems, ensuring AI-generated content appears human-written.

94. Analyzing Customer Reviews with Natural Language Processing

In this article, we build a machine-learning model to guess the tone of customer reviews based on historical data.

In this article, we build a machine-learning model to guess the tone of customer reviews based on historical data.

95. How to Play Chess Using a GPT-2 Model

OpenAI’s transformer-based language model GPT-2 definitely lives up to the hype. Following the natural evolution of Artificial Intelligence (AI), this generative language model drew a lot of attention by engaging in interviews and appearing in the online text adventure game AI Dungeon.

OpenAI’s transformer-based language model GPT-2 definitely lives up to the hype. Following the natural evolution of Artificial Intelligence (AI), this generative language model drew a lot of attention by engaging in interviews and appearing in the online text adventure game AI Dungeon.

96. How I Got to Top 24% on a Kaggle Text Classification Challenge Without Writing a Single Line of Code

In this post, we will see how to use the platform and get a submission that achieves a respectable 83% Accuracy on the test set.

In this post, we will see how to use the platform and get a submission that achieves a respectable 83% Accuracy on the test set.

97. A New AI Tool Builds Knowledge Graphs So Good, They Could Rewire Scientific Discovery

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

98. Natural Language Processing and How it Could Improve Employee Engagement

Internal communication and employee engagement are key when it comes to the smooth functioning of an organization and building a reputation, especially in today’s age when more and more people are opting to work remotely and teams are scattered across the world.

Internal communication and employee engagement are key when it comes to the smooth functioning of an organization and building a reputation, especially in today’s age when more and more people are opting to work remotely and teams are scattered across the world.

99. Tired of Sifting Through Science Papers? This AI Knowledge Graph Does It for You

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

100. 8 of the Best AI Chatbots for 2023

Thanks to artificial intelligence and machine learning, chatbots are becoming a practical tool in the business world. This is good news for many companies, as chatbots can increase engagement, revenue and ROI. The potential of artificial intelligence is there to be harnessed, and AI-powered chatbots are examples of the effective usage of the technology. However, choosing a chatbot can be overwhelming. Let's take a look at the most popular AI chatbots currently on the market.

Thanks to artificial intelligence and machine learning, chatbots are becoming a practical tool in the business world. This is good news for many companies, as chatbots can increase engagement, revenue and ROI. The potential of artificial intelligence is there to be harnessed, and AI-powered chatbots are examples of the effective usage of the technology. However, choosing a chatbot can be overwhelming. Let's take a look at the most popular AI chatbots currently on the market.

101. The Noonification: Our World Has Become a WWE Stage (8/31/2023)

8/31/2023: Top 5 stories on the Hackernoon homepage!

8/31/2023: Top 5 stories on the Hackernoon homepage!

102. Innovation Opportunities in Data, AI, AR, Robots, Biotech, More [Overview]

Digital Technology is everywhere and it is redefining how we live, communicate, and work. Most importantly, it accelerates how we innovate.

Digital Technology is everywhere and it is redefining how we live, communicate, and work. Most importantly, it accelerates how we innovate.

103. Your Guide to Natural Language Processing (NLP)

Everything we express (either verbally or in written) carries huge amounts of information. The topic we choose, our tone, our selection of words, everything adds some type of information that can be interpreted and value extracted from it. In theory, we can understand and even predict human behaviour using that information.

Everything we express (either verbally or in written) carries huge amounts of information. The topic we choose, our tone, our selection of words, everything adds some type of information that can be interpreted and value extracted from it. In theory, we can understand and even predict human behaviour using that information.

104. Content-Based Recommender Using Natural Language Processing (NLP)

A guide to build a movie recommender model based on content-based NLP: When we provide ratings for products and services on the internet, all the preferences we express and data we share (explicitly or not), are used to generate recommendations by recommender systems. The most common examples are that of Amazon, Google and Netflix.

A guide to build a movie recommender model based on content-based NLP: When we provide ratings for products and services on the internet, all the preferences we express and data we share (explicitly or not), are used to generate recommendations by recommender systems. The most common examples are that of Amazon, Google and Netflix.

105. ChatGPT Is Making the Internet More Fun and Less Confusing

This is a short story about the rise of ChatGPT :)

I hope you like it.

This is a short story about the rise of ChatGPT :)

I hope you like it.

106. Scientists Built a Knowledge Graph for Materials—And You Can Actually Use It

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

107. Is it Ethical for Media Outlets to Use AI to Write Stories?

If media outlets are hiding their usage of AI-generated content, is it because this is ethically wrong?

If media outlets are hiding their usage of AI-generated content, is it because this is ethically wrong?

108. Importance of Sentiment Analysis as a Key Marketing Tool

Sentiment Analytics can help your marketing team to understand the sentiment of your target audience and identify any potential issues or concerns.

Sentiment Analytics can help your marketing team to understand the sentiment of your target audience and identify any potential issues or concerns.

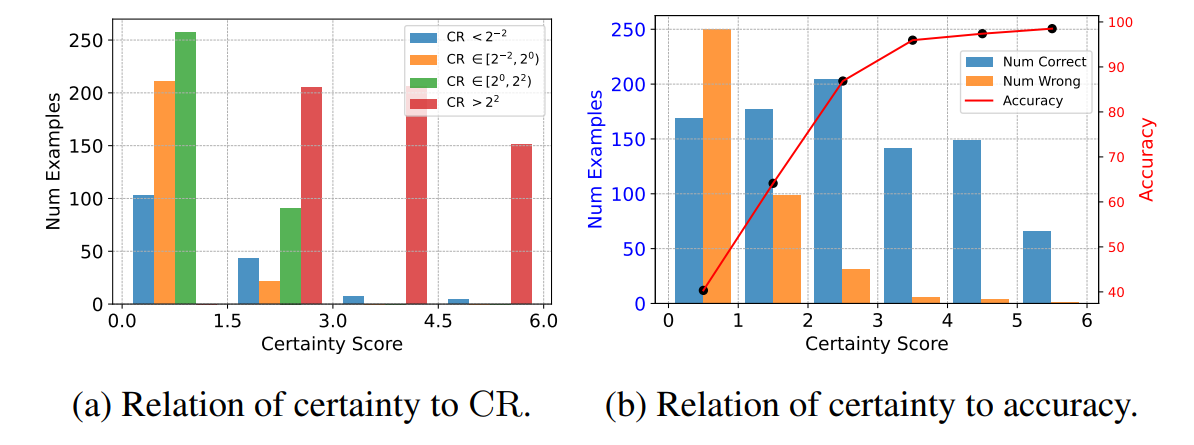

109. How to Make Your LLM Fully Utilize the Context

A data-driven approach that introduces a novel pipeline to synthesize a novel dataset to train LLMs to cleverly use long contexts.

A data-driven approach that introduces a novel pipeline to synthesize a novel dataset to train LLMs to cleverly use long contexts.

110. AI Model Reads Thousands of Studies, Nails Battery Science Better Than Expected

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

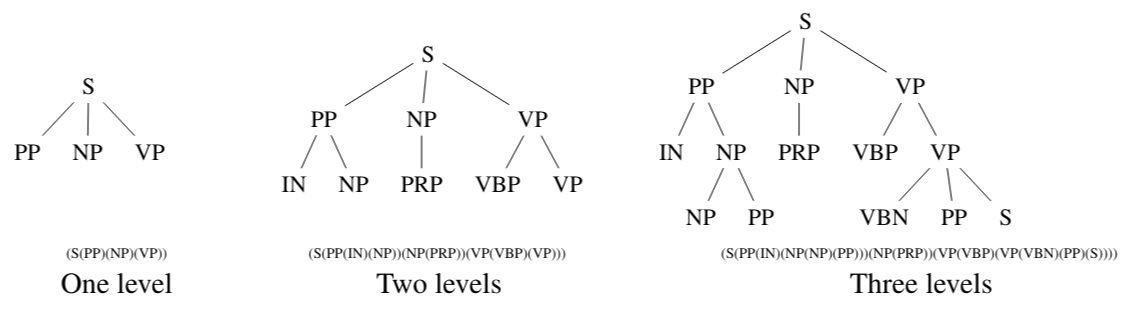

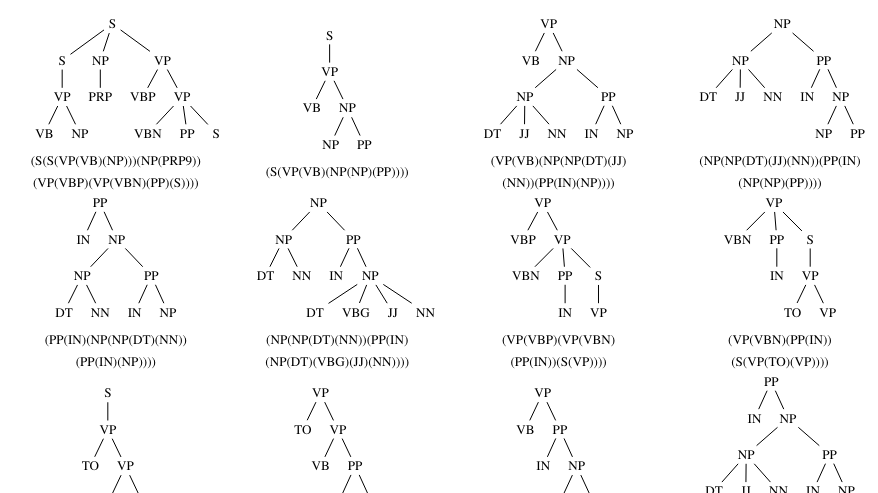

111. Your Writing Has a Fingerprint—And This Cutting Edge AI Model Can Identify It

Using grammatical structures from parsed text, this study explores a new method for detecting authorship, improving accuracy in AI and fake text identification.

Using grammatical structures from parsed text, this study explores a new method for detecting authorship, improving accuracy in AI and fake text identification.

112. Natural Language Processing: Explaining BERT to Business People

<TLDR> BERT is certainly a significant step forward in the context of NLP. Business activities such as topic detection and sentiment analysis will be much easier to create and execute, and the results much more accurate. But how did you get to BERT, and how exactly does the model work? Why is it so powerful? Last but not least, what benefits it can bring to the business, and our decision to integrate it into the sandsiv+ Customer Experience platform.</TLDR>

<TLDR> BERT is certainly a significant step forward in the context of NLP. Business activities such as topic detection and sentiment analysis will be much easier to create and execute, and the results much more accurate. But how did you get to BERT, and how exactly does the model work? Why is it so powerful? Last but not least, what benefits it can bring to the business, and our decision to integrate it into the sandsiv+ Customer Experience platform.</TLDR>

113. Revamping Long Short-Term Memory Networks: XLSTM for Next-Gen AI

XLSTMs, with novel sLSTM and mLSTM blocks, aim to overcome LSTMs' limitations and potentially surpass transformers in building next-gen language models.

XLSTMs, with novel sLSTM and mLSTM blocks, aim to overcome LSTMs' limitations and potentially surpass transformers in building next-gen language models.

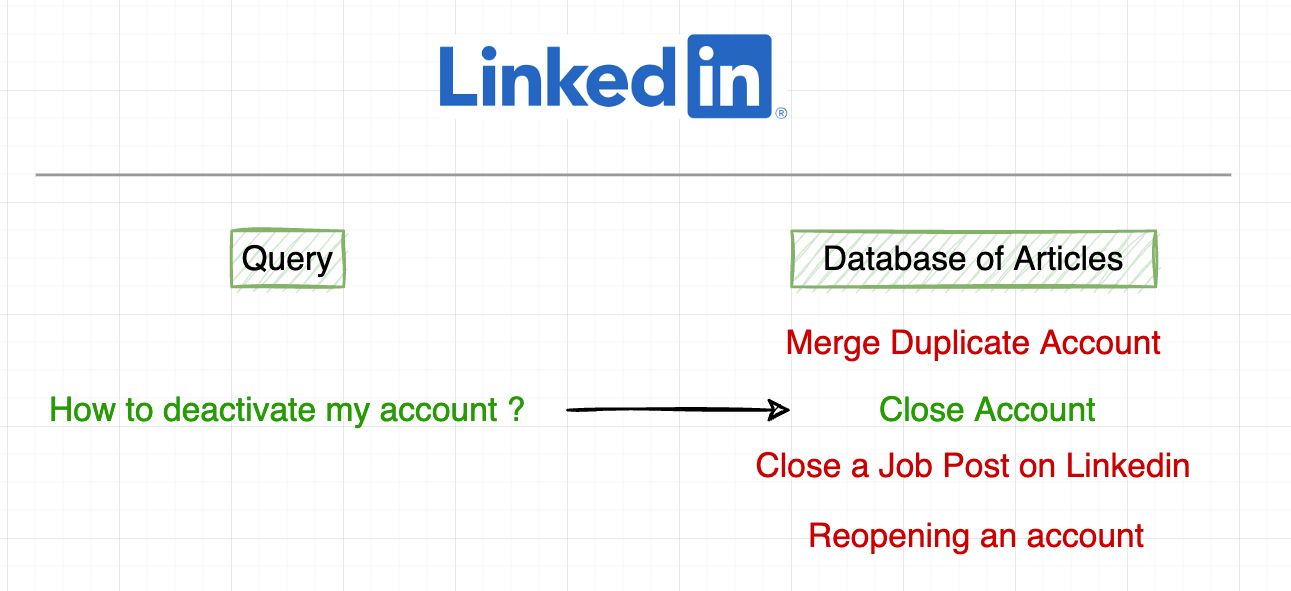

114. How LinkedIn Uses NLP to Design their Help Search System

This is the summary and my key takeaways from the original post by LinkedIn on how NLP is being used (as of 2019) in designing its Help Search System.

This is the summary and my key takeaways from the original post by LinkedIn on how NLP is being used (as of 2019) in designing its Help Search System.

115. Data and Analytics Predictions for 2020 [A Top 5 List]

It would be no exaggeration to say that the capacity of technology to advance itself is proceeding at a faster rate than our ability to process these changes all at the same time. This is both amazing and alarming in the same breath.

It would be no exaggeration to say that the capacity of technology to advance itself is proceeding at a faster rate than our ability to process these changes all at the same time. This is both amazing and alarming in the same breath.

116. Scientists Built a Smarter, Sharper Materials Graph by Teaching AI to Double-Check Its Work

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

117. The Rise of Text-to-Image Editing: How NLP is Changing Visual Content Creation

Discover how AI text-to-image editing uses natural language to simplify visual content creation, boosting speed, creativity, and productivity.

Discover how AI text-to-image editing uses natural language to simplify visual content creation, boosting speed, creativity, and productivity.

118. DIY ChatGPT Plugin Connector

How I connected an external app to ChatGPT

How I connected an external app to ChatGPT

119. Using AI to Build a Monte Carlo Simulation

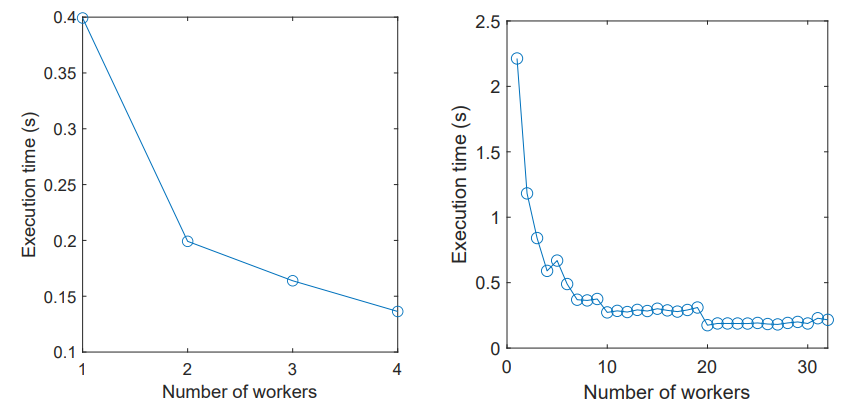

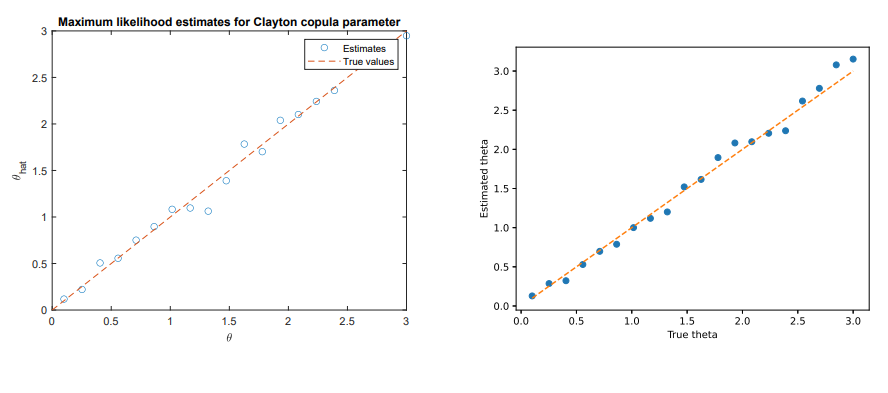

ChatGPT helps build a full Monte Carlo simulation for copula modeling—no human coding needed, just natural language and math prompts.

ChatGPT helps build a full Monte Carlo simulation for copula modeling—no human coding needed, just natural language and math prompts.

120. What is GPT-3 and Why Do We Need it?

GPT has become a hot topic over the last few years, and with good reason. It provides a general-purpose “text in, text out” interface

GPT has become a hot topic over the last few years, and with good reason. It provides a general-purpose “text in, text out” interface

121. Scientists Built a Smart Filter for Science Papers—and It’s Cleaning Up the Data Chaos

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

122. Text Classification Models: All Tips And Tricks From 5 Kaggle Competitions

In this article (originally posted by Shahul ES on the Neptune blog), I will discuss some great tips and tricks to improve the performance of your text classification model. These tricks are obtained from solutions of some of Kaggle’s top NLP competitions.

In this article (originally posted by Shahul ES on the Neptune blog), I will discuss some great tips and tricks to improve the performance of your text classification model. These tricks are obtained from solutions of some of Kaggle’s top NLP competitions.

123. Building Embodied Conversational AI: How We Taught a Robot to Understand, Navigate, and Interact

This is exactly what I tackled in the Alexa Prize SimBot Challenge where we built an embodied conversational agent that could understand instructions

This is exactly what I tackled in the Alexa Prize SimBot Challenge where we built an embodied conversational agent that could understand instructions

124. Reflecting on AI in 2023: Magic, Hope, Innovation and Disruption

Discover the transformative force of Artificial Intelligence. Explore the latest trends from NL to deep learning that are shaping the future of AI.

Discover the transformative force of Artificial Intelligence. Explore the latest trends from NL to deep learning that are shaping the future of AI.

125. How to Build a Text Summarizer with Gradio and Hugging Face Transformers

Craft concise summaries like a pro: Build your text summarizer web app with Gradio and NLP magic.

Craft concise summaries like a pro: Build your text summarizer web app with Gradio and NLP magic.

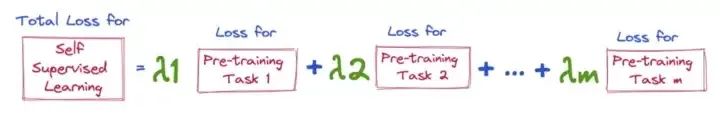

126. Language Modeling - A Look at the Most Common Pre-Training Tasks

This article is about putting all the popular pre-training tasks used in various language modelling tasks at a glance.

This article is about putting all the popular pre-training tasks used in various language modelling tasks at a glance.

127. Subtitles for Living: AR's Role in Language Translation

AR shines when our relationship with technology becomes more intuitive and in 2022, emerging AR capabilities are taking language translation a step further.

AR shines when our relationship with technology becomes more intuitive and in 2022, emerging AR capabilities are taking language translation a step further.

128. This AI Reads Science Papers Like a Pro, Even When Humans Can’t Agree on the Words

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

129. AI Dungeon: An AI-Generated Adventure Game by Nick Walton

The original AI Dungeon was made just over a year ago, the result of a curious gamer, a hackathon, and the GPT-2 text transformer. Fast forward to the present day, and AI Dungeon has expanded into a unique example of creative AI technology. The game now boasts 1.5 million players, multiple genres for stories, and even multiplayer adventures.

The original AI Dungeon was made just over a year ago, the result of a curious gamer, a hackathon, and the GPT-2 text transformer. Fast forward to the present day, and AI Dungeon has expanded into a unique example of creative AI technology. The game now boasts 1.5 million players, multiple genres for stories, and even multiplayer adventures.

130. Developing a Natural Language Understanding Model to Characterize Cable News Bias

The increasing trend of political polarization in the U.S. is reflected in media consumption patterns that indicate partisan polarization.

The increasing trend of political polarization in the U.S. is reflected in media consumption patterns that indicate partisan polarization.

131. Researchers Build AI Knowledge Graph That Sifts Through Science Papers For You

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

132. The Ten Must Read NLP/NLU Papers from the ICLR 2020 Conference

The International Conference on Learning Representations (ICLR) took place last week, and I had a pleasure to participate in it. ICLR is an event dedicated to research on all aspects of representation learning, commonly known as deep learning. This year the event was a bit different as it went virtual. However, the online format didn’t change the great atmosphere of the event. It was engaging and interactive and attracted 5600 attendees (twice as many as last year). If you’re interested in what organizers think about the unusual online arrangement of the conference, you can read about it here.

The International Conference on Learning Representations (ICLR) took place last week, and I had a pleasure to participate in it. ICLR is an event dedicated to research on all aspects of representation learning, commonly known as deep learning. This year the event was a bit different as it went virtual. However, the online format didn’t change the great atmosphere of the event. It was engaging and interactive and attracted 5600 attendees (twice as many as last year). If you’re interested in what organizers think about the unusual online arrangement of the conference, you can read about it here.

133. This AI Doesn’t Just Skim Scientific Papers—It Tags, Sorts, and Explains Them Too

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

This paper presents a new AI-powered knowledge graph that organizes real-world materials science research into an accessible, searchable database.

134. Is AI Affecting Your Business? Here's How To Make it Work For You Not Against You

135. What to do When Reviewing Academic Papers

Academic paper reviews is a necessary civic duty for researchers in all fields, humanities, science, engineering or anything in between.

Academic paper reviews is a necessary civic duty for researchers in all fields, humanities, science, engineering or anything in between.

136. 6 Emerging Technologies Product Managers Need To Master By 2026

There are quite a number of technologies to keep abreast with. But the good news is that these 6 emerging technologies will make you valuable.

There are quite a number of technologies to keep abreast with. But the good news is that these 6 emerging technologies will make you valuable.

137. CLIP: An Innovative Aqueduct Between Computer Vision and NLP

A rudimentary article describing the concept behind the "CLIP" algorithm in deep learning, its approach, implementation, scope & limitations.

A rudimentary article describing the concept behind the "CLIP" algorithm in deep learning, its approach, implementation, scope & limitations.

138. The Basics Of Natural Language Processing in 10 Minutes

Do you also want to learn NLP as Quick as Possible ? Perhaps you are here because you also want to learn natural language processing as quickly as possible, like me.

Do you also want to learn NLP as Quick as Possible ? Perhaps you are here because you also want to learn natural language processing as quickly as possible, like me.

139. Building a Job Entity Recognizer Using Amazon Comprehend - A How-To Guide

With the advent of Natural Language Processing (NLP), traditional job searches based on static keywords are becoming less desirable because of their inaccuracy and will eventually become obsolete. While the traditional search engine performs simple keyword searches, the NLP based search engine extract named entities, key phrases, sentiment, etc. to enrich the documents with metadata and perform search query based on the extracted metadata. In this tutorial, we will build a model to extract entities, such as skills, diploma and diploma major, from job descriptions using Named Entity Recognition (NER).

With the advent of Natural Language Processing (NLP), traditional job searches based on static keywords are becoming less desirable because of their inaccuracy and will eventually become obsolete. While the traditional search engine performs simple keyword searches, the NLP based search engine extract named entities, key phrases, sentiment, etc. to enrich the documents with metadata and perform search query based on the extracted metadata. In this tutorial, we will build a model to extract entities, such as skills, diploma and diploma major, from job descriptions using Named Entity Recognition (NER).

140. Code Book for Annotation of Diverse Cross-Document Coreference: Annotation Guidelines

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

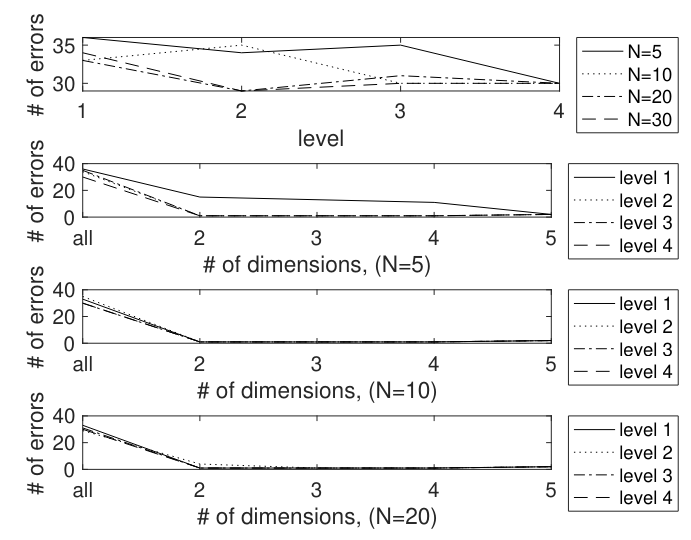

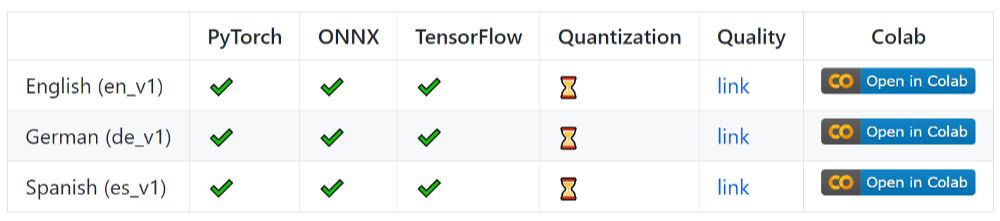

141. New Framework Simplifies Comparison of Language Processing Tools Across Multiple Languages

Researchers in Poland have developed an open-source tool that improves the evaluation and comparison of AI used in natural language preprocessing.

Researchers in Poland have developed an open-source tool that improves the evaluation and comparison of AI used in natural language preprocessing.

142. Shakespeare Meets Google's Flax

Some are born great, some achieve greatness, and some have greatness thrust upon them.

Some are born great, some achieve greatness, and some have greatness thrust upon them.

William Shakespeare, Twelfth Night, or What You Will

143. GPTerm: Creating Intelligent Terminal Apps with ChatGPT and LLM Models

In this article, the exciting realm of making terminal applications smarter is delved into by integrating ChatGPT, a cutting-edge language model.

In this article, the exciting realm of making terminal applications smarter is delved into by integrating ChatGPT, a cutting-edge language model.

144. LLMs Excel in NLP: Enabling Sophisticated Search Functionalities in E-commerce Platforms

Provide exceptional shopping experiences, businesses must leverage the power of LLMs as the e-commerce industry continues to evolve.

Provide exceptional shopping experiences, businesses must leverage the power of LLMs as the e-commerce industry continues to evolve.

145. How I Built a Python Pipeline to Analyze 16,695 Arabic Tweets on X

analyzing 16,695 Python pipeline Arabic tweets from X to detect linguistic uncertainty and examine how language influences social media engagement.

analyzing 16,695 Python pipeline Arabic tweets from X to detect linguistic uncertainty and examine how language influences social media engagement.

146. Introductory Guide to Automatic Language Translation in Python

Today, I'm going to share with you guys how to automatically perform language translation in Python programming.

Today, I'm going to share with you guys how to automatically perform language translation in Python programming.

147. 11 Proven Solutions to Common GitHub Copilot Problems

Discover 11 solution types used to fix GitHub Copilot problems—based on 497 real cases across bugs, settings, versions, features, and more.

Discover 11 solution types used to fix GitHub Copilot problems—based on 497 real cases across bugs, settings, versions, features, and more.

148. Top 6 Applications of Natural Language Processing in Healthcare

For many healthcare providers, the industry is shaping up to be more of a shifting quandary of regulatory issues, financial turmoil, and unforeseeable eruptions of resentment from practitioners on the edge of revolt. The industry is now taking the opportunity to scale up their big data defenses and develop the technological infrastructure required to meet the imminent challenges.

For many healthcare providers, the industry is shaping up to be more of a shifting quandary of regulatory issues, financial turmoil, and unforeseeable eruptions of resentment from practitioners on the edge of revolt. The industry is now taking the opportunity to scale up their big data defenses and develop the technological infrastructure required to meet the imminent challenges.

149. The Hitchhikers's Guide to PyTorch for Data Scientists

PyTorch has sort of became one of the de facto standard for creating Neural Networks now, and I love its interface. Yet, it is somehow a little difficult for beginners to get a hold of.

PyTorch has sort of became one of the de facto standard for creating Neural Networks now, and I love its interface. Yet, it is somehow a little difficult for beginners to get a hold of.

150. What Is Conversational AI: Principles and Examples

In this article, we will take the time to explain what conversational AI is: principles and examples to have a better idea of how you can implement it.

In this article, we will take the time to explain what conversational AI is: principles and examples to have a better idea of how you can implement it.

151. Diverse Cross-document Coreference and Media Bias Analysis

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

152. 5 Trends Shaping the Future of Data Analytics and Insights

Discover the 5 key trends shaping the future of data analytics—from synthetic data to NLP, data interoperability, data storytelling, and new data-centric roles.

Discover the 5 key trends shaping the future of data analytics—from synthetic data to NLP, data interoperability, data storytelling, and new data-centric roles.

153. NO! GPT-3 Will Not Steal Your Programming Job

TL;DR; GPT-3 will not take your programming job (Unless you are a terrible programmer, in which case you would have lost your job anyway)

TL;DR; GPT-3 will not take your programming job (Unless you are a terrible programmer, in which case you would have lost your job anyway)

154. Code Book for Annotation of Diverse Cross-Document Coreference: Acknowledgements

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

155. Code Book for Annotation of Diverse Cross-Document Coreference: Bibliographical References

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

156. Code Book for Annotation of Diverse Cross-Document Coreference: Abstract and Intro

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

157. How Do Chatbots Work in Call Centers?

Machine learning technologies help to significantly reduce the cost of providing services, as well as increase the efficiency of call centers.

Machine learning technologies help to significantly reduce the cost of providing services, as well as increase the efficiency of call centers.

158. 15 Must-read Machine Learning Articles for Data Scientists

As always, the fields of deep learning and natural language processing are as busy as ever. Despite many industries being hindered by the quarantine restrictions in many countries, the machine learning industry continues to move forward.

As always, the fields of deep learning and natural language processing are as busy as ever. Despite many industries being hindered by the quarantine restrictions in many countries, the machine learning industry continues to move forward.

159. Automating Multilingual Customer Service with Power Virtual Agent and Azure Cognitive Services

PVA is getting better with each release, but there are situations where you can use Azure Services to improve your user's experience. Here's one such example!

PVA is getting better with each release, but there are situations where you can use Azure Services to improve your user's experience. Here's one such example!

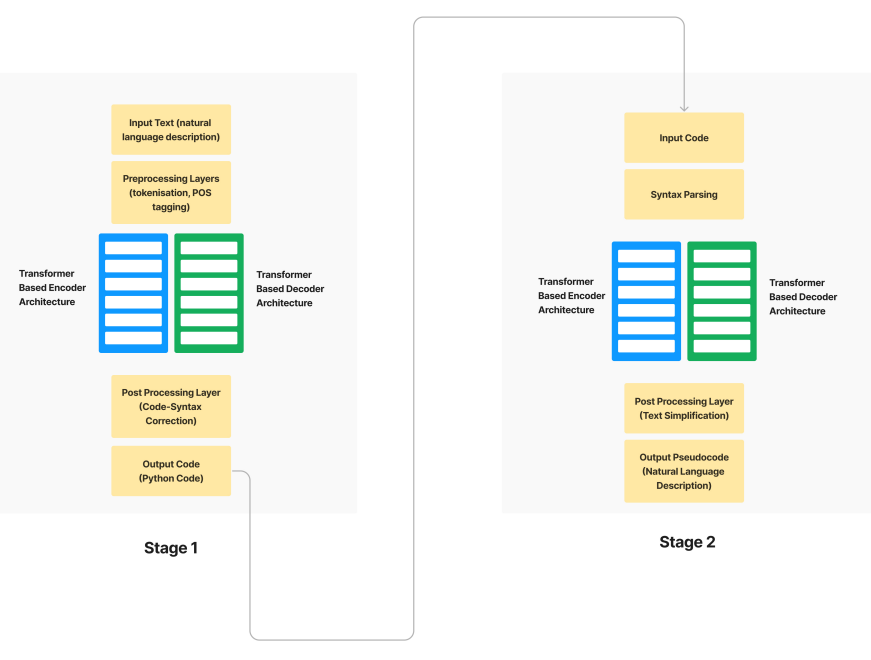

160. Converting Epics/Stories into Pseudocode using Transformers

Automate Agile development with an NLP-based methodology to convert user stories into pseudocode, enhancing efficiency and reducing project time.

Automate Agile development with an NLP-based methodology to convert user stories into pseudocode, enhancing efficiency and reducing project time.

161. A Novel Method for Analysing Racial Bias: Collection of Person Level References: Analysis and Result

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

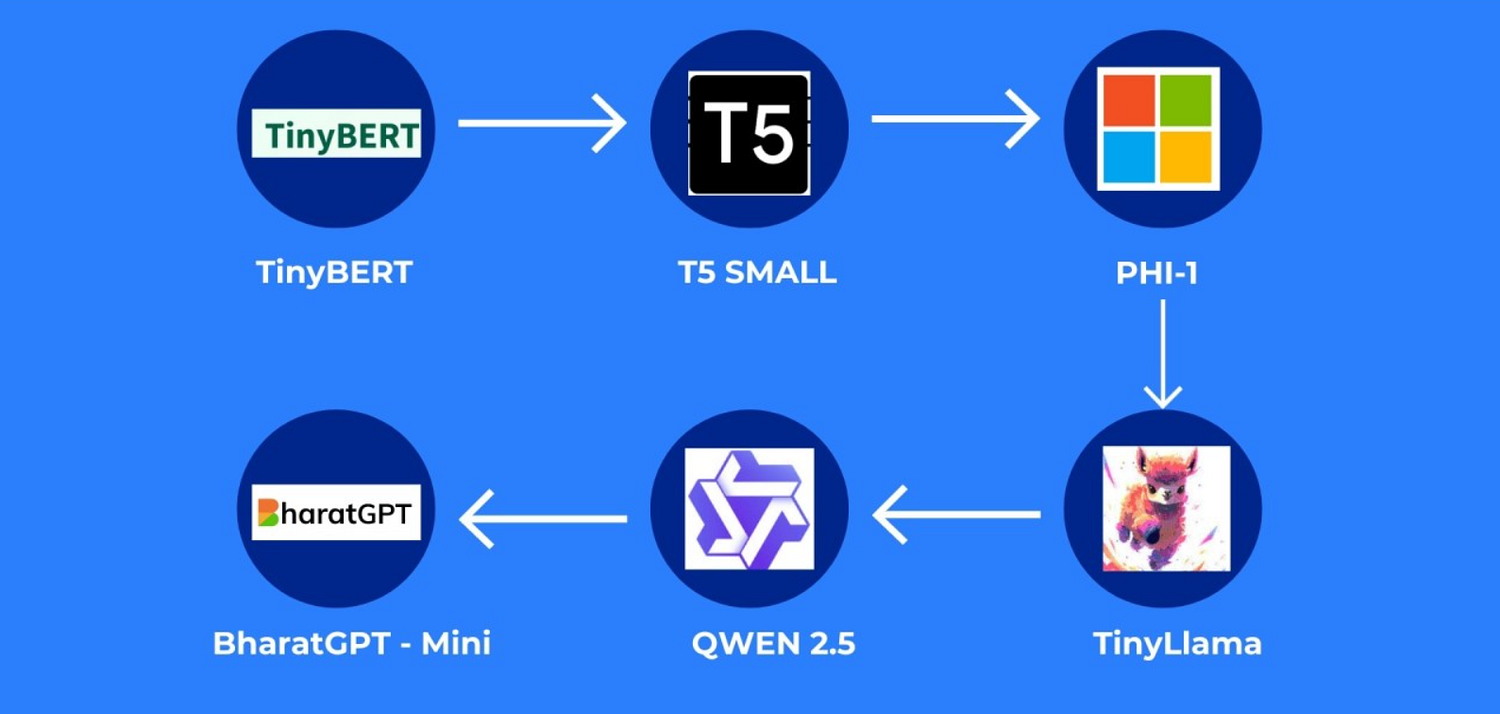

162. Cutting AI Costs Without Losing Capability: The Rise of Small Language Models

Learn how small language models are helping teams cut AI costs, run locally, and deliver fast, private, and scalable intelligence.

Learn how small language models are helping teams cut AI costs, run locally, and deliver fast, private, and scalable intelligence.

163. A Novel Method for Analysing Racial Bias: Collection of Person Level References: Appendix: Wikidata

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

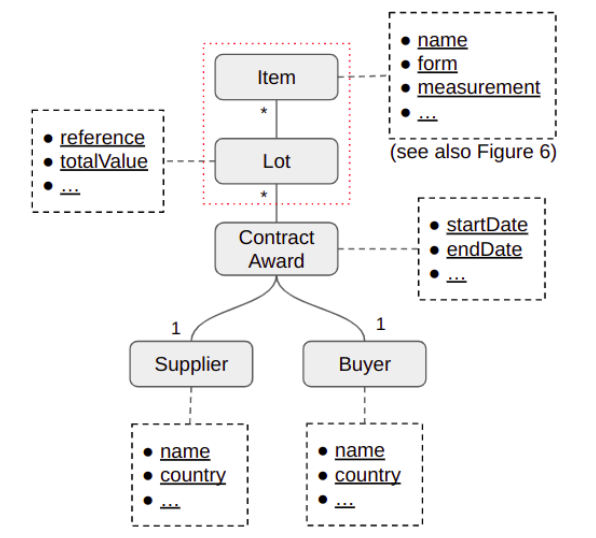

164. A New Era for Procurement Text Mining

Exploring NLP for healthcare procurement, this study offers insights, challenges, and recommendations for practical, domain-specific text mining solutions.

Exploring NLP for healthcare procurement, this study offers insights, challenges, and recommendations for practical, domain-specific text mining solutions.

165. Building a Metaverse For Everyone

166. Another Wave: A BASIC ELIZA Turns the PC Generation On to AI

In 1977, a BASIC version of ELIZA captivated personal computer users, spreading AI curiosity during the PC explosion, while the original MAD-SLIP ELIZA faded.

In 1977, a BASIC version of ELIZA captivated personal computer users, spreading AI curiosity during the PC explosion, while the original MAD-SLIP ELIZA faded.

167. Code Book for Annotation of Diverse Cross-Document Coreference: Annotation Tool

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

This paper presents a scheme for annotating coreference across news articles, extending beyond traditional identity relations.

168. How to Build a Twitter Sentiment Analysis System

Understanding the sentiment of tweets is important for a variety of reasons: business marketing, politics, public behavior analysis, and information gathering are just a few examples. Sentiment analysis of twitter data can help marketers understand the customer response to product launches and marketing campaigns, and it can also help political parties understand the public response to policy changes or announcements.

Understanding the sentiment of tweets is important for a variety of reasons: business marketing, politics, public behavior analysis, and information gathering are just a few examples. Sentiment analysis of twitter data can help marketers understand the customer response to product launches and marketing campaigns, and it can also help political parties understand the public response to policy changes or announcements.

169. Cocktail Alchemy: Creating New Recipes With Transformers

Build a transformer model with natural language processing to create new cocktail recipes from a cocktail database.

Build a transformer model with natural language processing to create new cocktail recipes from a cocktail database.

170. Text Classification in iOS using tensorflowlite [A How-To Guide]

Text classification is task of categorising text according to its content. It is the fundamental problem in the field of Natural Language Processing(NLP). More general applications of text classifications are in email spam detection, sentiment analysis and topic labelling etc.

Text classification is task of categorising text according to its content. It is the fundamental problem in the field of Natural Language Processing(NLP). More general applications of text classifications are in email spam detection, sentiment analysis and topic labelling etc.

171. Biomedical Knowledge Graphs and Question Answering Systems

Learn how knowledge graphs and NLP enable question answering in biomedicine, aiding researchers and doctors with organized data (COVID-19, EHR examples).

Learn how knowledge graphs and NLP enable question answering in biomedicine, aiding researchers and doctors with organized data (COVID-19, EHR examples).

172. I Got Cancer, my Doctors Gave up on my Quality of Life, I Built an AI-app to Help Myself and Others

Suneeta Modekurty wanted to help cancer survivors regain control over their health through personalized, data-backed recommendations.

Suneeta Modekurty wanted to help cancer survivors regain control over their health through personalized, data-backed recommendations.

173. Biomedical NLP: Text Generation & Knowledge Reasoning Tasks

Discover NLP tasks in biomedicine: question/dialogue generation (CHIP, CCKS), medical reading comprehension, and diagnostic reasoning (CCL, CHIP).

Discover NLP tasks in biomedicine: question/dialogue generation (CHIP, CCKS), medical reading comprehension, and diagnostic reasoning (CCL, CHIP).

174. A Novel Method for Analysing Racial Bias: Appendix: Hyperparameter Sensitivity

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

In this study, researchers propose a novel method to analyze representations of African Americans and White Americans in books between 1850 to 2000.

175. What Happens to Mobile Apps When AI and Machine Learning Join Forces?

A model can take into consideration a lot more parameters than the human brain.

A model can take into consideration a lot more parameters than the human brain.

176. How a natural gas company is using machine learning in natural gas exploration

The modern business world is becoming increasingly technology-driven and machine learning (MD) is currently at the forefront. While one might not inherently in

The modern business world is becoming increasingly technology-driven and machine learning (MD) is currently at the forefront. While one might not inherently in

177. Maximizing NLP Capabilities with Large Language Models

While NLP effectively facilitates machines to understand human language, the LLM capabilities have been greatly enhanced. Read this blog post to learn more.

While NLP effectively facilitates machines to understand human language, the LLM capabilities have been greatly enhanced. Read this blog post to learn more.

178. Top Tips For Competing in a Kaggle Competition

Hi, my name is Prashant Kikani and in this blog post, I share some tricks and tips to compete in Kaggle competitions and some code snippets which help in achieving results in limited resources. Here is my Kaggle profile.

Hi, my name is Prashant Kikani and in this blog post, I share some tricks and tips to compete in Kaggle competitions and some code snippets which help in achieving results in limited resources. Here is my Kaggle profile.

179. Understanding and Generating Dialogue between Characters in Stories: Abstract and Intro

Exploring machine understanding of story dialogue via new tasks and dataset, improving coherence and speaker recognition in storytelling AI.

Exploring machine understanding of story dialogue via new tasks and dataset, improving coherence and speaker recognition in storytelling AI.

180. Future Perspectives in the Era of Large Language Models, and References

Explore future perspectives for enhancing biomedical text mining community challenges in the era of large language models.

Explore future perspectives for enhancing biomedical text mining community challenges in the era of large language models.

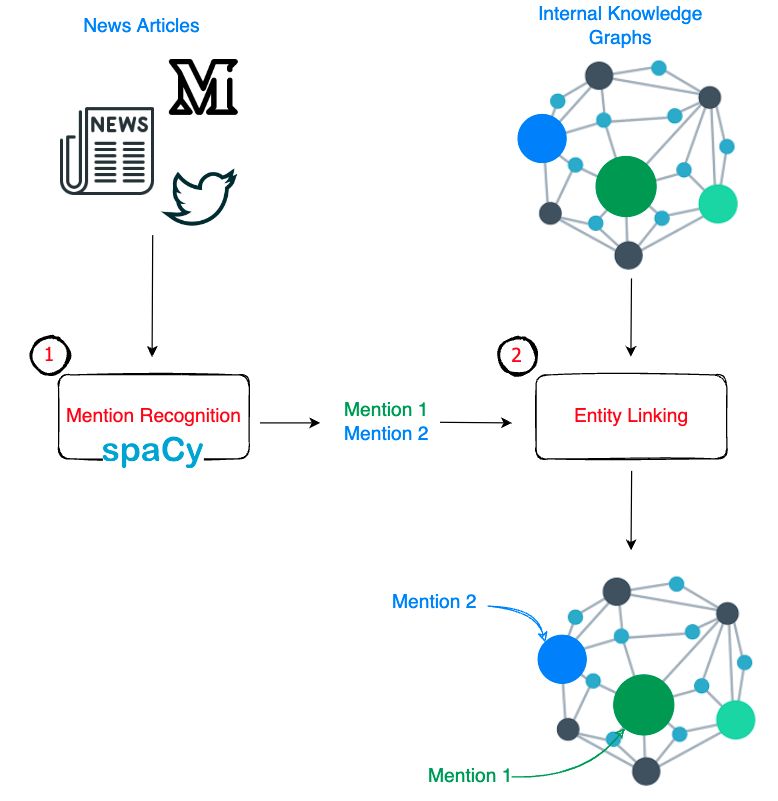

181. Neural Entity Linking and How JPMorgan Chase Plans to Use it

This article summarizes the problem statement, solution, and other key technical components of the paper: End-to-End Neural Entity Linking in JP Morgan Chase

This article summarizes the problem statement, solution, and other key technical components of the paper: End-to-End Neural Entity Linking in JP Morgan Chase

182. How I Extracted Meaningful Information from Inconsistent Data Using ChatGPT

Data Analyis Project using Spacy and Regular Expressions to extract specific strings from a data set.

Data Analyis Project using Spacy and Regular Expressions to extract specific strings from a data set.

183. The Impact of AI Transformers on the Customer Experience

I have spent the last few weeks understanding the impact of a great revolution in the world of Artificial Intelligence and NLP on the customer experience. Not from a purely technical point of view, but trying to estimate the competitive advantage that this new approach can generate. We are facing yet another disruptive innovation, and it can bring significant advantages, let's try to find out which ones.

I have spent the last few weeks understanding the impact of a great revolution in the world of Artificial Intelligence and NLP on the customer experience. Not from a purely technical point of view, but trying to estimate the competitive advantage that this new approach can generate. We are facing yet another disruptive innovation, and it can bring significant advantages, let's try to find out which ones.

184. Nir Eyal Discusses Becoming 'Indistractable,' Time Management, Focus and ChatGPT

The emergence of ChatGPT has stirred major buzz around the world and massive disruptions across multiple industries.

The emergence of ChatGPT has stirred major buzz around the world and massive disruptions across multiple industries.

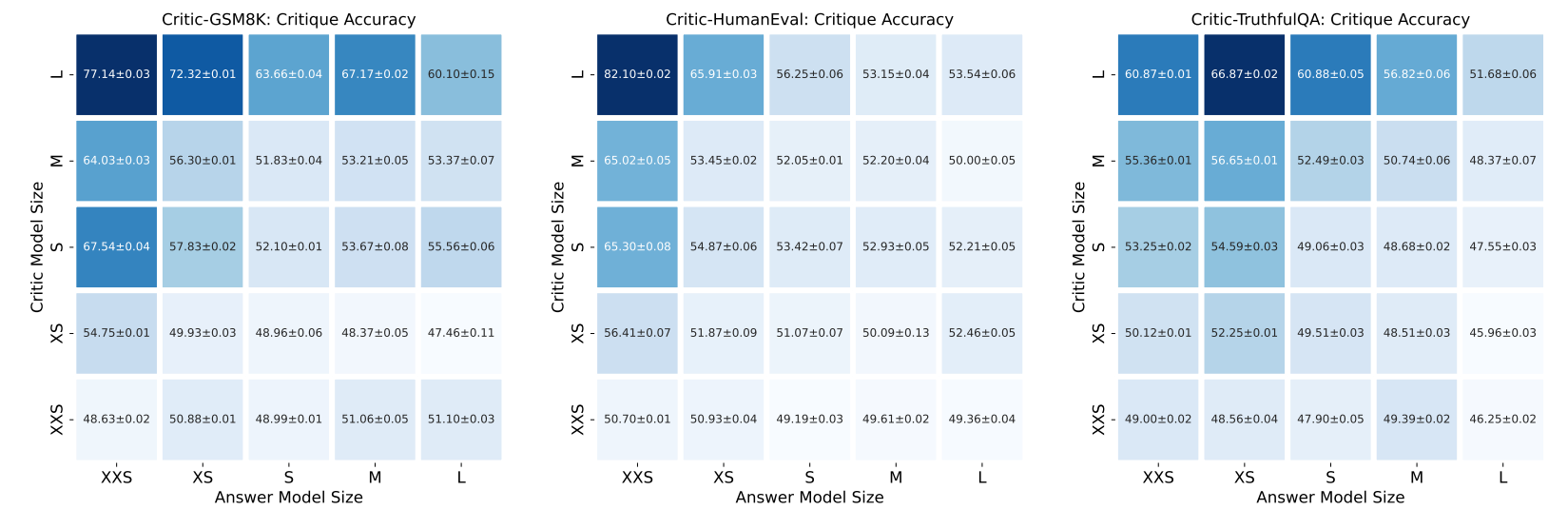

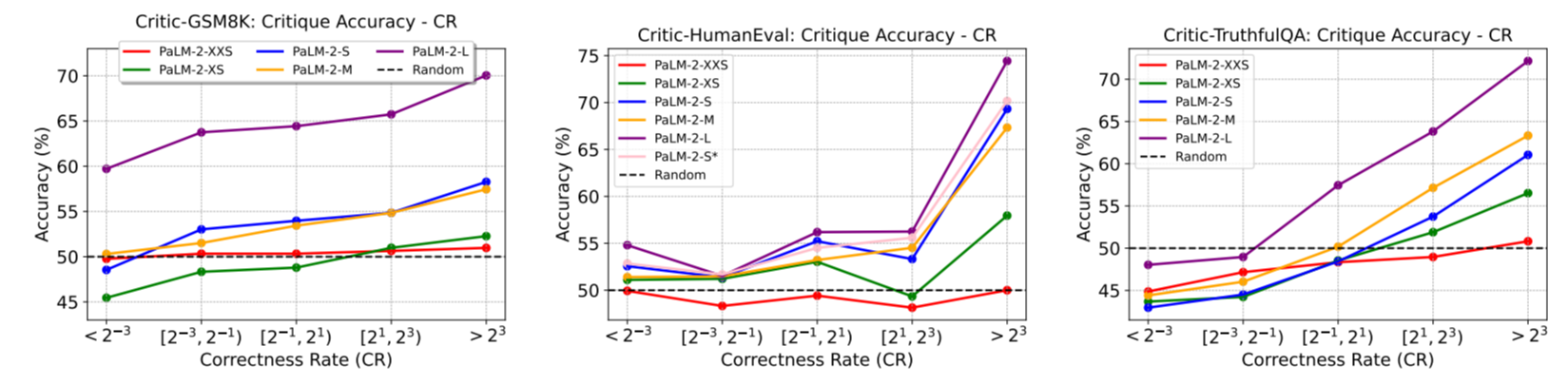

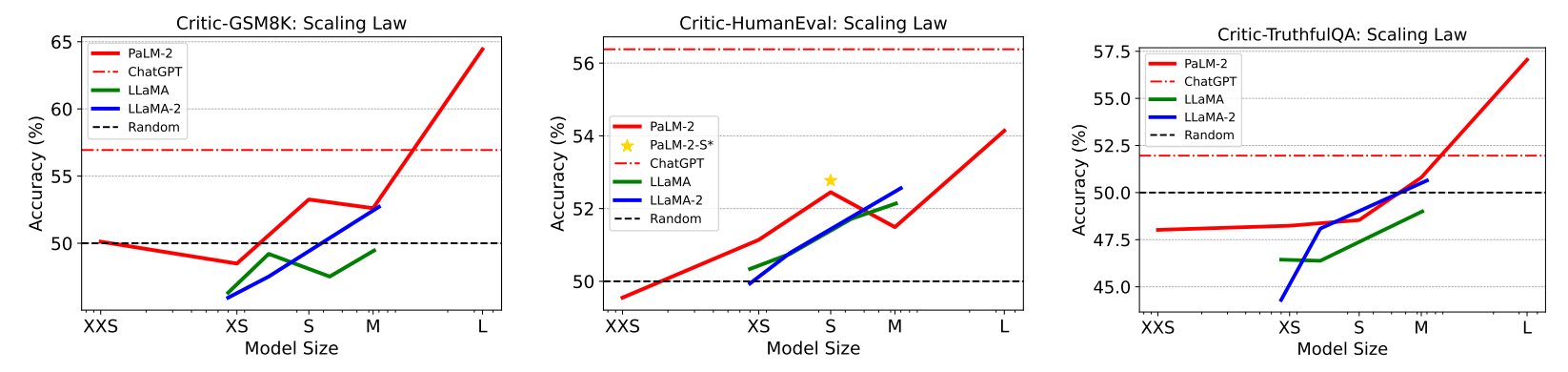

185. Improving LLM Performance with Self-Consistency and Self-Check

Can AI critique itself? This study shows how self-check improves ChatGPT, GPT-4, and PaLM-2 accuracy on benchmark tasks.

Can AI critique itself? This study shows how self-check improves ChatGPT, GPT-4, and PaLM-2 accuracy on benchmark tasks.

186. Checking If Your Headline A Clickbait: A How-To Guide

For those who don’t know what that is… It is basically a magical tool that allows anyone to take existing AI models and train them for their own data, however, small the dataset maybe. Sounds good, right?

For those who don’t know what that is… It is basically a magical tool that allows anyone to take existing AI models and train them for their own data, however, small the dataset maybe. Sounds good, right?

187. Limitations of Current Biomedical Text Mining Community Challenges

Explore the shortcomings of current community challenge evaluation tasks in biomedical text mining, including data representativeness and innovation

Explore the shortcomings of current community challenge evaluation tasks in biomedical text mining, including data representativeness and innovation

188. Answering Whither Artificial Intelligence By Building A Bot

During one of our call with Yardy, discussing our next venture, we thought about implementing AI to streamline certain functions. Given that I had some experience with Machine Learning, our fund had a project aiming to evaluate ICOs & Coins on specific criteria.

During one of our call with Yardy, discussing our next venture, we thought about implementing AI to streamline certain functions. Given that I had some experience with Machine Learning, our fund had a project aiming to evaluate ICOs & Coins on specific criteria.

189. The Art of Transformers: How AI Intuitively Summarizes Business Papers Using NLP

“I don’t want a full paper, just give me a concise summary of it”. Who hasn't found themselves in this situation, at least once? Sound familiar?

“I don’t want a full paper, just give me a concise summary of it”. Who hasn't found themselves in this situation, at least once? Sound familiar?

190. ELIZA Reinterpreted: The World’s First Chatbot Was Not Intended as a Chatbot at All

The world's first chatbot, ELIZA, wasn't built to be a chatbot. Learn what its creator, Weizenbaum, designed it for.

The world's first chatbot, ELIZA, wasn't built to be a chatbot. Learn what its creator, Weizenbaum, designed it for.

191. Evidence That AI Will Soon Pass the Turing Test (or maybe it already has)

You might be wondering if machines are a threat to the world we live in, or if they’re just another tool in our quest to improve ourselves. If you think that AI is just another tool, you might be surprised to hear that some of the biggest names in technology have a clear concern for it. As Mark Ralston wrote, “The great fear of machine intelligence is that it may take over our jobs, our economies, and our governments”.

You might be wondering if machines are a threat to the world we live in, or if they’re just another tool in our quest to improve ourselves. If you think that AI is just another tool, you might be surprised to hear that some of the biggest names in technology have a clear concern for it. As Mark Ralston wrote, “The great fear of machine intelligence is that it may take over our jobs, our economies, and our governments”.

192. The Accidental AI: How ELIZA's Lisp Adaptation Derailed Its Original Research Intent

Explore how in an ironic twist ELIZA's Lisp adaptation overshadowed its original intent as a research platform, leading to widespread misinterpretation.

Explore how in an ironic twist ELIZA's Lisp adaptation overshadowed its original intent as a research platform, leading to widespread misinterpretation.

193. The High Cost of Training Data in NLP Projects

Explore the high cost of training data in NLP projects, comparing supervised vs. rule-based methods and highlighting practicality in industry contexts.

Explore the high cost of training data in NLP projects, comparing supervised vs. rule-based methods and highlighting practicality in industry contexts.

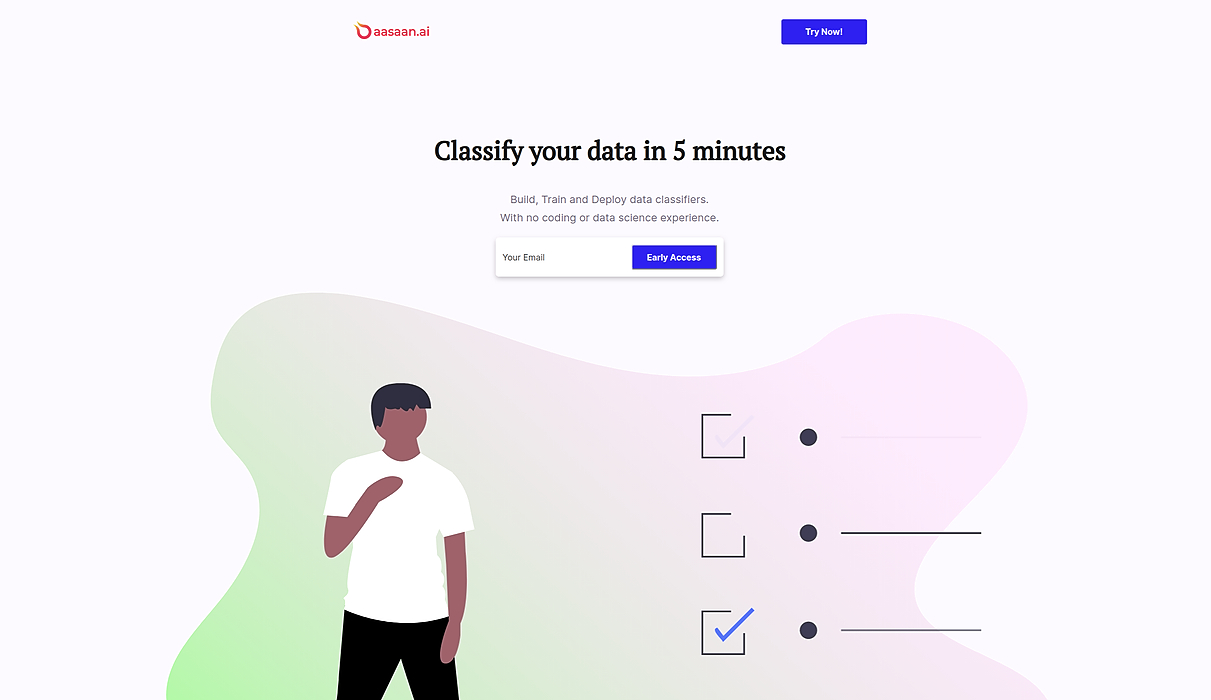

194. Introducing aasaan.ai: No-Code Yelp Sentiment Classification

Introduction

Introduction

195. AI's Role In Language Learning: Stuart Barrass, Kaizen Languages CEO

We spoke with Stuart Barrass, CEO and Co-founder of Kaizen Languages, a startup that helps people with language aquisition through AI-Driven conversations.

We spoke with Stuart Barrass, CEO and Co-founder of Kaizen Languages, a startup that helps people with language aquisition through AI-Driven conversations.

196. Semantic Textual Similarity: Here's How It's Changing the Game

In this case, however, genuine game-changing is occurring, as STS fundamentally improves search engine and recommendation system accuracy and relevance.

In this case, however, genuine game-changing is occurring, as STS fundamentally improves search engine and recommendation system accuracy and relevance.

197. How to Analyze Call Sentiment With Open-Source NLP Libraries

Unlock call sentiment analysis using open-source NLP. Discover how to analyze customer emotions, improve service, and gain valuable insights from voice data.