Let's learn about Neural Networks via these 291 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most read blog posts about any technology.

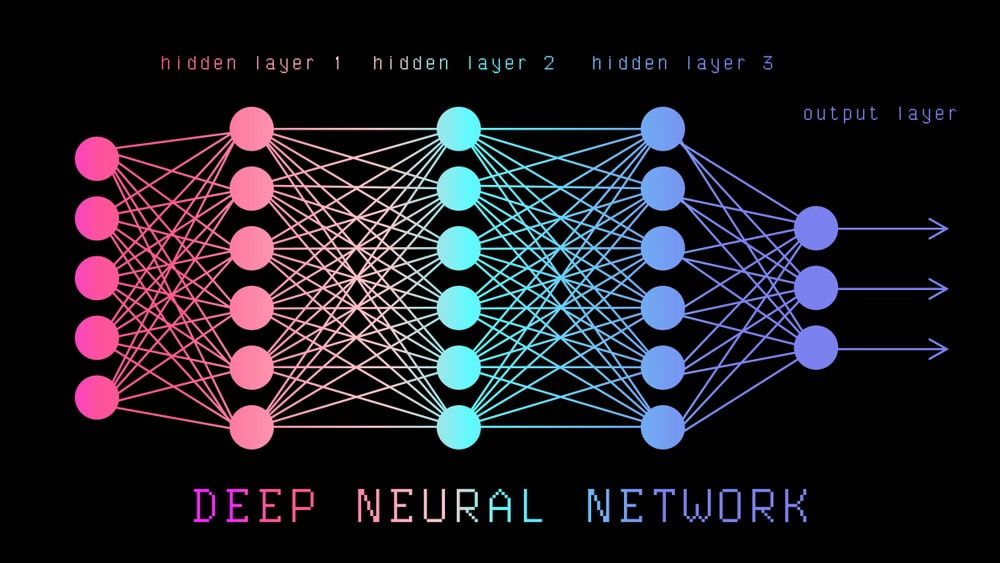

Neural networks are the crux of deep learning algorithms. They mimic the way that biological neurons signal to one another in the human brain.

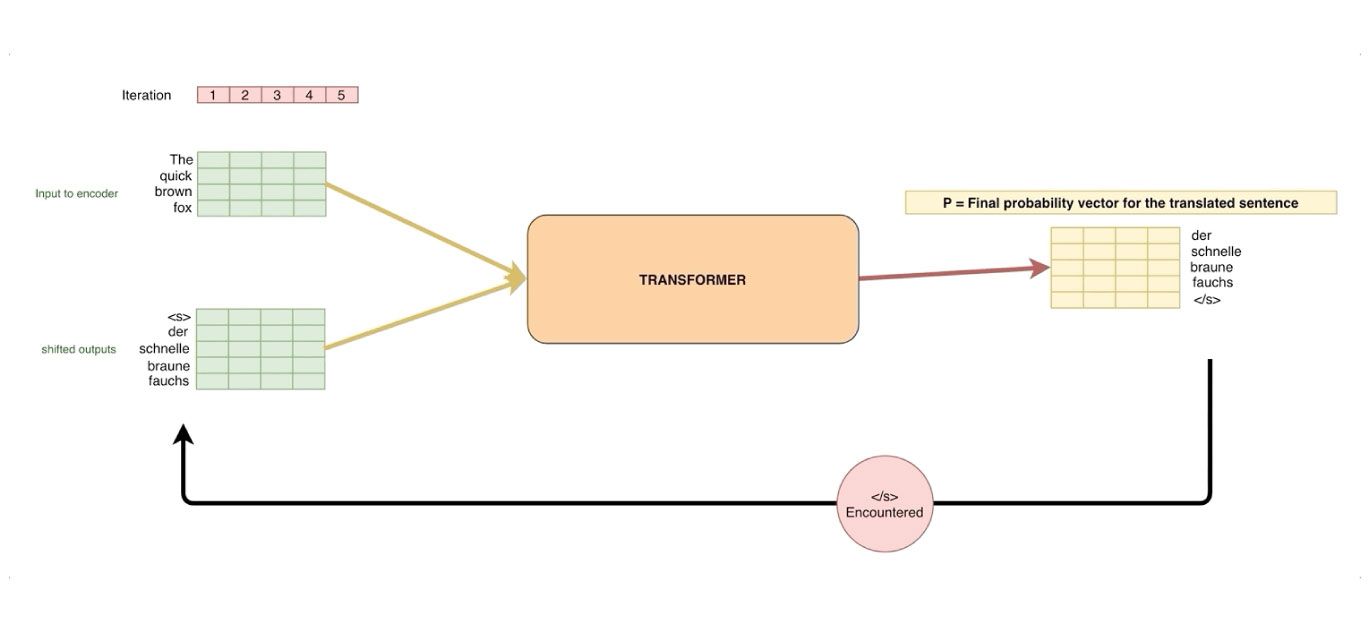

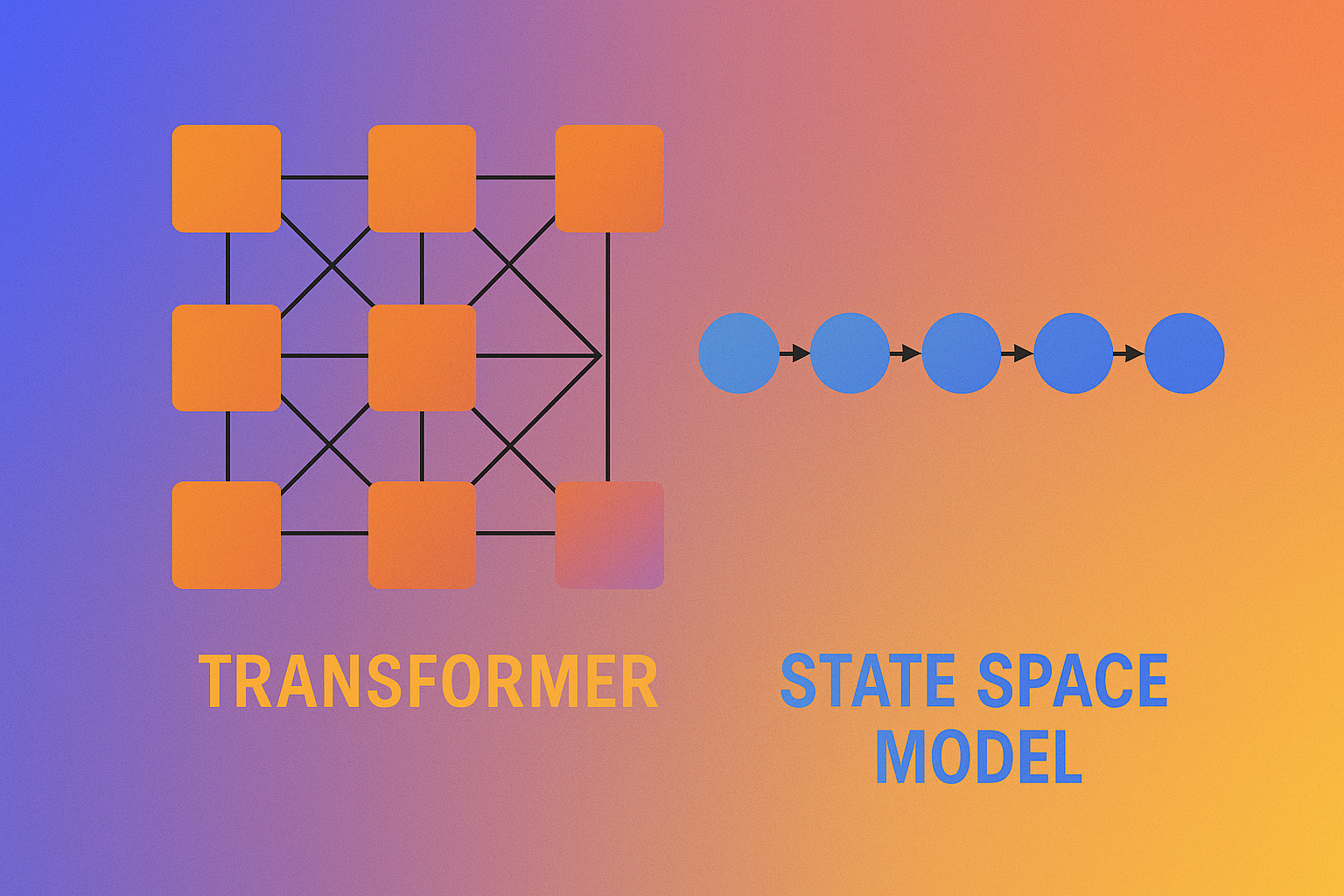

1. Decoding Transformers' Superiority over RNNs in NLP Tasks

Explore the intriguing journey from Recurrent Neural Networks (RNNs) to Transformers in the world of Natural Language Processing in our latest piece: 'The Trans


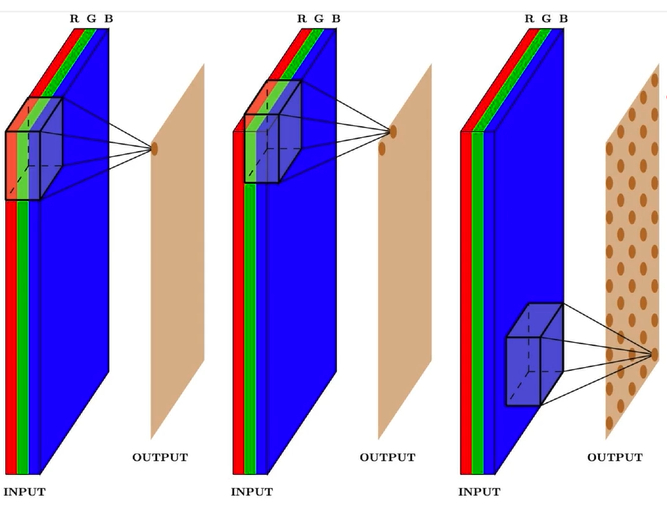

2. Deep Learning CNN’s in Tensorflow with GPUs

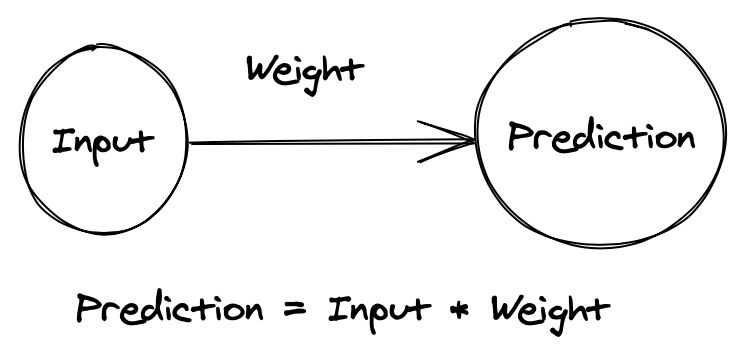

3. How to Initialize weights in a neural net so it performs well?

We know that in a neural network, weights are usually initialized randomly, and that this kind of initialization takes a fair number of iterations to converge to the lowest loss and reach the ideal weight matrix. The problem is that this kind of initialization is prone to vanishing or exploding gradients.
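One widely used remedy is to scale the random weights by the layer's fan-in and fan-out. The sketch below shows Xavier/Glorot uniform and He normal initialization in plain Python. It is a minimal illustration, not necessarily the approach the linked post takes, and the function names and layer sizes are assumptions chosen for the example.

```python
import math
import random

def xavier_uniform(fan_in, fan_out, rng):
    """Xavier/Glorot uniform: sample from U(-limit, limit)."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)]
            for _ in range(fan_in)]

def he_normal(fan_in, fan_out, rng):
    """He normal: sample from N(0, 2 / fan_in), often paired with ReLU."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_out)]
            for _ in range(fan_in)]

# Hypothetical layer sizes, fixed seed for repeatability.
rng = random.Random(0)
w_xavier = xavier_uniform(256, 128, rng)
w_he = he_normal(256, 128, rng)
xavier_limit = math.sqrt(6.0 / (256 + 128))
```

Both schemes keep the variance of activations roughly stable from layer to layer, which is what makes vanishing or exploding gradients less likely at the start of training.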

4. An introduction to Artificial Intelligence

One of the key features that distinguishes us humans from everything else in the world is intelligence. This ability to understand, apply knowledge, and improve skills has played a significant role in our evolution and in establishing human civilisation. But many people (including Elon Musk) believe that advances in technology can create a superintelligence that could threaten human existence.

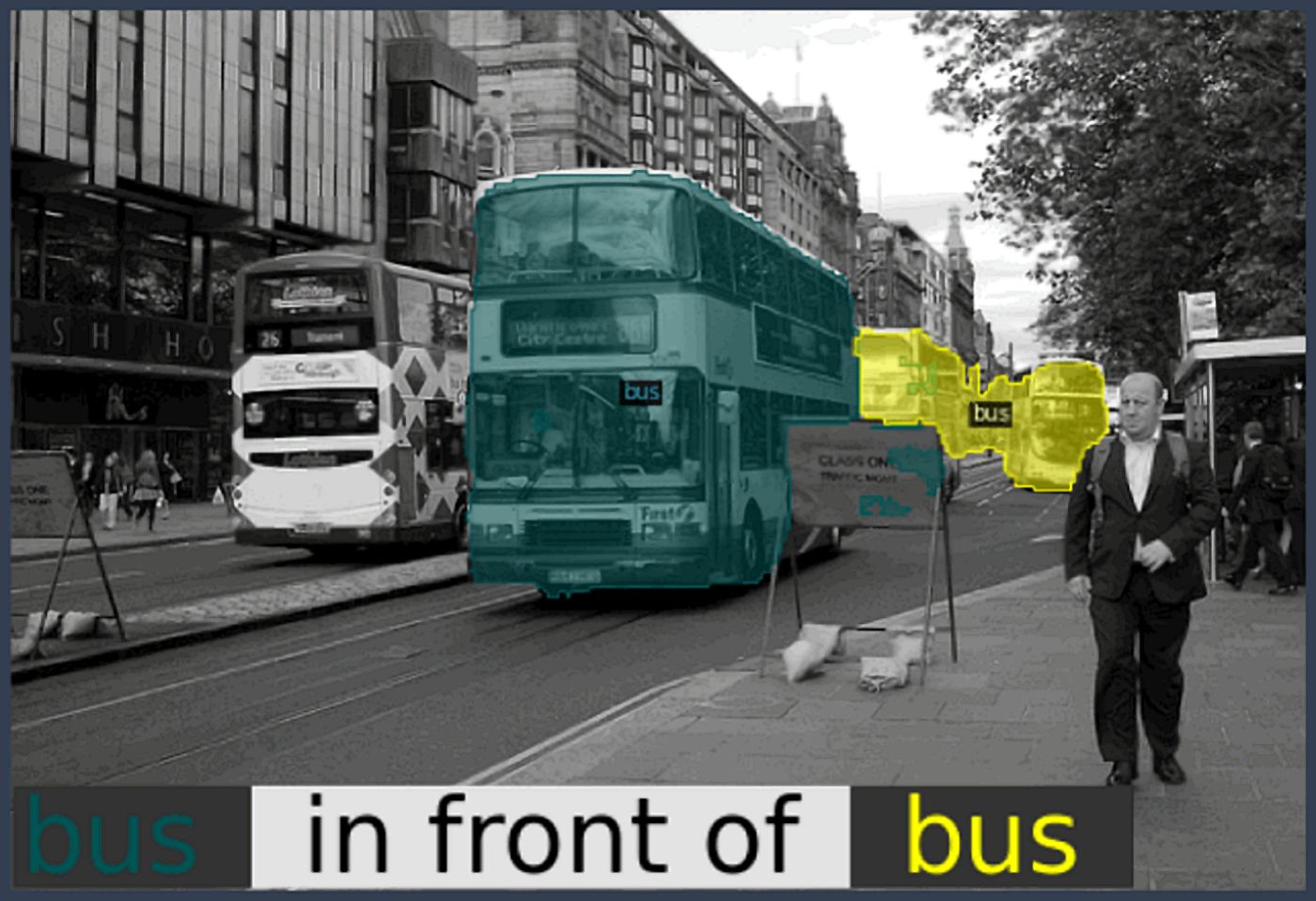

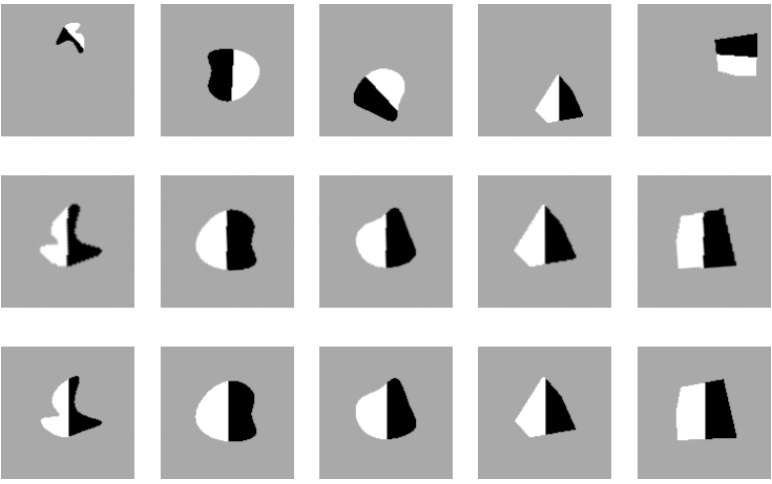

5. A Deep Dive Into Semantic Segmentation Evaluation Metrics

Semantic segmentation is an area of computer vision that specialises in dividing an image into regions based on pixel characteristics.

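Two metrics that commonly appear in segmentation evaluation are Intersection over Union (IoU) and the Dice coefficient. The sketch below computes both for one class on a tiny hand-made mask. The function name and the toy masks are assumptions for illustration, and the linked post may cover other metrics as well.

```python
def iou_and_dice(pred, target, cls):
    """Per-class IoU and Dice for two 2-D label masks of equal shape."""
    tp = fp = fn = 0
    for pred_row, target_row in zip(pred, target):
        for p, t in zip(pred_row, target_row):
            if p == cls and t == cls:
                tp += 1      # pixel correctly labelled as cls
            elif p == cls:
                fp += 1      # predicted cls, ground truth differs
            elif t == cls:
                fn += 1      # ground truth cls, prediction missed it
    union = tp + fp + fn
    if union == 0:
        return 1.0, 1.0      # class absent from both masks
    return tp / union, 2 * tp / (2 * tp + fp + fn)

# Toy 2x3 masks (hypothetical data): 1 = object, 0 = background.
pred_mask = [[1, 1, 0],
             [0, 1, 0]]
true_mask = [[1, 0, 0],
             [0, 1, 1]]
iou, dice = iou_and_dice(pred_mask, true_mask, cls=1)
```

Here two pixels overlap, one is a false positive, and one is a false negative, so IoU is 2/4 and Dice is 4/6.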

6. Karate Club a Python library for graph representation learning

Karate Club is an unsupervised machine learning extension library for the NetworkX Python package. See the documentation here.


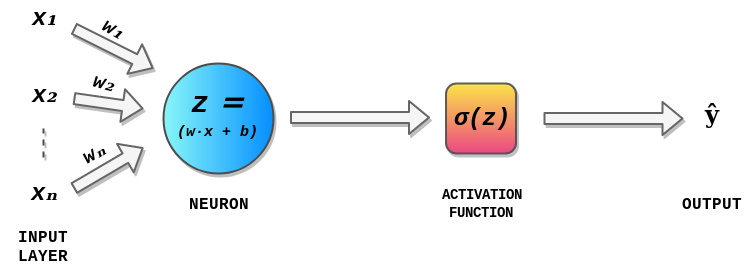

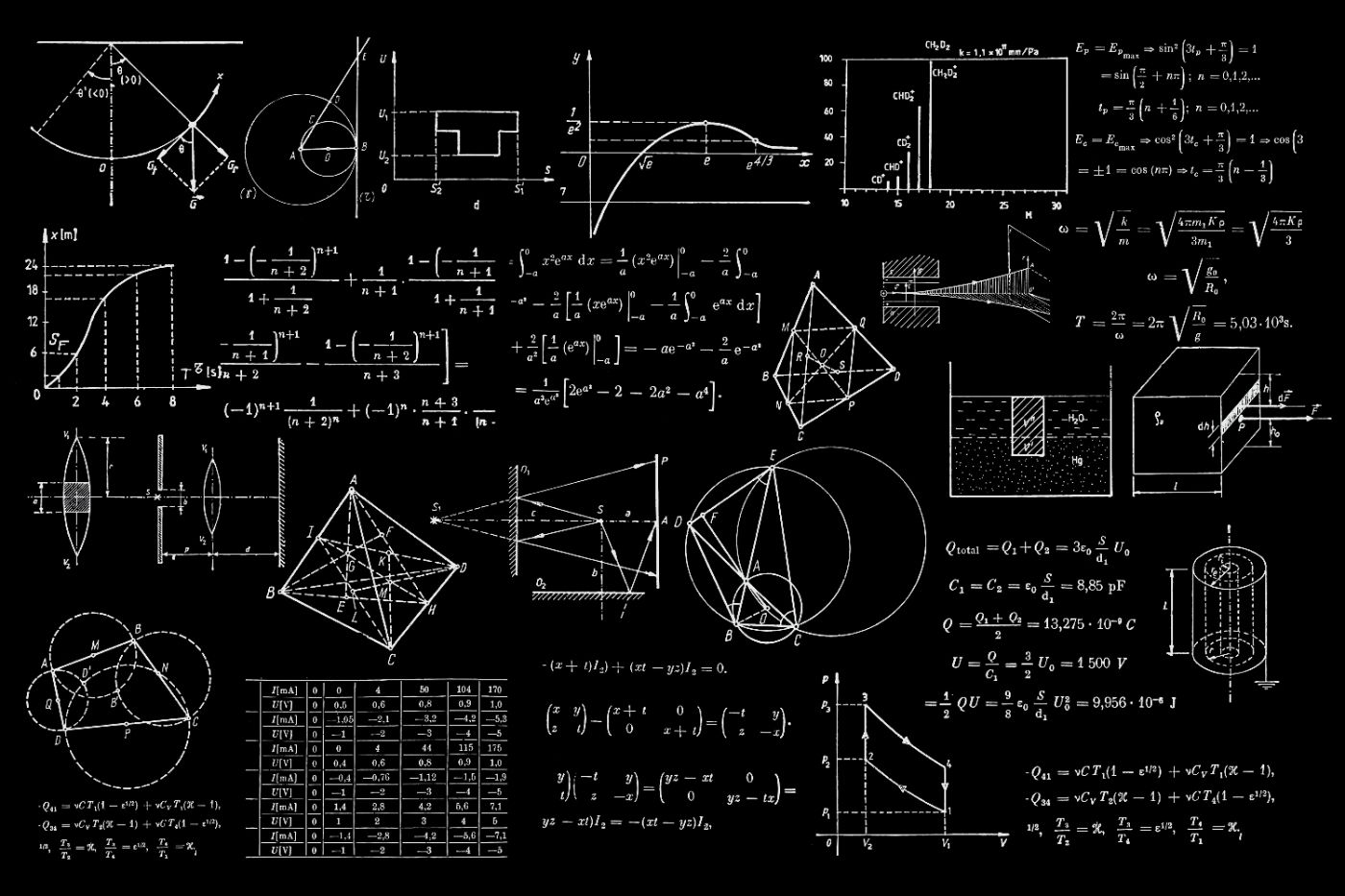

7. Introduction To Maths Behind Neural Networks

Today, with open source machine learning libraries such as TensorFlow, Keras, or PyTorch, we can create a neural network, even one with high structural complexity, in just a few lines of code. That said, the math behind neural networks is still a mystery to some of us, and knowing it can help us understand what’s happening inside a neural network. It is also helpful in architecture selection, fine-tuning of deep learning models, hyperparameter tuning, and optimization.

8. 20 Best Machine Learning Resources for Data Scientists

Whether you’re a beginner looking for introductory articles or an intermediate looking for datasets or papers about new AI models, this list of machine learning resources has something for everyone interested in or working in data science. In this article, we will introduce guides, papers, tools and datasets for both computer vision and natural language processing.


9. The Full Story behind Convolutional Neural Networks and the Math Behind it

Convolutional Neural Networks became really popular after 2010 because they outperformed any other network architecture on visual data, but the concept behind CNNs is not new. In fact, it is very much inspired by the human visual system. In this article, I aim to explain in great detail how researchers came up with the idea of CNNs, how they are structured, how the math behind them works, and what techniques are applied to improve their performance.

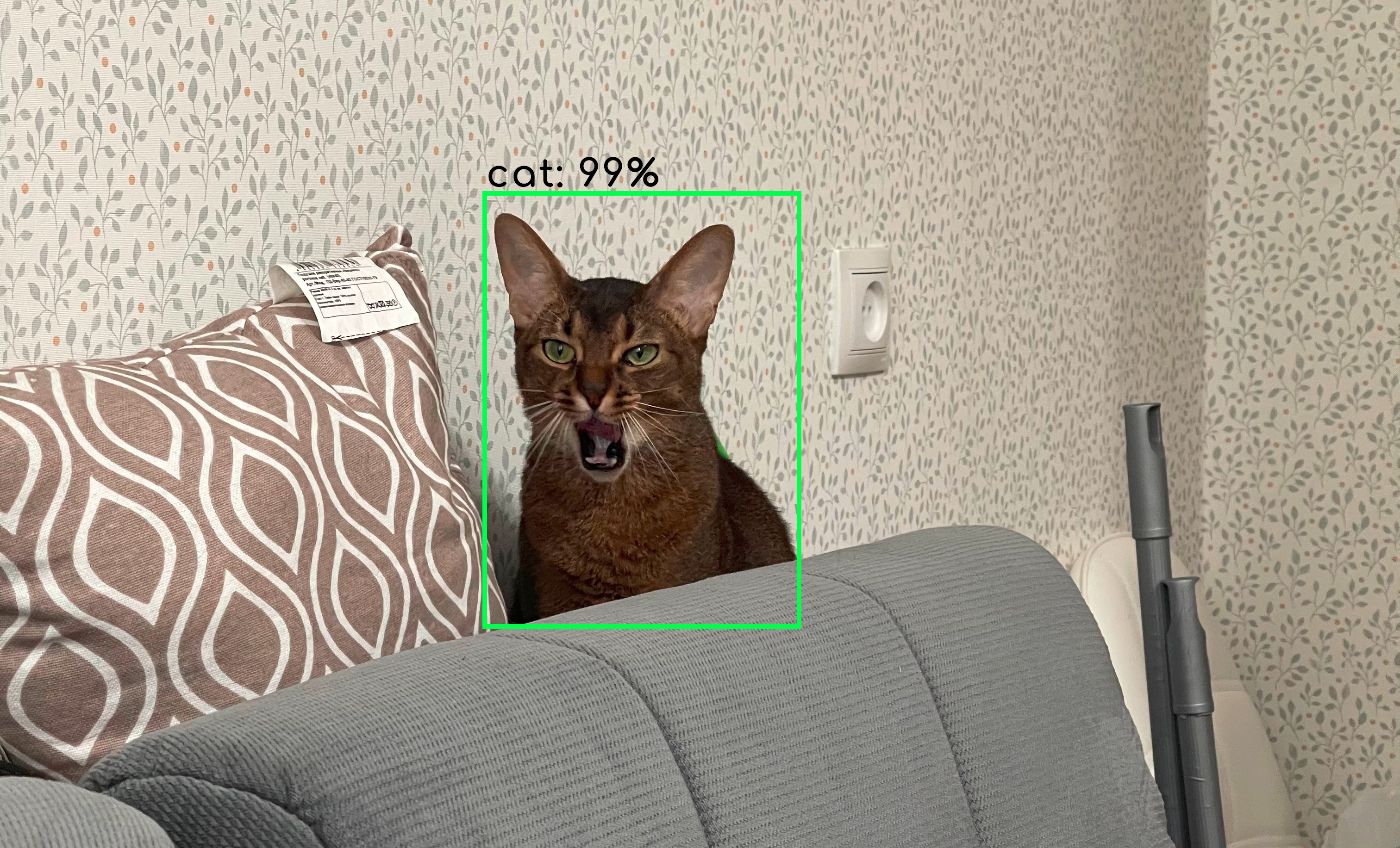

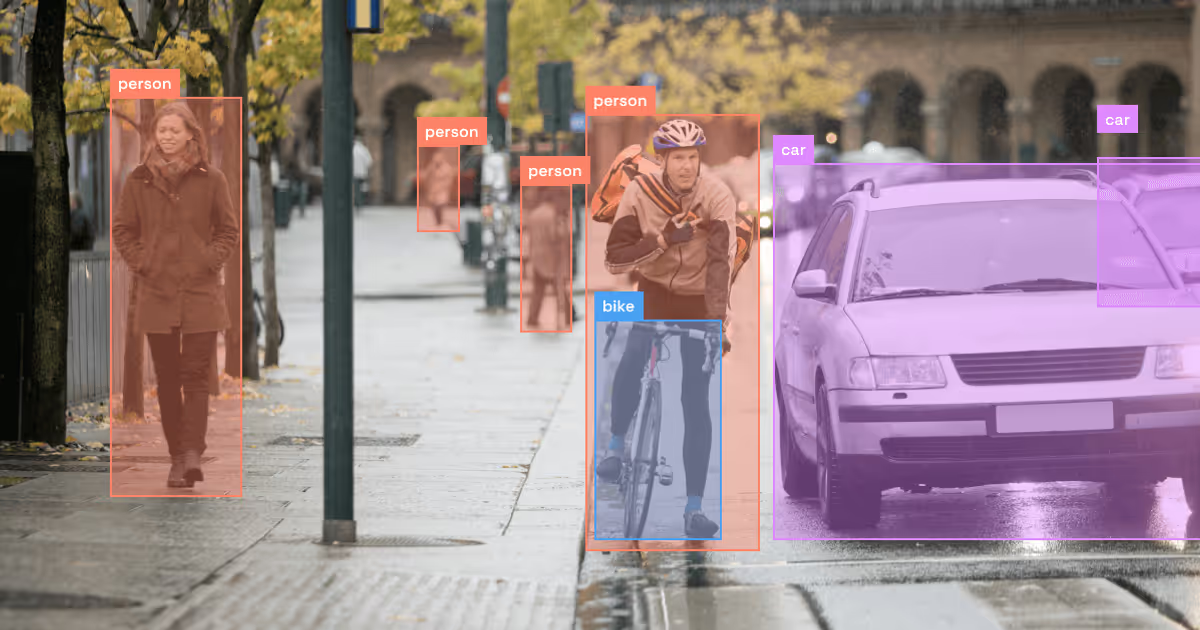

10. Object Detection Frameworks That Will Dominate 2023 and Beyond

Frameworks for object detection and computer vision tasks are indeed numerous. This article attempts to highlight the available frameworks for object detection.


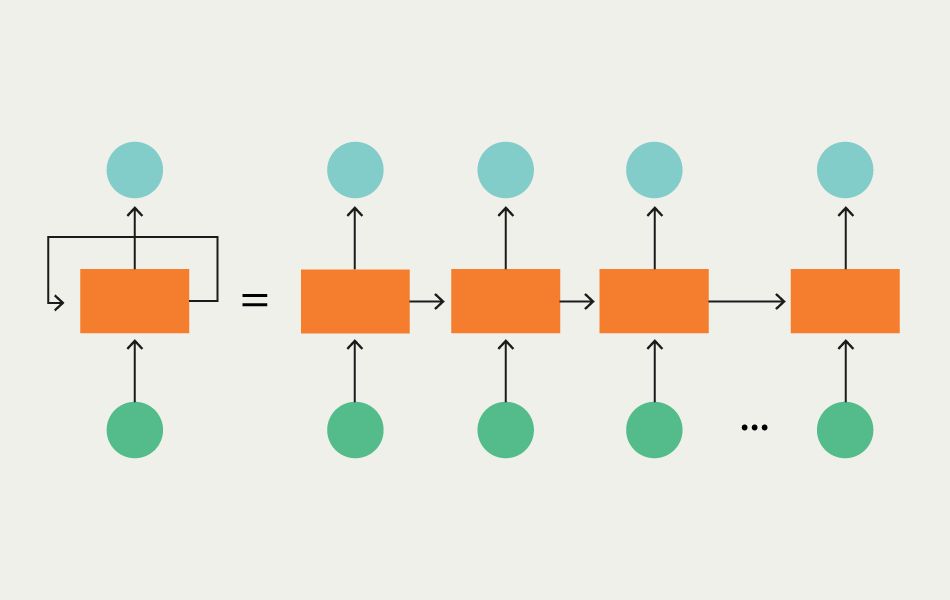

11. What is an RNN (Recurrent Neural Network) in Deep Learning?

The RNN is a popular type of neural network that is commonly used to solve natural language processing tasks.

12. Accelerating Neural Networks: The Power of Quantization

A hands-on guide to neural network quantization: theory, PyTorch implementation, and practical tips for optimizing models for edge devices

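The core idea of quantization can be sketched without any framework: map floating-point weights to small integers with a scale and a zero point, then map them back. The code below is a minimal affine (asymmetric) 8-bit sketch in plain Python. It does not use PyTorch's quantization API, and the function names and sample weights are assumptions for illustration.

```python
def quantize(values, num_bits=8):
    """Affine quantization of floats to unsigned integers."""
    qmin, qmax = 0, 2 ** num_bits - 1
    # Extend the range to include 0.0 so zero is exactly representable.
    lo = min(min(values), 0.0)
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin)
    if scale == 0.0:
        scale = 1.0  # all values are zero; any scale works
    zero_point = round(-lo / scale)
    quantized = [min(qmax, max(qmin, round(v / scale) + zero_point))
                 for v in values]
    return quantized, scale, zero_point

def dequantize(quantized, scale, zero_point):
    """Map integers back to approximate float values."""
    return [(q - zero_point) * scale for q in quantized]

# Hypothetical weights for illustration.
weights = [-1.0, -0.5, 0.0, 0.25, 1.0]
q_weights, scale, zero_point = quantize(weights)
restored = dequantize(q_weights, scale, zero_point)
```

Each restored value differs from the original by at most about one quantization step (`scale`), which is the accuracy traded away for a four-times smaller representation than 32-bit floats.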

13. Essential Guide to Transformer Models in Machine Learning

Transformer models have become the de facto standard for NLP tasks. As an example, I’m sure you’ve already seen the awesome GPT-3 Transformer demos and articles detailing how much time and money it took to train.

14. GANs or Generative Adversarial Networks: Learn By Building One

Machines have been trying for years to learn to recognize and identify the photos they have seen. In 2013, they succeeded in reaching human level.

15. Binary Classification: Understanding Activation and Loss Functions with a PyTorch Example

A binary classification neural network uses the sigmoid activation function on its final layer together with binary cross-entropy (BCE) loss. The final layer size should be 1.
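The pairing of sigmoid and BCE can be sketched in plain Python (rather than PyTorch, to keep the example dependency-free). The logits and targets below are made-up values, and the `eps` clamp is an assumption added for numerical safety.

```python
import math

def sigmoid(z):
    """Squash a single logit into a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def bce_loss(probs, targets, eps=1e-12):
    """Mean binary cross-entropy over a batch of probabilities."""
    total = 0.0
    for p, y in zip(probs, targets):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(probs)

# One logit per sample, because the final layer has size 1.
logits = [2.0, -1.0, 0.0]
targets = [1, 0, 1]
probs = [sigmoid(z) for z in logits]
loss = bce_loss(probs, targets)
```

A confident correct prediction (logit 2.0 for target 1) contributes little to the loss, while the undecided one (logit 0.0) contributes the most.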

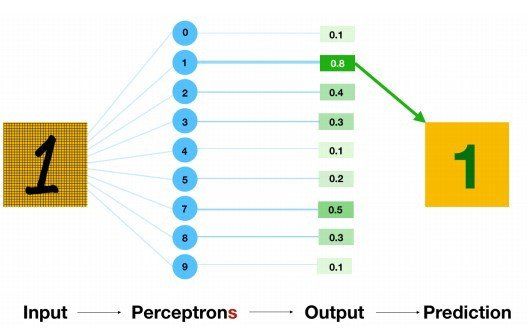

16. How to Perform MNIST Digit Recognition with a Multi-layer Neural Network

The human visual system is a marvel of the world. People can readily recognise digits, but it is not as simple as it looks. The human brain has billions of neurons and trillions of connections between them, which makes this exceptionally complex task of image processing feel effortless.

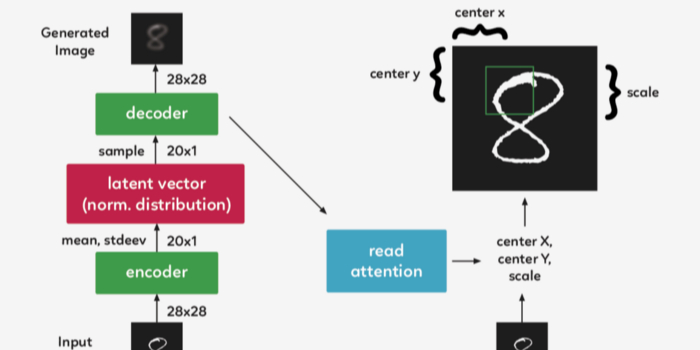

17. Understanding A Recurrent Neural Network For Image Generation

The purpose of this post is to implement and understand Google DeepMind’s paper DRAW: A Recurrent Neural Network For Image Generation. The code is based on the work of Eric Jang, whose original implementation took only 158 lines of Python code.

18. How to Build Your Own PyTorch Neural Network Layer from Scratch

This is actually an assignment from Jeremy Howard’s fast.ai course, lesson 5. I’ve showcased how easy it is to build a Convolutional Neural Network from scratch using PyTorch. Today, let’s try to delve even deeper and see if we can write our own nn.Linear module. Why waste your time writing your own PyTorch module when it’s already been written by the devs over at Facebook?

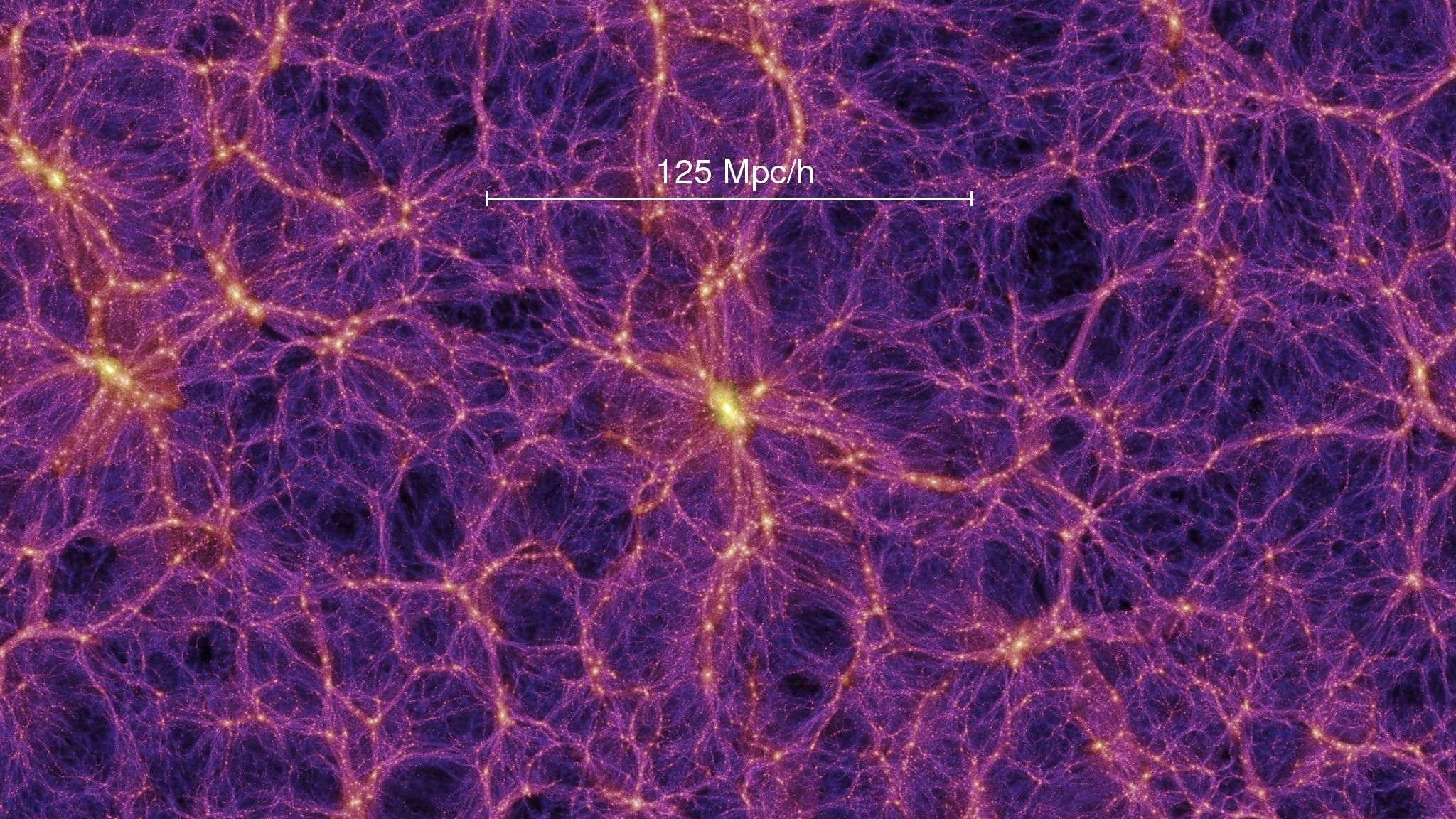

19. Our Universe Is A Massive Neural Network: Here's Why

Some days ago, I read an article on arXiv from Vitaly Vanchurin about ‘The world as a neural network’.


20. Using FPGA in the Near Future: Trends and Predictions

The FPGA market continues to boom. According to the global forecast, its CAGR will average 8.6% over the next few years. But the most interesting part is the new applications of the tech, which are sometimes more akin to science fiction than to real life.

21. Model Quantization in Deep Neural Networks

To get your AI models to work on laptops, mobiles, and tiny devices, quantization is essential.

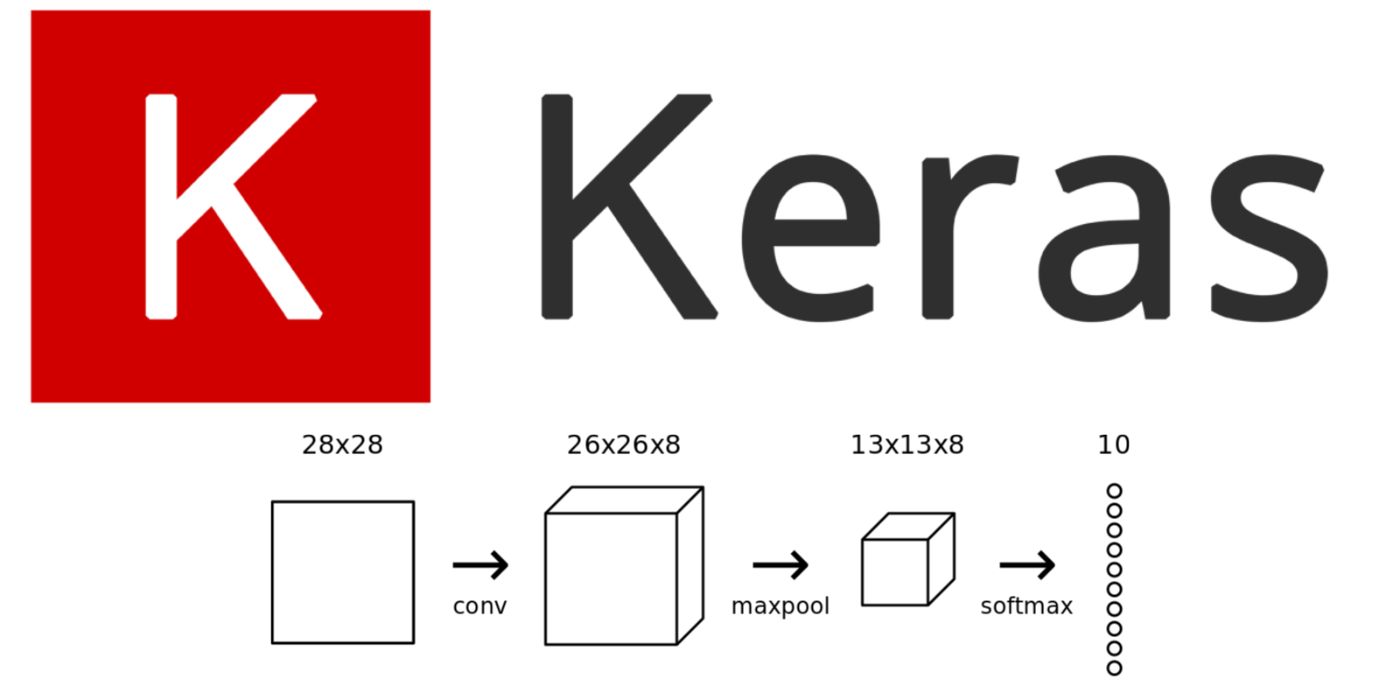

22. 10 Best Keras Datasets for Building and Training Deep Learning Models

This article looks at the Best Keras Datasets for Building and Training Deep Learning Models, accessible to developers and researchers worldwide.


23. Multi-Class Classification: Understanding Activation and Loss Functions in Neural Networks

A multi-class classification neural network uses the softmax activation function on its final layer together with cross-entropy (CE) loss. The final layer size equals the number of classes (classes_number).
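The softmax and cross-entropy pairing can be sketched in plain Python. The three logits below stand in for a final layer of size 3 (one output per class). The values are made up, and the `eps` floor is an assumption added for numerical safety.

```python
import math

def softmax(logits):
    """Turn a vector of logits into probabilities that sum to 1."""
    m = max(logits)                      # subtract max for stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, target_index, eps=1e-12):
    """Negative log-probability assigned to the true class."""
    return -math.log(max(probs[target_index], eps))

# Final layer size = number of classes (3 in this toy example).
logits = [2.0, 1.0, 0.1]
probs = softmax(logits)
loss = cross_entropy(probs, target_index=0)
```

The loss shrinks toward zero as the probability of the true class approaches 1, and grows without bound as it approaches 0.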

24. Can the Nvidia RTX A4000 ADA Handle Machine Learning Tasks?

Is the Nvidia RTX A4000 ADA suitable for Machine Learning?


25. Artificial Intelligence, Machine Learning, and Human Beings

In a conversation with HackerNoon CEO, David Smooke, he identified artificial intelligence as an area of technology in which he anticipates vast growth. He pointed out, somewhat cheekily, that it seems like AI could be further along in figuring out how to alleviate some of our most basic electronic tasks—coordinating and scheduling meetings, for instance. This got me reflecting on the state of artificial intelligence. And mostly why my targeted ads suck so much...


26. Complex Document Recognition: OCR Doesn’t Work and Here’s How You Fix It

OCR solutions don't work, at least when it comes to complex documents. Learn how you can supercharge OCR tools with AI to handle any document.

27. Why Quadratic Cost Functions Are Ineffective in Neural Network Training

Explore why quadratic cost functions hinder neural network training and how cross-entropy improves learning efficiency in deep learning models.

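A common way to see the difference is to compare gradients for a single sigmoid neuron that is confidently wrong. With quadratic cost the gradient with respect to the pre-activation carries an extra factor of the sigmoid's derivative, which is tiny when the neuron saturates. With cross-entropy that factor cancels. The sketch below assumes one sigmoid output neuron and a made-up input, and is not taken from the linked post.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def quadratic_grad(z, y):
    """d/dz of 0.5 * (a - y)^2 with a = sigmoid(z)."""
    a = sigmoid(z)
    return (a - y) * a * (1.0 - a)   # includes sigmoid'(z) = a(1 - a)

def cross_entropy_grad(z, y):
    """d/dz of -[y ln a + (1 - y) ln(1 - a)] with a = sigmoid(z)."""
    return sigmoid(z) - y            # sigmoid'(z) cancels out

# Saturated and wrong: output near 1 while the target is 0.
z, y = 5.0, 0.0
g_quadratic = quadratic_grad(z, y)
g_cross_entropy = cross_entropy_grad(z, y)
```

In this setup the cross-entropy gradient is more than a hundred times larger than the quadratic one, so the neuron corrects its mistake much faster.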

28. So You Want to Study Machine Learning and Civil Engineering?

Machine Learning (ML), taken literally, means writing algorithms that help machines learn better than humans. ML is an aspect of Artificial Intelligence (AI) that deals with developing a mathematical model that is fed training data to identify patterns in that data and produce an output.

29. Intro to Neural Networks: CNN vs. RNN

In machine learning, each type of artificial neural network is tailored to certain tasks. This article will introduce two types of neural networks: convolutional neural networks (CNN) and recurrent neural networks (RNN). Using popular YouTube videos and visual aids, we will explain the difference between CNNs and RNNs and how they are used in computer vision and natural language processing.

30. Neural Tech and Brain Computer Interfaces (BCI) in Video Games: An Overview

Recently, I attended a virtual conference on the use of neuro technology and BCI (Brain Computer Interfaces or BMI, Brain Machine Interfaces) in gaming, put on by NeurotechX.


31. What Are Convolution Neural Networks? [ELI5]

The Universal Approximation Theorem says that a Feed-Forward Neural Network (also known as a Multi-layered Network of Neurons) can act as a powerful approximator to learn the non-linear relationship between the input and output. But the problem with the Feed-Forward Neural Network is that it is prone to over-fitting because of the many parameters the network has to learn.

32. Where to Learn Machine and Deep Learning for Free

33. How To Create A Simple Neural Network Using Python

I built a simple Neural Network using Python that outputs a target number given a specific input number.


34. Top 10 Machine Learning Optimized Graphics Cards

How to choose the right graphics card and maximize the efficiency of processing large amounts of data and performing parallel computing.


35. Retraining Machine Learning Model Approaches

Retraining Machine Learning Model, Model Drift, Different ways to identify model drift, Performance Degradation


36. The Weird and Wonderful World of AI Art

While the vast majority of developments in AI technology have centered around practical solutions such as self-driving cars and facial recognition, there's a growing number of artists using AI systems to develop new ideas for artistic projects and generate entirely unique pieces of work.


37. Elon Musk's Neuralink Looks to Implant Neural Chips In Humans, In a Year's Time

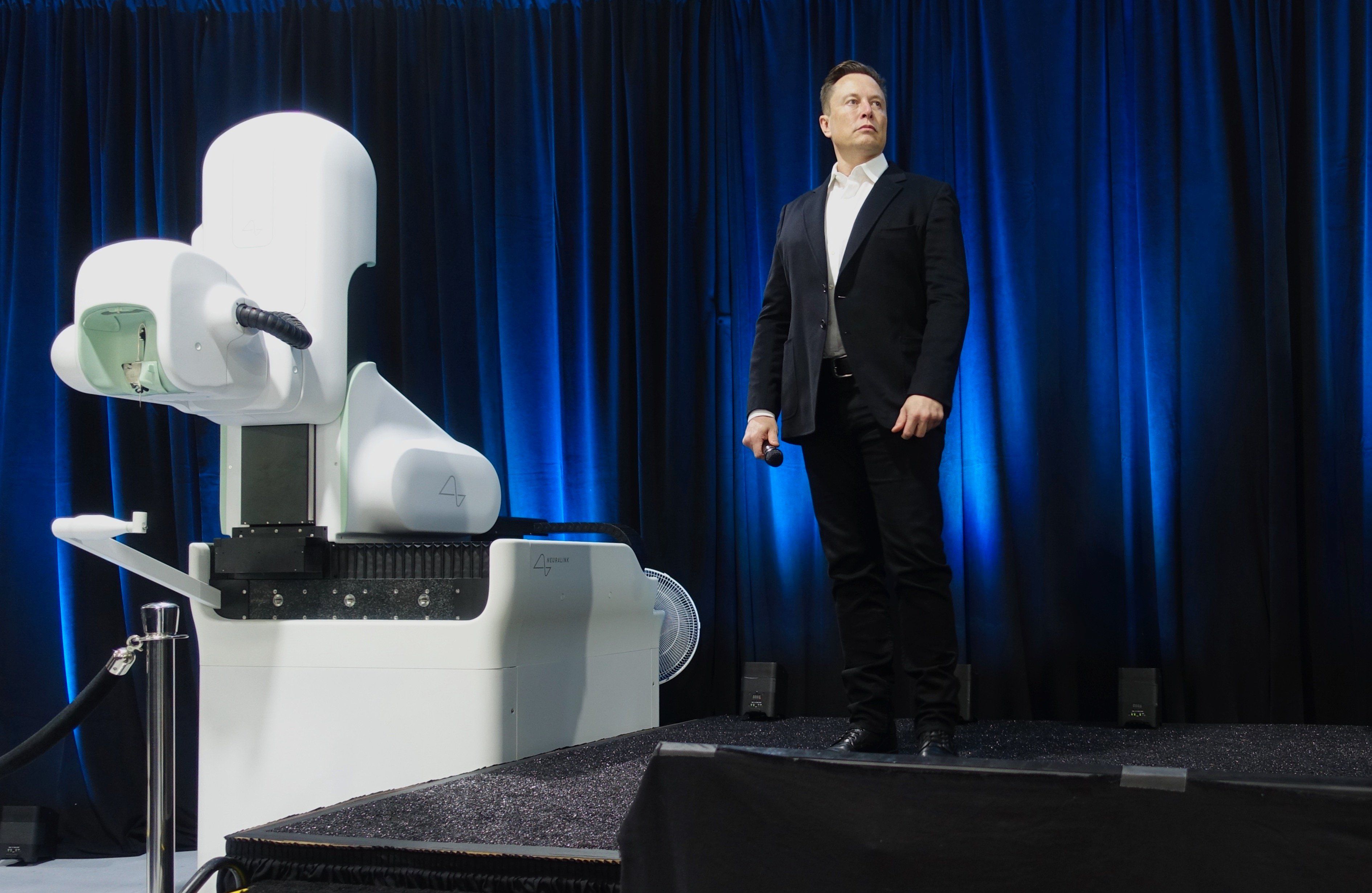

We may be on the verge of a deeper connection with our computers. What used to be mere science fiction has become the very stuff of today’s news, with Elon Musk in the headlines.

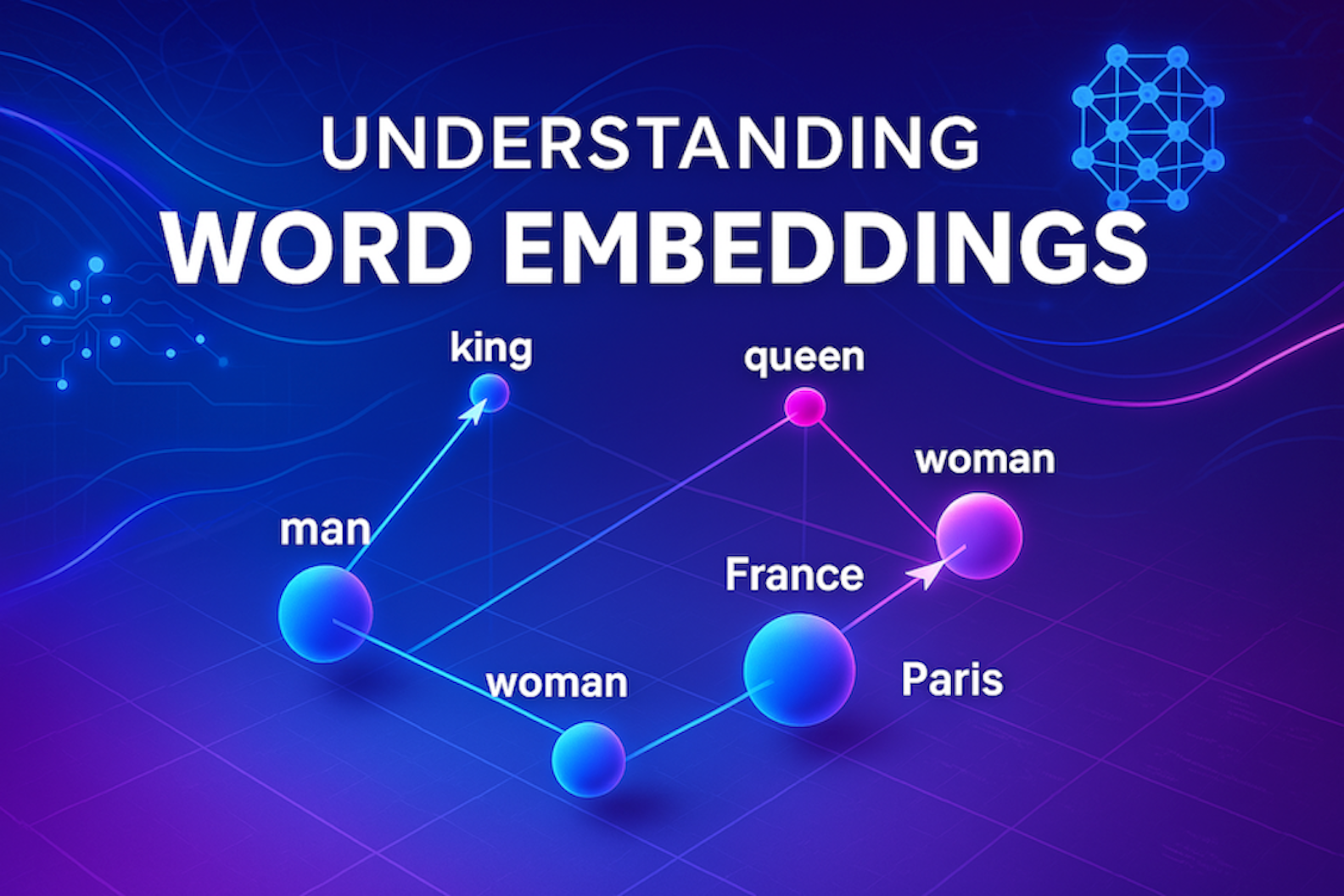

38. How to Remove Gender Bias in Machine Learning Models: NLP and Word Embeddings

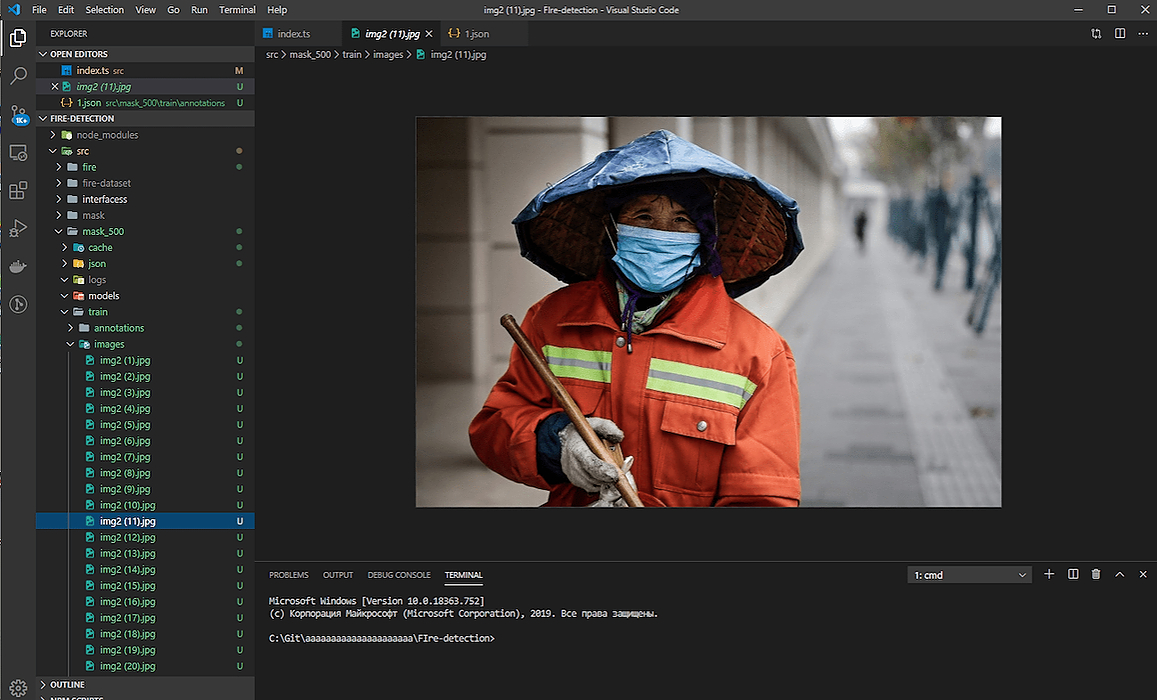

Most word embeddings in use are glaringly sexist. Let us look at some ways to de-bias such embeddings.

39. Learning AI If You Suck at Math - Part Eight - The Musician in the Machine

"AI isn't just creating new kinds of art; it's creating new kinds of artists." - Douglas Eck, Magenta Project

40. Thermodynamic Computing: How to Save Machine Learning by Replacing Transistors

Thermodynamic computing is some kind of quantum computing that could save machine learning


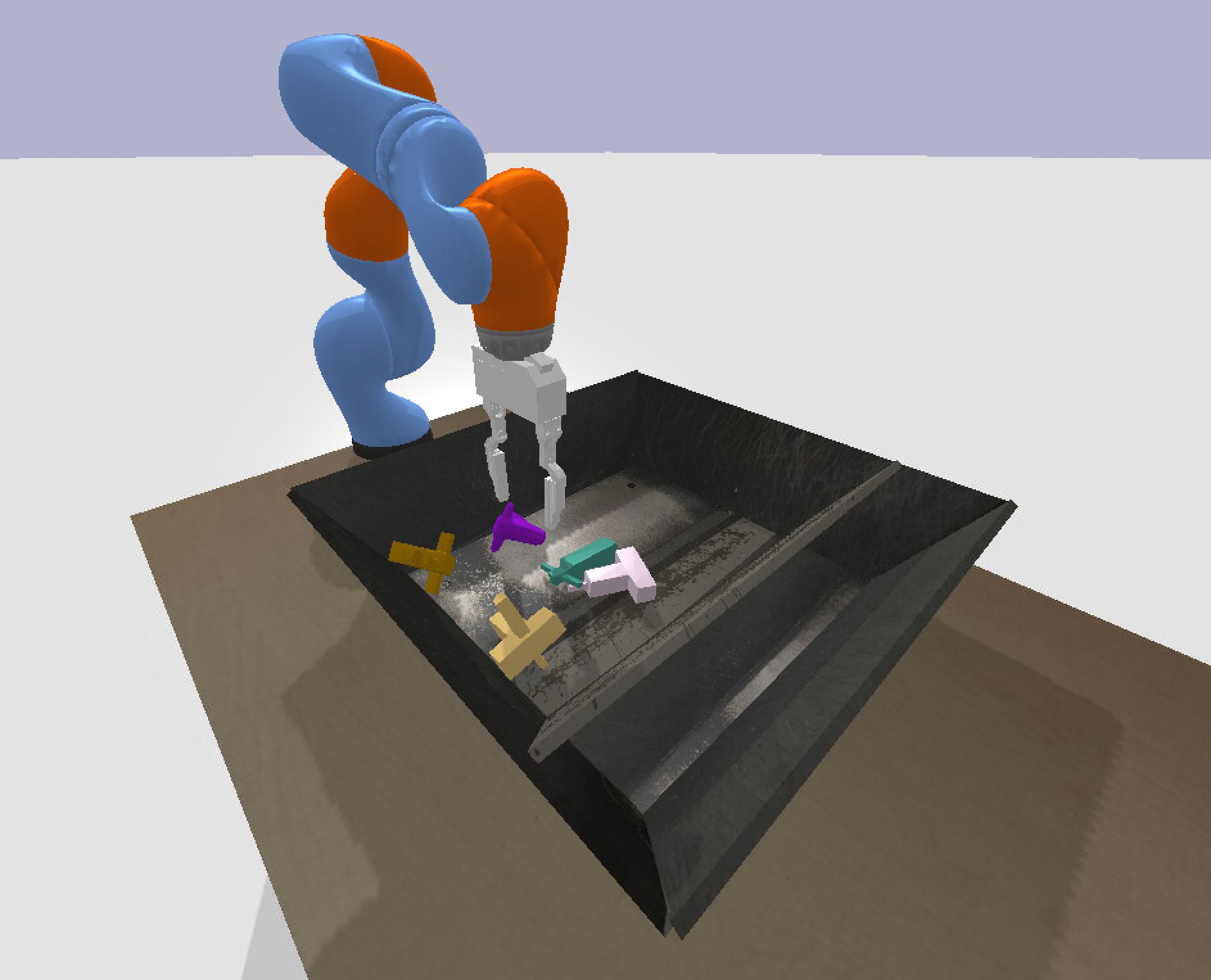

41. Using Reinforcement Learning to Build a Self-Learning Grasping Robot

Tips and tricks to build an autonomous grasping Kuka robot


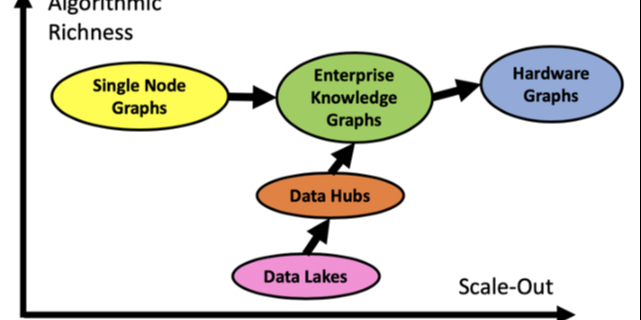

42. Knowledge Graphs May Be the Missing Link Businesses Need for an AI that Works

Knowledge graphs provide the missing “truth layer” for AI that transforms probabilistic outputs into real world business acceleration.


43. GPT in 200 Lines: The Beautiful Simplicity Behind Modern AI

How does GPT really work? Explore Andrej Karpathy’s tiny 200-line implementation and discover the elegant math behind modern AI.


44. ML for Diabetes from Bangladesh

45. Creating neural networks without human intervention

…And where is the blockchain in it?


46. How to Teach a Tiny AI Model Everything a Huge One Knows

Discover the power of Knowledge Distillation in AI! Learn how AI models transfer knowledge to create faster, smaller, and smarter systems!

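A common formulation of knowledge distillation trains the small "student" model to match the large "teacher" model's softened output distribution. The sketch below shows that soft-target loss in plain Python: a temperature-scaled softmax followed by a KL divergence. The logits, the temperature of 2.0, and the function names are assumptions for illustration, and in practice this term is usually combined with the ordinary loss on true labels.

```python
import math

def softmax_t(logits, temperature):
    """Softmax with temperature; higher values give softer outputs."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions."""
    return sum(pi * math.log((pi + eps) / (qi + eps))
               for pi, qi in zip(p, q))

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """Soft-target loss, scaled by T^2 as is conventional."""
    p = softmax_t(teacher_logits, temperature)
    q = softmax_t(student_logits, temperature)
    return temperature ** 2 * kl_divergence(p, q)

# Hypothetical logits for a 3-class problem.
teacher_logits = [4.0, 1.0, 0.5]
student_logits = [2.0, 1.5, 0.5]
soft_loss = distillation_loss(teacher_logits, student_logits)
```

The softened teacher distribution carries information about how similar the wrong classes are to the right one, which is what the student learns beyond the hard labels.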

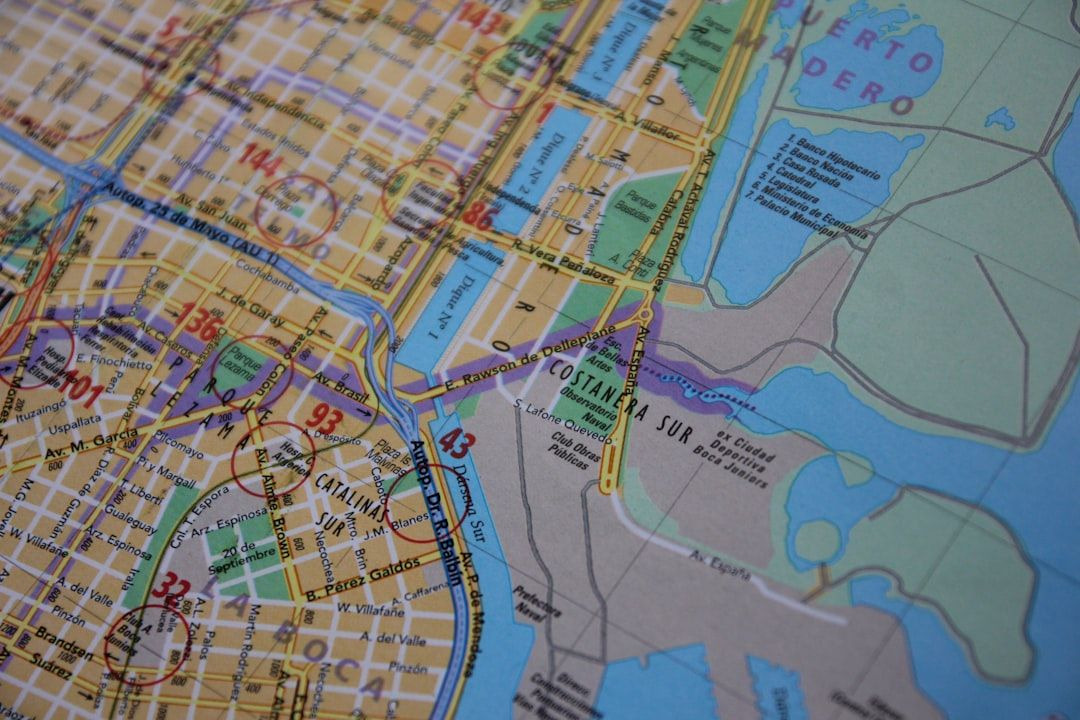

47. Using GANs to Create Anime Faces via Pytorch

Most of us in data science have seen a lot of AI-generated people in recent times, whether it be in papers, blogs, or videos. We’ve reached a stage where it’s becoming increasingly difficult to distinguish between actual human faces and faces generated by artificial intelligence. However, with the currently available machine learning toolkits, creating these images yourself is not as difficult as you might think.

48. Human Intelligence or Artificial Intelligence? We Need Both.

Artificial intelligence (AI) has reached a tipping point, leveraging the massive pools of data gathered by every app, website, and device in our lives to make increasingly sophisticated decisions on our behalf. AI is at work in our inboxes sorting and blocking emails. It takes and processes our increasingly complex requests through voice assistants. It supplements customer support through chatbots, and heavily automates complex processes to reduce the workload for knowledge workers. Evidently, devices can adapt on the fly to human behavior.


49. Machine Learning News Roundup - 6 Essential AI Articles of 2019

50. Getting Started with Natural Language Processing: US Airline Sentiment Analysis

By: Comet.ml and Niko Laskaris, customer facing data scientist, Comet.ml


51. Getting Started with Micrograd TS

A TypeScript version of karpathy/micrograd. A tiny scalar-valued autograd engine and a neural net on top of it.


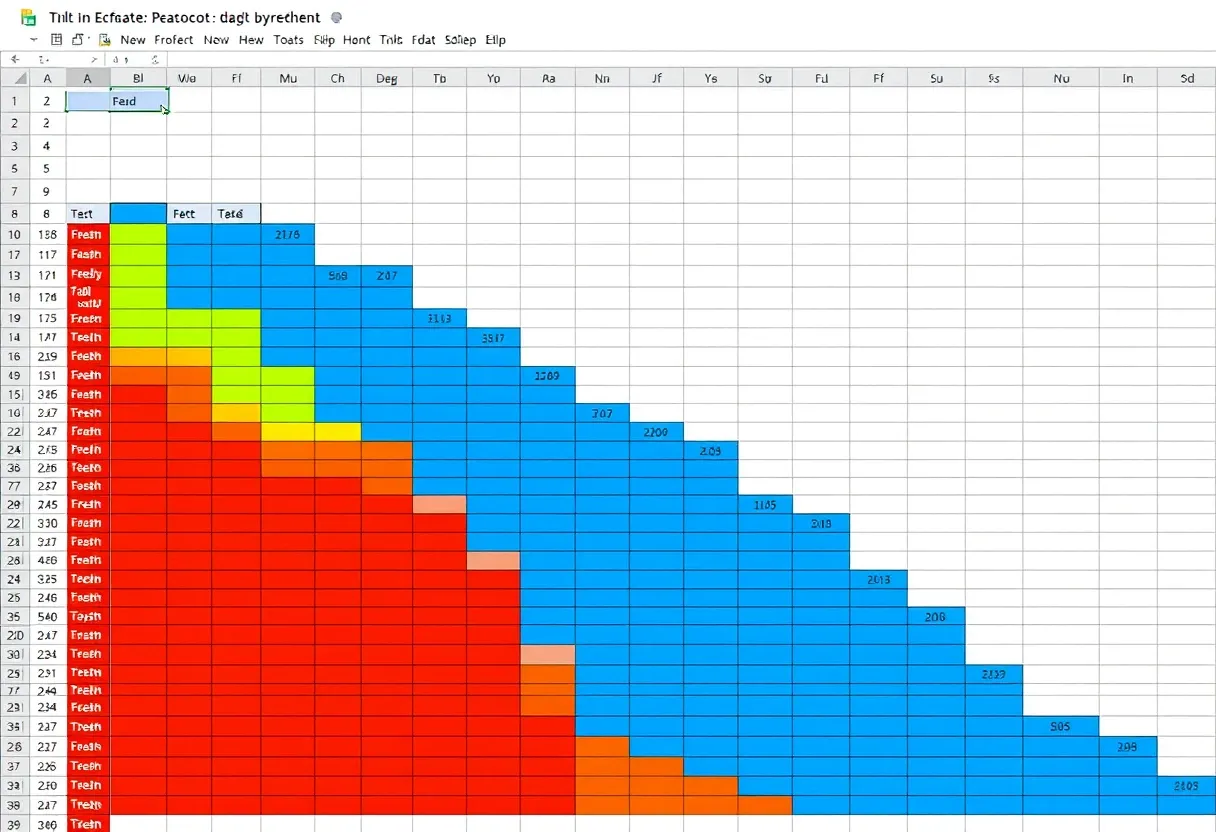

52. Using Machine Learning to Recommend Investments in P2P Lending

Introducing PeerVest: A free ML app to help you pick the best loan pool on a risk-reward basis


53. Comparing Kolmogorov-Arnold Network (KAN) and Multi-Layer Perceptrons (MLPs)

Discover how Kolmogorov-Arnold Networks (KAN) challenge traditional MLPs with trainable activation functions, offering a potential leap toward AGI.


54. 3 Trends of the Neural Network Usage for Algorithmic Trading

Developers of AI systems can create complex algorithms for a wide range of use cases, including in investing and trading.


55. The Lottery Ticket Hypothesis: Why Pruned Models Can Sometimes Learn Just as Well as Full Networks

Discover how the Lottery Ticket Hypothesis reshaped our understanding of pruning, showing small subnetworks can rival full neural networks.


56. Mission Generation Using Classic Machine Learning and Recurrent Neural Networks in Zombie State

We’ll walk through the big picture of generating missions using classic machine learning and recurrent neural networks for roguelike games.


57. Graph Algorithms, Neural Networks, and Graph Databases

Year of the Graph Newsletter, September 2019


58. What Neural Networks Teach Us About Schizophrenia

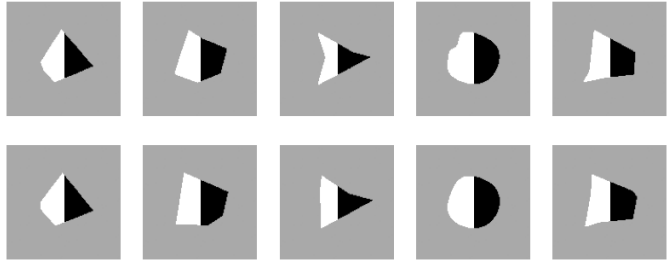

Pretrained artificial neural networks used to work like a black box: you hand them an input and they predict an output with a certain probability, but without us knowing the internal processes by which they arrived at their prediction. A neural network for recognizing images usually consists of around 20 layers of neurons, trained with millions of images to tweak the network parameters to give high-quality classifications.

59. The Dragon Hatchling Learns to Fly: Inside AI’s Next Learning Revolution

Exploring Brain-like Dragon Hatchling (BDH) — a new AI model that learns on the fly, adapts like a brain, and challenges the transformer era.

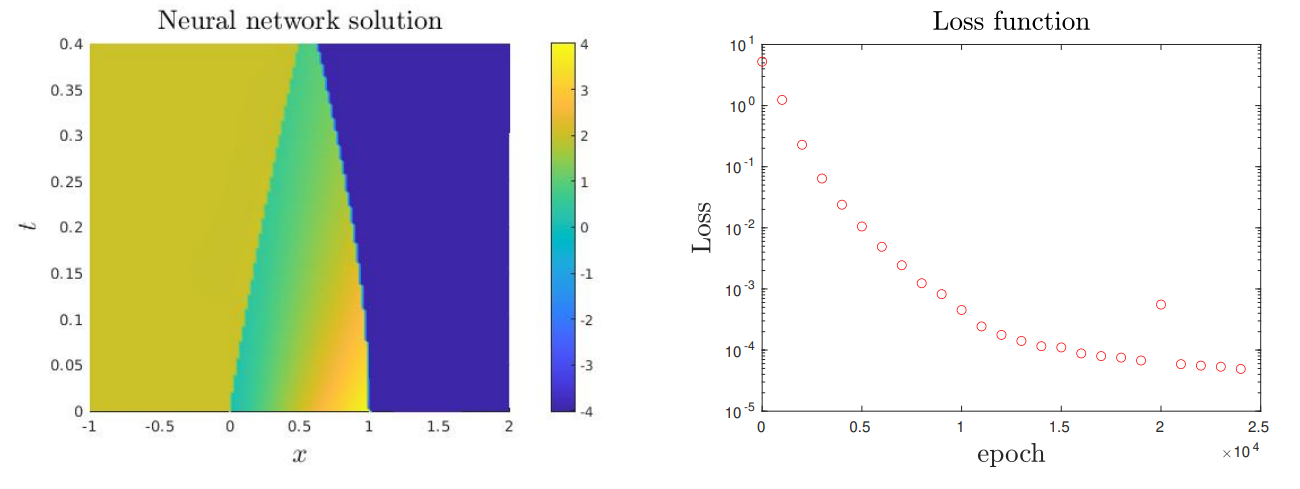


60. I Let Karpathy's AutoResearch Agent Run Overnight!

A hands-on review of Andrej Karpathy's autoresearch repo. See what happens when an AI agent autonomously optimizes a neural network while you sleep.

61. 3 Ways to Easily Visualize Keras Machine Learning Models

Guide explaining how to use Netron, visualkeras, and TensorBoard to visualize Keras machine learning models.


62. Federated Learning: A Decentralized Form of Machine Learning

Major companies using AI and machine learning now use federated learning – a form of machine learning that trains algorithms on a distributed set of devices.


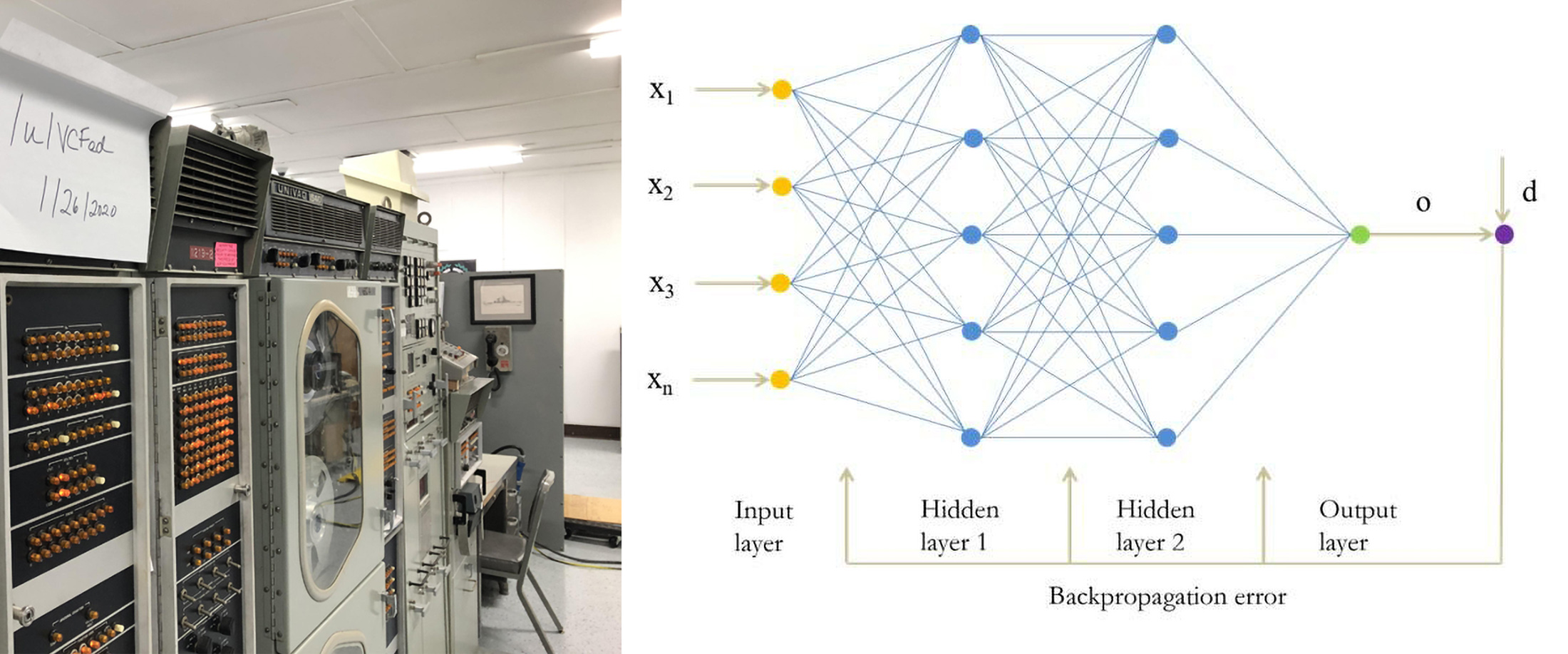

63. Backpropagation - The Most Fundamental Training Systems Algorithm in Modern Generative AI

A simple history and explanation of the significance, importance, and the almost infinite power of neural networks.


64. The Dark Matter of AI: Common Sense Is Not So Common

There are still areas where AI falls short and causes problems and frustration for end-users, and these areas pose a great challenge for researchers right now.

65. Why We Urgently Need to Optimize Neural Rendering

A list of industries and use cases where NeRF technology is most eagerly awaited.

66. But Is It Art?—AI and the Algorithms vs. Artists Debate

There is a common belief among techies these days that with the arrival of AI and algorithms, professions such as that of the artist are becoming extinct. This is a misconception.

67. How to Build a Basic Recommendation Engine without Machine Learning

Explore the intricacies of building a recommendation engine without relying on machine learning models.


68. A Guide to Doing a Digital Forensics Examination on Digital Media (USB)

Digital forensics plays a major role in forensic science. It’s a combination of people, process, technology, and law.

69. Deep Learning Runs on Floating-Point Math. What If That’s a Mistake?

Dive into a comparative analysis of logarithmic and floating-point arithmetic in neural nets using the widely used MNIST dataset.

70. What's Next for Adaptive Neural Systems?

There is a trend in neural networks that has existed since the beginning of the deep learning revolution which is succinctly captured in one word: scale.


71. How to 'Learn' Generative AI

Want to know what Generative AI is and how to learn it? This comprehensive guide lets you explore everything about Generative AI and its use cases.

72. Creating a Racist AI for my Browser with JavaScript and Brain JS

Can AI be racist with supervised learning?


73. Top Machine Learning Algorithms

74. This Neural Network Paints Depressing Russian Cityscapes

A Russian doomer neural network creates paintings and music videos. This tutorial shows how StyleGAN2 was trained on thousands of images of Soviet architecture.

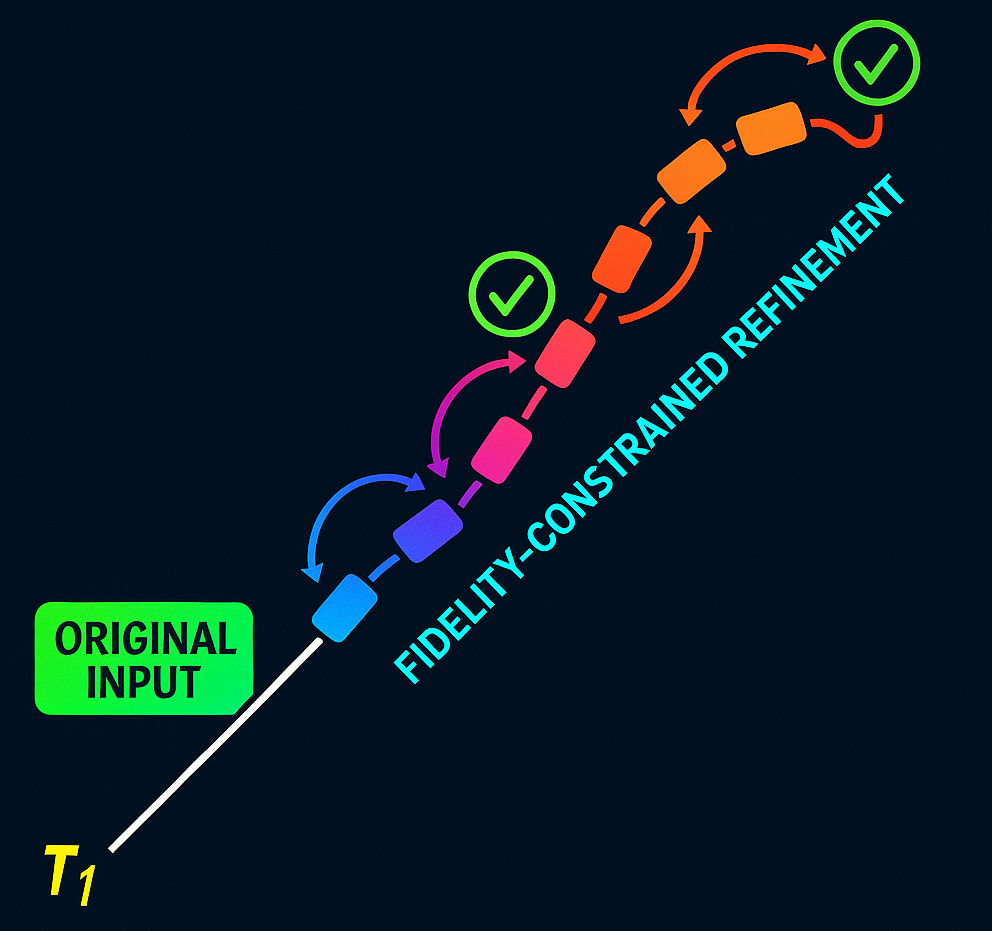

75. Zeno’s Paradox and the Problem of AI Tokenization

Token prediction forces LLMs to drift. This piece shows why, what Zeno can teach us about it, and how fidelity-based auditing could finally keep models grounded.

76. The Dawn of the Transformer Neural Networks

Why are GPT-3 and all the other transformer models so exciting? Let's find out!

77. RethNet Model: Object-by-Object Learning for Detecting Facial Skin Problems

In August 2019, a group of researchers from lululab Inc proposed a state-of-the-art concept that uses a semantic segmentation method to accurately detect the most common facial skin problems. The work was accepted to an ICCV 2019 workshop.

78. Revamping Long Short-Term Memory Networks: XLSTM for Next-Gen AI

XLSTMs, with novel sLSTM and mLSTM blocks, aim to overcome LSTMs' limitations and potentially surpass transformers in building next-gen language models.

79. Loan Risk Prediction Using Neural Networks

A Step-by-Step Guide (With a Healthy Dose of Data Cleaning)

80. Your AI Can’t Understand Language Until It Learns This Trick

Discover the power of word embeddings in NLP! Learn how these vector-based representations capture semantic and syntactic relationships between words.

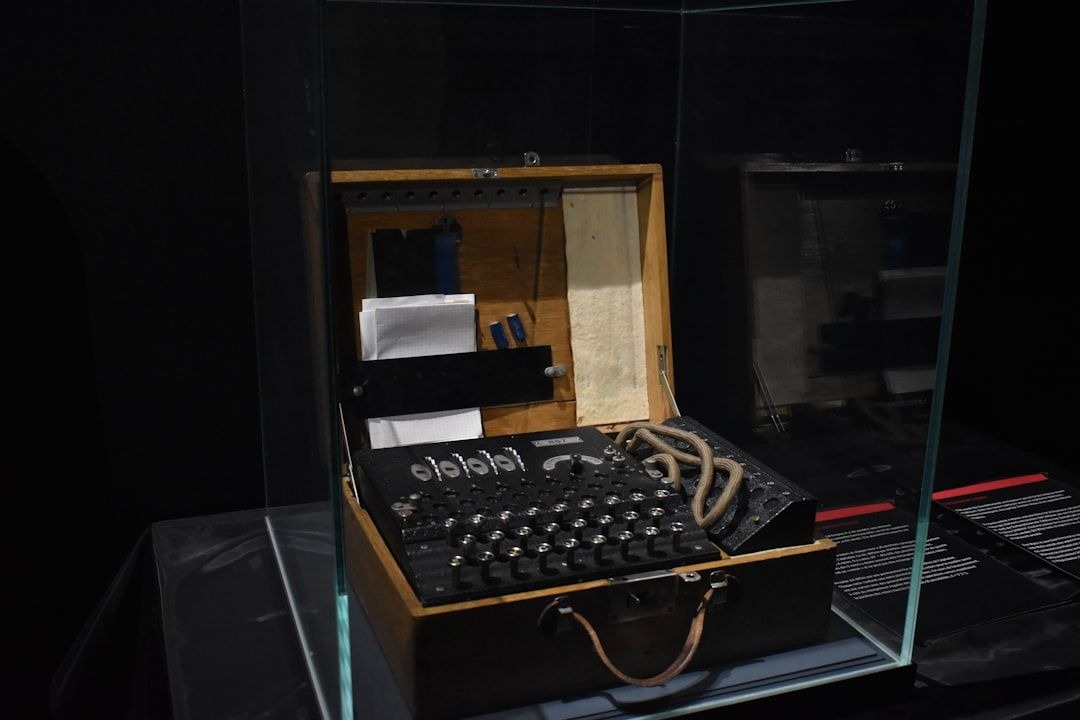

81. Backprop — The Russian Algorithm the West Claimed as Its Own

Backprop wasn’t invented in 1986. It was published in the USSR in 1974 — 6 months before Werbos. The author? Alexander Galushkin.

82. Playing Checkers With Genetic Algorithms: Evolutionary Learning in Traditional Games

A small article to immerse yourself in the world of genetic algorithms, neural networks, and all that stuff.

83. How Neural Networks Hallucinate Missing Pixels for Image Inpainting

When a human sees an object, certain neurons in the brain’s visual cortex light up with activity, but when we take hallucinogenic drugs, these drugs overwhelm our serotonin receptors and lead to distorted visual perception of colours and shapes. Similarly, deep neural networks, which are modelled on structures in our brain, store data in huge tables of numeric coefficients that defy direct human comprehension. But when a neural network’s activations are overstimulated (virtual drugs), we get phenomena like neural dreams and neural hallucinations. Dreams are the mental conjectures produced by our brain when the perceptual apparatus shuts down, whereas hallucinations are produced when this perceptual apparatus becomes hyperactive. In this blog, we will discuss how this phenomenon of hallucination in neural networks can be utilized to perform the task of image inpainting.

84. 82 Stories To Learn About Neural Networks

Learn everything you need to know about Neural Networks via these 82 free HackerNoon stories.

85. AI's Growing Role in Cybersecurity

Learn how AI is transforming cybersecurity through enhanced threat detection, automation, and its future potential alongside human expertise.

86. Neuralink Draws Interface Between a Computer and the Human Mind

August 29 might be remembered as the day technology overcame the limitations of human life. In one of the most compelling events in technological history, tech entrepreneur Elon Musk presented a live demonstration of his brain-hacking device, LINK V0.9.

87. Is Society Just a Really Complicated Brain?

Can any system with sufficient complexity have "thoughts?" Is our society any different?

88. How War Led to AI Fighting Fake News

How does AI help to fight fake news? The use case that we created during the war.

89. Style Transferring with TensorFlow

Style transfer is a computer vision-based technique combined with image processing. Learn about style transfer with TensorFlow, a prominent framework in AI & ML.

90. Variational Autoencoders (VAE): How AI Learns Whether Your Eyes Are Open Or Closed

Classify open/closed eyes using Variational Autoencoders (VAE).

91. DeepComposer By Amazon: First Neural Network Music Synthesizer

Amazon introduced the DeepComposer music synthesizer and the eponymous cloud-based music creation service based on generative adversarial neural networks. Using them, the user can set the main melody on the synthesizer and get a full song, in which the original part is supplemented with drums, guitar and other instruments.

92. The Hunt for Data: Creating a Computer Vision Dataset for Road Safety

In this article, I would like to share my own experience of developing a smart camera for cyclists with an advanced computer vision algorithm.

93. Generative AI: The Next Frontier in Healthcare

Med-PaLM can answer a wide range of medical questions, including those about diagnosis, treatment, and prevention.

94. How Photonic Processors Will Save Machine Learning

A quick introduction to LightOn, or how photonic processors will save machine learning.

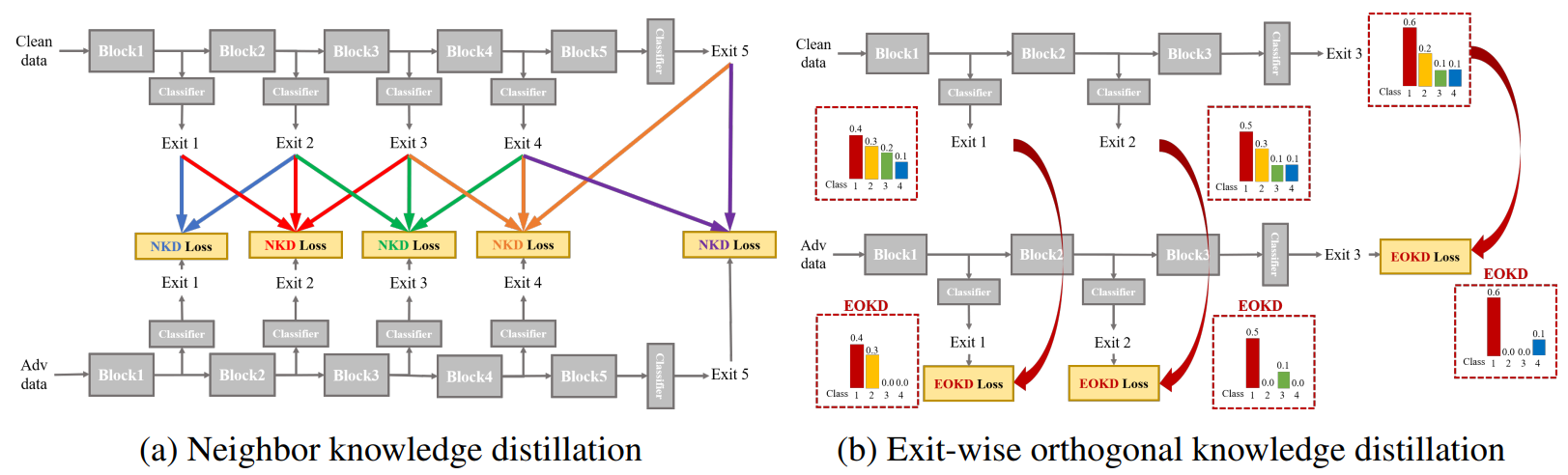

95. Knowledge-Distillation-Based Adversarial Training for Robust Multi-Exit Neural Networks

Discover NEO-KD, a knowledge-distillation strategy that boosts adversarial robustness in multi-exit neural networks.

96. Researchers Push Real-Time AI Game Simulation Beyond Traditional Engines

How modern AI models—from NeRFs to diffusion—are reshaping real-time game simulation and interactive world modeling.

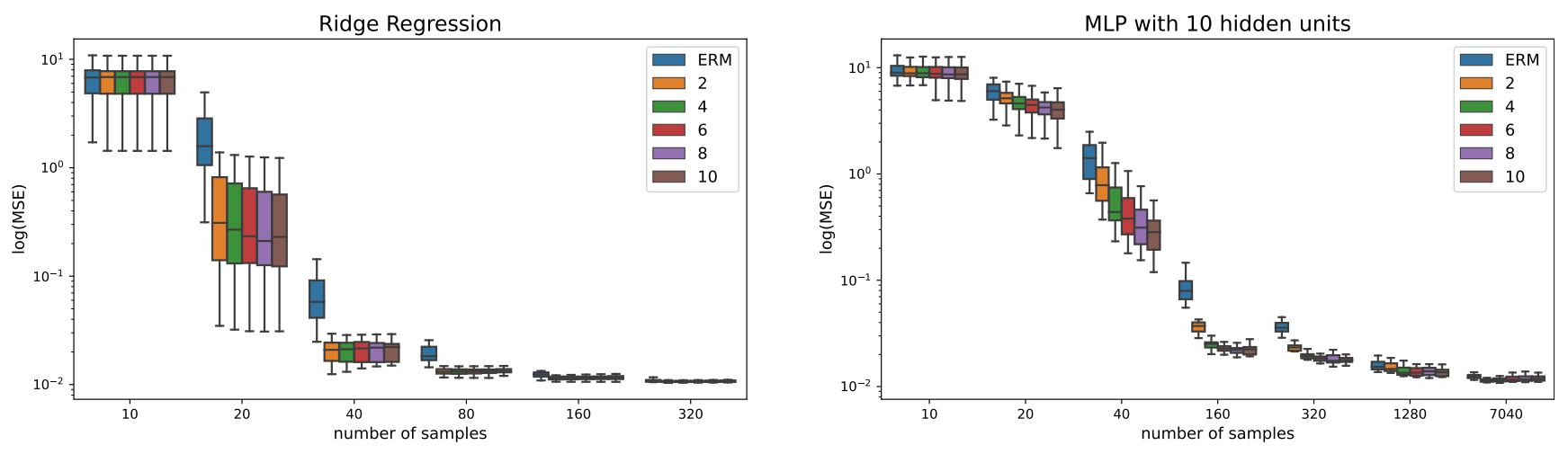

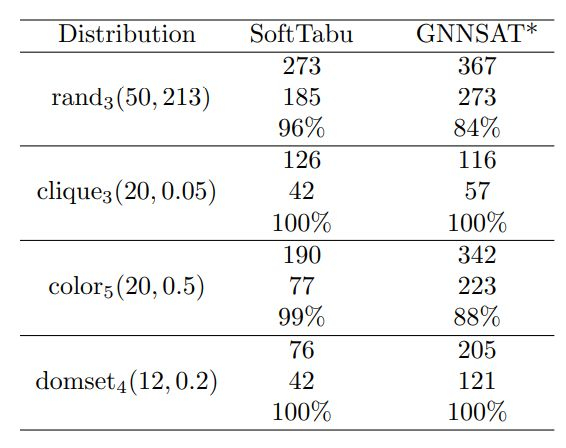

97. Unveiling the Limits of Learned Local Search Heuristics: Are You the Mightiest of the Meek?

Dive into the challenges faced in empirically evaluating neural network-local search heuristics hybrids for combinatorial optimization.

98. Speedrun Your Understanding of Machine Learning.. in 52 seconds 🏎️

When you learn a new tool, tech, or anything else, you do NOT start with its implementations; you start with the ideas, concepts, and problems the tool solves!

99. The Hitchhikers's Guide to PyTorch for Data Scientists

PyTorch has more or less become one of the de facto standards for creating neural networks, and I love its interface. Yet it is somewhat difficult for beginners to get a hold of.

100. Build a Scalable Semantic Search System with Sentence Transformers and FAISS

Build a lightning-fast semantic search system using Sentence Transformers and FAISS to deliver context-aware results at scale with blazing speed.

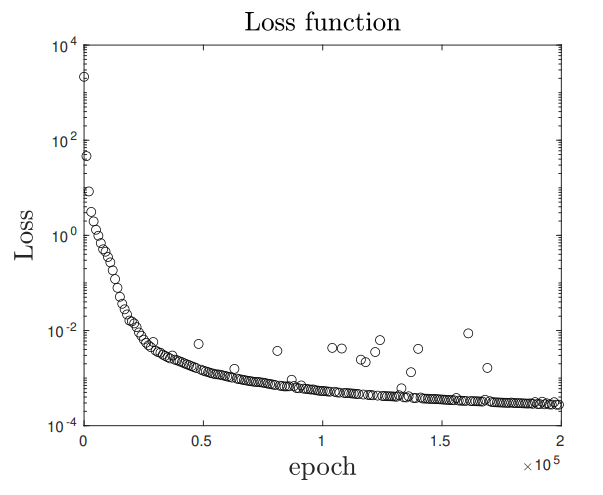

101. Brain-Inspired AI: Early Results from a Radical New Neuron Model

This work represents a complete research pipeline built from scratch to test and validate a novel neural network architecture approach, starting with a neuron.

102. Unleashing Transformers: Overcoming RNN Conventions

How Transformers addressed the challenges posed by RNNs.

103. Meta's Emu: The Foundational Model for Emu Edit and Emu Video

Meta's Emu paper unveils revolutionary image generation via quality-tuning, shifting LLMs' paradigm in AI development.

104. High-Resolution Photorealistic Image Translation in Real Time

You can apply any design, lighting, or graphics style to your 4K image in real time using this new machine learning-based approach.

105. From Fixed Labels to Prompts: How Vision-Language Models Are Re-Wiring Object Detection

How open-vocabulary vision-language object detectors overcome closed-set limits, with VOC/COCO/LVIS benchmarks and a hybrid recipe for fast edge deployment.

106. Enhancing Robotics with Instance Segmentation: Achieving Precise Object Localization

Boosting Automation with Instance Segmentation: Accurate Object Localization for Industrial Robots

107. Objects Classification Using CNN-based Model

— All the images (plots) are generated and modified by the Author.

108. The Topology of Meaning: Towards a "Unified Field Theory" for Artificial Intelligence

A bold argument that AI must move beyond language and tokens toward a unified topological model where words, faces, and sounds share the same meaning space.

109. Between the Hows and Whys of Artificial Intelligence [feat. The Good, The Bad, and the Ugly]

Artificial intelligence can mean a lot of things. It’s been used as a catch-all for various disciplines in computer science including robotics, natural language processing, or artificial neural networks. That’s because, generally speaking, when we talk about artificial intelligence we’re always talking about the simulation of human thought by a mechanical process.

110. How to Build Neural Network that Recognizes People Wearing Masks

The CDC officially recommends wearing face masks (even though not everyone complies). Meanwhile, governments in European countries like Spain, Ukraine, or certain regions in Italy require everyone, big or small, to wear masks all the time, when shopping, walking a dog, or simply going outside. Breaking the requirements could result in a hefty fine.

111. Machine Learning, 5G and Data Science Will be Critical to the Future of the Internet of Things

By 2020, the total number of Internet-connected devices will be between 25 and 50 billion.

112. What is an Artificial Neural Network (ANN)?

Artificial neural networks mimic the functioning of neurons in the human brain. They can learn from their original training and future runs.

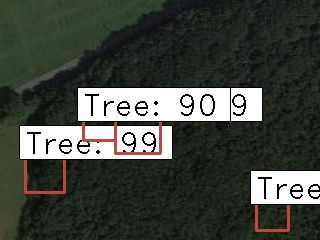

113. How To Identify Trees with Deep Learning

Idea / inspiration

114. Neural Networks and Deep Learning

Before you can code neural networks in any language or toolkit, first, you must understand what they are.

115. The Pain Points of Scaling Data Science

When building a machine learning model, data scaling is the most significant element of data pre-processing. Scaling can make the difference between a weak machine learning model and a stronger one.

116. Are Brain-Computer Interfaces at Risk of Mass Cyberattacks?

Explore the potential consequences of software glitches in brain-computer interfaces (BCIs) and how they could impact cognitive abilities and mental states.

117. QLoRA: Fine-Tuning Your LLMs With a Single GPU

QLoRA is the first paper that showed we can train LLMs on a single GPU. This article explains the approach of QLoRA in simple terms.

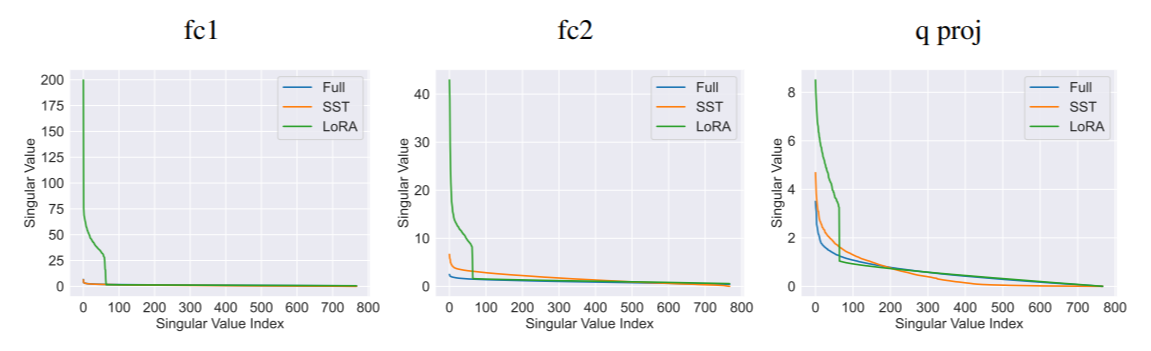

118. What Is LoRA? The Low-Ranking Adaptation of LLMs Explained

To get Large Language Models to work within your budget, in terms of both compute and memory, LoRA is a fundamental parameter-efficient fine-tuning technique.

119. What Can Recurrent Neural Networks in NLP Do?

Recurrent Neural Networks (RNNs) have played a major role in sequence modeling in Natural Language Processing (NLP). Let’s see what the pros and cons of RNNs are.

120. How Do Deep Neural Networks Work?

Every day we encounter AI and neural networks in some way: from everyday phone features such as face detection and speech or image recognition to more sophisticated applications such as self-driving cars and gene-disease prediction. We think it is time to finally sort out what AI consists of, what a neural network is, and how it works.

121. Machine Learning in Java: Getting Started with DeepLearning4J, Tribuo, and Smile

Learn how to build machine learning models in Java using DeepLearning4J, Tribuo, and Smile.

122. The Noonification: UX Considerations for Better Multi-Factor Authentication (4/12/2024)

4/12/2024: Top 5 stories on the HackerNoon homepage!

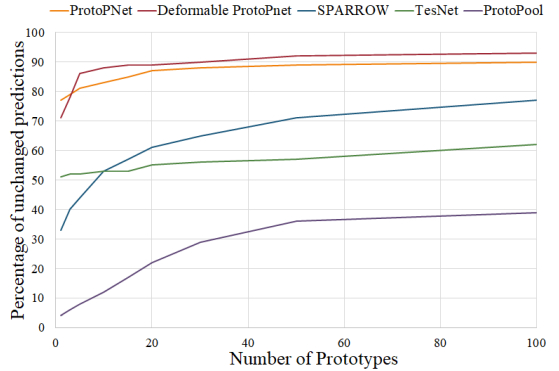

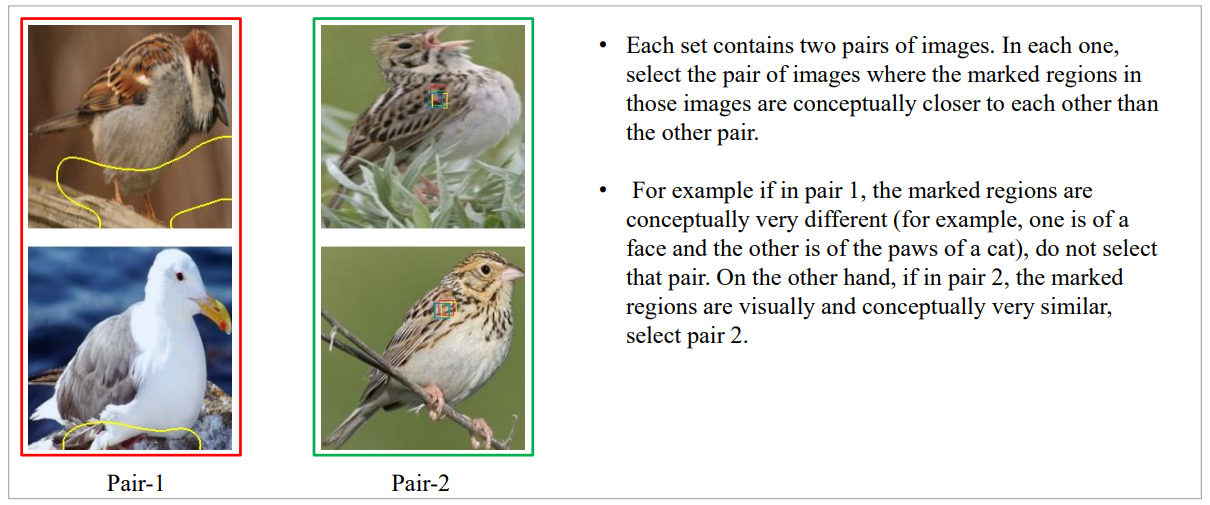

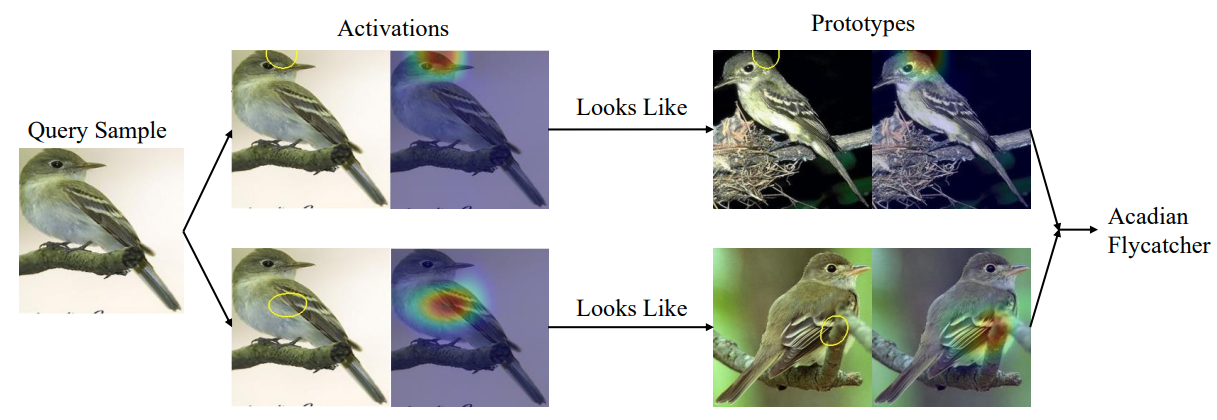

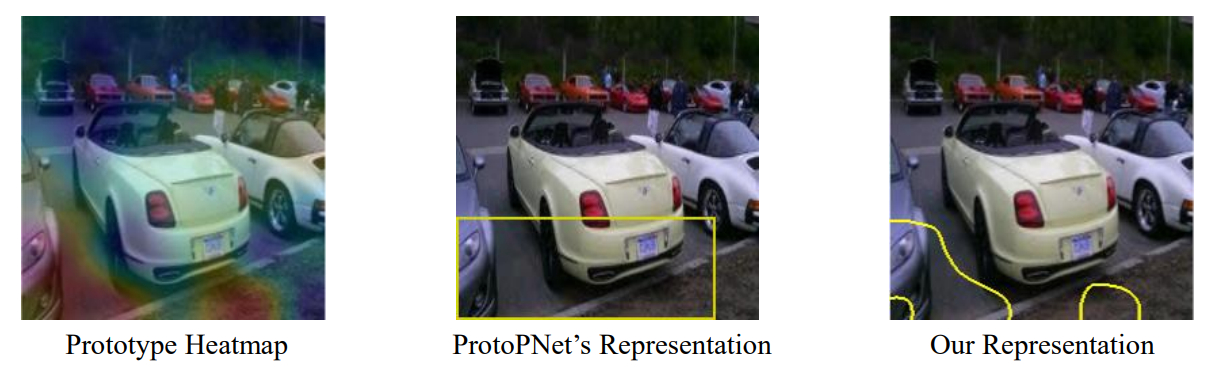

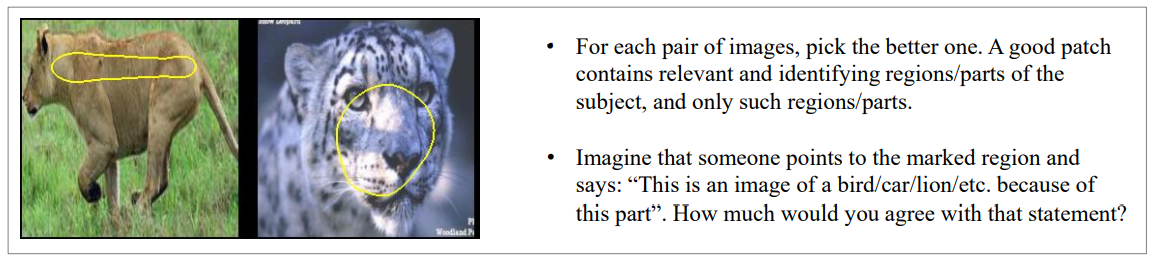

123. Human Understanding of AI Decisions

Explore an experiment designed to evaluate the interpretability of the decision-making process in prototype-based models.

124. 3 Things I Learned Building My First Neural Network

I’ve been working with massive data sets for several years at companies like Facebook to analyze and address operational challenges, from inventory to customer lifetime value. But I hadn’t worked yet on something this ambitious.

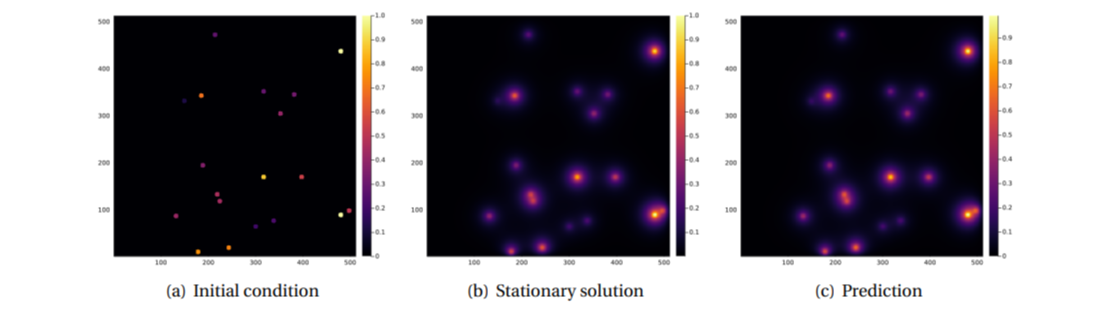

125. Integrating Physics-Informed Neural Networks for Earthquake Modeling: Summary & References

This study uses Physics-Informed Neural Networks to model earthquakes and invert fault friction parameters, integrating data with physical constraints.

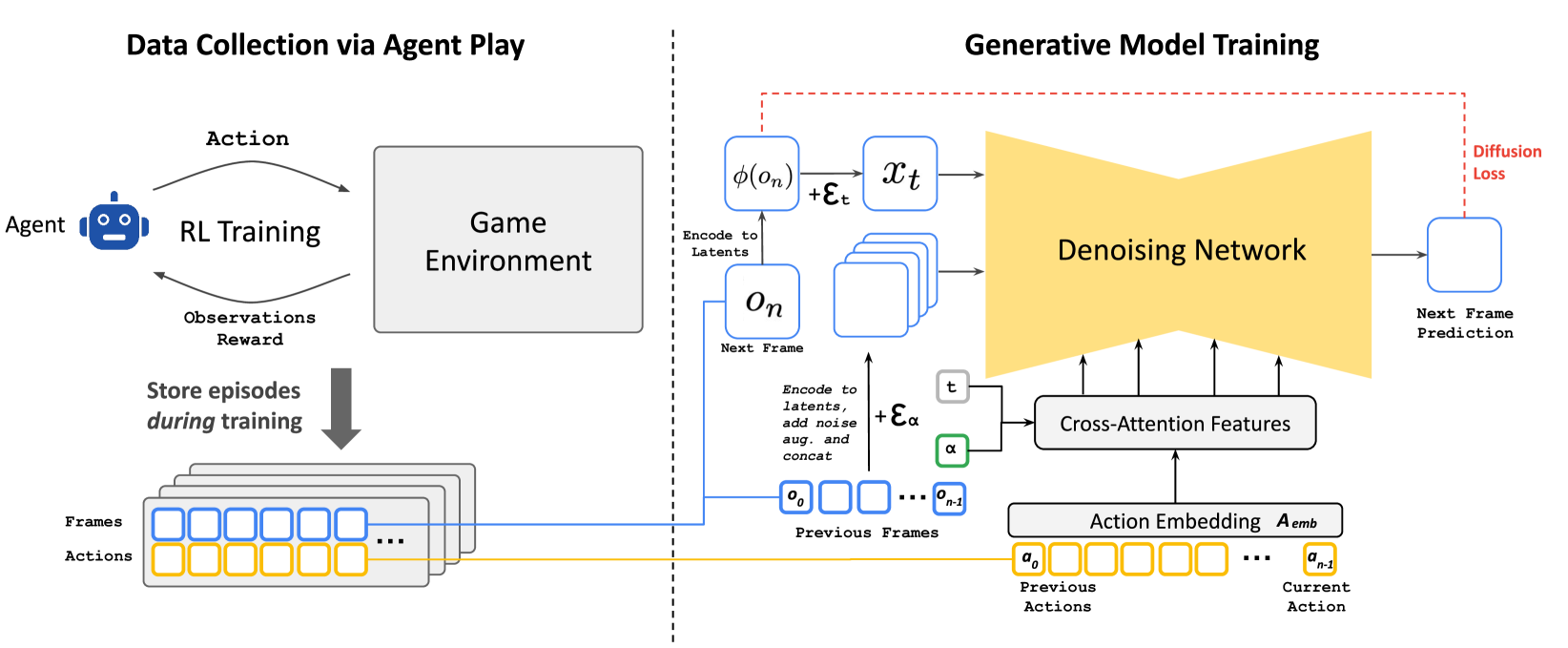

126. How Reinforcement Learning and Stable Diffusion Are Being Combined to Simulate Game Worlds

A detailed experimental setup combining PPO-based reinforcement learning with diffusion models to train agents in simulated game environments.

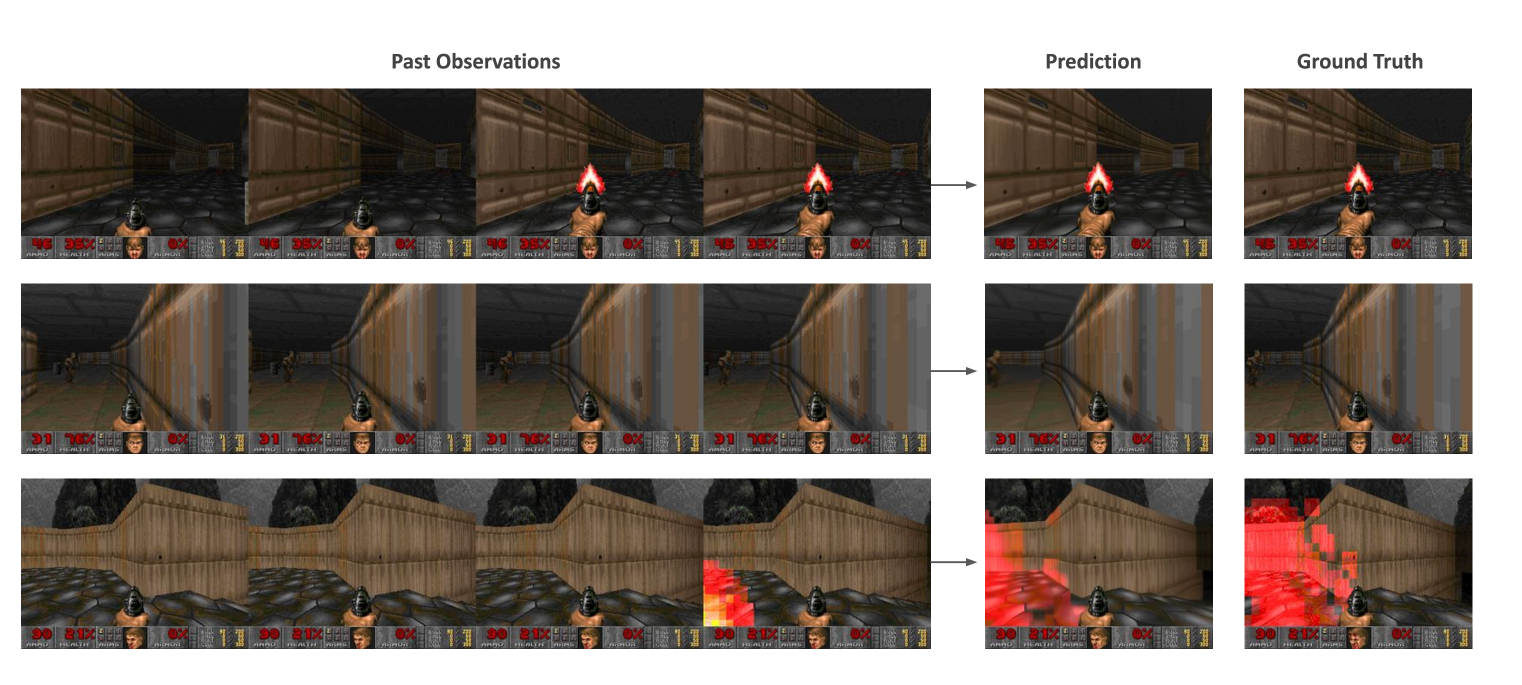

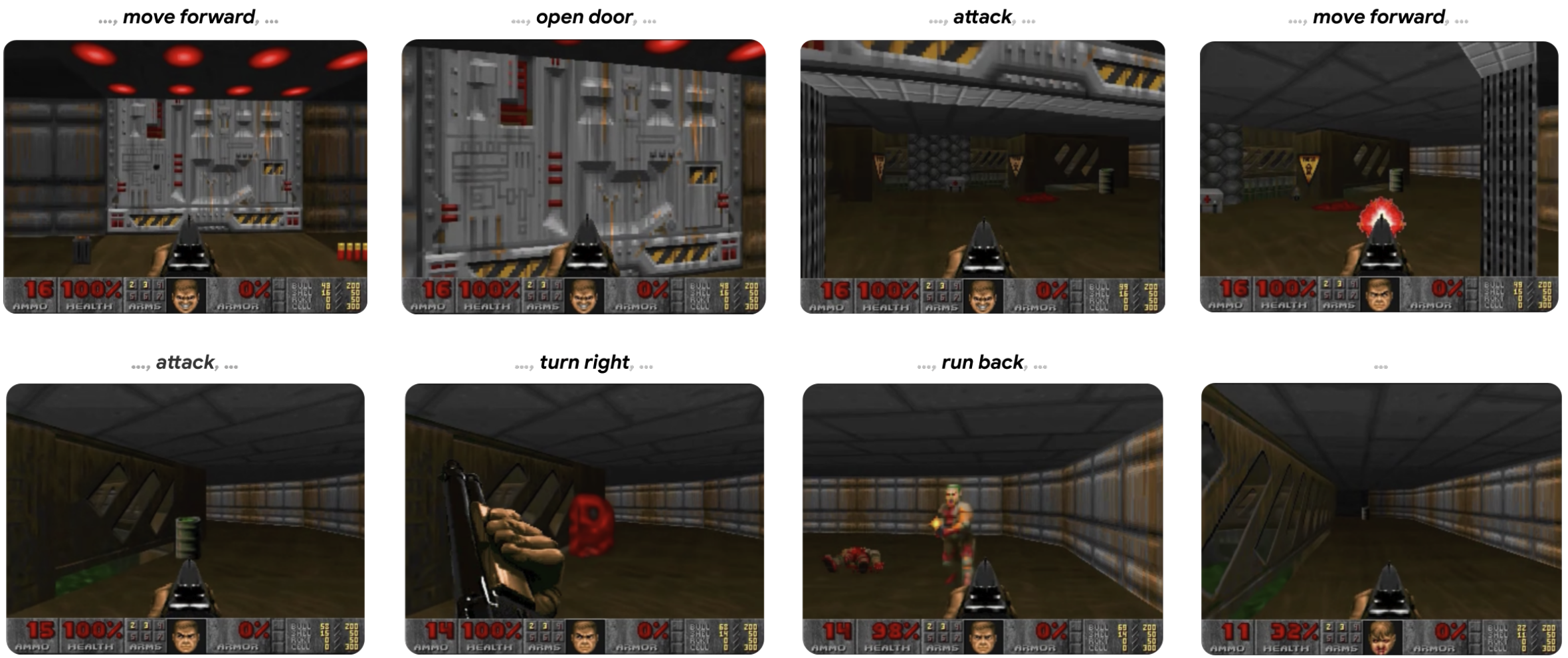

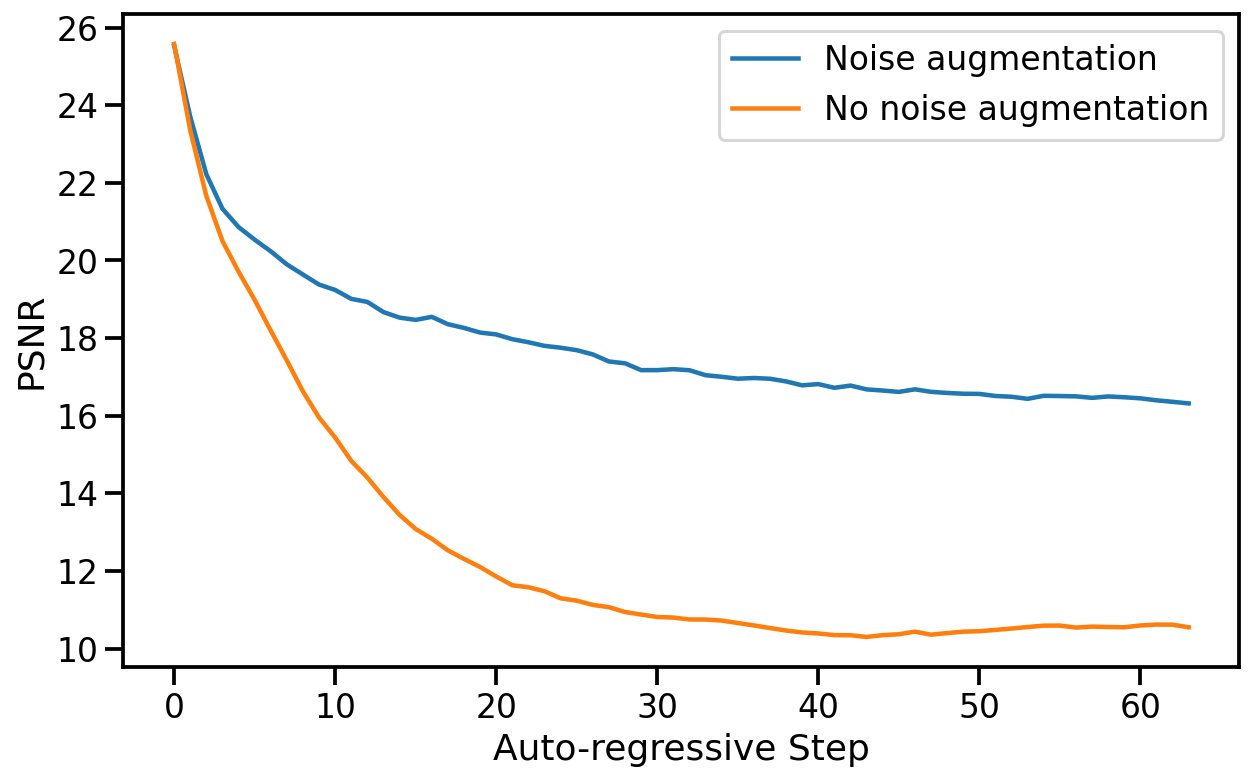

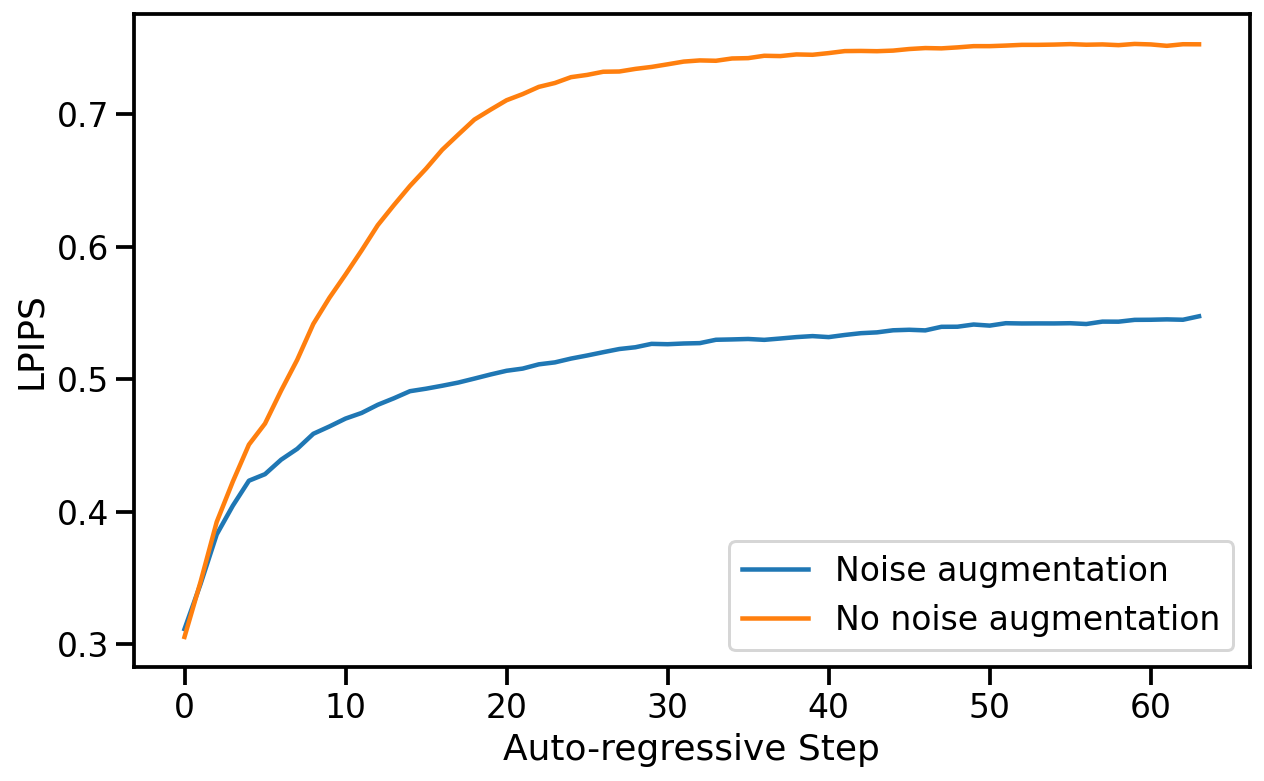

127. Diffusion Models Are Real-Time Game Engines

GameNGen shows how a neural network can run Doom in real time, simulating gameplay, state, and visuals without a traditional game engine.

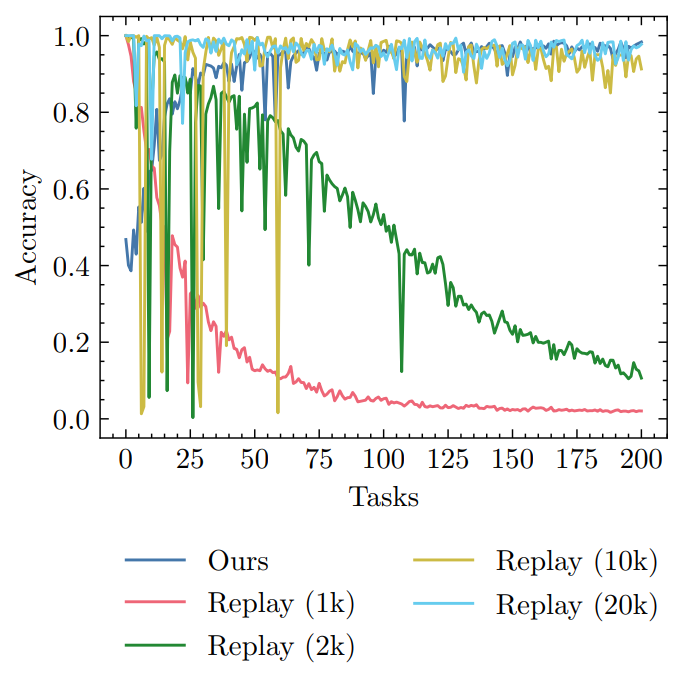

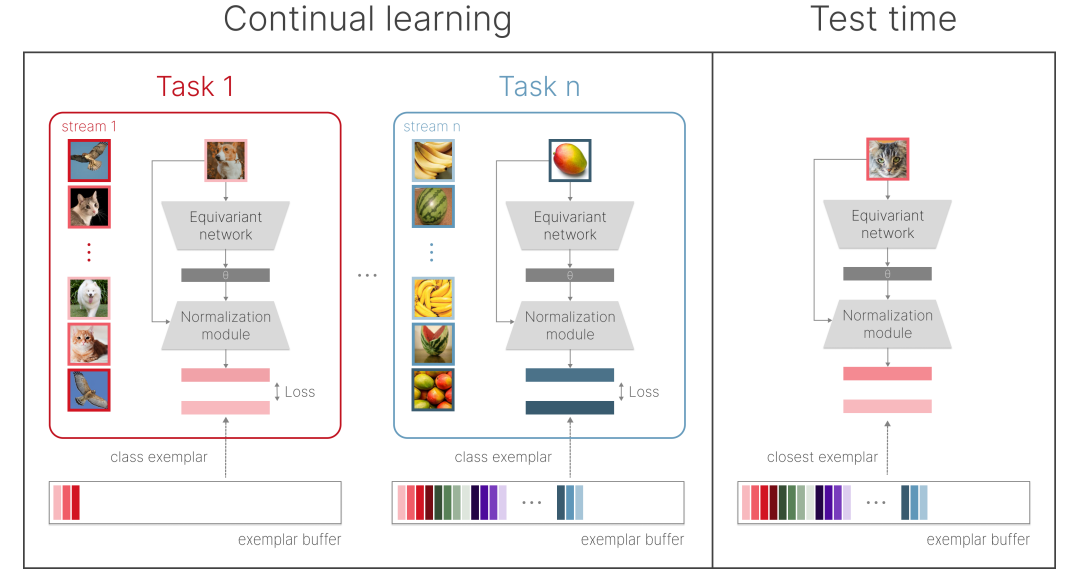

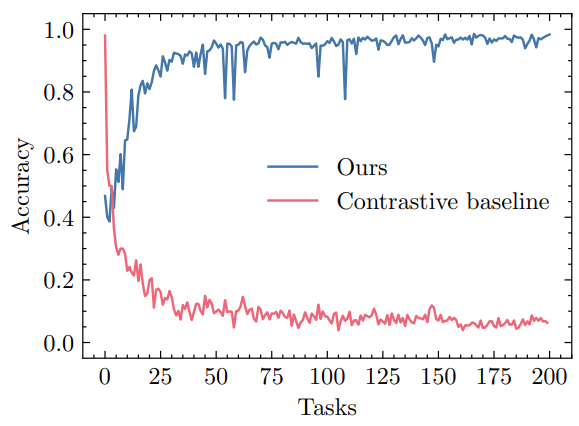

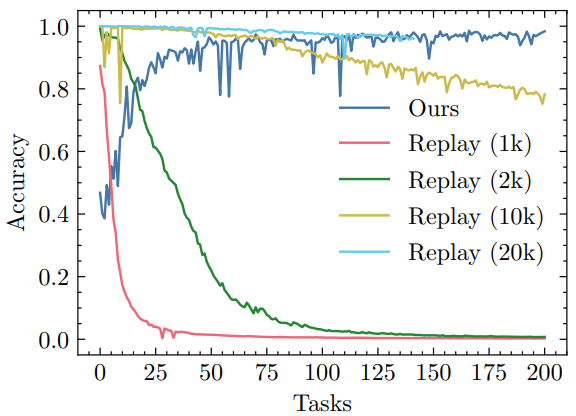

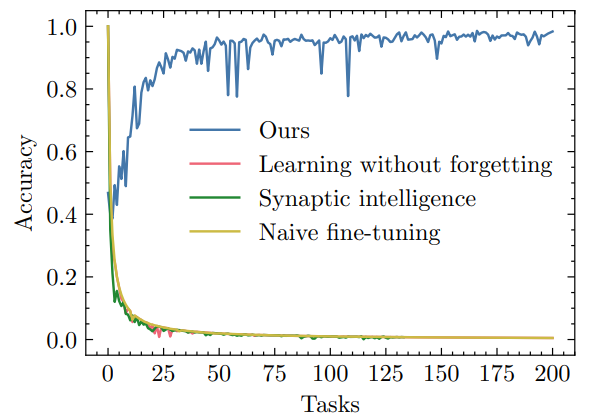

128. Batch Training vs. Online Learning

This paper compares a novel continual learning method's performance in online learning versus batch training scenarios.

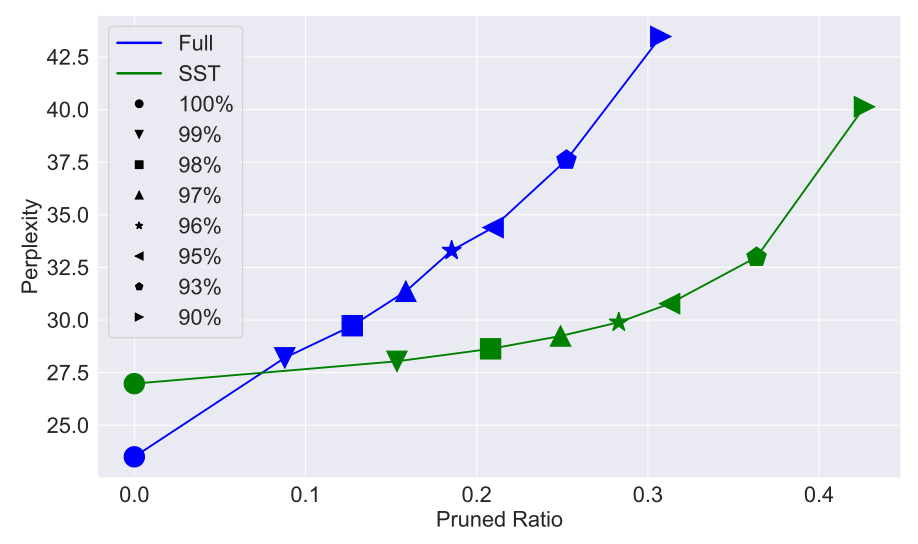

129. Can Sparse Spectral Training Make AI More Accessible?

Efficient, eco-friendly, and powerful — Sparse Spectral Training boosts LLM performance while cutting memory use and training costs.

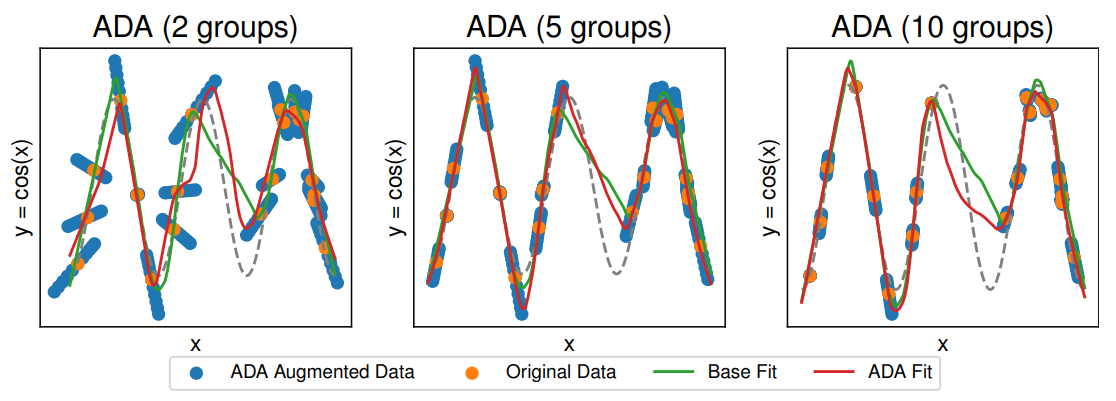

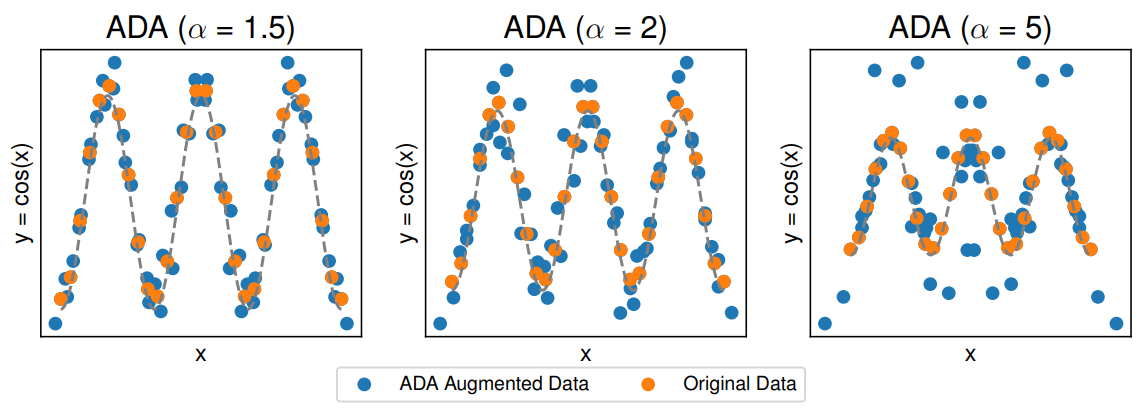

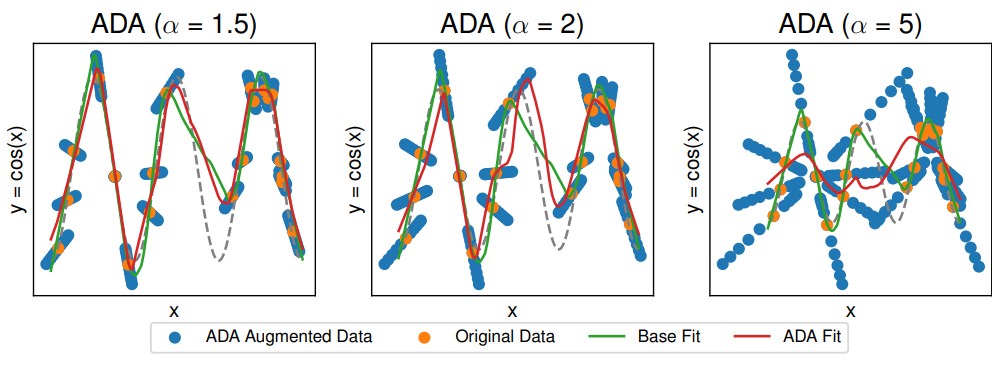

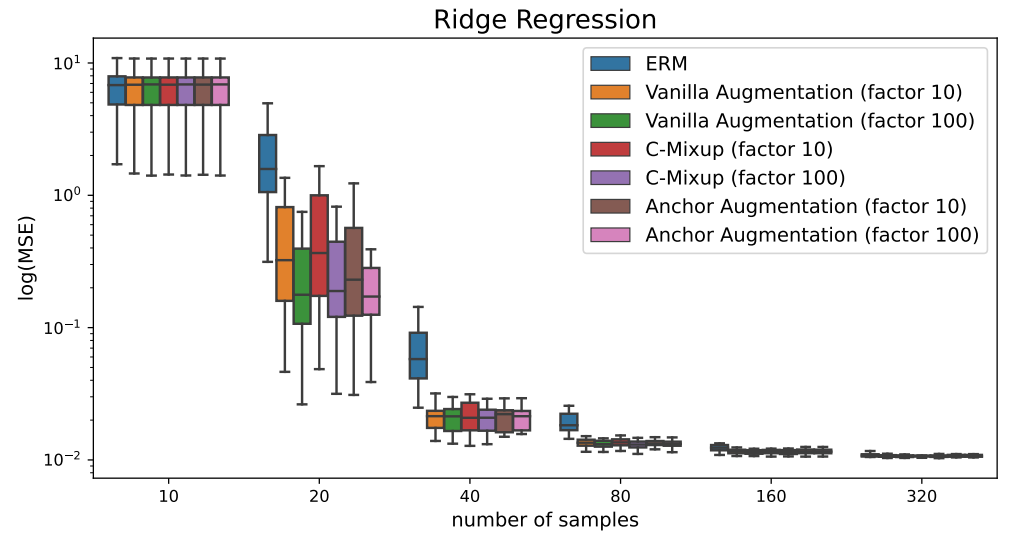

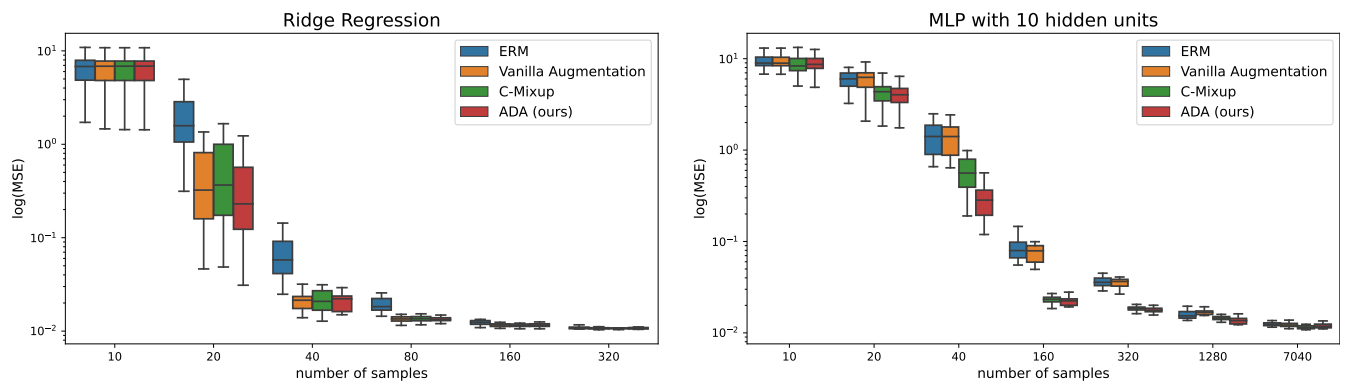

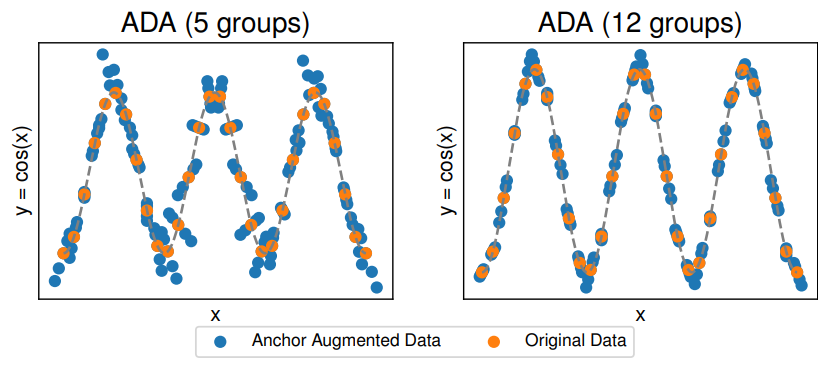

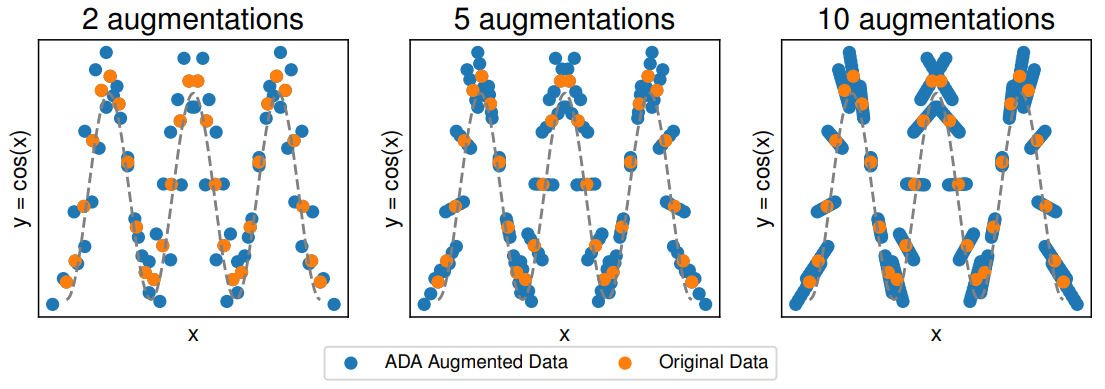

130. ADA: A Powerful Data Augmentation Technique for Improved Regression Robustness

ADA (Anchor Data Augmentation) offers a novel approach to data augmentation by mixing multiple samples based on clustering.

131. Why Eigenvalues are the Key to Solving AI Hallucinations

Exploring how eigenvalues, eigenvectors, and spectral math are helping researchers decode neural networks and build more reliable, interpretable AI systems.

132. Assessing the Interpretability of ML Models from a Human Perspective

Explore the human-centric evaluation of interpretability in part-prototype networks, revealing insights into ML model behavior and decision-making processes.

133. Ways To Overcome Linguistic Barriers with Language Technologies

COVID-19 has impacted nearly every industry and has made people adopt new norms. The traditional translation industry is no different. Several disruptions have been introduced to keep things moving, thanks to big data and machine translation technologies that have enabled the world to do business as usual.

134. Turning Users Invisible: Edge Machine Learning in Privacy-First Location Detection

How to use edge machine learning in the browser for privacy-first location detection. Turn your users invisible while building location-based websites.

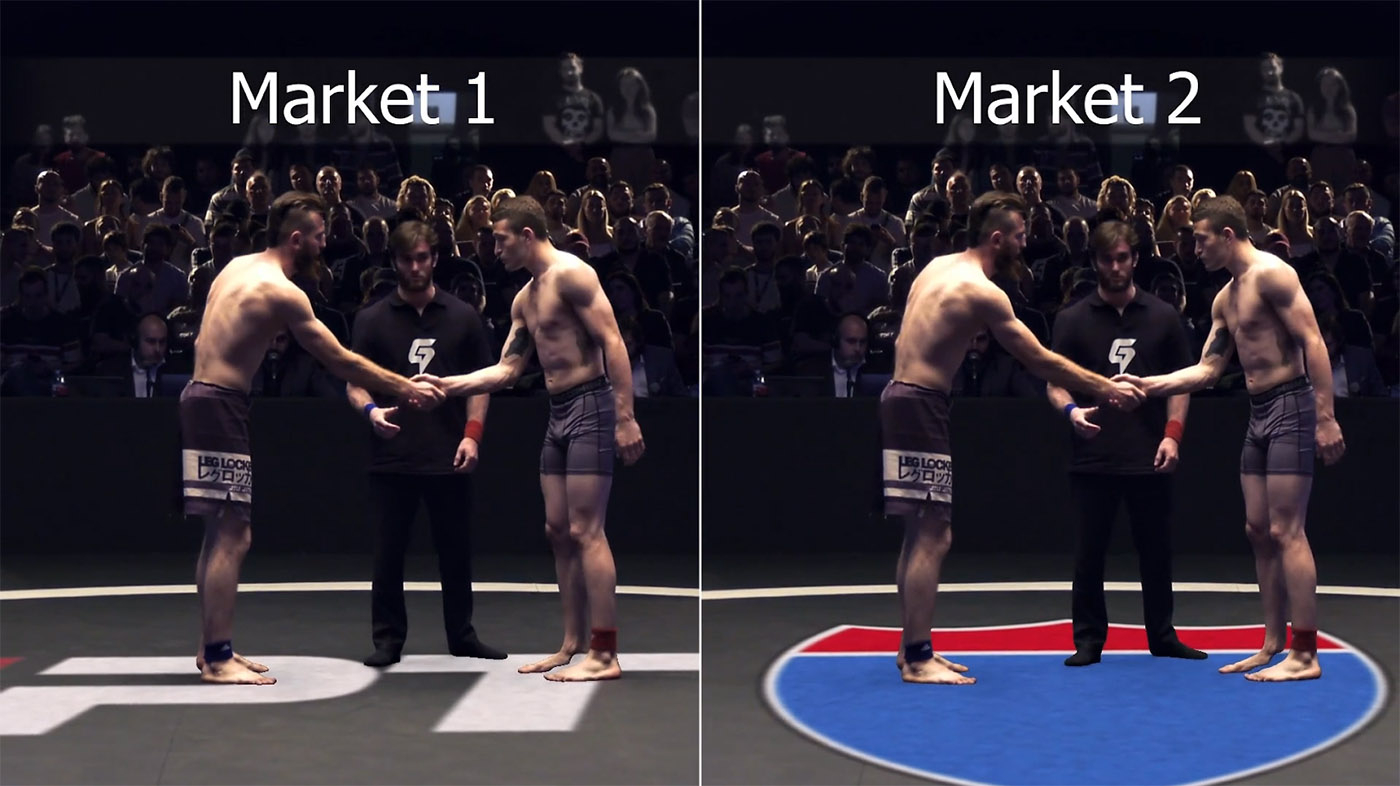

135. How AI Is Fighting Monopolies in Sports Advertising With GPUs and Servers

AI and AR technologies allow sports advertising to be customized to different audiences in real time using cloud-based GPU solutions.

136. How Emerging Tech Will Revolutionize The Life of Physically Challenged People

The Age of Exciting Opportunities

137. Twin‑Tower Neural Architecture for Δ‑Estimation in Differential Financial Models

Neural networks act as adaptive parametric bases, improving hedging accuracy and reducing relative PnL variance versus classical methods.

138. Classification using Neural Network with Audio Data

This is an example of audio data analysis with a 2D CNN.

139. A Checklist of Changes That Await Us in the Field of Arts

These changes reflect the evolution of art and its role in modern society, where technology plays an increasingly significant role and brings innovation.

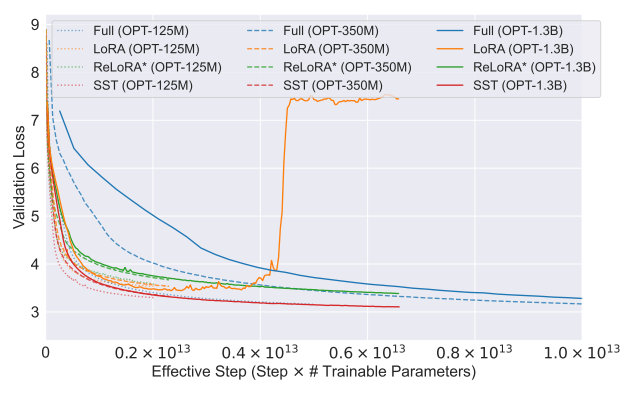

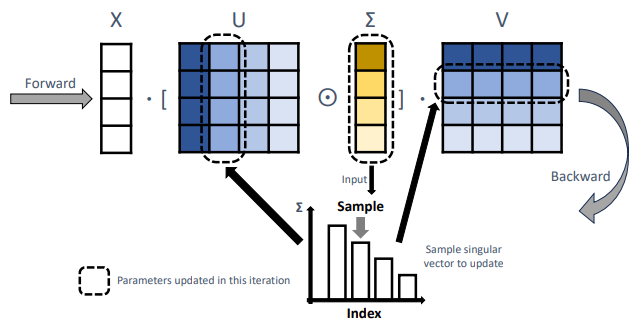

140. Why Sparse Spectral Training Might Replace LoRA in AI Model Optimization

Sparse Spectral Training (SST) boosts AI efficiency with selective spectral updates—balancing speed, accuracy, and memory use.

141. Modern Antifraud systems using AI

I want to share my experience of how a simple anti-fraud system has evolved from a decision-making system by bank employees to an autonomous decision-making ...

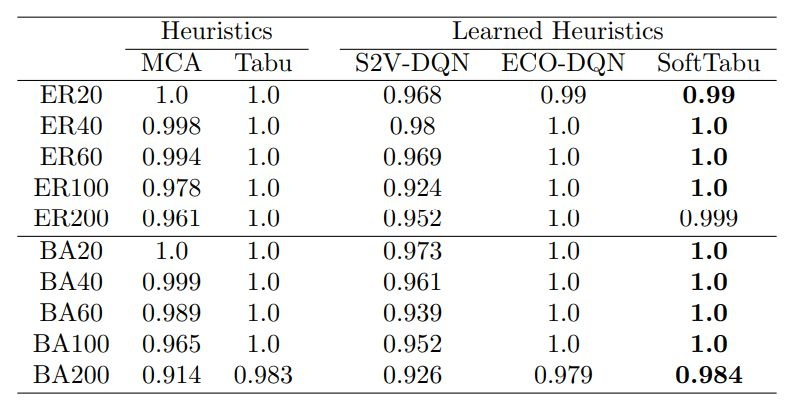

142. Analyzing Learned Heuristics for Max-Cut Optimization

Explore a detailed analysis of learned heuristics versus traditional algorithms in Max-Cut optimization.

143. AI Might Soon Watch Your Login Attempts the Blade Runner Way

Ready to prove your identity to AI using emotions?

144. Study Finds AI-Generated Game Simulations Nearly Indistinguishable From Real Play

An AI model simulates video game worlds with image and video quality close to the original, challenging humans to tell real gameplay from generated play.

145. The HackerNoon Newsletter: System Design in a Nutshell (10/24/2025)

10/24/2025: Top 5 stories on the HackerNoon homepage!

146. Deep Neural Networks Are Addressing Challenges in Computer Vision

Computer vision techniques are developed to enable computers to “see” and draw analysis from digital images or streaming videos.

147. Generalizing Sparse Spectral Training Across Euclidean and Hyperbolic Architectures

Sparse Spectral Training boosts transformer stability and efficiency, outperforming LoRA and ReLoRA across neural network architectures.

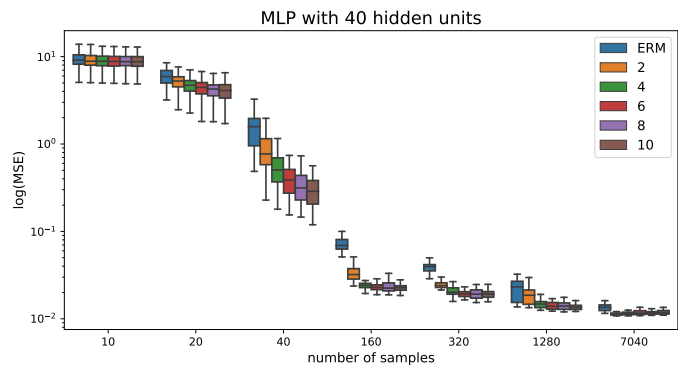

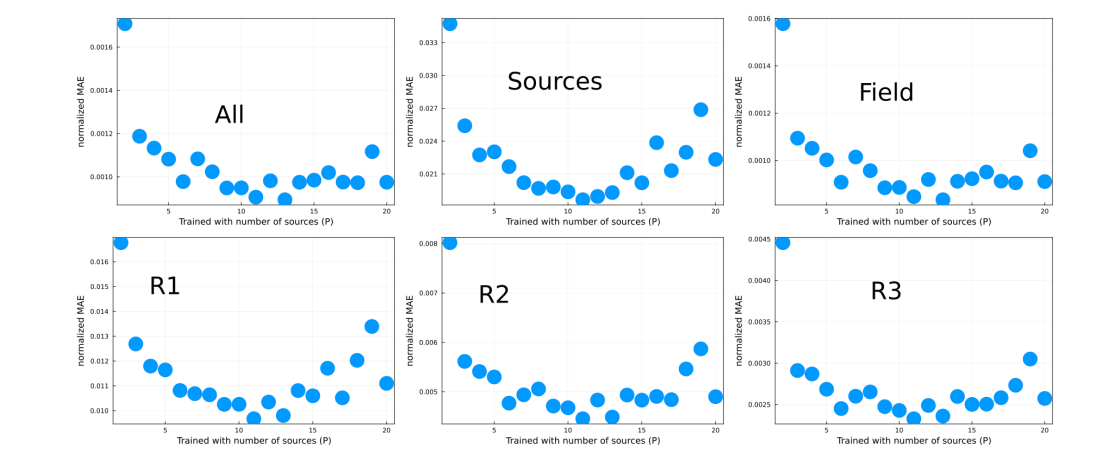

148. Understanding Factors Affecting Neural Network Performance in Diffusion Prediction

Explore the impact of loss functions and data set sizes on neural network performance in diffusion prediction models.

149. Detailed Experimentation and Comparisons for Continual Learning Methods

Explore the supplementary material detailing our experiments on continual learning methods.

150. The Chosen One: Consistent Characters in Text-to-Image Diffusion Models: Additional Experiments

In this study, researchers introduce an iterative procedure that, at each stage, identifies a coherent set of images sharing a similar identity.

151. GameNGen Turns a Classic Shooter Into a Neural Network

GameNGen shows that neural networks can run a full video game in real time, hinting at a future where games are models, not code.

152. How Gradient-Free Training Could Decentralize AI

Gradient-Free Training vs. Gradient Descent, How Gradient-Free Training Could Decentralize AI

153. The AI Industry's Obsession With Transformers Might Finally Be Waning

Newer State Space Models (SSMs), such as Mamba, appear to be winning some favor.

154. Anchor Regression: The Secret to Stable Predictions Across Shifting Data

Anchor Regression (AR) optimizes predictive accuracy while enhancing robustness to distribution shifts.

155. ADA's Impact on Out-of-Distribution Robustness

This paper compares ADA's performance on out-of-distribution robustness tasks, highlighting its superiority with datasets like SkillCraft and RCFashionMNIST.

156. The Inevitable Symbiosis of Cybersecurity and AI

While improvements in AI and Deep Learning move forward at an ever more rapid rate, people have started to ask questions. Questions about jobs being made obsolete, questions about the inherent biases programmed into neural networks, questions about whether or not AI will eventually consider humans dead weight and unnecessary for achieving the goals it has been programmed with.

157. Breaking Down Low-Rank Adaptation and Its Next Evolution, ReLoRA

Learn how LoRA and ReLoRA improve AI model training by cutting memory use and boosting efficiency without full-rank computation.

158. ControlNet: Changing The Image Generation Game with Precise Spatial Control

Models like GPT-4V would not have been possible without the idea of ControlNet

159. Overcoming Expense, Labour, and Time Constraint Barriers in Machine Learning

Neural networks are rapidly improving thanks to advancements in computational power.

160. Assessing the Justification for Integrating Deep Learning in Combinatorial Optimization

Explore the intersection of combinatorial optimization and machine learning through a comprehensive evaluation of integrated heuristics.

161. Pitfalls in AI-based Learning - A Supply Chain Example

AI-based learning has its shortcomings when the system is trivial. It lacks theoretical guarantees, and a minimum level of system complexity is a required condition.

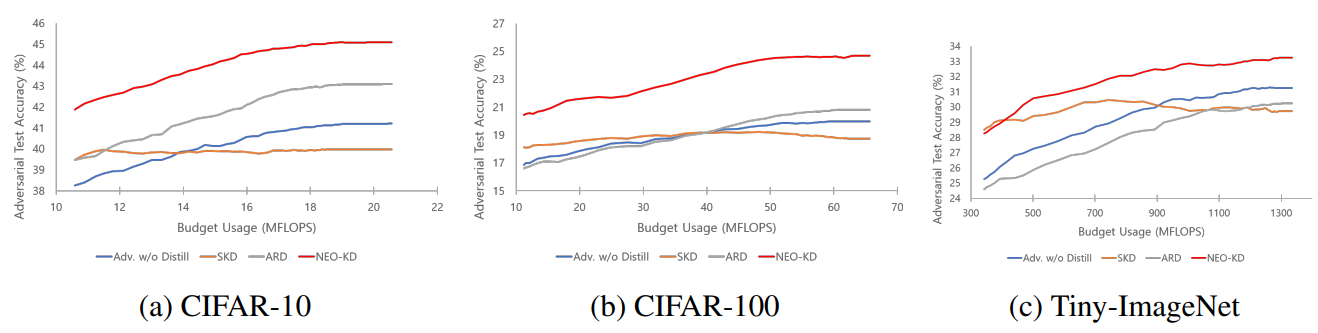

162. Comparison with SKD and ARD and Implementations of Stronger Attacker Algorithms

Explore how NEO-KD outperforms SKD and ARD in multi-exit networks and discover advanced adversarial attack methods tailored for enhanced resilience.

163. Researchers Use Diffusion Models to Simulate DOOM Gameplay in Real Time

A new diffusion-based AI model simulates the game DOOM in real time, learning gameplay dynamics without a traditional game engine.

164. How to Implement ADA for Data Augmentation in Nonlinear Regression Models

This paper presents the ADA algorithm for generating minibatches in nonlinear regression models, using stochastic gradient descent.

165. SST vs LoRA: A Leaner, Smarter Way to Train AI Models

SST delivers full-rank performance with fewer parameters, outperforming LoRA across NLP and graph tasks.

166. How Our Disentangled Learning Framework Tackles Lifelong Learning Challenges

This paper introduces the idSprites benchmark and a disentangled learning framework designed to address the limitations of current continual learning

167. Building Machine Learning Models With TensorFlow

In this article, I will share some useful tips and guidelines that you can use to build better deep learning models.

168. Mathematics of Differential Machine Learning in Derivative Pricing and Hedging: Choice of Basis

Drawing from Barron, Hornik, and Telgarsky, it proves neural networks yield superior efficiency in higher‑dimensional pricing tasks.

169. ADA Outperforms ERM and Competes with C-Mixup in In-Distribution Generalization Tasks

This paper evaluates ADA's performance on in-distribution generalization tasks, comparing it to C-Mixup, Mixup, and other strategies.

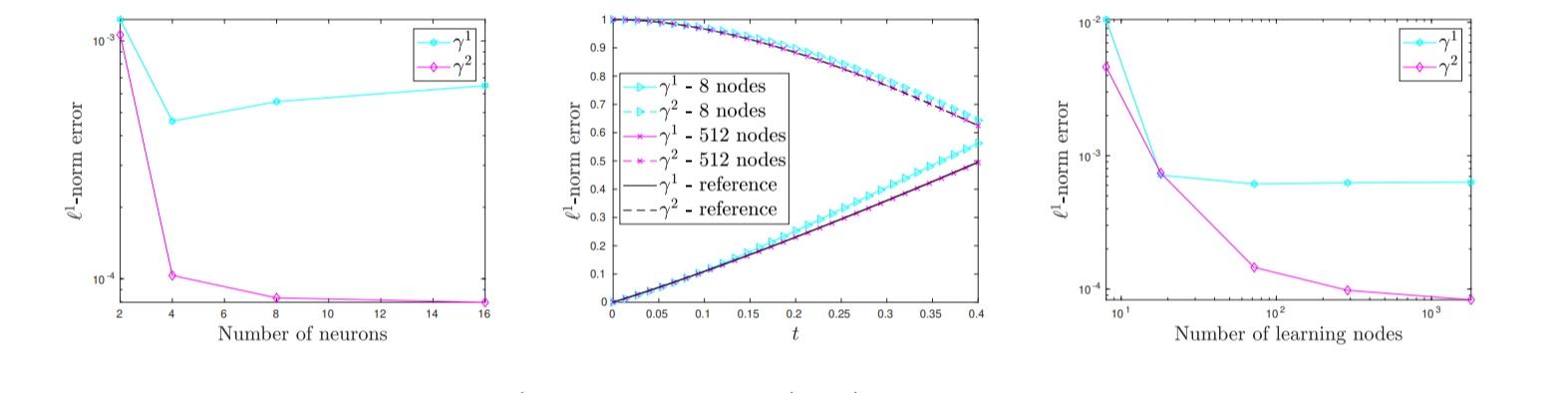

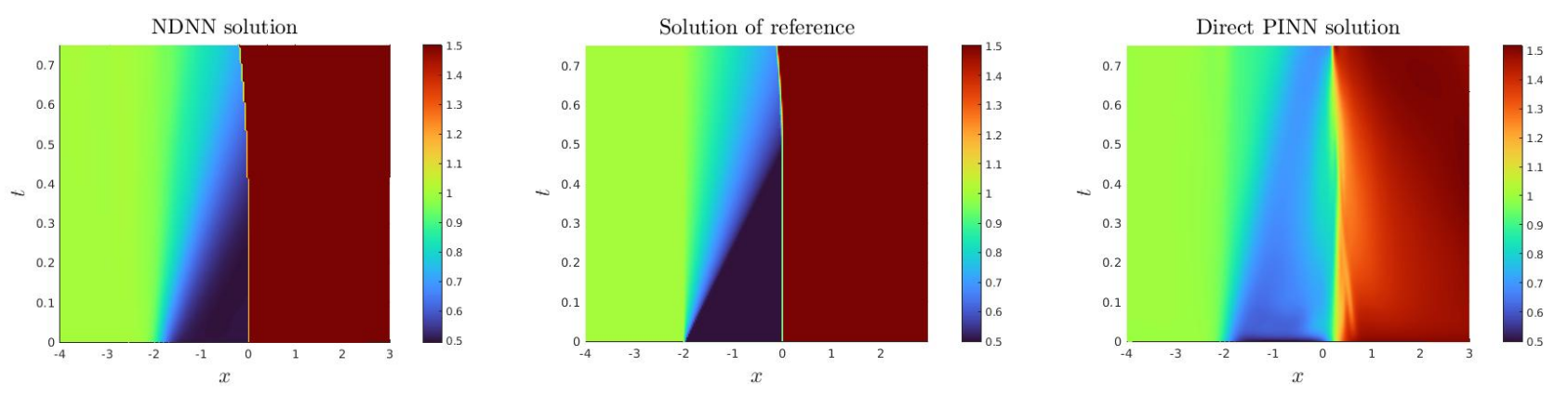

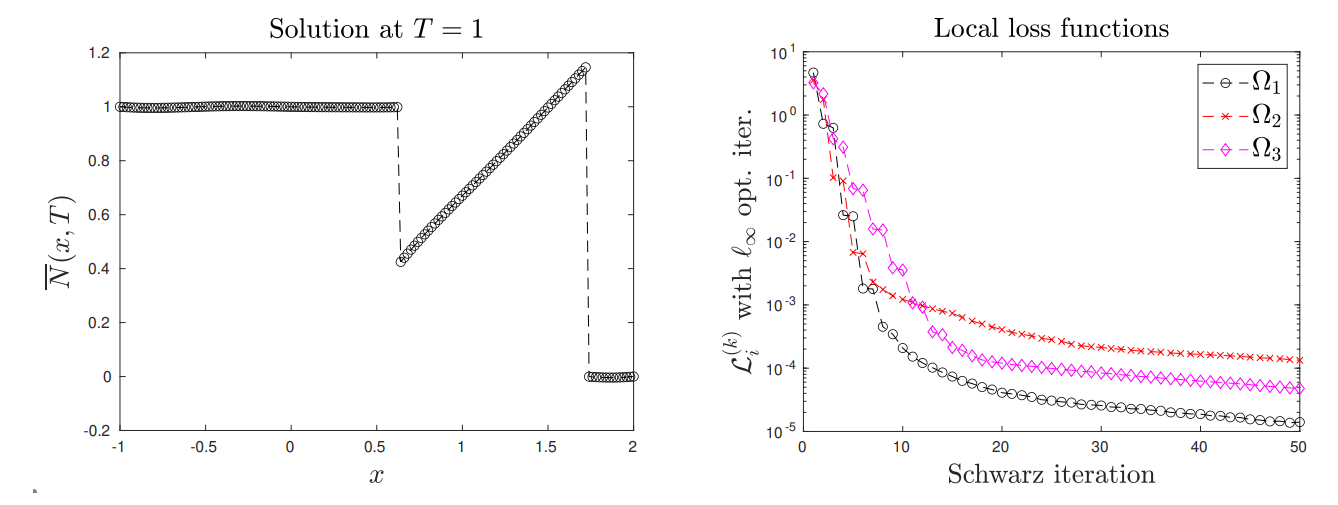

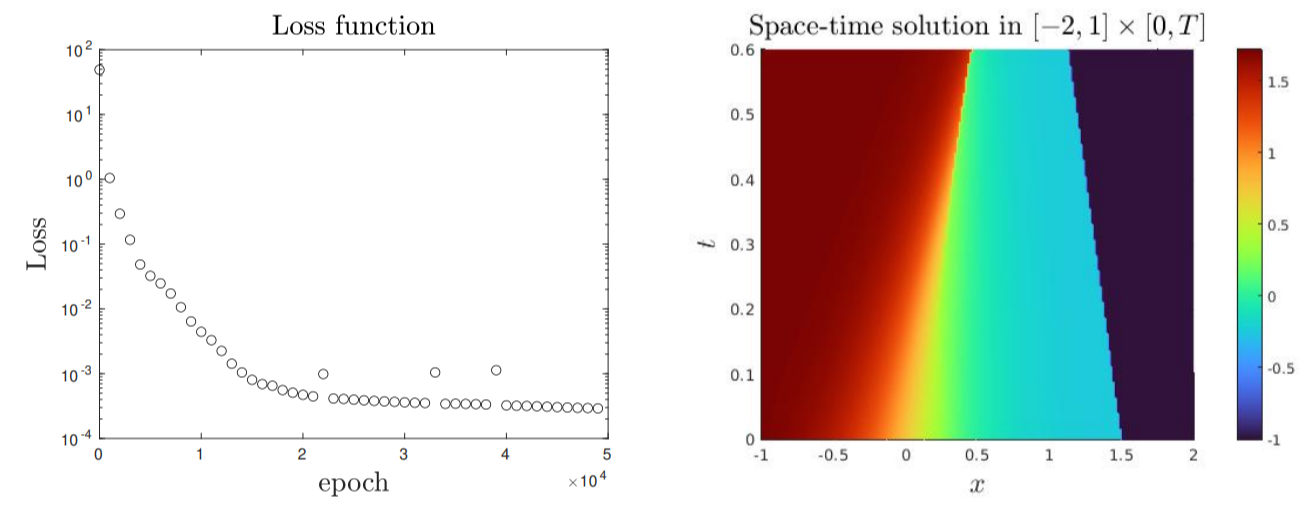

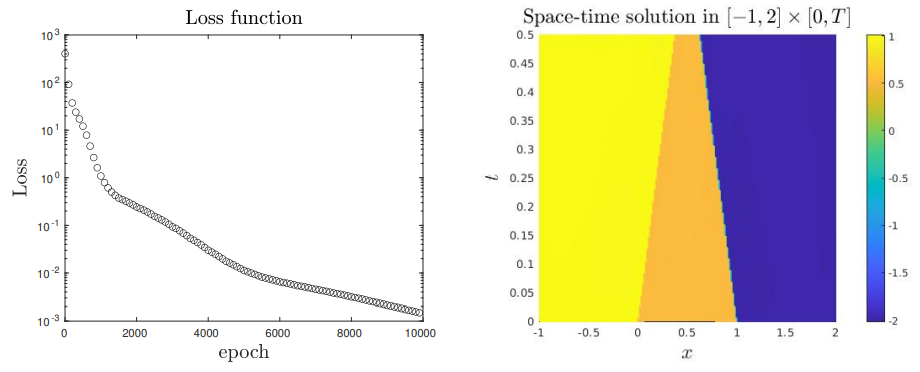

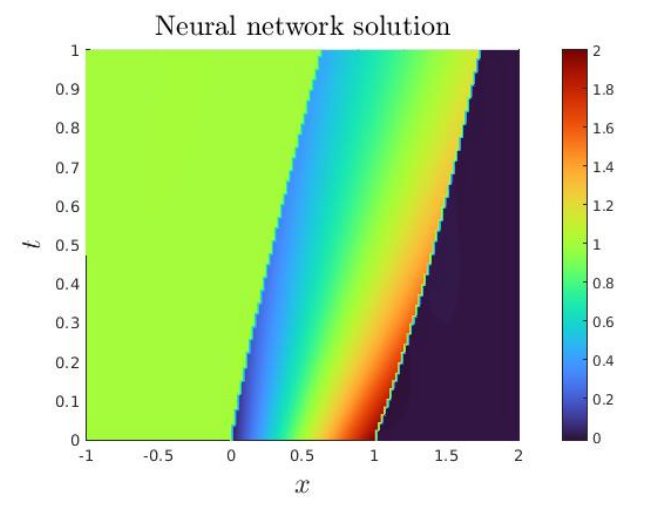

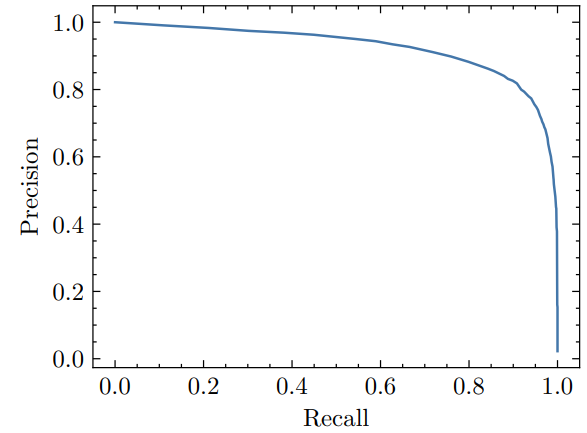

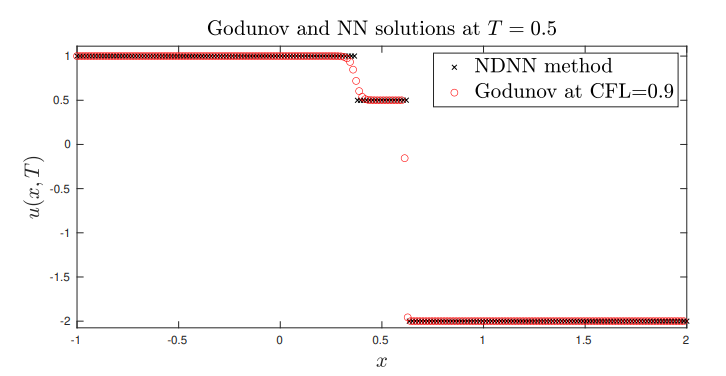

170. How Scientists Taught AI to Handle Shock Waves

Discover how JAX-powered neural networks (NDNN) outperform PINNs in solving PDEs and shock wave problems with simple, accurate results.

171. Fine-Tuning NEO-KD for Robust Multi-Exit Networks

Explore the detailed experimentation methods for NEO-KD, including model training, adversarial strategies, and how to determine confidence thresholds.

172. The Impact of Hyperparameters on Adversarial Training Performance

Explore the impact of hyperparameter tuning on NEO-KD's performance, focusing on the optimal values of α, β, and γ that enhance adversarial test accuracy.

173. Integrating Physics-Informed Neural Networks for Earthquake Modeling: Abstract, Context, Motivation

This study uses Physics-Informed Neural Networks to model earthquakes and invert fault friction parameters, integrating data with physical constraints.

174. Testing ADA on Synthetic and Real-World Data

ADA boosts regression models' robustness through data augmentation, improving performance across real-world and synthetic data with varying hyperparameters.

175. Disentangled Continual Learning: Separating Memory Edits from Model Updates

A novel continual learning approach overcomes catastrophic forgetting by separating class-specific memorization from class-agnostic generalization.

176. ADA vs C-Mixup: Performance on California and Boston Housing Datasets

This paper extends ADA’s evaluation to nonlinear regression on the California and Boston housing datasets, comparing its performance against C-Mixup.

177. Analysis of Prototype-Query Similarity Rankings

Learn the outcomes of experiments evaluating the similarity between prototypes and activated regions on query samples across various part-prototype methods.

178. Examining the Adversarial Test Accuracy of Later Exits in NEO-KD Networks

Explore the reasons behind the performance decline of later exits in NEO-KD networks during adversarial testing and discover strategies to enhance accuracy.

179. How An AI Understands Scenes: Panoptic Scene Graph Generation.

Explore the groundbreaking AI technology of Panoptic Scene Graph Generation with Transformers for a deeper understanding of visual scenes.

180. Anchor Data Augmentation (ADA): A Domain-Agnostic Method for Enhancing Regression Models

Anchor Data Augmentation (ADA) is a domain-agnostic method for regression, using clustering to improve generalization with minimal computational cost.

181. Humanity in the Age of AI Agents: Redefining Our Role in a Multi-Agent World

Explore how AI agents are reshaping human identity, work, and coordination. In a world of intelligent actors, what does it mean to be human?

182. Why Equivariance Outperforms Invariant Learning in Continual Learning Tasks

This paper contrasts equivariant and invariant representation learning for continual learning.

183. Integrating Physics-Informed Neural Networks for Earthquake Modeling: Quakes on Rate & State Faults

This study uses Physics-Informed Neural Networks to model earthquakes and invert fault friction parameters, integrating data with physical constraints.

184. Exploring Classical and Learned Local Search Heuristics for Combinatorial Optimization

Explore the impact of machine learning integration on solving diverse Combinatorial Optimization problems.

185. AI’s Cognitive Mirror: The Illusion of Consciousness in the Digital Age

Explore AI's cognitive abilities vs human consciousness. Delve into philosophical debates on machine thinking and the ethical implications of AI in the digital

186. Here’s Why AI Researchers Are Talking About Sparse Spectral Training

Discover how Sparse Spectral Training (SST) enhances deep learning with low-rank optimization and zero-gradient distortion.

187. Evaluating ADA: Experimental Results on Linear and Housing Datasets

This paper presents experimental evaluations of ADA, comparing it with C-Mixup, vanilla augmentation, and classical risk minimization.

188. Nucleoid: Neuro-Symbolic AI With Declarative Logic - What You Need to Know

Nucleoid is a Declarative (Logic) Runtime Environment, a type of Symbolic AI used as the reasoning engine in Neuro-Symbolic AI.

189. Analyzing the Performance of Deep Encoder-Decoder Networks as Surrogates for a Diffusion Equation

Discover how encoder-decoder CNNs serve as efficient surrogates for diffusion solvers, improving computational speed and model performance.

190. Introduction To The Convolution

In this article, we are going to learn about grayscale images, colour images, and the process of convolution.

191. Understanding the Limitations of GNNSAT in SAT Heuristic Optimization

Delve into a comparative analysis between GNNSAT and SoftTabu for SAT heuristic optimization.

192. PolyThrottle: Energy-efficient Neural Network Inference on Edge Devices: Conclusion & References

This paper investigates how the configuration of on-device hardware affects energy consumption for neural network inference with regular fine-tuning.

193. Neural Networks, LLMs, & GPTs Explained: AI for Web Devs

Good things to understand when building AI applications: artificial neural networks, LLMs, parameters, embeddings, GPTs, and hallucinations.

194. The Chosen One: Consistent Characters in Text-to-Image Diffusion Models: Abstract and Introduction

In this study, researchers introduce an iterative procedure that, at each stage, identifies a coherent set of images sharing a similar identity.

195. PolyThrottle: Energy-efficient Neural Network Inference on Edge Devices: Experimental Results

This paper investigates how the configuration of on-device hardware affects energy consumption for neural network inference with regular fine-tuning.

196. Can Neural Networks Capture Shock Waves Without Diffusion? This Paper Says Yes

New NDNN method shows how neural networks can model entropic shock waves in HCLs without artificial diffusion or viscosity.

197. Problem Formulation: Two-Phase Tuning

This paper investigates how the configuration of on-device hardware affects energy consumption for neural network inference with regular fine-tuning.

198. SST vs. GaLore: The Battle for the Most Efficient AI Brain

SST outperforms GaLore in compressing large language models, maintaining accuracy and efficiency for next-gen AI inference.

199. Anchor Data Augmentation as a Generalized Variant of C-Mixup

ADA is a generalized version of C-Mixup that mixes multiple samples based on cluster membership, preserving nonlinear relationships in augmented regression data

200. PolyThrottle: Energy-efficient Neural Network Inference on Edge Devices: Hardware Details