Let's learn about Data Engineering via these 347 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most read blog posts about any technology.

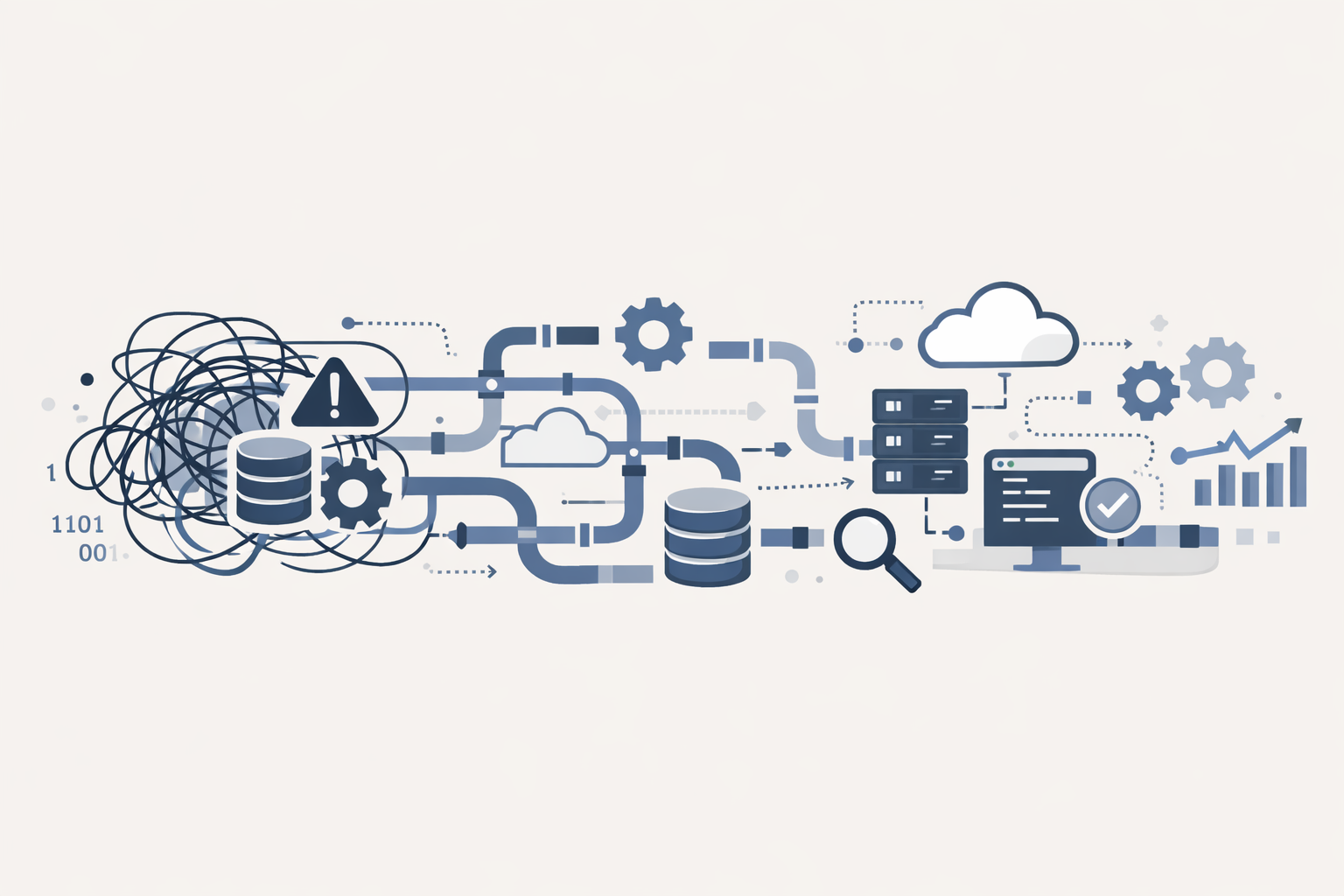

The process of designing and building systems for collecting, storing, and analyzing data at scale, foundational for data science and business intelligence initiatives.

1. 9 Best Data Engineering Courses You Should Take in 2023

In this listicle, you'll find some of the best data engineering courses, and career paths that can help you jumpstart your data engineering journey!

In this listicle, you'll find some of the best data engineering courses, and career paths that can help you jumpstart your data engineering journey!

2. Why Are We Teaching Pandas Instead of SQL?

How I learned to stop using pandas and love SQL.

How I learned to stop using pandas and love SQL.

3. Crunching Large Datasets Made Fast and Easy: the Polars Library

Processing large data, e.g. for cleansing, aggregation or filtering is done blazingly fast with the Polars data frame library in python thanks to its design.

Processing large data, e.g. for cleansing, aggregation or filtering is done blazingly fast with the Polars data frame library in python thanks to its design.

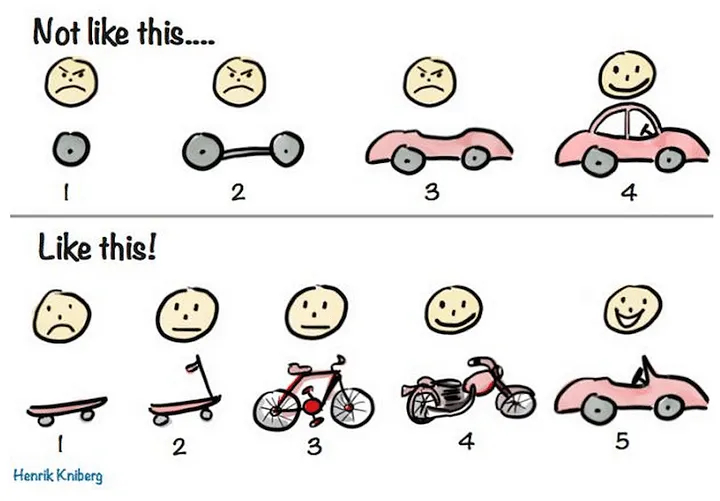

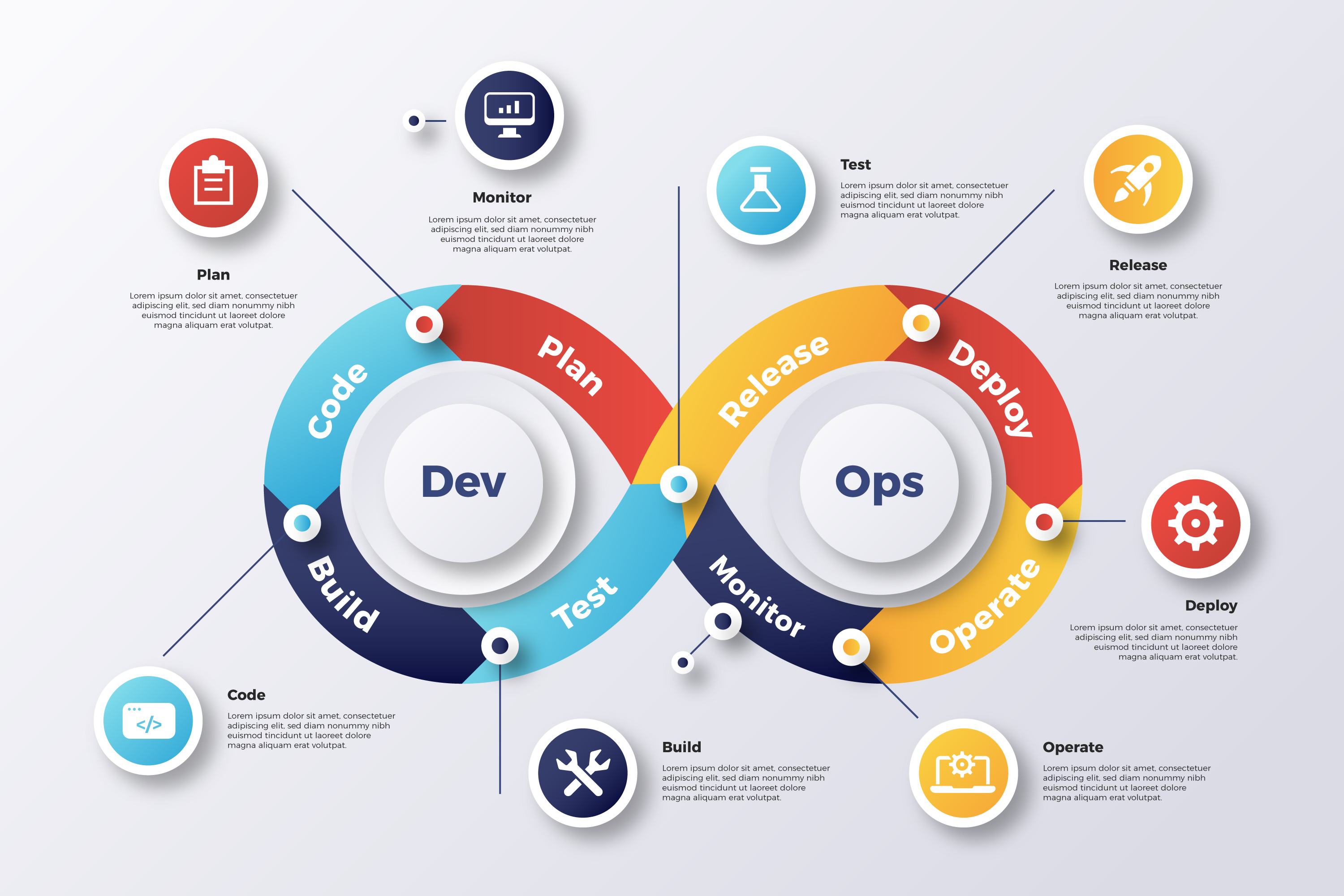

4. DataOps: the Future of Data Engineering

![]() Explore the evolution of DataOps in data engineering, its parallels with DevOps, challenges it addresses, and best practices. Transformative future of DataOps.

Explore the evolution of DataOps in data engineering, its parallels with DevOps, challenges it addresses, and best practices. Transformative future of DataOps.

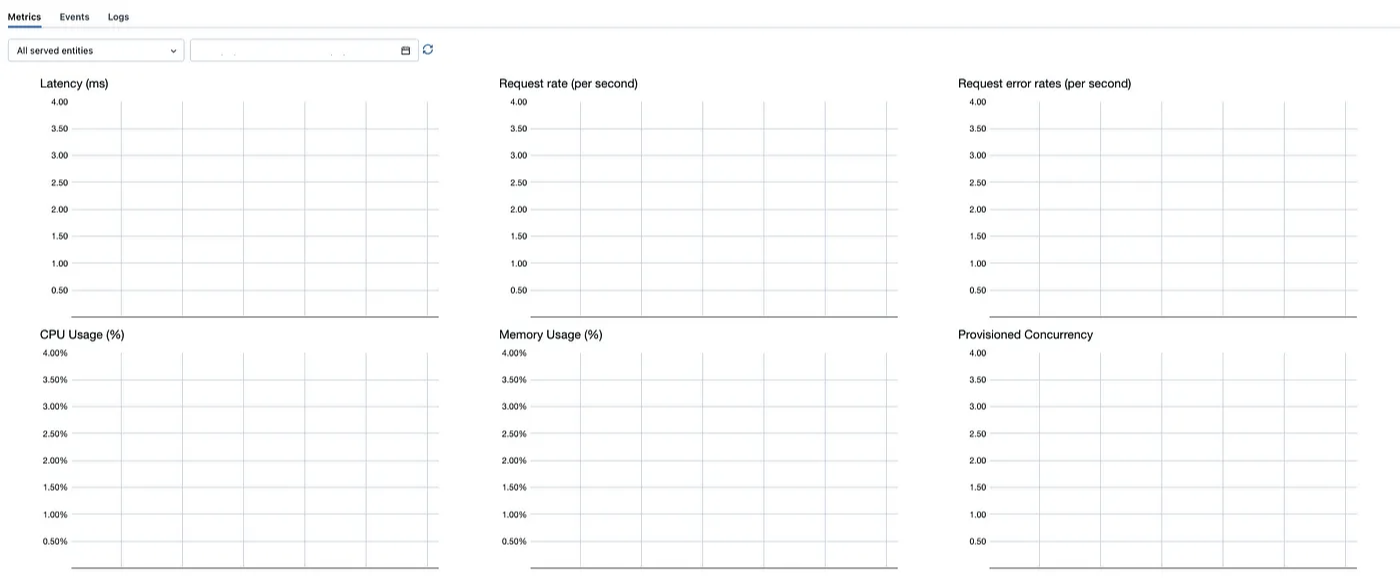

5. An 80% Reduction in Standard Audience Calculation Time

Standard Audiences: A product that extends the functionality of regular Audiences, one of the most flexible, powerful, and heavily leveraged tools on mParticle.

Standard Audiences: A product that extends the functionality of regular Audiences, one of the most flexible, powerful, and heavily leveraged tools on mParticle.

6. Saving Dataframes into Oracle Database with Python

Here are two common errors that you'll want to watch out for when using the to_sql method to save a data frame into an Oracle database.

Here are two common errors that you'll want to watch out for when using the to_sql method to save a data frame into an Oracle database.

7. Data Lake Mysteries Revealed: Nessie, Dremio, and MinIO Make Waves

Let's see how Nessie, Dremio and MinIO work together to enhance data quality and collaboration in your data engineering workflows.

Let's see how Nessie, Dremio and MinIO work together to enhance data quality and collaboration in your data engineering workflows.

8. Python: Setting Data Types When Using 'to_sql'

The following is a basic code snippet to save a DataFrame to an Oracle database using SQLAlchemy and pandas.

The following is a basic code snippet to save a DataFrame to an Oracle database using SQLAlchemy and pandas.

9. How To Deploy Metabase on Google Cloud Platform (GCP)?

Metabase is a business intelligence tool for your organisation that plugs in various data-sources so you can explore data and build dashboards. I'll aim to provide a series of articles on provisioning and building this out for your organisation. This article is about getting up and running quickly.

Metabase is a business intelligence tool for your organisation that plugs in various data-sources so you can explore data and build dashboards. I'll aim to provide a series of articles on provisioning and building this out for your organisation. This article is about getting up and running quickly.

10. Everything You Need to Know to Deploy MinIO in Virtualized Environments

When deploying MinIO in virtualized environments, it’s important to make sure that the proper conditions are in place.

When deploying MinIO in virtualized environments, it’s important to make sure that the proper conditions are in place.

11. Aptible Enclave: Elevating Data Security in DevOps Environments

Aptible Enclave fortifies data security in DevOps with its secure infrastructure for database management.

Aptible Enclave fortifies data security in DevOps with its secure infrastructure for database management.

12. Stop Hacking SQL: How to Build a Scalable Query Automation System

Result: predictable costs, fewer incidents, reproducible jobs across environments.

Result: predictable costs, fewer incidents, reproducible jobs across environments.

13. Must-Know Base Tips for Feature Engineering With Time Series Data

Master key time series feature engineering techniques to enhance predictive models in finance, healthcare & more with our comprehensive guide.

Master key time series feature engineering techniques to enhance predictive models in finance, healthcare & more with our comprehensive guide.

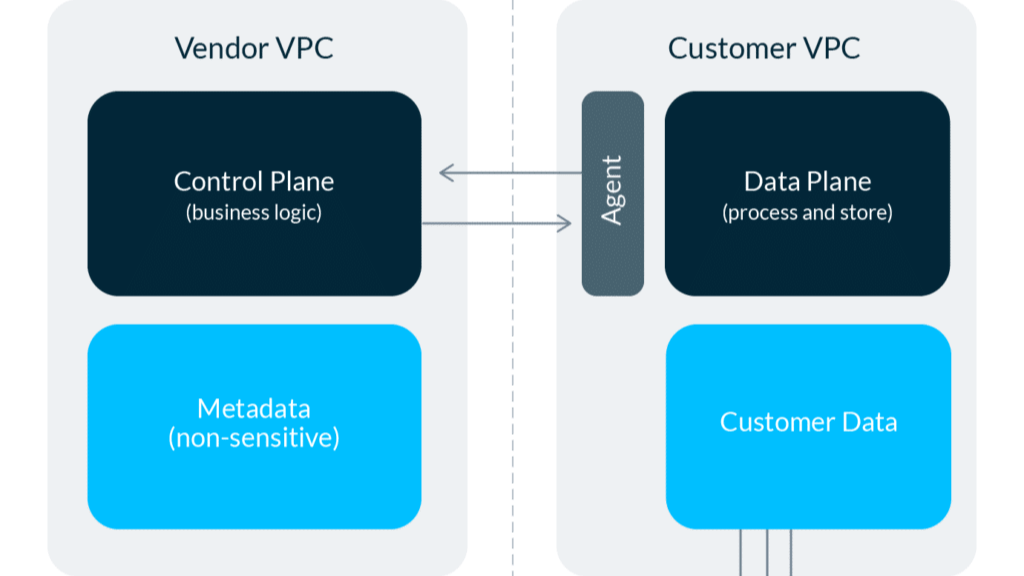

14. What The Heck is WarpStream?

Discover WarpStream, a powerful and user-friendly Kafka API-compatible data streaming platform designed to simplify your data infrastructure.

Discover WarpStream, a powerful and user-friendly Kafka API-compatible data streaming platform designed to simplify your data infrastructure.

15. Data Contracts Won't Save You If Your AI Agent Can't Read Them

We built data governance for a world where humans read the warning labels. AI agents don't read. They just query. That gap is now a production risk.

We built data governance for a world where humans read the warning labels. AI agents don't read. They just query. That gap is now a production risk.

16. Protecting Software-defined Object Storage With MinIO's Replication Best Practices

MinIO includes several ways to replicate data so you can choose the best methodology to meet your needs.

MinIO includes several ways to replicate data so you can choose the best methodology to meet your needs.

17. How Machine Learning is Used in Astronomy

Is Astronomy data science?

Is Astronomy data science?

18. RAG: A Data Problem Disguised as AI

RAG fails less from the LLM and more from retrieval: bad chunking, weak metadata, embedding drift, and stale indexes. Fix the pipeline first.

RAG fails less from the LLM and more from retrieval: bad chunking, weak metadata, embedding drift, and stale indexes. Fix the pipeline first.

19. Solving Time Series Forecasting Problems: Principles and Techniques

Explore time series analysis: from cross-validation, decomposition, transformation to advanced modeling with ARIMA, Neural Networks, and more.

Explore time series analysis: from cross-validation, decomposition, transformation to advanced modeling with ARIMA, Neural Networks, and more.

20. Python Script to Read and Judge 1,500 Legal Cases

What started as a simple script evolved into a full-fledged data engineering and NLP pipeline that can process a decade's worth of legal decisions in minutes.

What started as a simple script evolved into a full-fledged data engineering and NLP pipeline that can process a decade's worth of legal decisions in minutes.

21. Data Engineering: An Interview with Meta Engineer Leonid Chashnikov

As we sit down for this exclusive interview, Leonid offers a rare glimpse into the intricate process of weaving the digital fabric that shapes our lives.

As we sit down for this exclusive interview, Leonid offers a rare glimpse into the intricate process of weaving the digital fabric that shapes our lives.

22. Streamlining Data Operations: How a Grocery Chain Optimizes Workloads with Apache Doris

Cross-cluster replication (CCR) in Apache Doris is proven to be fast, stable, and easy to use. It secures a real-time data synchronization latency of 1 second.

Cross-cluster replication (CCR) in Apache Doris is proven to be fast, stable, and easy to use. It secures a real-time data synchronization latency of 1 second.

23. Performance Benchmark: Apache Spark on DataProc Vs. Google BigQuery

When it comes to Big Data infrastructure on Google Cloud Platform , the most popular choices Data architects need to consider today are Google BigQuery – A serverless, highly scalable and cost-effective cloud data warehouse, Apache Beam based Cloud Dataflow and Dataproc – a fully managed cloud service for running Apache Spark and Apache Hadoop clusters in a simpler, more cost-efficient way.

24. Build vs Buy: What We Learned by Implementing a Data Catalog

Why we chose to finally buy a unified data workspace (Atlan), after spending 1.5 years building our own internal solution with Amundsen and Atlas

Why we chose to finally buy a unified data workspace (Atlan), after spending 1.5 years building our own internal solution with Amundsen and Atlas

25. How To Build An n8n Workflow To Manage Different Databases and Scheduling Workflows

Learn how to build an n8n workflow that processes text, stores data in two databases, and sends messages to Slack.

Learn how to build an n8n workflow that processes text, stores data in two databases, and sends messages to Slack.

26. Build A Crypto Price Tracker using Node.js and Cassandra

Since the big bang in the data technology landscape happened a decade and a half ago, giving rise to technologies like Hadoop, which cater to the four ‘V’s. — volume, variety, velocity, and veracity there has been an uptick in the use of databases with specialized capabilities to cater to different types of data and usage patterns. You can now see companies using graph databases, time-series databases, document databases, and others for different customer and internal workloads.

Since the big bang in the data technology landscape happened a decade and a half ago, giving rise to technologies like Hadoop, which cater to the four ‘V’s. — volume, variety, velocity, and veracity there has been an uptick in the use of databases with specialized capabilities to cater to different types of data and usage patterns. You can now see companies using graph databases, time-series databases, document databases, and others for different customer and internal workloads.

27. How to Scrape NLP Datasets From Youtube

Too lazy to scrape nlp data yourself? In this post, I’ll show you a quick way to scrape NLP datasets using Youtube and Python.

Too lazy to scrape nlp data yourself? In this post, I’ll show you a quick way to scrape NLP datasets using Youtube and Python.

28. 30 BI Engineering Interview Questions That Actually Matter in the AI Era

The BI interview hasn't caught up with the job. Here are 30 questions that reflect what it actually means to be a BI engineer in 2026.

The BI interview hasn't caught up with the job. Here are 30 questions that reflect what it actually means to be a BI engineer in 2026.

29. What the Heck is OpenMetadata?

Everything you've ever wanted to learn about OpenMetadata.

Everything you've ever wanted to learn about OpenMetadata.

30. What the Heck Is SDF?

Is dbt kicking your butt? Take a look at SDF.

Is dbt kicking your butt? Take a look at SDF.

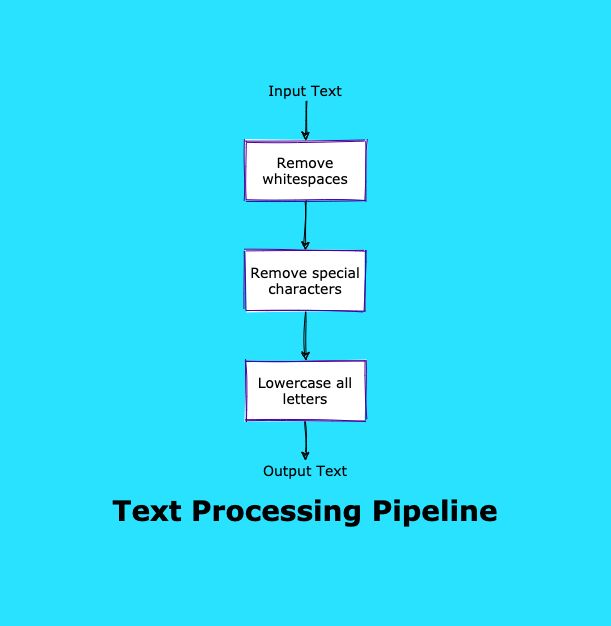

31. How To Create a Python Data Engineering Project with a Pipeline Pattern

In this article, we cover how to use pipeline patterns in python data engineering projects. Create a functional pipeline, install fastcore, and other steps.

In this article, we cover how to use pipeline patterns in python data engineering projects. Create a functional pipeline, install fastcore, and other steps.

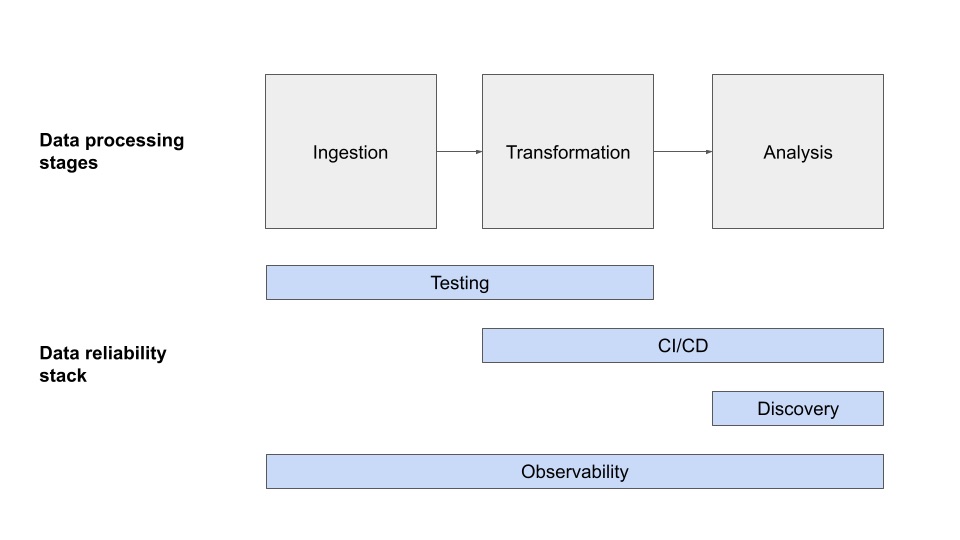

32. What is a Data Reliability Engineer?

With each day, enterprises increasingly rely on data to make decisions.

With each day, enterprises increasingly rely on data to make decisions.

33. What the heck is Apache SeaTunnel?

What is Apache SeaTunnel, and can it help you with your data engineering?

What is Apache SeaTunnel, and can it help you with your data engineering?

34. From Satellite Signals to Neural Networks

See how Andrei Shcherbinin built production-ready ML systems with 12x faster attribution, 95% chatbot automation, and stronger monitoring.

See how Andrei Shcherbinin built production-ready ML systems with 12x faster attribution, 95% chatbot automation, and stronger monitoring.

35. An Architect's Guide to Machine Learning Operations and Required Data Infrastructure

MLOps is a set of practices and tools aimed at addressing the specific needs of engineers building models and moving them into production.

MLOps is a set of practices and tools aimed at addressing the specific needs of engineers building models and moving them into production.

36. A Guide For Data Quality Monitoring with Amazon Deequ

Monitor data quality with Amazon Deequ, InfluxDB, and Grafana in a Dockerized environment using Scala/Java and Apache Spark.

Monitor data quality with Amazon Deequ, InfluxDB, and Grafana in a Dockerized environment using Scala/Java and Apache Spark.

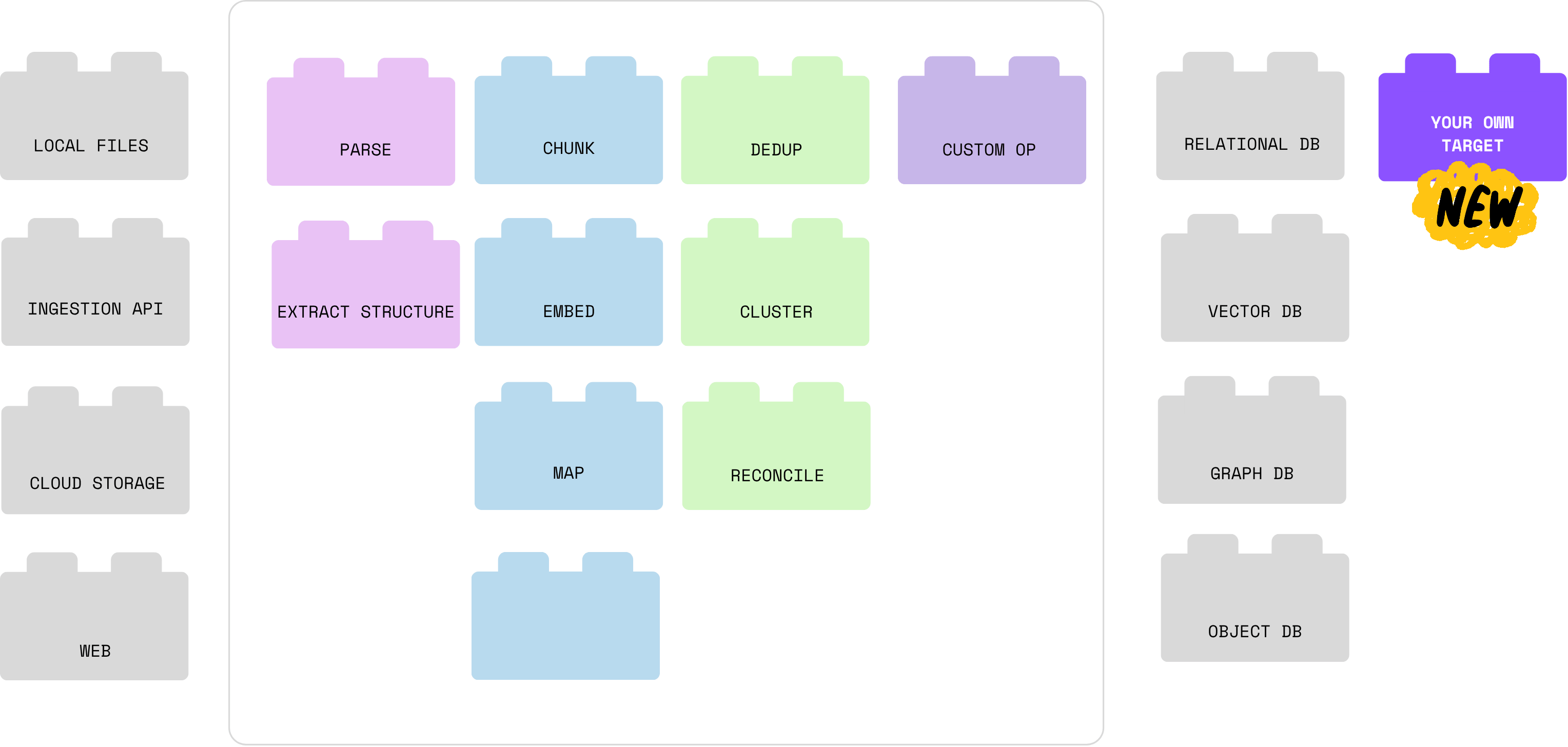

37. AI Native Data Pipeline - What Do We Need?

A new generation of AI-native data pipelines is emerging — built for unstructured data, dynamic schemas, and LLM-powered workloads.

A new generation of AI-native data pipelines is emerging — built for unstructured data, dynamic schemas, and LLM-powered workloads.

38. Is The Modern Data Warehouse Dead?

Do we need a radical new approach to data warehouse technology? An immutable data warehouse starts with the data consumer SLAs and pipes data in pre-modeled.

Do we need a radical new approach to data warehouse technology? An immutable data warehouse starts with the data consumer SLAs and pipes data in pre-modeled.

39. Python & Data Engineering: Under the Hood of Join Operators

In this post, I discuss the algorithms of a nested loop, hash join, and merge join in Python.

In this post, I discuss the algorithms of a nested loop, hash join, and merge join in Python.

40. The Future of Gaming: Leveraging Data Engineering to Revolutionize Player Experience

Explore how data engineering revolutionizes gaming with AI, AR/VR, blockchain, and more, enabling immersive experiences and shaping the industry's future.

Explore how data engineering revolutionizes gaming with AI, AR/VR, blockchain, and more, enabling immersive experiences and shaping the industry's future.

41. What the Heck is dbc?

An overview of dbc, an online open-source tool to facilitate adbc and apache arrow.

An overview of dbc, an online open-source tool to facilitate adbc and apache arrow.

42. Hot-Cold Data Separation: How It Cuts Your Storage Costs by 70%

Apparently hot-cold data separation is hot now. Let's figure out why.

Apparently hot-cold data separation is hot now. Let's figure out why.

43. Scale Your Data Pipelines with Airflow and Kubernetes

It doesn’t matter if you are running background tasks, preprocessing jobs or ML pipelines. Writing tasks is the easy part. The hard part is the orchestration— Managing dependencies among tasks, scheduling workflows and monitor their execution is tedious.

It doesn’t matter if you are running background tasks, preprocessing jobs or ML pipelines. Writing tasks is the easy part. The hard part is the orchestration— Managing dependencies among tasks, scheduling workflows and monitor their execution is tedious.

44. How to Perform Data Augmentation with Augly Library

Data augmentation is a technique used by practitioners to increase the data by creating modified data from the existing data.

Data augmentation is a technique used by practitioners to increase the data by creating modified data from the existing data.

45. Influenza Vaccines: The Data Science Behind Them

46. R Systems Blogbook—Chapter 1 is Now Open for Submissions🎉

Round 1 of the R Systems BlogBook: Chapter 1 contest is now live! Showcase your expertise, participate, and win exciting prizes. Submit your entry today!

Round 1 of the R Systems BlogBook: Chapter 1 contest is now live! Showcase your expertise, participate, and win exciting prizes. Submit your entry today!

47. What the Heck Is LanceDB?

Learn about LanceDB and how it fits into a stack that allows you to more easily create your own LLM models

Learn about LanceDB and how it fits into a stack that allows you to more easily create your own LLM models

48. How to Build a Directed Acyclic Graph (DAG) - Towards Open Options Chains Part IV

In "Towards Open Options Chains", Chris Chow presents his solution for collecting options data: a data pipeline with Airflow, PostgreSQL, and Docker.

In "Towards Open Options Chains", Chris Chow presents his solution for collecting options data: a data pipeline with Airflow, PostgreSQL, and Docker.

49. Optimizing JOIN Operations in Google BigQuery: Strategies to Overcome Performance Challenges

In this article, we explore these challenges and present a strategic approach to optimize JOINs in BigQuery.

In this article, we explore these challenges and present a strategic approach to optimize JOINs in BigQuery.

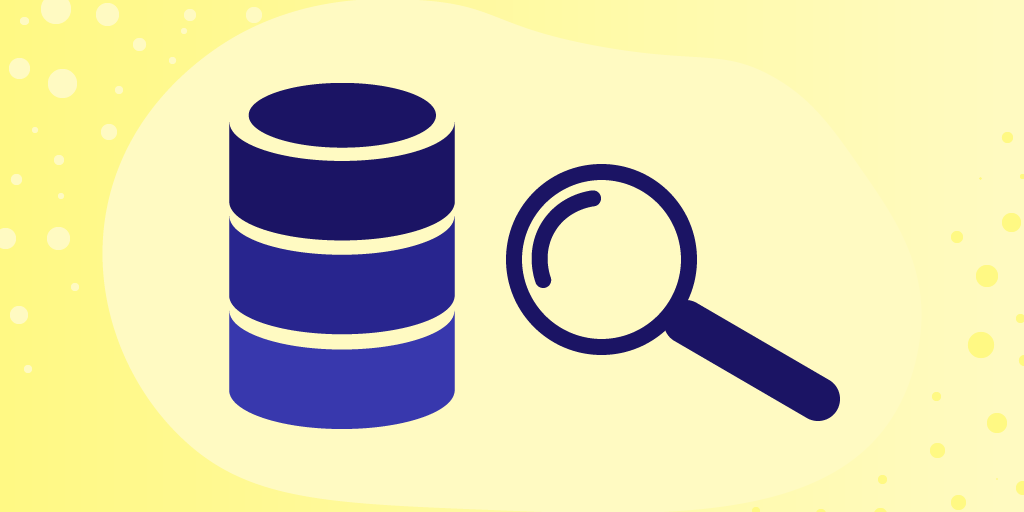

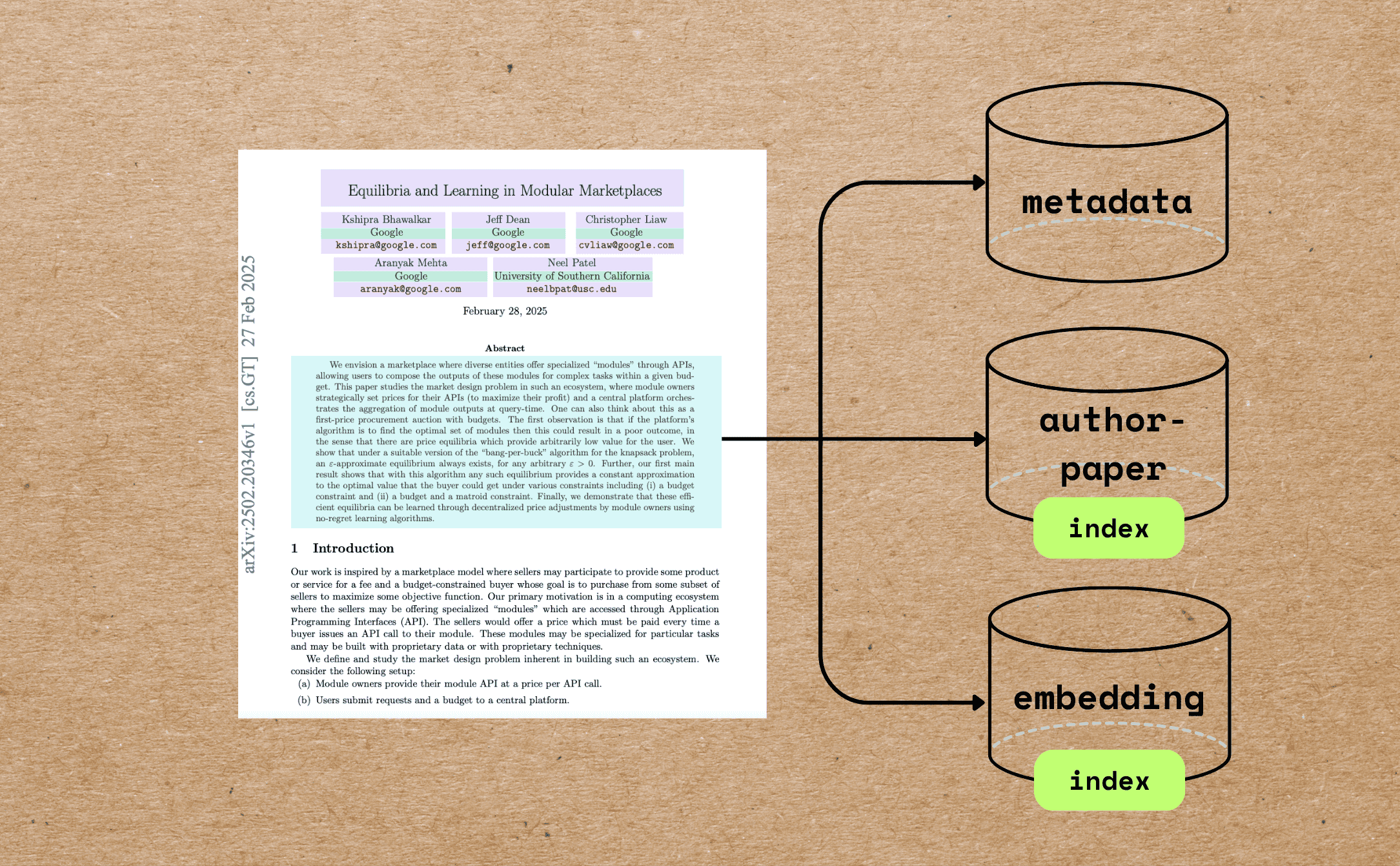

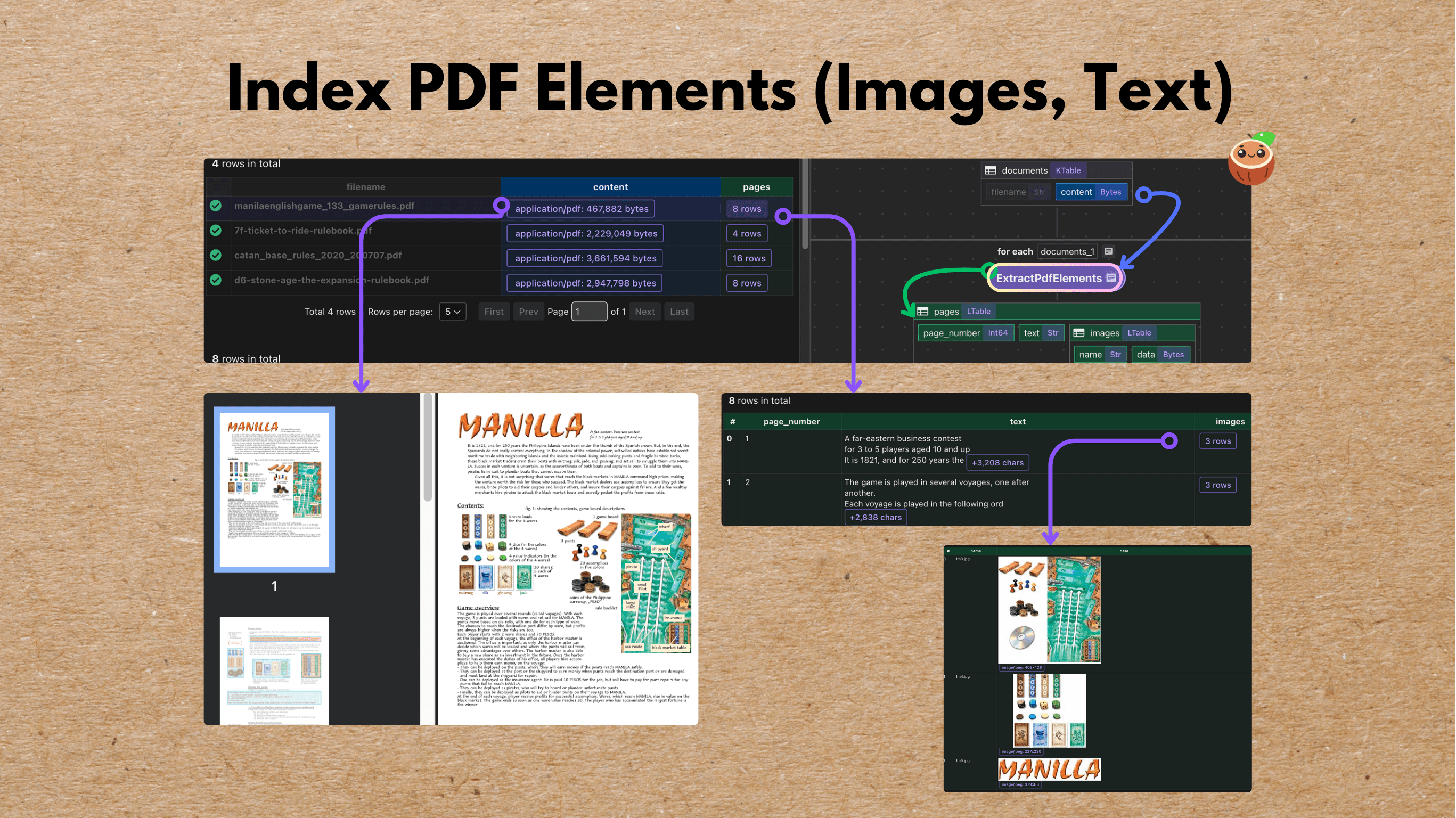

50. Turn Your PDF Library into a Searchable Research Database with 100 Lines of Code

How to index academic research papers by extracting metadata (e.g., title, authors, abstract) for AI agents and AI workflows using LLMs and CocoIndex.

How to index academic research papers by extracting metadata (e.g., title, authors, abstract) for AI agents and AI workflows using LLMs and CocoIndex.

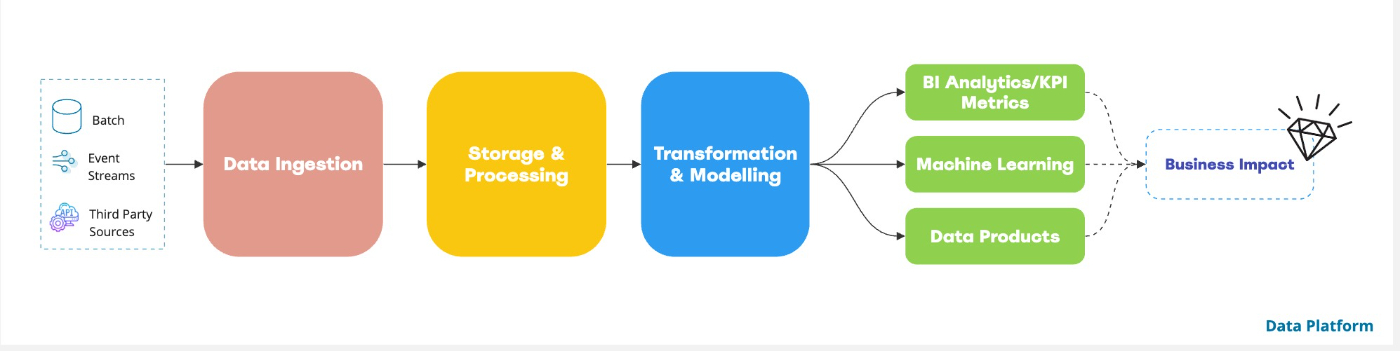

51. One Off to One Data Platform: Designing Data Platforms with Scalable Intent [Part 2]

Introducing a data platform architecture framework that enables organizations to systematically design and implement scalable data platform.

Introducing a data platform architecture framework that enables organizations to systematically design and implement scalable data platform.

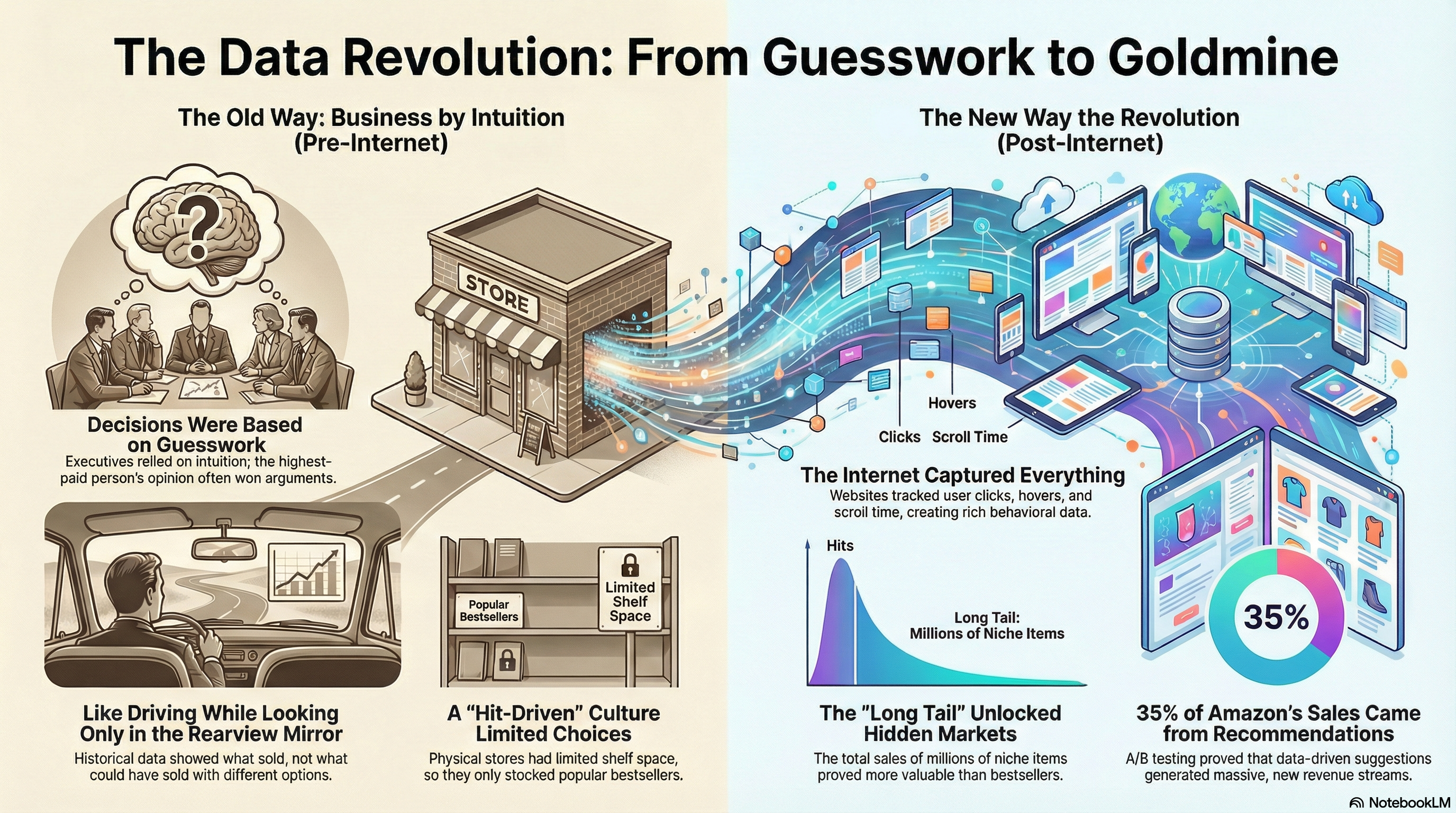

52. What You Already Know About Big Data

Every micro-interaction is silently recorded, analyzed, and monetized.

Every micro-interaction is silently recorded, analyzed, and monetized.

53. What DevOps for Data Really Means

DevOps for Data is not about fixing pipelines or deploying models. It’s about designing systems that remain reliable, secure, and predictable.

DevOps for Data is not about fixing pipelines or deploying models. It’s about designing systems that remain reliable, secure, and predictable.

54. Top 6 CI/CD Practices for End-to-End Development Pipelines

Maximizing efficiency is about knowing how the data science puzzles fit together and then executing them.

Maximizing efficiency is about knowing how the data science puzzles fit together and then executing them.

55. Langchain: Explained and Getting Started

Langchain is a crucial component for developing LLM models. It helps in orchestration and act as building block

Langchain is a crucial component for developing LLM models. It helps in orchestration and act as building block

56. Meet The Entrepreneur: Alon Lev, CEO, Qwak

Meet The Entrepreneur: Alon Lev, CEO, Qwak

Meet The Entrepreneur: Alon Lev, CEO, Qwak

57. How to Extract and Embed Text and Images from PDFs for Unified Semantic Search

Extracts, embeds, and stores multimodal PDF elements — text with SentenceTransformers and images with CLIP — in vector database for unified semantic search.

Extracts, embeds, and stores multimodal PDF elements — text with SentenceTransformers and images with CLIP — in vector database for unified semantic search.

58. Certify Your Data Assets to Avoid Treating Your Data Engineers Like Catalogs

Data trust starts and ends with communication. Here’s how best-in-class data teams are certifying tables as approved for use across their organization.

Data trust starts and ends with communication. Here’s how best-in-class data teams are certifying tables as approved for use across their organization.

59. What is the Future of the Data Engineer? - 6 Industry Drivers

Is the data engineer still the "worst seat at the table?" Maxime Beauchemin, creator of Apache Airflow and Apache Superset, weighs in.

Is the data engineer still the "worst seat at the table?" Maxime Beauchemin, creator of Apache Airflow and Apache Superset, weighs in.

60. LLMs in Data Engineering: Not Just Hype, Here’s What’s Real

Large Language Models (LLMs) represent artificial intelligence systems which learn human language from massive text databases.

Large Language Models (LLMs) represent artificial intelligence systems which learn human language from massive text databases.

61. Who Will Eventually Control Big Data in Web3?

Web 3 is loudly making rounds as a decentralized internet. How will this affect data control in general?

Web 3 is loudly making rounds as a decentralized internet. How will this affect data control in general?

62. How to Get Started with Data Version Control (DVC)

Data Version Control (DVC) is a data-focused version of Git. In fact, it’s almost exactly like Git in terms of features and workflows associated with it.

Data Version Control (DVC) is a data-focused version of Git. In fact, it’s almost exactly like Git in terms of features and workflows associated with it.

63. 10 Key Skills Every Data Engineer Needs

Bridging the gap between Application Developers and Data Scientists, the demand for Data Engineers rose up to 50% in 2020, especially due to increase in investments in AI-based SaaS products.

Bridging the gap between Application Developers and Data Scientists, the demand for Data Engineers rose up to 50% in 2020, especially due to increase in investments in AI-based SaaS products.

64. PandasAI: Chat with Your Data, Literally

PandasAI is an open-source tool that makes data analysis feel like a casual chat with a data-savvy friend.

PandasAI is an open-source tool that makes data analysis feel like a casual chat with a data-savvy friend.

65. Building a Large-Scale Interactive SQL Query Engine with Open Source Software

This is a collaboration between Baolong Mao's team at JD.com and my team at Alluxio. The original article was published on Alluxio's blog. This article describes how JD built an interactive OLAP platform combining two open-source technologies: Presto and Alluxio.

This is a collaboration between Baolong Mao's team at JD.com and my team at Alluxio. The original article was published on Alluxio's blog. This article describes how JD built an interactive OLAP platform combining two open-source technologies: Presto and Alluxio.

66. How To Productionalize ML By Development Of Pipelines Since The Beginning

Writing ML code as pipelines from the get-go reduces technical debt and increases velocity of getting ML in production.

Writing ML code as pipelines from the get-go reduces technical debt and increases velocity of getting ML in production.

67. Data Engineering Tools for Geospatial Data

Location-based information makes the field of geospatial analytics so popular today. Collecting useful data requires some unique tools covered in this blog.

Location-based information makes the field of geospatial analytics so popular today. Collecting useful data requires some unique tools covered in this blog.

68. What the Heck is Apache Iggy?

Apache Kafka has gotten rather long in the tooth, is Apache Iggy the successor?

Apache Kafka has gotten rather long in the tooth, is Apache Iggy the successor?

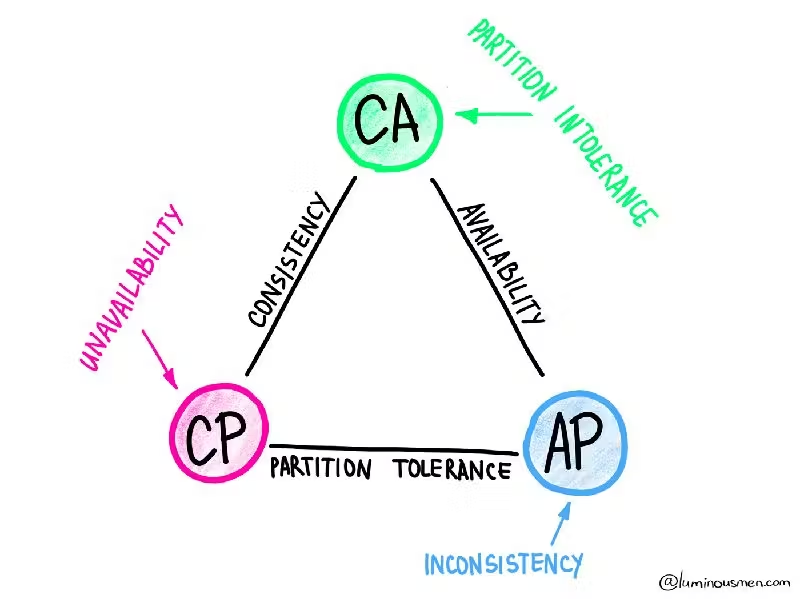

69. Why Distributed Systems Can’t Have It All

Modern distributed systems are all about tradeoffs. Performance, reliability, scalability, and consistency don't come for free—you always pay a price somewhere.

Modern distributed systems are all about tradeoffs. Performance, reliability, scalability, and consistency don't come for free—you always pay a price somewhere.

70. The Emerging Data Engineering Trends You Should Check Out In 2024

Integrating data engineering with AI has led to the popularity of modern data integration and the expertise required.

Integrating data engineering with AI has led to the popularity of modern data integration and the expertise required.

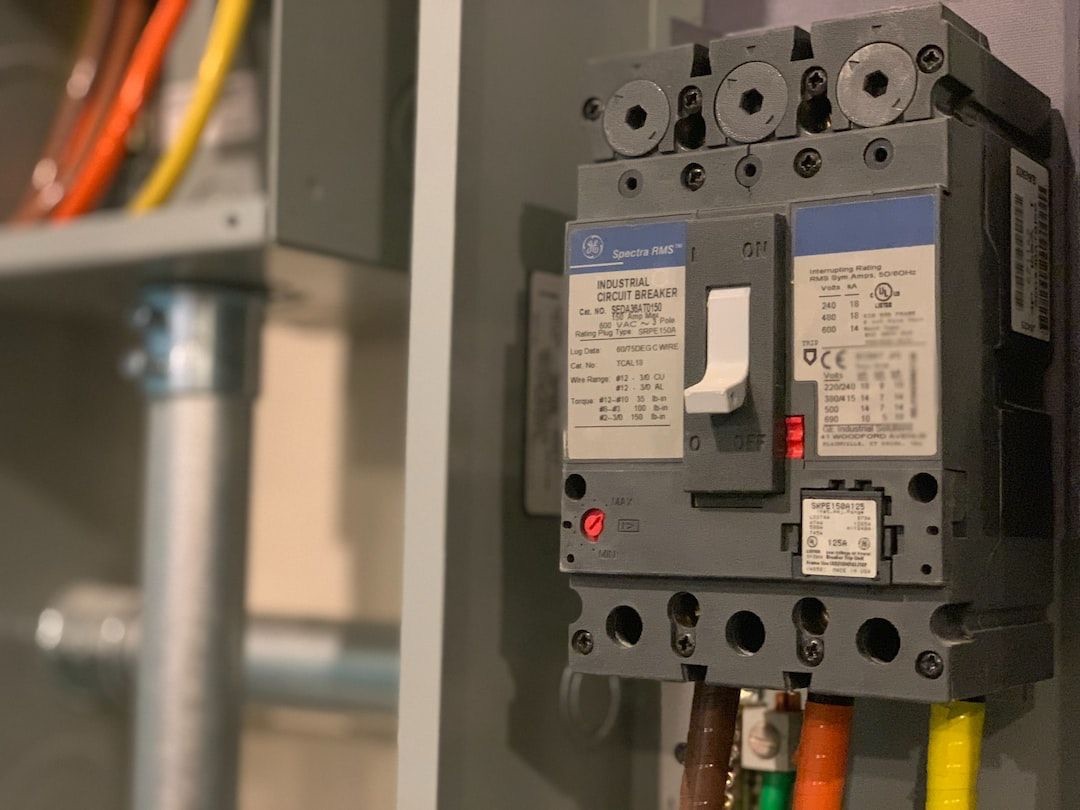

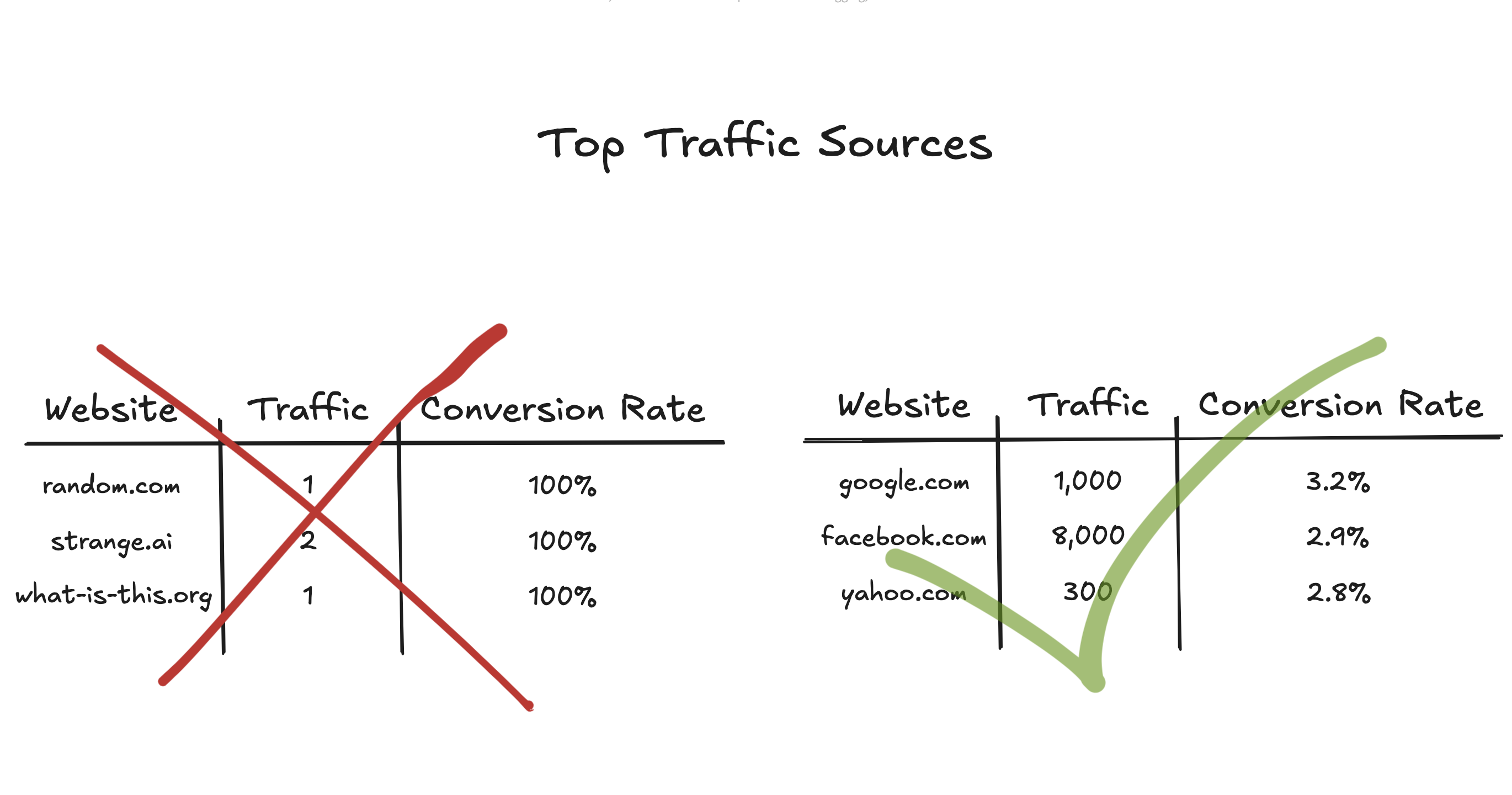

71. Want to Create Data Circuit Breakers with Airflow? Here's How!

See how to leverage the Airflow ShortCircuitOperator to create data circuit breakers to prevent bad data from reaching your data pipelines.

See how to leverage the Airflow ShortCircuitOperator to create data circuit breakers to prevent bad data from reaching your data pipelines.

72. How to Build Machine Learning Algorithms that Actually Work

Applying machine learning models at scale in production can be hard. Here's the four biggest challenges data teams face and how to solve them.

Applying machine learning models at scale in production can be hard. Here's the four biggest challenges data teams face and how to solve them.

73. Hands-on with Apache Iceberg on Your Laptop: Deep Dive with Apache Spark, Nessie, Minio and more!

Get hands-on with Apache Iceberg by building a prototype data lakehouse on your laptop.

Get hands-on with Apache Iceberg by building a prototype data lakehouse on your laptop.

74. What The Heck is DeltaStream?

A brief run-through of DeltaStream and how it simplifies working with streaming data such as Kinesis and Apache Kafka, taking advantage of Apache Flink.

A brief run-through of DeltaStream and how it simplifies working with streaming data such as Kinesis and Apache Kafka, taking advantage of Apache Flink.

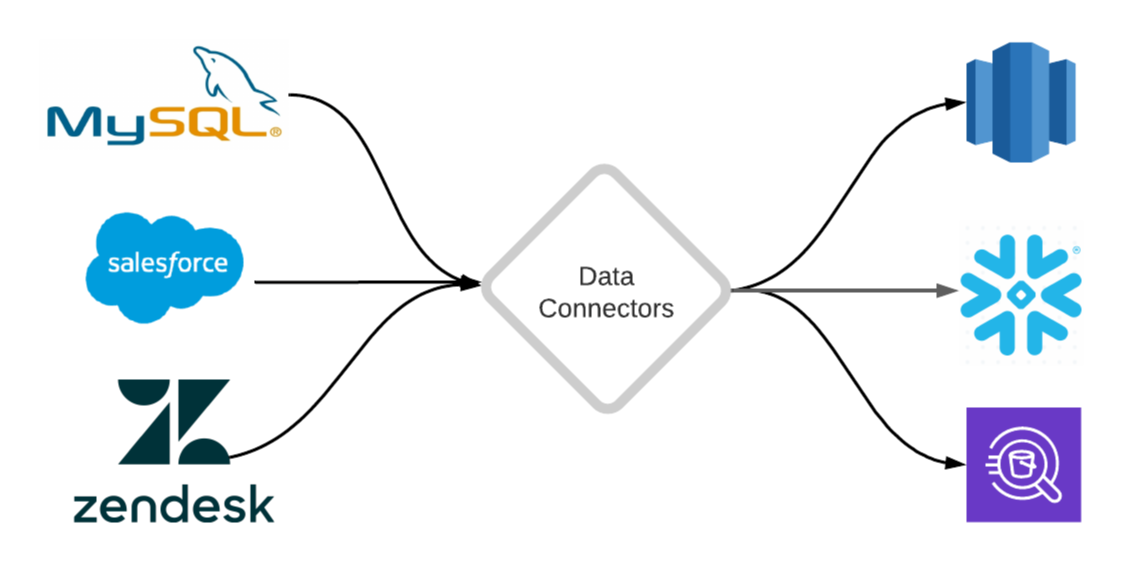

75. An Introduction to Data Connectors: Your First Step to Data Analytics

This post explains what a data connector is and provides a framework for building connectors that replicate data from different sources into your data warehouse

This post explains what a data connector is and provides a framework for building connectors that replicate data from different sources into your data warehouse

76. How To Build a Multilingual Text-to-Audio Converter With Python

Learn how to build a multilingual text-to-audio converter using Python. This guide covers essential libraries, techniques, and best practices

Learn how to build a multilingual text-to-audio converter using Python. This guide covers essential libraries, techniques, and best practices

77. LinkedIn's Skills Graph: Paving the Way for the Skills-First Economy with AI and Ontology

What is a skills-based economy and how is LinkedIn moving from vision to implementation? There’s AI, taxonomy and ontology involved in building the Skills Graph

What is a skills-based economy and how is LinkedIn moving from vision to implementation? There’s AI, taxonomy and ontology involved in building the Skills Graph

78. Breaking Down Data Silos: How Apache Doris Streamlines Customer Data Integration

Learn how Apache Doris breaks down data silos for insurance firms, streamlining customer data integration and boosting efficiency.

Learn how Apache Doris breaks down data silos for insurance firms, streamlining customer data integration and boosting efficiency.

79. The Growth Marketing Writing Contest by mParticle and HackerNoon

mParticle & HackerNoon are excited to host a Growth Marketing Writing Contest. Here’s your chance to win money from a whopping $12,000 prize pool!

mParticle & HackerNoon are excited to host a Growth Marketing Writing Contest. Here’s your chance to win money from a whopping $12,000 prize pool!

80. The Two Types of Data Engineers You Meet at Work

Discover different archetypes of data engineers and how their collaboration drives data-driven success.

Discover different archetypes of data engineers and how their collaboration drives data-driven success.

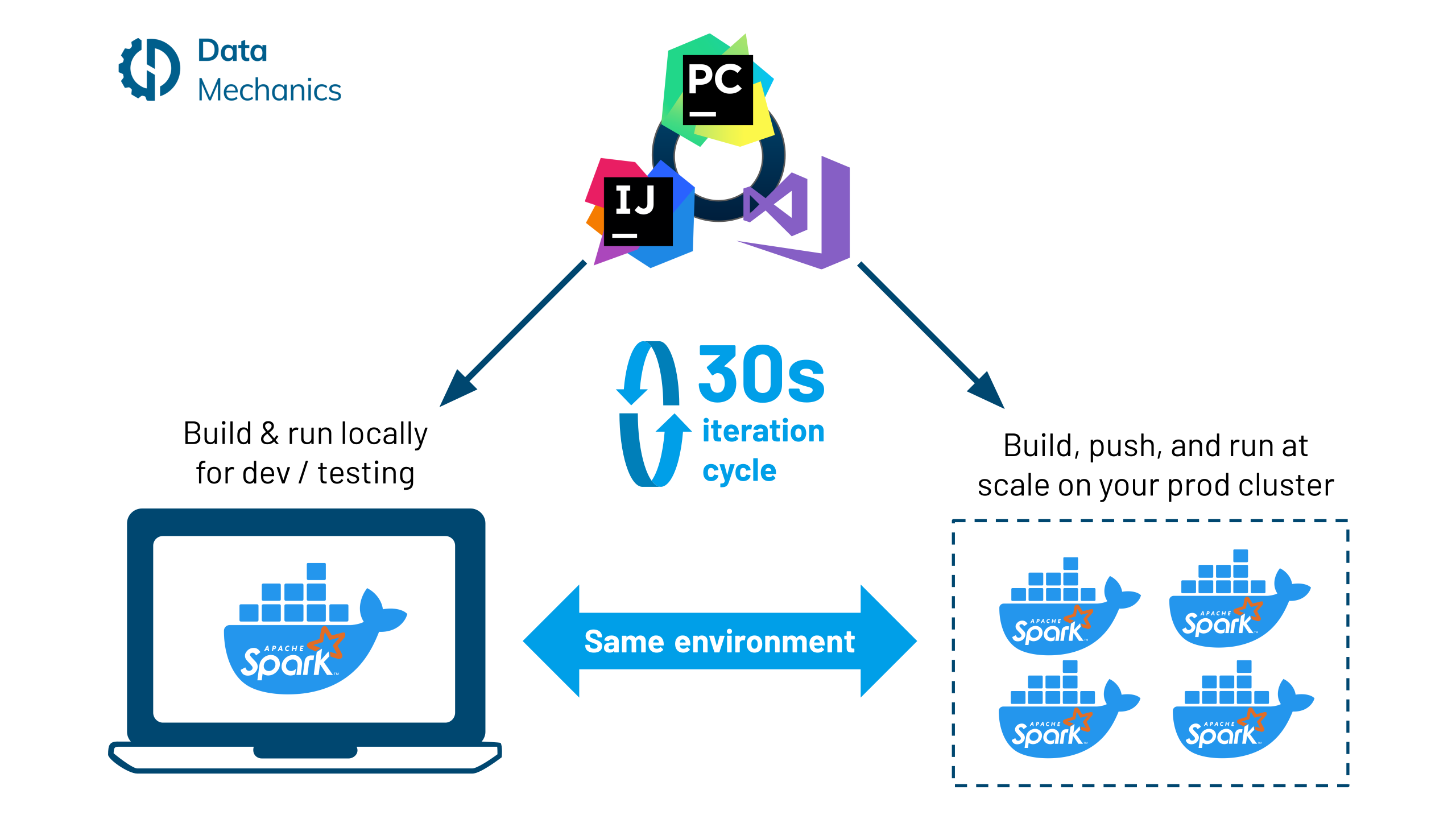

81. Docker Dev Workflow for Apache Spark

The benefits that come with using Docker containers are well known: they provide consistent and isolated environments so that applications can be deployed anywhere - locally, in dev / testing / prod environments, across all cloud providers, and on-premise - in a repeatable way.

The benefits that come with using Docker containers are well known: they provide consistent and isolated environments so that applications can be deployed anywhere - locally, in dev / testing / prod environments, across all cloud providers, and on-premise - in a repeatable way.

82. Google & Yale Turned Biology Into a Language Here's Why That's a Game-Changer for Devs

The team built a 27B parameter model that didn't just analyze biological data—it made a novel, wet-lab-validated scientific discovery

The team built a 27B parameter model that didn't just analyze biological data—it made a novel, wet-lab-validated scientific discovery

83. How to Scale AI Infrastructure With Kubernetes and Docker

Firms increasingly make use of artificial intelligence (AI) infrastructures to host and manage autonomous workloads.

Firms increasingly make use of artificial intelligence (AI) infrastructures to host and manage autonomous workloads.

84. How to Think Like a Data Systems Engineer: The Questions That Save You Later

Learn how engineers think about reliability, scalability, and maintainability—by asking the right questions early.

Learn how engineers think about reliability, scalability, and maintainability—by asking the right questions early.

85. Introduction to Great Expectations, an Open Source Data Science Tool

This is the first completed webinar of our “Great Expectations 101” series. The goal of this webinar is to show you what it takes to deploy and run Great Expectations successfully.

This is the first completed webinar of our “Great Expectations 101” series. The goal of this webinar is to show you what it takes to deploy and run Great Expectations successfully.

86. How We Use dbt (Client) In Our Data Team

Here is not really an article, but more some notes about how we use dbt in our team.

Here is not really an article, but more some notes about how we use dbt in our team.

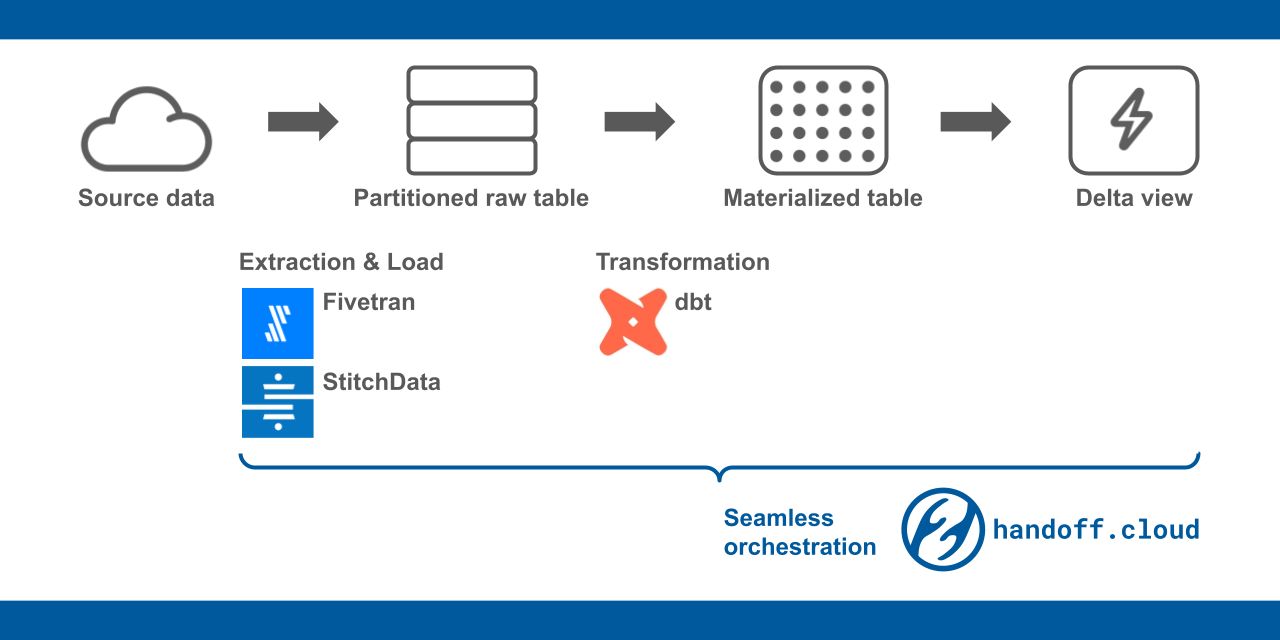

87. Introducing Handoff: Serverless Data Pipeline Orchestration Framework

88. Advancing Data Quality: Exploring Data Contracts with Lyft

Keen to delve into data contracts and discover how they can enhance your data quality? Join me as we explore Lyft's Verity data contract approach together!

Keen to delve into data contracts and discover how they can enhance your data quality? Join me as we explore Lyft's Verity data contract approach together!

89. Understand Apache Airflow in 2024: Hints by Data Scientist

A great guide, on how to learn Apache Airflow from scratch in 2024. This article covers basic concepts of Airflow and useful for Data Scientist, Data Engineers

A great guide, on how to learn Apache Airflow from scratch in 2024. This article covers basic concepts of Airflow and useful for Data Scientist, Data Engineers

90. Your Machine Learning Model Doesn’t Need a Server Anymore

Discover how serverless AI/ML pipelines streamline data engineering by automating scalable data processing and deployment without infrastructure management.

Discover how serverless AI/ML pipelines streamline data engineering by automating scalable data processing and deployment without infrastructure management.

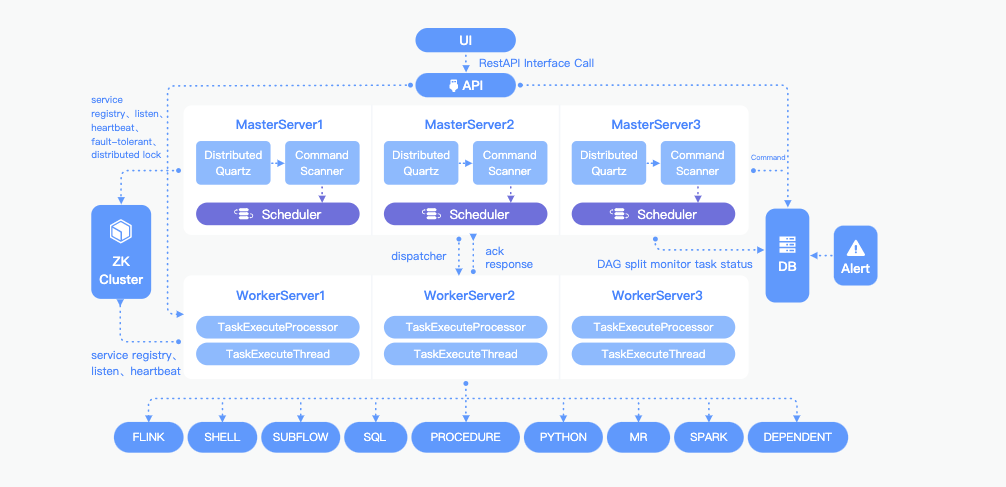

91. Breaking Down the Worker Task Execution in Apache DolphinScheduler

Discover how Apache DolphinScheduler's Worker tasks function within its distributed, open-source workflow scheduling system.

Discover how Apache DolphinScheduler's Worker tasks function within its distributed, open-source workflow scheduling system.

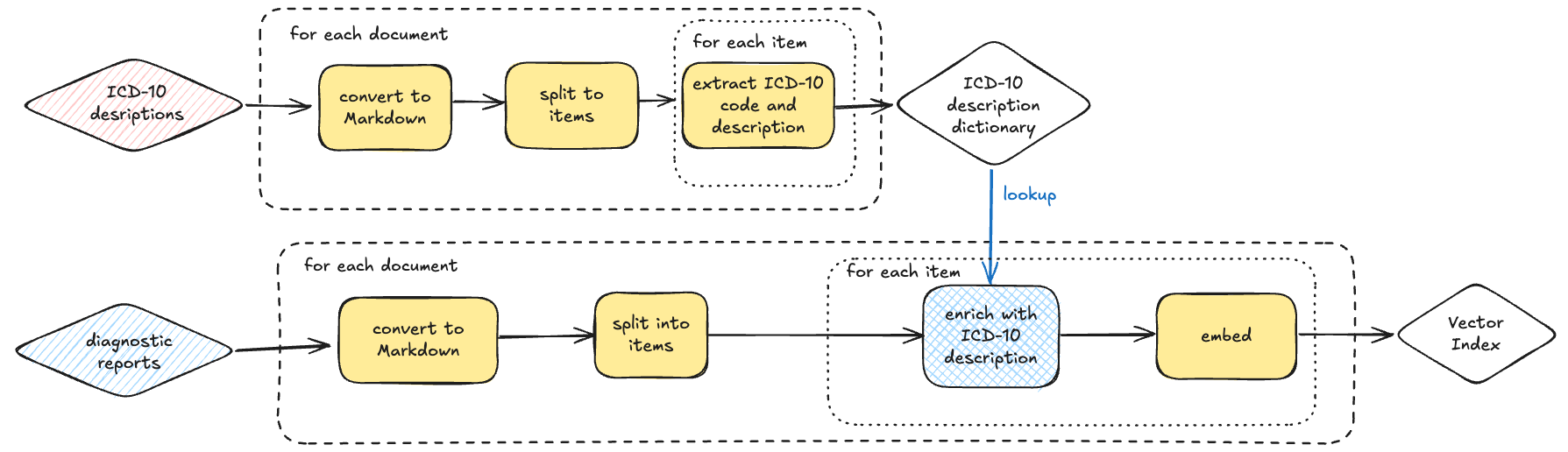

92. How to Design Customizable Data Indexing Pipelines

Learn how custom transformation logic enhances data indexing with AI, vector search, TF-IDF, metadata enrichment, and optimized document chunking.

Learn how custom transformation logic enhances data indexing with AI, vector search, TF-IDF, metadata enrichment, and optimized document chunking.

93. Data Teams Need Better KPIs. Here's How.

Here are six important steps for setting goals for data teams.

Here are six important steps for setting goals for data teams.

94. Coming Soon: R Systems BlogBook – Chapter 1, Powered by HackerNoon

The R Systems BlogBook contest, powered by HackerNoon, is coming soon! Get ready to share your experiences and win exciting prizes—stay tuned for more details.

The R Systems BlogBook contest, powered by HackerNoon, is coming soon! Get ready to share your experiences and win exciting prizes—stay tuned for more details.

95. Creating Data Pipelines With Apache Airflow and MinIO

MinIO is the perfect companion for Airflow because of its industry-leading performance and scalability, which puts every data-intensive workload within reach.

MinIO is the perfect companion for Airflow because of its industry-leading performance and scalability, which puts every data-intensive workload within reach.

96. Best Types of Data Visualization

Learning about best data visualisation tools may be the first step in utilising data analytics to your advantage and the benefit of your company

Learning about best data visualisation tools may be the first step in utilising data analytics to your advantage and the benefit of your company

97. Context Rot Is Breaking Long AI Sessions

Bigger context windows help, but not enough. Learn how Recursive Language Models improve long-context reasoning with better scaling and stable performance.

Bigger context windows help, but not enough. Learn how Recursive Language Models improve long-context reasoning with better scaling and stable performance.

98. Step-by-Step Guide to SQL Operations in Dremio and Apache Iceberg

Learn to set up a robust data lakehouse environment with Apache Iceberg, Dremio, and Nessie for scalable SQL operations.

Learn to set up a robust data lakehouse environment with Apache Iceberg, Dremio, and Nessie for scalable SQL operations.

99. Data Drama: Navigating the Spark-Flink Dilemma

Explore Apache Flink and Spark in real-world business scenarios. Choose the right tool for your big data needs

Explore Apache Flink and Spark in real-world business scenarios. Choose the right tool for your big data needs

100. How to Build a Data Dashboard Using Airbyte and Streamlit

In this tutorial, we built a real-time data dashboard using Airbyte and Streamlit, in Python programming language.

In this tutorial, we built a real-time data dashboard using Airbyte and Streamlit, in Python programming language.

101. Trying to Scale Apache Kafka? Consider Using Apache Pulsar

We compare the differences between Kafka and Pulsar, demonstrating how a logical next step for scalability when using Kafka is switching to Pulsar.

We compare the differences between Kafka and Pulsar, demonstrating how a logical next step for scalability when using Kafka is switching to Pulsar.

102. From Centralized to Federated: Evolving Data Governance Operating Model

See how a federated data governance model address challenges of centralized systems by enabling flexibility, regulatory compliance, and innovation for business

See how a federated data governance model address challenges of centralized systems by enabling flexibility, regulatory compliance, and innovation for business

103. How to Flatten Nested JSON and XML in Apache Spark

Flatten nested JSON and XML dynamically in Spark using a recursive PySpark function for analytics-ready data without hardcoding.

Flatten nested JSON and XML dynamically in Spark using a recursive PySpark function for analytics-ready data without hardcoding.

104. How to Setup Your Organisation's Data Team for Success

Best practices for building a data team at a hypergrowth startup, from hiring your first data engineer to IPO.

Best practices for building a data team at a hypergrowth startup, from hiring your first data engineer to IPO.

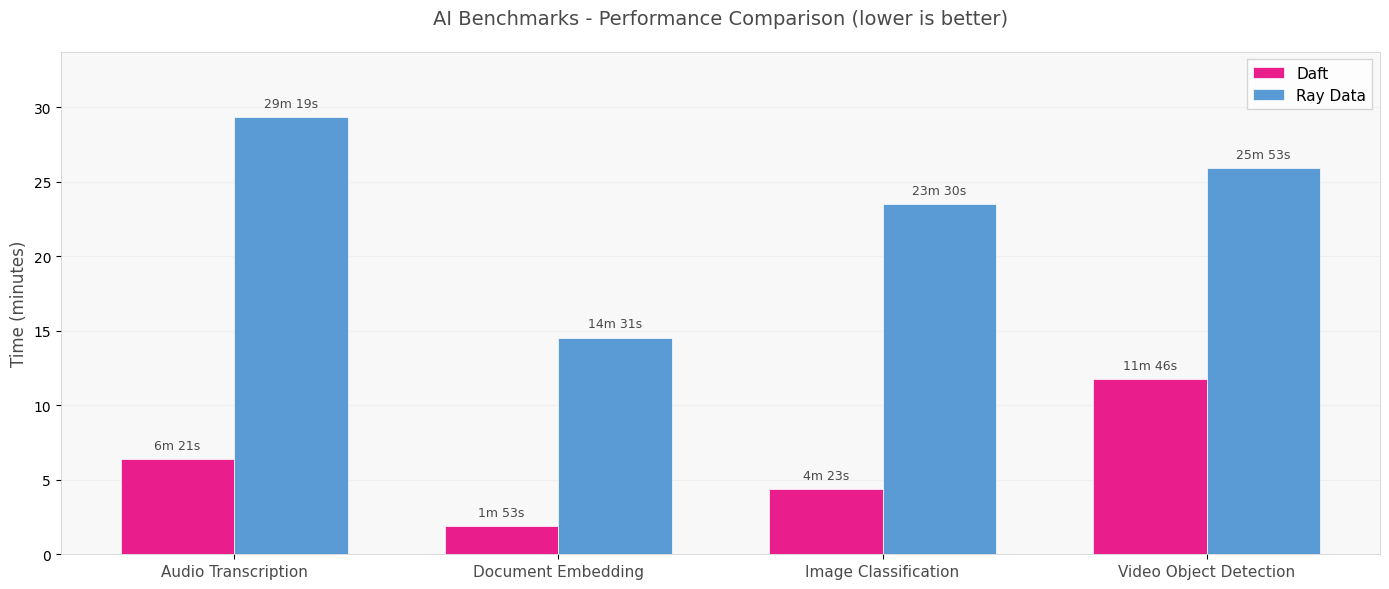

105. Why Multimodal AI Broke the Data Pipeline — And How Daft Is Beating Ray and Spark to Fix It

Multimodal AI workloads are breaking Spark and Ray. See how Daft’s streaming model runs 7× faster and more reliably across audio, video, and image pipelines.

Multimodal AI workloads are breaking Spark and Ray. See how Daft’s streaming model runs 7× faster and more reliably across audio, video, and image pipelines.

106. Using Arrow Flight SQL Protocol in Apache Doris 2.1 For Super Fast Data Transfer

Apache Doris 2.1 just got a major speed boost with Arrow Flight SQL for up to 10x faster data transfers.

Apache Doris 2.1 just got a major speed boost with Arrow Flight SQL for up to 10x faster data transfers.

107. Machine-Learning Neural Spatiotemporal Signal Processing with PyTorch Geometric Temporal

PyTorch Geometric Temporal is a deep learning library for neural spatiotemporal signal processing.

PyTorch Geometric Temporal is a deep learning library for neural spatiotemporal signal processing.

108. Writing Pandas to Make Your Python Code Scale

Write efficient and flexible data-pipelines in Python that generalise to changing requirements.

Write efficient and flexible data-pipelines in Python that generalise to changing requirements.

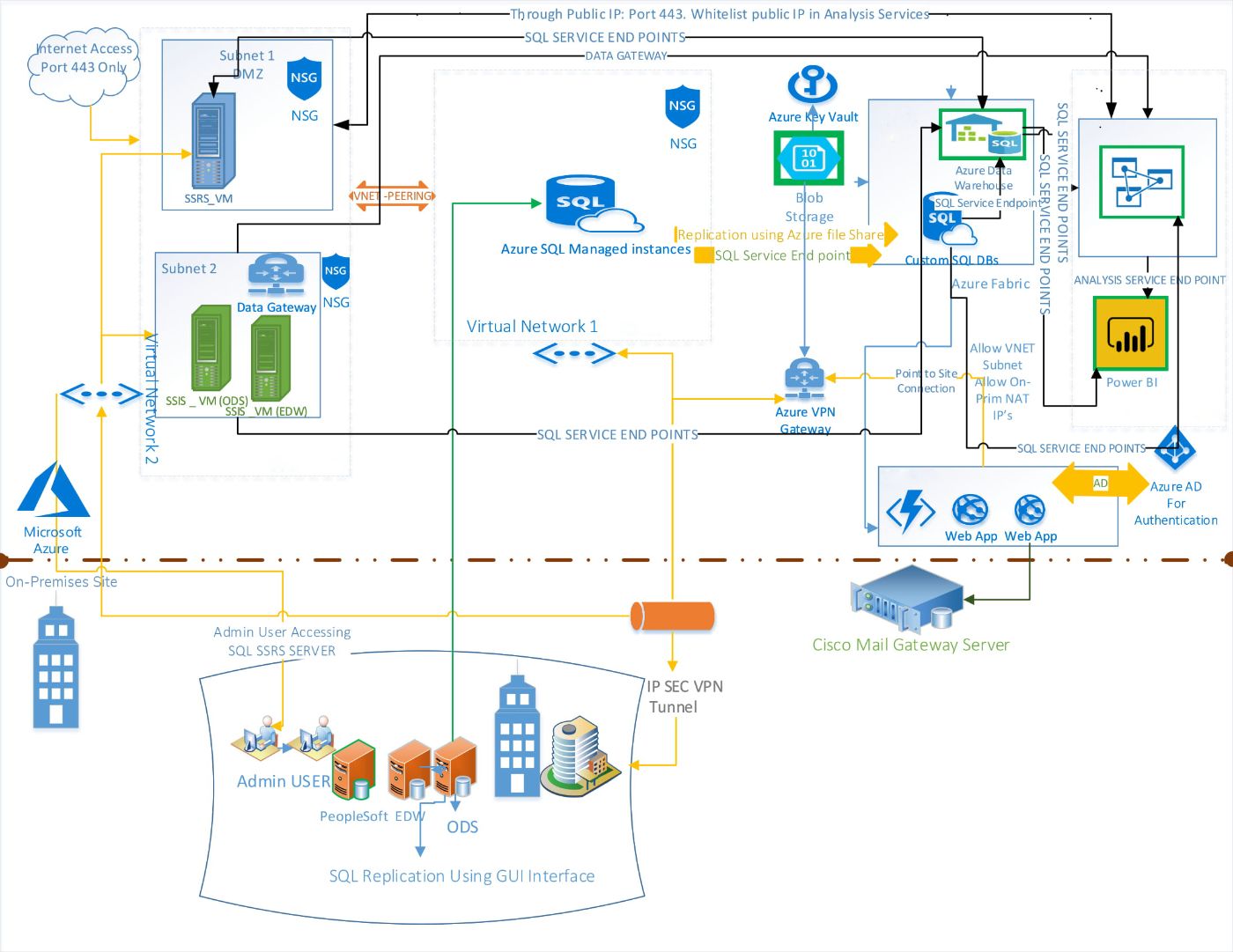

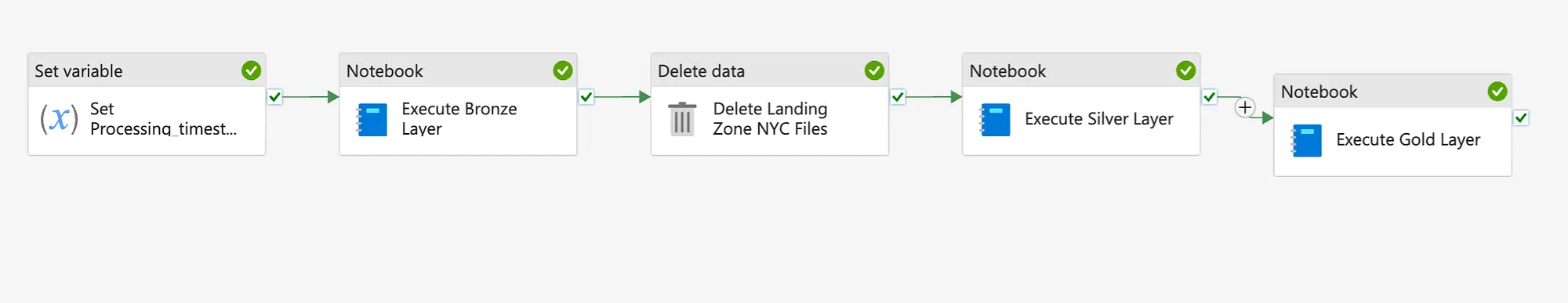

109. Seamlessly Migrate Your On-Premise Data Pipeline to Azure with These Key Steps

Scaling AI/ML Data Needs: Migrating On-Premise Data Engineering Workloads to Azure Cloud

Scaling AI/ML Data Needs: Migrating On-Premise Data Engineering Workloads to Azure Cloud

110. Inside the Bonkers DIY Project to Corral Every Gadget Rumor on Earth

My attempt to noodle around.

My attempt to noodle around.

111. Hands-on with Apache Iceberg & Dremio on Your Laptop within 10 Minutes

From creating and querying Iceberg tables to managing branches and snapshots with Nessie’s Git-like controls, you’ve seen how this stack can simplify complex da

From creating and querying Iceberg tables to managing branches and snapshots with Nessie’s Git-like controls, you’ve seen how this stack can simplify complex da

112. Why Microservices Suck At Machine Learning...and What You Can Do About It

113. Change Data Capture (CDC) When There is no CDC

How to handle changing data when the source system doesn't help.

How to handle changing data when the source system doesn't help.

114. Data Engineering: What’s the Value of API Security in the Generative AI Era?

Discover the importance of API security in the age of Generative AI. Learn how robust API protection ensures data integrity.

Discover the importance of API security in the age of Generative AI. Learn how robust API protection ensures data integrity.

115. Beyond Data: The Rising Need for AI Security

As organizations increasingly deploy AI systems for decision-making, ensuring both data and AI pipeline security becomes critical to safeguard integrity, trust.

As organizations increasingly deploy AI systems for decision-making, ensuring both data and AI pipeline security becomes critical to safeguard integrity, trust.

116. Kafka Schema Evolution: A Guide to the Confluent Schema Registry

Learn Kafka Schema Evolution: Understand, Manage & Scale Data Streams with Confluent Schema Registry. Essential for Data Engineers & Architects.

Learn Kafka Schema Evolution: Understand, Manage & Scale Data Streams with Confluent Schema Registry. Essential for Data Engineers & Architects.

117. The Role of Ontologies in Data Management

Ontologies organize data, enhance interoperability, and drive insights across domains with structured frameworks.

Ontologies organize data, enhance interoperability, and drive insights across domains with structured frameworks.

118. How Datadog Revealed Hidden AWS Performance Problems

Migrating from Convox to Nomad and some AWS performance issues we encountered along the way thanks to Datadog

Migrating from Convox to Nomad and some AWS performance issues we encountered along the way thanks to Datadog

119. I Built a RAG System for Our Analytics Team. It Worked Great Until We Added Real Data.

Everyone's demo uses 50 documents and a clean knowledge base. We had 14,000 files and a decade of conflicting policies.

Everyone's demo uses 50 documents and a clean knowledge base. We had 14,000 files and a decade of conflicting policies.

120. I Gave 5 Teams the Same Dashboard - Only 1 Made a Decision With It

Build for the decision, not the data. If you can't name the specific decision a dashboard is supposed to support, you're building a museum exhibit

Build for the decision, not the data. If you can't name the specific decision a dashboard is supposed to support, you're building a museum exhibit

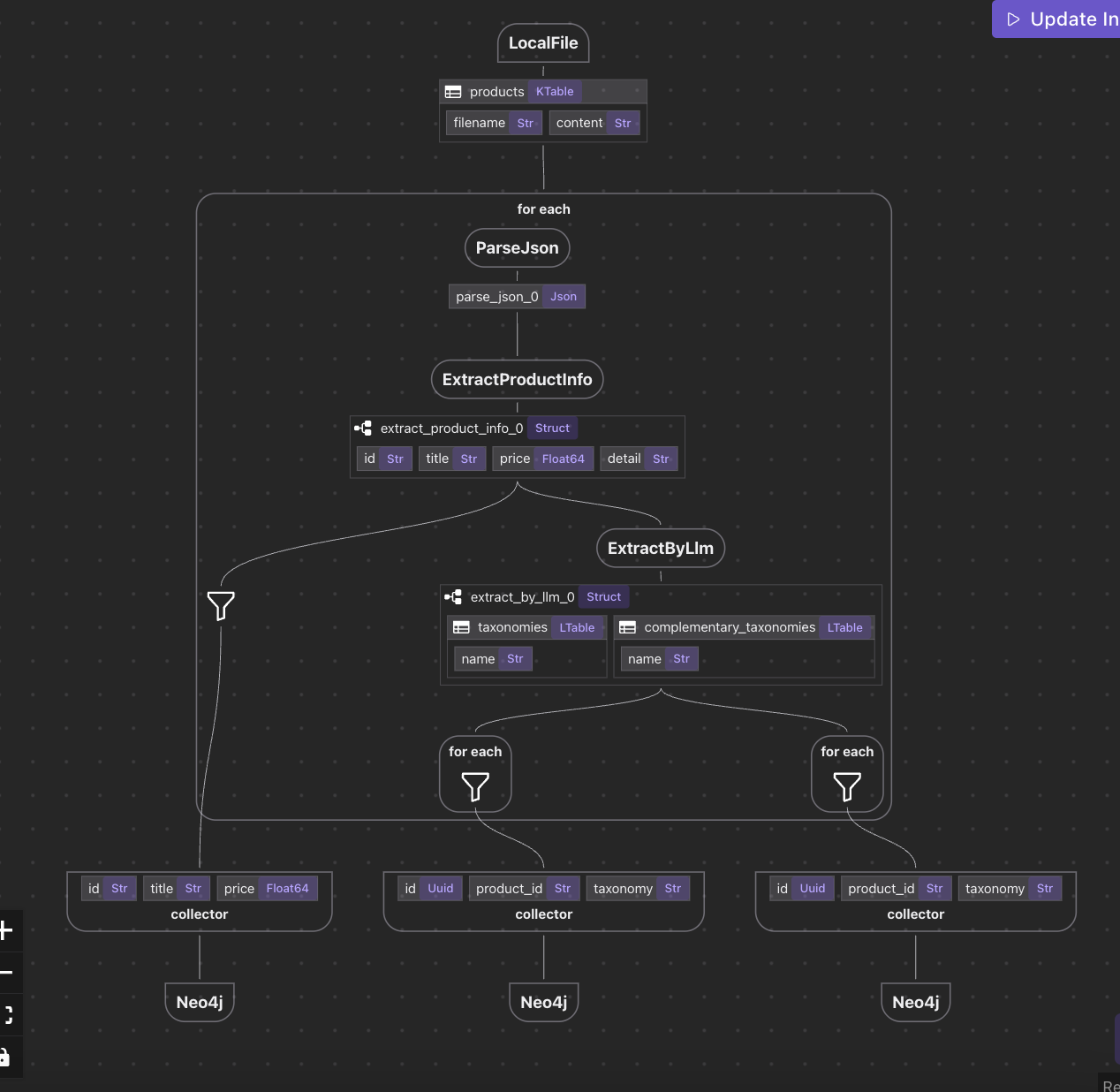

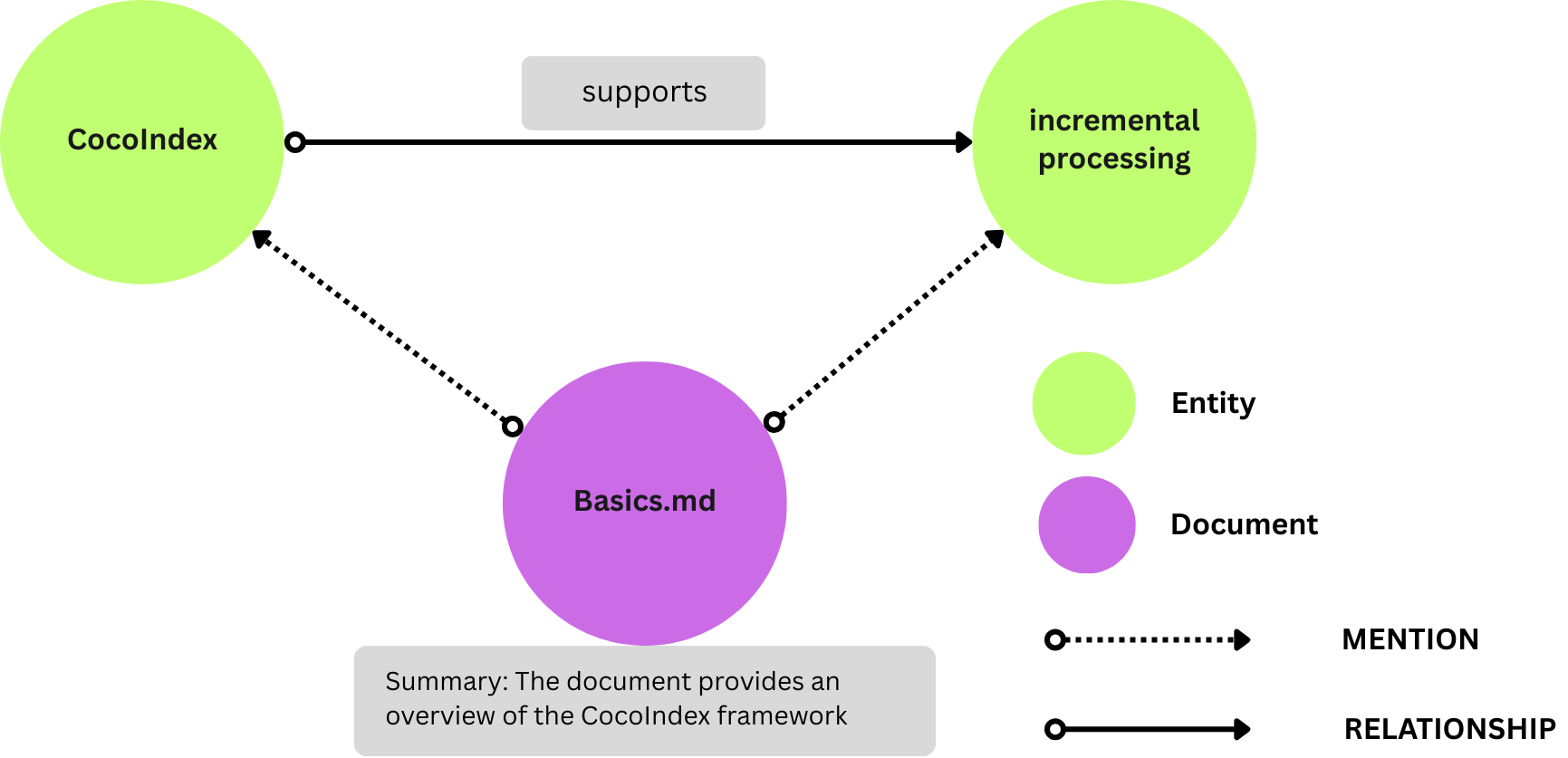

121. Redefining Data Operations With Data Flow Programming in CocoIndex

Discover how CocoIndex transforms data orchestration with a pure Data Flow Programming model — ensuring traceable, immutable, and declarative pipelines for know

Discover how CocoIndex transforms data orchestration with a pure Data Flow Programming model — ensuring traceable, immutable, and declarative pipelines for know

122. I Asked 5 LLMs to Write the Same SQL Query. Here's How Wrong They Got It

I tested 5 LLMs on 10 real SQL queries and graded them against actual data. Here's the scoreboard and the failure mode that should worry you most.

I tested 5 LLMs on 10 real SQL queries and graded them against actual data. Here's the scoreboard and the failure mode that should worry you most.

123. The DeltaLog: Fundamentals of Delta Lake [Part 2]

Multi-part series that will take you from beginner to expert in Delta Lake

Multi-part series that will take you from beginner to expert in Delta Lake

124. Optimizing Airflow: A Case Study in Cloud Resource Efficiency

Learn cost-effective Apache Airflow optimization for intermittent tasks. Explore Google Cloud automation, reducing idle time, and minimizing costs

Learn cost-effective Apache Airflow optimization for intermittent tasks. Explore Google Cloud automation, reducing idle time, and minimizing costs

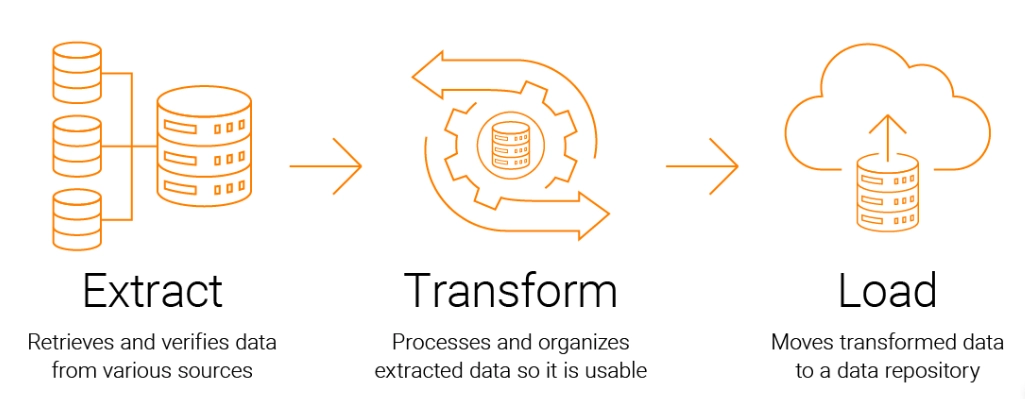

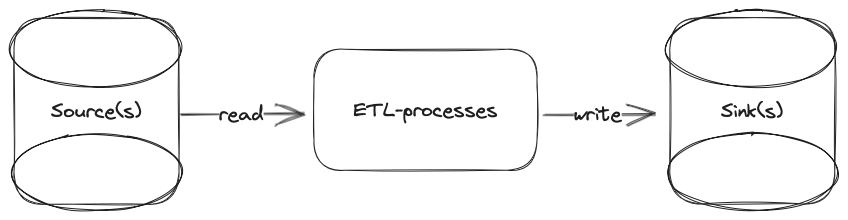

125. What's the Deal With Data Engineers Anyway?

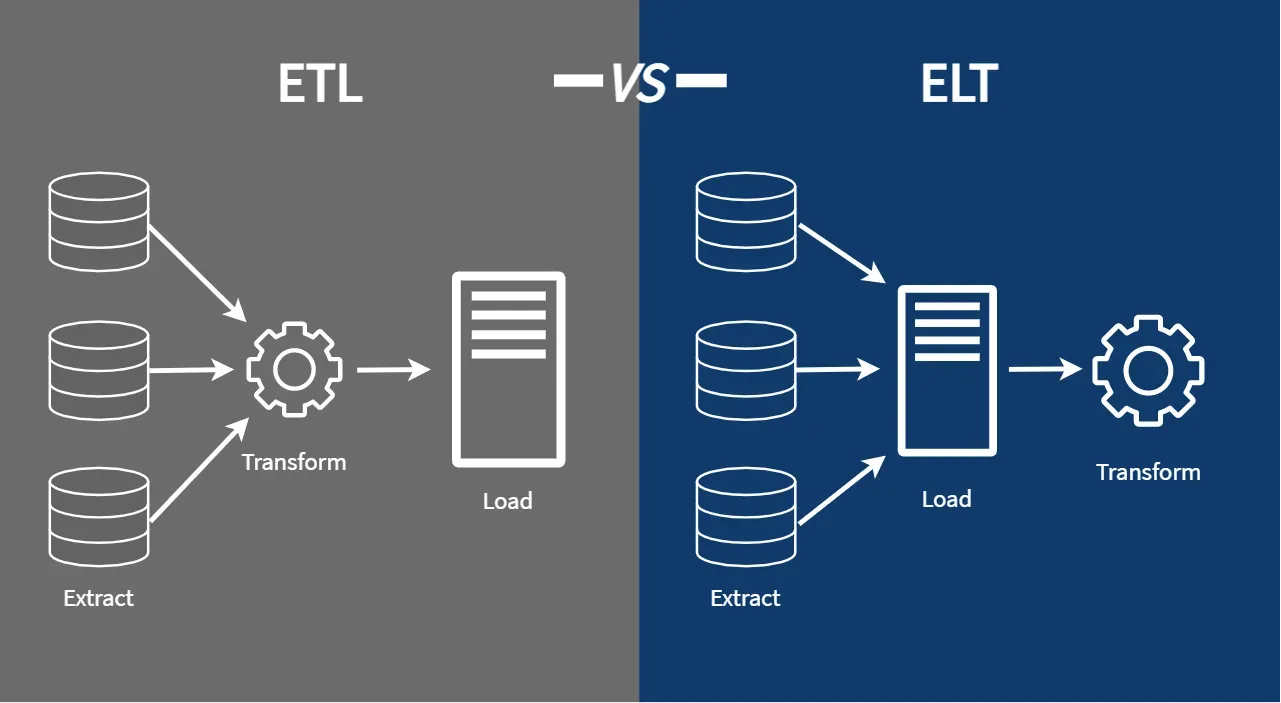

Learn the basics of data engineering with a practical ETL pipeline project. Explore how weather, flight, city data are extracted, transformed, loaded into a DB.

Learn the basics of data engineering with a practical ETL pipeline project. Explore how weather, flight, city data are extracted, transformed, loaded into a DB.

126. This New Data Type Is 8 Times Faster Than JSON: Improve Your Semi-Structured Data Analysis

Apache Doris provides a new data type: Variant, for semi-structured data analysis, which enables 8 times faster query performance than JSON with 1/3 storage.

Apache Doris provides a new data type: Variant, for semi-structured data analysis, which enables 8 times faster query performance than JSON with 1/3 storage.

127. Build Your Own Semantic Search Engine in Under 50 Lines—No Joke

Super performant Rust data stack to prepare realtime data for AI at massive scale - CocoIndex & Qdrant

Super performant Rust data stack to prepare realtime data for AI at massive scale - CocoIndex & Qdrant

128. This Real-Time Graph Framework Now Lets You Switch from Neo4j to Kuzu in One Line

CocoIndex now supports Kuzu as a native graph database target, enabling real-time LLM-powered knowledge graphs with plug-and-play configuration.

CocoIndex now supports Kuzu as a native graph database target, enabling real-time LLM-powered knowledge graphs with plug-and-play configuration.

129. AWS Regions and Availability Zones: A Useful Guide for Beginners

High Availability in the cloud: why us-east-1 alone is not a strategy (it's a gamble)

High Availability in the cloud: why us-east-1 alone is not a strategy (it's a gamble)

130. 16 Guides to Get You Started with Apache Iceberg

These guides are designed to provide you with practical experience in working with Apache Iceberg.

These guides are designed to provide you with practical experience in working with Apache Iceberg.

131. 5 Skills Every Successful ML Engineer Should Have

Uncover the five essential skills every successful machine learning engineer should have. Boost your ML engineering career with these invaluable insights.

Uncover the five essential skills every successful machine learning engineer should have. Boost your ML engineering career with these invaluable insights.

132. A 5-min Intro to Redpanda

A 5-minute introduction to Redpanda. An API-compatible, simple, high-performance, and cost-effective drop-in replacement for Apache Kafka.

A 5-minute introduction to Redpanda. An API-compatible, simple, high-performance, and cost-effective drop-in replacement for Apache Kafka.

133. Data Observability that Fits Any Data Team’s Structure

Data teams come in all different shapes and sizes. How do you build data observability into your pipeline in a way that suits your team structure? Read on.

Data teams come in all different shapes and sizes. How do you build data observability into your pipeline in a way that suits your team structure? Read on.

134. Data Observability: The First Step Towards Being Data-Driven

In a nutshell, data reliability is a BIG challenge and there is a need for a solution that is easy to use, understand, and deploy, and also not hea

In a nutshell, data reliability is a BIG challenge and there is a need for a solution that is easy to use, understand, and deploy, and also not hea

135. Rust DataFrame Alternatives to Polars: Meet Elusion v4.0.0

Elusion is a new contender that takes a fundamentally different approach to data engineering and analysis.

Elusion is a new contender that takes a fundamentally different approach to data engineering and analysis.

136. Streaming Wars: Why Apache Flink Could Outshine Spark

Comparing Apache Flink & Apache Spark in stream data processing. Exploring architectural nuances, applications, and key distinctions between the platforms.

Comparing Apache Flink & Apache Spark in stream data processing. Exploring architectural nuances, applications, and key distinctions between the platforms.

137. How to Improve Query Speed to Make the Most out of Your Data

In this article, I will talk about how I improved overall data processing efficiency by optimizing the choice and usage of data warehouses.

In this article, I will talk about how I improved overall data processing efficiency by optimizing the choice and usage of data warehouses.

138. Idempotency: The Secret to Production-Grade Data Pipelines

Stop duplicate records. Learn to build idempotent data pipelines in Databricks and Snowflake using partitioning, hashing, and atomic transactions.

Stop duplicate records. Learn to build idempotent data pipelines in Databricks and Snowflake using partitioning, hashing, and atomic transactions.

139. Shift-Left Data Platforms in Early-Stage Startups: Strategies for Data-Driven Success

Left-Shift Data Platform: How to overcome early stage startup challenges to be Data-Driven

Left-Shift Data Platform: How to overcome early stage startup challenges to be Data-Driven

140. Why Data Quality is Key to Successful ML Ops

In this first post in our 2-part ML Ops series, we are going to look at ML Ops and highlight how and why data quality is key to ML Ops workflows.

In this first post in our 2-part ML Ops series, we are going to look at ML Ops and highlight how and why data quality is key to ML Ops workflows.

141. Web3 Data Engineering Crash Course

How advances in cryptography and decentralization are reshaping conventional data architectures.

How advances in cryptography and decentralization are reshaping conventional data architectures.

142. Efficient Model Training in the Cloud with Kubernetes, TensorFlow, and Alluxio Open Source

This article presents the collaboration of Alibaba, Alluxio, and Nanjing University in tackling the problem of Deep Learning model training in the cloud. Various performance bottlenecks are analyzed with detailed optimizations of each component in the architecture. This content was previously published on Alluxio's Engineering Blog, featuring Alibaba Cloud Container Service Team's case study (White Paper here). Our goal was to reduce the cost and complexity of data access for Deep Learning training in a hybrid environment, which resulted in over 40% reduction in training time and cost.

This article presents the collaboration of Alibaba, Alluxio, and Nanjing University in tackling the problem of Deep Learning model training in the cloud. Various performance bottlenecks are analyzed with detailed optimizations of each component in the architecture. This content was previously published on Alluxio's Engineering Blog, featuring Alibaba Cloud Container Service Team's case study (White Paper here). Our goal was to reduce the cost and complexity of data access for Deep Learning training in a hybrid environment, which resulted in over 40% reduction in training time and cost.

143. The AI Agent Reality Check: What Actually Works in Production (And What Doesn't)

Your model works in Jupyter but fails at 3 AM. Why data quality and observability are the silent killers of 85% of AI projects.

Your model works in Jupyter but fails at 3 AM. Why data quality and observability are the silent killers of 85% of AI projects.

144. Event-Driven Change Data Capture: Introduction, Use Cases, and Tools

How to detect, capture, and propagate changes in source databases to target systems in a real-time, event-driven manner with Change Data Capture (CDC).

How to detect, capture, and propagate changes in source databases to target systems in a real-time, event-driven manner with Change Data Capture (CDC).

145. Compression in Big Data: Types and Techniques

This article will discuss compression in the Big Data context, covering the types and methods of compression

This article will discuss compression in the Big Data context, covering the types and methods of compression

146. Navigating Apache Iceberg: A Deep Dive into Catalogs & Their Role in Data Lakehouse Architectures

Dive into Apache Iceberg catalogs for organizing data lakes like a pro, tackling challenges, and picking the right fit!

Dive into Apache Iceberg catalogs for organizing data lakes like a pro, tackling challenges, and picking the right fit!

147. The Black Friday Query That Invented Data Engineering

Learn how one badly‑timed analytics query can crash your production database, cost millions on Black Friday, and why data engineering exists to prevent it.

Learn how one badly‑timed analytics query can crash your production database, cost millions on Black Friday, and why data engineering exists to prevent it.

148. I Interviewed 6 People Who Use Our Data Platform. They All Described a Different System.

We built one data platform. Six users described six completely different systems. Here's what that gap costs, and why documentation won't fix it.

We built one data platform. Six users described six completely different systems. Here's what that gap costs, and why documentation won't fix it.

149. How to Connect to Oracle, MySql and PostgreSQL Databases Using Python

To connect to a database and query data, you need to begin by installing Pandas and Sqlalchemy.

To connect to a database and query data, you need to begin by installing Pandas and Sqlalchemy.

150. The Ultimate Directory of Apache Iceberg Resources

This article is a comprehensive directory of Apache Iceberg resources, including educational materials, tutorials, and hands-on exercises.

This article is a comprehensive directory of Apache Iceberg resources, including educational materials, tutorials, and hands-on exercises.

151. Strategy for Incorporating Data Engineering for Computer Vision in Autonomous Driving

Learn how data engineering supports autonomous driving perception through annotation workflows, dataset augmentation, synthetic data generation, and versioning.

Learn how data engineering supports autonomous driving perception through annotation workflows, dataset augmentation, synthetic data generation, and versioning.

[152. Towards Open Options Chains:

A Data Pipeline Solution - Part I](https://hackernoon.com/towards-open-options-chains-a-data-pipeline-solution-for-options-data-part-i)

In "Towards Open Options Chains", Chris Chow presents his solution for collecting options data: a data pipeline with Airflow, PostgreSQL, and Docker.

In "Towards Open Options Chains", Chris Chow presents his solution for collecting options data: a data pipeline with Airflow, PostgreSQL, and Docker.

153. HarperDB is More Than Just a Database: Here's Why

HarperDB is more than just a database, and for certain users or projects, HarperDB is not serving as a database at all. How can this be possible?

HarperDB is more than just a database, and for certain users or projects, HarperDB is not serving as a database at all. How can this be possible?

154. AI Just Took Over Ad Targeting—And It’s Smarter, Faster, and Less Creepy Than Ever

Next-gen AI ad platforms use vector databases, indexing, and privacy-aware AI for real-time optimization, boosting ad spend efficiency while staying compliant.

Next-gen AI ad platforms use vector databases, indexing, and privacy-aware AI for real-time optimization, boosting ad spend efficiency while staying compliant.

155. Modern Data Engineering with Apache Spark: A Hands-On Guide to Slowly Changing Dimensions (SCD)

Learn how Apache Spark and Databricks implement Slowly Changing Dimensions (Types 0–6) to preserve history, scale analytics, and ensure accurate data modeling.

Learn how Apache Spark and Databricks implement Slowly Changing Dimensions (Types 0–6) to preserve history, scale analytics, and ensure accurate data modeling.

156. Bigger Models Won’t Fix Terminal Agents

<h2>The gap between talking and doing</h2> <p>Large language models excel at discussing programming concepts, explaining terminal commands, and reasoning about file systems. Yet when asked to actually accomplish a task in a terminal, they fail spectacularly. They suggest nonsensical commands, misinterpret output, and give up at the first error. This gap between linguistic capability and practical competence has persisted despite rapid advances in model scale and architecture.</p> <p>The industry's response has been predictable: build bigger models. Deploy models with more parameters, more training tokens, more compute. Yet recent work shows that even substantial models like Qwen3-32B achieve only 3.4% on Terminal-Bench 2.0, a standard benchmark for terminal task completion. This suggests the bottleneck isn't model capacity. It's something more fundamental: the training data itself.</p> <p>A new paper approaches terminal agent capabilities through a different lens. Rather than chasing model scale or architectural innovations, the authors conducted a systematic study of data engineering practices for terminal agents. The conclusion challenges conventional wisdom: a carefully constructed dataset combined with strategic filtering and curriculum learning can teach an 8B parameter model to match the performance of models four to ten times larger trained on standard data.</p> <h2>The unsexy truth about capability</h2> <p>The conventional story about AI progress emphasizes algorithmic breakthroughs and computational scale. What actually happens in practice is less glamorous. For embodied tasks, where models need to execute sequences of actions rather than simply generate text, <strong>what you train on matters far more than how much compute you throw at the problem</strong>.</p> <p>This paper introduces three key contributions that make this shift possible. First, Terminal-Task-Gen, a lightweight synthetic task generation pipeline that supports both seed-based and skill-based task construction. Second, a comprehensive analysis of filtering strategies, curriculum learning approaches, and scaling behavior. Third, Terminal-Corpus, a large-scale open-source dataset of terminal interactions that demonstrates these principles work in practice.</p> <p>The results vindicate this approach. Nemotron-Terminal models, trained on Terminal-Corpus and initialized from Qwen base models, achieve substantial performance jumps: the 8B version improves from 2.5% to 13.0%, the 14B version from 4.0% to 20.2%, and the 32B version from 3.4% to 27.4%. These aren't incremental improvements. They represent fundamental shifts in efficiency.</p> <h2>Where does high-quality training data come from</h2> <p>Manually creating thousands of high-quality terminal interactions would be prohibitively expensive. A human expert writing terminal task trajectories might produce a few per day. Building a dataset with enough diversity to teach genuine capability would require months of expert time and substantial cost. So the paper takes a different approach: systematize the process of generating diverse, realistic terminal tasks.</p> <p>Terminal-Task-Gen operates in two phases. The first phase, Dataset Adaptation, takes existing benchmarks and task descriptions from sources like Terminal-Bench, then reformulates them as interactive terminal interactions. This provides a foundation but is limited in coverage. Few benchmarks exist for terminal tasks, and even those that do capture only a fraction of possible terminal operations.</p> <p>The second phase, Synthetic Task Generation, is where the real leverage appears. The pipeline defines a Skill Taxonomy, a structured breakdown of terminal operations and concepts. These skills range from basic navigation (moving between directories, listing files) to more complex operations (understanding command output, iterating based on errors, chaining operations together). By combining skills from this taxonomy in different ways, the system generates novel terminal tasks that teach these skills systematically.</p> <p><a href="https://arxiv.org/html/2602.21193/figs/data_pipeline.png"><img src="https://arxiv.org/html/2602.21193/figs/data_pipeline.png" alt="Overview of Terminal-Task-Gen combining Dataset Adaptation and Synthetic Task Generation. The pipeline takes benchmark data and a skill taxonomy, producing diverse terminal interaction trajectories." /></a><br/><em>Overview of Terminal-Task-Gen combining Dataset Adaptation and Synthetic Task Generation. The pipeline takes benchmark data and a skill taxonomy, producing diverse terminal interaction trajectories.</em></p> <p>The output is Terminal-Corpus, a dataset containing thousands of terminal interaction sequences. Unlike static benchmarks, these trajectories capture the dynamic nature of terminal interaction: the user issues a command, observes output, interprets that output, and adjusts their approach accordingly. This mimics how humans actually use terminals, which is critical because models trained on static problem-solution pairs often fail to handle unexpected outputs or errors.</p> <h2>Curating signal from noise</h2> <p>Not all synthetic data improves model performance. Some generated tasks might be trivially easy, offering no learning signal. Others might be internally inconsistent, teaching the model to hallucinate plausible-sounding but incorrect commands. Still others might be so convoluted that they confuse rather than clarify patterns.</p> <p>The paper systematically studies filtering strategies to distinguish high-signal examples from low-signal ones. The analysis reveals which filtering criteria actually correlate with downstream performance on Terminal-Bench 2.0. This matters because naive scaling, where you simply generate enormous amounts of data and train on all of it, typically underperforms careful curation.</p> <p>Some trajectories might be rejected because they contain errors in their reasoning or incorrect command sequences. Others might be excluded because they're too similar to existing examples, offering little diversity. The filtering process is not arbitrary; it's grounded in empirical analysis of what data actually improves model performance.</p> <p>This represents a fundamental insight about data engineering: curation is as important as generation. A smaller dataset of high-quality examples outperforms a larger dataset with noise. The specific filtering strategies used here would be context-dependent, but the principle is universal.</p> <h2>Structuring the learning process</h2> <p>Once you have filtered, high-quality data, the question of how to present it during training becomes crucial. Not all orderings are equally effective.</p> <p>Curriculum learning applies a simple principle: harder material is easier to learn when preceded by foundational material. A model learning terminal tasks benefits from first encountering simple interactions, then gradually progressing to more complex ones. This scaffolding makes learning more efficient than random sampling.</p> <p>For terminal tasks, natural curriculum structures emerge. Basic navigation (changing directories, listing files) can serve as a foundation. File operations (copying, moving, deleting) build on that foundation. Multi-step reasoning tasks that require chaining commands together come later. Understanding command output and error recovery grow more sophisticated across the curriculum.</p> <p>The paper studies how these curriculum principles apply to terminal agent training. Strategic ordering of examples during training improves both convergence speed and final performance compared to random shuffling. This is particularly important because terminal tasks have inherent sequential dependencies. You can't reasonably ask a model to debug a complex pipeline if it hasn't yet learned basic piping syntax.</p> <h2>Understanding scaling behavior</h2> <p>Data engineers face a practical reality: training compute is limited. Generating more data costs compute to train on. At some point, marginal improvements from additional data diminish, and that compute would be better spent elsewhere.</p> <p>The paper includes scaling experiments that reveal how performance improves as training data volume increases. These curves answer a crucial question: have we hit a plateau, or would additional data continue helping?</p> <p><a href="https://arxiv.org/html/2602.21193/figs/scaling_results.png"><img src="https://arxiv.org/html/2602.21193/figs/scaling_results.png" alt="Impact of training data scale on model performance. Terminal-Bench 2.0 performance increases consistently with training data volume for both Qwen3-8B and Qwen3-14B." /></a><br/><em>Impact of training data scale on model performance. Terminal-Bench 2.0 performance increases consistently with training data volume for both Qwen3-8B and Qwen3-14B.</em></p> <p>The results show clear improvement patterns for both model sizes. Performance grows consistently with more data, though the growth rate eventually slows. The curves suggest that the models tested haven't yet hit a hard ceiling, but marginal returns are diminishing.</p> <p>Understanding the composition of these trajectories helps explain the scaling behavior. The token distribution shows what length trajectories look like, while the turn distribution reveals how many interaction steps typical tasks involve.</p> <p><a href="https://arxiv.org/html/2602.21193/figs/token_stats.png"><img src="https://arxiv.org/html/2602.21193/figs/token_stats.png" alt="Distribution of tokens in generated trajectories. This shows the length characteristics of synthetic terminal tasks. Distribution of turns in generated trajectories. This reveals how many interaction steps are typical." /></a><br/><em>Distribution of tokens in generated trajectories. This shows the length characteristics of synthetic terminal tasks.</em></a></li> <li><a href="https://arxiv.org/html/2602.21193/figs/turn_stats.png"><em>Distribution of turns in generated trajectories. This reveals how many interaction steps are typical.</em></p> <p>These statistics matter because they determine training requirements. If typical trajectories require thousands of tokens, then a dataset of several million trajectories becomes gigabytes of data. Understanding these distributions helps practitioners plan data generation, training infrastructure, and budget allocation.</p> <h2>The proof of concept</h2> <p>All of this methodology yields concrete results. An 8B model trained on Terminal-Corpus reaches 13.0% accuracy on Terminal-Bench 2.0, jumping from a baseline of 2.5%. The 14B model reaches 20.2% (from 4.0%), and the 32B model reaches 27.4% (from 3.4%). Scaling the baseline models without better data produces marginal improvements. Scaling the data engineering produces orders of magnitude improvement.</p> <p>Most strikingly, the 8B model trained on Terminal-Corpus now matches or exceeds the performance of much larger models trained on standard data. This comparison shifts the entire conversation around terminal agents. You don't need a 70B parameter model to build a capable agent. You need thoughtful data engineering.</p> <h2>Data engineering as a fundamental lever</h2> <p>This work reveals something important about AI capabilities that the industry often overlooks. Sometimes the bottleneck isn't compute, it isn't model architecture, and it isn't algorithmic innovation. It's training data engineering.</p> <p>For tasks where models need to execute, perceive feedback, and adapt, the quality and structure of training data becomes paramount. A model trained on synthetic trajectories that systematically cover the skill space, filtered for signal, and presented in a curriculum that respects task dependencies outperforms larger models trained haphazardly.</p> <p>This has practical implications. Unlike model architecture research or compute scaling, data engineering is accessible. It doesn't require the largest clusters or the most specialized hardware. It requires systematic thinking about what signals teach capability, how to generate diverse examples, what examples to exclude, and how to present examples during training.</p> <p>The open-sourcing of Nemotron-Terminal models and Terminal-Corpus accelerates this direction. Future work can build on this foundation, improving the pipeline further. The bottleneck moves from "how do we build capable terminal agents" to "how do we engineer training data even more effectively."</p> <p>The broader lesson applies beyond terminal agents. Any task where models must execute actions, perceive outcomes, and adjust strategy benefits from this kind of data engineering thinking. As AI systems move from pure language understanding toward embodied AI, systematic approaches to training data quality become not an optimization, but a fundamental requirement.</p>

<hr/><p><strong>Original post:</strong> <a href="https://www.aimodels.fyi/papers/arxiv/data-engineering-scaling-llm-terminal-capabilities?utm_source=hackernoon&utm_medium=referral">Read on AIModels.fyi</a></p>

157. I Built the Same Data Pipeline 4 Ways. Here's What I'd Never Do Again.

I built one pipeline four times. The winner wasn’t the fastest tool; it was the one that failed loudly, stayed debuggable, and didn’t punish ops.

I built one pipeline four times. The winner wasn’t the fastest tool; it was the one that failed loudly, stayed debuggable, and didn’t punish ops.

158. Intro to Data Vault Modeling: Agility, Scalability, and Practical Applications Explained

The practical use of Data Vault models, as illustrated through querying customer orders and analyzing product sales, demonstrates the methodology's flexibility,

The practical use of Data Vault models, as illustrated through querying customer orders and analyzing product sales, demonstrates the methodology's flexibility,

159. The Importance of Data in Machine Learning: Fueling the AI Revolution

In this blog, we’ll delve into the crucial role that data plays in machine learning and why it’s often said that in the world of AI, “data is king.”

In this blog, we’ll delve into the crucial role that data plays in machine learning and why it’s often said that in the world of AI, “data is king.”

160. The Observability Debt Hypothesis: Why Perfect Dashboards Still Mask Failing Systems

Perfect dashboards don’t mean perfect systems. Explore how observability debt hides behind metrics, distorts truth, and weakens engineering judgment in 2025.

Perfect dashboards don’t mean perfect systems. Explore how observability debt hides behind metrics, distorts truth, and weakens engineering judgment in 2025.

161. What is Data Profiling? Concepts and Examples

Learn the concepts of data profiling and how it can speed up the debugging the quality related incidents across the data stack.

Learn the concepts of data profiling and how it can speed up the debugging the quality related incidents across the data stack.

162. The Ghost in the Warehouse: How to Solve Schema Drift in Analytical AI Agents

Solve schema drift in analytical AI agents using sqldrift. Real-world validation on 255 BIRD queries achieves 94.1% success with automated LLM correction.

Solve schema drift in analytical AI agents using sqldrift. Real-world validation on 255 BIRD queries achieves 94.1% success with automated LLM correction.

163. 5 Ways to Become a Leader That Data Engineers Will Love

How to become a better data leader that the data engineers love?

How to become a better data leader that the data engineers love?

164. Modernization Is Not Migration: Here's Why

How operational engineering—not infrastructure—determines whether cloud modernization delivers reliability in regulated financial data platforms.

How operational engineering—not infrastructure—determines whether cloud modernization delivers reliability in regulated financial data platforms.

165. Understanding Data Lineage: Key Strategies for Ensuring Data Quality and Compliance

Data lineage refers to the process of tracking data from its origin to its destination, including all transformations and movements in between. It is crucial fo

Data lineage refers to the process of tracking data from its origin to its destination, including all transformations and movements in between. It is crucial fo

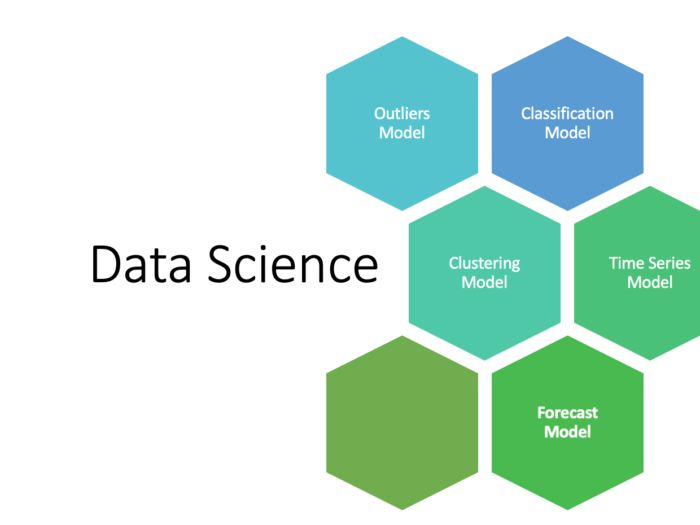

166. A Brief Introduction to 5 Predictive Models in Data Science

Predictive Modeling in Data Science is more like the answer to the question “What is going to happen in the future, based on known past behaviors?”

Predictive Modeling in Data Science is more like the answer to the question “What is going to happen in the future, based on known past behaviors?”

167. Efficient Enterprise Data Solutions With Stream Processing

Enterprise data solutions—handling myriad data sources and massive data volume—are expensive. Stream processing reduces costs and brings real-time scalability.

Enterprise data solutions—handling myriad data sources and massive data volume—are expensive. Stream processing reduces costs and brings real-time scalability.

168. Are NoSQL databases relevant for data engineering?

In this article, we’ll investigate use cases for which data engineers may need to interact with NoSQL database, as well as the pros and cons.

In this article, we’ll investigate use cases for which data engineers may need to interact with NoSQL database, as well as the pros and cons.

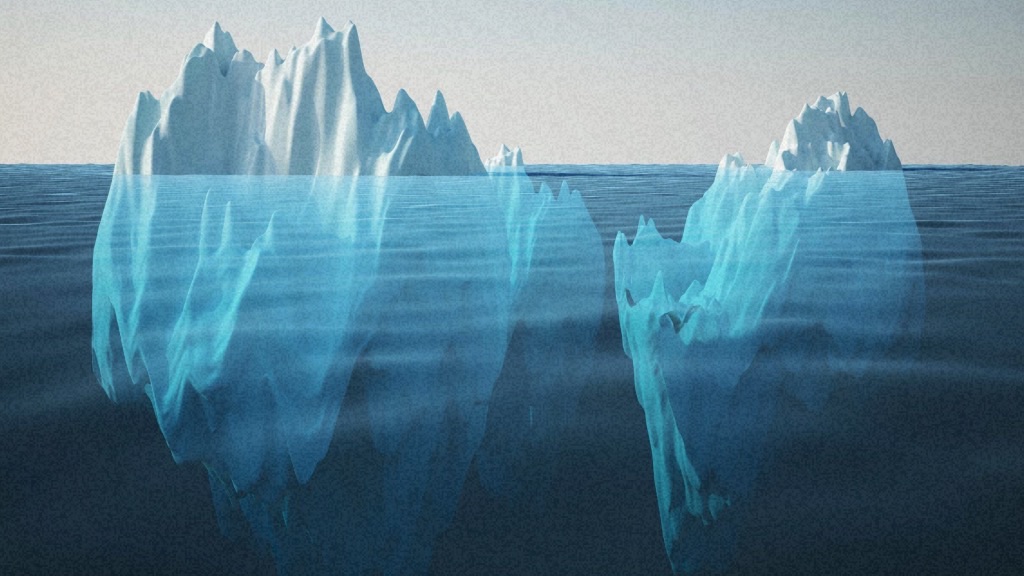

169. The Silent Killer of Data Lakes: Solving the Small File Problem

Stop the "Small File Syndrome" in your Data Lake. Learn how to implement Compaction, Z-Ordering, and automated maintenance in Databricks and Snowflake.

Stop the "Small File Syndrome" in your Data Lake. Learn how to implement Compaction, Z-Ordering, and automated maintenance in Databricks and Snowflake.

170. Architecting for Speed: Advanced SQL Performance Tuning in the Lakehouse

Stop slow queries and high cloud costs. Learn advanced SQL tuning for Snowflake and Databricks, including Pruning, Join Salting, and Search Optimization.

Stop slow queries and high cloud costs. Learn advanced SQL tuning for Snowflake and Databricks, including Pruning, Join Salting, and Search Optimization.

171. PBIX Is Not Going Away - But PowerBI Will Never Work the Same Again

PowerBI is shifting from "PBIX" to "PBIR". This article explains what actually changes, who benefits and how teams should prepare for the future without panic.

PowerBI is shifting from "PBIX" to "PBIR". This article explains what actually changes, who benefits and how teams should prepare for the future without panic.

172. Unlocking the Power of Advanced Data Types in Big Data

Features of the specialized data types near integers and strings, which we use in every-day life, will allow us to store and operate complex data structures.

Features of the specialized data types near integers and strings, which we use in every-day life, will allow us to store and operate complex data structures.

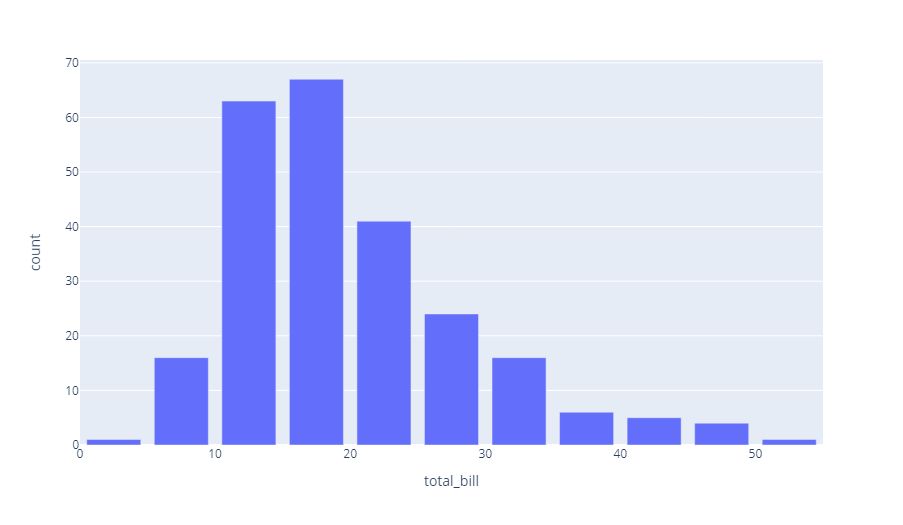

173. Data Transformation and Discretization: A Comprehensive Guide

Learn about data transformation and discretization in data preprocessing. Explore normalization techniques, binning, and histograms.

Learn about data transformation and discretization in data preprocessing. Explore normalization techniques, binning, and histograms.

174. If Data Is the New Oil, We Already Built a Planet-Sized Spill

This isn’t about saving bits—it’s about shaping history into a governed, trustworthy, searchable corpus for humans and AI.

This isn’t about saving bits—it’s about shaping history into a governed, trustworthy, searchable corpus for humans and AI.

175. Synchronizing Data from MySQL to PostgreSQL Using Apache SeaTunnel

A step-by-step walkthrough of building a real-time data pipeline to merge and synchronize MySQL data sources using Apache SeaTunnel.

A step-by-step walkthrough of building a real-time data pipeline to merge and synchronize MySQL data sources using Apache SeaTunnel.

176. What Is A Data Mesh — And Is It Right For Me?

Ask anyone in the data industry what’s hot and chances are “data mesh” will rise to the top of the list. But what is a data mesh and is it right for you?

Ask anyone in the data industry what’s hot and chances are “data mesh” will rise to the top of the list. But what is a data mesh and is it right for you?

177. Data Pipeline Testing: The 3 Levels Most Teams Miss

Dashboards don’t represent actual state, models degrade unnoticed, and incidents show up as “weird numbers” instead of errors.

Dashboards don’t represent actual state, models degrade unnoticed, and incidents show up as “weird numbers” instead of errors.

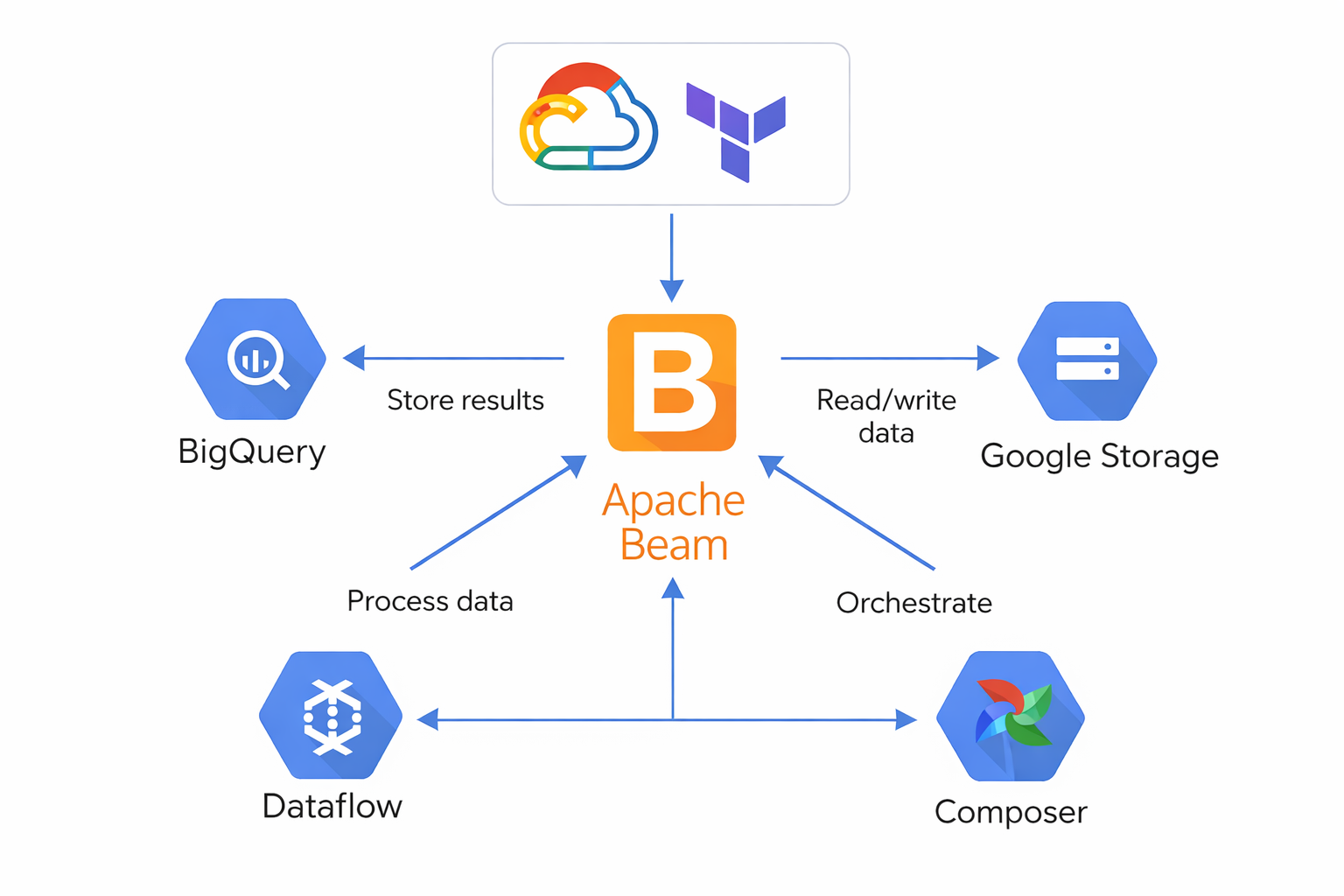

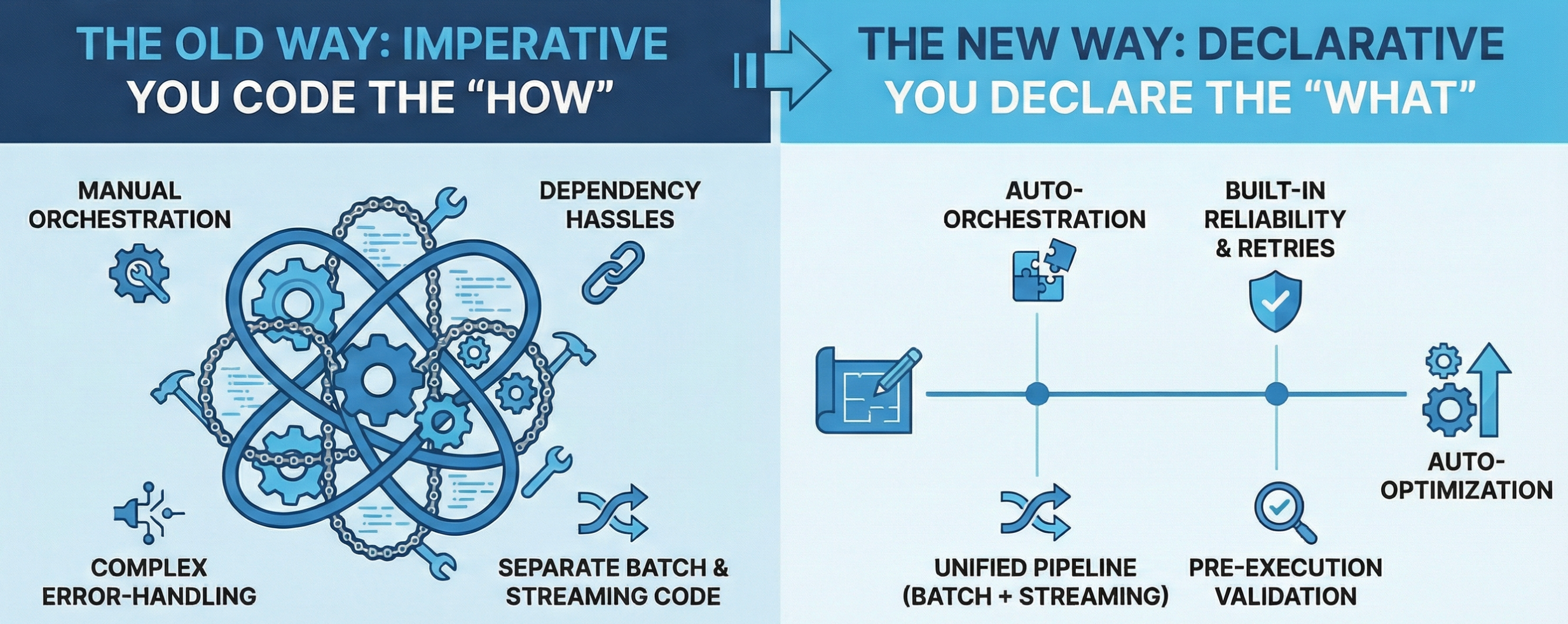

178. Apache Beam on GCP: How Distributed Data Pipelines Actually Work (for REST API Engineers)

Apache Beam is a declarative programming model for large-scale data processing, not a service or framework like a REST API.

Apache Beam is a declarative programming model for large-scale data processing, not a service or framework like a REST API.

179. Database Tips: 7 Reasons Why Data Lakes Could Solve Your Problems

Data lakes are an essential component in building any future-proof data platform. In this article, we round up 7 reasons why you need a data lake.

Data lakes are an essential component in building any future-proof data platform. In this article, we round up 7 reasons why you need a data lake.

180. From "Decentralized" to "Unified": SUPCON Uses SeaTunnel to Build an Efficient Data Collection Frame

SUPCON dumped siloed data tools for Apache SeaTunnel—now core sync tasks run 0-failure!

SUPCON dumped siloed data tools for Apache SeaTunnel—now core sync tasks run 0-failure!

181. AI Is About to Break Your BI Architecture (If You Don't Redesign It First)

AI is about to expose weak BI architecture. "DirectQuery" collapses under machine curiosity. Decision-aligned design is the only way forward.

AI is about to expose weak BI architecture. "DirectQuery" collapses under machine curiosity. Decision-aligned design is the only way forward.

182. Is Your Apache Ni-Fi Ready for Production?

Apache NiFi cluster can process up to 50 GB of data per day. Apache NiFi can provide a balance between performance and cost-effectiveness.

Apache NiFi cluster can process up to 50 GB of data per day. Apache NiFi can provide a balance between performance and cost-effectiveness.

183. The Hidden Tax of Cloud BI: Zombie Data Movement Between Platforms

Hidden cloud BI cost: data egress between platforms. Learn how “zombie data movement” quietly inflates analytics bills in modern BI architectures.

Hidden cloud BI cost: data egress between platforms. Learn how “zombie data movement” quietly inflates analytics bills in modern BI architectures.

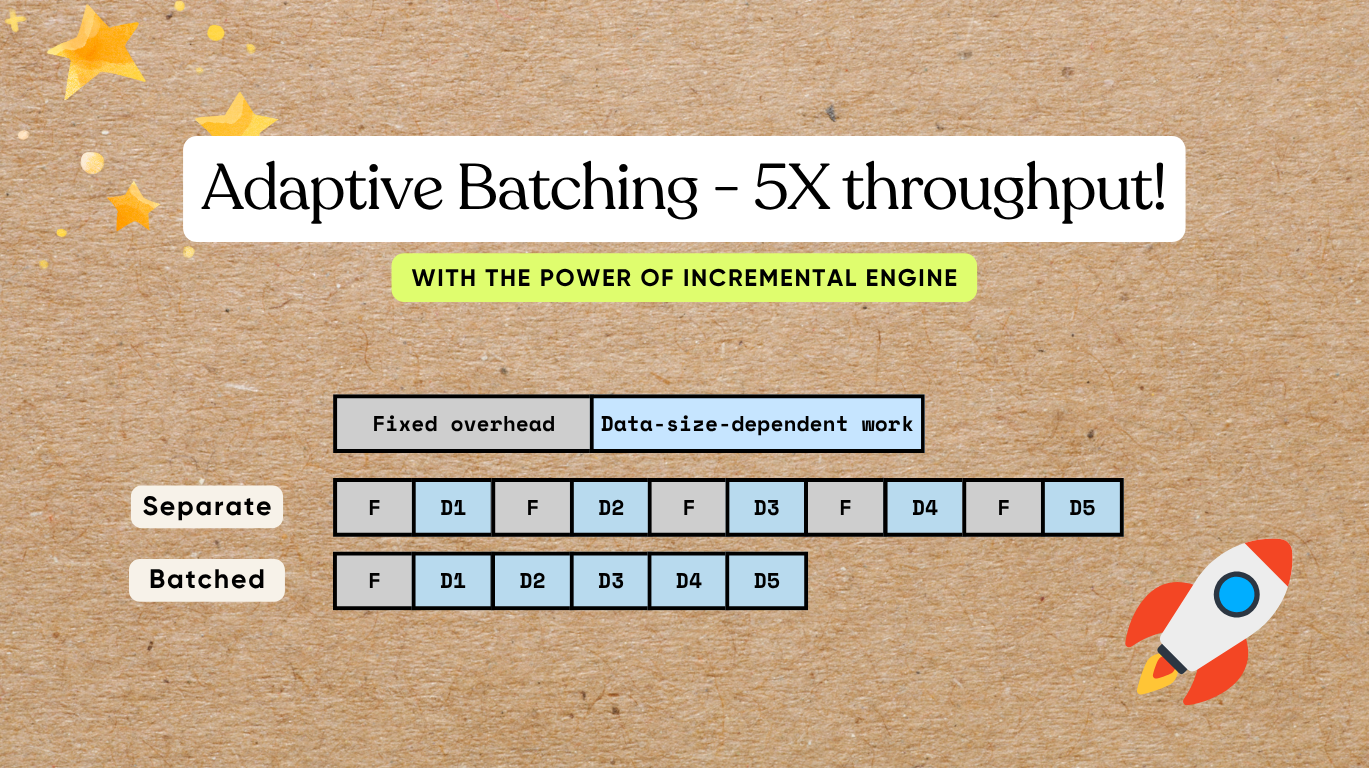

184. Make Your Data Pipelines 5X Faster with Adaptive Batching

Ultra charge AI native data pipelines with X times of performance boost by batching

Ultra charge AI native data pipelines with X times of performance boost by batching

185. The Data Security Duo: Data Encryption and Vulnerability Scans

How application and product engineering teams can implement data encryption to effectively address data vulnerability issues.

How application and product engineering teams can implement data encryption to effectively address data vulnerability issues.

186. Lessons From The Night I Met Dbt on Databricks

The Medallion Architecture is a framework that turns messy e-commerce data into business-ready insights.

The Medallion Architecture is a framework that turns messy e-commerce data into business-ready insights.

187. A Developer’s Guide to DolphinScheduler 3.1.9 Worker Startup Process

Dive into the detailed features and architecture of Apache DolphinScheduler 3.1.9!

Dive into the detailed features and architecture of Apache DolphinScheduler 3.1.9!

188. Minimum Incident Lineage (MIL): A Run-Level Evidence Standard for Reproducible Data Incidents

Traditional data lineage shows dependencies—not proof. Learn how Minimum Incident Lineage helps teams reproduce, audit, and resolve data incidents faster.

Traditional data lineage shows dependencies—not proof. Learn how Minimum Incident Lineage helps teams reproduce, audit, and resolve data incidents faster.

189. 96 Stories To Learn About Data Engineering

Learn everything you need to know about Data Engineering via these 96 free HackerNoon stories.

Learn everything you need to know about Data Engineering via these 96 free HackerNoon stories.

190. Solving Noom's Data Analyst Interview Questions