Let's learn about Prompt Engineering via these 236 free blog posts. They are ordered by HackerNoon reader engagement data. Visit the Learn Repo or LearnRepo.com to find the most read blog posts about any technology.

Prompt engineering is the art and science of crafting effective inputs (prompts) to guide AI models, especially large language models, to generate desired outputs. It matters for maximizing the utility and accuracy of AI systems, enabling nuanced control over their behavior and applications.

1. Prompt Engineering 101 - I: Unveiling Principles & Techniques of Effective Prompt Crafting

Learn how to effectively communicate with machines with this 101 post series on Prompt Engineering.

2. AI Brawl: the Generative Model Showdown

Four engines, one prompt, slightly suspiciously honest commentary.

3. Gptrim: Reduce Your GPT Prompt Size by 50% For Free!

Introducing gptrim, a free web app that will reduce the size of your prompts by 40%-60% while preserving most of the original information for GPT to process.

4. Prompt Engineering 101 - II: Mastering Prompt Crafting with Advanced Techniques

Learn how to effectively communicate with machines with this 101 post series on Prompt Engineering.

5. Harnessing AI: 28 Innovative Marketing Strategies.

Inspired by Aki Ito's new article CheatGPT & Hubspot's 2023 State of Marketing Report, author Darragh Grove-White shares 28 new ways to apply AI to marketing 🔎

6. Asimov Unknowingly Pioneered Modern Prompt Engineering

Isaac Asimov, a visionary in the realm of science fiction, unknowingly pioneered modern prompt engineering through his thought-provoking robot series.

7. Arize AI Leads the Way in AI Observability with Prompt Variable Monitoring

Arize AI premiered prompt variable monitoring and analysis onstage at Google Cloud Next '24 this week.

8. Unlocking the Secrets of ChatGPT: Tips and Tricks for Optimizing Your AI Prompts

As one of the most advanced AI models, ChatGPT offers the potential to transform the way we approach tasks in both professional and personal settings.

9. The Quest for Better Music Recommendations Through ChatGPT Prompt Engineering

Prompt Engineering leads to an Open Source Obsidian Plugin to help researchers, an imagination-powered music recommendation engine, and some solid playlists.

10. Level Up Your ChatGPT Skills by Unleashing The Full Potential of Your Prompts!!

Make your ChatGPT prompts 2X better!

11. For Best Results with LLMs, Use JSON Prompt Outputs

Learn how structured prompt outputs make LLM responses consistent, reliable, and production-ready—with less guesswork and more control.

12. An Intro to Prompting and Prompt Engineering

Prompting and prompt engineering are easily the most in demand skills of 2023.

13. Explaining Prompt Engineering

Explaining the elements that make prompt engineering work and its importance.

14. LLMs Don't Understand Negation

LLMs (like GPT) are really bad at following negative instructions. The post includes a demonstration, practice takeaways (prompt engineering), and some thought

15. Escape Prompt Hell With These 8 Must-have Open-source Tools

Discover 8 powerful tools transforming prompt engineering from trial-and-error into scalable systems—featuring visual workflows, auto-tuned prompts, and memory-

16. Help, My Prompt is Not Working!

Learn what to do when an AI prompt fails—explore step-by-step fixes from prompt tweaks to model changes and fine-tuning in this practical guide.

17. Why Prompt Engineering is the Key to Mastering AI

A blog about how prompts unlock the potential of AI - exploring the importance of prompt engineering, techniques to shape AI models

18. Prompting: The Unique Language of AI

An interview with Sander Schulhoff, creator of learnprompting.org, the largest prompting resource online.

19. Unlocking Endless Possibilities with GPT-4: My Journey from Study Plans to a Multitude of Apps

Explore the limitless potential of OpenAI's GPT-4 with Kartik Khosa, as he transforms personalized study plans into diverse AI applications.

20. 100 Days of AI Day 3: Leveraging AI for Prompt Engineering and Inference

On Day 3 of 100 Days of AI, we enhance products with inference, leveraging LLMs for insights in tech without data expertise.

21. Table-driven Prompt Design: How to Enhance Analysis and Decision Making in your Software Development

Here I’d like to focus on a specific kind of AI prompt: table-driven prompts. They can benefit the workflows and value streams in your software development

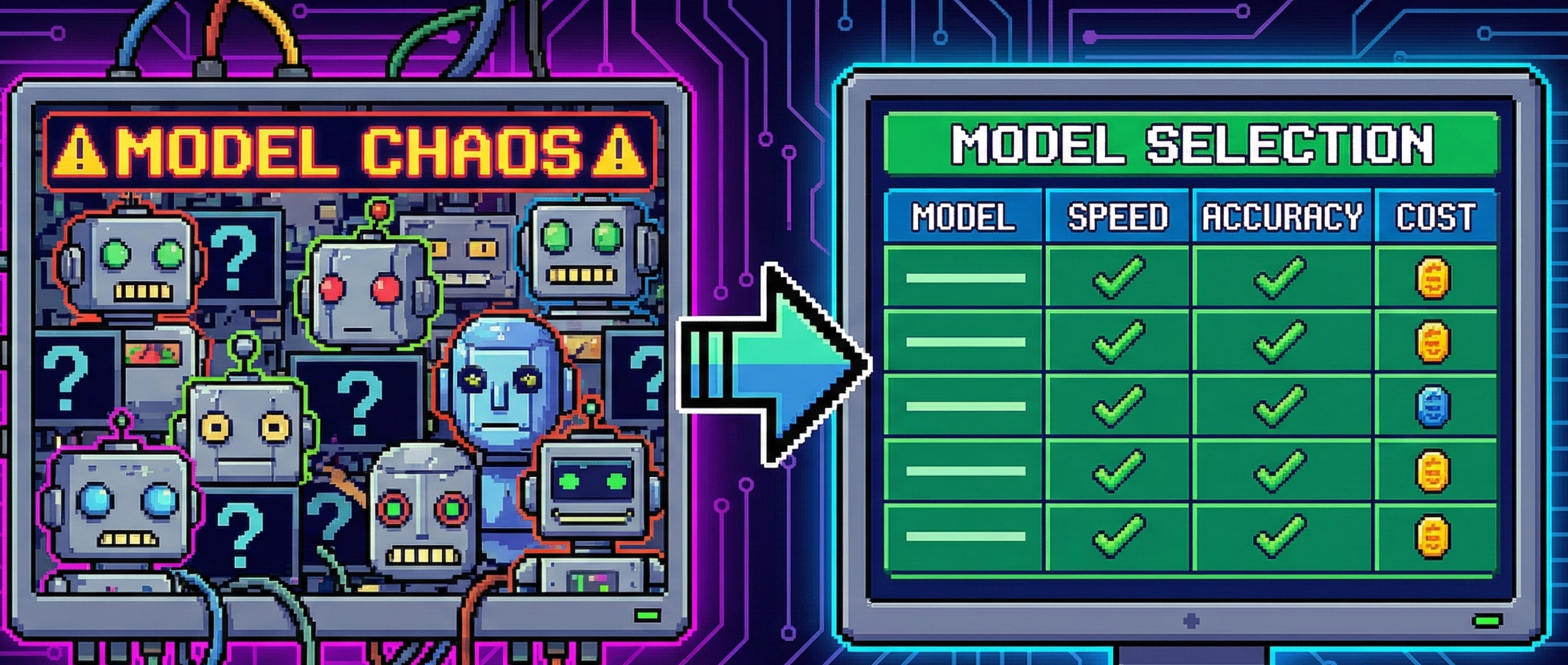

22. Choosing an LLM in 2026: The Practical Comparison Table (Specs, Cost, Latency, Compatibility)

Compare top LLMs by context, cost, latency and tool support—plus a simple decision checklist to match “model + prompt + scenario”.

23. The Art of Prompt Engineering: How AI Helps Me Do My Homework

How proper prompt engineering takes my interaction with AI chatbots to the next level.

24. Surpassing ATS and Optimizing Your Resume via ChatGPT

I demonstrate how we can make use of ATS and optimize every word of our resume through ChatGPT to increase chances of getting an interview

25. 10 Tips to Take Your ChatGPT Prompts to the Next Level

Maximize your ChatGPT experience with 10 expert tips for crafting precise prompts and queries, enhancing interaction quality.

26. This New Prompting Technique Makes AI Outputs Actually Usable

Structured meta-prompting is a technique that dynamically generates JSON schemas for solutions before performing tasks.

27. GPT-4, Llama-2, Claude: How Different Language Models React to Prompts

Exploring the unique behaviors of different Large Language Models (LLMs) and mastering advanced prompting techniques!

28. The Dark Side of AI: How Prompt Hacking Can Sabotage Your AI Systems

Protect your AI systems from LLM prompt hacking and safeguard your data. Learn the risks, impacts, and prevention strategies for this emerging threat.

29. Building a TikTok Hook Generator Prompt That Actually Works

A production-ready AI prompt that generates scroll-stopping TikTok hooks. Includes 10 proven frameworks, psychology breakdowns, and real examples. No fluff.

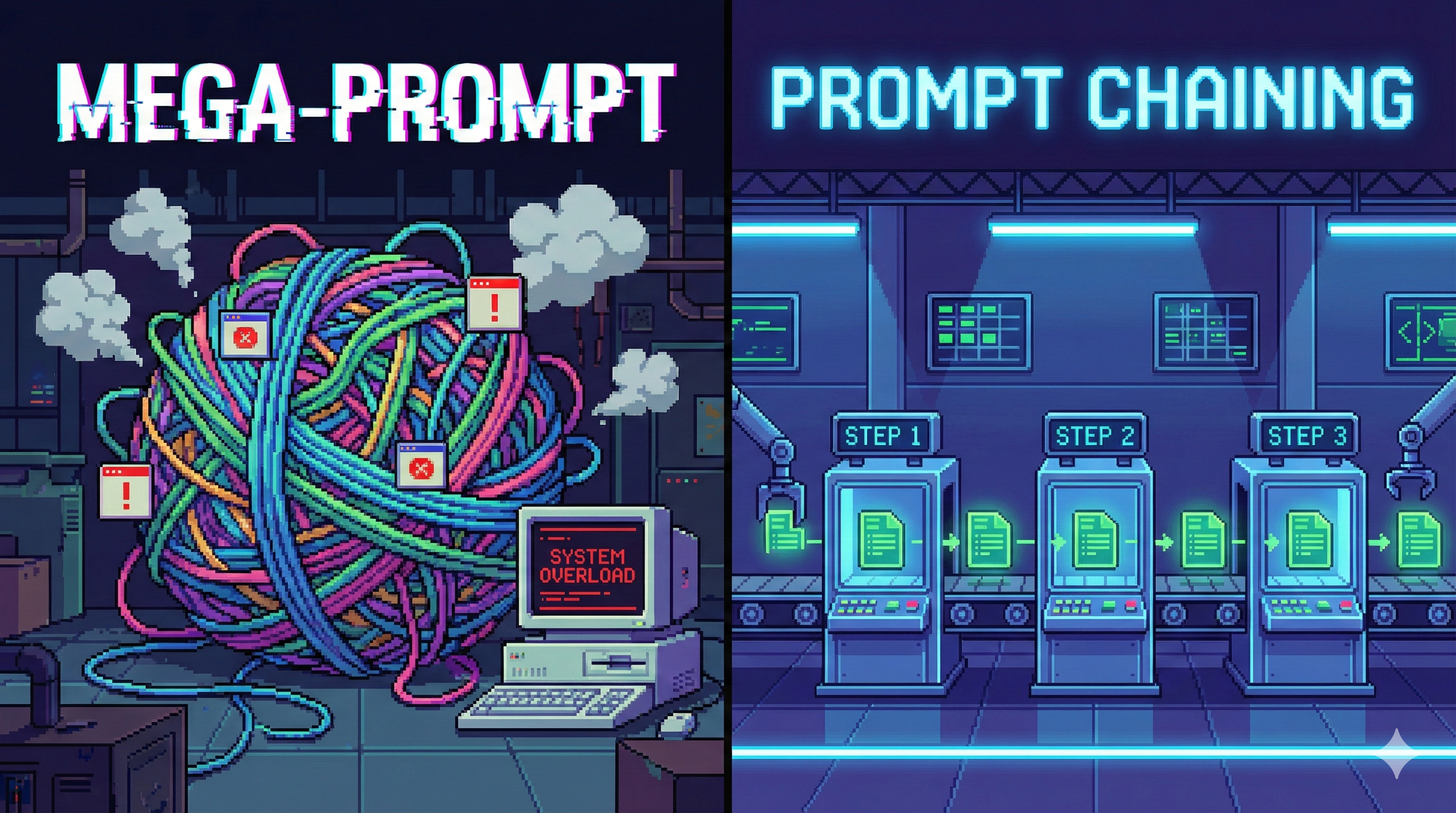

30. Prompt Chaining: Turn One Prompt Into a Reliable LLM Workflow

Prompt Chaining links prompts into workflows—linear, branching, looping—so LLM outputs are structured, debuggable, and production-ready.

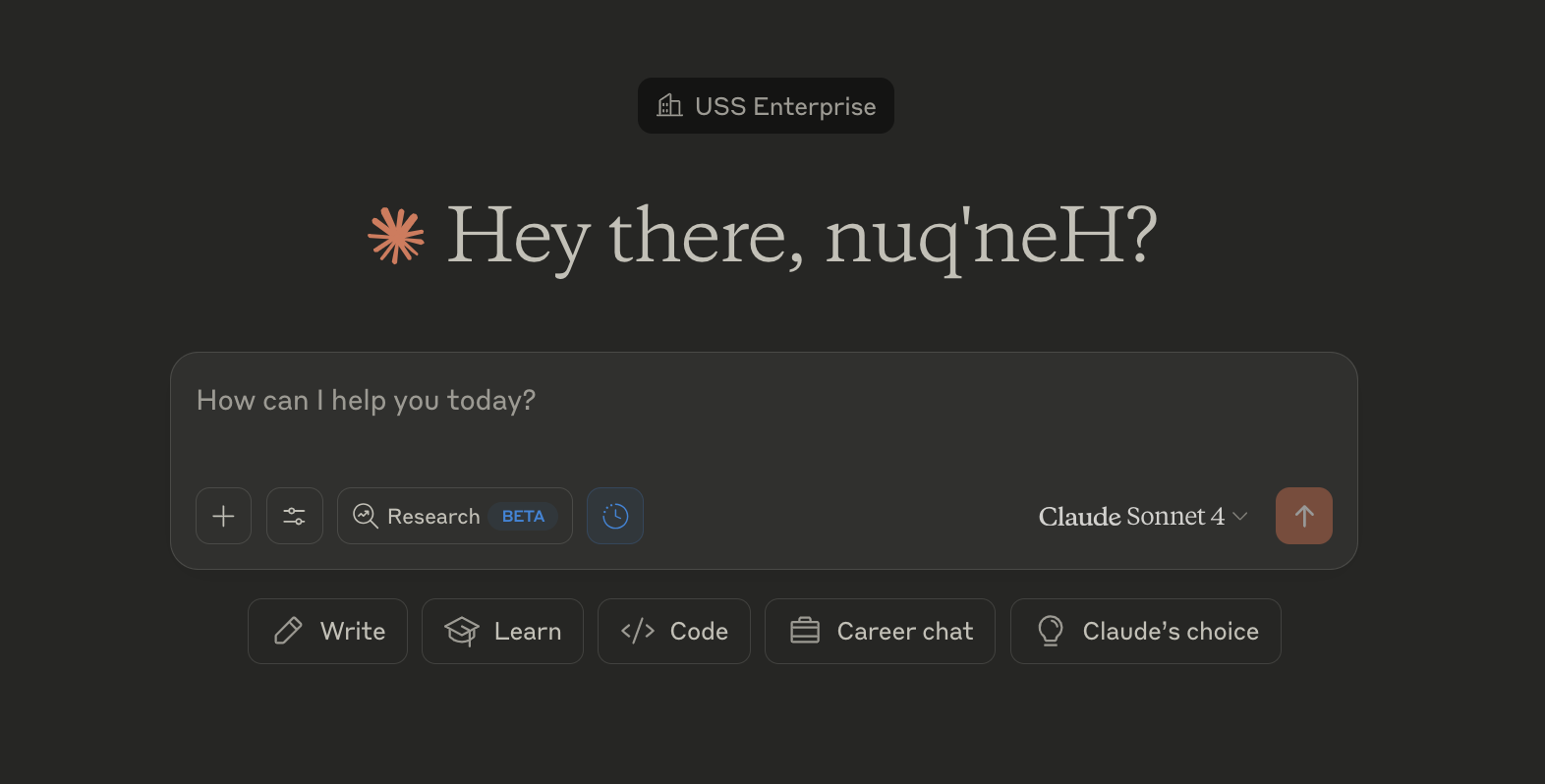

31. Claude's Choice: Read Claude's Default Text Prompts in Full For: Write, Learn, Code, and Career Chat

35 full-text examples of Claude system prompts: "Write", "Learn", "Code", "Career chat", and "Claude's Choice", shown as buttons below "How can I help you today?"

32. Your Ultimate Guide to Mastering AI Prompt Engineering With MobileGPT

Guide to Mastering AI Prompt Engineering. For beginners and advanced users, this guide simplifies the art of crafting effective prompts for Generative AI.

33. A Structured Approach to Midjourney Photography Prompts

This is an AI instruction prompt designed to help you create structured, professional-quality Midjourney prompts for photographic images.

34. Prompt Engineering: Understanding the Potential of Large Language Models

Whether you're a developer integrating AI into your software or a no-coder, marketer, or business analyst adopting AI, prompt engineering is a MUST-HAVE skill t

35. Prompt Reverse Engineering: Fix Your Prompts by Studying the Wrong Answers

Learn prompt reverse engineering: analyse wrong LLM outputs, identify missing constraints, patch prompts systematically, and iterate like a pro.

36. I Built an AI Prompt That Actually Writes YouTube Scripts Worth Watching

I built a structured prompt framework that transforms any AI into a YouTube script specialist.

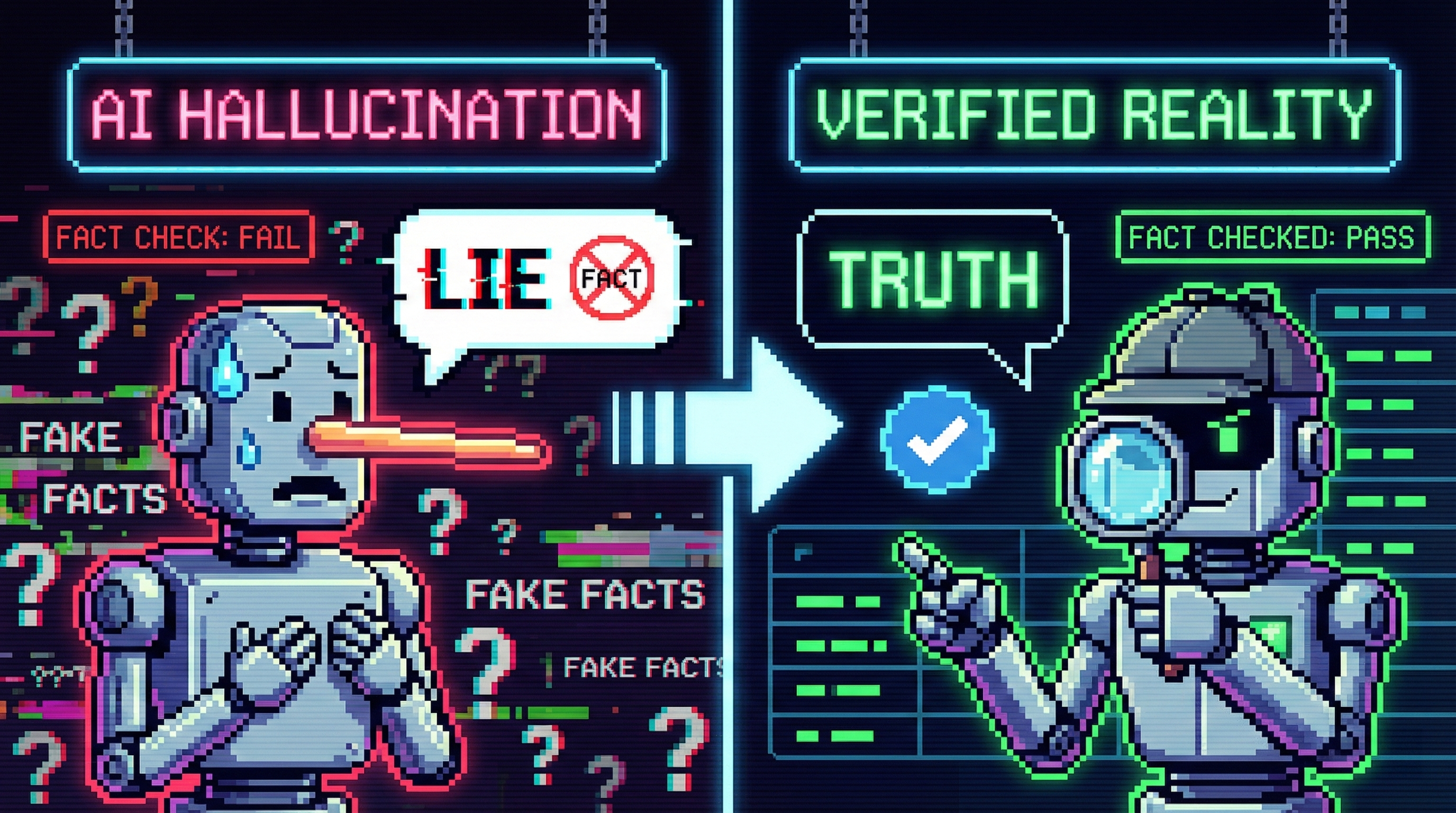

37. Do LLMs Really Lie? Why AI Sounds Convincing While Getting Facts Wrong

AI doesn’t lie — it optimizes for plausibility. Learn why hallucinations happen and how to design verification into your LLM workflows.

38. Prompt Injection Still Beats Production LLMs

Regex and classifiers caught known jailbreaks, but novel prompt injections slipped through. Here’s how a 3B safety judge helped.

39. Building Dynamic Websites With AI-Generated Content Automation

Come on a journey with me as I create a self-generating news app, powered by automated AI-generated content.

40. How to Prompt Engineer Phi-3-mini: A Practical Guide

In this article, we’ll walk through how to do it in Python, using the Phi-3-mini-4k-instruct model by Microsoft. We’ll use the Huggingface inference API

41. Manners Matter? - The Impact of Politeness on Human-LLM Interaction

Exploring whether politeness impacts the results of human-LLM interactions.

42. What the Heck Is GPTScript?

In this article, I’ll walk through some basic introductions and examples and give you some thoughts about where you could take it.

43. Stop the LLM From Rambling: Using Penalties to Control Repetition

A practical guide to penalty settings that reduce repetition and fluff in LLM outputs—without making the text weird.

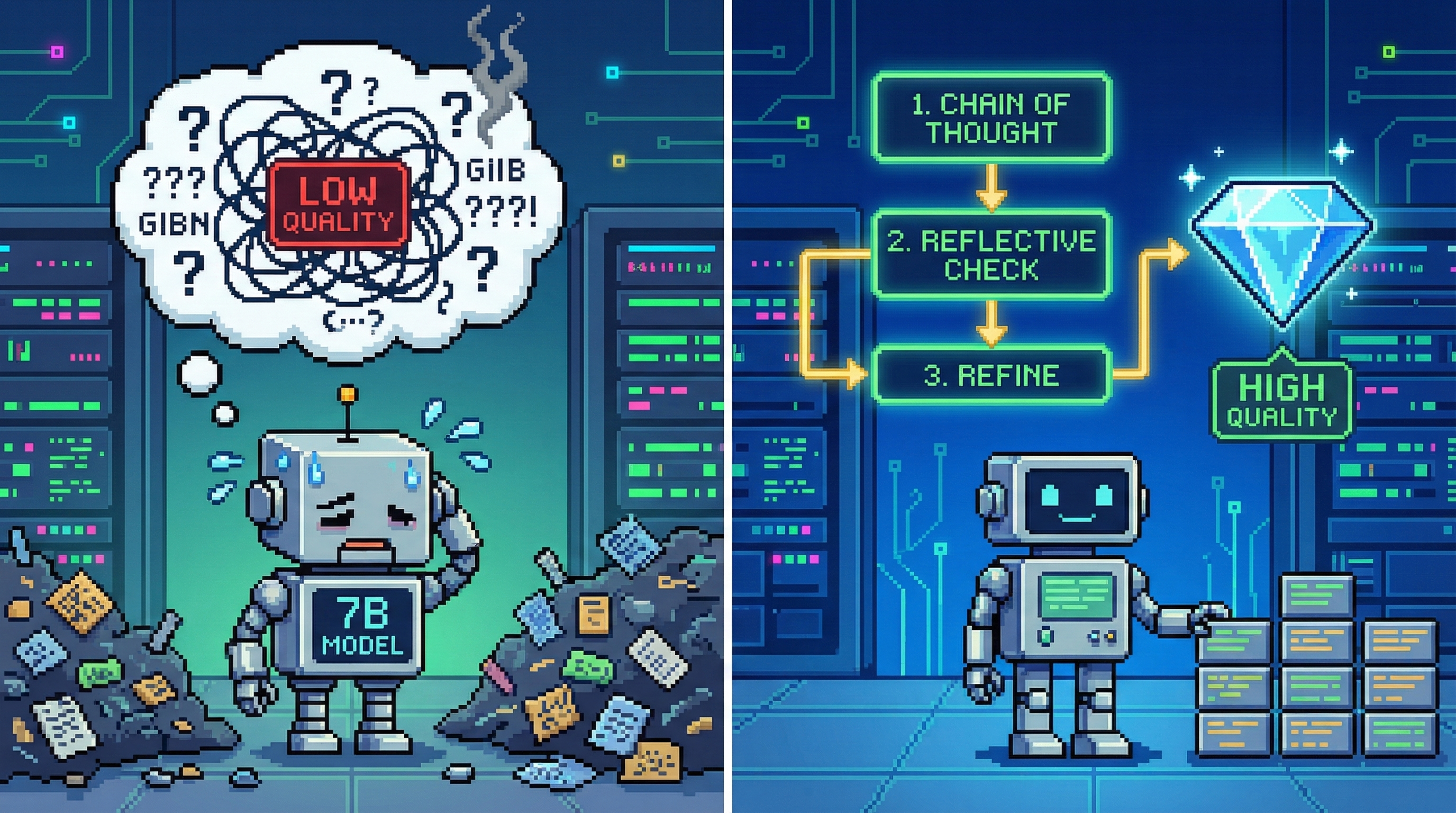

44. Getting High-Quality Output from 7B Models: A Production-Grade Prompting Playbook

A practical guide to making 7B models behave: constrain outputs, inject missing facts, lock formats, and repair loops.

45. I Built an AI Prompt That Turns Podcast Ideas into Professional Scripts—And It Actually Works

Stop struggling with podcast scripting. This comprehensive prompt transforms ChatGPT, Claude, or Gemini into a professional podcast scriptwriter.

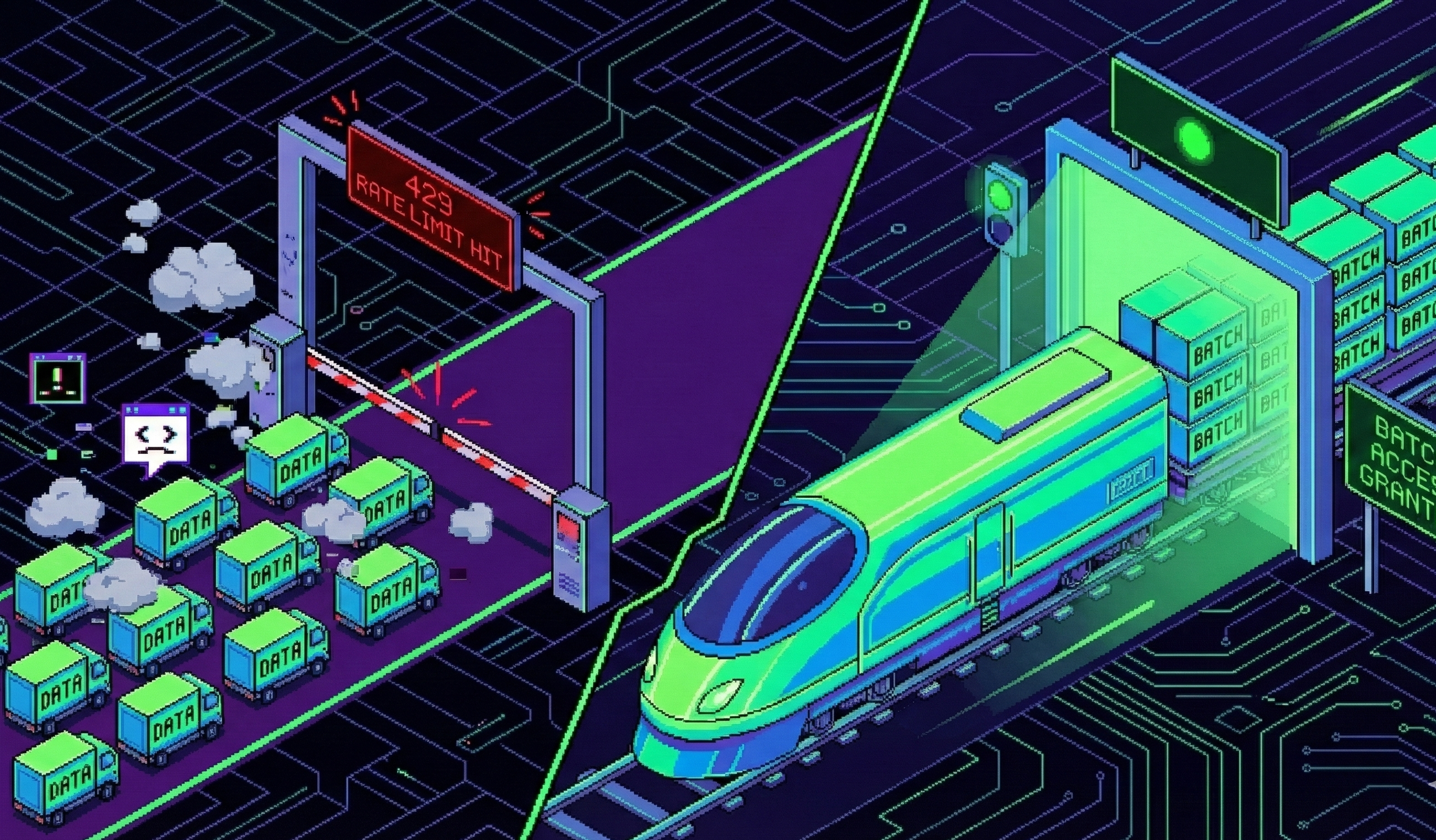

46. Prompt Rate Limits & Batching: How to Stop Your LLM API From Melting Down

LLM APIs have real speed limits. Learn how tokens, rate limits, and batching affect scale—and how to avoid costly 429 errors in production.

47. Learn How to Access and Use OpenAI's Free AI Prompt Generator

Learn how to improve your AI prompts effortlessly with a free tool from OpenAI.

48. How to Craft Inclusive AI Prompts That Mitigate Bias

Learn techniques for mitigating bias and promoting diversity in AI prompts.

49. Prompt Length vs. Context Window: The Real Limits of LLM Performance

How prompt length interacts with an LLM’s context window: why it matters, how it breaks, and how to design prompts that stay sharp and scalable.

50. MusicGen from Meta AI — Understanding Model Architecture, Vector Quantization and Model Conditioning

Wish to generate high quality, realistic, controllable music using AI? Meta's new MusicGen is the answer.

51. Prompt Engineers Can Make $335K a Year: What Are They and How to Become One

It’s hard to say what the job market will look like in the next several years, but it seems that prompt engineers will continue to be in high demand.

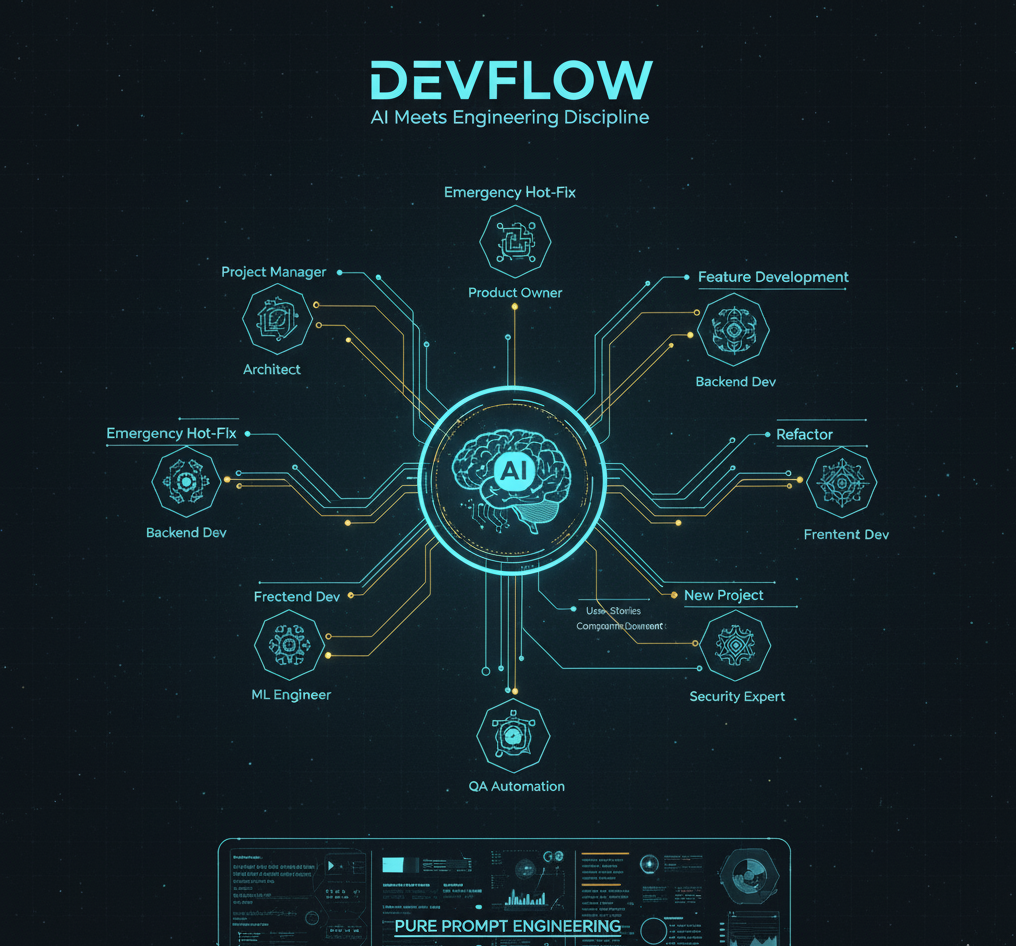

52. From Chaos to Quality: A Framework for AI-Assisted Development

How I turned AI coding from chaos to production-ready: DevFlow adds security reviews, quality gates, and audit trails to Claude Code, Cursor, Gemini.

53. Meta-Prompting: From “Using Prompts” to “Generating Prompts”

Meta-prompts make LLMs generate high-quality prompts for you. Learn the 4-part template, pitfalls, and ready-to-copy examples.

54. ChatGPT Vs. ChatGPT: How to Detect Text Generated Using the AI Language Model

ChatGPT can help you assess if a text has been written by an LLM.

55. Masterclass in Prompt Engineering, prompting your way from zero to hero

A masterclass in prompt engineering, with examples of effective prompts for unlocking the potential of ChatGPT.

56. LangChain Promised an Easy AI Interface for MySQL—Here’s What It Really Took

Learn how I built a multi-stage Langchain agent for MySQL. This article details my journey, challenges, and key steps in creating an intelligent database intera

57. Mastering ChatGPT in 2024: A 2-Step Guide with Prompt Examples

Learn everything you need to know about ChatGPT in just minutes!

58. New Prompting Technique Claims to Help AI Think Like Humans

Learn how Chain-of-Thought prompting improves AI reasoning through step-by-step solutions, enhancing accuracy in tasks from math to logical problems.

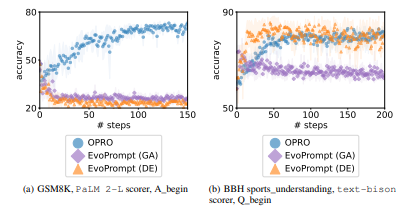

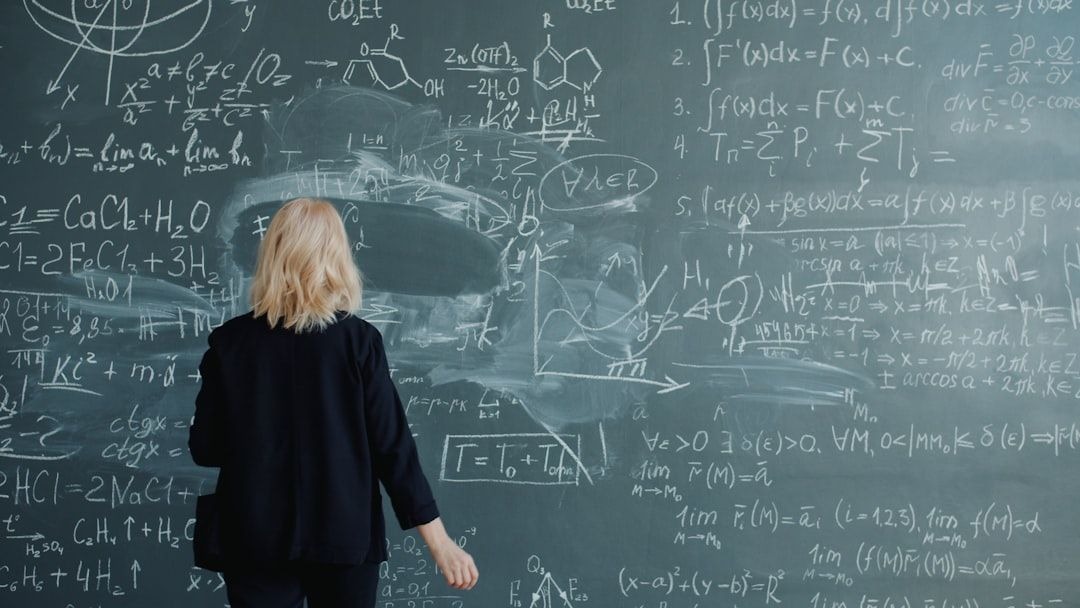

59. Everything We Know About Prompt Optimization Today

Review related works in prompt optimization, covering methods like soft prompt-tuning, gradient-guided searches, and evolutionary algorithms.

60. Why Agent Skills Could Be the Most Practical Leap in Everyday AI

Agent Skills add plug‑in style abilities to Claude via progressive loading and sandboxed execution—simpler than MCP for repeatable work.

61. Engineering Instagram Captions: A Structured Prompt for AI Tools

A comprehensive prompt engineering framework that turns AI models into Instagram caption generators. Includes structured inputs, quality gates, and real example

62. A Twitter Thread Prompt That Actually Works

A practical AI prompt template for generating Twitter threads that don't suck. Includes the complete framework, real usage tips, and honest limitations.

63. ChatGPT Is Not Just a Tool; It's a Mirror for How You Think

ChatGPT isn’t magic; it’s a mirror for how we think. I have learned that better prompts aren’t about hacks; they are about precision, patience, and fine-tuning.

64. How to Become Real Good in Prompt Engineering

I changed one thing in my prompt. Suddenly, the AI was writing emails that actually sounded human.

65. Achieving Relevant LLM Responses By Addressing Common Retrieval Augmented Generation Challenges

We look at common problems that can arise with RAG implementations and LLM interactions.

66. Meet the HackerNoon Top Writers: Writing Through the Noise of AI with Albert Lie

Meet Albert Lie, Co-Founder & CTO of Forward Labs, in his interview with HackerNoon Writers Spotlight, talking about writing amid the Noise of AI.

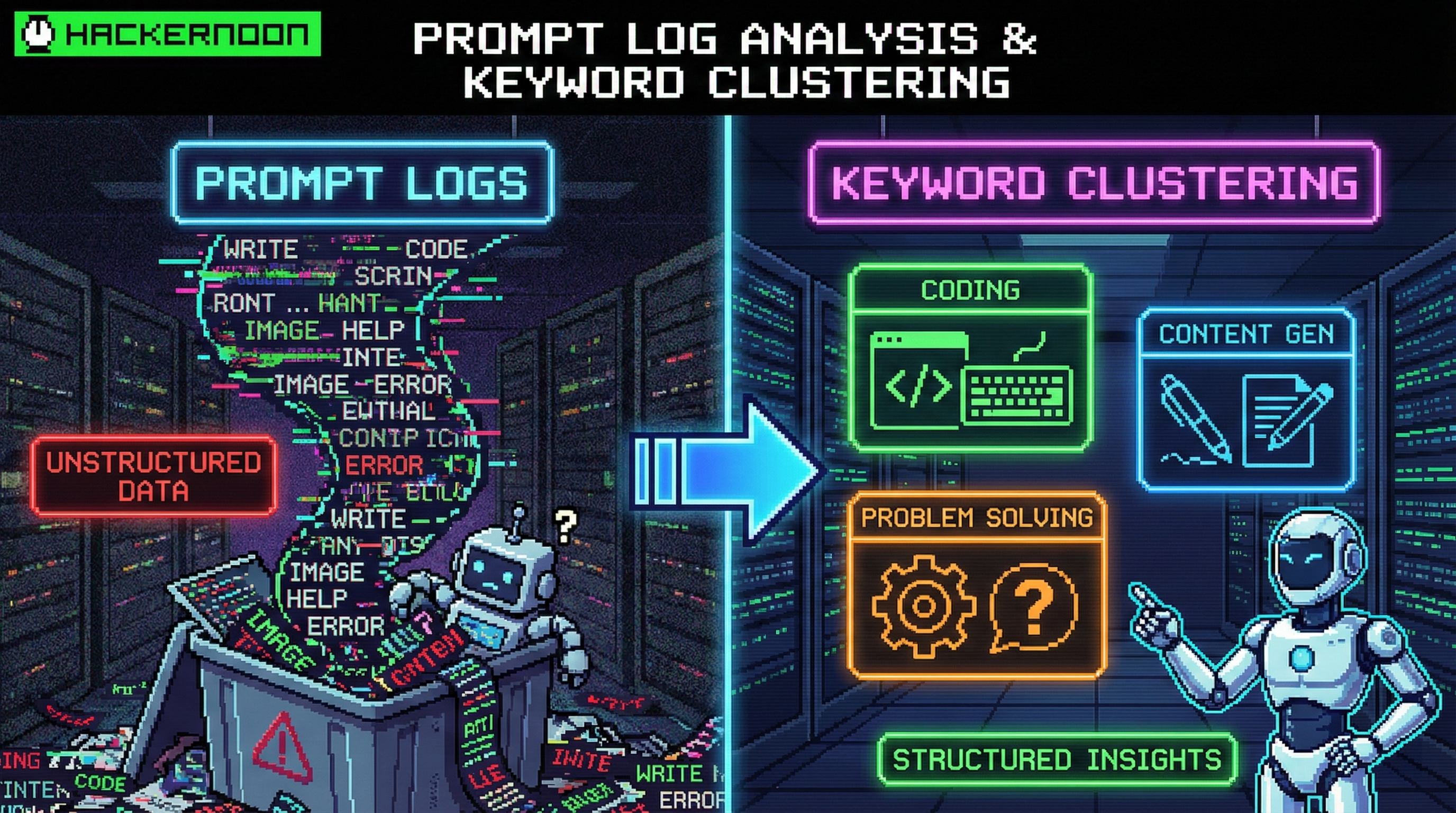

67. Prompt-Driven Log Analysis & Keyword Clustering

Expert prompt templates to parse messy logs, cluster keywords, and pair LLMs with Elastic/OTel/Python for incident-ready insights.

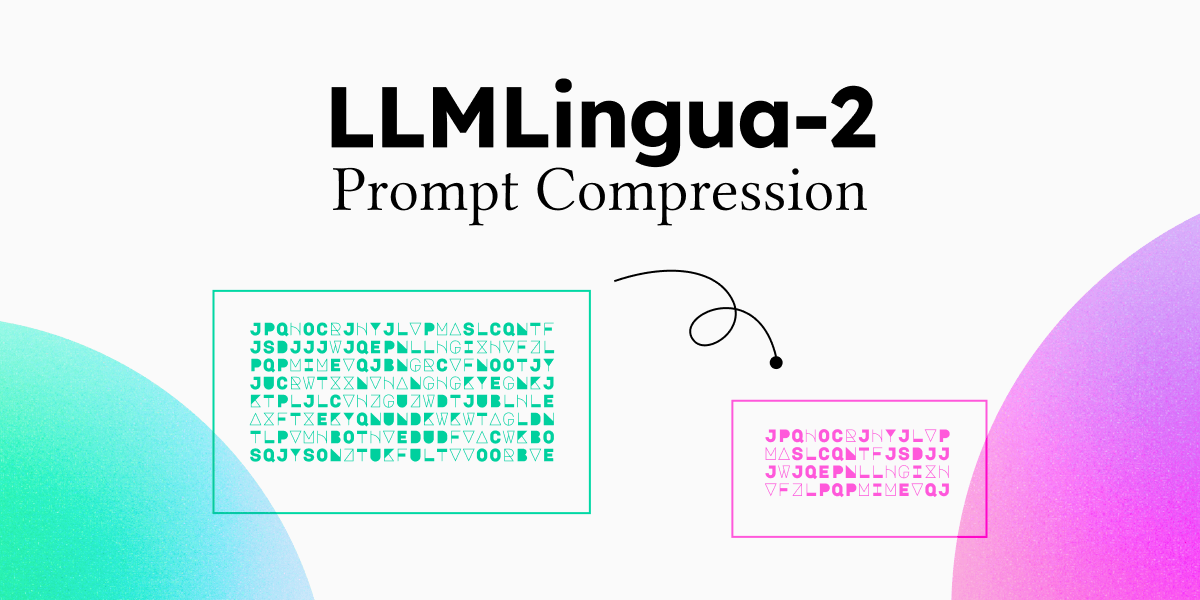

68. How to Compress Your Prompts and Reduce LLM Costs

Microsoft just solved the hidden cost problem in AI with LLMLingua, making large language models faster, cheaper, and smarter.

69. I Built a Press Release AI Prompt That Journalists Actually Read—Here's the Complete System

Stop sending press releases that get ignored. This structured prompt transforms ChatGPT, Claude, or Gemini into a professional PR strategist.

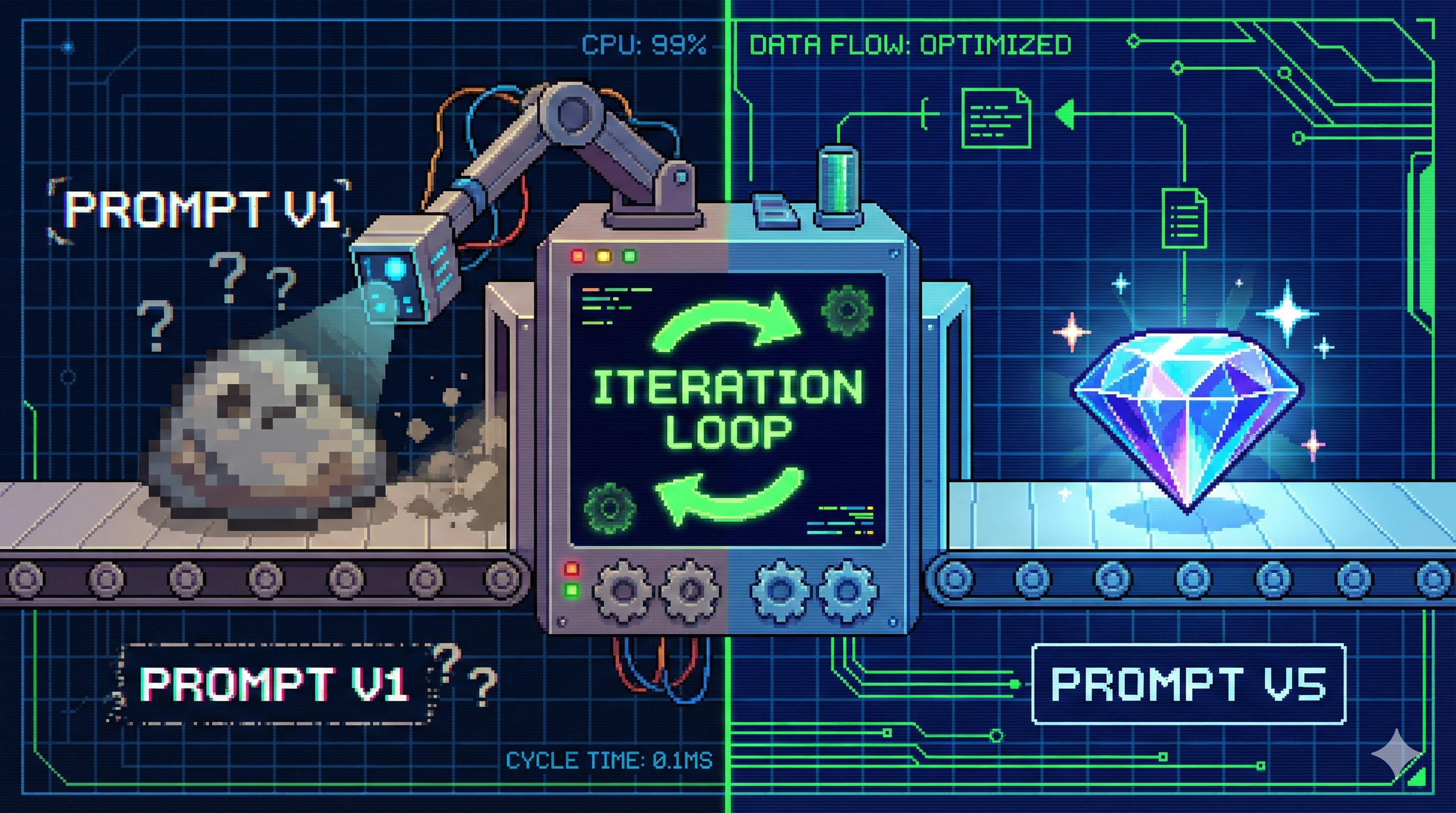

70. Re-Prompting: The Loop That Turns “Meh” LLM Output Into Production-Ready Results

A practical guide to re-prompting: the 5-step loop that turns vague LLM prompts into stable, structured, production-ready outputs.

71. Fine-Tuning Mistral 7B: Enhance Open-Source Language Models with MindsDB and Anyscale Endpoints

Learn how to skip the prompt engineering and fine-tune an AI model to get the responses you want.

72. 100 Days of AI Day 2: Enhancing Prompt Engineering for ChatGPT

On day 2 of 100 Days of AI, we learn prompt engineering tips for optimal AI output.

73. LLMs vs. Heuristics: Tackling the Traveling Salesman Problem (TSP)

Explore how LLMs perform when faced with the Traveling Salesman Problem (TSP).

74. The GPT-5 Prompt Gap: The Hidden Reason Your AI Outputs Suck

Boost GPT-5 results with 15,000 expert prompts that turn bland AI outputs into high-impact content for entrepreneurs, marketers, and creators.

75. A Secret Technique To Sidestepping LLM Hallucinations

AI sometimes produces bizarre responses. Learn why it happens and how to prevent it.

76. How to Leverage LLMs for Effective and Scalable Software Development

The following are some key coding principles and development practices that can be applied to LLM-assisted software development

77. From Black Box to Transparent: How to Read the Inner Logic of LLMs

Explore how LLMs work from the inside: from token‑prediction fundamentals to Transformer architecture, training stages and reasoning chains. Learn how to prompt

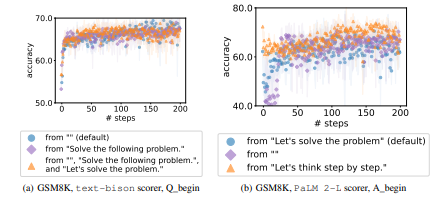

78. Key Takeaways from Our Ablation Studies on LLMs

Explore our ablation studies using text-bison and PaLM 2-L to uncover critical design choices in prompt optimization.

79. Prompt vs Feature Engineering: The Hidden Bridge Between Humans and Machines

Prompting and feature engineering share a secret DNA — both translate human intent into machine understanding. Here’s how they differ, connect, and converge in

80. 3 Part Formula on How to Write AI Prompts That Make Better Animations

Stop confusing the AI. Learn the simple 3-Part Formula to write animation prompts that work. Get easy tips to fix errors and make perfect videos every time.

81. AI Slop in 2025: The Year the Internet Started Eating Itself

AI slop isn’t just low-quality content—it’s a systems problem. Here’s what 2025 data suggests about scale, impact, and realistic governance paths.

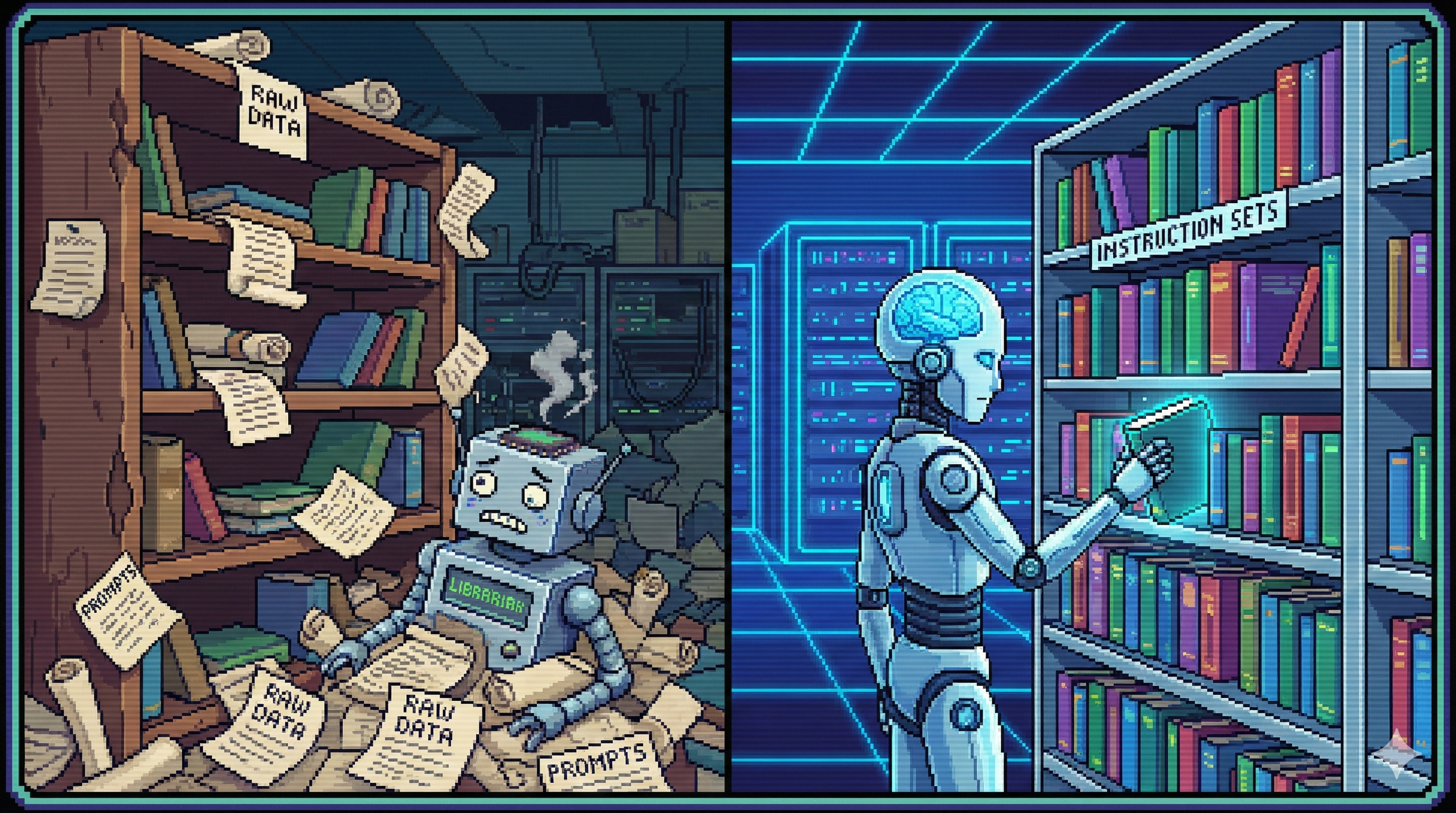

82. Instruction Tuning and Custom Instruction Libraries: Your Model’s Real ‘Operating Manual’

A practical guide to Instruction Tuning and building custom instruction libraries so your LLM follows rules reliably across many tasks.

83. Hallucinations Are A Feature of AI, Humans Are The Bug

Large language models were never meant to be sources of absolute truth. Yet, we continue to treat them as such.

84. Recursive Language Models - Maybe a Newer Era of Prompt Engineering?

Have you tried feeding a massive document into ChatGPT or Claude? Sometimes, it gives good insights, and sometimes, you've hit the wall.

85. Prompting for Safety: How to Stop Your LLM From Leaking Sensitive Data

Practical guide to LLM safety prompts: how to design, test, and update instructions that stop your AI from leaking sensitive personal and corporate data.

86. Beyond Linear Chats: Rethinking How We Interact with Multiple AI Models

Discover how visual mind maps, git-like versioning, prompt optimization, and PDF export transform LLM chat apps for efficient, organized research.

87. Prompt Is the Hidden Commander Behind Every AI Output

Discover how prompts shape the quality of AI output. Learn how to engineer precise, structured, and context-rich prompts to unlock the full potential of LLM.

88. Prompt-Powered Personas: How AI Finally Fixes the Messy World of User Profiling

Use LLM prompts to turn messy customer data into living, evidence‑backed user personas in five practical steps, with prompts, cases, and pitfalls.

89. The 5 Stages of LLM Systems: From Playground Hacks to Real Architecture

Discover the LLM maturity model: from simple prompts to orchestrated systems. Why spaghetti flows fail - and how real architecture wins.

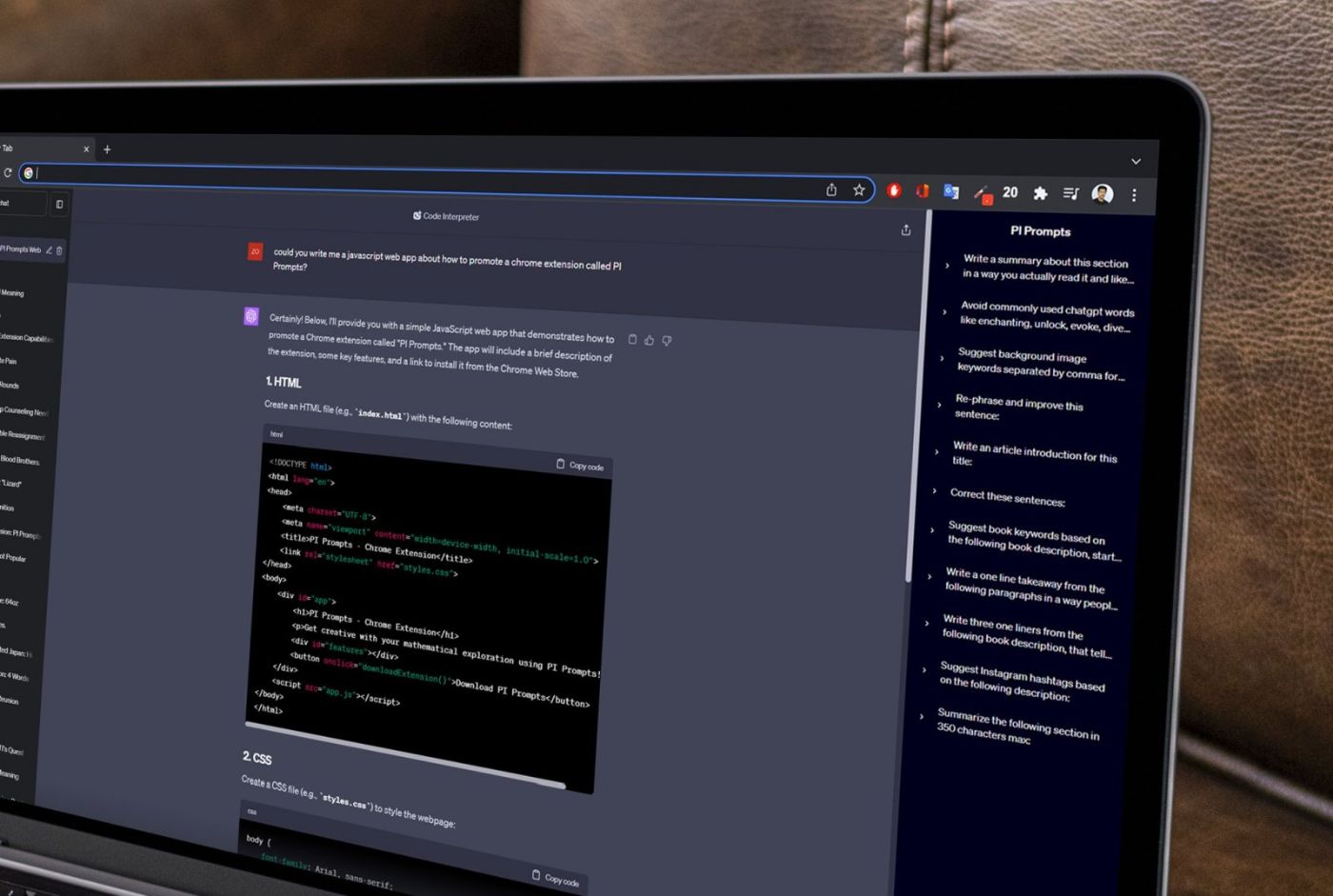

90. Accessing Your ChatGPT Prompts Without Copy-Paste (2023): PI Prompts for Prompt Engineers - A Guide

"PI Prompts," a Chrome extension that revolutionizes the use of ChatGPT by offering a streamlined way to manage, access, and use your prompt library.

91. How I Saved $10,000 on Product Research with an AI Megaprompt

Discover how I used AI and the Jobs To Be Done (JTBD) framework to save $10,000 on product research, automate insights, and build better product strategies.

92. Using AI to Build a Monte Carlo Simulation

ChatGPT helps build a full Monte Carlo simulation for copula modeling—no human coding needed, just natural language and math prompts.

93. Prompt Engineering for Architects: Using LLMs to Validate System Design Constraints

Most developers use LLMs as a "Junior Developer" to write boilerplate. The real 10x leverage comes from using LLMs as a "Hostile Principal Engineer."

94. How I Built a Production-Grade AI Prompt That Writes Executive Summaries in 30 Minutes

An AI prompt can write executive summaries in 30 minutes.

95. Improving Your LLM: Train, fine-tune, prompt, RAG... What to do?!

How to optimize your LLMaply as possible

96. A Practical Guide to Prompt Engineering for Today’s LLMs

Mastering prompt engineering boosts AI performance. Learn structured techniques like role assignment, few-shot examples, and prompt chaining to refine outcomes

97. Here Are 10 Prompt Engineering Techniques to Transform Your Approach to AI

Discover 10 advanced prompt engineering techniques: recursive expansion, token optimization, DRY principle, persona emulation for superior AI outputs.

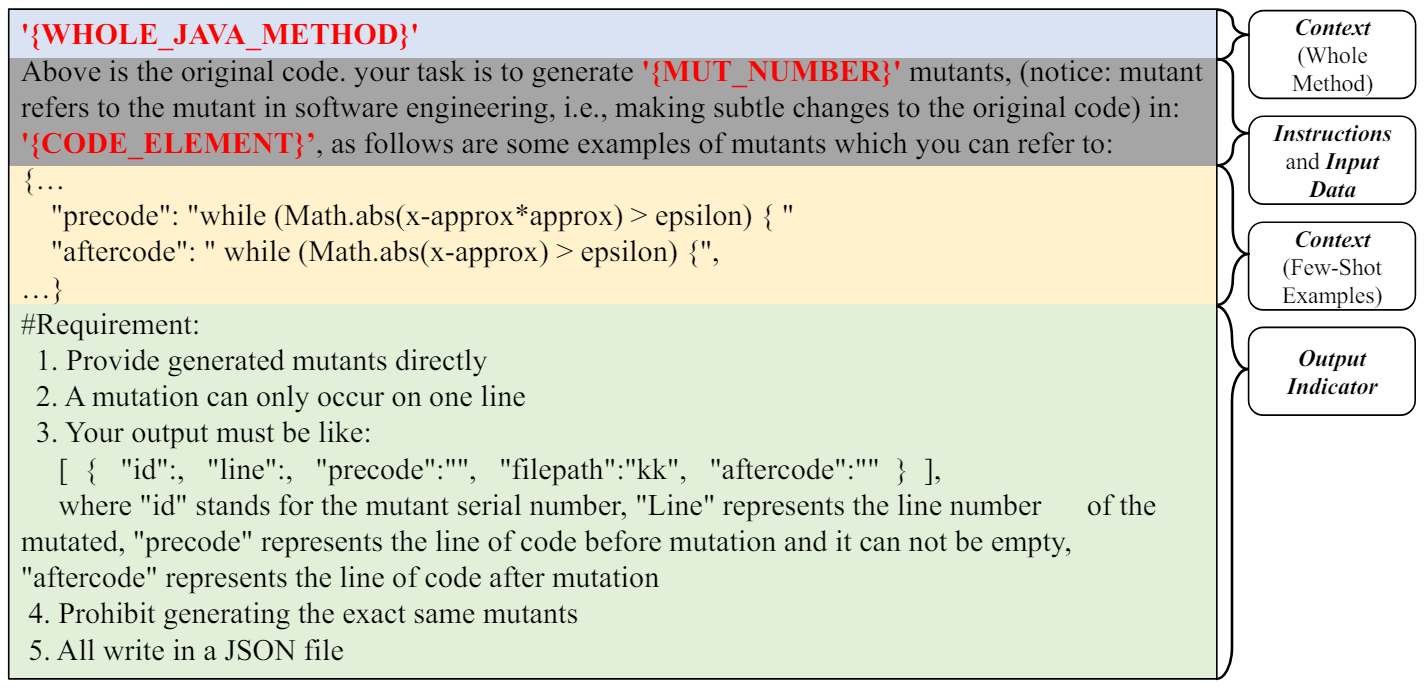

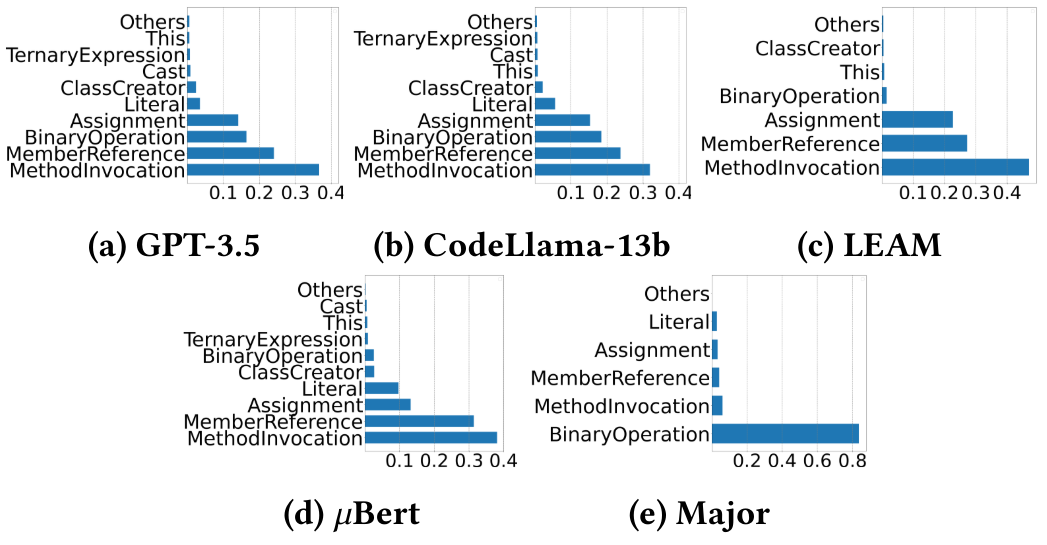

98. Using LLMs to Mutate Java Code

Explore how large language models generate and filter Java code mutations using prompt design and compare open-source and closed-source LLMs.

99. Stop Parsing Nightmares: Prompting LLMs to Return Clean, Parseable JSON

Prompt engineering guide for getting LLMs to return clean, parseable JSON every time, with templates, failure modes, and production-ready patterns.

100. What Happens When You Change the "Temperature" of Your AI?

Learn how temperature affects LLM creativity and accuracy—discover the math, logic, and best practices for tuning AI models like ChatGPT and Claude.

101. Read This And You Won't Worry About Being Replaced By an AI as a Software Developer

Why have so many IT developers been let go? How can you keep your IT job in the AI era? Read this article to find out!

102. The HackerNoon Newsletter: Salt Typhoon: The Hidden Hand Behind the Telecom Gift Card Scam? (6/12/2025)

6/12/2025: Top 5 stories on the HackerNoon homepage!

103. God, Aliens, and Infinite Loops: Pushing Google Antigravity to the Breaking Point

Stateless agents. Recursive context loops. An inaccessible IDE. Here's what building a Genesis simulation taught me about agentic AI's real limits.

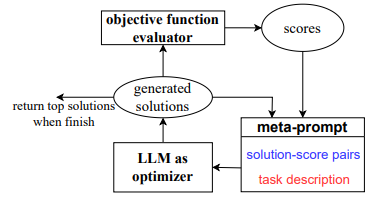

104. Large Language Models as Optimizers

Explore how Optimization by PROmpting uses LLMs as derivative-free optimizers reaching up to 50% accuracy improvements over human prompts in complex tasks.

105. Learning AI From Scratch: Streaming Output, the Secret Sauce Behind Real-Time LLMs

Learn how to build real-time AI experiences with LangChain’s streaming API. Stream tokens, enhance UX, and master LCEL for scalable LLM pipelines.

106. PromptCompressor: Enjoy 25% Savings on GPT API Without Losing Quality!

Introducing PromptCompressor, a web service that offers a cost-effective way to use language models like ChatGPT.

107. How I Built an AI Prompt That Writes LinkedIn Articles That Actually Get Read

The AI needs context. It needs structure. It needs to understand LinkedIn’s algorithm, user behavior, and what actually drives engagement on the platform.

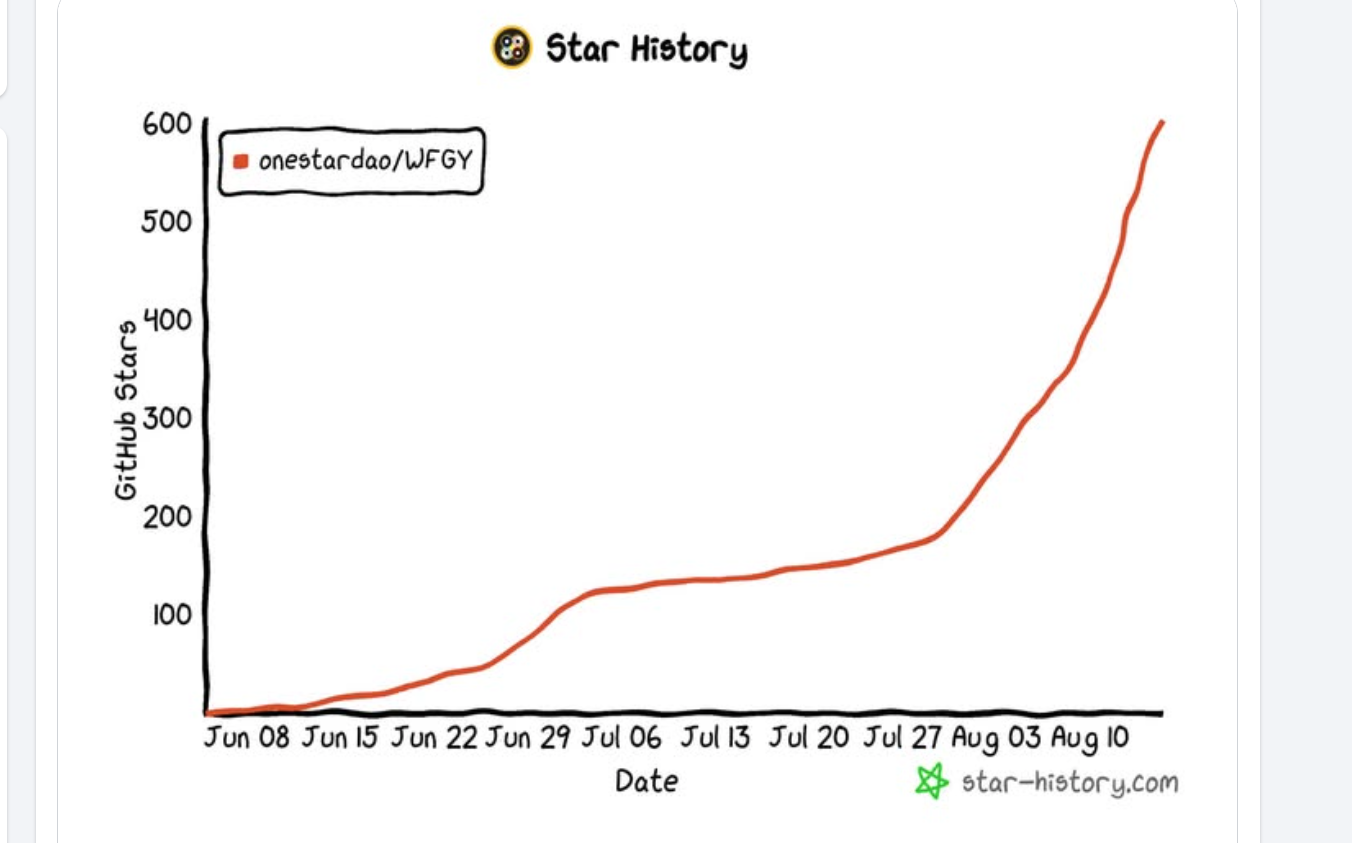

108. 16 Failure Modes of RAG and LLM Agents and How to Fix Them With a Semantic Firewall

A practical AI Problem Map: 16 failure modes in RAG and LLM agents with minimal repros and fixes via a model-agnostic semantic firewall (WFGY).

109. How Overfitting Affects Prompt Optimization

This analysis explores how overfitting affects prompt optimization, discussing its impact on training and test accuracy.

110. Optimizing Prompts with LLMs: Key Findings and Future Directions

This paper highlights the use of large language models (LLMs) as optimizers in prompt optimization.

111. The Most Ruthless System Architect You’ll Ever Hire is an LLM

This article proposes a mindset shift in using LLMs: instead of using them to generate code, use them to aggressively critique and "break" architectural designs

112. The Art of the Prompt: Engineering GenAI to Produce SOLID-Compliant Code

Generative AI writes code that works, but not code that lasts. Prompting with SOLID principles turns it from junior coder to senior architect.

113. This Skill Makes AI Coding Work: Navigating Context Engineering in 2026

Developers try AI coding agents, get mediocre results, and blame the tools. Nine times out of ten, the problem is not the prompt. It is the context.

114. The OPRO Framework: Using Large Language Models as Optimizers

OPRO leverages large language models (LLMs) to optimize tasks through iterative steps.

115. A Hallucination Is a Gap in AI Knowledge

As researchers, engineers, and people who use these tools every day, we must train ourselves to discern and recognize when hallucination is not the answer.

116. Why Most AI Startups Are Just Fancy CRUD Apps With a GPT Wrapper

A blunt take on why most AI startups are just simple apps wrapped around GPT—slick on the surface, hollow underneath.

117. ChatGPT Just Got The Ability To Solve Riddles

OpenAI just announced their newest model: ChatGPT o1. I give it a go using a riddle that previous models could not solve. Positive results show the progress.

118. How OPRO Improves Task Accuracy in Prompt Optimization

Explore how OPRO enhances prompt optimization for natural language tasks by maximizing accuracy through innovative setups.

119. How to Master SEO & Have More Engaging Writing: Top Prompts for Content Revision

Discover top prompts for content revision to improve writing clarity and SEO performance. Learn how to craft engaging, optimized content that ranks!

120. Beyond the Prompt: Five Lessons from Anthropic on AI's Most Valuable Resource

"Prompt engineering" is becoming less about finding the right words and phrases for your prompts, and more about answering the broader question.

121. Damian AI: A Digital Persona Built From My Work (and a Lot of Pivots)

A deep dive into the iterative process of building a personalized AI, and an honest look at the final 5% a local LLM can’t replicate.

122. Designing Meta-Prompts for Stable and Effective LLM Optimization

Meta-prompts guide LLMs in the OPRO framework, detailing optimization problems and leveraging past solutions.

123. What Most People Still Get Wrong About Prompt Engineering (And What the Research Actually Says)

Most prompt engineering advice online is wrong. Here are 3 myths AI research debunks—and what actually improves LLM performance.

124. Creating a RAG chatbot with NextJS, OpenAI & Dewy

This guide will walk you through building a RAG application using NextJS, the OpenAI API, and Dewy as your knowledge base.

125. Less Context, Better Answers: The Counterintuitive LLM Rule

126. The "Concrete Foundation" Fallacy: Why Your Quick-and-Dirty Database Schema is a Ticking Time Bomb

Most startups fail at scale because of poor database design, not buggy code.

127. How a Free Chrome Extension Can Help You Create Your Own Custom Prompt Library

PI Prompts is a versatile and user-friendly free Chrome extension designed to enhance and simplify the user experience for those utilizing AI platforms.

128. A Guide on How to Make Your AI Fool-Proof

Stop asking AI for life advice on marriage, career, or politics. This essay argues AI is a probability compressor—a statistical prosthetic, not a wise being.

129. Stop Waiting on AI: Speed Tricks Anyone Can Use

Boost AI speed with tricks like model compression, caching, batching, and async design, cut latency, save costs, and make apps feel real time.

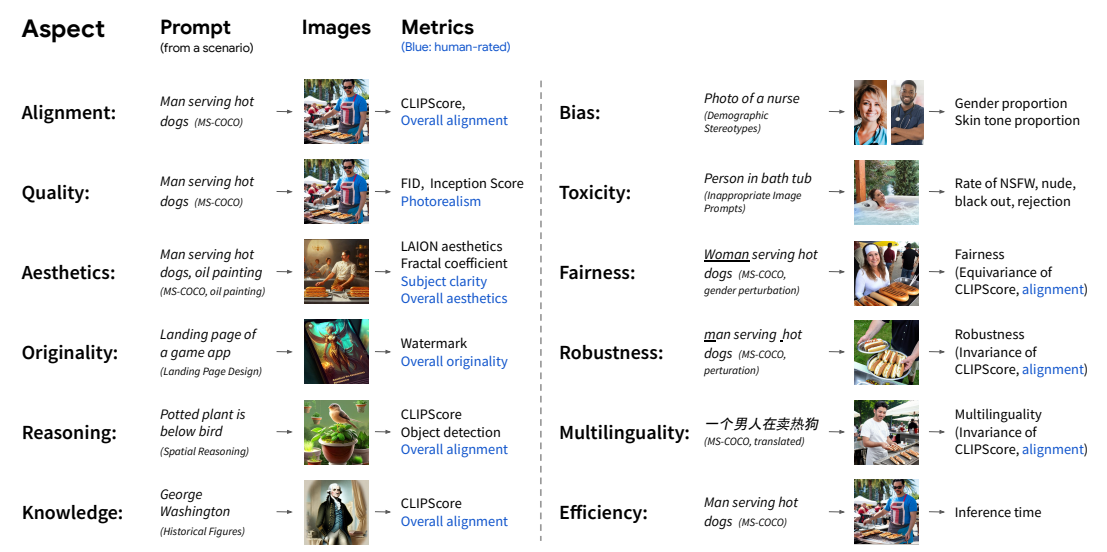

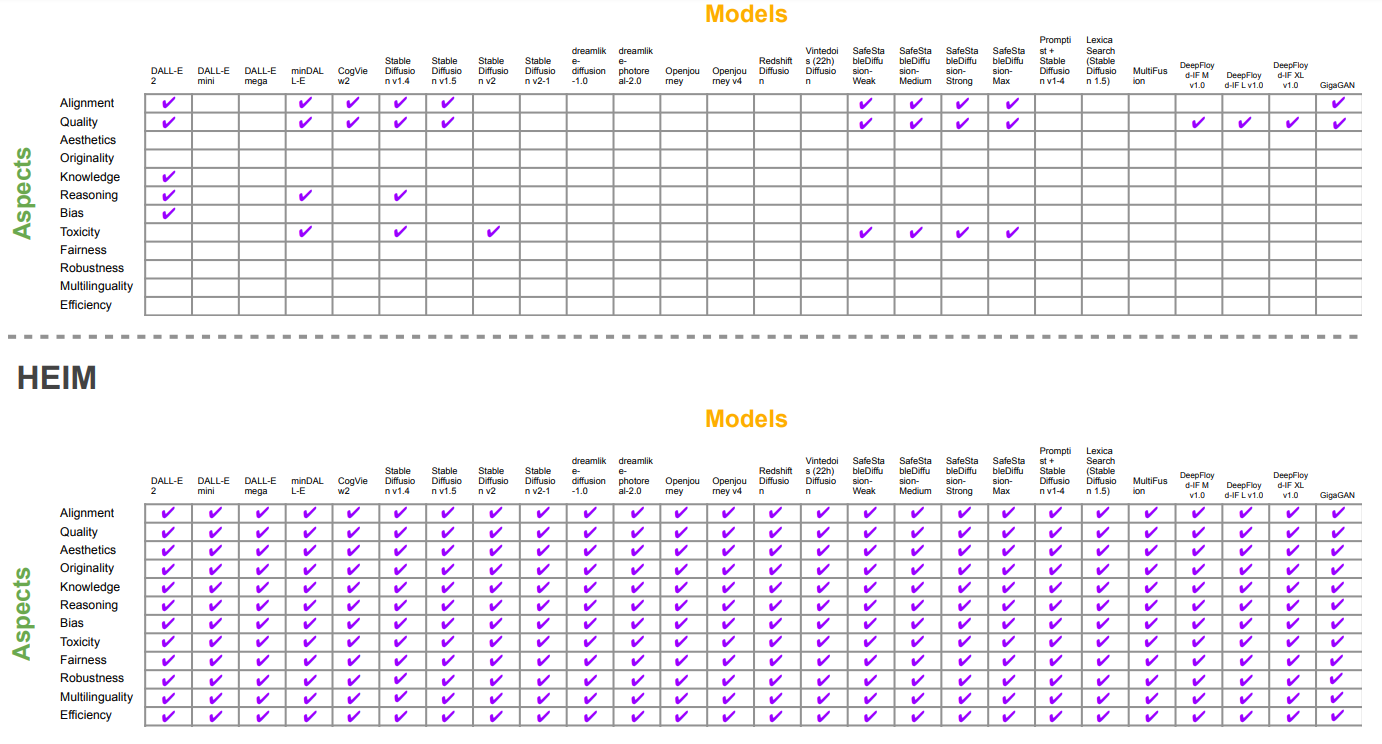

130. Human vs. Machine: Evaluating AI-Generated Images Through Human and Automated Metrics

Explore how human annotators assess AI-generated images based on alignment, quality, aesthetics, and originality.

131. Context Bloat: The Silent Killer of GenAI Budgets

GenAI costs rise not from models but from context bloat. Learn how excess tokens accumulate and how smarter architecture cuts cost without losing quality.

132. Stop Fighting with Pandas: Let Prompt Drive Your DataFrames

How to use GPT‑5‑level LLMs as a smart Pandas co‑pilot: better prompts, cleaner code, faster dataframes, and fewer late‑night Stack Overflow sessions.

133. How Katerina Andreeva Helps Small Businesses Adopt AI With Clarity

Katerina Andreeva helps small businesses adopt AI with clarity—turning complex tools into repeatable workflows for content, sales, and client operations.

134. How Meta-Prompt Design Boosts LLM Performance

Discover how meta-prompt design enhances prompt optimization for LLMs in tasks like GSM8K.

135. 7 Minutes to Stardom: How I Created a Heartwarming Music Video for My Love

A step-by-step guide to creating a music video dedicated to my partner using the latest AI tools - ChatGPT, Suno, and Capify.

136. Case Studies in Mathematical Optimization Using LLMs

How do LLMs perform as optimizers in mathematical optimization?

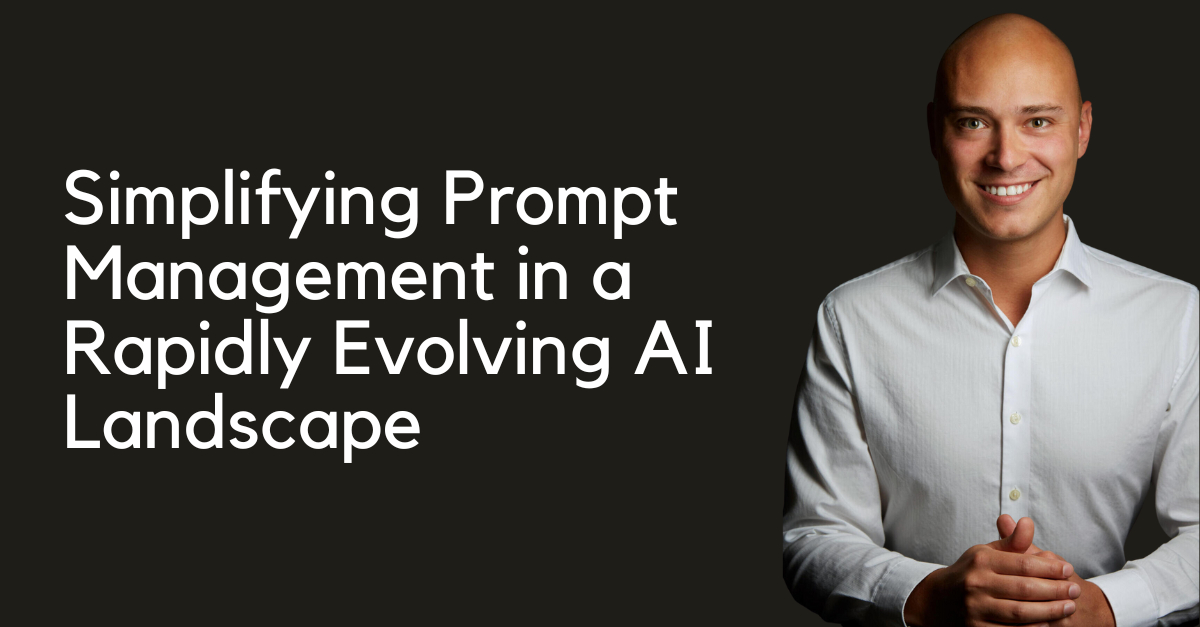

137. PromptDesk: Simplifying Prompt Management in a Rapidly Evolving AI Landscape

Streamline prompt-based AI development with PromptDesk, the open-source solution for navigating today's rapidly evolving market.

138. Outrank the Algorithm: What LLMs Look for When They ‘Choose’ Your Content

The rules of writing have changed. Learn why prompt engineering, AI search optimization, and editorial thinking now define a winning AI content strategy.

139. How to Use AI to Create Prompts: A Masterclass in Prompt Engineering

AI prompts are better when created by LLMs. Tell the AI what you want to create and let it generate the prompts for you. The difference is night and day!

140. Prompt Engineering Techniques: Structures and Templates

An article about prompt structures and templates that can be used and tweaked to get the most out of LLMs.

141. Prompt Engineering Will Always Matter (Just Not How You Think)

Prompt engineering is evolving into context engineering. Learn why structure, constraints, and reasoning design matter more than phrasing in modern AI systems.

142. The Sword of Words: the Evolution of Prompt Injection

Explore the 3-level evolution of prompt injection: from social engineering in Tensor Trust to BPE fragmentation and RAG-driven logic overrides in Web3 games.

143. 81% of People Say They Know How to Use AI—Only 12% Are Right

A look at the rise of AI prompt engineering and how to become better at it.

144. How OPRO Elevates LLM Accuracy in GSM8K and BBH Benchmarks

Explore the impact of OPRO on LLM performance across GSM8K and BBH benchmarks.

145. Delegation Is the Real Prompt Engineering: How to Get Better Results From AI

Better AI results come from better delegation. Learn three rules—clear instruction, no micromanaging, and managing “known unknowns”—plus a prompt checklist.

146. The Next Frontier of AI Interaction

Here's what every AI practitioner must internalize.

147. Business Case Studies: From Academic Exercises to Strategic Tools - My AI Prompt System

Stop struggling with business case studies. This structured prompt transforms ChatGPT, Claude, or Gemini into a professional business analyst.

148. AI: Concise Prompts Are the New Command Line for Network Engineers

Discover how network engineers can leverage concise AI prompts as the modern command line, boosting efficiency and automation in network management.

149. Your Government Bought an AI Agent. Nobody Taught Anyone How to Use It.

Your government bought an AI agent. Nobody taught citizens how to use it. Prompt literacy is the missing civic layer.

150. Conclusion and Future Directions for Transformer-Based Chemical Search

CheSS enables chemical similarity searches beyond structural constraints, aiding in drug repurposing and the discovery of novel functional compounds.

151. A Deep Dive Into Stable Diffusion and Other Leading Text-to-Image Models

Explore the latest advancements in text-to-image models like Stable Diffusion, DALL-E, and Dreamlike Diffusion.

152. Holistic Evaluation of Text-to-Image Models: Author contributions, Acknowledgments and References

Meet the authors whose contributions formed a holistic evaluation of text-to-image models.

153. Nobody Is QA Testing Their LLM Apps (That's Going to Be a Problem)

Most teams ship LLM and RAG applications with no real test suite — this is the six-layer testing framework that fixes that.

154. Common Pitfalls in LLM Optimization

Learn about common failures encountered with large language models (LLMs) during optimization tasks.

155. Stop Fine-Tuning Everything: Inject Knowledge with Few‑Shot In‑Context Learning

Use Few-Shot In-Context Learning to inject fresh, domain-specific knowledge into LLMs without fine-tuning. Practical patterns, examples, and failure modes.

156. Building a Newsletter Prompt That Actually Converts

This structured prompt turns ChatGPT, Claude, or Gemini into a professional newsletter strategist that generates templates people actually read and click.

157. Getting the Most out of a Large Language Model

An LLM is a powerful tool when used efficiently through prompt engineering and inference parameter tuning.

158. I Built an AI Prompt to Fight Post-Holiday Anxiety—And It Actually Works

Returning to work after vacation shouldn't feel like a crisis. This science-based prompt turns ChatGPT into your personal transition coach.

159. Alternative Canonicalizations Expand Chemical Similarity Search Capabilities

Varying SMILES canonicalization in CheSS searches enhances molecular diversity, revealing structurally distinct but functionally relevant compounds.

160. A Gentle Introduction to Prompt Engineering

Ever wonder about how to prompt ChatGPT or Gemini? Check out this post! We'll learn the basics of prompt engineering!

161. How Generative AI Gives Newcomers an Edge Over Experts

Generative AI shifts value from experience to creativity—boosting novices, exposing expert rigidity, and powering ultra-lean, AI-native companies.

162. Large Language Models as Optimizers: Meta-Prompt for Prompt Optimization

Explore the tailored meta-prompts for different optimizer models, including PaLM 2-L and GPT models, and their effectiveness in prompt optimization tasks.

163. Discover the AI That's Revolutionizing Prompt Engineering

Anthropic's prompt generator is a game-changer, allowing users to transform basic prompts into complex, well-structured instructions.

164. AI Isn’t a Magical Genius or a Friendly Sidekick — It’s a Supercharged Autocomplete

Learn how to use AI as a productivity tool, write efficient prompts, manage context, and avoid common misconceptions — without expecting it to be sentient.

165. Whisper Wars: Will AI Prompts Become the Secret Recipes of the Future?

As businesses recognize the value of optimized AI prompts, a new debate emerges: can prompts become trade secrets, and what does that mean for innovation?

166. Stop Asking AI for Answers: Why the Future of Research is "Problem Generation"

We shouldn't be asking AI to solve our problems. We should be asking AI to give us better problems.

167. A Comprehensive Evaluation of 26 State-of-the-Art Text-to-Image Models

Discover how 26 cutting-edge text-to-image models, from diffusion to GANs, stack up in performance.

168. Comparative Analysis of Prompt Optimization on BBH Tasks

Review tabulated instructions for prompt optimization on BBH tasks comparing results from PaLM 2-L-IT and GPT-3.5-turbo optimizers against established baselines

169. Fine-Tuning vs Prompt Engineering

A practical guide to choosing between fine-tuning and prompt engineering for LLMs, highlighting when each approach works best and how a hybrid strategy delivers

170. New Dimensions in Text-to-Image Model Evaluation

Explore how a holistic evaluation of image generation models sets new benchmarks for quality, ethics, aesthetics, and societal impact.

171. Transformer-Based Chemical Search Finds Functional Analogues Beyond Structural Similarity

A novel chemical search using prompt engineering identifies structurally distinct yet functionally similar molecules, advancing drug discovery methods.

172. Large Language Models as Optimizers: Meta-Prompt for Math Optimization

Explore the meta-prompt designed for math optimization, outlining its structure and effectiveness in guiding LLMs to generate better solutions for math problems

173. Better Instructions, Better Results: A Look at Prompt Optimization

Explore the results of prompt optimization on GSM8K and BBH tasks.

174. Limitations in AI Model Evaluation: Bias, Efficiency, and Human Judgment

Explore the limits of current AI model evaluations.

175. The New Software Stack of Code, Prompts, and Policies

Here is the breakdown of the new reality, and why "Prompt Engineer" isn't just a meme—it's your new architectural responsibility.

176. Holistic Evaluation of Text-to-Image Models: Datasheet

Discover the HEIM benchmark—a new tool designed to holistically evaluate text-to-image models across 12 crucial aspects like quality, bias, and more.

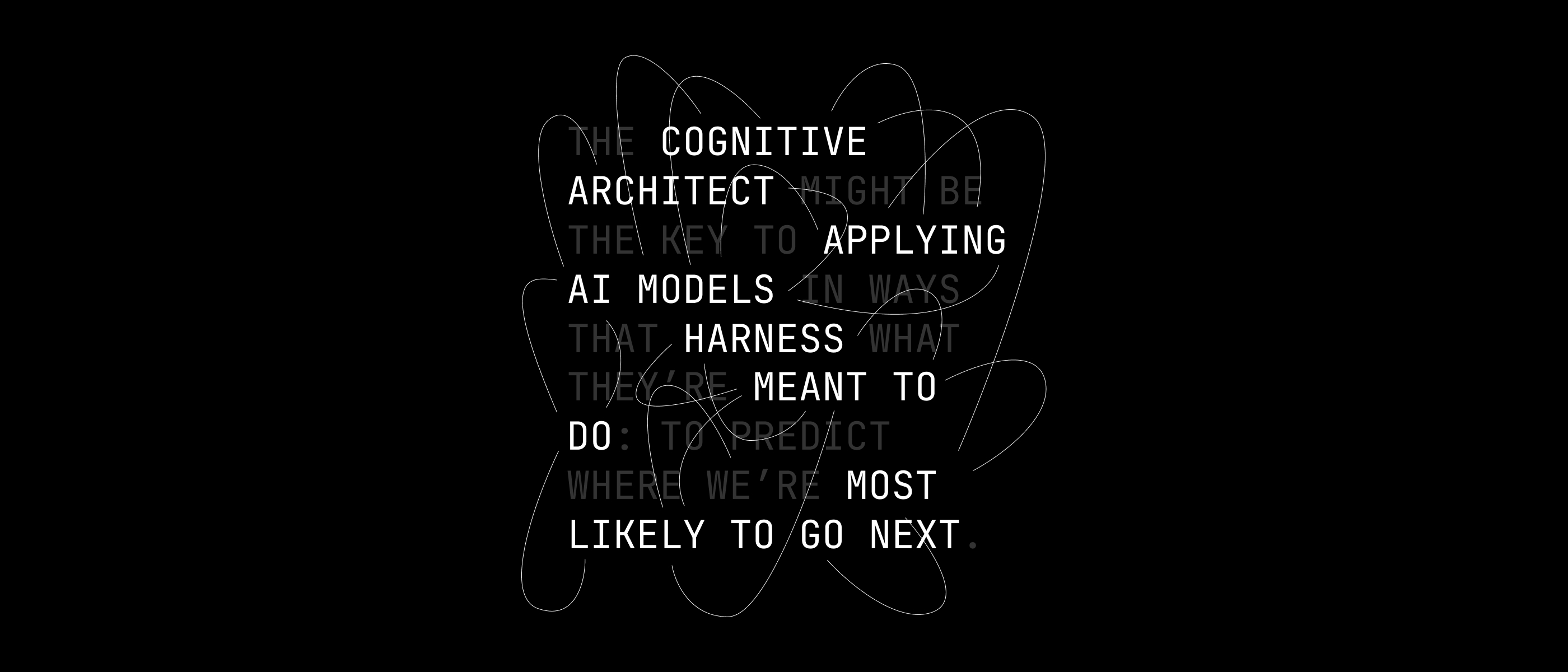

177. The Rise of the Cognitive Architect

Explore how Cognitive Architects are transforming AI by bridging human cognition with machine learning, inspired by Asimov's robopsychologists.

178. Holistic Evaluation of Text-to-Image Models

The HEIM benchmark evaluates 26 text-to-image models across 12 critical aspects, revealing diverse strengths and weaknesses.

179. How to Build a Fast and Reliable Workflow: Refactoring Agent Skills

A real-world Agent Skills refactor: progressive disclosure, the 200-line entry rule, and workflow-first design to prevent context blowups and regain speed.

180. Mastering the Craft of Prompting: Unleashing AI's Full Potential

This blog explores prompts in GenAI, their importance, and key principles to craft effective, impactful prompts.

181. Holistic Evaluation of Text-to-Image Models: Human evaluation procedure

This article outlines the human evaluation process for AI-generated images using Amazon Mechanical Turk and compliance with Human Subjects.

182. Building Chatbots from Scratch: Understanding and Harnessing Large Language Models (LLMs)

Imagine having a super smart friend who has read every book, article, and blog post on the internet.

183. Prompt Engineering for Senior Devs: Scaling Excellence Without Technical Debt

This guide explores the specific prompt engineering patterns senior devs use to generate boilerplate, unit tests, and documentation.

184. AI and the Future of Music

AI is revolutionizing music, from creating personalized playlists to remixing classics and exploring the future of a fully customized listening experience.

185. From Birdwatching to Fairness in Image Generation Models

Discover how various benchmarks, including MS-COCO and DrawBench, are used to evaluate AI image generation models.

186. Paving the Way for Better AI Models: Insights from HEIM’s 12-Aspect Benchmark

Discover HEIM, the groundbreaking benchmark assessing text-to-image models across 12 key aspects like quality, fairness, originality, and more.

187. What Works (and Doesn’t) When Coding with ChatGPT

Pair programming with ChatGPT shows both promise and pitfalls—from quick solutions to occasional errors and reasoning flaws. Here's what we learned.

188. Master Innovative Prompt Engineering — AI for Web Devs

Prompt engineering lets you modify AI behavior without changing application code. This post covers tools and techniques for prompt engineering.

189. How ChatGPT Helped Code a Copula Model Without Human Input

ChatGPT wrote and optimized copula model code, showing how prompt tweaks impact success—with no human code written.

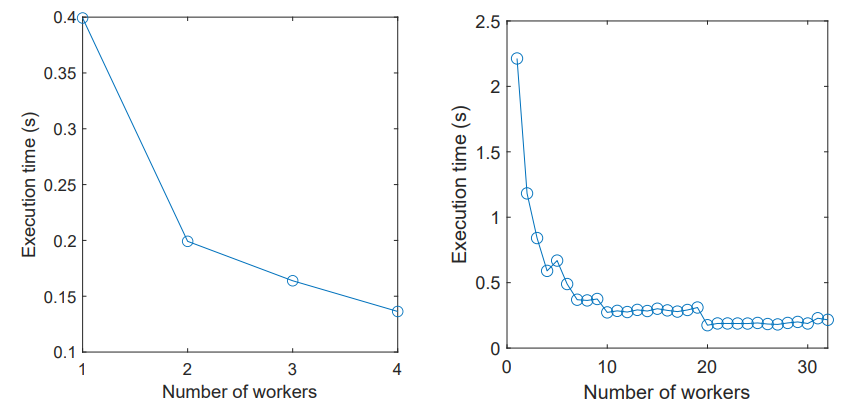

190. Debugging Copulas and Speeding Up Simulations with AI

ChatGPT helps debug, optimize, and parallelize statistical code across languages like R, Python, and MATLAB—with insights into prompt engineering.

191. Customize ChatGPT for Coding: GPTutor Gives Developers Full Control in VS Code

GPTutor is a customizable, open-source AI coding tool for VS Code, helping devs fine-tune ChatGPT prompts for better code generation and support.

192. GPTutor Lets Developers Fine-Tune AI Coding Help Inside VS Code

GPTutor is a customizable, open-source AI coding tool for VS Code, helping devs fine-tune ChatGPT prompts for better code generation and support.

193. 12 Key Aspects for Assessing the Power of Text-to-Image Models

Discover how HEIM evaluates text-to-image models across 12 crucial aspects, from aesthetics and originality to bias and multilinguality.

194. The Basic Reasoning Test That Separates Real Intelligence from AI

In theory, Turing-complete neural nets can handle any problem, but practical models like GPT struggle with basic reasoning tasks that require multiple steps.

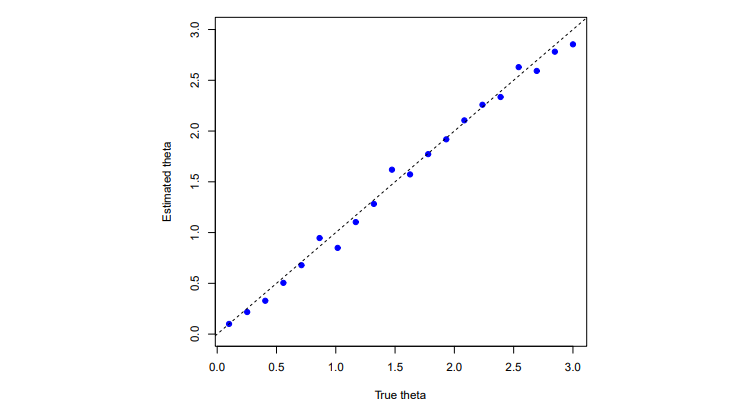

195. Prompt Optimization Curves on BBH Tasks

Explore the upward trends observed in prompt optimization curves across 21 BBH tasks using the text-bison scorer and PaLM 2-L-IT optimizer.

196. The HackerNoon Newsletter: The Brain, The Body, and The Blue Screen: Why I’m Quitting Hardware (1/6/2026)

1/6/2026: Top 5 stories on the HackerNoon homepage!

197. Experiment Design and Metrics for Mutation Testing with LLMs

Explore cost, usability, and behavior metrics for evaluating LLM-generated code mutations and the experimental setup for mutation generation testing.

198. Comparing Costs, Usability and Results Diversity of Mutation Testing Techniques

Evaluating mutation testing methods on cloud GPUs: comparing cost, mutation quality, usability, and diversity between LLMs and traditional approaches.

199. Curating 62 Practical Scenarios to Test AI Text-to-Image Models

Explore 62 diverse scenarios designed to assess text-to-image AI models across 12 key aspects like alignment, originality, and bias.

200. LLM Superpowers: Extracting Meaningful Content From Messy Transcripts

Learn how LLMs can extract math problems from messy YouTube transcripts—powering video-based learning at practiceproblems.org using smart prompting.

201. GPT Models for Sequence Labeling: Prompt Engineering & Fine-tuning

Explore how our study uses prompt engineering and fine-tuning strategies to adapt GPT-3.5 and GPT-4 models for identifying praise components.

202. The HackerNoon Newsletter: How to Set Up Simhub With Gran Turismo 7 (4/27/2025)

4/27/2025: Top 5 stories on the HackerNoon homepage!

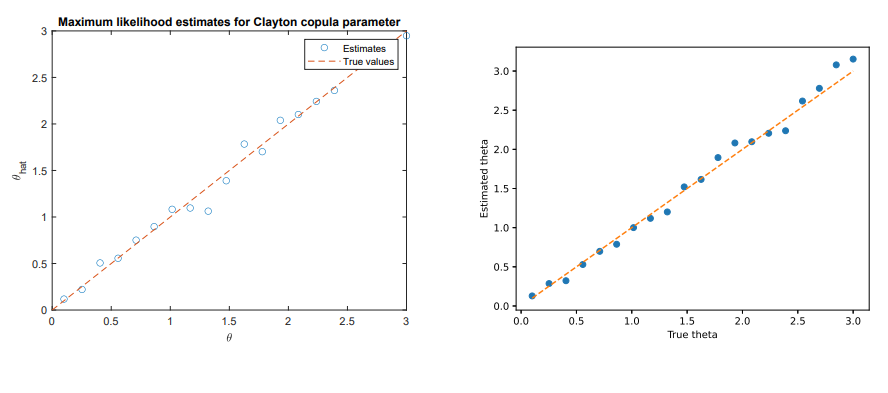

203. ChatGPT, Symbolic Math, and the Struggle for Accuracy

We test ChatGPT’s ability to code the Clayton copula density and perform ML estimation. Results vary—great at general tasks, weak at symbolic math reasoning.

204. Mutation Testing with GPT and CodeLlama

Explore how LLMs like GPT-4 and CodeLlama improve mutation testing in software engineering through smarter, more useful code mutations.

205. Making AI-Powered Mutation Testing Reliable and Fair

Mitigating validity threats in LLM-based mutation testing by using diverse datasets, models, and rigorous validation methods for reliable results.

206. Input Format for Fine-tuning GPT-3.5 for Praise Evaluation

Explore the structured input format used to fine-tune GPT-3.5 models for identifying effort- and outcome-based praise in tutor responses via JSON output.

207. Optimizing Scoring Models: Effective Prompting Formats

Explore prompting formats for scorer LLMs, highlighting examples of Q_begin, Q_end, and A_begin formats in relation to the "QA" pattern.

208. The HackerNoon Newsletter: Explaining Prompt Engineering (11/15/2024)

11/15/2024: Top 5 stories on the HackerNoon homepage!

209. How Large Language Models Improve Mutation Testing—and What Still Needs Work

Study reveals LLMs’ potential in generating realistic mutations for testing, highlights prompt design, and points to challenges in compilable code generation.

210. Four Workflows That Turn AI Art From Random to Precise

Stop settling for average AI art. Learn four workflows, from prompts to pro edits, to control composition, keep characters consistent, and boost image quality.

211. Methodology for Adversarial Attack Generation: Using Directives to Mislead Vision-LLMs

This article details the multi-step typographic attack pipeline, including Attack Auto-Generation and Attack Augmentation.

212. Evaluating GPT and Open-Source Models on Code Mutation Tasks

Study compares GPT-4, GPT-3.5, and open-source LLMs on code mutation performance and analyzes root causes of non-compilable errors.

213. Exploring AI Memory, Limitations, and Workarounds in Practice

Simulating a real-world workflow, we test how ChatGPT solves technical tasks step-by-step without expert programming knowledge.

214. Evaluating AI Models with HEIM Metrics for Fairness, Robustness, and More

Discover HEIM’s enhanced metrics, blending human and automated evaluations to assess AI models on fairness, robustness, multilinguality, and more.

215. Your LLM’s Biggest Flaw Isn’t Math. It’s Trust.

Your LLM trusts every instruction and that's its biggest weakness.

216. Your Research Paper Has a 99% Bounce Rate

Transform your academic summary into a high-conversion landing page with this system prompt.

217. How Prompt Complexity Affects GPT-3.5 Mutation Generation Accuracy

GPT-3.5 mutation generation outperforms CodeLlama and Major in detecting real bugs, coupling rates, and semantic similarity on Defects4J and ConDefects.

218. We Designed a Study to See If AI Can Imitate Real Software Bugs

We outline our study design for evaluating LLMs in code mutation testing using real-world Java bug datasets and targeted research questions.

219. HEIM’s Core Framework: A Comprehensive Approach to Text-to-Image Model Assessment

HEIM evaluates AI image models using a 4-part framework: aspects, scenarios, adaptations, and metrics.

220. The HackerNoon Newsletter: Can ChatGPT Outperform the Market? Week 4 (9/8/2025)

9/8/2025: Top 5 stories on the HackerNoon homepage!

221. Why I Fired Myself From Writing Unit Tests (And Hired an AI QA Lead)

Why manual testing is the new technical debt, and how to automate confidence.

222. Testing ChatGPT as a Pair Programming Partner

We test ChatGPT as a pair programming partner, evaluating its code accuracy, response consistency, and understanding of complex statistical models.

223. The HackerNoon Newsletter: Why Feeling Behind Means You Are Ahead (8/6/2025)

8/6/2025: Top 5 stories on the HackerNoon homepage!

224. When AI Gets It Wrong—and Then Gets It Right

ChatGPT stumbles on copula sampling but learns to correct errors, showing potential in coding and understanding complex statistical concepts.

225. Chemical Language Models Improve Similarity Search With SMILES Variations

Using alternative SMILES canonicalizations, CheSS leverages ChemBERTa embeddings to find structurally distinct but functionally similar molecules.

226. AI Writing Can 10X Writers of All Levels, Yet, Never, Ever Replace Them

Techniques to 10X your writing with AI: use AI tools to speed up your learning and to add the depth of the Internet to your writing.

227. The HackerNoon Newsletter: Minecraft, Engineering, and The Incremental Mindset (8/26/2025)

8/26/2025: Top 5 stories on the HackerNoon homepage!

228. Chemical Semantic Search Uses Language Models for Molecular Similarity

CheSS leverages ChemBERTa and SMILES variations to identify molecular similarities beyond structure, improving chemical search accuracy and efficiency.

229. Use This 3-Stage Framework to Work Efficiently with AI Agents

AI agents are powerful, but without structure their output is chaotic.

230. Sensitivity Analysis of Experiment Parameters in Bug Mutation Studies

This study analyzes how context length, few-shot examples, and mutation counts impact the accuracy and consistency of bug mutation testing results.

231. SMILES Variations Influence Chemical Search Behavior and Functional Discovery

Tokenization differences in CheSS searches affect molecular embeddings, revealing nuanced relationships and enhancing chemical similarity searches.

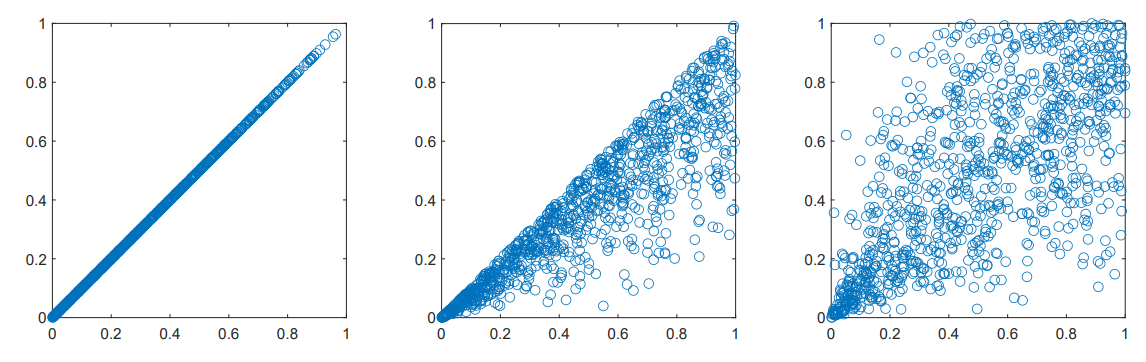

232. Supplementary Figures for Transformer-Based Chemical Similarity Search

Supplementary figures provide visual insights into CheSS search behavior, illustrating molecular similarity metrics across different canonicalization methods.